Multi-Source Legal Evidence Reporting: Building an Investigation Platform with TwelveLabs via AWS Bedrock and NeMo Retriever

Hrishikesh Yadav

Manually reviewing 40+ hours of multi-source video evidence (dashcams, bodycams, CCTV, doorbell cameras) costs legal teams $200-500 per hour in paralegal time spent searching for critical moments. This tutorial shows how to build a cross-source evidence search platform using TwelveLabs through AWS Bedrock for video intelligence and NeMo Retriever for document search, enabling natural language queries across 12+ disparate video sources with sub-3-second response times. The result: 40 hours of evidence review becomes 4 hours of targeted investigation, with automated timeline reconstruction, entity tracking, and structured compliance analysis.

2026/04/25

16 Minutes

Introduction

Legal teams processing video evidence face a compounding problem. A single case can involve 40+ hours of footage from dashcams, bodycams, CCTV systems, doorbell cameras, and insurance submissions, all in different formats, resolutions, and timestamp conventions. Reviewing this content manually costs $200-500 per hour in paralegal time. Miss a critical 10-second clip buried in hour 23 of camera feed 7, and the case outcome can change.

The traditional approach (manual review, basic metadata tagging, frame-by-frame analysis) doesn't scale. Legal teams need to search across disparate sources simultaneously, reconstruct timelines from fragmented footage, and identify critical moments without watching every second of video.

This tutorial demonstrates how to build a legal evidence investigation platform using TwelveLabs through AWS Bedrock for video intelligence and NVIDIA NeMo Retriever for document search. The result: investigators search 12 video sources with natural language queries ("find the red sedan" or "show me when the person entered the building"), get ranked results with exact timestamps, and generate structured compliance reports in minutes instead of days.

What you'll build: A multi-source evidence investigator that ingests mixed-format surveillance footage, enables cross-source semantic search, performs automated compliance analysis, and reconstructs chronological timelines from disparate video sources.

Time investment: 40 hours of evidence review becomes 4 hours of targeted investigation.

You can explore the demo of the application here: Legal Evidence Investigator Application

You can check out the source code here: GitHub Repository

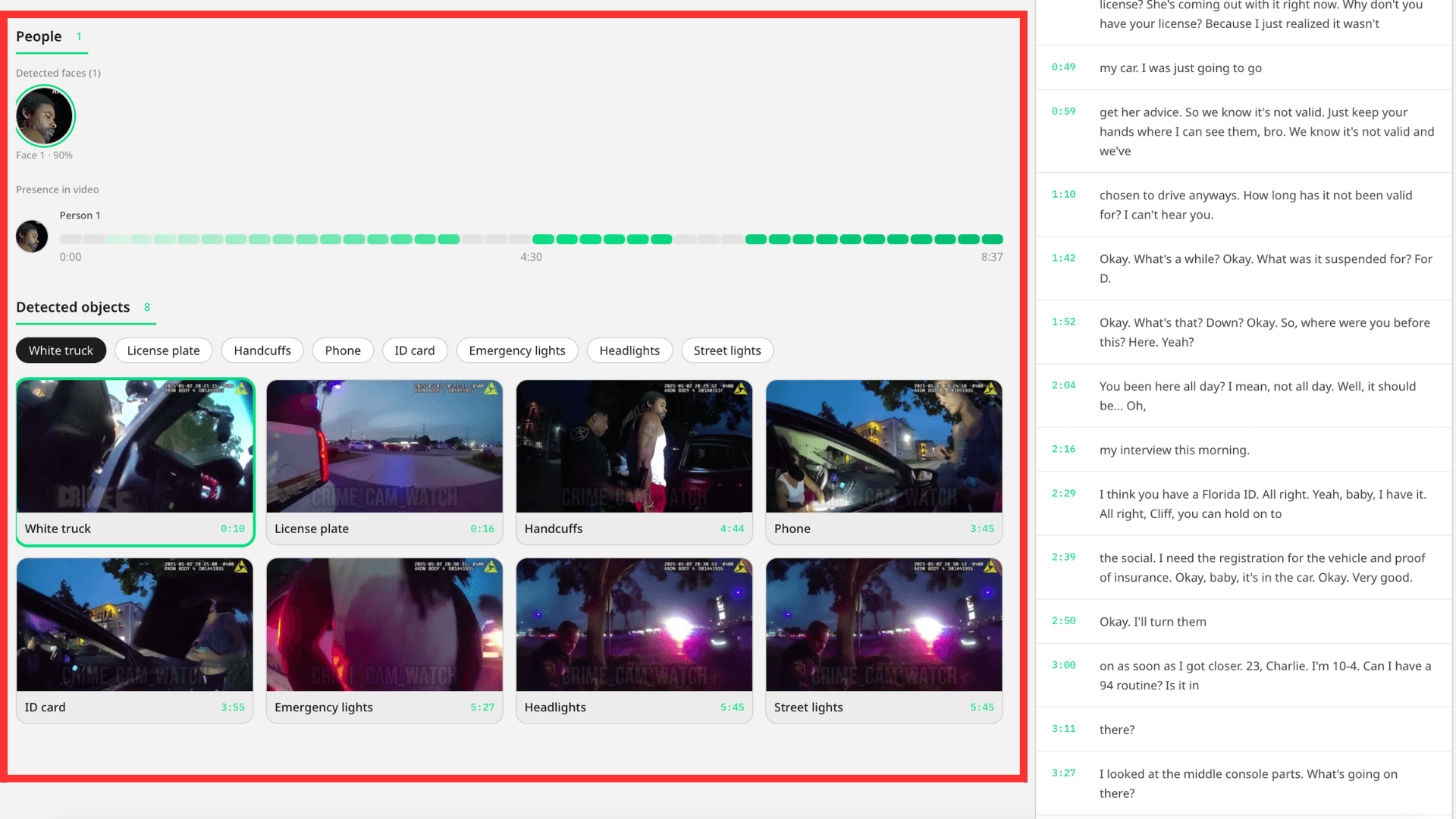

Demo Application

This demonstration shows how the platform handles real investigative workflows: searching across multiple video sources, identifying critical moments with precise timestamps, generating structured compliance analysis, and providing an interactive Q&A interface for deeper investigation.

Key capabilities demonstrated:

Cross-source search across 12+ disparate video formats

Entity tracking (find specific person/vehicle across all sources)

Automated compliance analysis with risk categorization

Timeline reconstruction showing sequential evidence

Conversational video Q&A with clickable timestamps

System Architecture: Why Single-Index Multi-Source Design Matters

The core architectural decision in this application addresses a fundamental constraint: TwelveLabs search operates at the index level. You search one index per request, not individual videos. This shapes everything.

The naive approach would create one index per video source. This fails immediately: you'd need 12 separate search requests to find a person across 12 cameras, then manually merge and sort results in application logic. Slow, complex, brittle.

The correct approach uses a single-index multi-source strategy: all evidence videos live in one TwelveLabs index, differentiated by rich metadata tagging. One search query hits all sources simultaneously. Results come back pre-ranked by relevance, grouped by source video, with metadata filters enabling scoped searches when needed ("only bodycam footage" or "only footage from Main Street location").
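To make the single-index idea concrete, here is a minimal in-memory sketch. It is not the TwelveLabs or Bedrock API: the `search_index` function, the record layout, and the metadata keys (`source_type`, `location`) are assumptions chosen for illustration, and the 2-dimensional embeddings are toy values.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search_index(index, query_emb, top_k=5, filters=None):
    """Rank every record in one index; optional metadata filters narrow the scope."""
    filters = filters or {}
    hits = []
    for rec in index:
        meta = rec["metadata"]
        # Skip records whose metadata does not match every requested filter.
        if any(meta.get(key) != value for key, value in filters.items()):
            continue
        hits.append({
            "id": rec["id"],
            "score": cosine(query_emb, rec["embedding"]),
            "metadata": meta,
        })
    hits.sort(key=lambda h: h["score"], reverse=True)
    return hits[:top_k]


# Hypothetical toy index: three sources stored side by side in one list.
INDEX = [
    {"id": "dashcam-01", "embedding": [1.0, 0.0],
     "metadata": {"source_type": "dashcam", "location": "Main Street"}},
    {"id": "bodycam-01", "embedding": [0.8, 0.6],
     "metadata": {"source_type": "bodycam", "location": "Main Street"}},
    {"id": "cctv-01", "embedding": [0.0, 1.0],
     "metadata": {"source_type": "cctv", "location": "Parking Lot"}},
]

all_hits = search_index(INDEX, [1.0, 0.0])  # one query, all sources
bodycam_hits = search_index(INDEX, [1.0, 0.0], filters={"source_type": "bodycam"})
```

The unfiltered call ranks all three sources in a single pass, while the filtered call scopes the same query to bodycam footage without needing a second index.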

System components:

Video ingestion pipeline: Upload mixed-format footage to S3 → Index via twelvelabs.marengo-embed-3-0-v1:0 through Bedrock → Store multimodal embeddings with source metadata

Document ingestion pipeline: Extract text from PDFs → Chunk intelligently → Embed via nvidia/llama-nemotron-embed-vl-1b-v2 → Store in document index

Hybrid retrieval layer: Parallel search across video embeddings (Marengo) and document chunks (NeMo) → Merge results → Return unified response

Analysis engine: Generate structured compliance reports via twelvelabs.pegasus-1-2-v1:0, including title, risk categories, detected objects, face timelines, and transcript segments

Conversational interface: Video Q&A with clickable timestamp citations

This architecture delivers cross-source search without sacrificing performance. In the demo, single-request latency stays under 3 seconds with 12 indexed videos, because one query scans a single index instead of fanning out into 12 separate requests that must be merged afterwards.

Preparation: What You Need Before Building

1 - AWS Bedrock Access for TwelveLabs Models

Set up AWS credentials with permissions for:

Amazon Bedrock runtime and model access

S3 for video storage and Bedrock async output

Access to these TwelveLabs models through Bedrock:

twelvelabs.marengo-embed-3-0-v1:0 (multimodal video embeddings)

twelvelabs.pegasus-1-2-v1:0 (video analysis and reasoning)

Why these models: Marengo generates the searchable representations of video content (the "encoder"), while Pegasus performs reasoning and structured analysis (the "interpreter"). You need both: Marengo makes video searchable, Pegasus makes it understandable.

2 - S3 Bucket Configuration

Create one S3 bucket structured for:

Video uploads and storage

Bedrock async embedding output location (required for batch jobs)

Generated thumbnails and analysis artifacts

Document storage

Why S3-based: Bedrock's async embedding API requires S3 input/output locations. This also enables scalable storage without hitting local disk limits.

3 - NVIDIA API Key

Get an NVIDIA API key to access:

nvidia/llama-nemotron-embed-vl-1b-v2 for document chunk embeddings

Why NeMo Retriever: Legal evidence isn't just video; it's police reports, witness statements, insurance forms. NeMo handles text retrieval while TwelveLabs handles video, giving you multimodal search across all evidence types.

4 - Clone and Configure

git clone https://github.com/Hrishikesh332/tl-compliance-intelligence cd

Follow backend environment setup in .env.example:

AWS credentials (Access Key ID, Secret Access Key, region)

S3 bucket name and Bedrock output path

NVIDIA API key

Application-specific settings (index configuration, match thresholds)

Implementation Deep Dive

Part 1: Video Ingestion and Embedding Generation

The ingestion pipeline transforms uploaded surveillance footage into searchable multimodal embeddings. This happens asynchronously because Bedrock's embedding jobs can take several minutes for hour-long videos.

1.1 - Starting the Marengo Embedding Job

When a video uploads, the system immediately stores it in S3 and kicks off a Bedrock async embedding job:

Source: backend/app/services/bedrock_marengo.py (Line 111)

    def start_video_embedding(
        s3_uri: str,
        output_s3_uri: str,
        bucket_owner: str | None = None,
    ) -> dict:
        client = get_bedrock_client()
        owner = bucket_owner
        # Request both modalities at both scopes (per-clip and whole-asset).
        body = {
            "inputType": "video",
            "video": {
                "mediaSource": media_source_s3(s3_uri, owner),
                "embeddingOption": ["visual", "audio"],
                "embeddingScope": ["clip", "asset"],
            },
        }
        # Async invocation: Bedrock writes the embeddings to the S3 output location.
        resp = client.start_async_invoke(
            modelId=MARENGO_MODEL_ID,
            modelInput=body,
            outputDataConfig={"s3OutputDataConfig": {"s3Uri": output_s3_uri}},
        )
        return {
            "invocation_arn": resp.get("invocationArn", ""),
            "status": "pending",
        }

Why embeddingScope: ["clip", "asset"] matters: This generates embeddings at two levels:

Clip embeddings (6-second segments): Enable granular search, such as finding the exact 6-second window where a person appears

Asset embeddings (whole video): Enable video-level similarity and grouping

Both are necessary: clip embeddings power precise temporal search, asset embeddings enable "find videos similar to this one" workflows.

Why async processing is required: A 2-hour video generates 1,200 clip embeddings (2 hours ÷ 6 seconds per clip). This takes time. Async processing lets users upload multiple videos simultaneously without blocking the UI while embeddings are generated in the background.
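The clip arithmetic above can be checked with a few lines of Python. This is a minimal sketch: `estimate_clip_count` is a hypothetical helper, and it assumes fixed 6-second clips for a single modality, so the real number of stored vectors may be higher when both visual and audio embeddings are requested.

```python
import math


def estimate_clip_count(duration_seconds: float, clip_seconds: float = 6.0) -> int:
    """Number of fixed-length clips needed to cover a video (last clip may be partial)."""
    return math.ceil(duration_seconds / clip_seconds)


two_hour_clips = estimate_clip_count(2 * 60 * 60)  # 7,200 s / 6 s = 1,200 clips
```

The same helper is handy for capacity planning: multiplying total footage duration by the number of requested modalities gives a rough upper bound on clip vectors to store and scan.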

1.2 - Background Job Queue and Completion Polling

The system maintains an in-memory queue of pending embedding jobs and polls Bedrock for completion:

Source: backend/app/utils/video_helpers.py (Line 104)

    while True:
        job = bedrock_queue.get()
        task_id = job["task_id"]
        s3_uri = job["s3_uri"]
        output_uri = job["output_uri"]
        filename = job["filename"]
        meta = job["meta"]
        log.info("[QUEUE] Processing Bedrock start for %s (%s)", filename, task_id)
        success = False
        for attempt in range(1, max_retries + 1):
            try:
                result = start_video_embedding(s3_uri, output_uri)
                arn = result.get("invocation_arn", "")
                log.info("[QUEUE] Bedrock started for task_id=%s", task_id)
                # Record the new state on the in-memory task and in the vector index.
                video_tasks[task_id]["status"] = "indexing"
                video_tasks[task_id]["invocation_arn"] = arn
                video_tasks[task_id]["output_s3_uri"] = output_uri
                for rec in vs_index():
                    if rec.get("id") == task_id:
                        rec.setdefault("metadata", {})["status"] = "indexing"
                        break
                vs_save()
                # Hand the job to the poller thread.
                with bedrock_poller_lock:
                    bedrock_poller_jobs.append({
                        "task_id": task_id,
                        "invocation_arn": arn,
                        "output_s3_uri": output_uri,
                        "started_at": time.monotonic(),
                    })
                success = True
                break
            # (excerpt ends here; the except clause and retry delay are in the source file)

What this accomplishes: The queue processor updates task status from "pending" to "indexing," stores the Bedrock invocation ARN for tracking, and hands off to a separate polling thread. That poller checks job completion every 30 seconds, loads finished embeddings from S3, and marks videos as "ready" when processing completes.
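The polling side is not shown in the excerpt, so the following is a hedged sketch of what such a poller could look like rather than the repository's implementation. It assumes a client exposing `get_async_invoke(invocationArn=...)` (the boto3 `bedrock-runtime` operation, which reports a status such as `InProgress`, `Completed`, or `Failed`). The `poll_embedding_jobs` function and the `FakeBedrockClient` stand-in are hypothetical names used so the example runs without AWS credentials.

```python
import time


def poll_embedding_jobs(client, jobs, interval_seconds=30.0, sleep=time.sleep):
    """Poll until every job leaves 'InProgress'; returns {task_id: final_status}."""
    pending = list(jobs)
    finished = {}
    while pending:
        still_running = []
        for job in pending:
            resp = client.get_async_invoke(invocationArn=job["invocation_arn"])
            status = resp.get("status", "InProgress")
            if status == "InProgress":
                still_running.append(job)
            else:
                # 'Completed' or 'Failed': record it and stop polling this job.
                finished[job["task_id"]] = status
        pending = still_running
        if pending:
            sleep(interval_seconds)
    return finished


class FakeBedrockClient:
    """Stand-in for a bedrock-runtime client; reports Completed on the second poll."""

    def __init__(self):
        self.calls = 0

    def get_async_invoke(self, invocationArn):
        self.calls += 1
        return {"status": "Completed" if self.calls >= 2 else "InProgress"}


statuses = poll_embedding_jobs(
    FakeBedrockClient(),
    [{"task_id": "vid-1", "invocation_arn": "arn:example"}],
    sleep=lambda seconds: None,  # skip real waiting in this demo
)
```

Injecting `sleep` keeps the loop testable; in a real worker thread the default `time.sleep` with a 30-second interval would apply, and a completed job would then trigger loading the embeddings from S3.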

Why retry logic matters: Bedrock has rate limits. Retrying with exponential backoff lets jobs recover from throttling during high-volume uploads, rather than failing silently and leaving videos in a permanent "pending" state.
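The backoff itself is not visible in the excerpt above, so here is one common way to write it, offered as a sketch under assumptions. `call_with_backoff`, the delay constants, and the 10% jitter are all illustrative choices, not values taken from the repository.

```python
import random
import time


def call_with_backoff(fn, max_retries=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call fn(); on failure wait base_delay * 2**(attempt-1) plus jitter, then retry."""
    for attempt in range(1, max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise  # out of attempts: let the caller mark the task as failed
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Small random jitter avoids many workers retrying in lockstep.
            sleep(delay + random.uniform(0, 0.1 * delay))


# Demo: a start call that is throttled twice, then succeeds.
attempts = []
delays = []


def flaky_start():
    attempts.append(1)
    if len(attempts) < 3:
        raise RuntimeError("ThrottlingException (simulated)")
    return {"invocation_arn": "arn:example", "status": "pending"}


result = call_with_backoff(flaky_start, sleep=delays.append)
```

Re-raising after the final attempt is the important design choice: the caller can then mark the task as failed instead of leaving it pending forever.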

Design pattern: Videos and entities share the same vector index, differentiated by type metadata. Documents use a separate chunk-based index. This gives unified video+entity search while keeping document retrieval independent.

1.3 - Document Ingestion with Semantic Chunking

Documents require different processing than video. PDFs are extracted, split into semantic chunks (by section when possible), embedded via NeMo, and stored as searchable records:

Source: backend/app/services/nemo_retriever.py (Line 688)

    def ingest_document(file_path: str, doc_id: str, filename: str) -> dict:
        extra: dict = {}
        try:
            pdf_info = store_pdf_document(file_path, doc_id)
            extra.update(pdf_info)
        except Exception as exc:
            log.warning("Could not persist PDF for %s (%s)", doc_id, type(exc).__name__)

        ext = os.path.splitext(file_path)[1].lower()
        chunks: list[str] = []
        sections: list[str] = []
        if ext == ".pdf":
            # First choice: structure-aware chunking that keeps section headers.
            try:
                pairs = split_into_semantic_chunks(file_path)
                sections = [s for s, _ in pairs]
                chunks = [t for _, t in pairs]
                log.info("Smart PDF chunking produced %d chunks for doc %s", len(chunks), doc_id)
            except Exception as exc:
                log.warning("Smart PDF extraction failed for doc %s, falling back to NeMo (%s)", doc_id, type(exc).__name__)

        # Fallback: generic extraction when smart chunking produced nothing.
        if not chunks:
            chunks = extract_document(file_path)
        if not chunks:
            log.warning("No content extracted for doc %s", doc_id)
            return {"doc_id": doc_id, "chunks": 0, "status": "empty"}

        embeddings = embed_texts(chunks)
        add_chunks(
            doc_id,
            filename,
            chunks,
            embeddings,
            sections=sections or None,
            extra_metadata=extra or None,
        )
        return {"doc_id": doc_id, "chunks": len(chunks), "status": "ready"}

Smart chunking strategy: The system attempts section-based splitting first (preserving document structure), then falls back to paragraph-based chunking if structure detection fails. This matters for legal documents where section headers ("Incident Timeline," "Witness Statements") provide important retrieval context.
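The section-first, paragraph-fallback idea can be sketched on plain text as follows. This is not the repository's `split_into_semantic_chunks` (which works on PDF files); the heading heuristic (short Title Case lines without trailing punctuation) and all function names here are assumptions made for illustration.

```python
def is_heading(line: str) -> bool:
    """Hypothetical heuristic: short Title Case line with no trailing punctuation."""
    return 0 < len(line) <= 60 and line.istitle() and line[-1] not in ".,;:"


def split_by_sections(text: str) -> list[tuple[str, str]]:
    """Return (section, chunk) pairs, or [] when the text has no detectable headings."""
    pairs, section, buf, found = [], "", [], False
    for raw in text.splitlines():
        line = raw.strip()
        if is_heading(line):
            if buf:
                pairs.append((section, " ".join(buf)))
            section, buf, found = line, [], True
        elif line:
            buf.append(line)
    if buf:
        pairs.append((section, " ".join(buf)))
    return pairs if found else []


def split_by_paragraphs(text: str) -> list[tuple[str, str]]:
    """Fallback: one chunk per blank-line-separated paragraph, with an empty section."""
    return [("", " ".join(p.split())) for p in text.split("\n\n") if p.strip()]


def chunk_document(text: str) -> list[tuple[str, str]]:
    return split_by_sections(text) or split_by_paragraphs(text)


REPORT = (
    "Incident Timeline\n"
    "The vehicle entered the lot at 14:02.\n"
    "It left at 14:09.\n"
    "\n"
    "Witness Statements\n"
    "A neighbor heard a loud noise.\n"
)
structured = chunk_document(REPORT)
unstructured = chunk_document("first paragraph here.\n\nsecond paragraph here.")
```

Keeping the section name next to each chunk is what lets a retrieval hit report "Witness Statements" as context instead of an anonymous paragraph.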

Embedding model selection: nvidia/llama-nemotron-embed-vl-1b-v2 generates embeddings optimized for passage retrieval. Each chunk gets its own vector, enabling precise document search at the paragraph level rather than whole-document matching.

Metadata preservation: The system stores original section headers and document links alongside chunk text, so retrieval results can point investigators back to the source paragraph in the original PDF.

Embedding generation via NVIDIA API:

Source: backend/app/services/nemo_retriever.py (Line 515)

    def embed_via_requests(texts: list[str], input_type: str) -> list[list[float]]:
        """Call NVIDIA embeddings API directly with requests"""
        import requests

        t0 = time.perf_counter()
        resp = requests.post(
            "https://integrate.api.nvidia.com/v1/embeddings",
            headers={
                "Authorization": f"Bearer {NVIDIA_API_KEY}",
                "Content-Type": "application/json",
            },
            json={
                "input": texts,
                "model": EMBED_MODEL,
                "encoding_format": "float",
                "input_type": input_type,
            },
            timeout=60,
        )
        resp.raise_for_status()
        data = resp.json()
        # One embedding vector per input text, in request order.
        vectors = [d["embedding"] for d in data["data"]]
        first_dim = len(vectors[0]) if vectors else 0
        return vectors

The input_type parameter: NeMo distinguishes between "passage" (document chunks being indexed) and "query" (search requests). This improves retrieval accuracy by optimizing embeddings for their intended use: passages are embedded for storage, queries are embedded for matching.
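A small sketch of how the two modes differ at the request level, mirroring the JSON body shown in embed_via_requests. The helper names (`build_embedding_payload`, `passage_payload`, `query_payload`) are hypothetical, and no network call is made here.

```python
EMBED_MODEL = "nvidia/llama-nemotron-embed-vl-1b-v2"


def build_embedding_payload(texts, input_type):
    """Build the embeddings request body; input_type must be 'passage' or 'query'."""
    if input_type not in ("passage", "query"):
        raise ValueError(f"unsupported input_type: {input_type!r}")
    return {
        "input": list(texts),
        "model": EMBED_MODEL,
        "encoding_format": "float",
        "input_type": input_type,
    }


def passage_payload(chunks):
    """Indexing time: document chunks are embedded as passages."""
    return build_embedding_payload(chunks, "passage")


def query_payload(question):
    """Search time: the investigator's question is embedded as a query."""
    return build_embedding_payload([question], "query")


index_body = passage_payload(["Officer arrived at 14:02.", "Witness saw a red sedan."])
search_body = query_payload("when did the officer arrive?")
```

Validating `input_type` early catches the easy mistake of indexing chunks in query mode, which would quietly degrade retrieval quality rather than raise an error.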

1.4 - Creating Searchable Entity Records from Face Images

Legal investigations often require tracking specific individuals across multiple camera sources. The entity system enables this by converting face images into searchable embeddings:

Image embedding generation:

    def embed_image(media_source: dict) -> list[float]:
        return invoke_embedding_model({
            "inputType": "image",
            "image": {"mediaSource": media_source},
        })

This uses Marengo's image embedding capability to generate a visual representation of a face. Unlike text embeddings, image embeddings capture visual features directly (facial structure, appearance, clothing), making them suitable for visual matching across video frames.

Entity creation from uploaded face image:

    @entities_bp.route("/entities/from-image", methods=["POST"])
    def api_entities_from_image():
        # Validate the upload and the entity name.
        if "image" not in request.files:
            return jsonify({"error": "No 'image' file provided"}), 400
        file = request.files["image"]
        if file.filename == "":
            return jsonify({"error": "Empty filename"}), 400

        data = request.form or {}
        name = data.get("name") or (request.get_json(silent=True) or {}).get("name") or ""
        if not name.strip():
            return jsonify({"error": "Missing 'name'"}), 400

        # Detect faces and keep the best crop.
        image_bytes = file.read()
        faces = detect_and_crop_faces(image_bytes, min_confidence=ENTITY_FACE_MIN_CONFIDENCE)
        if not faces:
            return jsonify(
                {"error": "No face detected in image. Use a clear, front-facing photo with good lighting."}
            ), 404

        best = faces[0]
        face_b64 = best["image_base64"]
        embed_b64 = best.get("embedding_crop_base64") or face_b64
        import base64
        face_bytes = base64.b64decode(embed_b64)
        media = media_source_base64(face_bytes)

        # Embed the face crop with Marengo.
        try:
            embedding = embed_image(media)
        except Exception:
            return jsonify({"error": "Internal server error"}), 500

        # Store the entity in the shared index, tagged with type="entity".
        entity_id = name.strip().lower().replace(" ", "-")
        rec = index_add(
            id=entity_id,
            embedding=embedding,
            metadata={"name": name.strip(), "face_snap_base64": face_b64},
            type="entity",
        )
        return jsonify(
            {
                "indexId": FIXED_INDEX_ID,
                "entity": {"id": rec["id"], "name": name.strip()},
                "face_snap_base64": face_b64,
            }
        )

The face detection step: Uses OpenCV's ResNet10 SSD detector to isolate faces before embedding. This preprocessing ensures Marengo receives a clean face crop rather than a full scene, improving match accuracy during search. The detector returns confidence scores, and only faces above the minimum threshold are processed.

Why entity records live in the video index: Storing entity embeddings alongside video embeddings enables direct similarity search. When an investigator searches for "person-of-interest-x," the system compares that entity's embedding against all video clip embeddings in one retrieval operation.

Part 2: Cross-Source Search and Retrieval

Once videos and documents are indexed, the platform enables unified search across all sources. This is where the single-index architecture delivers its value: one query, all sources, ranked results.

2.1 - Video Content Search with Multimodal Embeddings

The search flow supports three query types through a single interface:

Text queries: Natural language descriptions ("person in red jacket")

Image queries: Upload a screenshot, find matching footage

Entity queries: Search for a previously registered person/object

Source: backend/app/routes/search.py (Line 30)

    def search_video_index(
        data: dict,
        *,
        request_query: str = "",
        request_top_k: int | None = None,
        image_bytes: bytes | None = None,
    ) -> tuple[list[dict], str, str | None]:
        query_emb, display_query, is_entity_search, err = get_search_embedding_from_request(
            data,
            request_query=request_query,
            image_bytes=image_bytes,
        )
        # (excerpt: the function continues with index search and clip scoring)

Input normalization: get_search_embedding_from_request() handles all query types and returns a single embedding vector. Text queries are embedded via Marengo's text encoder and image queries via its image encoder, while entity queries retrieve the stored embedding directly. The search logic downstream doesn't care about input type; it just sees a vector to match.

Entity search optimization:

    for r in results:
        meta = r.get("metadata", {})
        clips = []
        output_uri = meta.get("output_s3_uri") or f"{S3_EMBEDDINGS_OUTPUT}/{r['id']}"
        if is_entity_search:
            # Entity queries: visual-only matching with a stricter threshold.
            clips = clips_above_threshold(
                query_emb,
                output_uri,
                min_score=ENTITY_CLIP_MIN_SCORE,
                visual_only=True,
                max_clips=clips_per_video,
            )
        if not clips:
            # General queries, or entity queries with no strong match: top-N clip search.
            clips = clip_search(
                query_emb,
                output_uri,
                top_n=clips_per_video,
                min_score=clip_min_score,
                visual_only=is_entity_search,
            )

The scoring difference: Entity searches use visual_only=True and higher match thresholds because face matching requires stronger visual similarity than general content search. Text queries can match via audio transcription or visual content (either modality counts). Entity queries must match visually.
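The threshold-plus-modality filter can be illustrated with a self-contained sketch. It does not reproduce the repository's `clips_above_threshold` (which reads clip embeddings from the S3 output location); here `select_clips` is a hypothetical function operating on an in-memory list, and the record keys (`start`, `end`, `modality`, `embedding`) are assumed for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_clips(query_emb, clip_records, min_score, visual_only=False, max_clips=10):
    """Keep clips scoring at least min_score, optionally ignoring non-visual embeddings."""
    matches = []
    for clip in clip_records:
        if visual_only and clip["modality"] != "visual":
            continue
        score = cosine(query_emb, clip["embedding"])
        if score >= min_score:
            matches.append({"start": clip["start"], "end": clip["end"], "score": score})
    matches.sort(key=lambda m: m["score"], reverse=True)
    return matches[:max_clips]


# Toy clip embeddings for one video (2-dimensional for readability).
CLIPS = [
    {"start": 0, "end": 6, "modality": "visual", "embedding": [1.0, 0.0]},
    {"start": 6, "end": 12, "modality": "audio", "embedding": [1.0, 0.0]},
    {"start": 12, "end": 18, "modality": "visual", "embedding": [0.6, 0.8]},
]

query = [1.0, 0.0]
entity_hits = select_clips(query, CLIPS, min_score=0.75, visual_only=True)
text_hits = select_clips(query, CLIPS, min_score=0.5)
```

With the stricter entity settings only the strong visual clip survives; the looser text settings also admit the audio clip and the weaker visual match.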

Clip-level grounding:

        # Still inside the per-video loop: attach the matched clips to each result.
        out.append({
            "id": r["id"],
            "score": r["score"],
            "metadata": meta,
            "clips": clips,
        })
    return out, display_query, None

Results include not just which videos match, but exactly where in each video the match occurs. An investigator searching for "person exiting vehicle" gets results like:

Video: "Dashcam - Main St" → Clips at 00:03:42-00:03:48, 00:07:15-00:07:21

Video: "CCTV - Parking Lot" → Clip at 00:12:03-00:12:09

This clip-level precision eliminates "search for needle, still get entire haystack" problems common in video search systems.
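Clip boundaries are typically stored as seconds, so rendering them in the HH:MM:SS form shown above takes a small formatter. This is a minimal sketch; `format_timestamp` and `format_clip` are hypothetical helper names, and the `start`/`end` keys are assumed to hold seconds.

```python
def format_timestamp(seconds: float) -> str:
    """Convert a second offset into HH:MM:SS (fractions of a second are dropped)."""
    total = int(seconds)
    hours, remainder = divmod(total, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def format_clip(clip: dict) -> str:
    """Render a clip's start and end as a display range."""
    return f"{format_timestamp(clip['start'])}-{format_timestamp(clip['end'])}"


label = format_clip({"start": 222.0, "end": 228.0})  # "00:03:42-00:03:48"
```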

2.2 - Entity-Aware Video Search: Finding People Across Sources

Entity search enables investigators to track specific individuals across multiple unrelated camera sources. Upload a face image once, search all footage:

How it works:

Entity embedding (generated during entity creation) is loaded from index

System retrieves clip embeddings for each indexed video from S3 Bedrock output

Similarity scoring identifies clips where the entity likely appears

Videos are ranked by match strength and consistency (how many clips matched, how strong the scores)
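The last step, ranking by match strength and consistency, could be sketched as follows. The weighting (60% best score, 20% mean score, 20% match count capped at five clips) is purely an illustrative assumption, as is the `rank_videos` name; the source does not state the formula the application uses.

```python
def rank_videos(clip_scores_by_video: dict) -> list:
    """Rank videos by a blend of best score, mean score, and number of matched clips."""
    ranked = []
    for video_id, scores in clip_scores_by_video.items():
        if not scores:
            continue  # no clip passed the threshold for this video
        best = max(scores)
        mean = sum(scores) / len(scores)
        consistency = min(len(scores), 5) / 5  # hypothetical cap at five matches
        ranked.append({
            "id": video_id,
            "score": 0.6 * best + 0.2 * mean + 0.2 * consistency,
            "matches": len(scores),
        })
    ranked.sort(key=lambda v: v["score"], reverse=True)
    return ranked


ranking = rank_videos({
    "cctv-lot": [0.82, 0.80, 0.79],   # several solid matches
    "dashcam-main": [0.84],           # one slightly stronger match
    "doorbell": [],                   # nothing above threshold
})
```

In this toy example the CCTV video outranks the dashcam video even though its best clip scores slightly lower, because three consistent matches are better evidence of presence than a single hit.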

Why this is faster than runtime face detection: Pre-computing clip embeddings during ingestion means search only performs similarity comparison, not face detection. Comparing 10,000 clip embeddings against an entity embedding takes milliseconds. Running face detection on 10,000 clips would take hours.

Match threshold tuning: The system uses ENTITY_CLIP_MIN_SCORE to filter weak matches. Setting this too low produces false positives (similar-looking people). Setting it too high misses valid matches (same person in different lighting/angles). The demo uses 0.75 as a balance point; your production system should make this user-configurable.

2.3 - Hybrid Search: Video + Document Retrieval

Legal evidence isn't just video. Investigators need to cross-reference footage with police reports, witness statements, and insurance forms. Hybrid search runs video and document retrieval in parallel:

Document search implementation:

    def search_document_index(text_query: str, doc_top_k: int) -> list[dict]:
        from app.services.nemo_retriever import embed_query, search_docs

        t0 = time.perf_counter()
        log.info("[DOC_SEARCH] Started doc search top_k=%d", doc_top_k)
        # Embed the query in "query" mode, then rank document chunks against it.
        query_emb = embed_query(text_query)
        docs = search_docs(query_emb, top_k=doc_top_k)
        return docs

Parallel execution pattern: The hybrid search handler starts video and document searches simultaneously using threading, then merges results once both complete. This keeps total latency close to the slower of the two searches rather than their sum.
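One way to express this pattern is with a thread pool from the standard library. The sketch below is an assumption about structure, not the repository's handler: `hybrid_search` and the two stub search functions are hypothetical, and `ThreadPoolExecutor` is used here as one thread-based option among several.

```python
from concurrent.futures import ThreadPoolExecutor


def hybrid_search(query, video_search, doc_search, video_top_k=5, doc_top_k=5):
    """Run both searches concurrently and return them as separate result lists."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        video_future = pool.submit(video_search, query, video_top_k)
        doc_future = pool.submit(doc_search, query, doc_top_k)
        # result() blocks until each search finishes, so total wait is roughly the slower one.
        return {"videos": video_future.result(), "documents": doc_future.result()}


# Stub searches standing in for the real video and document retrieval calls.
def fake_video_search(query, top_k):
    return [{"id": "dashcam-01", "score": 0.91}][:top_k]


def fake_doc_search(query, top_k):
    return [{"doc_id": "police-report", "score": 0.88}][:top_k]


response = hybrid_search("red sedan", fake_video_search, fake_doc_search)
```

Threads fit here because both searches spend most of their time waiting on network calls, so they overlap well despite the interpreter lock.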

Result merging strategy: Video results and document results are kept separate in the response (not interleaved by score) because they serve different investigative purposes. Videos provide visual evidence, documents provide corroborating narrative. Investigators review them differently.

For complete hybrid search implementation: View source

Part 3: Structured Compliance Analysis and Reporting

Search finds evidence. Analysis interprets it. The platform uses Pegasus through Bedrock to generate structured compliance reports from raw footage:

3.1 - Generating Structured Video Analysis

Pegasus transforms unstructured video into structured legal documentation: categorized risk levels, detected persons, timestamped transcripts, and compliance summaries.

Source: backend/app/services/bedrock_pegasus.py (Line 72)

    body: dict = {
        "inputPrompt": prompt[:4000],
        "mediaSource": {
            "s3Location": {
                "uri": s3_uri,
                "bucketOwner": owner,
            }
        },
    }
    # Optional parameters are only sent when the caller provides them.
    if temperature is not None:
        body["temperature"] = temperature
    if response_schema is not None:
        body["responseFormat"] = {"jsonSchema": response_schema}

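Temperature setting: Using temperature: 0 for compliance analysis makes output as repeatable as the model allows. The same video should produce the same analysis structure from run to run, which matters for legal documentation where consistency is critical. Treat this as strongly reduced variance rather than a strict guarantee of identical output.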

Response schema enforcement: The jsonSchema parameter instructs Pegasus to return structured JSON rather than free-form text. This makes the output far more likely to parse cleanly and reduces post-processing fragility, although the parser should still tolerate an occasional malformed response.
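As an illustration of what a schema-constrained request might look like, the sketch below builds the same body shape as the excerpt above with a small JSON Schema attached. The schema fields (`title`, `category`, `risk_level`, `risk_factors`, `summary`), the `build_pegasus_body` helper, and the example bucket values are hypothetical; the application's real schema may differ.

```python
import json

# Hypothetical schema covering a few of the analysis fields described in this tutorial.
ANALYSIS_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "category": {"type": "string"},
        "risk_level": {"type": "string", "enum": ["low", "medium", "high"]},
        "risk_factors": {"type": "array", "items": {"type": "string"}},
        "summary": {"type": "string"},
    },
    "required": ["title", "category", "risk_level", "summary"],
}


def build_pegasus_body(prompt, s3_uri, bucket_owner, schema=None, temperature=0):
    """Assemble the request body; the schema is attached only when provided."""
    body = {
        "inputPrompt": prompt[:4000],
        "mediaSource": {"s3Location": {"uri": s3_uri, "bucketOwner": bucket_owner}},
        "temperature": temperature,
    }
    if schema is not None:
        body["responseFormat"] = {"jsonSchema": schema}
    return body


body = build_pegasus_body(
    "Analyze this footage for compliance risks.",
    "s3://example-bucket/videos/clip.mp4",  # placeholder URI
    "123456789012",                         # placeholder account ID
    schema=ANALYSIS_SCHEMA,
)
payload = json.dumps(body)  # the serialized body passed to the model invocation
```

Listing only the essential fields under `required` keeps the schema strict about what downstream code depends on while leaving optional fields free to be omitted.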

Bedrock model invocation:

    response = client.invoke_model(
        modelId=model_id,
        body=payload,
        contentType="application/json",
        accept="application/json",
    )

The response contains generated text extracted from Bedrock's JSON response format. This text represents Pegasus's interpretation of the video content based on the provided prompt instructions.

Analysis prompt and parsing:

Source: backend/app/routes/videos.py (Line 335)

    raw_text = pegasus_analyze_video(
        s3_uri,
        get_video_analysis_prompt(),
        temperature=0,
    )
    log.info("[ANALYSIS] Pegasus response received in %.1fs (len=%d)",
             time.perf_counter() - t0, len(raw_text or ""))
    analysis_dict = parse_video_analysis_response(raw_text)

The analysis prompt (view source) instructs Pegasus to:

Categorize the video content (traffic incident, workplace safety, criminal activity, etc.)

Identify risk levels and specific risk factors

Extract timestamped transcript segments

Detect persons of interest with descriptions

Generate a compliance-focused summary

Parsing strategy: parse_video_analysis_response() handles malformed JSON gracefully (recovers triple-backtick wrapping, trailing commas, incomplete responses) because LLM outputs aren't always perfectly formatted. Robust parsing prevents analysis failures from minor formatting issues.
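A tolerant parser along these lines can be quite small. The sketch below is not `parse_video_analysis_response`; `parse_model_json` is a hypothetical function that handles fence wrapping and trailing commas, and simply returns an empty dict for truncated responses instead of attempting recovery.

```python
import json
import re


def parse_model_json(raw):
    """Best-effort parse of model output that should contain one JSON object."""
    text = (raw or "").strip()
    # Keep only the outermost {...}; this also discards any code-fence wrapping.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        return {}
    text = text[start:end + 1]
    # Drop trailing commas before a closing brace or bracket.
    # (Simplification: this would also touch such sequences inside string values.)
    text = re.sub(r",\s*([}\]])", r"\1", text)
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return {}


FENCE = "`" * 3  # built at runtime so this example contains no literal fence
wrapped = FENCE + 'json\n{"title": "Traffic stop", "risks": ["speeding",],}\n' + FENCE
parsed = parse_model_json(wrapped)
truncated = parse_model_json('{"title": "Traffic stop"')
```

Returning an empty dict on failure lets the caller decide whether to retry the model call or surface the analysis as unavailable, rather than crashing the request.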

Transcript generation: The system runs a separate Pegasus call optimized for transcription, requesting timestamped segments in structured JSON. This produces ordered transcript entries with start/end times, enabling investigators to jump directly to spoken content.

3.2 - Object Detection and Face Keyframe Extraction

Beyond basic transcription, the platform identifies detected objects and useful face keyframes with precise timestamps (the specific moments where faces appear clearly):

Source: backend/app/routes/videos.py (Line 707)

    raw_response = pegasus_analyze_video(s3_uri, get_detect_prompt())
    log.info("[INSIGHTS] Pegasus response received in %.1fs (%d chars)",
             time.perf_counter() - t0, len(raw_response or ""))
    detect_data = parse_detect_response(raw_response)
    objects_raw = detect_data["objects"]
    face_keyframes = detect_data["face_keyframes"]

The detection prompt (view source) asks Pegasus to identify:

Objects: Vehicles, weapons, physical evidence, environmental details

Face keyframes: Timestamps where faces appear with sufficient clarity for identification

Why keyframes matter: Not every frame containing a face is useful. Faces seen from behind, in motion blur, or poorly lit don't help identification. Pegasus selects keyframes where faces are frontal, well-lit, and clear: the frames an investigator would actually screenshot for evidence.

Post-processing: Once Pegasus returns keyframes and objects, the system extracts those specific frames, generates thumbnails, and stores them for quick review. This eliminates re-processing video every time an investigator needs to see a face.

3.3 - Face Presence Timeline: Where Does This Person Appear?

After detecting faces, the system builds a presence timeline showing when each detected person appears throughout the video:

Source: backend/app/routes/videos.py (Line 1027)

    if use_marengo:
        # Marengo-based presence: match each face embedding to clip embeddings
        for j, emb in enumerate(face_embeddings):
            if not emb:
                continue
            clips = clips_above_threshold(
                emb,
                output_uri,
                min_score=FACE_PRESENCE_MATCH_THRESHOLD,
                visual_only=True,
                max_clips=50,
            )
            for clip in clips:
                c_start = float(clip.get("start", 0.0))
                c_end = float(clip.get("end", c_start + 0.5))
                # Mark every timeline segment that overlaps this clip.
                for i in range(n_segments):
                    s0 = i * seg_dur
                    s1 = (i + 1) * seg_dur
                    if c_end > s0 and c_start < s1:
                        presence_by_face[j]["segment_presence"][i] = 1

How the timeline is constructed:

Video is divided into fixed-duration segments (e.g., 30-second windows)

Each detected face gets its embedding compared against all clip embeddings

Clips scoring above threshold are marked as "person present"

Matched clips are mapped onto the segment timeline

Result: A binary presence map showing which segments contain each person
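The steps above can be run end to end on toy data. This sketch reuses the overlap test from the excerpt (a clip marks a segment when `c_end > s0 and c_start < s1`); the `build_presence` function, the 120-second duration, and the clip times are assumptions chosen to show one clip inside a segment and one clip spanning a segment boundary.

```python
import math


def build_presence(clips, video_duration, seg_dur=30.0):
    """Return a 0/1 list with one entry per segment; 1 means a matched clip overlaps it."""
    n_segments = max(1, math.ceil(video_duration / seg_dur))
    presence = [0] * n_segments
    for clip in clips:
        c_start = float(clip["start"])
        c_end = float(clip["end"])
        for i in range(n_segments):
            s0 = i * seg_dur
            s1 = (i + 1) * seg_dur
            # Half-open overlap test: touching a boundary does not count as overlap.
            if c_end > s0 and c_start < s1:
                presence[i] = 1
    return presence


matched_clips = [
    {"start": 24, "end": 30},  # ends exactly at the 30 s boundary: segment 0 only
    {"start": 87, "end": 93},  # straddles the 90 s boundary: segments 2 and 3
]
timeline = build_presence(matched_clips, video_duration=120)  # [1, 0, 1, 1]
```

Because clips are 6 seconds long and segments 30 seconds, a clip can mark at most two adjacent segments, which keeps the timeline from over-reporting presence.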

Why this matters for investigations: An investigator can see at a glance: "Person A appears in segments 3, 7, 12, and 18" without watching the entire video. Click segment 7, jump directly to that 30-second window, confirm visual match.

The threshold trade-off: FACE_PRESENCE_MATCH_THRESHOLD controls sensitivity. Higher values reduce false positives but may miss valid appearances (different angles, lighting changes). Lower values catch more instances but produce more false matches requiring manual review. The demo uses 0.70; production systems should let investigators adjust this per case.

Operational Considerations for Production Deployment

This demo proves the concept. Deploying to production requires addressing several operational concerns:

Scalability: The single-index approach works for demos (12 videos) but needs architectural adjustments for production loads (1,000+ videos per case). Consider index partitioning strategies or migrating to a dedicated vector database for clip-level embeddings.

Cost management: Bedrock charges per embedding generation. A 2-hour video generates ~1,200 clip embeddings. Processing 100 hours of footage per case means 60,000+ embeddings. Plan for batch processing, caching strategies, and reuse of embeddings across related cases.

Security and compliance: Legal evidence requires chain-of-custody tracking, access controls, and audit logs. The demo stores videos in S3; production needs encryption at rest, role-based access, and immutable audit trails.

Accuracy validation: Entity matching and timeline reconstruction should include confidence scores and require human review before being submitted as legal evidence. AI-generated analysis supports investigators but doesn't replace human judgment.

Format handling: The demo assumes standard video codecs. Production systems must handle corrupted files, non-standard formats, encrypted footage, and low-quality sources gracefully.

Conclusion

This application demonstrates how video intelligence transforms legal evidence review from a time-intensive manual process into a targeted investigation workflow. By combining TwelveLabs through AWS Bedrock for video understanding with NeMo Retriever for document search, investigators can:

Search 12+ disparate video sources simultaneously with natural language queries

Track specific individuals or vehicles across unrelated camera feeds

Reconstruct chronological timelines from fragmented footage

Generate structured compliance analysis with risk categorization

Access transcript segments and detected objects with precise timestamps

The efficiency gain: Manual review of 40 hours of multi-source footage takes an investigator 40-60 hours. This platform reduces that to 4-6 hours of targeted review so that investigators spend time validating findings instead of hunting for them.

For legal tech ISVs building evidence management platforms, this architecture provides a reference implementation showing how TwelveLabs integrates into existing workflows without requiring wholesale platform rewrites. The single-index multi-source pattern, hybrid video+document retrieval, and structured analysis capabilities translate directly to production legal tech products.

Additional Resources

TwelveLabs on AWS Bedrock: Learn more about model access

NeMo Retriever documentation: Explore document retrieval capabilities

TwelveLabs sample applications: Browse additional use cases

Join the TwelveLabs community: Discord

Next steps:

Clone the reference implementation

Configure AWS Bedrock access and test with your video sources

Adapt the single-index architecture to your specific legal workflows

Integrate with your existing evidence management systems

Introduction

Legal teams processing video evidence face a compounding problem. A single case can involve 40+ hours of footage from dashcams, bodycams, CCTV systems, doorbell cameras, and insurance submissions; all in different formats, resolutions, and timestamps. Investigators spend $200-500/hour in paralegal time manually reviewing this content. Miss a critical 10-second clip buried in hour 23 of camera feed 7, and the case outcome changes.

The traditional approach (manual review, basic metadata tagging, frame-by-frame analysis) doesn't scale. Legal teams need to search across disparate sources simultaneously, reconstruct timelines from fragmented footage, and identify critical moments without watching every second of video.

This tutorial demonstrates how to build a legal evidence investigation platform using TwelveLabs through AWS Bedrock for video intelligence and NVIDIA NeMo Retriever for document search. The result: investigators search 12 video sources with natural language queries ("find the red sedan" or "show me when the person entered the building"), get ranked results with exact timestamps, and generate structured compliance reports in minutes instead of days.

What you'll build: A multi-source evidence investigator that ingests mixed-format surveillance footage, enables cross-source semantic search, performs automated compliance analysis, and reconstructs chronological timelines from disparate video sources.

Time savings: 40 hours of evidence review becomes 4 hours of targeted investigation.

You can explore the demo of the application here: Legal Evidence Investigator Application

You can check out the source code here: GitHub Repository

Demo Application

This demonstration shows how the platform handles real investigative workflows: searching across multiple video sources, identifying critical moments with precise timestamps, generating structured compliance analysis, and providing an interactive Q&A interface for deeper investigation.

Key capabilities demonstrated:

Cross-source search across 12+ disparate video formats

Entity tracking (find specific person/vehicle across all sources)

Automated compliance analysis with risk categorization

Timeline reconstruction showing sequential evidence

Conversational video Q&A with clickable timestamps

System Architecture: Why Single-Index Multi-Source Design Matters

The core architectural decision in this application addresses a fundamental constraint: TwelveLabs search operates at the index level. You search one index per request, not individual videos. This shapes everything.

The naive approach would create one index per video source. This fails immediately: you'd need 12 separate search requests to find a person across 12 cameras, then manually merge and sort results in application logic. Slow, complex, brittle.

The correct approach uses a single-index multi-source strategy: all evidence videos live in one TwelveLabs index, differentiated by rich metadata tagging. One search query hits all sources simultaneously. Results come back pre-ranked by relevance, grouped by source video, with metadata filters enabling scoped searches when needed ("only bodycam footage" or "only footage from Main Street location").

System components:

Video ingestion pipeline: Upload mixed-format footage to S3 → Index via twelvelabs.marengo-embed-3-0-v1:0 through Bedrock → Store multimodal embeddings with source metadata

Document ingestion pipeline: Extract text from PDFs → Chunk intelligently → Embed via nvidia/llama-nemotron-embed-vl-1b-v2 → Store in document index

Hybrid retrieval layer: Parallel search across video embeddings (Marengo) and document chunks (NeMo) → Merge results → Return unified response

Analysis engine: Generate structured compliance reports via twelvelabs.pegasus-1-2-v1:0, including title, risk categories, detected objects, face timelines, and transcript segments

Conversational interface: Video Q&A with clickable timestamp citations

This architecture delivers cross-source search without sacrificing performance. Single-request latency stays under 3 seconds even with 12 indexed videos because the index-level search pattern is optimized for exactly this scenario.

Preparation: What You Need Before Building

1 - AWS Bedrock Access for TwelveLabs Models

Set up AWS credentials with permissions for:

Amazon Bedrock runtime and model access

S3 for video storage and Bedrock async output

Access to these TwelveLabs models through Bedrock:

twelvelabs.marengo-embed-3-0-v1:0 (multimodal video embeddings)

twelvelabs.pegasus-1-2-v1:0 (video analysis and reasoning)

Why these models: Marengo generates the searchable representations of video content (the "encoder"), while Pegasus performs reasoning and structured analysis (the "interpreter"). You need both: Marengo makes video searchable, Pegasus makes it understandable.

2 - S3 Bucket Configuration

Create one S3 bucket structured for:

Video uploads and storage

Bedrock async embedding output location (required for batch jobs)

Generated thumbnails and analysis artifacts

Document storage

Why S3-based: Bedrock's async embedding API requires S3 input/output locations. This also enables scalable storage without hitting local disk limits.

3 - NVIDIA API Key

Get an NVIDIA API key to access:

nvidia/llama-nemotron-embed-vl-1b-v2 for document chunk embeddings

Why NeMo Retriever: Legal evidence isn't just video; it's police reports, witness statements, insurance forms. NeMo handles text retrieval while TwelveLabs handles video, giving you multimodal search across all evidence types.

4 - Clone and Configure

git clone https://github.com/Hrishikesh332/tl-compliance-intelligence cd

Follow backend environment setup in .env.example:

AWS credentials (Access Key ID, Secret Access Key, region)

S3 bucket name and Bedrock output path

NVIDIA API key

Application-specific settings (index configuration, match thresholds)

Implementation Deep Dive

Part 1: Video Ingestion and Embedding Generation

The ingestion pipeline transforms uploaded surveillance footage into searchable multimodal embeddings. This happens asynchronously because Bedrock's embedding jobs can take several minutes for hour-long videos.

1.1 - Starting the Marengo Embedding Job

When a video uploads, the system immediately stores it in S3 and kicks off a Bedrock async embedding job:

Source: backend/app/services/bedrock_marengo.py (Line 111)

def start_video_embedding(
    s3_uri: str,
    output_s3_uri: str,
    bucket_owner: str | None = None,
) -> dict:
    client = get_bedrock_client()
    owner = bucket_owner
    body = {
        "inputType": "video",
        "video": {
            "mediaSource": media_source_s3(s3_uri, owner),
            "embeddingOption": ["visual", "audio"],
            "embeddingScope": ["clip", "asset"],
        },
    }
    resp = client.start_async_invoke(
        modelId=MARENGO_MODEL_ID,
        modelInput=body,
        outputDataConfig={"s3OutputDataConfig": {"s3Uri": output_s3_uri}},
    )
    return {
        "invocation_arn": resp.get("invocationArn", ""),
        "status": "pending",
    }

Why embeddingScope: ["clip", "asset"] matters: This generates embeddings at two levels:

Clip embeddings (6-second segments): Enable granular search, such as finding the exact 6-second window where a person appears

Asset embeddings (whole video): Enable video-level similarity and grouping

Both are necessary: clip embeddings power precise temporal search, asset embeddings enable "find videos similar to this one" workflows.

Why async processing is required: A 2-hour video generates 1,200 clip embeddings (2 hours ÷ 6 seconds per clip). This takes time. Async processing lets users upload multiple videos simultaneously without blocking the UI while embeddings are generated in the background.
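
The clip arithmetic above can be checked with a few lines of Python. This is a back-of-the-envelope sketch that assumes fixed, non-overlapping 6-second clips and one embedding per clip; the `clip_count` helper is hypothetical and is not part of the repository.

```python
import math


def clip_count(duration_seconds: float, clip_seconds: float = 6.0) -> int:
    """Number of fixed-length clips needed to cover a video (last clip may be partial)."""
    return math.ceil(duration_seconds / clip_seconds)


two_hour_video = clip_count(2 * 3600)    # one 2-hour video
full_case = clip_count(100 * 3600)       # 100 hours of footage in a case
```

Under these assumptions a 2-hour video yields 1,200 clips and 100 hours of footage yields 60,000, which is why embedding generation is treated as a background job.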

1.2 - Background Job Queue and Completion Polling

The system maintains an in-memory queue of pending embedding jobs and polls Bedrock for completion:

Source: backend/app/utils/video_helpers.py (Line 104)

while True:
    job = bedrock_queue.get()
    task_id = job["task_id"]
    s3_uri = job["s3_uri"]
    output_uri = job["output_uri"]
    filename = job["filename"]
    meta = job["meta"]
    log.info("[QUEUE] Processing Bedrock start for %s (%s)", filename, task_id)
    success = False
    for attempt in range(1, max_retries + 1):
        try:
            result = start_video_embedding(s3_uri, output_uri)
            arn = result.get("invocation_arn", "")
            log.info("[QUEUE] Bedrock started for task_id=%s", task_id)
            video_tasks[task_id]["status"] = "indexing"
            video_tasks[task_id]["invocation_arn"] = arn
            video_tasks[task_id]["output_s3_uri"] = output_uri
            # Mirror the new status into the persisted index record
            for rec in vs_index():
                if rec.get("id") == task_id:
                    rec.setdefault("metadata", {})["status"] = "indexing"
                    break
            vs_save()
            # Hand the job to the polling thread
            with bedrock_poller_lock:
                bedrock_poller_jobs.append({
                    "task_id": task_id,
                    "invocation_arn": arn,
                    "output_s3_uri": output_uri,
                    "started_at": time.monotonic(),
                })
            success = True
            break
        # Excerpt ends here; the matching except clause (retry handling) is not shown.

What this accomplishes: The queue processor updates task status from "pending" to "indexing," stores the Bedrock invocation ARN for tracking, and hands off to a separate polling thread. That poller checks job completion every 30 seconds, loads finished embeddings from S3, and marks videos as "ready" when processing completes.

Why retry logic matters: Bedrock has rate limits. The retry mechanism with exponential backoff ensures jobs eventually succeed even during high-volume uploads, preventing silent failures that would leave videos in permanent "pending" state.
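
A minimal sketch of retry with exponential backoff is shown here. It is not the repository's handler: `call_with_backoff`, its parameters, and the `flaky_start` stand-in are hypothetical, and the `sleep` argument is injectable only so the example runs instantly.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(
    fn: Callable[[], T],
    max_retries: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, doubling the wait after each failure; re-raise on the last attempt."""
    for attempt in range(1, max_retries + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_retries:
                raise
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("max_retries must be at least 1")


# Hypothetical stand-in for a throttled job-start call: fails twice, then succeeds.
attempts = {"n": 0}


def flaky_start() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RuntimeError("throttled")
    return "arn:example"


delays: list[float] = []
result = call_with_backoff(flaky_start, sleep=delays.append)
```

Recording the delays instead of sleeping shows the schedule: a 1-second wait after the first failure and 2 seconds after the second. A production version would likely catch only throttling errors rather than every exception.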

Design pattern: Videos and entities share the same vector index, differentiated by type metadata. Documents use a separate chunk-based index. This gives unified video+entity search while keeping document retrieval independent.

1.3 - Document Ingestion with Semantic Chunking

Documents require different processing than video. PDFs are extracted, split into semantic chunks (by section when possible), embedded via NeMo, and stored as searchable records:

Source: backend/app/services/nemo_retriever.py (Line 688)

def ingest_document(file_path: str, doc_id: str, filename: str) -> dict:
    extra: dict = {}
    try:
        pdf_info = store_pdf_document(file_path, doc_id)
        extra.update(pdf_info)
    except Exception as exc:
        log.warning("Could not persist PDF for %s (%s)", doc_id, type(exc).__name__)
    ext = os.path.splitext(file_path)[1].lower()
    chunks: list[str] = []
    sections: list[str] = []
    if ext == ".pdf":
        try:
            pairs = split_into_semantic_chunks(file_path)
            sections = [s for s, _ in pairs]
            chunks = [t for _, t in pairs]
            log.info("Smart PDF chunking produced %d chunks for doc %s", len(chunks), doc_id)
        except Exception as exc:
            log.warning("Smart PDF extraction failed for doc %s, falling back to NeMo (%s)", doc_id, type(exc).__name__)
    if not chunks:
        chunks = extract_document(file_path)
    if not chunks:
        log.warning("No content extracted for doc %s", doc_id)
        return {"doc_id": doc_id, "chunks": 0, "status": "empty"}
    embeddings = embed_texts(chunks)
    add_chunks(
        doc_id,
        filename,
        chunks,
        embeddings,
        sections=sections or None,
        extra_metadata=extra or None,
    )
    return {"doc_id": doc_id, "chunks": len(chunks), "status": "ready"}

Smart chunking strategy: The system attempts section-based splitting first (preserving document structure), then falls back to paragraph-based chunking if structure detection fails. This matters for legal documents where section headers ("Incident Timeline," "Witness Statements") provide important retrieval context.
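
The section-first, paragraph-fallback idea can be sketched on plain text as follows. This is an illustration only and does not reproduce `split_into_semantic_chunks`: the heading heuristic in `is_heading` and all function names are assumptions, and the input is assumed to be text already extracted from the PDF.

```python
import re


def is_heading(line: str) -> bool:
    """Heuristic (an assumption): a short title-cased line with no end punctuation."""
    words = line.split()
    return 0 < len(words) <= 6 and line.istitle() and not line.endswith((".", ":", ","))


def split_by_sections(text: str) -> list[tuple[str, str]]:
    """Return (section, text) pairs, or [] when no heading is detected."""
    pairs: list[tuple[str, str]] = []
    heading, body, found = "", [], False
    for line in (raw.strip() for raw in text.splitlines()):
        if is_heading(line):
            if body:
                pairs.append((heading, " ".join(body)))
            heading, body, found = line, [], True
        elif line:
            body.append(line)
    if body:
        pairs.append((heading, " ".join(body)))
    return pairs if found else []


def split_by_paragraphs(text: str) -> list[tuple[str, str]]:
    """Fallback: one chunk per blank-line-separated paragraph, with no section label."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [("", " ".join(p.split())) for p in paragraphs]


def chunk_document(text: str) -> list[tuple[str, str]]:
    """Prefer section-based chunks; fall back to paragraphs if none are found."""
    return split_by_sections(text) or split_by_paragraphs(text)


report = """Incident Timeline
The vehicle entered the lot at 9:14 PM.
It left four minutes later.

Witness Statements
A neighbor reported hearing a collision."""

structured = chunk_document(report)
unstructured = chunk_document("first paragraph line one\nline two\n\nsecond paragraph")
```

Keeping the section title next to each chunk is what lets a result such as a "Witness Statements" hit carry its context back to the investigator.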

Embedding model selection: nvidia/llama-nemotron-embed-vl-1b-v2 generates embeddings optimized for passage retrieval. Each chunk gets its own vector, enabling precise document search at the paragraph level rather than whole-document matching.

Metadata preservation: The system stores original section headers and document links alongside chunk text, so retrieval results can point investigators back to the source paragraph in the original PDF.

Embedding generation via NVIDIA API:

Source: backend/app/services/nemo_retriever.py (Line 515)

def embed_via_requests(texts: list[str], input_type: str) -> list[list[float]]:
    """Call NVIDIA embeddings API directly with requests"""
    import requests
    t0 = time.perf_counter()
    resp = requests.post(
        "https://integrate.api.nvidia.com/v1/embeddings",
        headers={
            "Authorization": f"Bearer {NVIDIA_API_KEY}",
            "Content-Type": "application/json",
        },
        json={
            "input": texts,
            "model": EMBED_MODEL,
            "encoding_format": "float",
            "input_type": input_type,
        },
        timeout=60,
    )
    resp.raise_for_status()
    data = resp.json()
    vectors = [d["embedding"] for d in data["data"]]
    first_dim = len(vectors[0]) if vectors else 0
    return vectors

The input_type parameter: NeMo distinguishes between "passage" (document chunks being indexed) and "query" (search requests). This improves retrieval accuracy by optimizing embeddings for their intended use: passages are embedded for storage, queries are embedded for matching.

1.4 - Creating Searchable Entity Records from Face Images

Legal investigations often require tracking specific individuals across multiple camera sources. The entity system enables this by converting face images into searchable embeddings:

Image embedding generation:

def embed_image(media_source: dict) -> list[float]:
    return invoke_embedding_model({
        "inputType": "image",
        "image": {"mediaSource": media_source},
    })

This uses Marengo's image embedding capability to generate a visual representation of a face. Unlike text embeddings, image embeddings capture visual features directly (facial structure, appearance, clothing), making them suitable for visual matching across video frames.

Entity creation from uploaded face image:

@entities_bp.route("/entities/from-image", methods=["POST"])
def api_entities_from_image():
    if "image" not in request.files:
        return jsonify({"error": "No 'image' file provided"}), 400
    file = request.files["image"]
    if file.filename == "":
        return jsonify({"error": "Empty filename"}), 400
    data = request.form or {}
    name = data.get("name") or (request.get_json(silent=True) or {}).get("name") or ""
    if not name.strip():
        return jsonify({"error": "Missing 'name'"}), 400
    image_bytes = file.read()
    faces = detect_and_crop_faces(image_bytes, min_confidence=ENTITY_FACE_MIN_CONFIDENCE)
    if not faces:
        return jsonify(
            {"error": "No face detected in image. Use a clear, front-facing photo with good lighting."}
        ), 404
    best = faces[0]
    face_b64 = best["image_base64"]
    embed_b64 = best.get("embedding_crop_base64") or face_b64
    import base64
    face_bytes = base64.b64decode(embed_b64)
    media = media_source_base64(face_bytes)
    try:
        embedding = embed_image(media)
    except Exception:
        return jsonify({"error": "Internal server error"}), 500
    entity_id = name.strip().lower().replace(" ", "-")
    rec = index_add(
        id=entity_id,
        embedding=embedding,
        metadata={"name": name.strip(), "face_snap_base64": face_b64},
        type="entity",
    )
    return jsonify(
        {
            "indexId": FIXED_INDEX_ID,
            "entity": {"id": rec["id"], "name": name.strip()},
            "face_snap_base64": face_b64,
        }
    )

The face detection step: Uses OpenCV's ResNet10 SSD detector to isolate faces before embedding. This preprocessing ensures Marengo receives a clean face crop rather than a full scene, improving match accuracy during search. The detector returns confidence scores, and only faces above the minimum threshold are processed.

Why entity records live in the video index: Storing entity embeddings alongside video embeddings enables direct similarity search. When an investigator searches for "person-of-interest-x," the system compares that entity's embedding against all video clip embeddings in one retrieval operation.

Part 2: Cross-Source Search and Retrieval

Once videos and documents are indexed, the platform enables unified search across all sources. This is where the single-index architecture delivers its value: one query, all sources, ranked results.

2.1 - Video Content Search with Multimodal Embeddings

The search flow supports three query types through a single interface:

Text queries: Natural language descriptions ("person in red jacket")

Image queries: Upload a screenshot, find matching footage

Entity queries: Search for a previously registered person/object

Source: backend/app/routes/search.py (Line 30)

def search_video_index(
    data: dict,
    *,
    request_query: str = "",
    request_top_k: int | None = None,
    image_bytes: bytes | None = None,
) -> tuple[list[dict], str, str | None]:
    query_emb, display_query, is_entity_search, err = get_search_embedding_from_request(
        data,
        request_query=request_query,
        image_bytes=image_bytes,
    )

Input normalization: get_search_embedding_from_request() handles all query types and returns a single embedding vector. Text queries are embedded via Marengo's text encoder, image queries via its image encoder, and entity queries retrieve the stored embedding directly. The search logic downstream doesn't care about input type; it just sees a vector to match.

Entity search optimization:

for r in results:
    meta = r.get("metadata", {})
    clips = []
    output_uri = meta.get("output_s3_uri") or f"{S3_EMBEDDINGS_OUTPUT}/{r['id']}"
    if is_entity_search:
        clips = clips_above_threshold(
            query_emb,
            output_uri,
            min_score=ENTITY_CLIP_MIN_SCORE,
            visual_only=True,
            max_clips=clips_per_video,
        )
    if not clips:
        clips = clip_search(
            query_emb,
            output_uri,
            top_n=clips_per_video,
            min_score=clip_min_score,
            visual_only=is_entity_search,
        )

The scoring difference: Entity searches use visual_only=True and higher match thresholds because face matching requires stronger visual similarity than general content search. Text queries can match via audio transcription or visual content (either modality counts). Entity queries must match visually.

Clip-level grounding:

    out.append({
        "id": r["id"],
        "score": r["score"],
        "metadata": meta,
        "clips": clips,
    })
return out, display_query, None

Results include not just which videos match, but exactly where in each video the match occurs. An investigator searching for "person exiting vehicle" gets results like:

Video: "Dashcam - Main St" → Clips at 00:03:42-00:03:48, 00:07:15-00:07:21

Video: "CCTV - Parking Lot" → Clip at 00:12:03-00:12:09

This clip-level precision eliminates "search for needle, still get entire haystack" problems common in video search systems.
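
To show how a result record could be turned into the timestamped lines listed above, here is a small formatting sketch. The `format_timestamp` and `describe_result` helpers are hypothetical, and the `title` metadata key is an assumption; only the `start` and `end` clip fields follow the clip dictionaries used elsewhere in this tutorial.

```python
def format_timestamp(seconds: float) -> str:
    """Render a second offset as HH:MM:SS (fractions of a second are dropped)."""
    hours, remainder = divmod(int(seconds), 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def describe_result(result: dict) -> str:
    """One display line per video: its title followed by every matching clip span."""
    title = result["metadata"].get("title", result["id"])
    spans = ", ".join(
        f"{format_timestamp(clip['start'])}-{format_timestamp(clip['end'])}"
        for clip in result["clips"]
    )
    return f'Video: "{title}" → Clips at {spans}'


example = {
    "id": "dashcam-01",
    "score": 0.82,
    "metadata": {"title": "Dashcam - Main St"},
    "clips": [{"start": 222.0, "end": 228.0}, {"start": 435.0, "end": 441.0}],
}
line = describe_result(example)
```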

2.2 - Entity-Aware Video Search: Finding People Across Sources

Entity search enables investigators to track specific individuals across multiple unrelated camera sources. Upload a face image once, search all footage:

How it works:

Entity embedding (generated during entity creation) is loaded from index

System retrieves clip embeddings for each indexed video from S3 Bedrock output

Similarity scoring identifies clips where the entity likely appears

Videos are ranked by match strength and consistency (how many clips matched, how strong the scores)

Why this is faster than runtime face detection: Pre-computing clip embeddings during ingestion means search only performs similarity comparison, not face detection. Comparing 10,000 clip embeddings against an entity embedding takes milliseconds. Running face detection on 10,000 clips would take hours.

Match threshold tuning: The system uses ENTITY_CLIP_MIN_SCORE to filter weak matches. Setting this too low produces false positives (similar-looking people). Setting it too high misses valid matches (same person in different lighting/angles). The demo uses 0.75 as a balance point; your production system should make this user-configurable.
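
The effect of the threshold can be seen in a small sketch. This is not the repository's `clips_above_threshold`: the `filter_clips_by_score` function, the in-memory clip list, and the toy 2-D vectors are assumptions, and cosine similarity is assumed as the scoring function.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filter_clips_by_score(query_emb: list[float], clips: list[dict],
                          min_score: float = 0.75, max_clips: int = 5) -> list[dict]:
    """Keep clips whose similarity meets min_score, strongest first."""
    scored = []
    for clip in clips:
        score = cosine(query_emb, clip["embedding"])
        if score >= min_score:
            scored.append({"start": clip["start"], "end": clip["end"], "score": score})
    scored.sort(key=lambda c: c["score"], reverse=True)
    return scored[:max_clips]


# Hypothetical entity vector and three 6-second clips from one video.
entity = [1.0, 0.0]
video_clips = [
    {"start": 0.0, "end": 6.0, "embedding": [0.9, 0.1]},    # strong match
    {"start": 6.0, "end": 12.0, "embedding": [0.6, 0.8]},   # weak match
    {"start": 12.0, "end": 18.0, "embedding": [0.8, 0.6]},  # moderate match
]

strict = filter_clips_by_score(entity, video_clips, min_score=0.75)
loose = filter_clips_by_score(entity, video_clips, min_score=0.5)
```

At 0.75 the weak clip is dropped; lowering the threshold to 0.5 lets it through, which is the false-positive side of the trade-off described above.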

2.3 - Hybrid Search: Video + Document Retrieval

Legal evidence isn't just video. Investigators need to cross-reference footage with police reports, witness statements, and insurance forms. Hybrid search runs video and document retrieval in parallel:

Document search implementation:

def search_document_index(text_query: str, doc_top_k: int) -> list[dict]:
    from app.services.nemo_retriever import embed_query, search_docs
    t0 = time.perf_counter()
    log.info("[DOC_SEARCH] Started doc search top_k=%d", doc_top_k)
    query_emb = embed_query(text_query)
    docs = search_docs(query_emb, top_k=doc_top_k)
    return docs

Parallel execution pattern: The hybrid search handler starts video and document searches simultaneously using threading, then merges results once both complete. This keeps total latency close to the slower of the two searches rather than their sum.

Result merging strategy: Video results and document results are kept separate in the response (not interleaved by score) because they serve different investigative purposes. Videos provide visual evidence, documents provide corroborating narrative. Investigators review them differently.
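
The parallel pattern and the separate result lists can be sketched with the standard library. The two stub search functions and the `hybrid_search` wrapper are hypothetical stand-ins for the real video and document searches, and `ThreadPoolExecutor` is one reasonable way to run them concurrently; the repository's handler may use threads differently.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def search_videos_stub(query: str) -> list[dict]:
    """Stand-in for the video search; the sleep simulates network latency."""
    time.sleep(0.05)
    return [{"id": "dashcam-01", "score": 0.82}]


def search_docs_stub(query: str) -> list[dict]:
    """Stand-in for the document search."""
    time.sleep(0.02)
    return [{"doc_id": "police-report", "score": 0.77}]


def hybrid_search(query: str, video_search=search_videos_stub,
                  doc_search=search_docs_stub) -> dict:
    """Run both searches concurrently and return them as separate lists."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        video_future = pool.submit(video_search, query)
        doc_future = pool.submit(doc_search, query)
        return {
            "query": query,
            "videos": video_future.result(),
            "documents": doc_future.result(),
        }


response = hybrid_search("person exiting vehicle")
```

Both calls are submitted before either result is awaited, so the wall-clock time tracks the slower search. The two lists are returned side by side rather than interleaved by score.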

For complete hybrid search implementation: View source

Part 3: Structured Compliance Analysis and Reporting

Search finds evidence. Analysis interprets it. The platform uses Pegasus through Bedrock to generate structured compliance reports from raw footage:

3.1 - Generating Structured Video Analysis

Pegasus transforms unstructured video into structured legal documentation: categorized risk levels, detected persons, timestamped transcripts, and compliance summaries.

Source: backend/app/services/bedrock_pegasus.py (Line 72)

body: dict = {
    "inputPrompt": prompt[:4000],
    "mediaSource": {
        "s3Location": {
            "uri": s3_uri,
            "bucketOwner": owner,
        }
    },
}
if temperature is not None:
    body["temperature"] = temperature
if response_schema is not None:
    body["responseFormat"] = {"jsonSchema": response_schema}

Temperature setting: Using temperature: 0 for compliance analysis makes output as repeatable as the model allows. Repeated runs on the same video should produce the same analysis structure, which matters for legal documentation where consistency is critical.

Response schema enforcement: The jsonSchema parameter instructs Pegasus to return structured JSON rather than free-form text. This makes output far easier to parse and reduces post-processing fragility, though the parser should still tolerate the occasional malformed response.

Bedrock model invocation:

response = client.invoke_model(
    modelId=model_id,
    body=payload,
    contentType="application/json",
    accept="application/json",
)

The response contains generated text extracted from Bedrock's JSON response format. This text represents Pegasus's interpretation of the video content based on the provided prompt instructions.

Analysis prompt and parsing:

Source: backend/app/routes/videos.py (Line 335)

raw_text = pegasus_analyze_video(
    s3_uri,
    get_video_analysis_prompt(),
    temperature=0,
)
log.info("[ANALYSIS] Pegasus response received in %.1fs (len=%d)", time.perf_counter() - t0, len(raw_text or ""))
analysis_dict = parse_video_analysis_response(raw_text)

The analysis prompt (view source) instructs Pegasus to:

Categorize the video content (traffic incident, workplace safety, criminal activity, etc.)

Identify risk levels and specific risk factors

Extract timestamped transcript segments

Detect persons of interest with descriptions

Generate a compliance-focused summary

Parsing strategy: parse_video_analysis_response() handles malformed JSON gracefully (recovers triple-backtick wrapping, trailing commas, incomplete responses) because LLM outputs aren't always perfectly formatted. Robust parsing prevents analysis failures from minor formatting issues.
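
A lenient parser along these lines might look like the sketch below. It is not the repository's `parse_video_analysis_response`: the `parse_lenient_json` name and its recovery rules are assumptions. It handles a wrapping code fence and trailing commas, and it returns an empty dict for truncated responses instead of trying to repair them.

```python
import json
import re

FENCE = "`" * 3  # a triple-backtick marker, built this way to keep the example readable


def parse_lenient_json(raw: str) -> dict:
    """Best-effort parse of model output; returns {} when nothing usable is found."""
    text = (raw or "").strip()
    # Keep only the outermost {...}; this also discards any wrapping fence or prose.
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end <= start:
        return {}
    text = text[start:end + 1]
    # Drop trailing commas before a closing brace or bracket.
    # Caveat: this simple regex would also touch such a sequence inside a string value.
    text = re.sub(r",\s*([}\]])", r"\1", text)
    try:
        parsed = json.loads(text)
    except json.JSONDecodeError:
        return {}
    return parsed if isinstance(parsed, dict) else {}


# Hypothetical model output: fenced, with trailing commas.
raw_output = (
    FENCE + "json\n"
    '{"category": "traffic incident", "risk_factors": ["speeding", "red light",],}\n'
    + FENCE
)
analysis = parse_lenient_json(raw_output)
truncated = parse_lenient_json('{"category": "traf')
```

Returning an empty dict on failure lets the caller decide whether to retry the analysis or surface an error, rather than crashing on a formatting slip.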

Transcript generation: The system runs a separate Pegasus call optimized for transcription, requesting timestamped segments in structured JSON. This produces ordered transcript entries with start/end times, enabling investigators to jump directly to spoken content.

3.2 - Object Detection and Face Keyframe Extraction

Beyond transcription, the platform identifies objects and useful face keyframes with precise timestamps (the specific moments where faces appear clearly):

Source: backend/app/routes/videos.py (Line 707)

raw_response = pegasus_analyze_video(s3_uri, get_detect_prompt())
log.info("[INSIGHTS] Pegasus response received in %.1fs (%d chars)", time.perf_counter() - t0, len(raw_response or ""))
detect_data = parse_detect_response(raw_response)
objects_raw = detect_data["objects"]
face_keyframes = detect_data["face_keyframes"]

The detection prompt (view source) asks Pegasus to identify:

Objects: Vehicles, weapons, physical evidence, environmental details

Face keyframes: Timestamps where faces appear with sufficient clarity for identification

Why keyframes matter: Not every frame containing a face is useful. Faces seen from behind, in motion blur, or poorly lit don't help identification. Pegasus selects keyframes where faces are frontal, well-lit, and clear; the frames an investigator would actually screenshot for evidence.

Post-processing: Once Pegasus returns keyframes and objects, the system extracts those specific frames, generates thumbnails, and stores them for quick review. This eliminates re-processing video every time an investigator needs to see a face.

3.3 - Face Presence Timeline: Where Does This Person Appear?

After detecting faces, the system builds a presence timeline showing when each detected person appears throughout the video:

Source: backend/app/routes/videos.py (Line 1027)

if use_marengo:
    # Marengo-based presence, match each face embedding to clip embeddings
    for j, emb in enumerate(face_embeddings):
        if not emb:
            continue
        clips = clips_above_threshold(
            emb,
            output_uri,
            min_score=FACE_PRESENCE_MATCH_THRESHOLD,
            visual_only=True,
            max_clips=50,
        )
        for clip in clips:
            c_start = float(clip.get("start", 0.0))
            c_end = float(clip.get("end", c_start + 0.5))
            for i in range(n_segments):
                s0 = i * seg_dur
                s1 = (i + 1) * seg_dur
                if c_end > s0 and c_start < s1:
                    presence_by_face[j]["segment_presence"][i] = 1

How the timeline is constructed:

Video is divided into fixed-duration segments (e.g., 30-second windows)

Each detected face gets its embedding compared against all clip embeddings

Clips scoring above threshold are marked as "person present"

Matched clips are mapped onto the segment timeline

Result: A binary presence map showing which segments contain each person

Why this matters for investigations: An investigator can see at a glance: "Person A appears in segments 3, 7, 12, and 18" without watching the entire video. Click segment 7, jump directly to that 30-second window, confirm visual match.
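
The mapping from matched clips to timeline segments can be sketched in isolation. The overlap test mirrors the excerpt above, but the `build_presence` function, the way the segment count is derived, and the sample clips are assumptions for illustration.

```python
import math


def build_presence(clips: list[dict], video_duration: float,
                   seg_dur: float = 30.0) -> list[int]:
    """Binary map: 1 for every fixed-length segment that overlaps a matched clip."""
    n_segments = math.ceil(video_duration / seg_dur)
    presence = [0] * n_segments
    for clip in clips:
        c_start = float(clip["start"])
        c_end = float(clip["end"])
        for i in range(n_segments):
            s0, s1 = i * seg_dur, (i + 1) * seg_dur
            # Strict inequalities: a clip that only touches a boundary does not count.
            if c_end > s0 and c_start < s1:
                presence[i] = 1
    return presence


# Two matched clips in a 150-second video split into 30-second segments.
matched = [{"start": 24.0, "end": 30.0}, {"start": 58.0, "end": 64.0}]
timeline = build_presence(matched, video_duration=150.0)
```

The second clip straddles the 60-second boundary, so it marks two adjacent segments. The first clip ends exactly at 30 seconds and therefore marks only the first segment.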

The threshold trade-off: FACE_PRESENCE_MATCH_THRESHOLD controls sensitivity. Higher values reduce false positives but may miss valid appearances (different angles, lighting changes). Lower values catch more instances but produce more false matches requiring manual review. The demo uses 0.70; production systems should let investigators adjust this per case.

Operational Considerations for Production Deployment

This demo proves the concept. Deploying to production requires addressing several operational concerns:

Scalability: The single-index approach works for demos (12 videos) but needs architectural adjustments for production loads (1,000+ videos per case). Consider index partitioning strategies or migrating to a dedicated vector database for clip-level embeddings.

Cost management: Bedrock charges per embedding generation. A 2-hour video generates ~1,200 clip embeddings. Processing 100 hours of footage per case means 60,000+ embeddings. Plan for batch processing, caching strategies, and reuse of embeddings across related cases.

Security and compliance: Legal evidence requires chain-of-custody tracking, access controls, and audit logs. The demo stores videos in S3; production needs encryption at rest, role-based access, and immutable audit trails.

Accuracy validation: Entity matching and timeline reconstruction should include confidence scores and require human review before being submitted as legal evidence. AI-generated analysis supports investigators but doesn't replace human judgment.

Format handling: The demo assumes standard video codecs. Production systems must handle corrupted files, non-standard formats, encrypted footage, and low-quality sources gracefully.

Conclusion

This application demonstrates how video intelligence transforms legal evidence review from a time-intensive manual process into a targeted investigation workflow. By combining TwelveLabs through AWS Bedrock for video understanding with NeMo Retriever for document search, investigators can:

Search 12+ disparate video sources simultaneously with natural language queries

Track specific individuals or vehicles across unrelated camera feeds

Reconstruct chronological timelines from fragmented footage

Generate structured compliance analysis with risk categorization

Access transcript segments and detected objects with precise timestamps

The efficiency gain: Manual review of 40 hours of multi-source footage takes an investigator 40-60 hours. This platform reduces that to 4-6 hours of targeted review so that investigators spend time validating findings instead of hunting for them.

For legal tech ISVs building evidence management platforms, this architecture provides a reference implementation showing how TwelveLabs integrates into existing workflows without requiring wholesale platform rewrites. The single-index multi-source pattern, hybrid video+document retrieval, and structured analysis capabilities translate directly to production legal tech products.

Additional Resources

TwelveLabs on AWS Bedrock: Learn more about model access

NeMo Retriever documentation: Explore document retrieval capabilities

TwelveLabs sample applications: Browse additional use cases

Join the TwelveLabs community: Discord

Next steps:

Clone the reference implementation

Configure AWS Bedrock access and test with your video sources

Adapt the single-index architecture to your specific legal workflows

Integrate with your existing evidence management systems

Introduction

Legal teams processing video evidence face a compounding problem. A single case can involve 40+ hours of footage from dashcams, bodycams, CCTV systems, doorbell cameras, and insurance submissions; all in different formats, resolutions, and timestamps. Investigators spend $200-500/hour in paralegal time manually reviewing this content. Miss a critical 10-second clip buried in hour 23 of camera feed 7, and the case outcome changes.

The traditional approach (manual review, basic metadata tagging, frame-by-frame analysis) doesn't scale. Legal teams need to search across disparate sources simultaneously, reconstruct timelines from fragmented footage, and identify critical moments without watching every second of video.

This tutorial demonstrates how to build a legal evidence investigation platform using TwelveLabs through AWS Bedrock for video intelligence and NVIDIA NeMo Retriever for document search. The result: investigators search 12 video sources with natural language queries ("find the red sedan" or "show me when the person entered the building"), get ranked results with exact timestamps, and generate structured compliance reports in minutes instead of days.

What you'll build: A multi-source evidence investigator that ingests mixed-format surveillance footage, enables cross-source semantic search, performs automated compliance analysis, and reconstructs chronological timelines from disparate video sources.

Time investment: 40 hours of evidence review becomes 4 hours of targeted investigation.

You can explore the demo of the application here: Legal Evidence Investigator Application

You can check out the source code here: GitHub Repository

Demo Application

This demonstration shows how the platform handles real investigative workflows: searching across multiple video sources, identifying critical moments with precise timestamps, generating structured compliance analysis, and providing an interactive Q&A interface for deeper investigation.

Key capabilities demonstrated:

Cross-source search across 12+ disparate video formats

Entity tracking (find specific person/vehicle across all sources)

Automated compliance analysis with risk categorization

Timeline reconstruction showing sequential evidence

Conversational video Q&A with clickable timestamps

System Architecture: Why Single-Index Multi-Source Design Matters

The core architectural decision in this application addresses a fundamental constraint: TwelveLabs search operates at the index level: you search one index per request, not individual videos. This shapes everything.

The naive approach would create one index per video source. This fails immediately: you'd need 12 separate search requests to find a person across 12 cameras, then manually merge and sort results in application logic. Slow, complex, brittle.

The correct approach uses a single-index multi-source strategy: all evidence videos live in one TwelveLabs index, differentiated by rich metadata tagging. One search query hits all sources simultaneously. Results come back pre-ranked by relevance, grouped by source video, with metadata filters enabling scoped searches when needed ("only bodycam footage" or "only footage from Main Street location").

System components:

Video ingestion pipeline: Upload mixed-format footage to S3 → Index via

twelvelabs.marengo-embed-3-0-v1:0through Bedrock → Store multimodal embeddings with source metadataDocument ingestion pipeline: Extract text from PDFs → Chunk intelligently → Embed via

nvidia/llama-nemotron-embed-vl-1b-v2→ Store in document indexHybrid retrieval layer: Parallel search across video embeddings (Marengo) and document chunks (NeMo) → Merge results → Return unified response

Analysis engine: Generate structured compliance reports via

twelvelabs.pegasus-1-2-v1:0including title, risk categories, detected objects, face timelines, and transcript segmentsConversational interface: Video Q&A with clickable timestamp citations

This architecture delivers cross-source search without sacrificing performance. Single-request latency stays under 3 seconds even with 12 indexed videos because one query is matched against all clip embeddings in a single pass instead of being fanned out across 12 separate requests.
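The hybrid retrieval layer can be sketched as two searches running in parallel and returning one response. This is an illustrative outline only: `hybrid_search` and the two stand-in search functions are hypothetical, and the real services would call the Marengo-backed video index and the NeMo-backed document index.

```python
from concurrent.futures import ThreadPoolExecutor


def hybrid_search(query, search_videos, search_documents, top_k=5):
    """Run video and document retrieval concurrently and return one response."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        video_future = pool.submit(search_videos, query, top_k)
        doc_future = pool.submit(search_documents, query, top_k)
        video_hits = video_future.result()
        doc_hits = doc_future.result()
    # Scores come from different embedding models, so the two result lists are
    # kept side by side here rather than interleaved by raw score.
    return {"query": query, "videos": video_hits, "documents": doc_hits}


def fake_video_search(query, top_k):
    # Stand-in for the video index search.
    return [{"video_id": "cctv-07", "start_sec": 48.0, "score": 0.91}][:top_k]


def fake_document_search(query, top_k):
    # Stand-in for the document chunk search.
    return [{"doc_id": "police-report", "section": "Incident Timeline", "score": 0.84}][:top_k]


response = hybrid_search("red sedan running the light", fake_video_search, fake_document_search)
```

Keeping the two hit lists separate is a design assumption in this sketch; a production merge step might normalize or rerank scores before combining them.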

Preparation: What You Need Before Building

1 - AWS Bedrock Access for TwelveLabs Models

Set up AWS credentials with permissions for:

Amazon Bedrock runtime and model access

S3 for video storage and Bedrock async output

Access to these TwelveLabs models through Bedrock:

twelvelabs.marengo-embed-3-0-v1:0 (multimodal video embeddings)

twelvelabs.pegasus-1-2-v1:0 (video analysis and reasoning)

Why these models: Marengo generates the searchable representations of video content (the "encoder"), while Pegasus performs reasoning and structured analysis (the "interpreter"). You need both: Marengo makes video searchable, Pegasus makes it understandable.

2 - S3 Bucket Configuration

Create one S3 bucket structured for:

Video uploads and storage

Bedrock async embedding output location (required for batch jobs)

Generated thumbnails and analysis artifacts

Document storage

Why S3-based: Bedrock's async embedding API requires S3 input/output locations. This also enables scalable storage without hitting local disk limits.

3 - NVIDIA API Key

Get an NVIDIA API key to access:

nvidia/llama-nemotron-embed-vl-1b-v2 for document chunk embeddings

Why NeMo Retriever: Legal evidence isn't just video; it's police reports, witness statements, insurance forms. NeMo handles text retrieval while TwelveLabs handles video, giving you multimodal search across all evidence types.

4 - Clone and Configure

git clone https://github.com/Hrishikesh332/tl-compliance-intelligence cd

Follow backend environment setup in .env.example:

AWS credentials (Access Key ID, Secret Access Key, region)

S3 bucket name and Bedrock output path

NVIDIA API key

Application-specific settings (index configuration, match thresholds)
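One way to fail fast on a misconfigured environment is to validate these settings at startup. The sketch below is an assumption about how that could look: the variable names (`AWS_REGION`, `S3_BUCKET`, `BEDROCK_OUTPUT_PREFIX`, `NVIDIA_API_KEY`, `MATCH_THRESHOLD`) and default values are hypothetical, so check .env.example for the names the repository actually uses.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    aws_region: str
    s3_bucket: str
    bedrock_output_prefix: str
    nvidia_api_key: str
    match_threshold: float


# Hypothetical variable names; the repository's .env.example is authoritative.
REQUIRED = ("AWS_REGION", "S3_BUCKET", "NVIDIA_API_KEY")


def load_settings(env=None) -> Settings:
    """Read settings from a mapping (defaults to os.environ) and validate them."""
    env = os.environ if env is None else env
    missing = [key for key in REQUIRED if not env.get(key)]
    if missing:
        raise RuntimeError("Missing required settings: " + ", ".join(missing))
    return Settings(
        aws_region=env["AWS_REGION"],
        s3_bucket=env["S3_BUCKET"],
        bedrock_output_prefix=env.get("BEDROCK_OUTPUT_PREFIX", "bedrock-output/"),
        nvidia_api_key=env["NVIDIA_API_KEY"],
        match_threshold=float(env.get("MATCH_THRESHOLD", "0.5")),
    )


example = load_settings({
    "AWS_REGION": "us-east-1",
    "S3_BUCKET": "evidence-bucket",
    "NVIDIA_API_KEY": "nvapi-placeholder",
})
```

AWS access keys are left out of the dataclass on purpose: boto3 can pick them up from the environment on its own.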

Implementation Deep Dive

Part 1: Video Ingestion and Embedding Generation

The ingestion pipeline transforms uploaded surveillance footage into searchable multimodal embeddings. This happens asynchronously because Bedrock's embedding jobs can take several minutes for hour-long videos.

1.1 - Starting the Marengo Embedding Job

When a video uploads, the system immediately stores it in S3 and kicks off a Bedrock async embedding job:

Source: backend/app/services/bedrock_marengo.py (Line 111)

def start_video_embedding(
    s3_uri: str,
    output_s3_uri: str,
    bucket_owner: str | None = None,
) -> dict:
    client = get_bedrock_client()
    owner = bucket_owner
    body = {
        "inputType": "video",
        "video": {
            "mediaSource": media_source_s3(s3_uri, owner),
            "embeddingOption": ["visual", "audio"],
            "embeddingScope": ["clip", "asset"],
        },
    }
    resp = client.start_async_invoke(
        modelId=MARENGO_MODEL_ID,
        modelInput=body,
        outputDataConfig={"s3OutputDataConfig": {"s3Uri": output_s3_uri}},
    )
    return {
        "invocation_arn": resp.get("invocationArn", ""),
        "status": "pending",
    }

Why embeddingScope: ["clip", "asset"] matters: This generates embeddings at two levels:

Clip embeddings (6-second segments): Enable granular search, such as finding the exact few-second window where a person appears

Asset embeddings (whole video): Enable video-level similarity and grouping

Both are necessary: clip embeddings power precise temporal search, asset embeddings enable "find videos similar to this one" workflows.

Why async processing is required: A 2-hour video generates 1,200 clip embeddings (2 hours ÷ 6 seconds per clip). This takes time. Async processing lets users upload multiple videos simultaneously without blocking the UI while embeddings are generated in the background.
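The clip-count arithmetic above is easy to reproduce for other video lengths. The helper below is a small sketch; `estimate_clip_count` is a hypothetical name, and the 6-second default simply mirrors the segment length quoted in this article.

```python
import math


def estimate_clip_count(duration_sec: float, clip_len_sec: float = 6.0) -> int:
    """Estimate how many clip embeddings a video of the given length produces."""
    if duration_sec <= 0 or clip_len_sec <= 0:
        raise ValueError("duration and clip length must be positive")
    # A trailing partial segment still counts as one clip.
    return math.ceil(duration_sec / clip_len_sec)


two_hours = estimate_clip_count(2 * 60 * 60)       # 7200 s / 6 s = 1200
forty_hours = estimate_clip_count(40 * 60 * 60)    # 144000 s / 6 s = 24000
```

At the 40-hour case load described in the introduction, that is on the order of 24,000 clip vectors, which is why ingestion runs in the background.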

1.2 - Background Job Queue and Completion Polling

The system maintains an in-memory queue of pending embedding jobs and polls Bedrock for completion:

Source: backend/app/utils/video_helpers.py (Line 104)

while True:
    job = bedrock_queue.get()
    task_id = job["task_id"]
    s3_uri = job["s3_uri"]
    output_uri = job["output_uri"]
    filename = job["filename"]
    meta = job["meta"]
    log.info("[QUEUE] Processing Bedrock start for %s (%s)", filename, task_id)
    success = False
    for attempt in range(1, max_retries + 1):
        try:
            result = start_video_embedding(s3_uri, output_uri)
            arn = result.get("invocation_arn", "")
            log.info("[QUEUE] Bedrock started for task_id=%s", task_id)
            video_tasks[task_id]["status"] = "indexing"
            video_tasks[task_id]["invocation_arn"] = arn
            video_tasks[task_id]["output_s3_uri"] = output_uri
            for rec in vs_index():
                if rec.get("id") == task_id:
                    rec.setdefault("metadata", {})["status"] = "indexing"
                    break
            vs_save()
            with bedrock_poller_lock:
                bedrock_poller_jobs.append({
                    "task_id": task_id,
                    "invocation_arn": arn,
                    "output_s3_uri": output_uri,
                    "started_at": time.monotonic(),
                })
            success = True
            break
        # Excerpt ends here; the except/backoff branch of this try block is not shown.

What this accomplishes: The queue processor updates task status from "pending" to "indexing," stores the Bedrock invocation ARN for tracking, and hands off to a separate polling thread. That poller checks job completion every 30 seconds, loads finished embeddings from S3, and marks videos as "ready" when processing completes.
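The polling side is not shown in the excerpt, so the following is only a sketch of how such a poller might be structured. `poll_once`, `run_poller`, and the callback names are hypothetical. The status strings assume the values reported by Bedrock's async invocation status call (`get_async_invoke` in boto3); verify them against the AWS documentation before relying on them.

```python
import time


def poll_once(jobs, get_status, on_ready, on_failed):
    """Check each pending job once; return the jobs that are still running."""
    still_pending = []
    for job in jobs:
        status = get_status(job["invocation_arn"])
        if status == "Completed":
            on_ready(job)       # e.g. load embeddings from S3, mark video "ready"
        elif status == "Failed":
            on_failed(job)      # e.g. mark the task as failed
        else:
            still_pending.append(job)
    return still_pending


def run_poller(jobs, get_status, on_ready, on_failed, interval_sec=30.0, sleep=time.sleep):
    """Poll until no jobs remain, waiting interval_sec between rounds."""
    while jobs:
        jobs = poll_once(jobs, get_status, on_ready, on_failed)
        if jobs:
            sleep(interval_sec)


# Simulated statuses stand in for real Bedrock responses.
statuses = {"arn:a": iter(["InProgress", "Completed"]), "arn:b": iter(["Failed"])}
ready, failed, naps = [], [], []
run_poller(
    [{"task_id": "a", "invocation_arn": "arn:a"}, {"task_id": "b", "invocation_arn": "arn:b"}],
    get_status=lambda arn: next(statuses[arn]),
    on_ready=lambda job: ready.append(job["task_id"]),
    on_failed=lambda job: failed.append(job["task_id"]),
    sleep=naps.append,  # record waits instead of actually sleeping
)
```

Passing `get_status` and `sleep` as parameters keeps the loop testable without AWS credentials or real delays.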

Why retry logic matters: Bedrock has rate limits. The retry mechanism with exponential backoff ensures jobs eventually succeed even during high-volume uploads, preventing silent failures that would leave videos in permanent "pending" state.
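The backoff branch itself is not part of the excerpt above, so here is a generic sketch of retrying with exponential backoff and a little jitter. `call_with_backoff` and its parameters are hypothetical, not the repository's implementation.

```python
import random
import time


def call_with_backoff(fn, max_retries=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call fn(); on failure wait 1s, 2s, 4s, ... (capped) before retrying."""
    for attempt in range(1, max_retries + 1):
        try:
            return fn()
        except Exception:
            # A real service would likely catch only throttling errors here.
            if attempt == max_retries:
                raise
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Up to 10% jitter keeps simultaneous uploads from retrying in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))


attempts = {"count": 0}


def flaky_start():
    # Simulates two throttled calls followed by a success.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RuntimeError("throttled")
    return "started"


waits = []
outcome = call_with_backoff(flaky_start, sleep=waits.append)
```

Re-raising on the final attempt matters: it lets the caller mark the task as failed instead of leaving it stuck in "pending".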

Design pattern: Videos and entities share the same vector index, differentiated by type metadata. Documents use a separate chunk-based index. This gives unified video+entity search while keeping document retrieval independent.

1.3 - Document Ingestion with Semantic Chunking

Documents require different processing than video. PDFs are extracted, split into semantic chunks (by section when possible), embedded via NeMo, and stored as searchable records:

Source: backend/app/services/nemo_retriever.py (Line 688)

def ingest_document(file_path: str, doc_id: str, filename: str) -> dict:
    extra: dict = {}
    try:
        pdf_info = store_pdf_document(file_path, doc_id)
        extra.update(pdf_info)
    except Exception as exc:
        log.warning("Could not persist PDF for %s (%s)", doc_id, type(exc).__name__)
    ext = os.path.splitext(file_path)[1].lower()
    chunks: list[str] = []
    sections: list[str] = []
    if ext == ".pdf":
        try:
            pairs = split_into_semantic_chunks(file_path)
            sections = [s for s, _ in pairs]
            chunks = [t for _, t in pairs]
            log.info("Smart PDF chunking produced %d chunks for doc %s", len(chunks), doc_id)
        except Exception as exc:
            log.warning(
                "Smart PDF extraction failed for doc %s, falling back to NeMo (%s)",
                doc_id,
                type(exc).__name__,
            )
    if not chunks:
        chunks = extract_document(file_path)
    if not chunks:
        log.warning("No content extracted for doc %s", doc_id)
        return {"doc_id": doc_id, "chunks": 0, "status": "empty"}
    embeddings = embed_texts(chunks)
    add_chunks(
        doc_id,
        filename,
        chunks,
        embeddings,
        sections=sections or None,
        extra_metadata=extra or None,
    )
    return {"doc_id": doc_id, "chunks": len(chunks), "status": "ready"}

Smart chunking strategy: The system attempts section-based splitting first (preserving document structure), then falls back to paragraph-based chunking if structure detection fails. This matters for legal documents where section headers ("Incident Timeline," "Witness Statements") provide important retrieval context.
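The section-first, paragraph-fallback strategy can be illustrated on plain text. This is a simplified sketch, not the repository's `split_into_semantic_chunks`: the heading heuristic (`HEADING_RE`) and the function names are assumptions, and real PDFs would need layout-aware extraction first.

```python
import re

# Heuristic: a heading is a short line of letters, optionally numbered ("2.1 Findings").
HEADING_RE = re.compile(r"^(?:\d+(?:\.\d+)*\s+)?[A-Z][A-Za-z ,&/-]{2,60}$")


def split_into_sections(text: str) -> list[tuple[str, str]]:
    """Return (section_title, body) pairs, or [] when no headings are detected."""
    pairs, title, body, saw_heading = [], "", [], False
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        if HEADING_RE.match(stripped):
            if body:
                pairs.append((title, " ".join(body)))
            title, body, saw_heading = stripped, [], True
        else:
            body.append(stripped)
    if body:
        pairs.append((title, " ".join(body)))
    return pairs if saw_heading else []


def chunk_text(text: str) -> list[tuple[str, str]]:
    """Prefer section-based chunks; fall back to blank-line paragraphs."""
    sections = split_into_sections(text)
    if sections:
        return sections
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    return [("", " ".join(p.split())) for p in paragraphs]


report = """Incident Timeline
Officer arrived at 21:04 and secured the scene.
The driver was interviewed at 21:10.

Witness Statements
A neighbor reported hearing a collision at 20:58."""

chunks = chunk_text(report)
```

Each chunk keeps its section title, so a hit on the second chunk can be shown to the investigator as coming from "Witness Statements".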

Embedding model selection: nvidia/llama-nemotron-embed-vl-1b-v2 generates embeddings optimized for passage retrieval. Each chunk gets its own vector, enabling precise document search at the paragraph level rather than whole-document matching.

Metadata preservation: The system stores original section headers and document links alongside chunk text, so retrieval results can point investigators back to the source paragraph in the original PDF.

Embedding generation via NVIDIA API:

Source: backend/app/services/nemo_retriever.py (Line 515)

def embed_via_requests(texts: list[str], input_type: str) -> list[list[float]]:
    """Call NVIDIA embeddings API directly with requests"""
    import requests

    t0 = time.perf_counter()
    resp = requests.post(
        "https://integrate.api.nvidia.com/v1/embeddings",
        headers={
            "Authorization": f"Bearer {NVIDIA_API_KEY}",
            "Content-Type": "application/json",
        },
        json={
            "input": texts,
            "model": EMBED_MODEL,
            "encoding_format": "float",
            "input_type": input_type,
        },
        timeout=60,
    )
    resp.raise_for_status()
    data = resp.json()
    vectors = [d["embedding"] for d in data["data"]]
    first_dim = len(vectors[0]) if vectors else 0
    return vectors

The input_type parameter: NeMo distinguishes between "passage" (document chunks being indexed) and "query" (search requests). This improves retrieval accuracy by optimizing embeddings for their intended use: passages are embedded for storage, queries are embedded for matching.
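A thin pair of wrappers makes it hard to mix up the two input types. The sketch below assumes an embedding callable with the same `(texts, input_type)` shape as `embed_via_requests`; `embed_passages`, `embed_query`, `rank_chunks`, and the toy embedder are hypothetical and exist only so the example runs offline.

```python
import math


def embed_passages(chunks, embed_fn):
    """Embed document chunks for storage in the index."""
    return embed_fn(chunks, "passage")


def embed_query(query, embed_fn):
    """Embed a single search request for matching against stored passages."""
    return embed_fn([query], "query")[0]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_chunks(query_vec, chunk_vecs, chunks, top_k=3):
    scored = sorted(zip(chunks, chunk_vecs), key=lambda pair: cosine(query_vec, pair[1]), reverse=True)
    return [chunk for chunk, _ in scored[:top_k]]


# Toy keyword-count embedder standing in for the NVIDIA API call.
VOCAB = ["brake", "witness", "invoice"]
calls = []


def toy_embed(texts, input_type):
    calls.append(input_type)
    return [[float(text.lower().count(word)) for word in VOCAB] for text in texts]


chunks = ["The witness saw the car brake late.", "Invoice for towing services."]
chunk_vecs = embed_passages(chunks, toy_embed)
best = rank_chunks(embed_query("witness statement", toy_embed), chunk_vecs, chunks, top_k=1)
```

In the real pipeline, `toy_embed` would be replaced by the API-backed function, with the wrappers unchanged.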

1.4 - Creating Searchable Entity Records from Face Images

Legal investigations often require tracking specific individuals across multiple camera sources. The entity system enables this by converting face images into searchable embeddings:

Image embedding generation:

def embed_image(media_source: dict) -> list[float]:
    return invoke_embedding_model({
        "inputType": "image",
        "image": {"mediaSource": media_source},
    })

This uses Marengo's image embedding capability to generate a visual representation of a face. Unlike text embeddings, image embeddings capture visual features directly (facial structure, appearance, clothing), making them suitable for visual matching across video frames.

Entity creation from uploaded face image:

@entities_bp.route("/entities/from-image", methods=["POST"])
def api_entities_from_image():
    if "image" not in request.files:
        return jsonify({"error": "No 'image' file provided"}), 400
    file = request.files["image"]
    if file.filename == "":
        return jsonify({"error": "Empty filename"}), 400
    data = request.form or {}
    name = data.get("name") or (request.get_json(silent=True) or {}).get("name") or ""
    if not name.strip():
        return jsonify({"error": "Missing 'name'"}), 400
    image_bytes = file.read()
    faces = detect_and_crop_faces(image_bytes, min_confidence=ENTITY_FACE_MIN_CONFIDENCE)
    if not faces:
        return jsonify(
            {"error": "No face detected in image. Use a clear, front-facing photo with good lighting."}
        ), 404
    best = faces[0]
    face_b64 = best["image_base64"]
    embed_b64 = best.get("embedding_crop_base64") or face_b64
    import base64
    face_bytes = base64.b64decode(embed_b64)
    media = media_source_base64(face_bytes)
    try:
        embedding = embed_image(media)
    except Exception:
        return jsonify({"error": "Internal server error"}), 500
    entity_id = name.strip().lower().replace(" ", "-")
    rec = index_add(
        id=entity_id,
        embedding=embedding,
        metadata={"name": name.strip(), "face_snap_base64": face_b64},
        type="entity",
    )
    return jsonify(
        {
            "indexId": FIXED_INDEX_ID,
            "entity": {"id": rec["id"], "name": name.strip()},
            "face_snap_base64": face_b64,
        }
    )

The face detection step: Uses OpenCV's ResNet10 SSD detector to isolate faces before embedding. This preprocessing ensures Marengo receives a clean face crop rather than a full scene, improving match accuracy during search. The detector returns confidence scores, and only faces above the minimum threshold are processed.
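The thresholding step can be sketched independently of OpenCV. In this illustration the detection dictionaries, the `select_best_face` name, and the 0.6 default are all hypothetical; the route above takes `faces[0]`, which suggests the real helper returns faces already ordered, but that is an assumption.

```python
def select_best_face(detections, min_confidence=0.6):
    """Return the highest-confidence detection above the threshold, or None."""
    kept = [d for d in detections if d["confidence"] >= min_confidence]
    return max(kept, key=lambda d: d["confidence"], default=None)


# Hypothetical detector output: bounding boxes with confidence scores.
detections = [
    {"box": (40, 32, 96, 96), "confidence": 0.42},    # likely a false positive
    {"box": (210, 58, 120, 120), "confidence": 0.97},
    {"box": (400, 70, 88, 88), "confidence": 0.81},
]

best_face = select_best_face(detections)
no_face = select_best_face(detections, min_confidence=0.99)
```

Returning `None` when nothing clears the threshold maps naturally onto the "No face detected" error response in the route.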

Why entity records live in the video index: Storing entity embeddings alongside video embeddings enables direct similarity search. When an investigator searches for "person-of-interest-x," the system compares that entity's embedding against all video clip embeddings in one retrieval operation.
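As a rough illustration of that one-pass comparison, the sketch below stores entity and clip records in one list and distinguishes them by a `type` field. The record layout, `find_entity_in_clips`, and the 0.5 score cutoff are assumptions made for the example, not the platform's storage format.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def find_entity_in_clips(index, entity_id, min_score=0.5):
    """Use a stored entity embedding as the query against every clip record."""
    entity = next(
        (r for r in index if r["type"] == "entity" and r["id"] == entity_id), None
    )
    if entity is None:
        raise KeyError(f"Unknown entity: {entity_id}")
    hits = []
    for rec in index:
        if rec["type"] != "clip":
            continue
        score = cosine(entity["embedding"], rec["embedding"])
        if score >= min_score:
            hits.append({"video_id": rec["video_id"], "start_sec": rec["start_sec"], "score": score})
    return sorted(hits, key=lambda h: h["score"], reverse=True)


shared_index = [
    {"type": "entity", "id": "jane-doe", "embedding": [0.0, 1.0]},
    {"type": "clip", "id": "c1", "video_id": "cctv-07", "start_sec": 48.0, "embedding": [0.1, 0.9]},
    {"type": "clip", "id": "c2", "video_id": "bodycam-01", "start_sec": 12.0, "embedding": [1.0, 0.0]},
    {"type": "clip", "id": "c3", "video_id": "doorbell-02", "start_sec": 6.0, "embedding": [0.0, 1.0]},
]

sightings = find_entity_in_clips(shared_index, "jane-doe")
```

Because the entity vector is already stored, this lookup needs no new embedding call at query time.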

Part 2: Cross-Source Search and Retrieval

Once videos and documents are indexed, the platform enables unified search across all sources. This is where the single-index architecture delivers its value: one query, all sources, ranked results.

2.1 - Video Content Search with Multimodal Embeddings

The search flow supports three query types through a single interface:

Text queries: Natural language descriptions ("person in red jacket")

Image queries: Upload a screenshot, find matching footage

Entity queries: Search for a previously registered person/object

Source: backend/app/routes/search.py (Line 30)

def search_video_index(
    data: dict,
    *,
    request_query: str = "",
    request_top_k: int | None = None,
    image_bytes: bytes | None = None,
) -> tuple[list[dict], str, str | None]:
    query_emb, display_query, is_entity_search, err = get_search_embedding_from_request(
        data,
        request_query=request_query,
        image_bytes=image_bytes,
    )

Input normalization: get_search_embedding_from_request() handles all query types and returns a single embedding vector. Text queries are embedded via Marengo's text encoder, image queries via its image encoder, and entity queries retrieve the stored embedding directly. The search logic downstream doesn't care about the input type; it just sees a vector to match.
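The normalization step can be pictured as a small dispatcher. This is a sketch under assumptions, not the body of `get_search_embedding_from_request()`: the `entity_id` and `query` request keys, the precedence order, and the injected `embed_text`, `embed_image`, and `entity_store` arguments are hypothetical. Only the four-value return shape mirrors the call shown above.

```python
def resolve_query_embedding(data, image_bytes, embed_text, embed_image, entity_store):
    """Return (embedding, display_query, is_entity_search, error)."""
    entity_id = (data.get("entity_id") or "").strip()
    query = (data.get("query") or "").strip()
    if entity_id:
        record = entity_store.get(entity_id)
        if record is None:
            return None, entity_id, True, f"Unknown entity '{entity_id}'"
        # Entity searches reuse the stored vector; no embedding call is needed.
        return record["embedding"], record.get("name", entity_id), True, None
    if image_bytes:
        return embed_image(image_bytes), "image query", False, None
    if query:
        return embed_text(query), query, False, None
    return None, "", False, "Provide a text query, an image, or an entity id"


# Stand-in encoders and entity store for illustration.
store = {"jane-doe": {"name": "Jane Doe", "embedding": [0.1, 0.9]}}
fake_text = lambda text: [1.0, 0.0]
fake_image = lambda raw: [0.5, 0.5]

text_result = resolve_query_embedding({"query": "person in red jacket"}, None, fake_text, fake_image, store)
entity_result = resolve_query_embedding({"entity_id": "jane-doe"}, None, fake_text, fake_image, store)
image_result = resolve_query_embedding({}, b"\x89PNG", fake_text, fake_image, store)
```

Whatever the input, the caller receives one vector plus a display label, so the ranking code never branches on query type.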

Entity search optimization: