Building Pegasus 1.5: From Clip-Based QA to Time-Based Metadata

Kian Kim, Shannon Hong

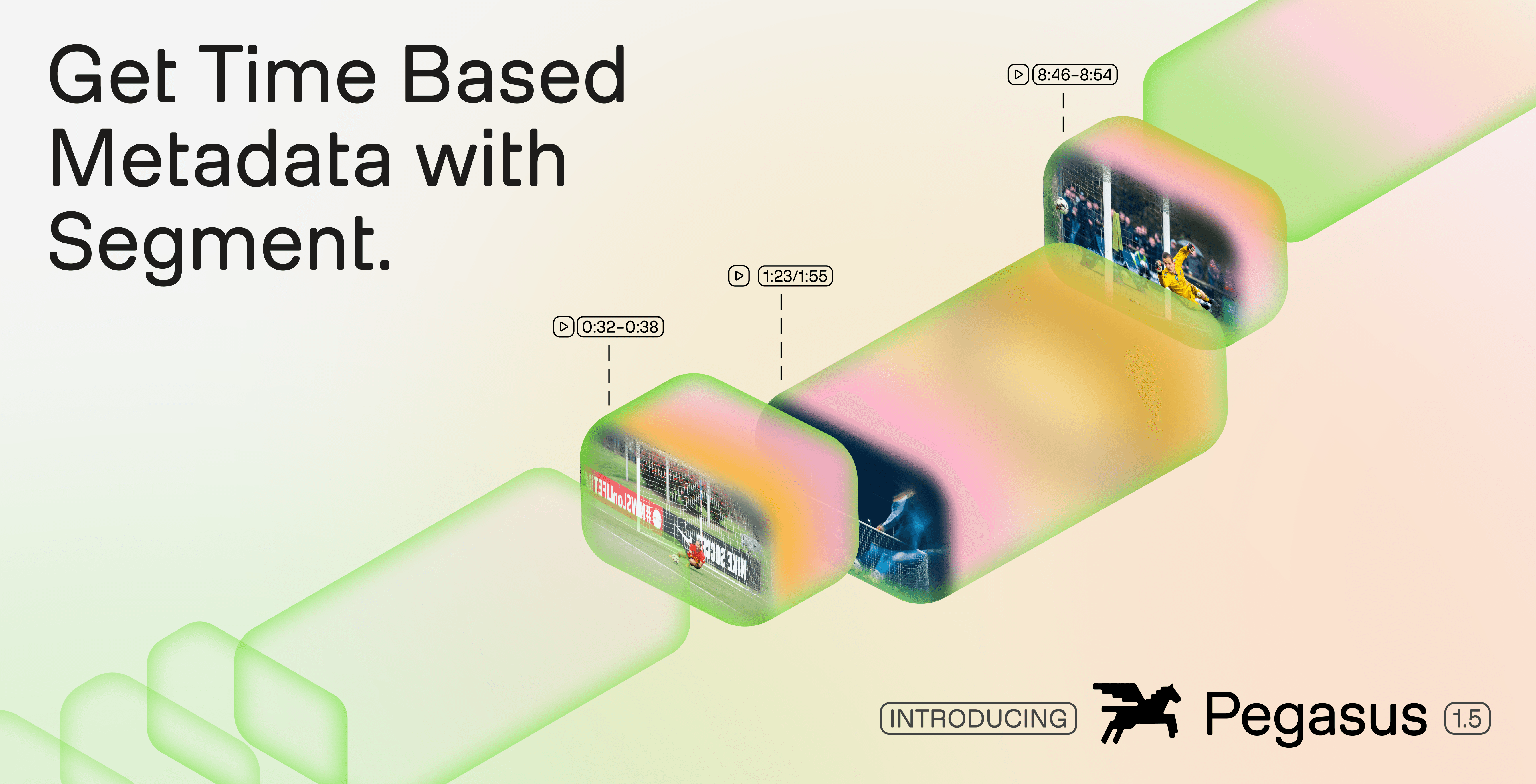

Pegasus 1.5 introduces a fundamental shift in video understanding: from answering questions about clips to generating structured, time-based metadata across entire videos. This post breaks down the technical challenges behind temporal segmentation, how TwelveLabs designed new evaluation metrics and datasets from first principles, and why aligning training with these metrics matters for real-world reliability. The result is a system that turns video into queryable, production-ready data, enabling search, analytics, and automation at scale.


2026/04/19

12 Minutes

1 - From Clip-Based Answers to Structured Video Intelligence

Video is one of the richest forms of information, yet it remains one of the least accessible to software systems. Unlike text or images, meaning in video is not contained in a single moment: it emerges through temporal continuity, multimodal interactions, and causal relationships across time. A sports play unfolds over several seconds, a narrative arc in a film spans minutes, and a brand appearance may be visually subtle but contextually significant. To operationalize video at scale, systems must reason not only about what happens, but also when it happens.

This is where time-based metadata becomes essential. Time-based metadata transforms raw video into structured, timestamped data, enabling developers to treat video as a queryable and computable asset. Instead of manually reviewing footage or relying on brittle heuristics, organizations can define what matters to their business (such as editorial segments, sports plays, speaker changes, or brand appearances) and automatically extract these events across entire video libraries.

Earlier versions of Pegasus addressed a different class of problem. Pegasus 1.2 was designed as a video question-answering (QA) system: users provided a clip and asked a question, and the model returned an answer or summary. This paradigm works well for factoid queries or localized understanding. However, it introduces a fundamental limitation for real-world workflows: the user must already know where to look. In large-scale environments (such as media archives, live sports, or streaming catalogs), this assumption does not hold.

As a result, Pegasus 1.2 could not fully support workflows that require systematic segmentation and consistent metadata extraction across entire videos. The absence of model-driven boundary detection meant that users still relied on manual annotation or heuristic preprocessing to define temporal regions of interest.

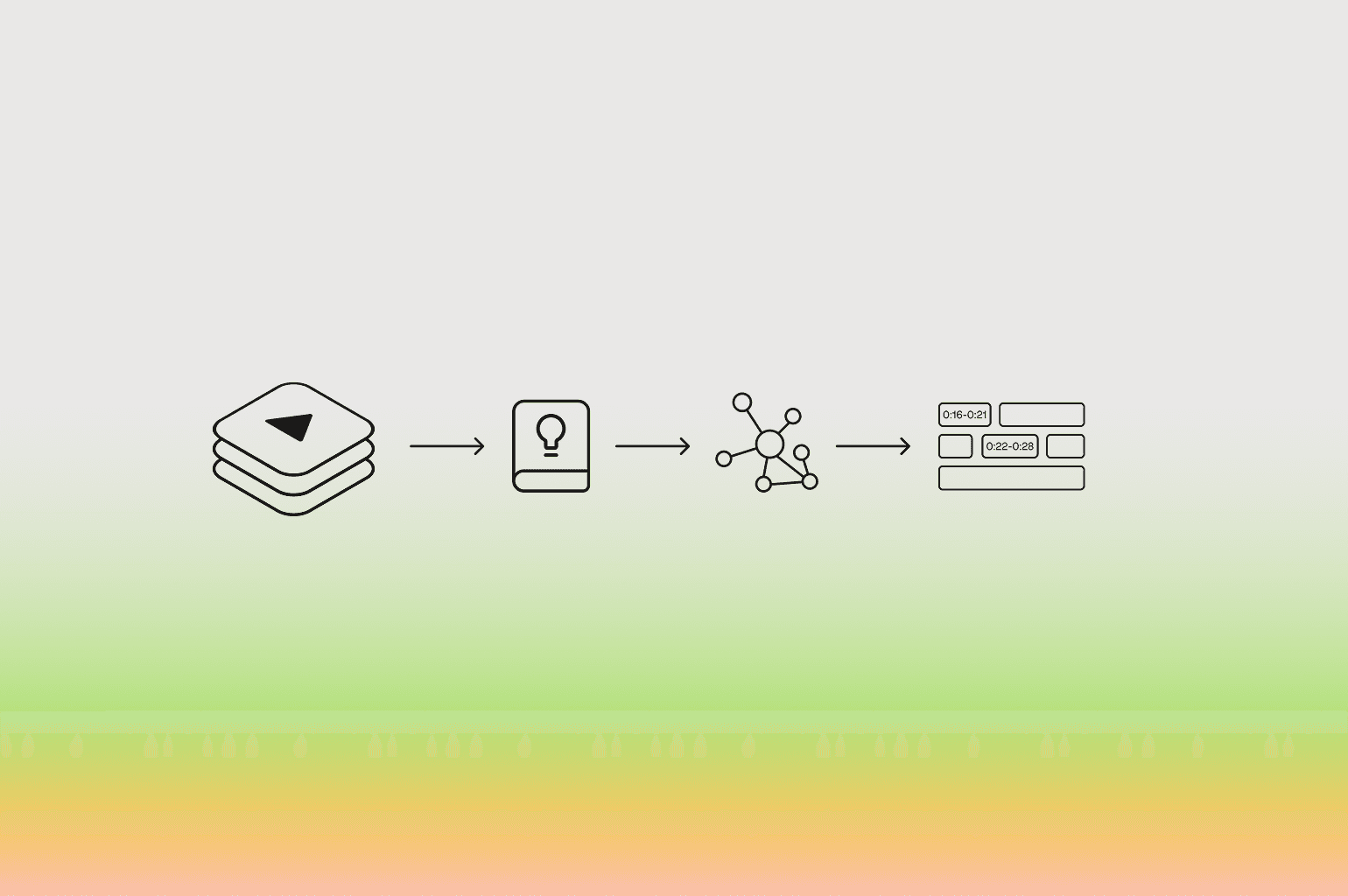

Pegasus 1.5 represents a fundamental shift. Instead of answering questions about a predefined clip, the model partitions an entire video according to a user-defined schema and labels each segment with structured metadata. This transformation moves video understanding from a retrieval problem to a data generation pipeline, where video becomes a first-class input for analytics, automation, and agentic systems.

2 - The Cost of Unstructured Video

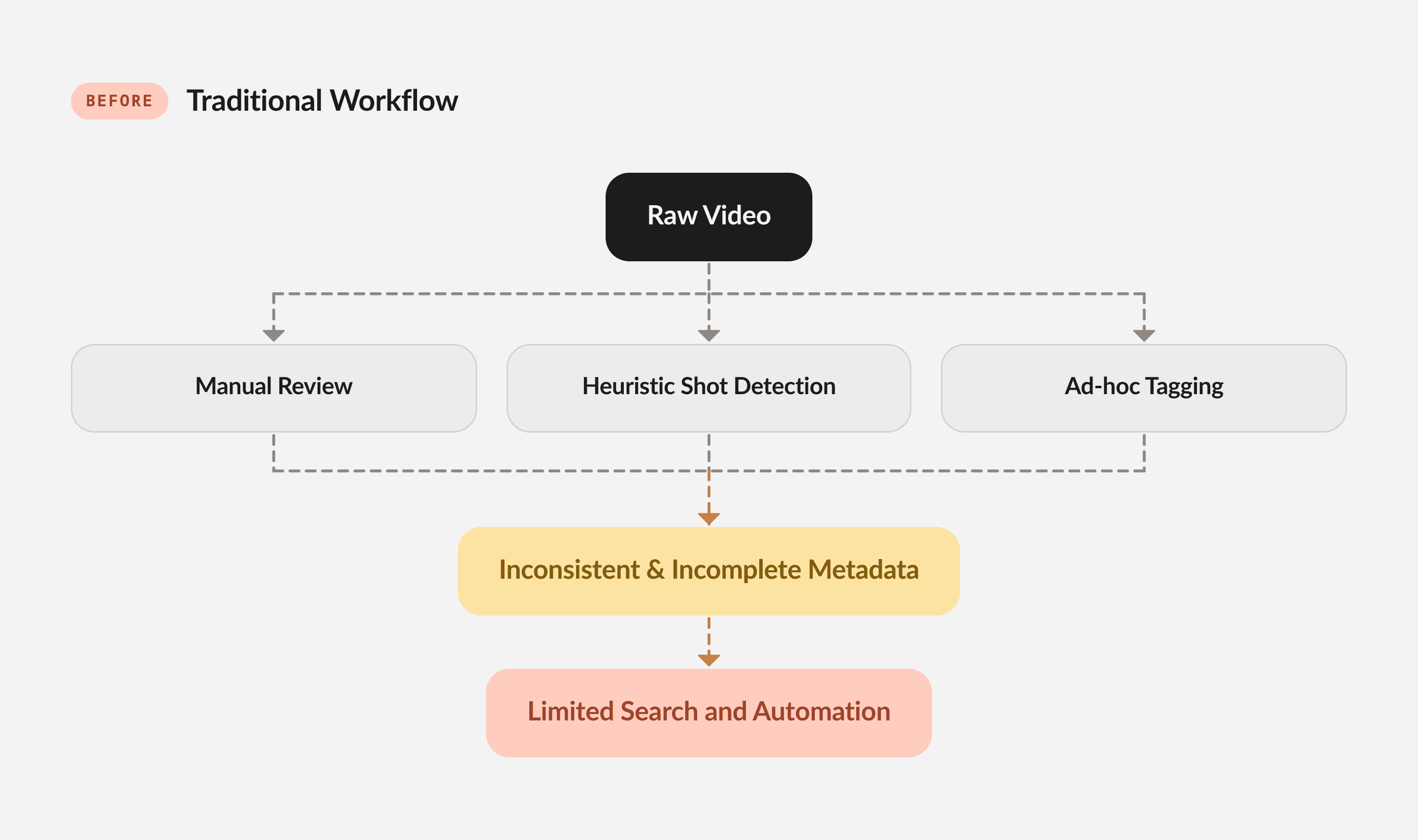

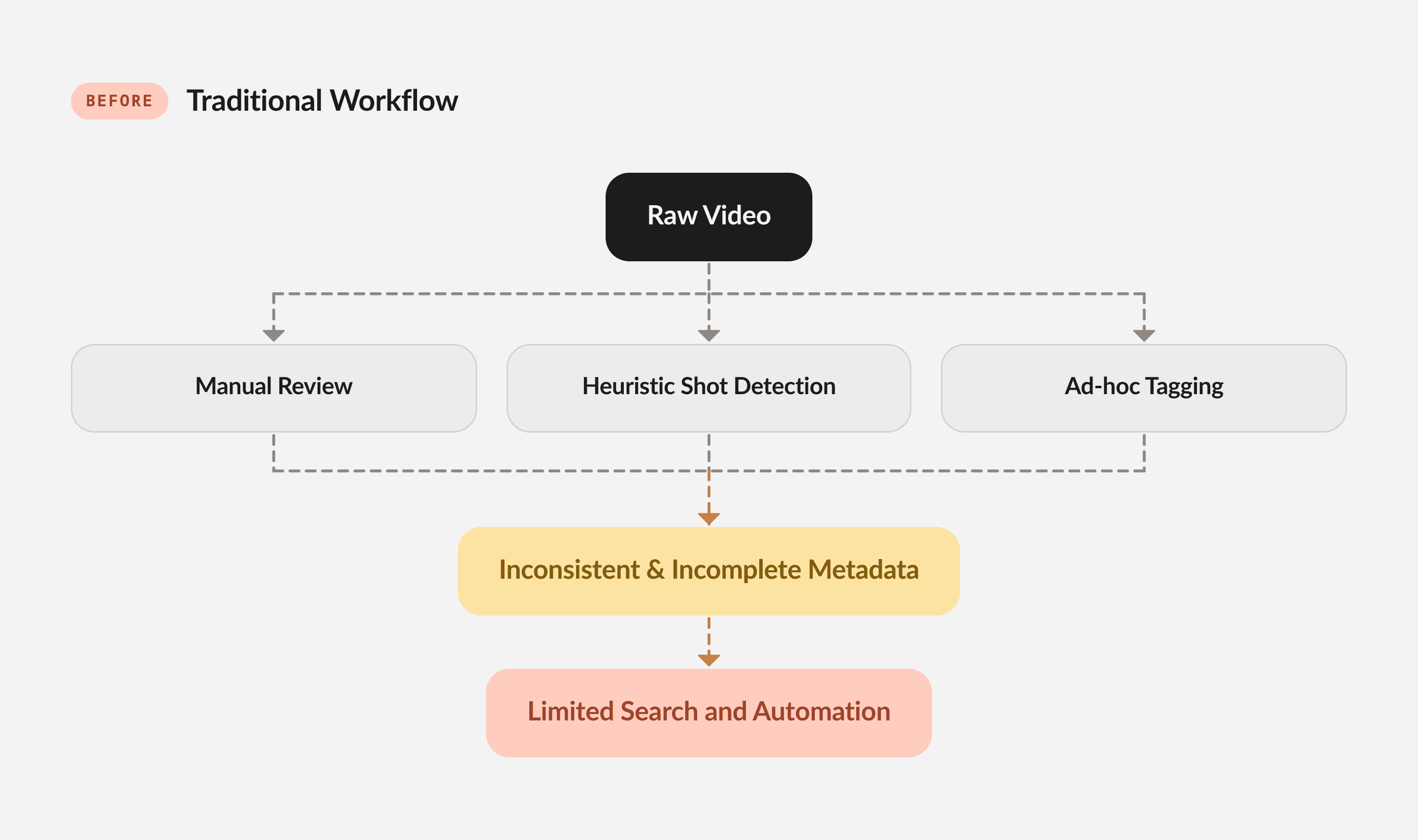

Despite the explosive growth of video content, most organizations still manage it as unstructured media. Traditional approaches rely on manual logging, keyword tagging, or simple shot-detection algorithms. These methods fail to capture the semantic and temporal complexity required for downstream decision-making.

From a systems perspective, the challenge stems from three properties of video:

Temporal Ambiguity: Events do not have explicit boundaries. Determining where a news story begins or where a sports play ends requires contextual reasoning across multiple modalities.

Multimodal Dependence: Meaning arises from the interaction of visual cues, speech, audio signals, and on-screen text.

Schema Variability: Different organizations care about different events, requiring flexible and domain-specific definitions.

Without reliable time-based metadata, video cannot be easily indexed, queried, or integrated into data pipelines, limiting its value for automation and analytics.

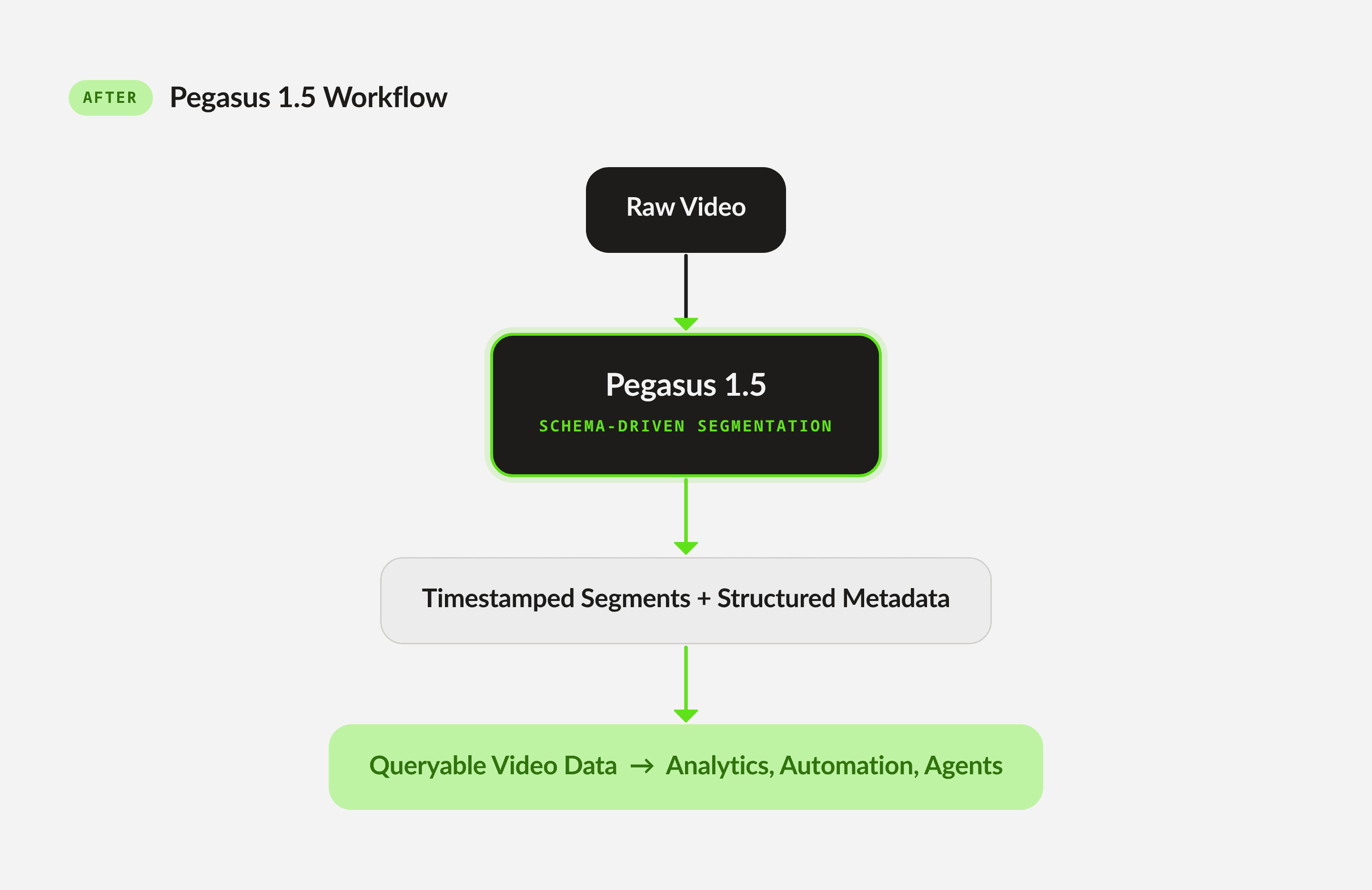

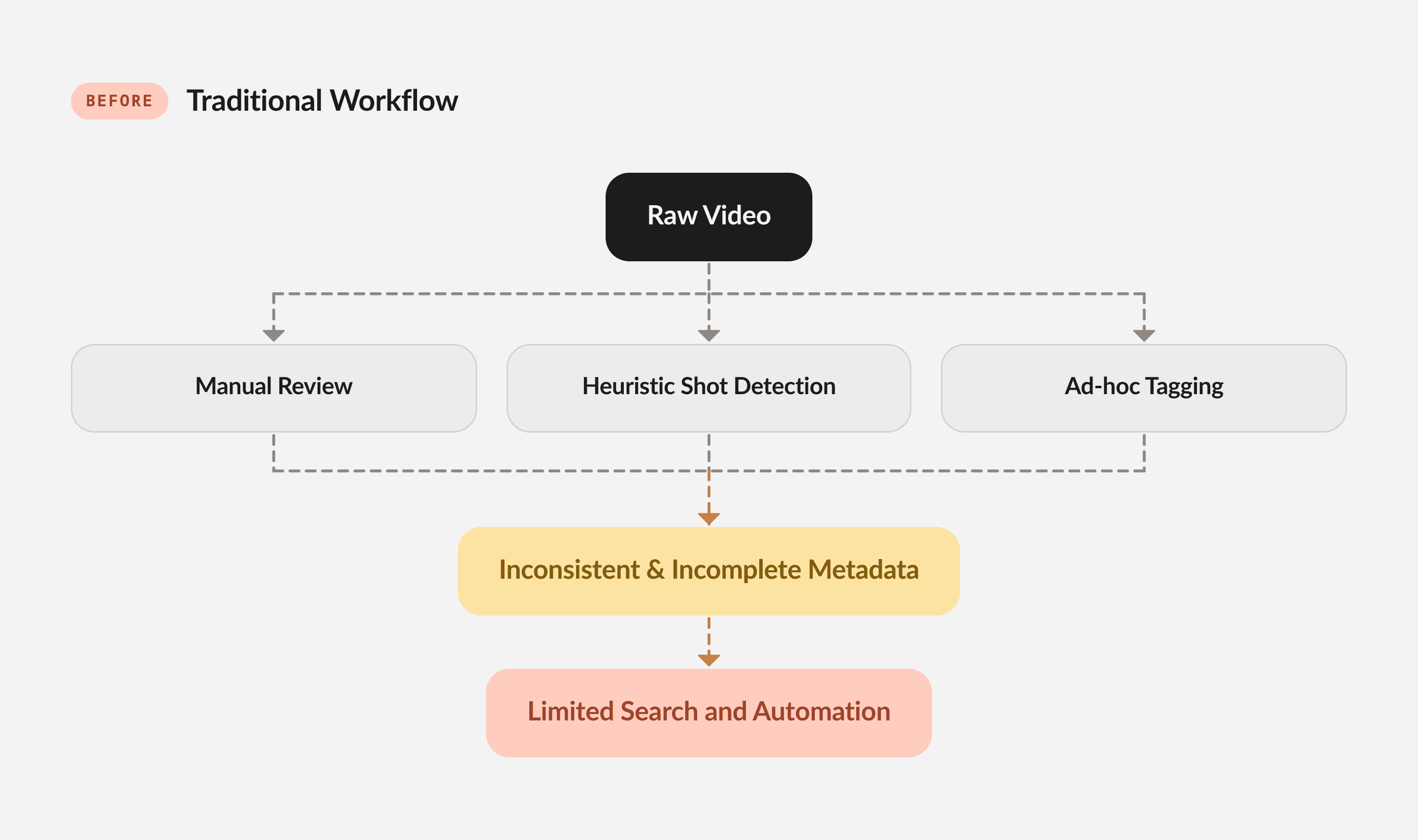

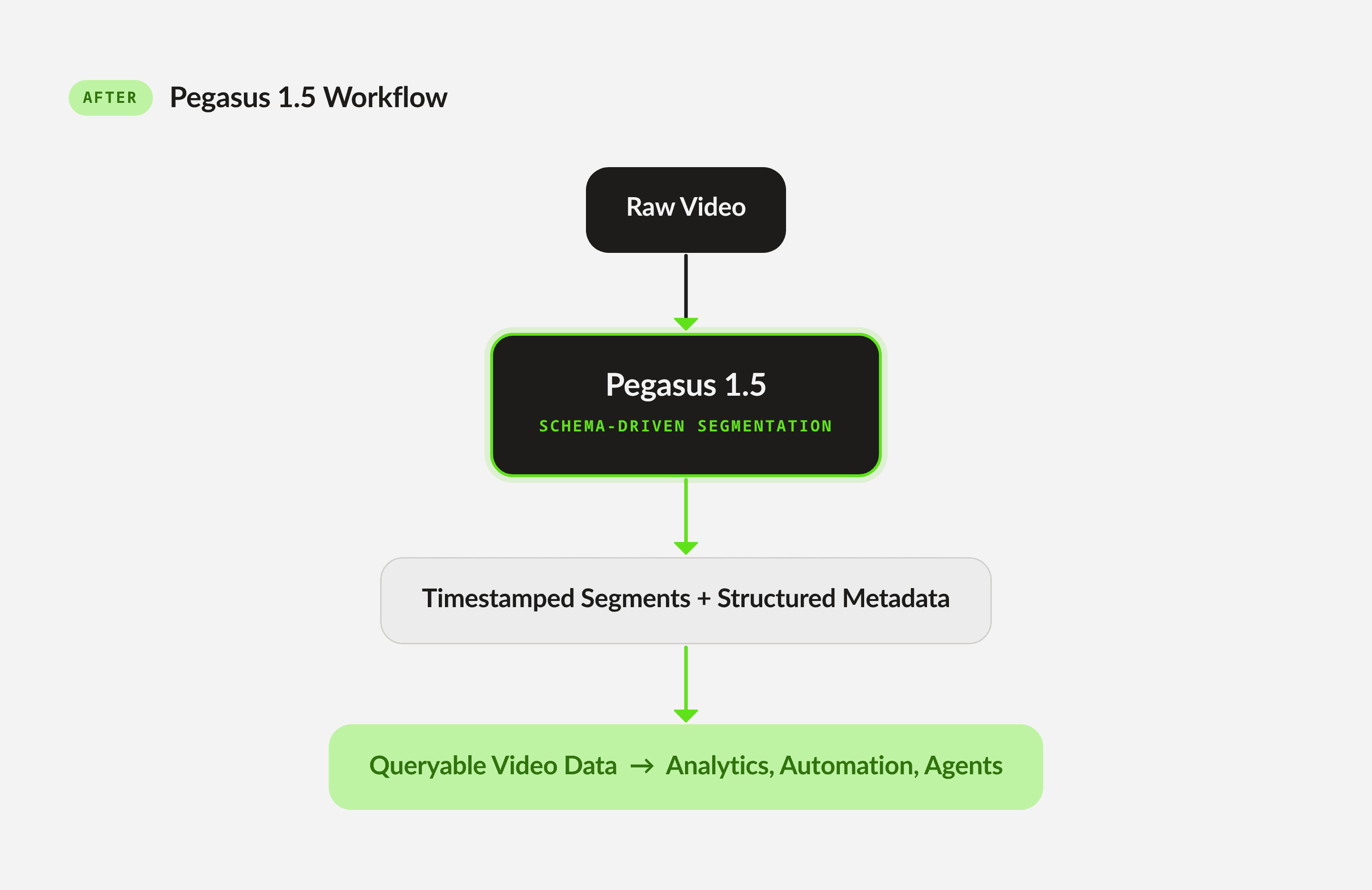

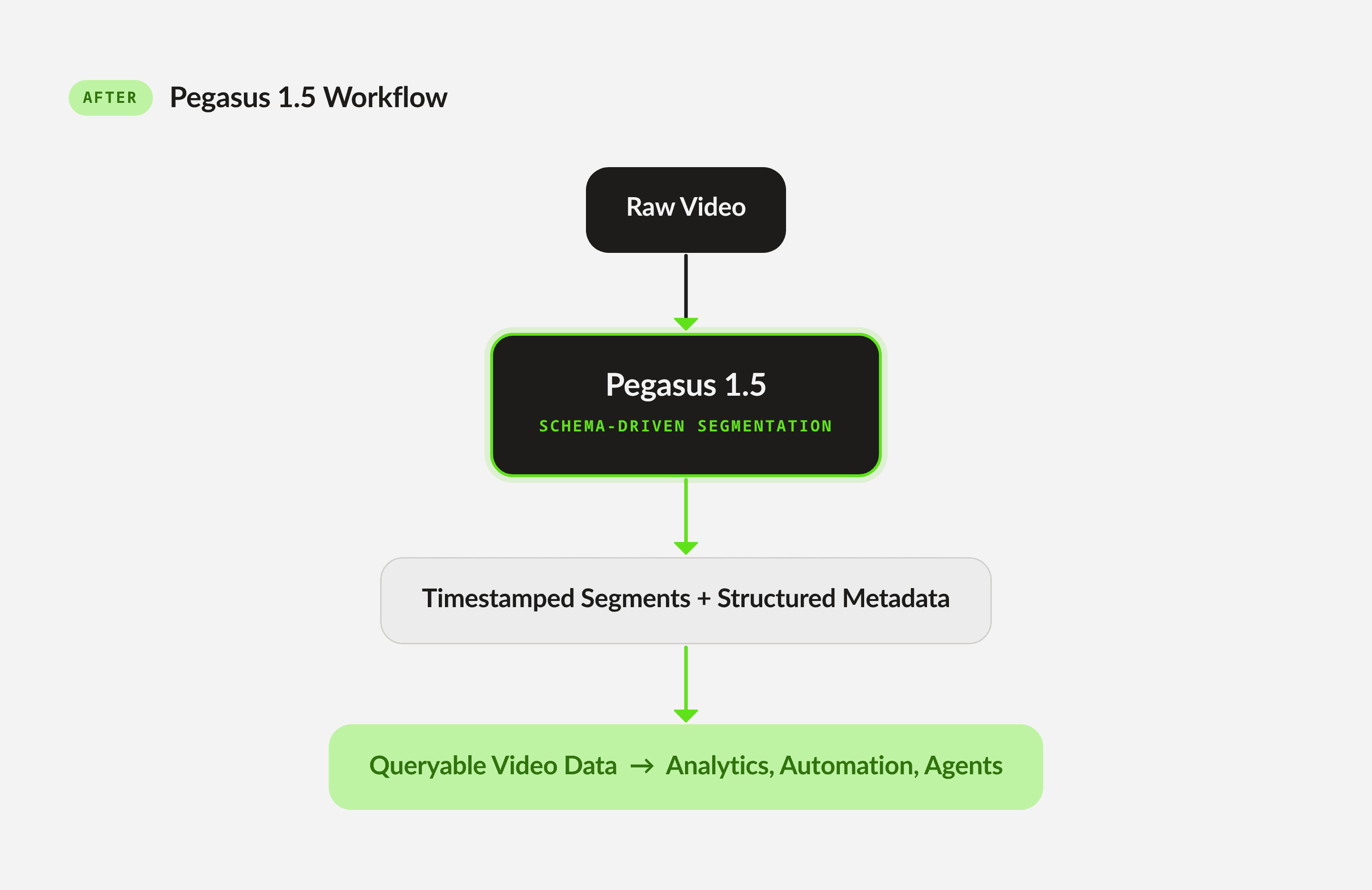

The operational gap between raw video and structured data can be illustrated through the following comparison:

Diagram 1: Traditional Workflow

Diagram 2: Pegasus 1.5 Workflow

By allowing developers to define a vocabulary of events, Pegasus 1.5 shifts the burden of temporal reasoning from humans to the model, enabling scalable and consistent metadata extraction.

In media & entertainment, editorial teams must segment long-form content into narrative units (such as scenes, topics, or character appearances) to support archiving, recommendation, and monetization. With Pegasus 1.5, they can define schemas for editorial segments and automatically extract structured metadata across entire catalogs. This enables semantic search, automated highlight generation, and efficient content reuse.

In sports analytics, identifying and labeling plays within game footage is labor-intensive and time-sensitive, often requiring domain experts to review entire games. With Pegasus 1.5, analysts can define schemas for plays (such as goals, fouls, or turnovers) and automatically detect each instance with precise temporal boundaries. This supports real-time highlight creation and performance analytics.

In streaming, platforms must identify brand appearances, scene transitions, and contextual moments to enable targeted advertising and content monetization. With Pegasus 1.5, they can define schemas for brand visibility or contextual triggers, allowing automatic detection of monetizable moments across vast libraries.

3 - Technical Foundations: Defining Time-Based Metadata

Time-based metadata (TBM) refers to structured information associated with specific temporal segments of a video. Each segment is defined by precise start and end timestamps and is enriched with metadata fields that conform to a user-defined schema.

Formally, a TBM output can be represented as:

Segment = { start_time: float, end_time: float, metadata: { key: value, ... } }

A complete analysis consists of a set of non-overlapping segments for each semantic definition provided by the user. This structure enables deterministic integration with downstream systems such as search indexes, analytics platforms, and agentic workflows.
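A minimal Python sketch of this representation, assuming segments are half-open intervals [start_time, end_time) so that back-to-back segments count as adjacent rather than overlapping. The Segment class and is_non_overlapping helper are illustrative names, not part of any TwelveLabs SDK.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Segment:
    """One time-based metadata segment covering [start_time, end_time)."""
    start_time: float
    end_time: float
    metadata: dict[str, Any] = field(default_factory=dict)


def is_non_overlapping(segments: list[Segment]) -> bool:
    """Check that the segments of ONE segment definition do not overlap.

    Intervals are treated as half-open, so a segment ending at 3.0 and the
    next one starting at 3.0 are adjacent rather than overlapping.
    """
    if any(s.end_time <= s.start_time for s in segments):
        return False  # zero or negative duration is malformed
    ordered = sorted(segments, key=lambda s: s.start_time)
    return all(prev.end_time <= nxt.start_time
               for prev, nxt in zip(ordered, ordered[1:]))


camera_cuts = [
    Segment(0.0, 3.0, {"camera_angle": "medium"}),
    Segment(3.0, 10.0, {"camera_angle": "high"}),
]
print(is_non_overlapping(camera_cuts))                             # True
print(is_non_overlapping([Segment(0.0, 5.0), Segment(4.0, 8.0)]))  # False
```

The check applies per definition only: segments from different definitions (for example a scoring play and a camera segment) may legitimately overlap in time.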

Pegasus 1.5 introduces a schema-first interaction model through the /analyze API. Instead of prompting the model with open-ended questions, developers define segment definitions that describe:

What constitutes a segment (semantic description)

Which metadata fields to extract

Optional constraints such as duration or contextual references

This design ensures consistency, determinism, and integration readiness for production environments.

This is an example /analyze API request for the basketball video above:

{
  "model_name": "pegasus1.5",
  "analysis_mode": "time_based_metadata",
  "video": {
    "type": "url",
    "url": "https://example.com/video.mp4"
  },
  "response_format": {
    "type": "segment_definitions",
    "segment_definitions": [
      {
        "id": "non_gameplay_footage",
        "description": "Generate segments only when the content on screen IS NOT actual gameplay.",
        "fields": [
          {
            "name": "description",
            "type": "string",
            "description": "A rich long description of the non-gameplay footage."
          }
        ]
      },
      {
        "id": "scoring_plays",
        "description": "Segment any time a team scores points. The segment should be the entire scoring play.",
        "fields": [
          {
            "name": "points_scored",
            "type": "string",
            "description": "How many points were scored during the play.",
            "enum": ["2pt", "1pt", "3pt"]
          },
          {
            "name": "shot_type",
            "type": "string",
            "description": "The shot type from the scoring play.",
            "enum": ["jump_start", "layup", "dunk", "foul_shot"]
          },
          {
            "name": "scoring_team",
            "type": "string",
            "description": "Name of the team that scored."
          }
        ]
      },
      {
        "id": "camera_cut",
        "description": "Segment any time only when there is a hard cut in the camera. Otherwise continue the current segment.",
        "fields": [
          {
            "name": "camera_angle",
            "type": "string",
            "description": "Angle of the current camera.",
            "enum": ["high", "low", "medium"]
          }
        ]
      }
    ]
  },
  "temperature": 0,
  "min_segment_duration": 2
}
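When segment definitions are generated programmatically, the same request body can be assembled in code. This is a sketch only: the keys are copied from the example request, while string_field and build_tbm_request are hypothetical helpers, and the uniqueness check on ids is an assumption based on results being keyed by definition id. Sending the request (endpoint, authentication) is deliberately left out.

```python
import json
from typing import Any, Optional


def string_field(name: str, description: str,
                 enum: Optional[list[str]] = None) -> dict[str, Any]:
    """One metadata field spec; `enum`, if given, restricts the allowed values."""
    spec: dict[str, Any] = {"name": name, "type": "string", "description": description}
    if enum is not None:
        spec["enum"] = list(enum)
    return spec


def build_tbm_request(video_url: str, definitions: list[dict[str, Any]],
                      min_segment_duration: float = 2,
                      temperature: float = 0) -> dict[str, Any]:
    """Assemble a request body with the same keys as the example request."""
    ids = [d["id"] for d in definitions]
    # Assumption: results are keyed by definition id, so ids should be unique.
    if len(ids) != len(set(ids)):
        raise ValueError("segment definition ids must be unique")
    return {
        "model_name": "pegasus1.5",
        "analysis_mode": "time_based_metadata",
        "video": {"type": "url", "url": video_url},
        "response_format": {
            "type": "segment_definitions",
            "segment_definitions": definitions,
        },
        "temperature": temperature,
        "min_segment_duration": min_segment_duration,
    }


camera_cut = {
    "id": "camera_cut",
    "description": "Segment any time only when there is a hard cut in the camera. "
                   "Otherwise continue the current segment.",
    "fields": [string_field("camera_angle", "Angle of the current camera.",
                            ["high", "low", "medium"])],
}
body = build_tbm_request("https://example.com/video.mp4", [camera_cut])
print(json.dumps(body, indent=2))
```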

And this is an example response structure:

{
  "result": {
    "generation_id": "5be1b8c6-7e92-43ce-b37d-ba1b53ed1ebe",
    "data": "{\"non_gameplay_footage\": [{\"start_time\": 0.0, \"end_time\": 11.0, \"metadata\": {\"description\": \"The video opens with a title card announcing Loyola's NCAA championship win, followed by a wide shot of the packed arena and a close-up of the 'NCAA Finals 1963' logo on the court.\"}}, {\"start_time\": 20.0, \"end_time\": 22.0, \"metadata\": {\"description\": \"A brief cutaway shot shows a woman in the stands smiling and clapping enthusiastically.\"}}, {\"start_time\": 29.0, \"end_time\": 31.0, \"metadata\": {\"description\": \"The camera focuses on the scoreboard, showing the score as 48-50 with 2:04 remaining in the second period.\"}}, {\"start_time\": 53.0, \"end_time\": 54.0, \"metadata\": {\"description\": \"A quick shot of spectators in the stands reacting to the game.\"}}, {\"start_time\": 56.0, \"end_time\": 58.0, \"metadata\": {\"description\": \"The camera captures two men in the stands celebrating with their arms raised.\"}}, {\"start_time\": 68.0, \"end_time\": 70.0, \"metadata\": {\"description\": \"The scoreboard is shown again, displaying a tied score of 54-54 with 5:00 remaining in the third period.\"}}, {\"start_time\": 76.0, \"end_time\": 78.0, \"metadata\": {\"description\": \"A shot of the crowd shows fans cheering and celebrating during the game.\"}}, {\"start_time\": 81.0, \"end_time\": 83.0, \"metadata\": {\"description\": \"Two cheerleaders are shown on the court, performing a routine.\"}}, {\"start_time\": 88.0, \"end_time\": 90.0, \"metadata\": {\"description\": \"A man in a suit is seen standing and clapping in the stands.\"}}, {\"start_time\": 109.0, \"end_time\": 118.0, \"metadata\": {\"description\": \"Following the final shot, the Loyola players and coaches rush onto the court to celebrate their championship victory.\"}}, {\"start_time\": 118.0, \"end_time\": 121.0, \"metadata\": {\"description\": \"The final scoreboard is displayed, showing Loyola's victory with a score of 60-58 as the time runs out.\"}}], \"scoring_plays\": [{\"start_time\": 12.0, \"end_time\": 20.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"University of Cincinnati Bearcats\"}}, {\"start_time\": 23.0, \"end_time\": 28.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 46.0, \"end_time\": 52.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 60.0, \"end_time\": 68.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 71.0, \"end_time\": 76.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 79.0, \"end_time\": 83.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"University of Cincinnati Bearcats\"}}, {\"start_time\": 102.0, \"end_time\": 110.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}], \"camera_cut\": [{\"start_time\": 0.0, \"end_time\": 3.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 3.0, \"end_time\": 10.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 10.0, \"end_time\": 11.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 11.0, \"end_time\": 20.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 20.0, \"end_time\": 22.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 22.0, \"end_time\": 28.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 28.0, \"end_time\": 31.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 31.0, \"end_time\": 38.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 38.0, \"end_time\": 45.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 45.0, \"end_time\": 53.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 53.0, \"end_time\": 54.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 54.0, \"end_time\": 56.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 56.0, \"end_time\": 58.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 58.0, \"end_time\": 68.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 68.0, \"end_time\": 70.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 70.0, \"end_time\": 76.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 76.0, \"end_time\": 78.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 78.0, \"end_time\": 81.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 81.0, \"end_time\": 83.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 83.0, \"end_time\": 88.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 88.0, \"end_time\": 90.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 90.0, \"end_time\": 108.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 108.0, \"end_time\": 118.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 118.0, \"end_time\": 121.0, \"metadata\": {\"camera_angle\": \"medium\"}}]}",
    "finish_reason": "stop",
    "usage": {
      "output_tokens": <number>
    }
  }
}

Here is the “data” field after parsing it with JSON.parse():

{
    "non_gameplay_footage": [
        {"start_time": 0.0, "end_time": 11.0, "metadata": {"description": "The video opens with a title card announcing Loyola's NCAA championship win, followed by a wide shot of the packed arena and a close-up of the 'NCAA Finals 1963' logo on the court."}},
        {"start_time": 20.0, "end_time": 22.0, "metadata": {"description": "A brief cutaway shot shows a woman in the stands smiling and clapping enthusiastically."}},
        {"start_time": 29.0, "end_time": 31.0, "metadata": {"description": "The camera focuses on the scoreboard, showing the score as 48-50 with 2:04 remaining in the second period."}},
        {"start_time": 53.0, "end_time": 54.0, "metadata": {"description": "A quick shot of spectators in the stands reacting to the game."}},
        {"start_time": 56.0, "end_time": 58.0, "metadata": {"description": "The camera captures two men in the stands celebrating with their arms raised."}},
        {"start_time": 68.0, "end_time": 70.0, "metadata": {"description": "The scoreboard is shown again, displaying a tied score of 54-54 with 5:00 remaining in the third period."}},
        {"start_time": 76.0, "end_time": 78.0, "metadata": {"description": "A shot of the crowd shows fans cheering and celebrating during the game."}},
        {"start_time": 81.0, "end_time": 83.0, "metadata": {"description": "Two cheerleaders are shown on the court, performing a routine."}},
        {"start_time": 88.0, "end_time": 90.0, "metadata": {"description": "A man in a suit is seen standing and clapping in the stands."}},
        {"start_time": 109.0, "end_time": 118.0, "metadata": {"description": "Following the final shot, the Loyola players and coaches rush onto the court to celebrate their championship victory."}},
        {"start_time": 118.0, "end_time": 121.0, "metadata": {"description": "The final scoreboard is displayed, showing Loyola's victory with a score of 60-58 as the time runs out."}}
    ],
    "scoring_plays": [
        {"start_time": 12.0, "end_time": 20.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "University of Cincinnati Bearcats"}},
        {"start_time": 23.0, "end_time": 28.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}},
        {"start_time": 46.0, "end_time": 52.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}},
        {"start_time": 60.0, "end_time": 68.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}},
        {"start_time": 71.0, "end_time": 76.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}},
        {"start_time": 79.0, "end_time": 83.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "University of Cincinnati Bearcats"}},
        {"start_time": 102.0, "end_time": 110.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}}
    ],
    "camera_cut": [
        {"start_time": 0.0, "end_time": 3.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 3.0, "end_time": 10.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 10.0, "end_time": 11.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 11.0, "end_time": 20.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 20.0, "end_time": 22.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 22.0, "end_time": 28.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 28.0, "end_time": 31.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 31.0, "end_time": 38.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 38.0, "end_time": 45.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 45.0, "end_time": 53.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 53.0, "end_time": 54.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 54.0, "end_time": 56.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 56.0, "end_time": 58.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 58.0, "end_time": 68.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 68.0, "end_time": 70.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 70.0, "end_time": 76.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 76.0, "end_time": 78.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 78.0, "end_time": 81.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 81.0, "end_time": 83.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 83.0, "end_time": 88.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 88.0, "end_time": 90.0, "metadata": {"camera_angle": "medium"}},
        {"start_time": 90.0, "end_time": 108.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 108.0, "end_time": 118.0, "metadata": {"camera_angle": "high"}},
        {"start_time": 118.0, "end_time": 121.0, "metadata": {"camera_angle": "medium"}}
    ]
}

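Because the parsed data is keyed by segment definition id, downstream code can treat each definition as a table of rows. The Python sketch below uses a trimmed excerpt of the example output and two hypothetical helpers, points_by_team and seconds_by_value, to total points per team and on-screen time per camera angle. The totals reflect only the segments present in the excerpt.

```python
import json
from collections import Counter

# Trimmed excerpt of the "data" string from the example response
# (three scoring plays and three camera segments, for brevity).
raw_data = (
    '{"scoring_plays": ['
    '{"start_time": 12.0, "end_time": 20.0, "metadata": {"points_scored": "2pt", '
    '"shot_type": "layup", "scoring_team": "University of Cincinnati Bearcats"}}, '
    '{"start_time": 23.0, "end_time": 28.0, "metadata": {"points_scored": "2pt", '
    '"shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}}, '
    '{"start_time": 46.0, "end_time": 52.0, "metadata": {"points_scored": "2pt", '
    '"shot_type": "layup", "scoring_team": "Loyola Ramblers"}}], '
    '"camera_cut": ['
    '{"start_time": 0.0, "end_time": 3.0, "metadata": {"camera_angle": "medium"}}, '
    '{"start_time": 3.0, "end_time": 10.0, "metadata": {"camera_angle": "high"}}, '
    '{"start_time": 10.0, "end_time": 11.0, "metadata": {"camera_angle": "medium"}}]}'
)
data = json.loads(raw_data)


def points_by_team(plays):
    """Sum points per team from scoring-play segments ("2pt" -> 2)."""
    totals = Counter()
    for seg in plays:
        meta = seg["metadata"]
        totals[meta["scoring_team"]] += int(meta["points_scored"].removesuffix("pt"))
    return dict(totals)


def seconds_by_value(segments, field_name):
    """Total on-screen seconds per value of one metadata field."""
    totals = Counter()
    for seg in segments:
        totals[seg["metadata"][field_name]] += seg["end_time"] - seg["start_time"]
    return dict(totals)


print(points_by_team(data["scoring_plays"]))
# {'University of Cincinnati Bearcats': 2, 'Loyola Ramblers': 4}
print(seconds_by_value(data["camera_cut"], "camera_angle"))
# {'medium': 4.0, 'high': 7.0}
```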
We designed this schema around the following principles:

Temporal Precision: Each segment is anchored by explicit timestamps, enabling frame-accurate alignment with downstream systems such as editing tools or analytics pipelines.

Non-Overlapping Segments: Within a segment definition, outputs are mutually exclusive, ensuring deterministic and consistent interpretations of the video timeline.

Open Vocabulary with Structured Outputs: Developers can define domain-specific vocabularies while maintaining strict schema compliance, enabling seamless integration into databases and agentic workflows.

Multimodal Reasoning: Segment boundaries and metadata are inferred from the interaction of visual, auditory, and linguistic signals, reflecting the true complexity of video.

4 - Building an Evaluation Framework for Time-Based Metadata

4.1 - Evaluation dataset: built from scratch with rigorous human verification

Building a reliable evaluation system for time-based metadata required solving a bootstrapping problem: no suitable benchmark existed.

Existing academic benchmarks for video understanding, such as Video-MME, evaluate a fundamentally different task. They reduce video comprehension to multiple-choice question answering: given a clip and a question, select the correct option from a fixed set of candidates. This format is useful for measuring general video reasoning, but it bears little resemblance to producing structured temporal outputs. Time-based metadata extraction requires schema-conditioned segmentation: the model must partition an entire video according to a user-defined vocabulary of events, determine where each segment begins and ends, and populate structured metadata fields for each one. No existing benchmark evaluates this combination of dense temporal boundary prediction with per-segment structured metadata across diverse content domains.

We built the evaluation set from scratch. The dataset spans multiple content verticals, each presenting distinct segmentation challenges:

News broadcasts require identifying editorial packages, anchor segments, and field reports with precise transition boundaries.

Movies and television demand scene-level segmentation with narrative and visual metadata.

Sports footage requires detection of plays, scoring events, and game-state transitions at much finer temporal granularity.

For each domain, we defined segment definitions and metadata schemas that mirror real production workflows: the same schemas a media company or sports broadcaster would use in practice.

Critically, we implemented a multi-stage human verification process rather than treating annotation as a single pass. The workflow consists of four phases:

Project initialization: clarifying segment definitions and resolving ambiguity in boundary criteria before annotation begins.

Segmentation triage: reviewing boundary quality after segmentation is completed, but before metadata annotation starts.

Metadata triage: verifying field-level metadata accuracy after extraction, confirming direction and quality before scaling.

Final validation: end-to-end quality review before a sample enters the evaluation set.

At each stage, samples that fail quality thresholds are either corrected or removed. This process revealed an important lesson: a universal annotation workflow does not work for time-based metadata. Different segment types (for example, a shot-level visual boundary versus a high-level editorial transition) require different annotation strategies, different levels of domain expertise, and different quality criteria. We addressed this by routing tasks based on complexity and volume: straightforward boundary types were validated at scale using external annotators, while semantically complex definitions (such as narrative beats or gradual topic transitions) received dedicated expert review.

The result is an evaluation set where we have high confidence in both segment boundary placement and metadata accuracy, a prerequisite for any metric to be meaningful.

4.2 - Metric design: why time-based metadata evaluation is different

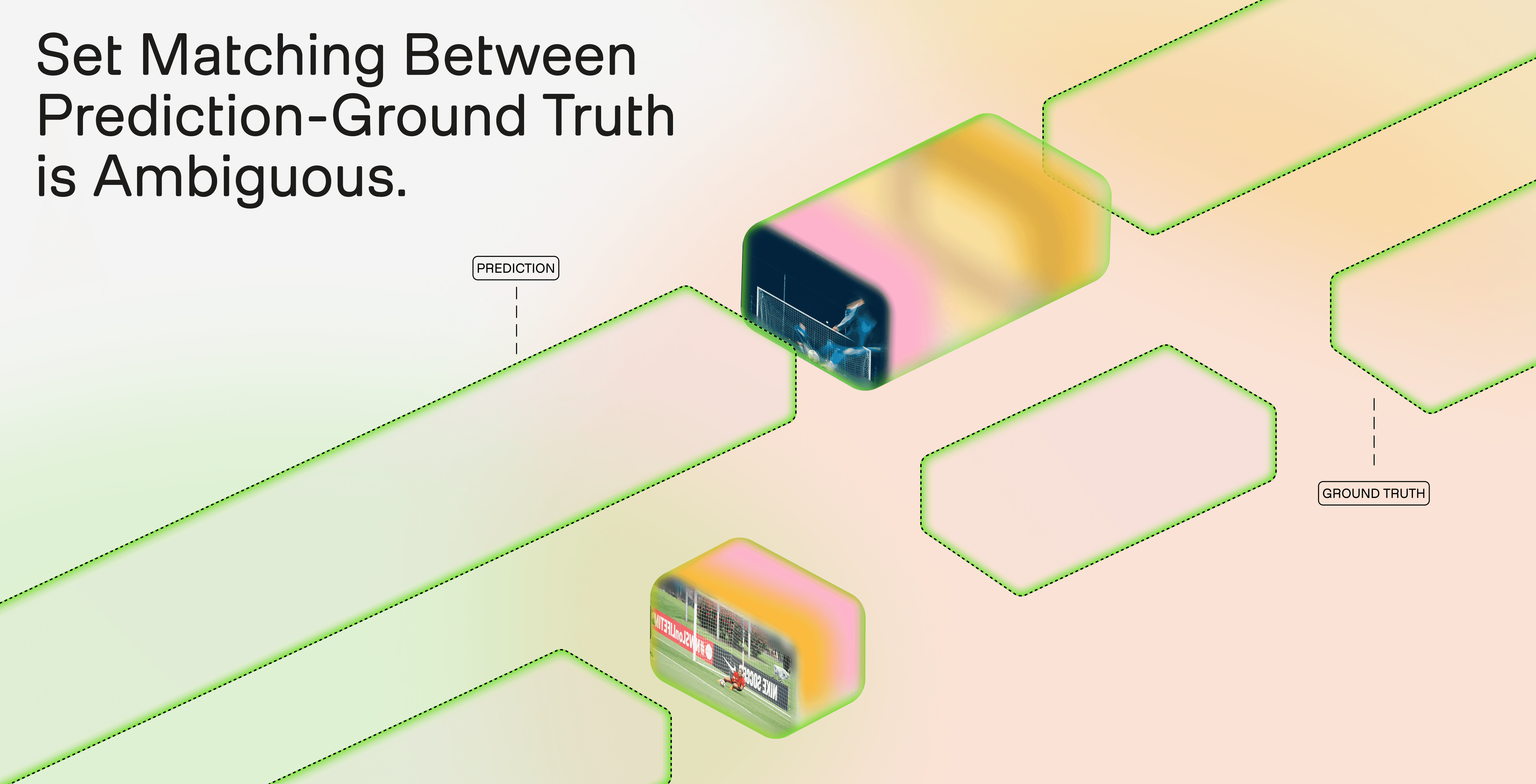

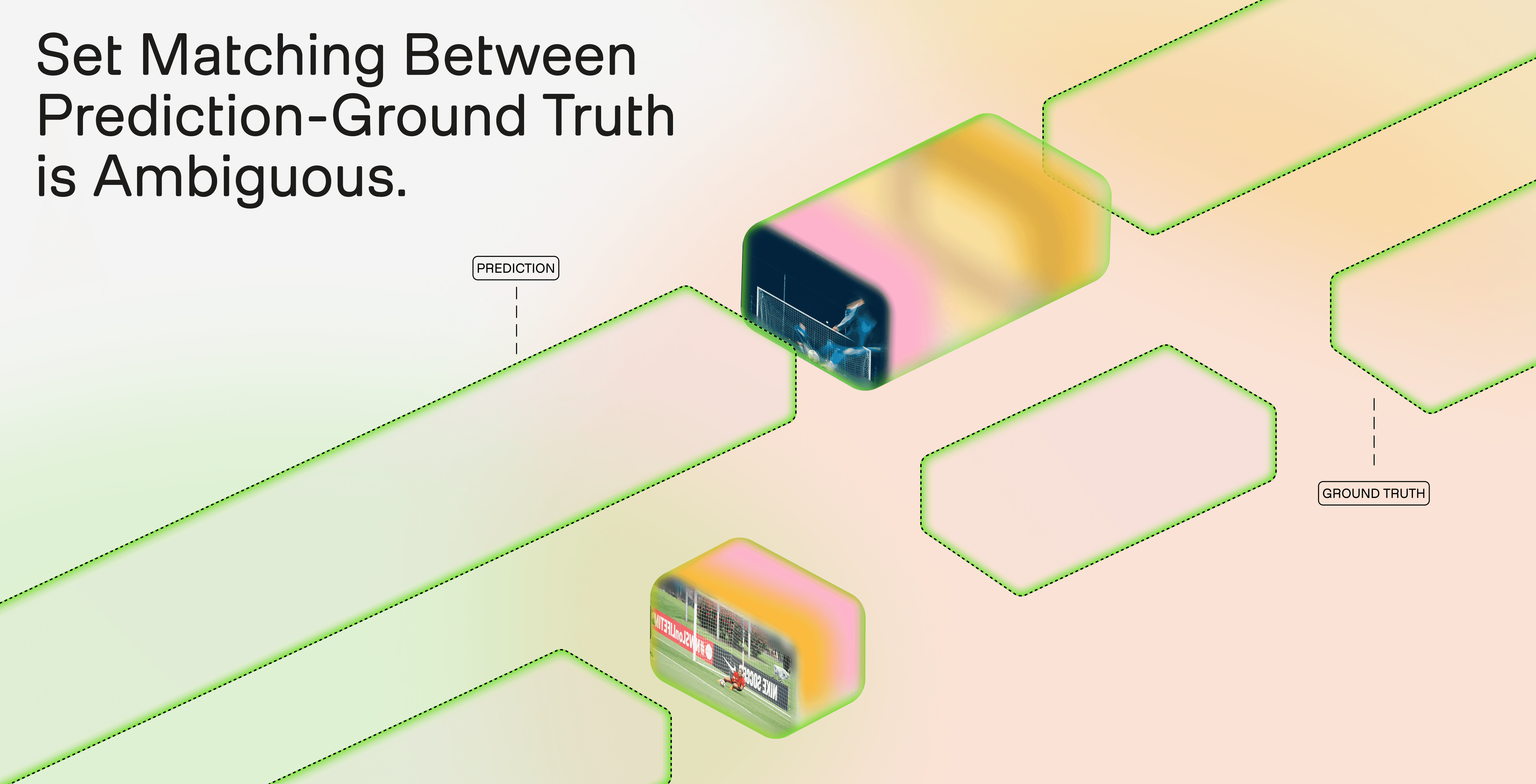

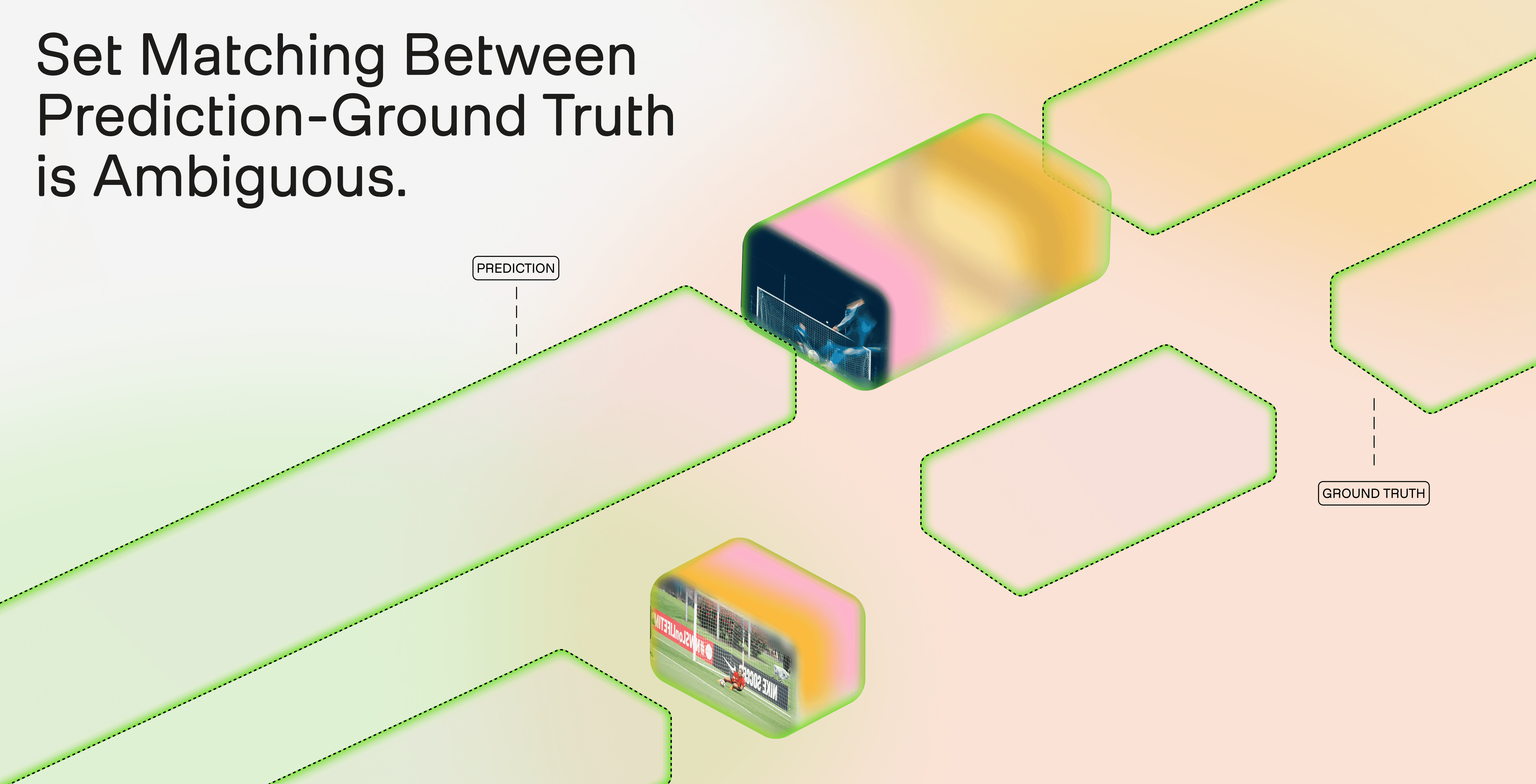

Evaluating time-based metadata is fundamentally different from evaluating standard NLP or vision tasks. The model’s output is not a single answer but a set of temporal segments, each defined by start and end timestamps and enriched with structured metadata fields. Scoring this output requires solving two distinct problems: how well the predicted segments align with the ground truth (segmentation quality), and how accurate the metadata is within aligned segments (metadata quality).

Why naive metrics fail

Our first segmentation metric was temporal coverage: the fraction of ground truth duration covered by predicted segments. This metric has a critical flaw: it is recall-only. A single prediction spanning the entire video achieves high coverage while capturing no meaningful segmentation structure. More subtly, an over-segmented output (many small fragments) can collectively cover the ground truth while failing to produce coherent segment boundaries. Coverage measures whether the right time was found, but says nothing about whether the right structure was found.
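A minimal Python sketch of the coverage metric makes the flaw concrete. Segments are (start, end) tuples in seconds and the helper names are illustrative. A single prediction spanning the whole video scores exactly as well as a perfect prediction.

```python
def merge(intervals):
    """Union of (start, end) intervals as a sorted list of disjoint intervals."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def total(intervals):
    """Total duration of the union of the intervals."""
    return sum(end - start for start, end in merge(intervals))


def intersection_duration(a, b):
    """Duration shared by the unions of a and b (two-pointer sweep)."""
    a, b = merge(a), merge(b)
    i = j = 0
    shared = 0.0
    while i < len(a) and j < len(b):
        shared += max(0.0, min(a[i][1], b[j][1]) - max(a[i][0], b[j][0]))
        if a[i][1] < b[j][1]:
            i += 1
        else:
            j += 1
    return shared


def coverage(pred, gt):
    """Fraction of ground-truth duration covered by predictions (recall only)."""
    return intersection_duration(pred, gt) / total(gt)


gt = [(12.0, 20.0), (23.0, 28.0)]
print(coverage(gt, gt))              # 1.0  (perfect prediction)
print(coverage([(0.0, 120.0)], gt))  # 1.0  (one segment spanning the whole video)
```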

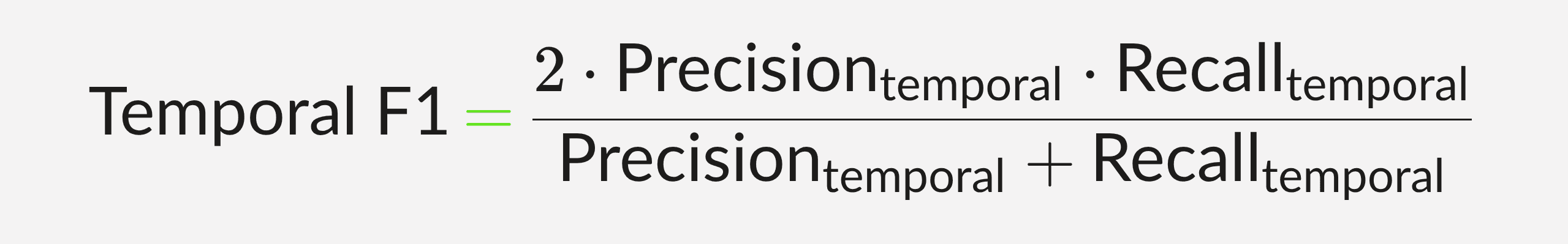

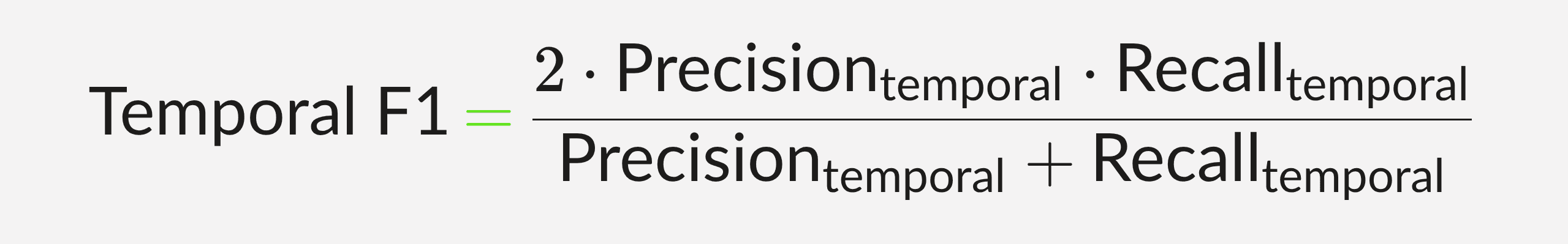

From coverage to Temporal F1

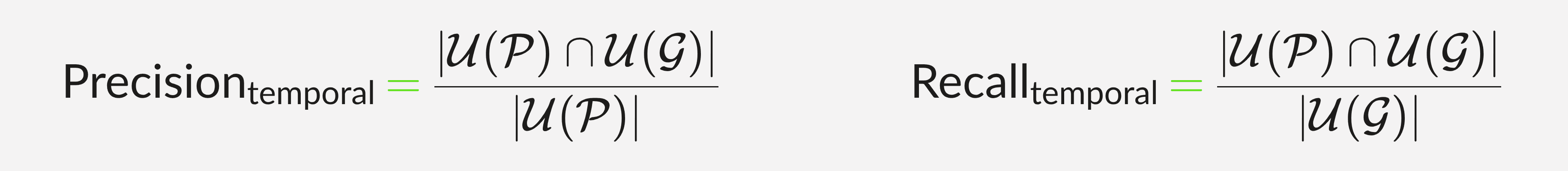

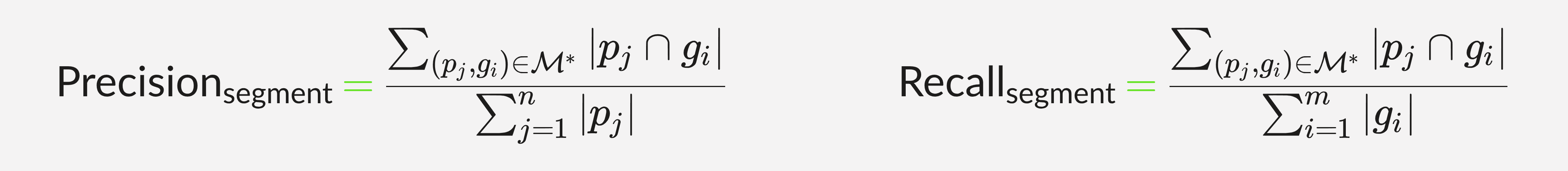

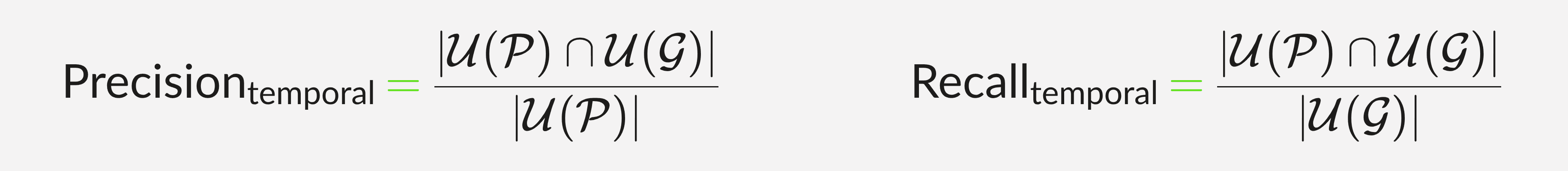

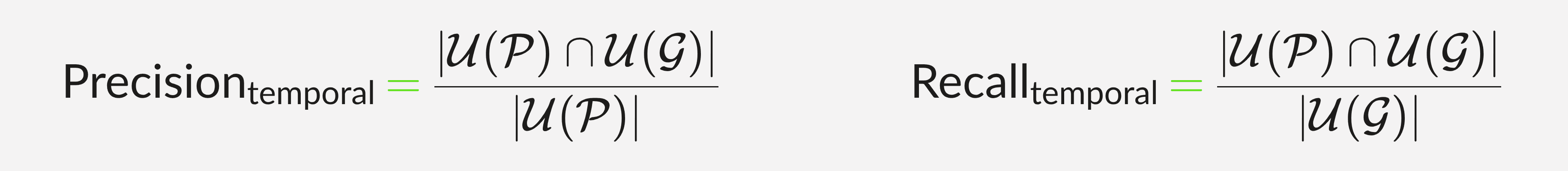

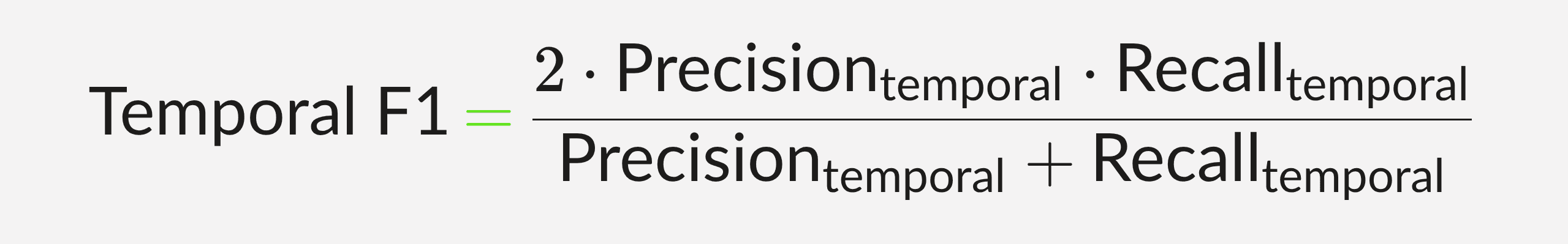

To address this, we introduced precision into the evaluation. Let G = {g_1, ..., g_m} be the set of ground truth segments and P = {p_1, ..., p_n} be the set of predicted segments, where each segment is a time interval with duration |s|. Let U(.) denote the union of all intervals in a set, and |.| the total duration of that union.

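In the many-to-many (N:N) formulation, we ignore segment identity and compare the merged timelines: precision is the fraction of predicted time |U(P)| that overlaps ground truth, recall is the fraction of ground-truth time |U(G)| covered by predictions, and Temporal F1 is their harmonic mean.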
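In the notation defined above, these quantities can be written as:

$$
P_{\text{temp}} = \frac{\lvert U(P) \cap U(G) \rvert}{\lvert U(P) \rvert}, \qquad
R_{\text{temp}} = \frac{\lvert U(P) \cap U(G) \rvert}{\lvert U(G) \rvert}, \qquad
F1_{\text{temp}} = \frac{2\, P_{\text{temp}}\, R_{\text{temp}}}{P_{\text{temp}} + R_{\text{temp}}}
$$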

This penalizes both over-prediction (low precision) and missed content (low recall) by treating evaluation as a duration-weighted comparison on the time axis.

However, Temporal F1 ignores individual segment boundaries. A ground truth segment perfectly matched by one prediction scores the same as one fragmented across many small predictions.
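A dependency-free Python sketch of Temporal F1, with segments as (start, end) tuples in seconds; the helper names are illustrative. The usage lines reproduce the point above: an exact match and a three-way fragmentation of the same ground-truth segment both score 1.0, while a single prediction spanning the whole video is penalized through precision.

```python
def merge(intervals):
    """Union of (start, end) intervals as a sorted list of disjoint intervals."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def total(intervals):
    """|U(intervals)|: total duration of the union."""
    return sum(end - start for start, end in merge(intervals))


def overlap(a, b):
    """|U(a) intersect U(b)|, via a two-pointer sweep over the merged intervals."""
    a, b = merge(a), merge(b)
    i = j = 0
    shared = 0.0
    while i < len(a) and j < len(b):
        shared += max(0.0, min(a[i][1], b[j][1]) - max(a[i][0], b[j][0]))
        if a[i][1] < b[j][1]:
            i += 1
        else:
            j += 1
    return shared


def temporal_f1(pred, gt):
    """Duration-weighted precision and recall on the merged time axis (N:N)."""
    shared = overlap(pred, gt)
    if shared == 0:
        return 0.0
    precision = shared / total(pred)
    recall = shared / total(gt)
    return 2 * precision * recall / (precision + recall)


gt = [(10.0, 20.0)]
print(temporal_f1([(10.0, 20.0)], gt))                              # 1.0
print(temporal_f1([(10.0, 12.0), (12.0, 15.0), (15.0, 20.0)], gt))  # 1.0
print(round(temporal_f1([(0.0, 100.0)], gt), 3))                    # 0.182
```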

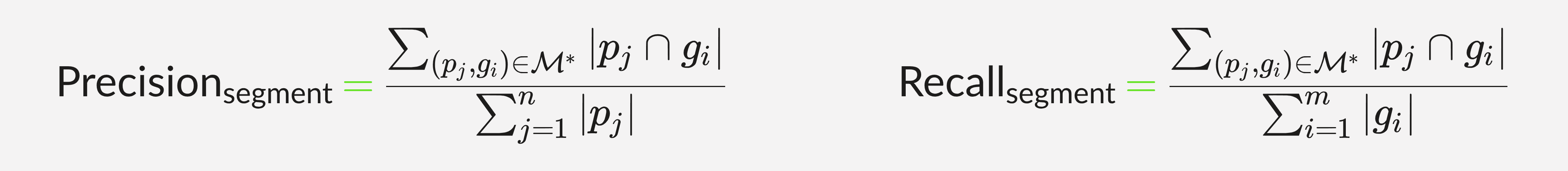

To capture segment-level alignment, we introduced Segment F1. This uses the Hungarian algorithm to find the optimal 1:1 assignment M* between ground truth and predicted segments, the one that maximizes total IoU across matched pairs. Only matched pairs contribute to the numerators of segment-level precision and recall.
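One formulation consistent with this description, assuming each matched pair contributes its IoU as a soft true positive (counting a pair as a hit only above an IoU threshold is a common alternative), where M ranges over 1:1 matchings between G and P:

$$
\mathrm{IoU}(g, p) = \frac{\lvert g \cap p \rvert}{\lvert g \cup p \rvert}, \qquad
M^{*} = \arg\max_{M} \sum_{(i,j) \in M} \mathrm{IoU}(g_i, p_j)
$$

$$
P_{\text{seg}} = \frac{1}{n} \sum_{(i,j) \in M^{*}} \mathrm{IoU}(g_i, p_j), \qquad
R_{\text{seg}} = \frac{1}{m} \sum_{(i,j) \in M^{*}} \mathrm{IoU}(g_i, p_j), \qquad
F1_{\text{seg}} = \frac{2\, P_{\text{seg}}\, R_{\text{seg}}}{P_{\text{seg}} + R_{\text{seg}}}
$$

Dividing by n and m is what turns unmatched predictions into false positives and unmatched ground truth segments into false negatives.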

Unmatched predictions become false positives; unmatched ground truth segments become false negatives.
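A small Python sketch of Segment F1, assuming matched pairs contribute their IoU as a soft true-positive count. To stay dependency-free it finds the optimal 1:1 assignment by exhaustive search rather than the Hungarian algorithm. Both reach the same optimal total IoU, but exhaustive search is factorial-time, so for real evaluation sets scipy.optimize.linear_sum_assignment (with maximize=True) is the practical choice.

```python
from itertools import permutations


def iou(a, b):
    """Temporal IoU of two (start, end) intervals."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def best_matching(gt, pred):
    """1:1 matching between gt and pred that maximizes total IoU.

    Exhaustive search: same optimum as the Hungarian algorithm, but in
    factorial time, so only suitable for a handful of segments.
    """
    if len(gt) <= len(pred):
        candidates = ([(i, j) for i, j in enumerate(p)]
                      for p in permutations(range(len(pred)), len(gt)))
    else:
        candidates = ([(i, j) for j, i in enumerate(p)]
                      for p in permutations(range(len(gt)), len(pred)))
    best = max(candidates,
               key=lambda pairs: sum(iou(gt[i], pred[j]) for i, j in pairs),
               default=[])
    # Pairs with no temporal overlap are not real matches.
    return [(i, j) for i, j in best if iou(gt[i], pred[j]) > 0]


def segment_f1(pred, gt):
    """IoU-weighted precision and recall over the optimal 1:1 matching."""
    if not pred or not gt:
        return 0.0
    tp = sum(iou(gt[i], pred[j]) for i, j in best_matching(gt, pred))
    if tp == 0:
        return 0.0
    precision = tp / len(pred)  # unmatched predictions act as false positives
    recall = tp / len(gt)       # unmatched ground truth acts as false negatives
    return 2 * precision * recall / (precision + recall)


gt = [(10.0, 20.0)]
print(segment_f1([(10.0, 20.0)], gt))                                        # 1.0
print(round(segment_f1([(10.0, 12.0), (12.0, 15.0), (15.0, 20.0)], gt), 3))  # 0.25
```

Unlike Temporal F1, the fragmented prediction is now penalized: only its best fragment is matched, and the other two count as false positives.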

Together, Temporal F1 and Segment F1 capture complementary failure modes. Temporal F1 is lenient toward fragmentation but strict about total time accuracy. Segment F1 is strict about boundary alignment but may undercount coverage when predictions legitimately span multiple ground truth events. We report both metrics to provide a complete picture of segmentation quality.

Metadata evaluation

Segmentation metrics measure whether the model found the right boundaries. Metadata metrics measure whether the model extracted the right information within those boundaries. Because metadata fields are open-vocabulary (free-text summaries, categorical labels, keyword lists, entity names), rule-based scoring is insufficient.

We use an LLM-as-judge approach: for each aligned segment pair, a language model evaluates each metadata field against the ground truth using category-specific rubrics. Different field types (transcripts, summaries, keywords, categorical labels, speaker identities, spatial descriptions) each receive a tailored scoring rubric. When segment boundaries between prediction and ground truth are not identical, the judge accounts for temporal context: differences in metadata may reflect boundary misalignment rather than extraction failure, and the scoring adjusts accordingly. Field-level scores are aggregated per segment and per sample to produce the final metadata quality score.
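The aggregation step can be sketched as follows, assuming a simple unweighted mean at both levels (the aggregation rule is not specified here) and a hypothetical judge callable. The exact-match stand-in exists only to make the sketch runnable; it is far stricter than a rubric-based LLM judge, and the aligned pairs are made-up illustrations.

```python
from statistics import mean
from typing import Any, Callable

# Hypothetical judge signature: (field name, predicted value, reference value) -> score in [0, 1].
# In the pipeline described above this would be an LLM call with a field-specific rubric.
Judge = Callable[[str, Any, Any], float]


def exact_match_judge(name: str, predicted: Any, reference: Any) -> float:
    """Runnable stand-in for the LLM judge: full credit only for an exact match."""
    return 1.0 if predicted == reference else 0.0


def score_segment(pred_meta: dict, gt_meta: dict, judge: Judge) -> float:
    """Mean field score for one aligned (prediction, ground truth) pair.

    Assumes the ground truth segment has at least one field.
    """
    return mean(judge(name, pred_meta.get(name), ref) for name, ref in gt_meta.items())


def score_sample(aligned_pairs: list[tuple[dict, dict]], judge: Judge) -> float:
    """Mean segment score over all aligned pairs in one video (0.0 if none aligned)."""
    if not aligned_pairs:
        return 0.0
    return mean(score_segment(pred, gt, judge) for pred, gt in aligned_pairs)


# Hypothetical aligned pairs: (predicted metadata, ground truth metadata).
pairs = [
    ({"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"},
     {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}),
    ({"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"},
     {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}),
]
print(round(score_sample(pairs, exact_match_judge), 3))  # 0.833
```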

4.3 - Reinforcement learning with a custom metric: making the reward what you measure

The metric design described above was not only an evaluation tool; it also became the foundation for model training. Pegasus 1.5 uses reinforcement learning with verifiable rewards (RLVR), and a key insight driving this work is that time-based metadata is a particularly natural fit for this training paradigm.

Why TBM fits RLVR

Reinforcement learning with verifiable rewards requires that the quality of a model’s output can be assessed programmatically, without human judgment in the loop. Time-based metadata satisfies this requirement in two ways. First, structural validity is verifiable: the output must be valid JSON, conform to the requested schema, and produce non-overlapping temporal segments, all properties that can be checked deterministically. Second, segmentation quality is verifiable: given ground truth segments and a rule-based metric such as Temporal F1 or Segment F1, boundary accuracy can be scored automatically. Together, these make a large fraction of the task verifiable, which is what makes RLVR effective here.
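The deterministic structural check can be sketched in a few lines of Python. The schema format here (definition id mapped to field names and optional enum values) is a simplified, hypothetical stand-in for the request's segment_definitions, and treating segments as half-open intervals, so back-to-back segments pass, is an assumption.

```python
import json
from typing import Optional


def structural_errors(raw: str, schema: dict[str, dict[str, Optional[list]]]) -> list[str]:
    """Return every structural problem found; an empty list means the output is valid.

    `schema` maps each segment definition id to {field_name: allowed values, or None}.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top level must be a JSON object"]

    errors = []
    for def_id, fields in schema.items():
        segments = data.get(def_id)
        if not isinstance(segments, list):
            errors.append(f"{def_id}: missing or not a list")
            continue
        spans = []
        for k, seg in enumerate(segments):
            if not isinstance(seg, dict):
                errors.append(f"{def_id}[{k}]: segment must be an object")
                continue
            start, end = seg.get("start_time"), seg.get("end_time")
            if not (isinstance(start, (int, float)) and isinstance(end, (int, float))
                    and start < end):
                errors.append(f"{def_id}[{k}]: invalid timestamps")
                continue
            spans.append((start, end))
            meta = seg.get("metadata")
            if not isinstance(meta, dict):
                errors.append(f"{def_id}[{k}]: metadata must be an object")
                continue
            for name, allowed in fields.items():
                if name not in meta:
                    errors.append(f"{def_id}[{k}]: missing field {name!r}")
                elif allowed is not None and meta[name] not in allowed:
                    errors.append(f"{def_id}[{k}]: {name}={meta[name]!r} is not an allowed value")
        # Half-open intervals: touching segments are fine, true overlaps are not.
        spans.sort()
        for (_, prev_end), (next_start, _) in zip(spans, spans[1:]):
            if next_start < prev_end:
                errors.append(f"{def_id}: segments overlap at {next_start}")
    return errors


schema = {"camera_cut": {"camera_angle": ["high", "low", "medium"]}}
good = ('{"camera_cut": [{"start_time": 0.0, "end_time": 3.0, "metadata": {"camera_angle": "medium"}}, '
        '{"start_time": 3.0, "end_time": 10.0, "metadata": {"camera_angle": "high"}}]}')
print(structural_errors(good, schema))  # []
```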

The reward function

The RL reward is a composite signal with distinct components. Format validity (JSON parsability and schema compliance) is assessed as a separate, granular reward component, ensuring the model never learns to trade structural correctness for segmentation gains. Outputs that fail JSON parsing or schema validation receive zero reward on this component, which functions as a hard constraint on output quality. The segmentation reward is computed using the same Temporal F1 and Segment F1 metrics described in Section 4.2. The metadata reward uses automated scoring of field-level accuracy.
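A minimal sketch of one way such a composite reward could be combined. The weights, the equal blend of Temporal F1 and Segment F1, and zeroing every component when the format check fails are all assumptions for illustration, not the published Pegasus 1.5 reward.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Hypothetical component weights; the real mix is not given in this post."""
    format: float = 0.2
    segmentation: float = 0.5
    metadata: float = 0.3


def composite_reward(format_ok: bool, temporal_f1: float, segment_f1: float,
                     metadata_score: float,
                     weights: RewardWeights = RewardWeights()) -> float:
    """Combine reward components, each assumed to lie in [0, 1]."""
    if not format_ok:
        # Failed JSON parsing or schema validation: the format component is zero, and
        # in this sketch the other components are zero too, since output that cannot
        # be parsed has no segments or fields to score.
        return 0.0
    segmentation = 0.5 * (temporal_f1 + segment_f1)  # assumed equal blend of the two F1s
    return (weights.format
            + weights.segmentation * segmentation
            + weights.metadata * metadata_score)


print(composite_reward(False, 1.0, 1.0, 1.0))  # 0.0
print(round(composite_reward(True, 0.9, 0.6, 0.8), 3))
```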

Reward hacking and metric co-evolution

One of the most instructive outcomes of the RL process was how aggressively the model exposed weaknesses in our evaluation metrics. When we used temporal coverage (a recall-only metric) as the reward signal, the model learned to maximize coverage through degenerate strategies: producing maximally long segments, or over-segmenting videos into many small fragments that collectively covered the ground truth. The reward score increased, but the actual outputs were not useful: they lacked meaningful segment boundaries.

This is a textbook instance of reward hacking, and it forced us to iterate on the metric itself. The progression from coverage-only to Temporal F1 (which introduced precision) to the combined Temporal F1 + Segment F1 framework was driven directly by observing what the model optimized toward under each reward definition. In this sense, RL served a dual purpose: it improved the model’s segmentation ability, and it pressure-tested the metric, revealing failure modes that were not apparent from static evaluation alone.

Guarding against degenerate strategies

The F1-based reward function naturally penalizes the two most common failure modes: over-segmentation (which reduces precision) and under-segmentation (which reduces recall). Combined with the format validity gate, this creates a reward landscape where the only reliable path to a higher score is genuinely better segmentation, not an exploit of the metric’s blind spots.

The result is a training process where the reward function is not a proxy for quality but a direct measurement of it. The same metric used to evaluate the model in production is the metric used to train it.

5 - Pegasus 1.5 on TwelveLabs Playground

Research matters only if it survives contact with a real workflow. With Pegasus 1.5, you do not ask a question about a clip and hope the answer is enough. You define a schema for what matters in the video, run time-based metadata extraction, and get back structured, timestamped segments that a downstream system can actually use. What used to require manual review, ad hoc clipping, or a custom ingestion pipeline becomes a much simpler loop: choose a video, specify the segment definitions, inspect the JSON, and move directly from raw footage to queryable data.

The basketball example in the demo above shows why that matters. In a single workflow, Pegasus 1.5 can separate non-gameplay footage from scoring plays, attach structured fields to each segment, and return outputs that are ready for highlight, analytics, or archive workflows. That does not eliminate the human in the loop. It changes the human role. Instead of spending hours finding and tagging moments by hand, teams can review, refine, and act on model-generated structure. That is the real product story behind Pegasus 1.5: not just better video understanding, but a practical way to turn video into an operational input for search, automation, and decision-making.

6 - Comparative Performance: Why This Matters in Practice

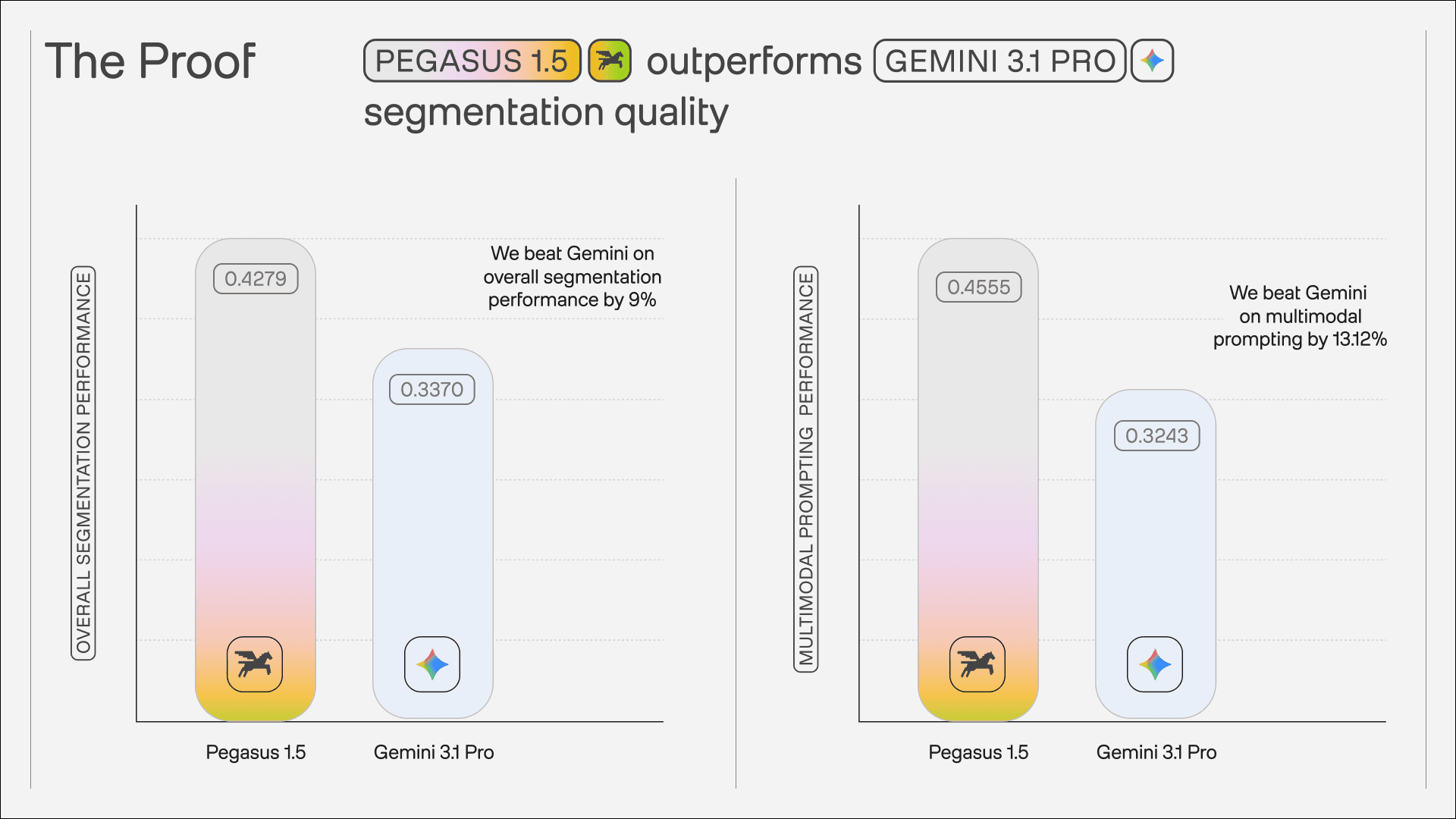

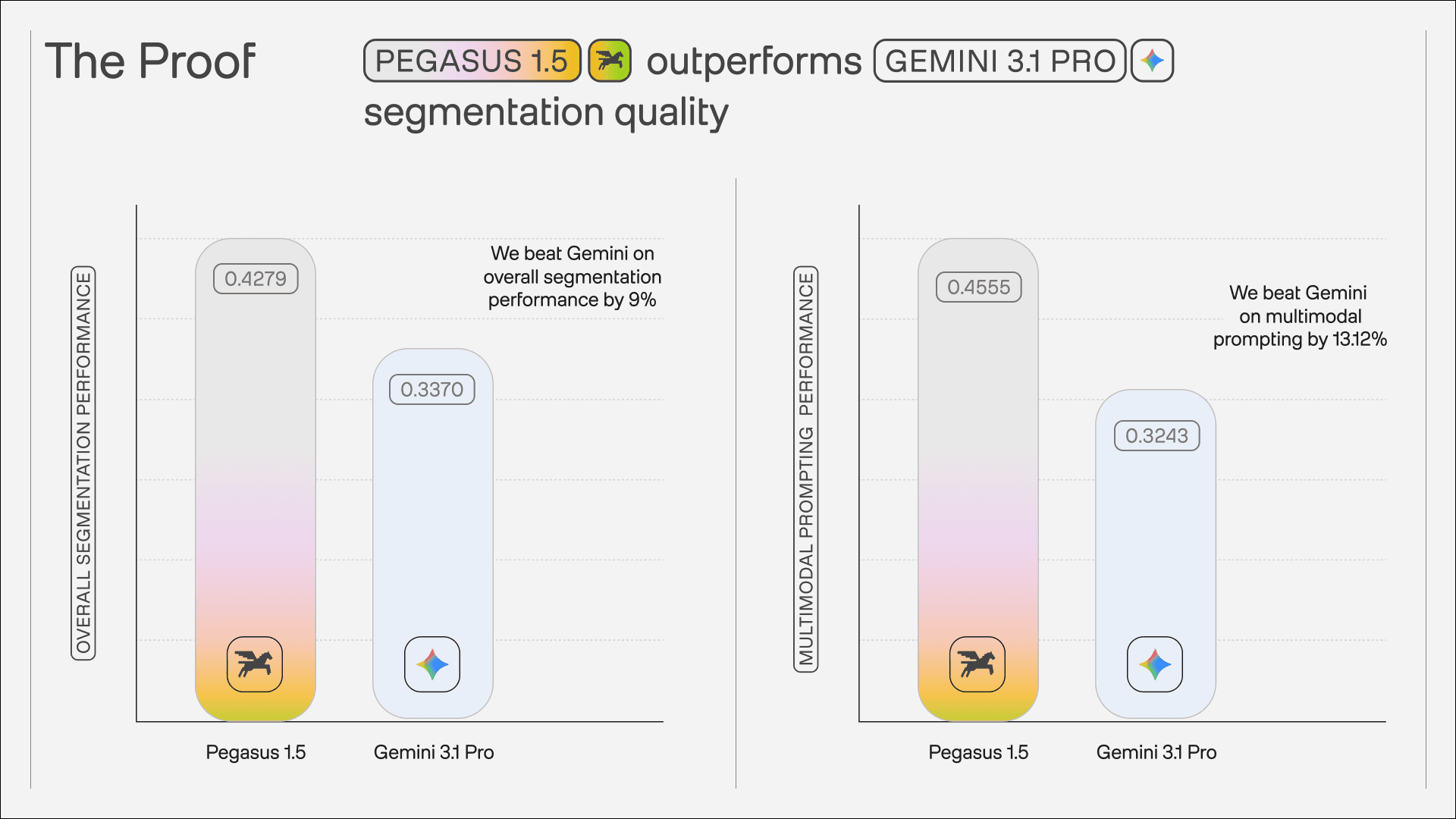

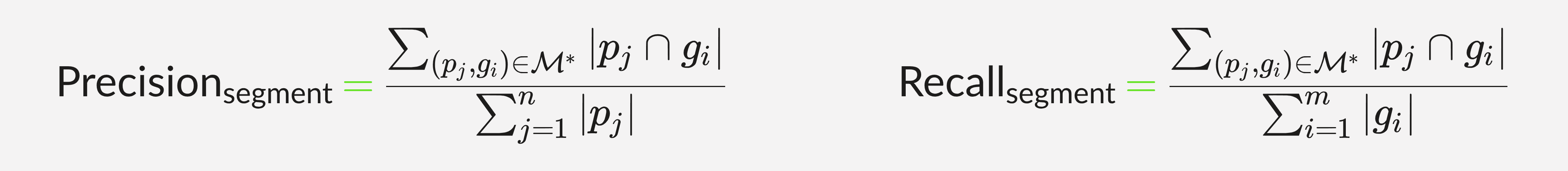

The product experience in the Playground is important, but it needs to be backed by measurable performance. In our comparative evaluation, Pegasus 1.5 outperformed Gemini 3.1 Pro on the two capabilities that matter most for this workflow: segmentation quality and multimodal prompting quality. The benchmark visual below shows Pegasus 1.5 scoring 0.4279 vs. 0.3370 on overall segmentation performance, and 0.4555 vs. 0.3243 on multimodal prompting performance, reflecting stronger boundary decisions and more reliable schema-aligned extraction from mixed text-and-image inputs.

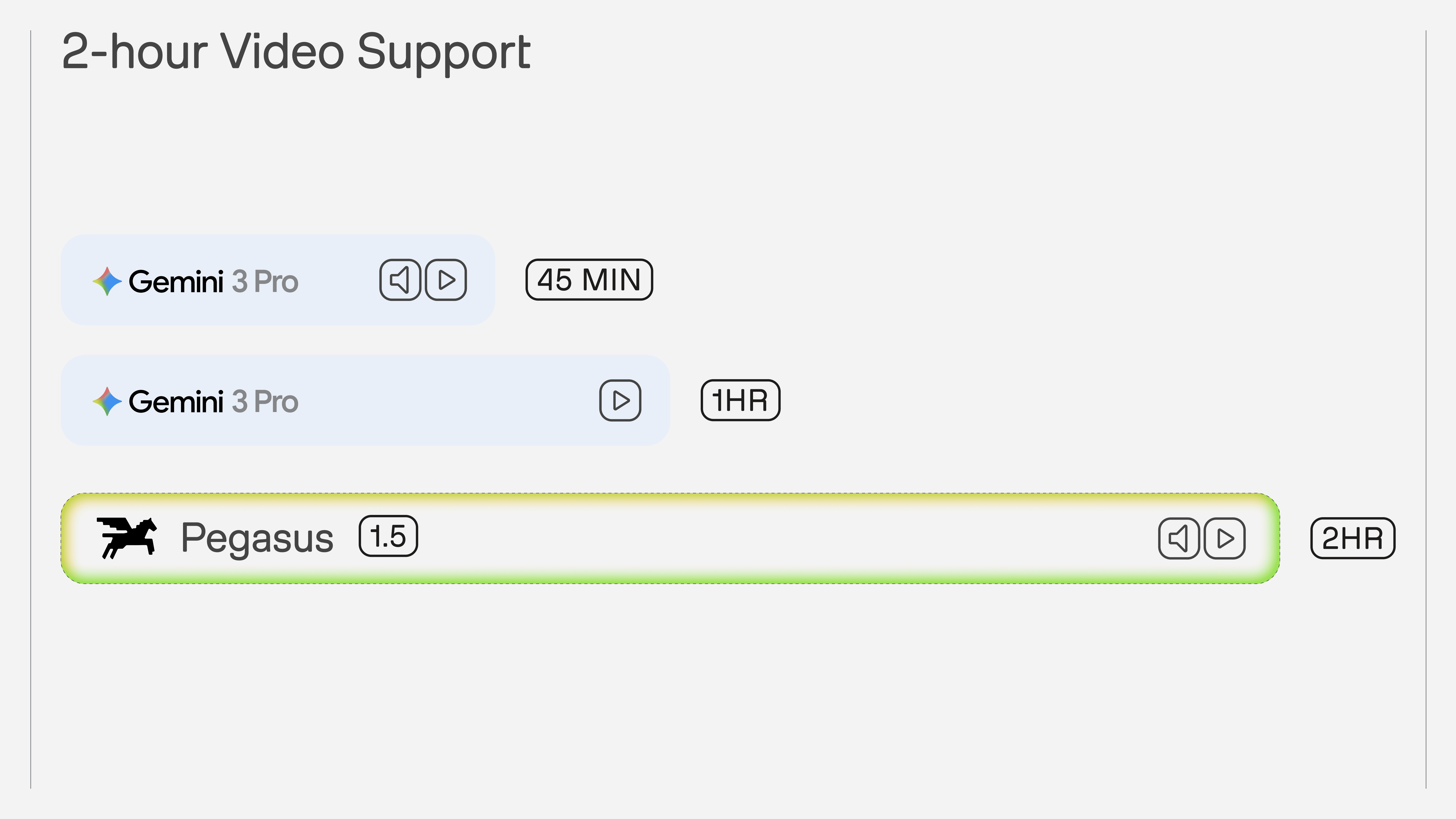

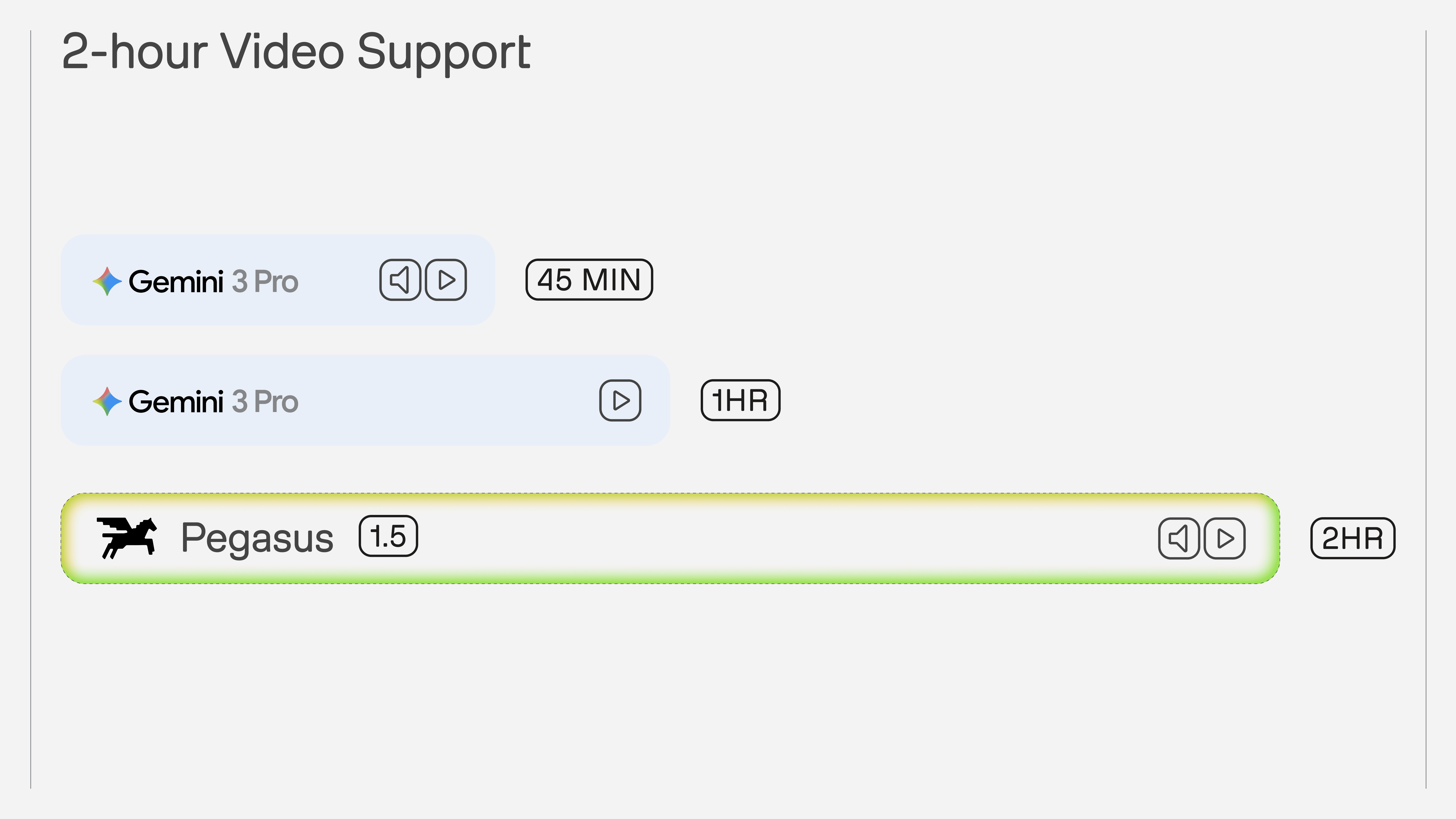

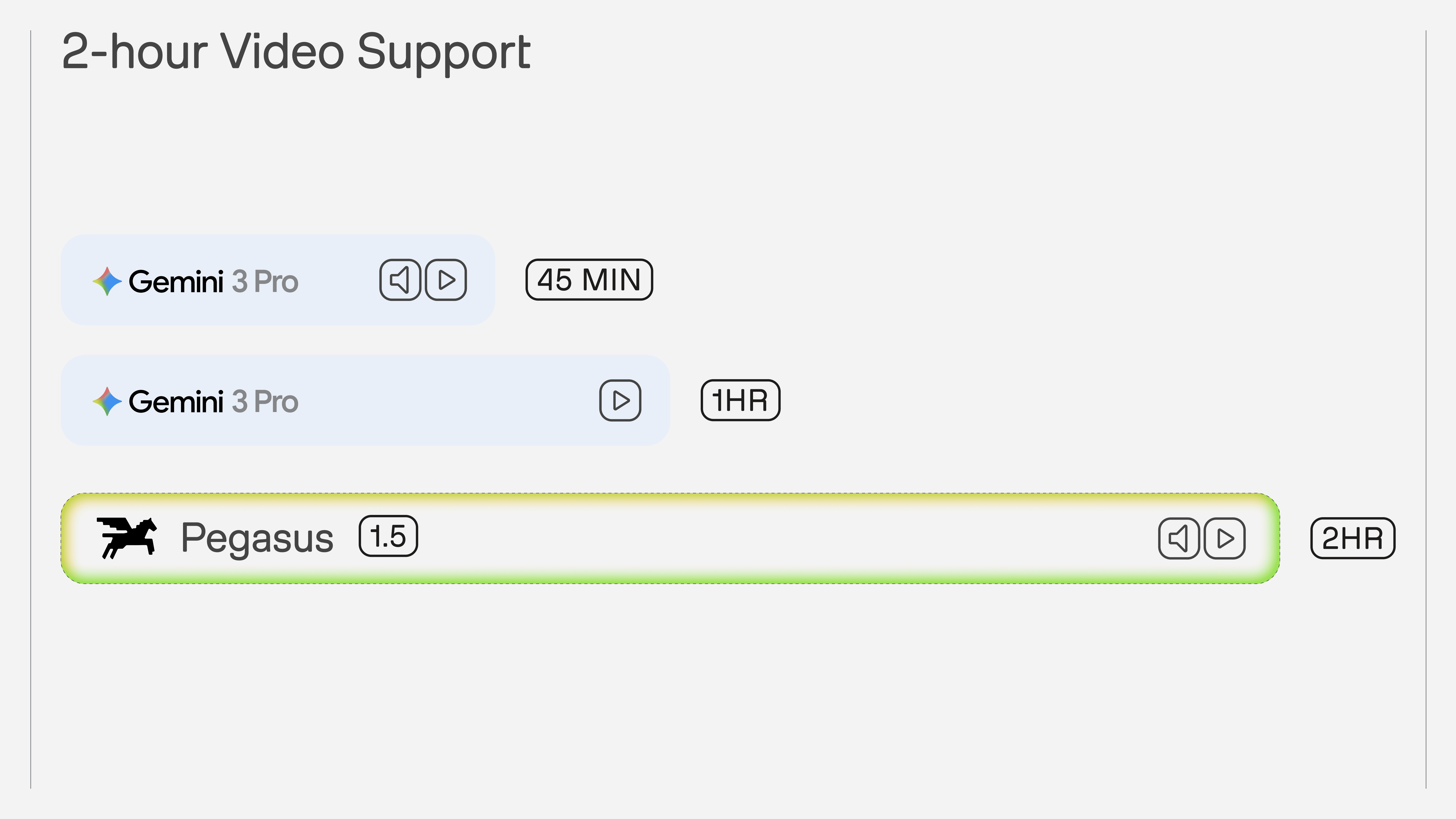
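For readers who prefer relative terms, the short Python calculation below converts the two reported score pairs into absolute and relative gains. It uses only the numbers quoted above. The relative figures are our own arithmetic, not separately reported results.

```python
# Scores as reported in the comparative evaluation: (Pegasus 1.5, Gemini 3.1 Pro).
reported = {
    "segmentation": (0.4279, 0.3370),
    "multimodal prompting": (0.4555, 0.3243),
}


def gains(ours: float, baseline: float) -> tuple[float, float]:
    """Absolute difference and relative gain over the baseline."""
    diff = ours - baseline
    return diff, diff / baseline


for task, (pegasus, gemini) in reported.items():
    absolute, relative = gains(pegasus, gemini)
    print(f"{task}: +{absolute:.4f} absolute, +{relative:.1%} relative")
```

That works out to roughly a 27% relative gain on segmentation and about 40% on multimodal prompting.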

That advantage also shows up in long-context support. Pegasus 1.5 can process up to two hours of video in a single request, which is a meaningful difference for real production workloads such as full sports broadcasts, long-form interviews, and feature-length content.

Taken together, these comparisons reinforce the core argument of this post. Pegasus 1.5 is not just a different interface for video QA. It is a system optimized for temporal structure: better at identifying where events begin and end, better at grounding extraction with multimodal inputs, and better suited to the long-form video that production teams actually work with.

7 - Conclusion

For most teams, video has remained fundamentally unstructured: rich in information, but difficult to operationalize. The shift introduced by Pegasus 1.5 is not incremental. It changes the unit of interaction from answers to structured, time-aligned data. When the model can decide boundaries and consistently extract metadata across an entire video, video stops being something you watch and becomes something your systems can compute over.

That shift required rethinking the problem itself: how to define quality for temporal outputs, how to build evaluation datasets that reflect real workflows, and how to train models against metrics that actually matter in production. The result is not just a more capable model, but a more reliable one: one that produces outputs designed to integrate into pipelines, not just demos.

The implication is straightforward. If your workflow depends on video (whether for search, analytics, compliance, or automation), you no longer need to build a custom segmentation and annotation stack around it. You can define what matters once, and let the model apply it consistently across your entire library. Video becomes a queryable system of record, not a manual process.

Try It Out

You can try Pegasus 1.5 today in the TwelveLabs Playground or integrate it directly through the asynchronous analysis endpoint. For a how-to guide, see the Segment videos page. For complete parameter specifications, see the Create an async analysis task page in the API Reference section.

Start with a single video, define your schema, and inspect the output. The fastest way to understand what’s changed is to run it on your own data.
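If you want to apply one schema across a whole library, you can generate the request bodies programmatically. The Python sketch below reuses the field names from the example /analyze request in Section 3, with a trimmed copy of the `scoring_plays` definition. It only builds the JSON bodies and does not send them.

Several things here are assumptions or placeholders:
- The video URLs are placeholders.
- The `build_request` helper is hypothetical.
- The endpoint path and authentication are deliberately omitted.
- Whether the asynchronous endpoint accepts exactly this body has not been verified; check it against the Create an async analysis task reference.

```python
import json

# Trimmed copy of the scoring_plays definition from the Section 3 example request.
SCORING_PLAYS = {
    "id": "scoring_plays",
    "description": "Segment any time a team scores points. The segment should be the entire scoring play.",
    "fields": [
        {"name": "points_scored", "type": "string",
         "description": "How many points were scored during the play.",
         "enum": ["2pt", "1pt", "3pt"]},
        {"name": "scoring_team", "type": "string",
         "description": "Name of the team that scored."},
    ],
}


def build_request(video_url: str, definitions: list[dict], min_segment_duration: float = 2) -> dict:
    """Build a body shaped like the example /analyze request (hypothetical helper)."""
    return {
        "model_name": "pegasus1.5",
        "analysis_mode": "time_based_metadata",
        "video": {"type": "url", "url": video_url},
        "response_format": {"type": "segment_definitions", "segment_definitions": definitions},
        "temperature": 0,
        "min_segment_duration": min_segment_duration,
    }


# Placeholder URLs: the same schema is applied to every video in the library.
library = ["https://example.com/game1.mp4", "https://example.com/game2.mp4"]
bodies = [build_request(url, [SCORING_PLAYS]) for url in library]
print(json.dumps(bodies[0], indent=2))
```

Keeping the definition in one place means every video is segmented against the same vocabulary, which is what makes outputs comparable across a library.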

TwelveLabs Team

Pegasus 1.5 is a joint effort across multiple functional groups including:

Science: Kian Kim, Sam Choi, Lia Nam, Henry Oh, Dylan Byeon

Machine Learning Engineering: SJ, Kevin Lee, Wade Jeong

Data: Elton Cho, Haley Kim, Kaylim Kang, Heeyewon Jeong

Product Management: Shannon Hong

1 - From Clip-Based Answers to Structured Video Intelligence

Video is one of the richest forms of information, yet it remains one of the least accessible to software systems. Unlike text or images, meaning in video is not contained in a single moment: it emerges through temporal continuity, multimodal interactions, and causal relationships across time. A sports play unfolds over several seconds, a narrative arc in a film spans minutes, a brand appearance may be visually subtle but contextually significant. To operationalize video at scale, systems must reason not only about what happens, but also when it happens.

This is where time-based metadata becomes essential. Time-based metadata transforms raw video into structured, timestamped data, enabling developers to treat video as a queryable and computable asset. Instead of manually reviewing footage or relying on brittle heuristics, organizations can define what matters to their business (such as editorial segments, sports plays, speaker changes, or brand appearances) and automatically extract these events across entire video libraries.

Earlier versions of Pegasus addressed a different class of problem. Pegasus 1.2 was designed as a video question-answering (QA) system: users provided a clip and asked a question, and the model returned an answer or summary. This paradigm works well for factoid queries or localized understanding. However, it introduces a fundamental limitation for real-world workflows: the user must already know where to look. In large-scale environments (such as media archives, live sports, or streaming catalogs), this assumption does not hold.

As a result, Pegasus 1.2 could not fully support workflows that require systematic segmentation and consistent metadata extraction across entire videos. The absence of model-driven boundary detection meant that users still relied on manual annotation or heuristic preprocessing to define temporal regions of interest.

Pegasus 1.5 represents a fundamental shift. Instead of answering questions about a predefined clip, the model partitions an entire video according to a user-defined schema and labels each segment with structured metadata. This transformation moves video understanding from a retrieval problem to a data generation pipeline, where video becomes a first-class input for analytics, automation, and agentic systems.

2 - The Cost of Unstructured Video

Despite the explosive growth of video content, most organizations still manage it as unstructured media. Traditional approaches rely on manual logging, keyword tagging, or simple shot-detection algorithms. These methods fail to capture the semantic and temporal complexity required for downstream decision-making.

From a systems perspective, the challenge stems from three properties of video:

Temporal Ambiguity: Events do not have explicit boundaries. Determining where a news story begins or where a sports play ends requires contextual reasoning across multiple modalities.

Multimodal Dependence: Meaning arises from the interaction of visual cues, speech, audio signals, and on-screen text.

Schema Variability: Different organizations care about different events, requiring flexible and domain-specific definitions.

Without reliable time-based metadata, video cannot be easily indexed, queried, or integrated into data pipelines, limiting its value for automation and analytics.

The operational gap between raw video and structured data can be illustrated through the following comparison:

Diagram 1: Traditional Workflow

Diagram 2: Pegasus 1.5 Workflow

By allowing developers to define a vocabulary of events, Pegasus 1.5 shifts the burden of temporal reasoning from humans to the model, enabling scalable and consistent metadata extraction.

In media & entertainment, editorial teams must segment long-form content into narrative units (such as scenes, topics, or character appearances) to support archiving, recommendation, and monetization. With Pegasus 1.5, they can define schemas for editorial segments and automatically extract structured metadata across entire catalogs. This enables semantic search, automated highlight generation, and efficient content reuse.

In sports analytics, identifying and labeling plays within sports footage is labor-intensive and time-sensitive, often requiring domain experts to review entire games. With Pegasus 1.5, they can define schemas for plays (such as goals, fouls, or turnovers) and automatically detect each instance with precise temporal boundaries. This supports real-time highlight creation and performance analytics.

In streaming platforms, these services must identify brand appearances, scene transitions, and contextual moments to enable targeted advertising and content monetization. With Pegasus 1.5, they can define schemas for brand visibility or contextual triggers, allowing automatic detection of monetizable moments across vast libraries.

3 - Technical Foundations: Defining Time-Based Metadata

Time-based metadata (TBM) refers to structured information associated with specific temporal segments of a video. Each segment is defined by precise start and end timestamps and is enriched with metadata fields that conform to a user-defined schema.

Formally, a TBM output can be represented as:

Segment = { start_time: float, end_time: float, metadata: { key: value, ... } }

A complete analysis consists of a set of non-overlapping segments for each semantic definition provided by the user. This structure enables deterministic integration with downstream systems such as search indexes, analytics platforms, and agentic workflows.

Pegasus 1.5 introduces a schema-first interaction model through the /analyze API. Instead of prompting the model with open-ended questions, developers define segment definitions that describe:

What constitutes a segment (semantic description)

Which metadata fields to extract

Optional constraints such as duration or contextual references

This design ensures consistency, determinism, and integration readiness for production environments.

This is an example /analyze API request for the basketball video above:

{ "model_name": "pegasus1.5", "analysis_mode": "time_based_metadata", "video": { "type": "url", "url": "https://example.com/video.mp4" }, "response_format": { "type": "segment_definitions", "segment_definitions": [ { "id": "non_gameplay_footage", "description": "Generate segments only when the content on screen IS NOT actual gameplay.", "fields": [ { "name": "description", "type": "string", "description": "A rich long description of the non-gameplay footage." } ] }, { "id": "scoring_plays", "description": "Segment any time a team scores points. The segment should be the entire scoring play.", "fields": [ { "name": "points_scored", "type": "string", "description": "How many points were scored during the play.", "enum": [ "2pt", "1pt", "3pt" ] }, { "name": "shot_type", "type": "string", "description": "The shot type from the scoring play.", "enum": [ "jump_start", "layup", "dunk", "foul_shot" ] }, { "name": "scoring_team", "type": "string", "description": "Name of the team that scored." } ] }, { "id": "camera_cut", "description": "Segment any time only when there is a hard cut in the camera. Otherwise continue the current segment.", "fields": [ { "name": "camera_angle", "type": "string", "description": "Angle of the current camera.", "enum": [ "high", "low", "medium" ] } ] } ] }, "temperature": 0, "min_segment_duration": 2 }

And this is an example response structure:

"result": { "generation_id": "5be1b8c6-7e92-43ce-b37d-ba1b53ed1ebe", "data": "{\"gameplay_footage\": [{\"start_time\": 0.0, \"end_time\": 11.0, \"metadata\": {\"description\": \"The video opens with a title card announcing Loyola's NCAA championship win, followed by a wide shot of the packed arena and a close-up of the 'NCAA Finals 1963' logo on the court.\"}}, {\"start_time\": 20.0, \"end_time\": 22.0, \"metadata\": {\"description\": \"A brief cutaway shot shows a woman in the stands smiling and clapping enthusiastically.\"}}, {\"start_time\": 29.0, \"end_time\": 31.0, \"metadata\": {\"description\": \"The camera focuses on the scoreboard, showing the score as 48-50 with 2:04 remaining in the second period.\"}}, {\"start_time\": 53.0, \"end_time\": 54.0, \"metadata\": {\"description\": \"A quick shot of spectators in the stands reacting to the game.\"}}, {\"start_time\": 56.0, \"end_time\": 58.0, \"metadata\": {\"description\": \"The camera captures two men in the stands celebrating with their arms raised.\"}}, {\"start_time\": 68.0, \"end_time\": 70.0, \"metadata\": {\"description\": \"The scoreboard is shown again, displaying a tied score of 54-54 with 5:00 remaining in the third period.\"}}, {\"start_time\": 76.0, \"end_time\": 78.0, \"metadata\": {\"description\": \"A shot of the crowd shows fans cheering and celebrating during the game.\"}}, {\"start_time\": 81.0, \"end_time\": 83.0, \"metadata\": {\"description\": \"Two cheerleaders are shown on the court, performing a routine.\"}}, {\"start_time\": 88.0, \"end_time\": 90.0, \"metadata\": {\"description\": \"A man in a suit is seen standing and clapping in the stands.\"}}, {\"start_time\": 109.0, \"end_time\": 118.0, \"metadata\": {\"description\": \"Following the final shot, the Loyola players and coaches rush onto the court to celebrate their championship victory.\"}}, {\"start_time\": 118.0, \"end_time\": 121.0, \"metadata\": {\"description\": \"The final scoreboard is displayed, showing Loyola's victory 
with a score of 60-58 as the time runs out.\"}}], \"scoring_plays\": [{\"start_time\": 12.0, \"end_time\": 20.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"University of Cincinnati Bearcats\"}}, {\"start_time\": 23.0, \"end_time\": 28.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 46.0, \"end_time\": 52.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 60.0, \"end_time\": 68.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 71.0, \"end_time\": 76.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"Loyola Ramblers\"}}, {\"start_time\": 79.0, \"end_time\": 83.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"jump_start\", \"scoring_team\": \"University of Cincinnati Bearcats\"}}, {\"start_time\": 102.0, \"end_time\": 110.0, \"metadata\": {\"points_scored\": \"2pt\", \"shot_type\": \"layup\", \"scoring_team\": \"Loyola Ramblers\"}}], \"camera_cut\": [{\"start_time\": 0.0, \"end_time\": 3.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 3.0, \"end_time\": 10.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 10.0, \"end_time\": 11.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 11.0, \"end_time\": 20.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 20.0, \"end_time\": 22.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 22.0, \"end_time\": 28.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 28.0, \"end_time\": 31.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 31.0, \"end_time\": 38.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 38.0, \"end_time\": 45.0, \"metadata\": {\"camera_angle\": 
\"high\"}}, {\"start_time\": 45.0, \"end_time\": 53.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 53.0, \"end_time\": 54.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 54.0, \"end_time\": 56.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 56.0, \"end_time\": 58.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 58.0, \"end_time\": 68.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 68.0, \"end_time\": 70.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 70.0, \"end_time\": 76.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 76.0, \"end_time\": 78.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 78.0, \"end_time\": 81.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 81.0, \"end_time\": 83.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 83.0, \"end_time\": 88.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 88.0, \"end_time\": 90.0, \"metadata\": {\"camera_angle\": \"medium\"}}, {\"start_time\": 90.0, \"end_time\": 108.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 108.0, \"end_time\": 118.0, \"metadata\": {\"camera_angle\": \"high\"}}, {\"start_time\": 118.0, \"end_time\": 121.0, \"metadata\": {\"camera_angle\": \"medium\"}}]}" "finish_reason": "stop", "usage": { "output_tokens": <number>

Here’s the json.parse() on the “data” of the response structure:

{ "gameplay_footage": [ {"start_time": 0.0, "end_time": 11.0, "metadata": {"description": "The video opens with a title card announcing Loyola's NCAA championship win, followed by a wide shot of the packed arena and a close-up of the 'NCAA Finals 1963' logo on the court."}}, {"start_time": 20.0, "end_time": 22.0, "metadata": {"description": "A brief cutaway shot shows a woman in the stands smiling and clapping enthusiastically."}}, {"start_time": 29.0, "end_time": 31.0, "metadata": {"description": "The camera focuses on the scoreboard, showing the score as 48-50 with 2:04 remaining in the second period."}}, {"start_time": 53.0, "end_time": 54.0, "metadata": {"description": "A quick shot of spectators in the stands reacting to the game."}}, {"start_time": 56.0, "end_time": 58.0, "metadata": {"description": "The camera captures two men in the stands celebrating with their arms raised."}}, {"start_time": 68.0, "end_time": 70.0, "metadata": {"description": "The scoreboard is shown again, displaying a tied score of 54-54 with 5:00 remaining in the third period."}}, {"start_time": 76.0, "end_time": 78.0, "metadata": {"description": "A shot of the crowd shows fans cheering and celebrating during the game."}}, {"start_time": 81.0, "end_time": 83.0, "metadata": {"description": "Two cheerleaders are shown on the court, performing a routine."}}, {"start_time": 88.0, "end_time": 90.0, "metadata": {"description": "A man in a suit is seen standing and clapping in the stands."}}, {"start_time": 109.0, "end_time": 118.0, "metadata": {"description": "Following the final shot, the Loyola players and coaches rush onto the court to celebrate their championship victory."}}, {"start_time": 118.0, "end_time": 121.0, "metadata": {"description": "The final scoreboard is displayed, showing Loyola's victory with a score of 60-58 as the time runs out."}} ], "scoring_plays": [ {"start_time": 12.0, "end_time": 20.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": 
"University of Cincinnati Bearcats"}}, {"start_time": 23.0, "end_time": 28.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}}, {"start_time": 46.0, "end_time": 52.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}}, {"start_time": 60.0, "end_time": 68.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}}, {"start_time": 71.0, "end_time": 76.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "Loyola Ramblers"}}, {"start_time": 79.0, "end_time": 83.0, "metadata": {"points_scored": "2pt", "shot_type": "jump_start", "scoring_team": "University of Cincinnati Bearcats"}}, {"start_time": 102.0, "end_time": 110.0, "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Loyola Ramblers"}} ], "camera_cut": [ {"start_time": 0.0, "end_time": 3.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 3.0, "end_time": 10.0, "metadata": {"camera_angle": "high"}}, {"start_time": 10.0, "end_time": 11.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 11.0, "end_time": 20.0, "metadata": {"camera_angle": "high"}}, {"start_time": 20.0, "end_time": 22.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 22.0, "end_time": 28.0, "metadata": {"camera_angle": "high"}}, {"start_time": 28.0, "end_time": 31.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 31.0, "end_time": 38.0, "metadata": {"camera_angle": "high"}}, {"start_time": 38.0, "end_time": 45.0, "metadata": {"camera_angle": "high"}}, {"start_time": 45.0, "end_time": 53.0, "metadata": {"camera_angle": "high"}}, {"start_time": 53.0, "end_time": 54.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 54.0, "end_time": 56.0, "metadata": {"camera_angle": "high"}}, {"start_time": 56.0, "end_time": 58.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 58.0, "end_time": 68.0, "metadata": {"camera_angle": 
"high"}}, {"start_time": 68.0, "end_time": 70.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 70.0, "end_time": 76.0, "metadata": {"camera_angle": "high"}}, {"start_time": 76.0, "end_time": 78.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 78.0, "end_time": 81.0, "metadata": {"camera_angle": "high"}}, {"start_time": 81.0, "end_time": 83.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 83.0, "end_time": 88.0, "metadata": {"camera_angle": "high"}}, {"start_time": 88.0, "end_time": 90.0, "metadata": {"camera_angle": "medium"}}, {"start_time": 90.0, "end_time": 108.0, "metadata": {"camera_angle": "high"}}, {"start_time": 108.0, "end_time": 118.0, "metadata": {"camera_angle": "high"}}, {"start_time": 118.0, "end_time": 121.0, "metadata": {"camera_angle": "medium"}} ] }

We design this schema with the following principles:

Temporal Precision: Each segment is anchored by explicit timestamps, enabling frame-accurate alignment with downstream systems such as editing tools or analytics pipelines.

Non-Overlapping Segments: Within a segment definition, outputs are mutually exclusive, ensuring deterministic and consistent interpretations of the video timeline.

Open Vocabulary with Structured Outputs: Developers can define domain-specific vocabularies while maintaining strict schema compliance, enabling seamless integration into databases and agentic workflows.

Multimodal Reasoning: Segment boundaries and metadata are inferred from the interaction of visual, auditory, and linguistic signals, reflecting the true complexity of video.

4 - Building an Evaluation Framework for Time-Based Metadata

4.1 - Evaluation dataset: built from scratch with rigorous human verification

Building a reliable evaluation system for time-based metadata required solving a bootstrapping problem: no suitable benchmark existed.

Existing academic benchmarks for video understanding, such as Video-MME, evaluate a fundamentally different task. They reduce video comprehension to multiple-choice question answering: given a clip and a question, select the correct option from a fixed set of candidates. This format is useful for measuring general video reasoning, but it bears little resemblance to producing structured temporal outputs. Time-based metadata extraction requires schema-conditioned segmentation: the model must partition an entire video according to a user-defined vocabulary of events, determine where each segment begins and ends, and populate structured metadata fields for each one. No existing benchmark evaluates this combination of dense temporal boundary prediction with per-segment structured metadata across diverse content domains.

We built the evaluation set from scratch. The dataset spans multiple content verticals, each presenting distinct segmentation challenges:

News broadcasts require identifying editorial packages, anchor segments, and field reports with precise transition boundaries.

Movies and television demand scene-level segmentation with narrative and visual metadata.

Sports footage requires detection of plays, scoring events, and game-state transitions at much finer temporal granularity.

For each domain, we defined segment definitions and metadata schemas that mirror real production workflows, the same schemas a media company or sports broadcaster would use in practice.

Critically, we implemented a multi-stage human verification process rather than treating annotation as a single pass. The workflow consists of four phases:

Project initialization: clarifying segment definitions and resolving ambiguity in boundary criteria before annotation begins.

Segmentation triage: reviewing boundary quality after segmentation is completed, but before metadata annotation starts.

Metadata triage: verifying field-level metadata accuracy after extraction, confirming direction and quality before scaling.

Final validation: end-to-end quality review before a sample enters the evaluation set.

At each stage, samples that fail quality thresholds are either corrected or removed. This process revealed an important lesson: a universal annotation workflow does not work for time-based metadata. Different segment types, a shot-level visual boundary versus a high-level editorial transition, require different annotation strategies, different levels of domain expertise, and different quality criteria. We addressed this by routing tasks based on complexity and volume: straightforward boundary types were validated at scale using external annotators, while semantically complex definitions (such as narrative beats or gradual topic transitions) received dedicated expert review.

The result is an evaluation set where we have high confidence in both segment boundary placement and metadata accuracy, a prerequisite for any metric to be meaningful.

4.2 - Metric design: why time-based metadata evaluation is different

Evaluating time-based metadata is fundamentally different from evaluating standard NLP or vision tasks. The model’s output is not a single answer but a set of temporal segments, each defined by start and end timestamps and enriched with structured metadata fields. Scoring this output requires solving two distinct problems: how well the predicted segments align with the ground truth (segmentation quality), and how accurate the metadata is within aligned segments (metadata quality).

Why naive metrics fail

Our first segmentation metric was temporal coverage: the fraction of ground truth duration covered by predicted segments. This metric has a critical flaw: it is recall-only. A single prediction spanning the entire video achieves high coverage while capturing no meaningful segmentation structure. More subtly, an over-segmented output (many small fragments) can collectively cover the ground truth while failing to produce coherent segment boundaries. Coverage measures whether the right time was found, but says nothing about whether the right structure was found.

From coverage to Temporal F1

To address this, we introduced precision into the evaluation. Let G = {g_1, ..., g_m} be the set of ground truth segments and P = {p_1, ..., p_n} be the set of predicted segments, where each segment is a time interval with duration |s|. Let U(.) denote the union of all intervals in a set, and |.| the total duration of that union.

In the many-to-many (N:N) formulation, we ignore segment identity and compute overlap on the merged time axis:

This penalizes both over-prediction (low precision) and missed content (low recall) by treating evaluation as a duration-weighted comparison on the time axis.

However, Temporal F1 ignores individual segment boundaries. A ground truth segment perfectly matched by one prediction scores the same as one fragmented across many small predictions.

To capture segment-level alignment, we introduced Segment F1. This uses the Hungarian algorithm to find the optimal 1:1 assignment M* that maximizes total IoU across matched pairs. Only matched pairs contribute to the numerator:

Unmatched predictions become false positives; unmatched ground truth segments become false negatives.

Together, Temporal F1 and Segment F1 capture complementary failure modes. Temporal F1 is lenient toward fragmentation but strict about total time accuracy. Segment F1 is strict about boundary alignment but may undercount coverage when predictions legitimately span multiple ground truth events. We report both metrics to provide a complete picture of segmentation quality.

Metadata evaluation

Segmentation metrics measure whether the model found the right boundaries. Metadata metrics measure whether the model extracted the right information within those boundaries. Because metadata fields are open-vocabulary, free-text summaries, categorical labels, keyword lists, entity names, rule-based scoring is insufficient.

We use an LLM-as-judge approach: for each aligned segment pair, a language model evaluates each metadata field against the ground truth using category-specific rubrics. Different field types, transcripts, summaries, keywords, categorical labels, speaker identities, spatial descriptions, each receive a tailored scoring rubric. When segment boundaries between prediction and ground truth are not identical, the judge accounts for temporal context: differences in metadata may reflect boundary misalignment rather than extraction failure, and the scoring adjusts accordingly. Field-level scores are aggregated per segment and per sample to produce the final metadata quality score.

4.3 - Reinforcement learning with a custom metric: making the reward what you measure

The metric design described above was not only an evaluation tool, it became the foundation for model training. Pegasus 1.5 uses reinforcement learning with verifiable rewards (RLVR), and a key insight driving this work is that time-based metadata is a particularly natural fit for this training paradigm.

Why TBM fits RLVR

Reinforcement learning with verifiable rewards requires that the quality of a model’s output can be assessed programmatically, without human judgment in the loop. Time-based metadata satisfies this requirement in two ways. First, structural validity is verifiable: the output must be valid JSON, conform to the requested schema, and produce non-overlapping temporal segments, all properties that can be checked deterministically. Second, segmentation quality is verifiable: given ground truth segments and a rule-based metric such as Temporal F1 or Segment F1, boundary accuracy can be scored automatically. Together, these make a large fraction of the task verifiable, which is what makes RLVR effective here.

The reward function

The RL reward is a composite signal with distinct components. Format validity, JSON parsability and schema compliance, is assessed as a granular, separate reward component, ensuring the model never learns to trade structural correctness for segmentation gains. Outputs that fail JSON parsing or schema validation receive zero reward on this component, functioning as a hard constraint on output quality. The segmentation reward is computed using the same Temporal F1 and Segment F1 metrics described in Section 4.2. The metadata reward uses automated scoring of field-level accuracy.

Reward hacking and metric co-evolution

One of the most instructive outcomes of the RL process was how aggressively the model exposed weaknesses in our evaluation metrics. When we used temporal coverage (a recall-only metric) as the reward signal, the model learned to maximize coverage through degenerate strategies, producing maximally long segments, or over-segmenting videos into many small fragments that collectively covered the ground truth. The reward score increased, but the actual outputs were not useful: they lacked meaningful segment boundaries.

This is a textbook instance of reward hacking, and it forced us to iterate on the metric itself. The progression from coverage-only to Temporal F1 (which introduced precision) to the combined Temporal F1 + Segment F1 framework was driven directly by observing what the model optimized toward under each reward definition. In this sense, RL served a dual purpose: it improved the model’s segmentation ability, and it pressure-tested the metric, revealing failure modes that were not apparent from static evaluation alone.

Guarding against degenerate strategies

The F1-based reward function naturally penalizes the two most common failure modes: over-segmentation (which reduces precision) and under-segmentation (which reduces recall). Combined with the format validity gate, this creates a reward landscape where the only reliable path to a higher score is genuinely better segmentation, not an exploit of the metric’s blind spots.

The result is a training process where the reward function is not a proxy for quality but a direct measurement of it. The same metric used to evaluate the model in production is the metric used to train it.

5 - Pegasus 1.5 on TwelveLabs Playground

Research matters only if it survives contact with a real workflow. With Pegasus 1.5, you do not ask a question about a clip and hope the answer is enough. You define a schema for what matters in the video, run time-based metadata extraction, and get back structured, timestamped segments that a downstream system can actually use. What used to require manual review, ad hoc clipping, or a custom ingestion pipeline becomes a much simpler loop: choose a video, specify the segment definitions, inspect the JSON, and move directly from raw footage to queryable data.

The basketball example in the demo above shows why that matters. In a single workflow, Pegasus 1.5 can separate non-gameplay footage from scoring plays, attach structured fields to each segment, and return outputs that are ready for highlight, analytics, or archive workflows. That does not eliminate the human in the loop. It changes the human role. Instead of spending hours finding and tagging moments by hand, teams can review, refine, and act on model-generated structure. That is the real product story behind Pegasus 1.5: not just better video understanding, but a practical way to turn video into an operational input for search, automation, and decision-making.
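As one example of a downstream consumer, timestamped scoring-play segments can be turned directly into clip-extraction commands. This sketch assumes each segment is a dict with `start_time`/`end_time` in seconds (the response shape is not shown in this section); `highlight_commands`, the padding, and the output file names are hypothetical. It only builds `ffmpeg` argument lists and does not run them.

```python
from typing import Dict, List


def highlight_commands(segments: List[Dict], source: str = "game.mp4", pad: float = 1.0) -> List[List[str]]:
    """Build one ffmpeg command per segment, padded by `pad` seconds on each side."""
    commands = []
    for n, seg in enumerate(segments):
        start = max(0.0, seg["start_time"] - pad)  # never seek before the video starts
        length = seg["end_time"] + pad - start
        commands.append([
            "ffmpeg", "-ss", f"{start:.2f}", "-i", source,
            "-t", f"{length:.2f}", "-c", "copy", f"highlight_{n:03d}.mp4",
        ])
    return commands
```

Each list can be passed to `subprocess.run(cmd, check=True)`. Because `-c copy` avoids re-encoding, cuts snap to nearby keyframes; drop it and re-encode if frame-accurate boundaries matter.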

6 - Comparative Performance: Why This Matters in Practice

The product experience in the Playground is important, but it needs to be backed by measurable performance. In our comparative evaluation, Pegasus 1.5 outperformed Gemini 3.1 Pro on the two capabilities that matter most for this workflow: segmentation quality and multimodal prompting quality. The benchmark visual below shows Pegasus 1.5 scoring 0.4279 vs. 0.3370 on overall segmentation performance, and 0.4555 vs. 0.3243 on multimodal prompting performance, reflecting stronger boundary decisions and more reliable schema-aligned extraction from mixed text-and-image inputs.
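Expressed relative to the Gemini 3.1 Pro scores, the gaps work out to roughly 27% on segmentation and 40% on multimodal prompting:

```latex
\begin{aligned}
\text{Segmentation:}\quad & \frac{0.4279 - 0.3370}{0.3370} = \frac{0.0909}{0.3370} \approx 0.270 \\
\text{Multimodal prompting:}\quad & \frac{0.4555 - 0.3243}{0.3243} = \frac{0.1312}{0.3243} \approx 0.405
\end{aligned}
```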

That advantage also shows up in long-context support. Pegasus 1.5 can process up to two hours of video in a single request, which is a meaningful difference for real production workloads such as full sports broadcasts, long-form interviews, and feature-length content.

Taken together, these comparisons reinforce the core argument of this post. Pegasus 1.5 is not just a different interface for video QA. It is a system optimized for temporal structure: better at identifying where events begin and end, better at grounding extraction with multimodal inputs, and better suited to the long-form video that production teams actually work with.

7 - Conclusion

For most teams, video has remained fundamentally unstructured: rich in information, but difficult to operationalize. The shift introduced by Pegasus 1.5 is not incremental. It changes the unit of interaction from answers to structured, time-aligned data. When the model can determine segment boundaries and consistently extract metadata across an entire video, video stops being something you watch and becomes something your systems can compute over.

That shift required rethinking the problem itself: how to define quality for temporal outputs, how to build evaluation datasets that reflect real workflows, and how to train models against metrics that actually matter in production. The result is not just a more capable model, but a more reliable one: one that produces outputs designed to integrate into pipelines, not just demos.

The implication is straightforward. If your workflow depends on video (whether for search, analytics, compliance, or automation), you no longer need to build a custom segmentation and annotation stack around it. You can define what matters once, and let the model apply it consistently across your entire library. Video becomes a queryable system of record, not a manual process.

Try It Out

You can try Pegasus 1.5 today in the TwelveLabs Playground or integrate it directly through the asynchronous analysis endpoint. For a how-to guide, see the Segment videos page. For complete parameter specifications, see the Create an async analysis task page in the API Reference section.
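Because analysis runs as an asynchronous task, a client typically submits a request and then polls until the task finishes. The sketch below shows only that polling pattern, with exponential backoff. `get_task` is a stand-in for whatever call fetches the task, and the `status` field with its `ready`/`failed` values is hypothetical; the actual field names and states are documented on the Create an async analysis task page.

```python
import time
from typing import Any, Callable, Dict


def wait_for_task(
    get_task: Callable[[str], Dict[str, Any]],
    task_id: str,
    interval: float = 5.0,
    max_interval: float = 60.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, Any]:
    """Poll `get_task` with exponential backoff until the task finishes."""
    deadline = clock() + timeout
    while True:
        task = get_task(task_id)
        status = task.get("status")
        if status == "ready":   # hypothetical terminal success state
            return task
        if status == "failed":  # hypothetical terminal failure state
            raise RuntimeError(f"task {task_id} failed: {task.get('error')}")
        if clock() >= deadline:
            raise TimeoutError(f"task {task_id} still {status!r} after {timeout}s")
        sleep(interval)
        interval = min(interval * 2, max_interval)  # back off, capped
```

Injecting `sleep` and `clock` keeps the loop testable without waiting; long videos (up to two hours) can take a while, so a generous `timeout` is a sensible default.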

Start with a single video, define your schema, and inspect the output. The fastest way to understand what’s changed is to run it on your own data.

TwelveLabs Team

Pegasus 1.5 is a joint effort across multiple functional groups including:

Science: Kian Kim, Sam Choi, Lia Nam, Henry Oh, Dylan Byeon

Machine Learning Engineering: SJ, Kevin Lee, Wade Jeong

Data: Elton Cho, Haley Kim, Kaylim Kang, Heeyewon Jeong

Product Management: Shannon Hong

1 - From Clip-Based Answers to Structured Video Intelligence

Video is one of the richest forms of information, yet it remains one of the least accessible to software systems. Unlike text or images, meaning in video is not contained in a single moment: it emerges through temporal continuity, multimodal interactions, and causal relationships across time. A sports play unfolds over several seconds, a narrative arc in a film spans minutes, a brand appearance may be visually subtle but contextually significant. To operationalize video at scale, systems must reason not only about what happens, but also when it happens.

This is where time-based metadata becomes essential. Time-based metadata transforms raw video into structured, timestamped data, enabling developers to treat video as a queryable and computable asset. Instead of manually reviewing footage or relying on brittle heuristics, organizations can define what matters to their business (such as editorial segments, sports plays, speaker changes, or brand appearances) and automatically extract these events across entire video libraries.

Earlier versions of Pegasus addressed a different class of problem. Pegasus 1.2 was designed as a video question-answering (QA) system: users provided a clip and asked a question, and the model returned an answer or summary. This paradigm works well for factoid queries or localized understanding. However, it introduces a fundamental limitation for real-world workflows: the user must already know where to look. In large-scale environments (such as media archives, live sports, or streaming catalogs), this assumption does not hold.

As a result, Pegasus 1.2 could not fully support workflows that require systematic segmentation and consistent metadata extraction across entire videos. The absence of model-driven boundary detection meant that users still relied on manual annotation or heuristic preprocessing to define temporal regions of interest.

Pegasus 1.5 represents a fundamental shift. Instead of answering questions about a predefined clip, the model partitions an entire video according to a user-defined schema and labels each segment with structured metadata. This transformation moves video understanding from a retrieval problem to a data generation pipeline, where video becomes a first-class input for analytics, automation, and agentic systems.

2 - The Cost of Unstructured Video

Despite the explosive growth of video content, most organizations still manage it as unstructured media. Traditional approaches rely on manual logging, keyword tagging, or simple shot-detection algorithms. These methods fail to capture the semantic and temporal complexity required for downstream decision-making.

From a systems perspective, the challenge stems from three properties of video:

Temporal Ambiguity: Events do not have explicit boundaries. Determining where a news story begins or where a sports play ends requires contextual reasoning across multiple modalities.

Multimodal Dependence: Meaning arises from the interaction of visual cues, speech, audio signals, and on-screen text.

Schema Variability: Different organizations care about different events, requiring flexible and domain-specific definitions.

Without reliable time-based metadata, video cannot be easily indexed, queried, or integrated into data pipelines, limiting its value for automation and analytics.

The operational gap between raw video and structured data can be illustrated through the following comparison:

Diagram 1: Traditional Workflow

Diagram 2: Pegasus 1.5 Workflow

By allowing developers to define a vocabulary of events, Pegasus 1.5 shifts the burden of temporal reasoning from humans to the model, enabling scalable and consistent metadata extraction.

In media & entertainment, editorial teams must segment long-form content into narrative units (such as scenes, topics, or character appearances) to support archiving, recommendation, and monetization. With Pegasus 1.5, they can define schemas for editorial segments and automatically extract structured metadata across entire catalogs. This enables semantic search, automated highlight generation, and efficient content reuse.

In sports analytics, identifying and labeling plays is labor-intensive and time-sensitive, often requiring domain experts to review entire games. With Pegasus 1.5, analysts can define schemas for plays (such as goals, fouls, or turnovers) and automatically detect each instance with precise temporal boundaries. This supports real-time highlight creation and performance analytics.

Streaming platforms must identify brand appearances, scene transitions, and contextual moments to enable targeted advertising and content monetization. With Pegasus 1.5, they can define schemas for brand visibility or contextual triggers, allowing automatic detection of monetizable moments across vast libraries.
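For the sports case, once plays come back as structured segments, simple analytics become ordinary data processing. This sketch assumes each scoring-play segment carries the `points_scored`, `shot_type`, and `scoring_team` fields from the basketball request in Section 3, in the `{start_time, end_time, metadata}` shape described there; the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Maps the `points_scored` enum values from the example schema to integers.
POINTS = {"1pt": 1, "2pt": 2, "3pt": 3}


def points_by_team(scoring_plays: List[Dict]) -> Dict[str, int]:
    """Sum points per team across all scoring-play segments."""
    totals: Counter = Counter()
    for seg in scoring_plays:
        meta = seg["metadata"]
        totals[meta["scoring_team"]] += POINTS[meta["points_scored"]]
    return dict(totals)


def plays_of_type(scoring_plays: List[Dict], shot_type: str) -> List[Tuple[float, float]]:
    """Return (start, end) spans for every play with the given shot type."""
    return [(s["start_time"], s["end_time"])
            for s in scoring_plays if s["metadata"].get("shot_type") == shot_type]


plays = [
    {"start_time": 12.0, "end_time": 18.5,
     "metadata": {"points_scored": "3pt", "shot_type": "jump_start", "scoring_team": "Home"}},
    {"start_time": 40.0, "end_time": 44.0,
     "metadata": {"points_scored": "2pt", "shot_type": "dunk", "scoring_team": "Away"}},
    {"start_time": 71.0, "end_time": 75.0,
     "metadata": {"points_scored": "2pt", "shot_type": "layup", "scoring_team": "Home"}},
]
```

Because every value comes from a declared enum, aggregations like these need no free-text parsing.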

3 - Technical Foundations: Defining Time-Based Metadata

Time-based metadata (TBM) refers to structured information associated with specific temporal segments of a video. Each segment is defined by precise start and end timestamps and is enriched with metadata fields that conform to a user-defined schema.

Formally, a TBM output can be represented as:

Segment = { start_time: float, end_time: float, metadata: { key: value, ... } }
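In code, the same structure maps naturally onto a small typed record. This Python sketch is illustrative rather than an official client type: it assumes timestamps are seconds from the start of the video and rejects spans whose end does not come after their start.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass(frozen=True)
class Segment:
    start_time: float  # assumed: seconds from the start of the video
    end_time: float
    metadata: Dict[str, Any] = field(default_factory=dict)

    def __post_init__(self) -> None:
        if self.start_time < 0 or self.end_time <= self.start_time:
            raise ValueError(f"invalid span [{self.start_time}, {self.end_time})")

    @property
    def duration(self) -> float:
        return self.end_time - self.start_time

    @classmethod
    def from_dict(cls, raw: Dict[str, Any]) -> "Segment":
        """Build a Segment from a decoded JSON object."""
        return cls(float(raw["start_time"]), float(raw["end_time"]), dict(raw.get("metadata", {})))
```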

A complete analysis consists of a set of non-overlapping segments for each semantic definition provided by the user. This structure enables deterministic integration with downstream systems such as search indexes, analytics platforms, and agentic workflows.
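Reading "non-overlapping" as applying within each definition (so a scoring play may overlap a camera-cut segment, but two scoring plays may not), a downstream consumer could check the constraint as follows. The function name and the `{definition_id: [(start, end), ...]}` input shape are assumptions for illustration.

```python
from typing import Dict, List, Tuple

Span = Tuple[float, float]


def find_overlaps(segments_by_definition: Dict[str, List[Span]]) -> List[Tuple[str, Span, Span]]:
    """Report segments that overlap an earlier segment of the same definition.

    Segments from different definitions are allowed to overlap, and touching
    spans (one ends exactly where the next starts) are not conflicts.
    """
    conflicts = []
    for def_id, spans in segments_by_definition.items():
        furthest = None  # span with the latest end time seen so far
        for span in sorted(spans):
            if furthest is not None and span[0] < furthest[1]:
                conflicts.append((def_id, furthest, span))
            if furthest is None or span[1] > furthest[1]:
                furthest = span
    return conflicts
```

Tracking the furthest-reaching span, rather than only the previous one, also catches a short segment that sits inside an earlier long one.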

Pegasus 1.5 introduces a schema-first interaction model through the /analyze API. Instead of prompting the model with open-ended questions, developers define segment definitions that describe:

What constitutes a segment (semantic description)

Which metadata fields to extract

Optional constraints such as duration or contextual references

This design keeps outputs consistent, deterministic, and ready to integrate into production environments.
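Since segment definitions are plain structured data, they can also be generated programmatically, for example from a shared catalog of event types. This is a hypothetical Python helper whose `to_dict` output mirrors the shape of the `segment_definitions` entries in the request below; the class and function names are not part of any TwelveLabs SDK.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class FieldSpec:
    name: str
    description: str
    type: str = "string"
    enum: Optional[List[str]] = None  # omit for free-text fields

    def to_dict(self) -> Dict[str, Any]:
        out: Dict[str, Any] = {"name": self.name, "type": self.type, "description": self.description}
        if self.enum is not None:
            out["enum"] = list(self.enum)
        return out


@dataclass
class SegmentDefinition:
    id: str
    description: str
    fields: List[FieldSpec] = field(default_factory=list)

    def to_dict(self) -> Dict[str, Any]:
        return {
            "id": self.id,
            "description": self.description,
            "fields": [f.to_dict() for f in self.fields],
        }


def response_format(definitions: List[SegmentDefinition]) -> Dict[str, Any]:
    """Wrap definitions in a `response_format` object shaped like the request below."""
    return {"type": "segment_definitions", "segment_definitions": [d.to_dict() for d in definitions]}


camera_cut = SegmentDefinition(
    id="camera_cut",
    description="Segment any time only when there is a hard cut in the camera. Otherwise continue the current segment.",
    fields=[FieldSpec("camera_angle", "Angle of the current camera.", enum=["high", "low", "medium"])],
)
payload = response_format([camera_cut])
```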

This is an example /analyze API request for the basketball video above:

```json
{
  "model_name": "pegasus1.5",
  "analysis_mode": "time_based_metadata",
  "video": {
    "type": "url",
    "url": "https://example.com/video.mp4"
  },
  "response_format": {
    "type": "segment_definitions",
    "segment_definitions": [
      {
        "id": "non_gameplay_footage",
        "description": "Generate segments only when the content on screen IS NOT actual gameplay.",
        "fields": [
          {
            "name": "description",
            "type": "string",
            "description": "A rich long description of the non-gameplay footage."
          }
        ]
      },
      {
        "id": "scoring_plays",
        "description": "Segment any time a team scores points. The segment should be the entire scoring play.",
        "fields": [
          {
            "name": "points_scored",
            "type": "string",
            "description": "How many points were scored during the play.",
            "enum": ["2pt", "1pt", "3pt"]
          },
          {
            "name": "shot_type",
            "type": "string",
            "description": "The shot type from the scoring play.",
            "enum": ["jump_start", "layup", "dunk", "foul_shot"]
          },
          {
            "name": "scoring_team",
            "type": "string",
            "description": "Name of the team that scored."
          }
        ]
      },
      {
        "id": "camera_cut",
        "description": "Segment any time only when there is a hard cut in the camera. Otherwise continue the current segment.",
        "fields": [
          {
            "name": "camera_angle",
            "type": "string",
            "description": "Angle of the current camera.",
            "enum": ["high", "low", "medium"]
          }
        ]
      }
    ]
  },
  "temperature": 0,
  "min_segment_duration": 2
}
```
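Because the request declares enums and a minimum duration, a client can sanity-check returned segments against it. The sketch below uses a trimmed excerpt of the request above and assumes a response shaped as `{definition_id: [segment, ...]}` with `min_segment_duration` in seconds; both are assumptions, so adjust it to the actual response structure.

```python
from typing import Any, Dict, List

# Trimmed excerpt of the basketball request (descriptions omitted).
REQUEST = {
    "response_format": {
        "type": "segment_definitions",
        "segment_definitions": [
            {
                "id": "scoring_plays",
                "fields": [
                    {"name": "points_scored", "type": "string", "enum": ["2pt", "1pt", "3pt"]},
                    {"name": "shot_type", "type": "string", "enum": ["jump_start", "layup", "dunk", "foul_shot"]},
                    {"name": "scoring_team", "type": "string"},
                ],
            },
        ],
    },
    "min_segment_duration": 2,
}


def validate_segments(request: Dict[str, Any], response: Dict[str, List[Dict[str, Any]]]) -> List[str]:
    """Return human-readable problems; an empty list means every check passed."""
    definitions = {d["id"]: d for d in request["response_format"]["segment_definitions"]}
    min_duration = request.get("min_segment_duration", 0)  # assumed to be seconds
    problems: List[str] = []
    for def_id, segments in response.items():
        if def_id not in definitions:
            problems.append(f"{def_id}: not a requested segment definition")
            continue
        specs = {f["name"]: f for f in definitions[def_id]["fields"]}
        for k, seg in enumerate(segments):
            length = seg["end_time"] - seg["start_time"]
            if length < min_duration:
                problems.append(f"{def_id}[{k}]: {length:g}s is shorter than {min_duration}s")
            for name, value in seg.get("metadata", {}).items():
                spec = specs.get(name)
                if spec is None:
                    problems.append(f"{def_id}[{k}]: unexpected field {name!r}")
                elif "enum" in spec and value not in spec["enum"]:
                    problems.append(f"{def_id}[{k}]: {name}={value!r} not in {spec['enum']}")
    return problems
```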

And this is an example response structure: