
The Design Choices Behind Indexing 3.0: Consolidation, Packaging, Execution, and Deployment

Stu Stewart, Abraham Jo, SJ Kim, Paritosh Mohan

Indexing 3.0 represents a fundamental architectural shift from a microservices mesh to streamlined layers, where consolidation reduces coordination overhead, a unifying execution layer handles distribution, and deployment becomes a swappable property rather than a defining constraint. The redesign prioritizes developer velocity and operational simplicity by treating indexing as a coherent, portable system that can run across cloud, on-prem, and constrained environments without architectural forks. By moving from infrastructure-coupled services to a layered model with explicit resource semantics, TwelveLabs built a foundation where future work can focus on advancing video understanding capabilities rather than untangling deployment complexity.


March 25, 2026

13 Minutes


Part 1 showed why our previous indexing platform hit a scaling ceiling even though it “worked”: the limiting factor wasn’t raw model throughput, but the operational and organizational overhead of a microservices-heavy system with infrastructure assumptions baked into the application. As the team and product surfaces grew, coordination costs, cross-service debugging, and deployment coupling started to dominate day-to-day engineering velocity. The core reframe was to treat indexing as a coherent, portable system: moving from a microservices mesh to streamlined layers where application logic stays readable, an execution layer handles distribution, and infrastructure becomes swappable rather than defining. That layered view makes it easier to reason about the end-to-end path from video → reusable representations → downstream search/generation, without re-deriving behavior from control-plane interactions.

In Part 2, we will unpack the concrete design choices that made that reframe real: why consolidation improved extensibility instead of reducing it, what “single container” means as a packaging constraint, what you gain (and pay) when you bet on a unifying execution layer, and how we designed for multiple deployment environments without fragmenting the platform.

1 - Consolidation as an enabler, not an ideology

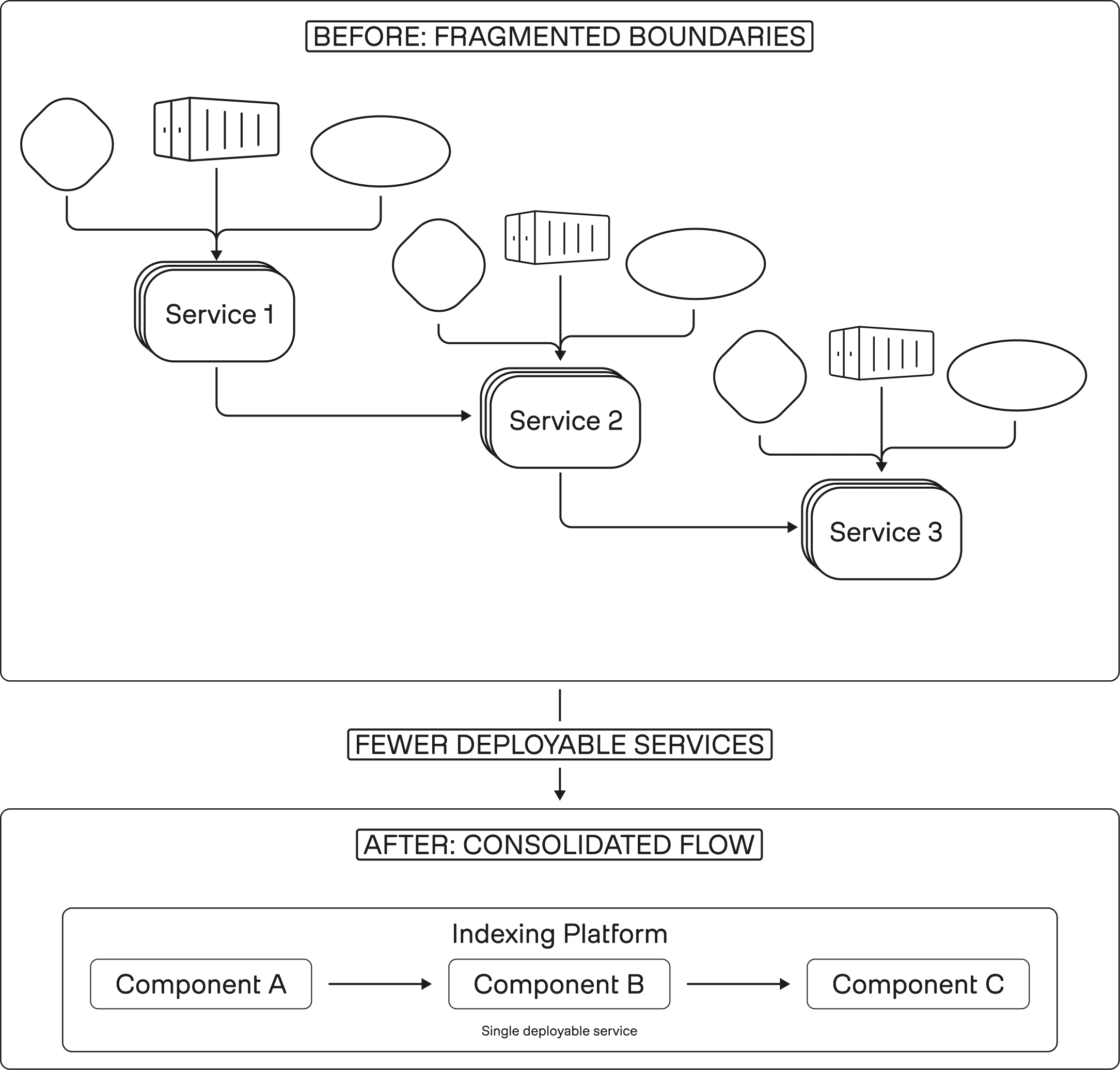

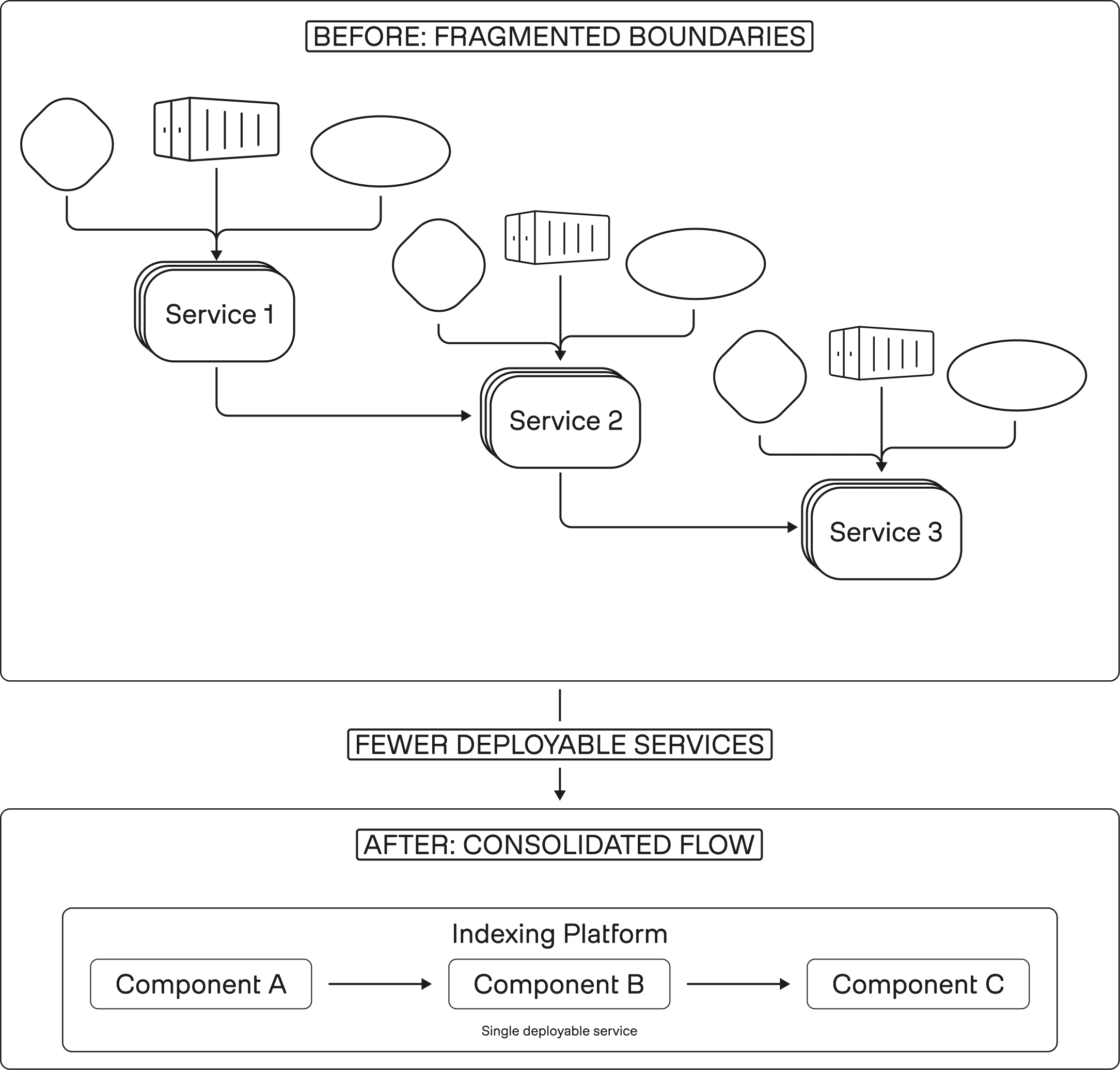

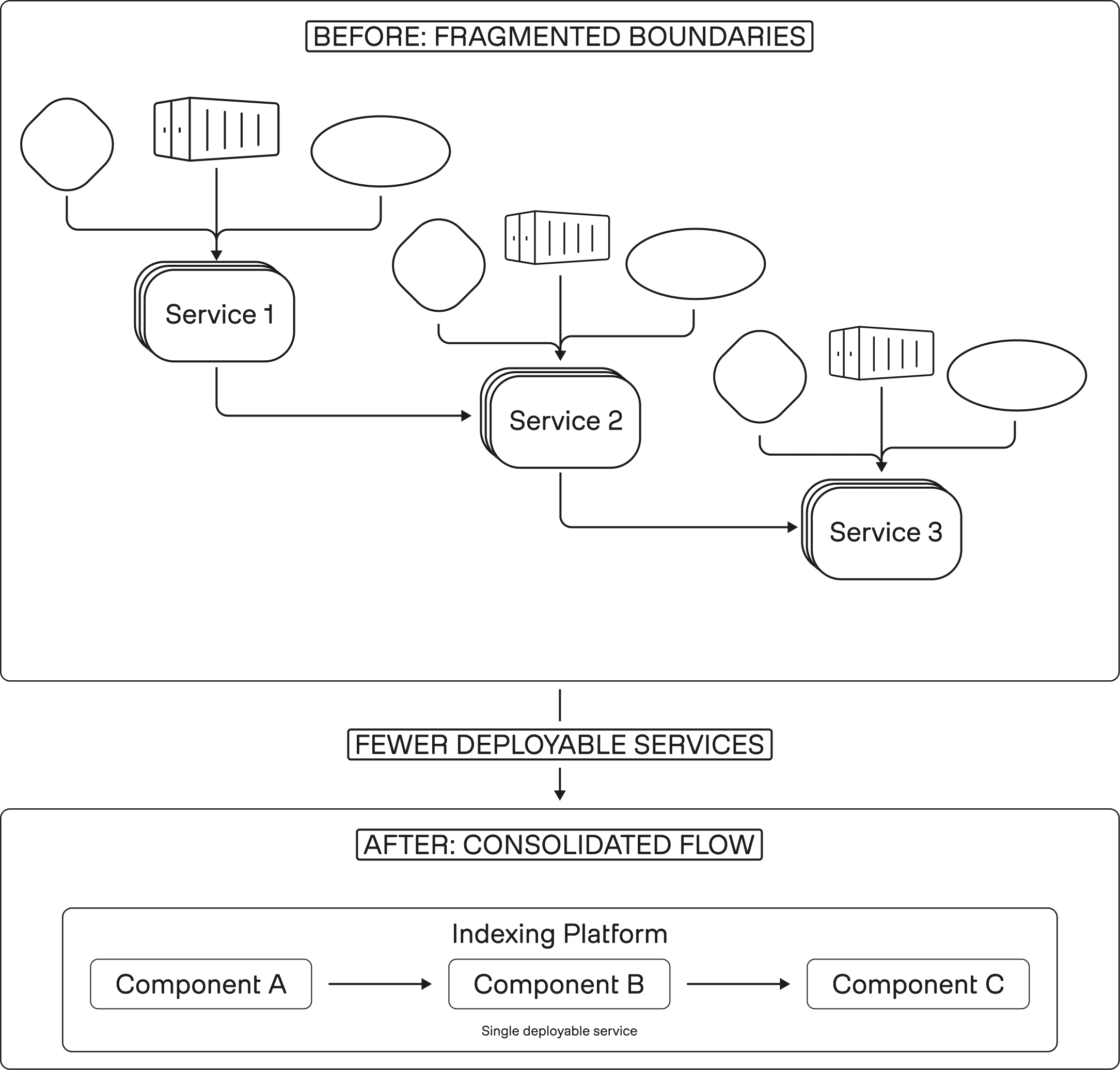

Figure 1: Fewer boundaries, faster reasoning

We argued in Part 1 that the real tax in Indexing 2.0 was not “lack of compute.” It was the accumulation of boundaries: every stage turned into its own service contract, deployment artifact, and failure mode to reason about. Once each step became a separate service boundary, the system became harder to debug, harder to package, and harder to evolve.

Indexing 3.0 uses consolidation as a lever to reduce that boundary surface area. This is not “monolith for the sake of it.” The point is to keep the system modular where it matters, but stop paying coordination overhead where it does not buy you real independence.

Why fewer boundaries improved, rather than limited, flexibility

Flexibility in production ML systems does not come from having the most boxes. It comes from being able to change behavior without synchronizing half the fleet.

Consolidation helped by:

Reducing the number of cross-service data contracts that must remain stable under change.

Collapsing “glue code” that exists only to translate between runtimes, tracing systems, and deployment pipelines.

Making it easier to reason about the end-to-end indexing path as one coherent flow.

Consolidation as a developer experience multiplier

We called out in Part 1 that language and runtime boundaries (Go for some plumbing, Python for ML workflows) created friction because the interface was not always clean.

Consolidation is how you retire that friction.

In practice, it improves:

Debugging: fewer places where an error can be serialized, wrapped, retried, and lose context.

Profiling: easier to trace latency and utilization across stages without stitching logs across services.

Onboarding: engineers can follow one code path and build a mental model faster.

A narrower surface area, by design

Consolidation only works if the system’s purpose stays bounded. Indexing is a transformation layer: video in, reusable representations out. When you keep that scope clear, consolidation reduces accidental complexity without turning the codebase into a catch-all.

2 - Packaging rethink: what “single container” actually means

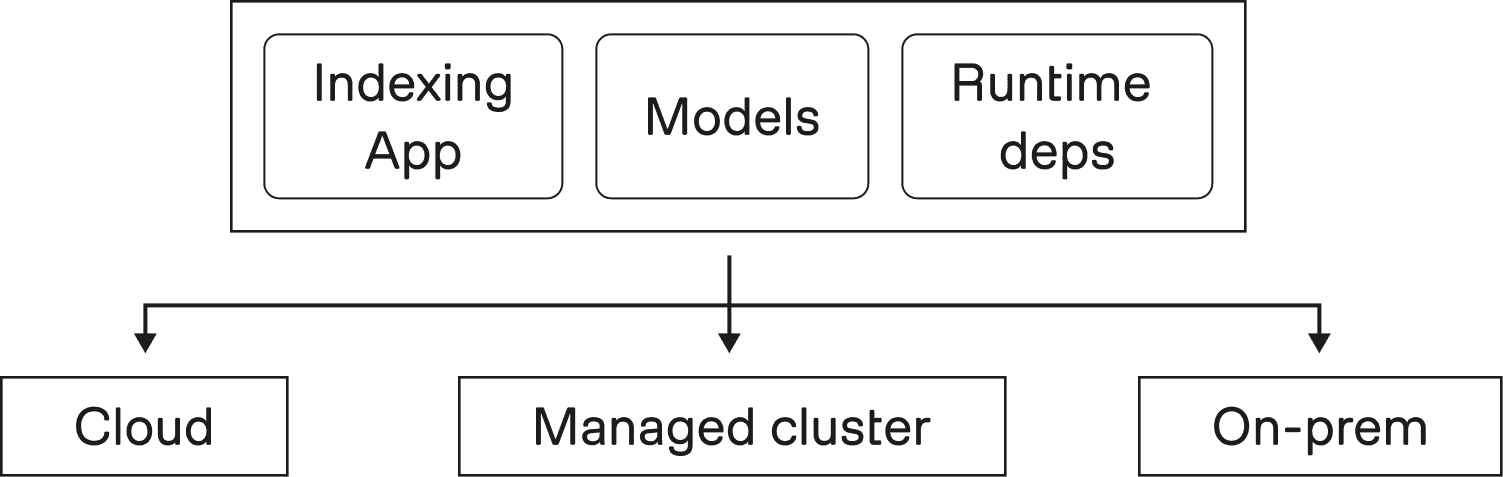

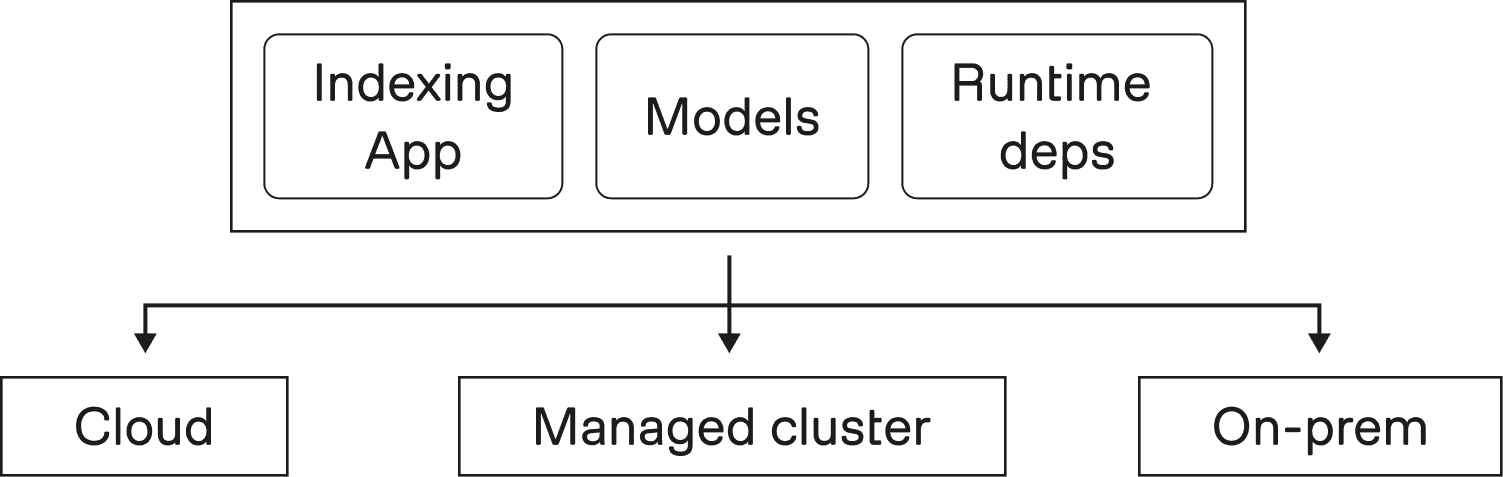

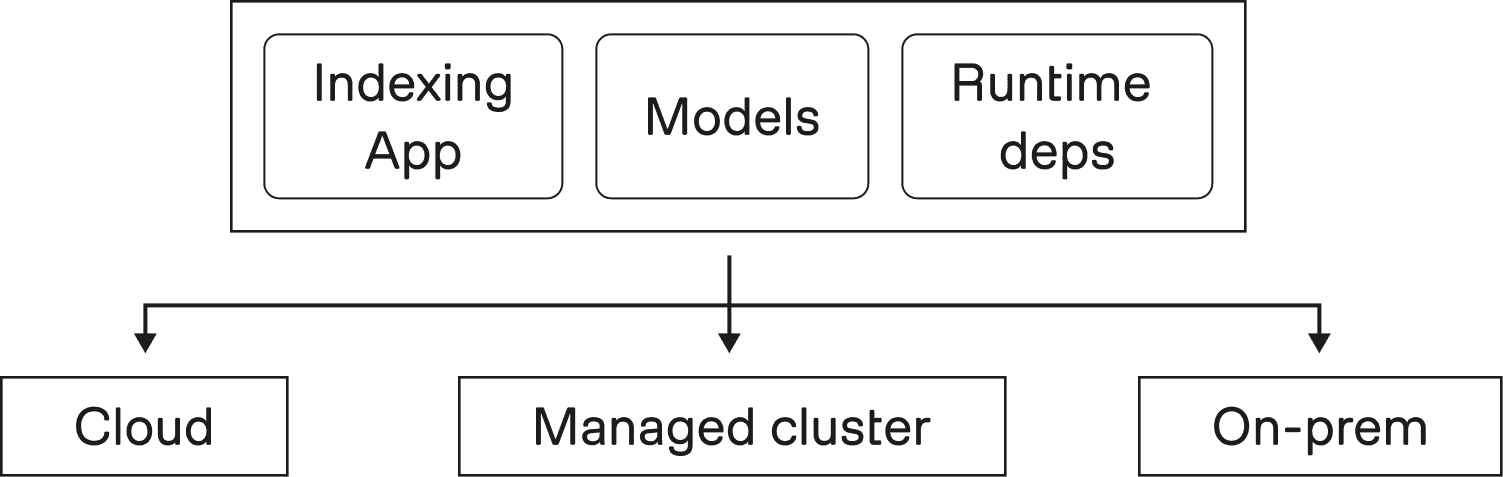

Figure 2: Runs as a self-contained unit across environments

As argued in Part 1, deployment flexibility showed up as a first-order constraint, not a nice-to-have. Some environments look like “full platform,” others look like “a container runtime and a few knobs.” Indexing 3.0 needed to run across that spectrum.

“Single container” is the packaging shorthand for that constraint.

“Single container” is about decoupling, not compression

The goal is not to stuff every component into one oversized image. The goal is to make the indexing system runnable without depending on infrastructure-specific control plane primitives.

A practical definition is:

The system can be deployed as a self-contained unit.

It does not require Kubernetes APIs (or similar) to perform core orchestration.

It can run in minimal environments without architectural forks.

This aligns with our core framing: deployment becomes a property of the bottom layer, not a constraint that leaks into application logic.

Moving orchestration into the application

In a microservices mesh, orchestration tends to live outside the application. It is spread across routing, controllers, and platform behavior.

In the layered model from Part 1, orchestration moves inward:

The indexing application defines “what should happen.”

The execution layer runs it and scales it.

The infrastructure is the substrate.

That inversion is what makes portability possible, because the system is no longer anchored to a single operating environment.
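The three-layer split above can be sketched in a few lines. This is a minimal illustration, not our production code: the stage functions, `PIPELINE`, and both executor classes are hypothetical names, and a thread pool stands in for a real distributed runtime.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List, Protocol

Stage = Callable[[str], str]


# Application layer: declares what should happen, with no reference to
# where or how it runs. The stage bodies are hypothetical placeholders.
def decode(item: str) -> str:
    return f"frames({item})"


def embed(item: str) -> str:
    return f"embedding({item})"


PIPELINE: List[Stage] = [decode, embed]


def apply_stages(stages: List[Stage], item: str) -> str:
    for stage in stages:
        item = stage(item)
    return item


class ExecutionLayer(Protocol):
    """Execution layer: decides how work is distributed and scaled."""

    def run(self, stages: List[Stage], items: Iterable[str]) -> List[str]: ...


class InlineExecutor:
    """Runs everything sequentially, for example during local development."""

    def run(self, stages: List[Stage], items: Iterable[str]) -> List[str]:
        return [apply_stages(stages, item) for item in items]


class ThreadedExecutor:
    """Runs items concurrently; a stand-in for a distributed runtime."""

    def __init__(self, workers: int = 4) -> None:
        self.workers = workers

    def run(self, stages: List[Stage], items: Iterable[str]) -> List[str]:
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            # pool.map preserves input order in its results.
            return list(pool.map(lambda item: apply_stages(stages, item), items))


def index_videos(executor: ExecutionLayer, videos: List[str]) -> List[str]:
    # The application code is identical regardless of the executor chosen.
    return executor.run(PIPELINE, videos)
```

Swapping `InlineExecutor` for `ThreadedExecutor` changes how the work is run without touching `PIPELINE` or `index_videos`, which is the property the layering is meant to preserve.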

Why this mattered more than it first appeared

Packaging is not only about where you can run. It also affects:

How easy local development is.

How consistent behavior is across environments.

How much operational surface area new deployments inherit.

When packaging gets simpler, everything downstream gets simpler too: rollout, debugging, support, and onboarding.

3 - Choosing a unifying execution layer: promise vs reality

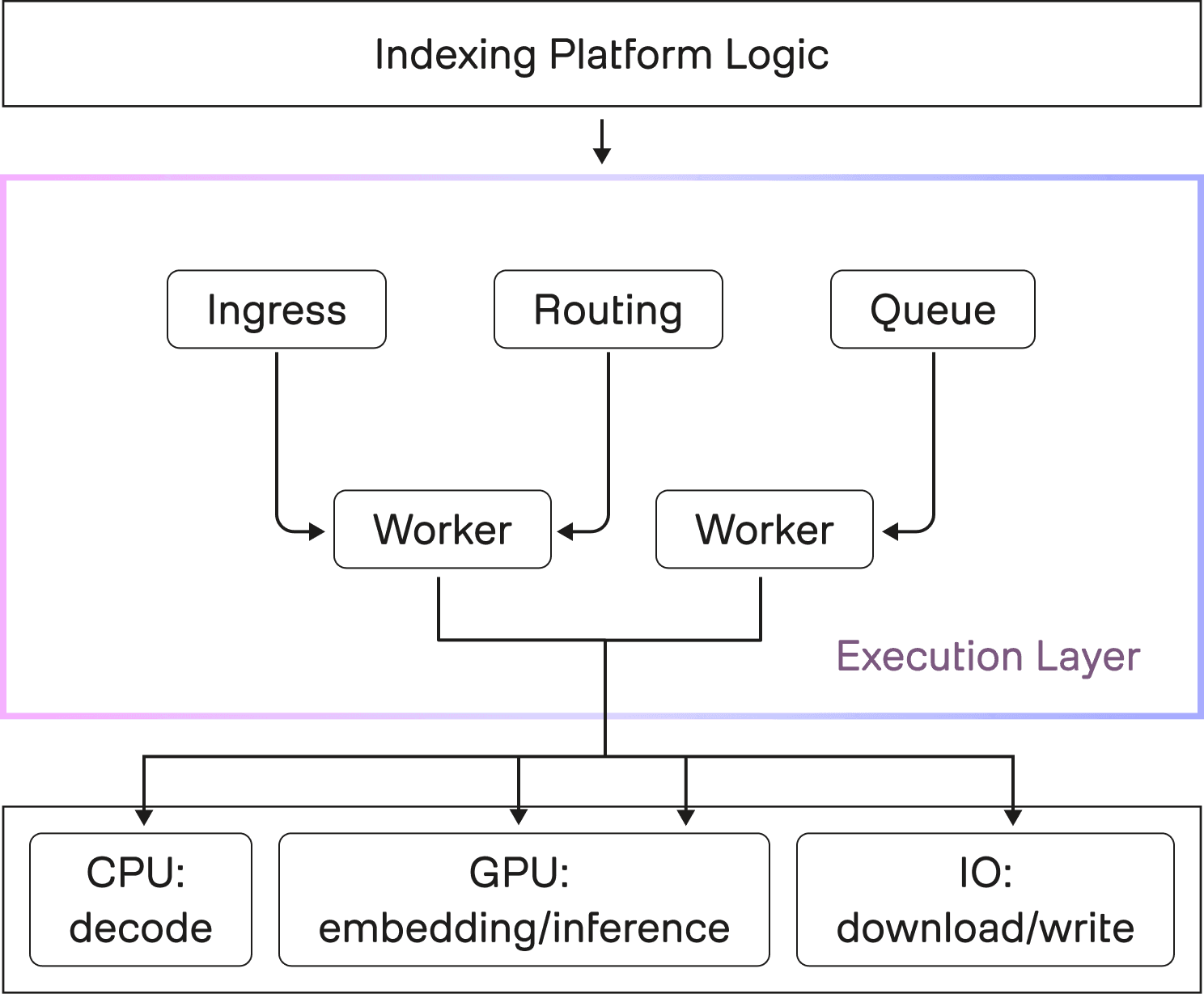

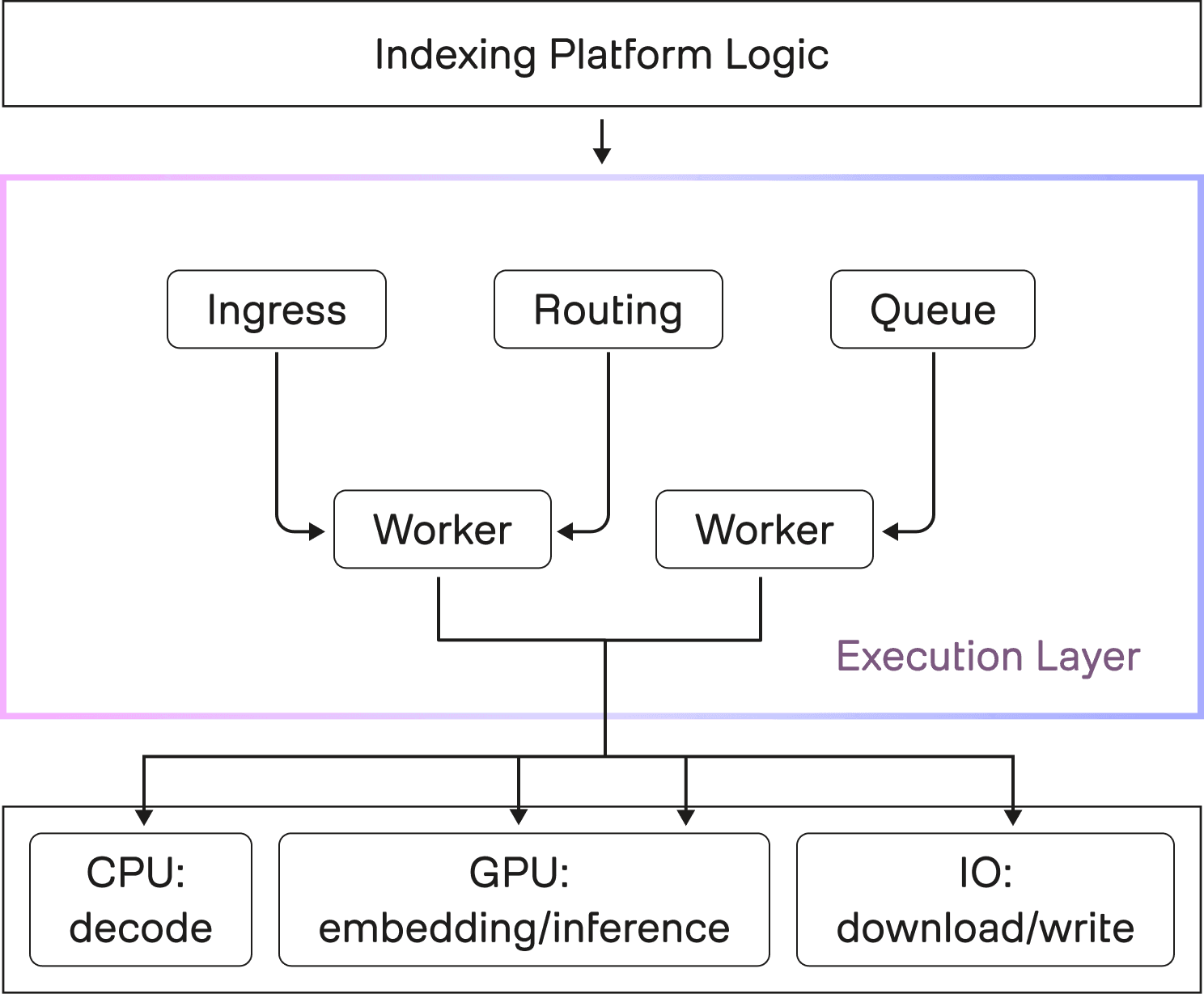

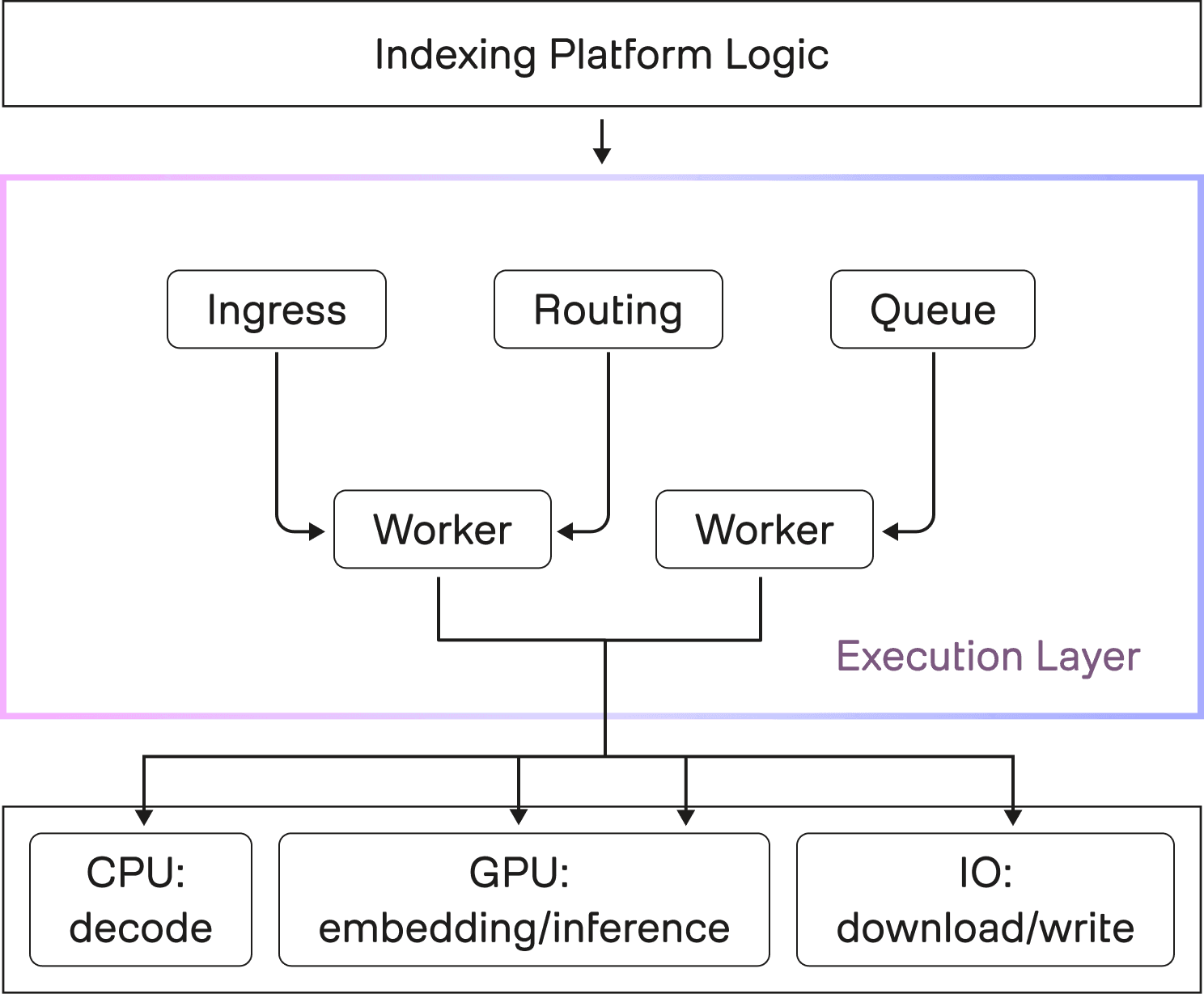

Figure 3: One substrate for routing, scaling, and reliability

Part 1 framed Indexing 3.0 as a shift from a microservices mesh to streamlined layers, where application logic stays coherent, an execution layer handles distribution, and the infrastructure layer becomes swappable.

This section is the uncomfortable middle of that story: what it actually means to pick an execution layer that sits between “our indexing logic” and “whatever compute the customer has.” We chose Ray Serve (with Anyscale as our managed provider for Ray Core and Ray Serve) as that unifying layer. The decision delivered real leverage, but it also came with costs that are worth calling out directly.

The promise of one abstraction

The core promise of a unifying execution layer is simple: it gives you a single place to express concurrency, scaling, and fault handling, instead of rebuilding those mechanisms across a growing collection of services.

Ray Serve’s serving model makes that promise concrete:

Requests enter through an HTTP (or gRPC) proxy, get queued and routed to an available replica, then executed by replica actors that run user code.

Serve supports multiple proxies for scalability and high availability, not just a single ingress point.

The same request path applies even when services call each other via DeploymentHandles for model composition, which means internal composition can share routing and batching behavior.

If you are building an indexing platform, this is attractive because indexing is not one model call. It is a multi-stage flow, and each stage needs concurrency control and failure semantics. A unifying execution layer is the difference between “we stitched this together with many ad hoc schedulers” and “we have one scheduling substrate we can standardize on.”
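To make "each stage needs concurrency control and failure semantics" concrete, here is a minimal sketch using only Python's standard library. It is not Ray Serve's API: `StageRunner`, the stage coroutines, and the retry policy are hypothetical, and the `embed` stage fails once per item purely to exercise the retry path.

```python
import asyncio
from typing import Awaitable, Callable, List, Set, Tuple


class StageRunner:
    """Caps in-flight calls for one stage and retries transient failures."""

    def __init__(self, name: str, max_concurrency: int, max_retries: int = 2) -> None:
        self.name = name
        self.max_retries = max_retries
        self._limit = asyncio.Semaphore(max_concurrency)
        self.in_flight = 0
        self.peak_in_flight = 0

    async def call(self, fn: Callable[[str], Awaitable[str]], item: str) -> str:
        async with self._limit:
            self.in_flight += 1
            self.peak_in_flight = max(self.peak_in_flight, self.in_flight)
            try:
                for attempt in range(self.max_retries + 1):
                    try:
                        return await fn(item)
                    except RuntimeError:
                        # Re-raise only once the retry budget is exhausted.
                        if attempt == self.max_retries:
                            raise
            finally:
                self.in_flight -= 1


async def decode(item: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for real decode work
    return f"frames({item})"


_failed_once: Set[str] = set()


async def embed(item: str) -> str:
    # Hypothetical transient failure: the first attempt per item fails.
    if item not in _failed_once:
        _failed_once.add(item)
        raise RuntimeError("transient failure")
    return f"embedding({item})"


async def index_all(videos: List[str]) -> Tuple[List[str], int]:
    # Runners are created inside the running event loop.
    decode_stage = StageRunner("decode", max_concurrency=2)
    embed_stage = StageRunner("embed", max_concurrency=1)

    async def index_one(video: str) -> str:
        frames = await decode_stage.call(decode, video)
        return await embed_stage.call(embed, frames)

    results = await asyncio.gather(*(index_one(v) for v in videos))
    return list(results), decode_stage.peak_in_flight
```

Every stage here carries its own limit and retry logic. The appeal of a unifying execution layer is that this kind of mechanism is expressed once, in one place, rather than reimplemented per service.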

It also aligns with the packaging and deployment driver from Part 1. If the orchestration logic is inside an execution layer, not spread across Kubernetes controllers and microservice glue, you get closer to a system that can run across different environments without rewriting the architecture.

The reality of indexing workloads

The part that is easy to underestimate is that an execution layer is not free. It introduces its own request path, its own control plane, and its own performance characteristics. Ray Serve’s own docs call out that the most common issues are high latency and low throughput in the request path, and they surface specific router and processing latency metrics to watch.

For video indexing, that matters because the workload shape tends to multiply overhead.

Reality #1: request routing overhead is real, and it can be meaningful

Ray Serve’s architecture routes requests through a proxy, queues them, and then selects an available replica before execution. This is a sensible default for general serving, but it means there is framework work happening even before your code runs.

If your application has lots of “small hops” between stages, that routing overhead can become a measurable part of end-to-end latency. The abstraction helps you compose systems, but it also means you need to budget for the cost of composition.

This is not hypothetical. Ray’s performance tuning guidance explicitly discusses diagnosing request-path bottlenecks and points to router throughput and queuing latency as signals of trouble. And the Ray ecosystem continues to invest in request routing as a first-class performance lever, including new support for custom routing to reduce latency in demanding serving workloads.

The practical takeaway for indexing is: the framework overhead is part of the system. You have to measure it, profile it, and design around it, especially when your workload includes many short jobs where overhead is a larger fraction of total time. That exact “short-video setup tax” dynamic was one of the breaking points described in Part 1.
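A back-of-the-envelope calculation shows why short jobs feel this most. The sketch below assumes a fixed per-hop cost; the hop count and the 20 ms figure are hypothetical values chosen for illustration, not measurements from our system or from Ray Serve.

```python
def overhead_fraction(work_s: float, hops: int, per_hop_overhead_s: float) -> float:
    """Fraction of end-to-end time spent on framework overhead rather than work."""
    if work_s < 0 or hops < 0 or per_hop_overhead_s < 0:
        raise ValueError("inputs must be non-negative")
    overhead_s = hops * per_hop_overhead_s
    total_s = overhead_s + work_s
    return overhead_s / total_s if total_s > 0 else 0.0


# Hypothetical numbers: 5 stage-to-stage hops at 20 ms of routing and queuing each.
short_clip = overhead_fraction(work_s=0.4, hops=5, per_hop_overhead_s=0.02)
long_video = overhead_fraction(work_s=99.9, hops=5, per_hop_overhead_s=0.02)
print(f"short clip: {short_clip:.1%}, long video: {long_video:.1%}")
```

Under these assumptions the same 100 ms of routing is roughly a fifth of the short clip's total time and roughly a thousandth of the long video's, which is why the overhead has to be measured per workload shape rather than in aggregate.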

Reality #2: video decoding is core, and it is often CPU-bound

It is easy to talk about video indexing as “GPU inference plus some glue.” In practice, decoding is one of the most consequential parts of the pipeline, and it can be heavily CPU-bound depending on codec, format, and whether hardware decode is available.

NVIDIA’s NVDEC documentation makes the trade-off explicit: when decoding is offloaded to NVDEC, the CPU is freed for other work. That is another way of saying the default path without that offload consumes meaningful CPU.

Even in modern PyTorch ecosystem tooling, CUDA decoding is positioned as an acceleration path because it can be faster than CPU decoding for the decode step. But there is an important caveat: CUDA decoding can fall back to CPU decoding when the codec or format is not supported by the hardware decoder.

For an indexing platform, that means CPU capacity is not optional background noise. It can be a primary bottleneck, even when the “headline stage” is a GPU model. You can also end up with the worst kind of utilization: GPUs idle because CPU decode is saturated. This is why “heterogeneous resource profiles” is not an abstract point. Decode alone can force you to treat CPU as a first-class scheduling dimension.
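The "GPUs idle because CPU decode is saturated" case follows from simple steady-state arithmetic: a pipeline runs at the rate of its slowest stage. The sketch below is a simplified model with hypothetical worker counts and rates, and it ignores batching, queuing, and variance.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class StageCapacity:
    name: str
    workers: int
    videos_per_worker_per_s: float

    @property
    def capacity(self) -> float:
        """Maximum sustained rate this stage can process, in videos per second."""
        return self.workers * self.videos_per_worker_per_s


def pipeline_throughput(stages: List[StageCapacity]) -> float:
    # In steady state, the slowest stage bounds the whole pipeline.
    return min(stage.capacity for stage in stages)


def utilization(stages: List[StageCapacity]) -> Dict[str, float]:
    rate = pipeline_throughput(stages)
    return {stage.name: rate / stage.capacity for stage in stages}


# Hypothetical fleet: 8 CPU decode workers and 2 GPU embedding workers.
stages = [
    StageCapacity("decode_cpu", workers=8, videos_per_worker_per_s=0.5),
    StageCapacity("embed_gpu", workers=2, videos_per_worker_per_s=5.0),
]
print(pipeline_throughput(stages), utilization(stages))
```

With these illustrative numbers, decode tops out at 4 videos per second while the GPUs could handle 10, so the GPU stage sits at about 40% utilization no matter how fast the model is. Adding decode capacity, not GPUs, is what moves throughput.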

Reality #3: heterogeneous stages require explicit resource modeling

Indexing workloads are resource-heterogeneous by default:

download and IO phases

decode phases that can be CPU heavy

embedding phases that may be GPU heavy

post-processing phases that can swing between CPU and memory pressure

A unifying execution layer helps only if you model those requirements explicitly. Ray Core supports specifying logical resource requirements like CPU and GPU for tasks and actors, and schedules them only on nodes with sufficient resources. That capability is essential when you want to avoid the failure mode where one stage silently starves another, or where concurrency grows faster than the bottleneck stage can support.
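In Ray, these requirements are declared with options such as `num_cpus` and `num_gpus` on tasks and actors. The toy scheduler below is not Ray's implementation. It is a standard-library sketch of the underlying idea, with hypothetical node names and request sizes: work is placed only where its declared resources fit, and it stays pending otherwise.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

Resources = Dict[str, float]


@dataclass
class Node:
    name: str
    available: Resources

    def fits(self, request: Resources) -> bool:
        return all(self.available.get(kind, 0.0) >= amount
                   for kind, amount in request.items())

    def reserve(self, request: Resources) -> None:
        for kind, amount in request.items():
            self.available[kind] = self.available.get(kind, 0.0) - amount


def place(nodes: List[Node], request: Resources) -> Optional[str]:
    """Return the name of the first node that fits, or None if the task must wait."""
    for node in nodes:
        if node.fits(request):
            node.reserve(request)
            return node.name
    return None


# Hypothetical cluster and per-stage requests.
nodes = [
    Node("cpu-node", {"CPU": 8}),
    Node("gpu-node", {"CPU": 4, "GPU": 1}),
]
DECODE: Resources = {"CPU": 4}
EMBED: Resources = {"CPU": 1, "GPU": 1}

placements = [
    place(nodes, EMBED),   # lands on the only node with a GPU
    place(nodes, DECODE),  # first decode fits on cpu-node
    place(nodes, DECODE),  # second decode fills cpu-node
    place(nodes, DECODE),  # no node has 4 free CPUs left, so it waits
]
print(placements)
```

Because the decode request declares its CPU needs up front, the fourth task waits instead of oversubscribing a node and slowing the embedding stage that shares it.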

This is also where the “streamlined layers” reframe in Part 1 becomes operationally real. You are not only layering code, you are layering resource semantics: the indexing platform expresses the flow, the execution layer enforces concurrency and placement, and the infrastructure layer supplies the physical substrate.

The lesson: abstractions do not eliminate work, they relocate it

A strong execution layer does not eliminate engineering work. It relocates it. Instead of writing bespoke orchestration code, you spend more time understanding the request path, instrumenting and tuning it, and making framework behavior match the shape of your workload.

There is also a second lesson we learned that is worth stating explicitly: if you bet on a unifying execution layer early, treat the platform team behind it as an extension of your team.

Ray Serve and Anyscale gave us leverage, but we also ran into real edge cases that only appear under production-grade video workloads. We worked closely with the Ray Serve team at Anyscale to identify bottlenecks, provide reproducible cases, and iterate on fixes. Some issues were rough at first, but the collaboration is part of what made the bet viable over time. In a fast-moving serving stack, the relationship is not an afterthought. It is part of the architecture.

4 - Supporting multiple deployment environments without disruption

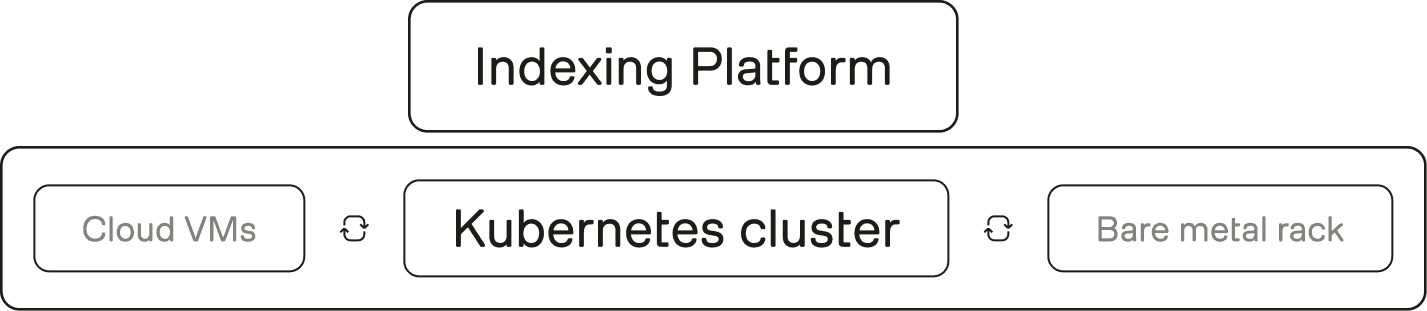

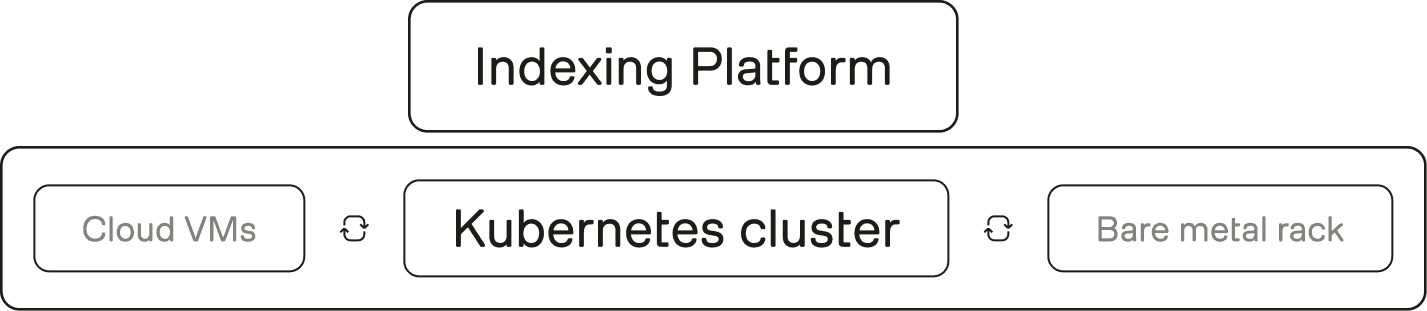

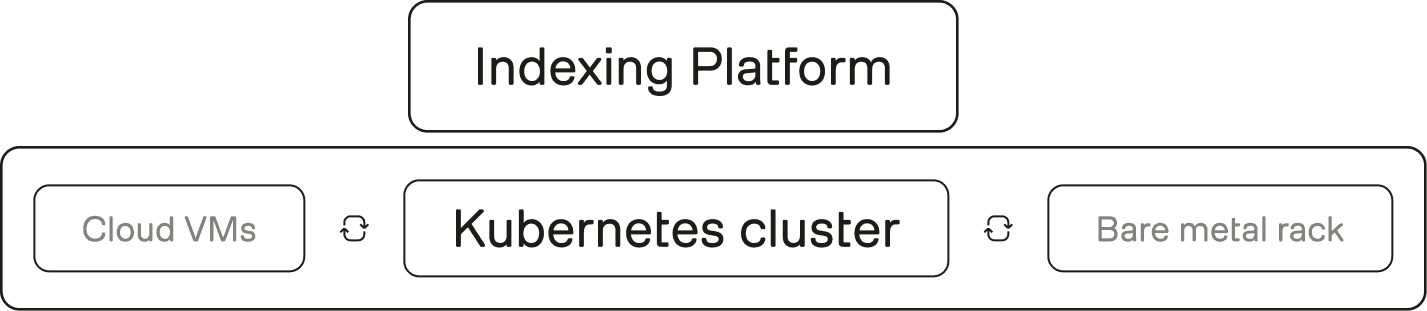

Figure 4: One conceptual platform, many substrates

We made a strong claim in Part 1: deployment shape should be a variable, not a defining feature of the system. This section is about what it takes to make that true in practice.

Deployment is not an afterthought, it is a design axis

Once customers rely on indexing outputs, deployment constraints become expensive to change. If your system is welded to one control plane or one operational model, every new environment becomes either a rewrite or a fork.

Indexing 3.0 treats “where it runs” as a first-class input to architecture, not a packaging task for later.

Flexibility without fragmentation

Supporting multiple environments does not mean maintaining multiple platforms.

The goal is one conceptual system, with environment-specific concerns pushed to the lowest layer possible. This is the same layering principle from Part 1: infrastructure should be swappable without forcing application logic to change.

Practically, that implies:

Keeping core indexing behavior consistent across environments.

Isolating differences to configuration, deployment tooling, and thin adapters.

Avoiding “special case” code paths that silently diverge over time.
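One way to picture "configuration plus thin adapters" is a small interface that the application depends on, with the environment-specific implementation chosen from config. The sketch below is illustrative only: `ArtifactStore`, both store classes, and the config keys are hypothetical names, not part of our platform.

```python
from pathlib import Path
from typing import Dict, Protocol


class ArtifactStore(Protocol):
    """What the application layer needs from storage, and nothing more."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Adapter for tests and constrained environments."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def get(self, key: str) -> bytes:
        return self._blobs[key]


class LocalDiskStore:
    """Adapter for environments that expose a mounted filesystem."""

    def __init__(self, root: str) -> None:
        self._root = Path(root)

    def put(self, key: str, data: bytes) -> None:
        path = self._root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self._root / key).read_bytes()


def make_store(config: Dict[str, str]) -> ArtifactStore:
    # The only place where the environment difference is visible.
    kind = config["store"]
    if kind == "memory":
        return InMemoryStore()
    if kind == "disk":
        return LocalDiskStore(config["root"])
    raise ValueError(f"unknown store kind: {kind!r}")


def save_embedding(store: ArtifactStore, video_id: str, embedding: bytes) -> str:
    # Core indexing behavior: identical in every environment.
    key = f"embeddings/{video_id}.bin"
    store.put(key, embedding)
    return key
```

`save_embedding` never branches on the environment. A new deployment target means adding one adapter and one config value, not a new code path through the indexing logic.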

The hidden cost of ignoring deployment reality

If you ignore deployment diversity, the platform fragments anyway. It just fragments through ad hoc forks, one-off patches, and environment-specific operational playbooks.

Designing for multiple environments upfront is cheaper than paying that fragmentation tax later. It is also the difference between a system that can evolve and a system that has to be replaced every time the business changes its deployment assumptions.

Conclusion: Infrastructure that scales people scales everything else

Indexing 3.0 did not start with a performance target. It started with a question that many ML teams eventually face:

What happens when the system works, but only a few people truly understand it, and only in one environment?

The answer, we learned, is that progress slows in subtle but compounding ways. Changes take longer. Deployments become brittle. Onboarding turns into archaeology. And architectural decisions made for convenience quietly harden into long-term constraints.

The most meaningful outcome of Indexing 3.0 is not a single technical feature; it’s a shift in what the system makes possible:

More engineers can contribute without coordination bottlenecks

The same core platform can run across cloud, on-prem, and constrained environments

Deployment shape no longer dictates architectural complexity

Cost and performance optimization become tractable because the system is simpler

The broader lesson is one we believe applies well beyond video indexing.

In ML infrastructure, scaling is rarely blocked by models or hardware alone. It is blocked by systems that are difficult to reason about, difficult to deploy outside their original assumptions, and difficult for new people to safely change.

Simplicity, in that context, is not a lack of ambition. It is a deliberate design choice that trades local cleverness for global leverage.

Indexing 3.0 is our attempt to build that leverage into the foundation, so that future work can focus less on untangling infrastructure and more on advancing what video understanding makes possible.

Part 1 showed why our previous indexing platform hit a scaling ceiling even though it “worked”: the limiting factor wasn’t raw model throughput, but the operational and organizational overhead of a microservices-heavy system with infrastructure assumptions baked into the application. As the team and product surfaces grew, coordination costs, cross-service debugging, and deployment coupling started to dominate day-to-day engineering velocity. The core reframe was to treat indexing as a coherent, portable system: moving from a microservices mesh to streamlined layers where application logic stays readable, an execution layer handles distribution, and infrastructure becomes swappable rather than defining. That layered view makes it easier to reason about the end-to-end path from video → reusable representations → downstream search/generation, without re-deriving behavior from control-plane interactions.

In Part 2, we will unpack the concrete design choices that made that reframe real: why consolidation improved extensibility instead of reducing it, what “single container” means as a packaging constraint, what you gain (and pay) when you bet on a unifying execution layer, and how we designed for multiple deployment environments without fragmenting the platform.

1 - Consolidation as an enabler, not an ideology

Figure 1: Fewer boundaries, faster reasoning

We argued in Part 1 that the real tax in Indexing 2.0 was not “lack of compute.” It was the accumulation of boundaries: every stage turned into its own service contract, deployment artifact, and failure mode to reason about. Once each step became a separate service boundary, the system became harder to debug, harder to package, and harder to evolve.

Indexing 3.0 uses consolidation as a lever to reduce that boundary surface area. This is not “monolith for the sake of it.” The point is to keep the system modular where it matters, but stop paying coordination overhead where it does not buy you real independence.

Why fewer boundaries improved, rather than limited, flexibility

Flexibility in production ML systems does not come from having the most boxes. It comes from being able to change behavior without synchronizing half the fleet.

Consolidation helped by:

Reducing the number of cross-service data contracts that must remain stable under change.

Collapsing “glue code” that exists only to translate between runtimes, tracing systems, and deployment pipelines.

Making it easier to reason about the end-to-end indexing path as one coherent flow.

Consolidation as a developer experience multiplier

We called out in Part 1 that language and runtime boundaries (Go for some plumbing, Python for ML workflows) created friction because the interface was not always clean.

Consolidation is how you retire that friction.

In practice, it improves:

Debugging: fewer places where an error can be serialized, wrapped, retried, and lose context.

Profiling: easier to trace latency and utilization across stages without stitching logs across services.

Onboarding: engineers can follow one code path and build a mental model faster.

A narrower surface area, by design

Consolidation only works if the system’s purpose stays bounded. Indexing is a transformation layer: video in, reusable representations out. When you keep that scope clear, consolidation reduces accidental complexity without turning the codebase into a catch-all.

2 - Packaging rethink: what “single container” actually means

Figure 2: Runs as a self-contained unit across environments

As argued in Part 1, deployment flexibility showed up as a first-order constraint, not a nice-to-have. Some environments look like “full platform,” others look like “a container runtime and a few knobs.” Indexing 3.0 needed to run across that spectrum.

“Single container” is the packaging shorthand for that constraint.

“Single container” is about decoupling, not compression

The goal is not to stuff every component into one oversized image. The goal is to make the indexing system runnable without depending on infrastructure-specific control plane primitives.

A practical definition is:

The system can be deployed as a self-contained unit.

It does not require Kubernetes APIs (or similar) to perform core orchestration.

It can run in minimal environments without architectural forks.

This aligns with our core framing: deployment becomes a property of the bottom layer, not a constraint that leaks into application logic.

Moving orchestration into the application

In a microservices mesh, orchestration tends to live outside the application. It is spread across routing, controllers, and platform behavior.

In the layered model from Part 1, orchestration moves inward:

The indexing application defines “what should happen.”

The execution layer runs it and scales it.

The infrastructure is the substrate.

That inversion is what makes portability possible, because the system is no longer anchored to a single operating environment.

Why this mattered more than it first appeared

Packaging is not only about where you can run. It also affects:

How easy local development is.

How consistent behavior is across environments.

How much operational surface area new deployments inherit.

When packaging gets simpler, everything downstream gets simpler too: rollout, debugging, support, and onboarding.

3 - Choosing a unifying execution layer: promise vs reality

Figure 3: One substrate for routing, scaling, and reliability

Part 1 framed Indexing 3.0 as a shift from a microservices mesh to streamlined layers, where application logic stays coherent, an execution layer handles distribution, and the infrastructure layer becomes swappable.

This section is the uncomfortable middle of that story: what it actually means to pick an execution layer that sits between “our indexing logic” and “whatever compute the customer has.” We chose Ray Serve (with Anyscale as our managed provider for Ray Core and Ray Serve) as that unifying layer. The decision delivered real leverage, but it also came with costs that are worth calling out directly.

The promise of one abstraction

The core promise of a unifying execution layer is simple: it gives you a single place to express concurrency, scaling, and fault handling, instead of rebuilding those mechanisms across a growing collection of services.

Ray Serve’s serving model makes that promise concrete:

Requests enter through an HTTP (or gRPC) proxy, get queued and routed to an available replica, then executed by replica actors that run user code.

Serve supports multiple proxies for scalability and high availability, not just a single ingress point.

The same request path applies even when services call each other via

DeploymentHandlesfor model composition, which means internal composition can share routing and batching behavior.

If you are building an indexing platform, this is attractive because indexing is not one model call. It is a multi-stage flow, and each stage needs concurrency control and failure semantics. A unifying execution layer is the difference between “we stitched this together with many ad hoc schedulers” and “we have one scheduling substrate we can standardize on.”

It also aligns with the packaging and deployment driver from Part 1. If the orchestration logic is inside an execution layer, not spread across Kubernetes controllers and microservice glue, you get closer to a system that can run across different environments without rewriting the architecture.

The reality of indexing workloads

The part that is easy to under-estimate is that an execution layer is not free. It introduces its own request path, its own control plane, and its own performance characteristics. Ray Serve’s own docs call out that the most common issues are high latency and low throughput in the request path, and they surface specific router and processing latency metrics to watch.

For video indexing, that matters because the workload shape tends to multiply overhead.

Reality #1: request routing overhead is real, and it can be meaningful

Ray Serve’s architecture routes requests through a proxy, queues them, and then selects an available replica before execution. This is a sensible default for general serving, but it means there is framework work happening even before your code runs.

If your application has lots of “small hops” between stages, that routing overhead can become a measurable part of end-to-end latency. The abstraction helps you compose systems, but it also means you need to budget for the cost of composition.

This is not hypothetical. Ray’s performance tuning guidance explicitly discusses diagnosing request-path bottlenecks and points to router throughput and queuing latency as signals of trouble. And the Ray ecosystem continues to invest in request routing as a first-class performance lever, including new support for custom routing to reduce latency in demanding serving workloads.

The practical takeaway for indexing is: the framework overhead is part of the system. You have to measure it, profile it, and design around it, especially when your workload includes many short jobs where overhead is a larger fraction of total time. That exact “short-video setup tax” dynamic was one of the breaking points described in Part 1.

Reality #2: video decoding is core, and it is often CPU-bound

It is easy to talk about video indexing as “GPU inference plus some glue.” In practice, decoding is one of the most consequential parts of the pipeline, and it can be heavily CPU-bound depending on codec, format, and whether hardware decode is available.

NVIDIA’s NVDEC documentation makes the trade-off explicit: when decoding is offloaded to NVDEC, the CPU is freed for other work. That is another way of saying the default path without that offload consumes meaningful CPU.

Even in modern PyTorch ecosystem tooling, CUDA decoding is positioned as an acceleration path because it can be faster than CPU decoding for the decode step. But there is an important caveat: CUDA decoding can fall back to CPU decoding when the codec or format is not supported by the hardware decoder.

For an indexing platform, that means CPU capacity is not optional background noise. It can be a primary bottleneck, even when the “headline stage” is a GPU model. You can also end up with the worst kind of utilization: GPUs idle because CPU decode is saturated. This is why “heterogeneous resource profiles” is not an abstract point. Decode alone can force you to treat CPU as a first-class scheduling dimension.

Reality #3: heterogeneous stages require explicit resource modeling

Indexing workloads are resource heterogenous by default:

download and IO phases

decode phases that can be CPU heavy

embedding phases that may be GPU heavy

post-processing phases that can swing between CPU and memory pressure

A unifying execution layer helps only if you model those requirements explicitly. Ray Core supports specifying logical resource requirements like CPU and GPU for tasks and actors, and schedules them only on nodes with sufficient resources. That capability is essential when you want to avoid the failure mode where one stage silently starves another, or where concurrency grows faster than the bottleneck stage can support.

This is also where the “streamlined layers” reframe in Part 1 becomes operationally real. You are not only layering code, you are layering resource semantics: the indexing platform expresses the flow, the execution layer enforces concurrency and placement, and the infrastructure layer supplies the physical substrate.

The lesson: abstractions do not eliminate work, they relocate it

A strong execution layer does not eliminate engineering work. It relocates it. Instead of writing bespoke orchestration code, you spend more time on: understanding the request path, instrumentation and tuning, and making framework behavior match workload shape.

There is also a second lesson we learned that is worth stating explicitly: if you bet on a unifying execution layer early, treat the platform team behind it as an extension of your team.

Ray Serve and Anyscale gave us leverage, but we also ran into real edge cases that only appear under production-grade video workloads. We worked closely with the Ray Serve team at Anyscale to identify bottlenecks, provide reproducible cases, and iterate on fixes. Some issues were rough at first, but the collaboration is part of what made the bet viable over time. In a fast-moving serving stack, the relationship is not an afterthought. It is part of the architecture.

4 - Supporting multiple deployment environments without disruption

Figure 4: One conceptual platform, many substrates

We made a strong claim in Part 1: deployment shape should be a variable, not a defining feature of the system. This section is about what it takes to make that true in practice.

Deployment is not an afterthought, it is a design axis

Once customers rely on indexing outputs, deployment constraints become expensive to change. If your system is welded to one control plane or one operational model, every new environment becomes either a rewrite or a fork.

Indexing 3.0 treats “where it runs” as a first-class input to architecture, not a packaging task for later.

Flexibility without fragmentation

Supporting multiple environments does not mean maintaining multiple platforms.

The goal is one conceptual system, with environment-specific concerns pushed to the lowest layer possible. This is the same layering principle from Part 1: infrastructure should be swappable without forcing application logic to change.

Practically, that implies:

Keeping core indexing behavior consistent across environments.

Isolating differences to configuration, deployment tooling, and thin adapters.

Avoiding “special case” code paths that silently diverge over time.
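One way to picture the three points above is a small sketch in which the core logic only ever sees a narrow interface, and the environment is chosen by configuration. This is an illustration under stated assumptions, not the Indexing 3.0 implementation: `BlobStore`, `InMemoryStore`, `LocalDirStore`, `EnvConfig`, `make_store`, and `index_video` are all hypothetical names, and the "indexing" step is a placeholder that records a byte count and a hash instead of decoding and embedding video.

```python
import hashlib
import json
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Optional, Protocol


class BlobStore(Protocol):
    """Hypothetical thin-adapter interface: the only surface core logic sees."""

    def read(self, key: str) -> bytes: ...
    def write(self, key: str, data: bytes) -> None: ...


class InMemoryStore:
    """Stand-in adapter for a minimal or test environment."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def read(self, key: str) -> bytes:
        return self._blobs[key]

    def write(self, key: str, data: bytes) -> None:
        self._blobs[key] = data


class LocalDirStore:
    """Stand-in adapter for an environment backed by a local directory."""

    def __init__(self, root: Path) -> None:
        self._root = root
        self._root.mkdir(parents=True, exist_ok=True)

    def read(self, key: str) -> bytes:
        return (self._root / key).read_bytes()

    def write(self, key: str, data: bytes) -> None:
        (self._root / key).write_bytes(data)


@dataclass(frozen=True)
class EnvConfig:
    """Environment differences live in configuration, not in code paths."""

    kind: str                   # "memory" or "local_dir" (assumed values)
    root: Optional[str] = None  # only used by "local_dir"


def make_store(cfg: EnvConfig) -> BlobStore:
    """The single place where the environment is inspected."""
    if cfg.kind == "memory":
        return InMemoryStore()
    if cfg.kind == "local_dir":
        if cfg.root is None:
            raise ValueError("local_dir requires a root path")
        return LocalDirStore(Path(cfg.root))
    raise ValueError(f"unknown environment kind: {cfg.kind!r}")


def index_video(store: BlobStore, video_key: str) -> str:
    """Core behavior, identical everywhere. Placeholder for decode + embed."""
    raw = store.read(video_key)
    record = {
        "source": video_key,
        "bytes": len(raw),
        "sha256": hashlib.sha256(raw).hexdigest(),
    }
    out_key = f"{video_key}.index.json"
    store.write(out_key, json.dumps(record, sort_keys=True).encode())
    return out_key


def run_in(cfg: EnvConfig) -> bytes:
    """Run the same flow in whichever environment the config names."""
    store = make_store(cfg)
    store.write("clip.mp4", b"fake video bytes")
    return store.read(index_video(store, "clip.mp4"))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        in_memory = run_in(EnvConfig(kind="memory"))
        on_disk = run_in(EnvConfig(kind="local_dir", root=tmp))
        # Same core logic, different substrate, byte-identical output.
        print(in_memory == on_disk)
```

The design choice worth noticing is that `index_video` contains no environment check at all. If a new environment needs special handling, the pressure lands on a new adapter or a new config field, which makes divergence visible instead of silent.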

The hidden cost of ignoring deployment reality

If you ignore deployment diversity, the platform fragments anyway. It just fragments through ad hoc forks, one-off patches, and environment-specific operational playbooks.

Designing for multiple environments upfront is cheaper than paying that fragmentation tax later. It is also the difference between a system that can evolve and a system that has to be replaced every time the business changes its deployment assumptions.

Conclusion: Infrastructure that scales people scales everything else

Indexing 3.0 did not start with a performance target. It started with a question that many ML teams eventually face:

What happens when the system works, but only a few people truly understand it, and only in one environment?

The answer, we learned, is that progress slows in subtle but compounding ways. Changes take longer. Deployments become brittle. Onboarding turns into archaeology. And architectural decisions made for convenience quietly harden into long-term constraints.

The most meaningful outcome of Indexing 3.0 is not a single technical feature; it’s a shift in what the system makes possible:

More engineers can contribute without coordination bottlenecks

The same core platform can run across cloud, on-prem, and constrained environments

Deployment shape no longer dictates architectural complexity

Cost and performance optimization become tractable because the system is simpler

The broader lesson is one we believe applies well beyond video indexing.

In ML infrastructure, scaling is rarely blocked by models or hardware alone. It is blocked by systems that are difficult to reason about, difficult to deploy outside their original assumptions, and difficult for new people to safely change.

Simplicity, in that context, is not a lack of ambition. It is a deliberate design choice that trades local cleverness for global leverage.

Indexing 3.0 is our attempt to build that leverage into the foundation, so that future work can focus less on untangling infrastructure and more on advancing what video understanding makes possible.
