Tutorials

How to Automatically Get a Written Summary of a YouTube Video?

Meeran Kim

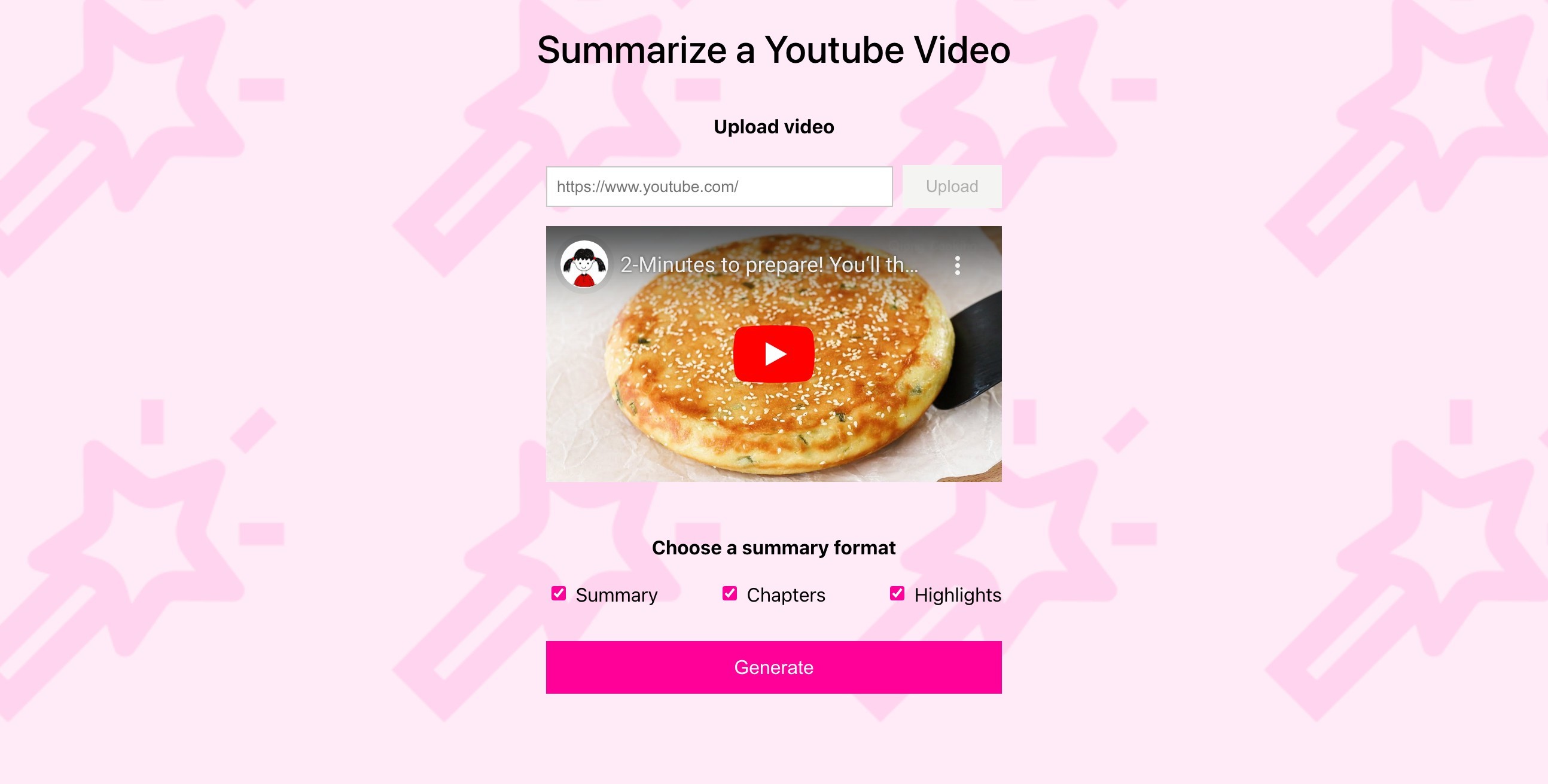

"Summarize a Youtube Video" app gets your back when you need a speedy text summary of any video in your sights.

"Summarize a Youtube Video" app gets your back when you need a speedy text summary of any video in your sights.

Feb 26, 2024

10 Min

Coming from a marketing background, I've always been deeply intrigued by the world of influencers. I realized one of the challenges of being an influencer is to keep getting inspiration to create and deliver great content. This is particularly true for YouTube influencers, who need to watch numerous videos by others to understand structure, key points, and highlights.

When I discovered Twelve Labs' Generate API, I immediately recognized its potential to address this challenge. I set out to build a simple app leveraging this API, aiming to generate summaries, chapters, and highlights of YouTube videos. The app creates comprehensive reports, providing a structured analysis of each video. This not only streamlines the content analysis process but also facilitates better organization of thoughts.

Without further ado, let's dive in!

Prerequisites

You should have your Twelve Labs API Key. If you don’t have one, visit the Twelve Labs Playground, sign up, and generate your API key.

The repository containing all the files for this app is available on GitHub.

(Good to Have) Basic knowledge of JavaScript, Node, React, and React Query. Don't worry if you're not familiar with these, though. The key takeaway from this post is how the app uses the Twelve Labs API!

How the App is Structured

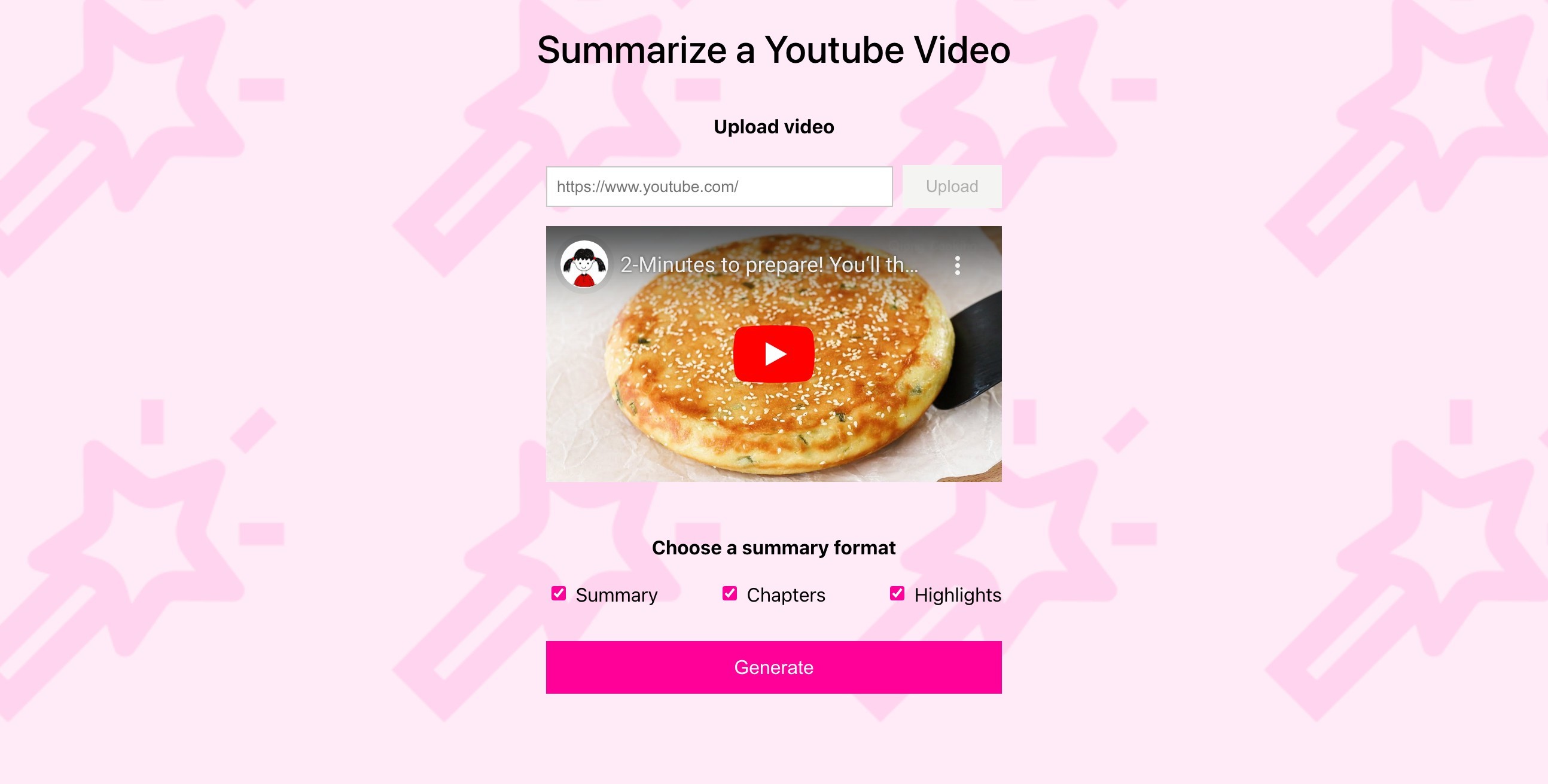

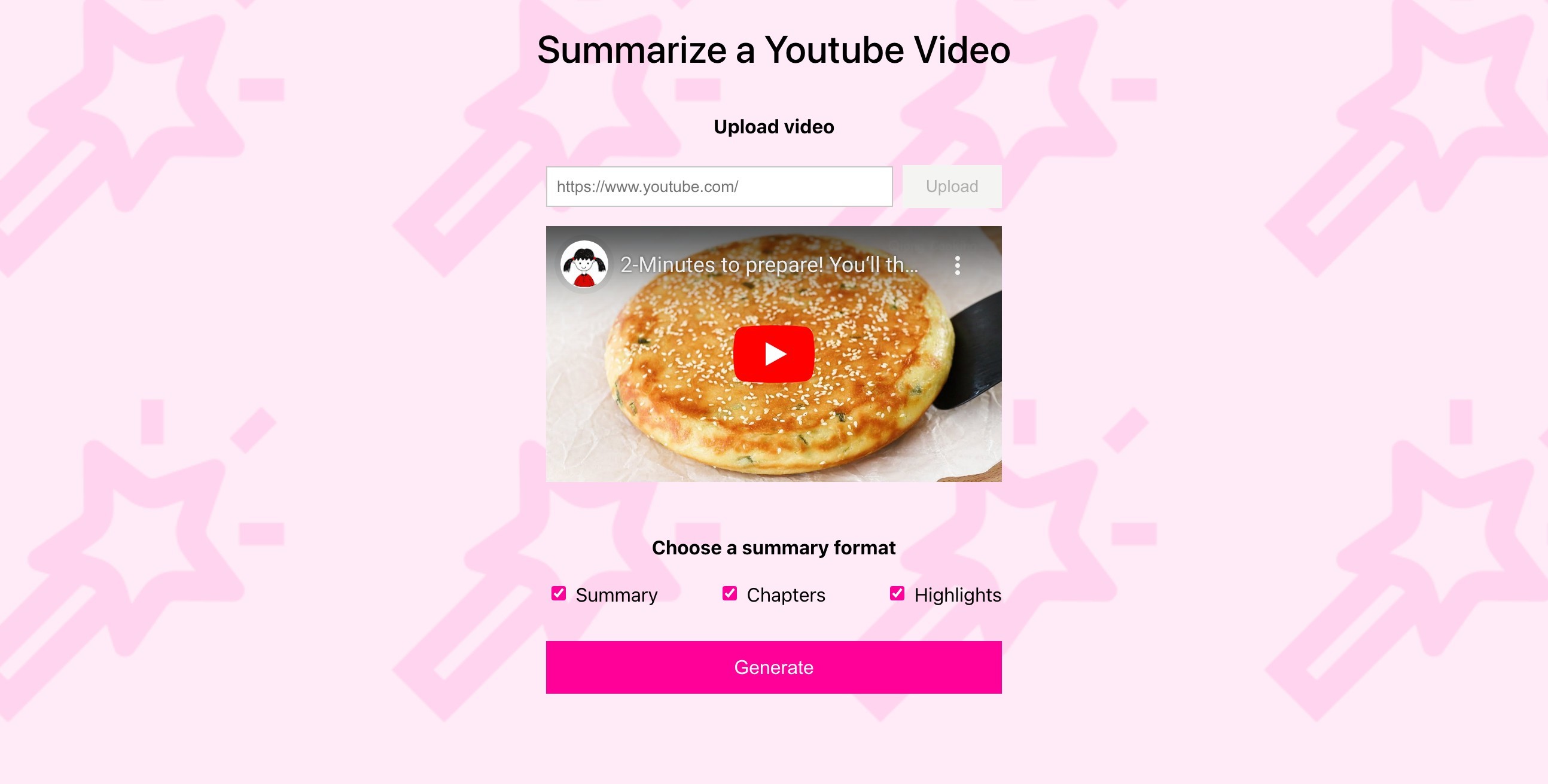

The app consists of five main components: SummarizeVideo, VideoUrlUploadForm, Video, InputForm, and Result.

SummarizeVideo: It serves as the parent container for the other components. It holds key states that are shared with its descendants.

VideoUrlUploadForm: It is a simple form that receives a YouTube video URL and indexes the video using the Twelve Labs API. It also shows the video being indexed, along with the status of the indexing task, until indexing is complete.

Video: It shows a video from a given URL. It is reused within three different components.

InputForm: It is a form consisting of three checkboxes: Summary, Chapters, and Highlights. A user can check or uncheck each field.

Result: It shows the results for the fields checked in InputForm by calling the Twelve Labs API (‘/summarize’ endpoint).

The app also has a server that holds all the code involving the API calls, and apiHooks.js, a set of custom React Query hooks for managing state, caching, and fetching data.
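The server snippets in the rest of this post reference two constants, API_BASE_URL and HEADERS, without showing how they are defined. Here is a minimal sketch of what they might look like. The base URL, the x-api-key header name, and the helper name buildApiConfig are my assumptions based on the public v1.2 API, so check the repository for the actual values.

```javascript
// Hypothetical helper: builds the two constants the server routes rely on.
// The URL and header name are assumptions, not copied from the app's repo.
function buildApiConfig(apiKey) {
  if (typeof apiKey !== "string" || apiKey.trim() === "") {
    throw new Error("A Twelve Labs API key is required");
  }
  return {
    API_BASE_URL: "https://api.twelvelabs.io/v1.2",
    HEADERS: {
      "Content-Type": "application/json",
      "x-api-key": apiKey.trim(),
    },
  };
}
```

On a server you would typically pass in a value read from an environment variable rather than hard-coding the key.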

Now, let’s take a look at how these components work along with the Twelve Labs API.

How the App Works with Twelve Labs API

1 - Showing the Most Recent Video of an Index

In generating the summary, this app only works with one video, which is the most recently uploaded one of an index. Thus, on mount, the app shows the most recent video of a given index by default. Below is the process of how it works.

Get all videos of a given index in the App.js (GET Videos)

Extract the first video’s id from the response and pass it down to SummarizeVideo.js

Get details of a video using the video id, extract the video source url and pass it down to Video.js (GET Video)

So we make two GET requests to the Twelve Labs API in this flow of getting the videos and showing the first video on the page. Let’s take each step in detail.

1.1 - Get all videos of a given index in the App.js (GET Videos)

Inside the app, the videos are returned by calling the React Query hook useGetVideos, which makes the request to the server. The server then makes a GET request to the Twelve Labs API to get all videos of an index. (💡Find details in the API document - GET Videos)

    /** Get videos */
    app.get("/indexes/:indexId/videos", async (request, response, next) => {
      const params = {
        page_limit: request.query.page_limit,
      };
      try {
        const options = {
          method: "GET",
          url: `${API_BASE_URL}/indexes/${request.params.indexId}/videos`,
          headers: { ...HEADERS },
          params, // axios serializes `params` into the query string
        };
        const apiResponse = await axios.request(options);
        response.json(apiResponse.data);
      } catch (error) {
        const status = error.response?.status || 500;
        const message = error.response?.data?.message || "Error Getting Videos";
        return next({ status, message });
      }
    });

The returned data (videos) looks like below.

    {
      "data": [
        {
          "_id": "65caf0fa48db9fa780cb3fc2",
          "created_at": "2024-02-13T04:32:54Z",
          "updated_at": "2024-02-13T04:32:58Z",
          "indexed_at": "2024-02-13T04:40:15Z",
          "metadata": {
            "duration": 130,
            "engine_ids": ["pegasus1", "marengo2.5"],
            "filename": "Adidas CEO Herbert Hainer: How I Work",
            "fps": 30,
            "height": 720,
            "size": 11149582,
            "width": 1280
          }
        },
        {…}
      ]
    }
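Every server route in this post hands failures to next({ status, message }), which only works if an error-handling middleware is registered somewhere. That middleware is not shown in this post, so the following is a sketch of how one could look; the function name errorHandler and the fallback message are my own choices.

```javascript
// Sketch of an Express-style error handler. Express recognizes error
// middleware by its four-argument signature, so `next` stays in the list
// even though this sketch never calls it.
function errorHandler(err, request, response, next) {
  const status = Number.isInteger(err?.status) ? err.status : 500;
  const message = err?.message || "Something went wrong";
  response.status(status).json({ message });
}
```

It would be registered after all the routes, for example with app.use(errorHandler).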

1.2 - Extract the first video’s id from the response and pass it down to SummarizeVideo.js

Based on the returned videos, we’re passing down the first video’s id to the SummarizeVideo.js component.

    <SummarizeVideo
      index={apiConfig.INDEX_ID}
      videoId={videos.data[0]?._id || null} // passing down the id
      refetchVideos={refetchVideos}
    />

1.3 - Get details of a video using the video id, extract the video source url and pass it down to Video.js (GET Video)

Similar to the previous step, to get details of a video we’re using the React Query hook useGetVideo, which makes the request to the server. The server then makes a GET request to the Twelve Labs API to get details of a specific video. (💡Find details in the API document - GET Video)

    /** Get a video of an index */
    app.get(
      "/indexes/:indexId/videos/:videoId",
      async (request, response, next) => {
        const indexId = request.params.indexId;
        const videoId = request.params.videoId;
        try {
          const options = {
            method: "GET",
            url: `${API_BASE_URL}/indexes/${indexId}/videos/${videoId}`,
            headers: { ...HEADERS },
          };
          const apiResponse = await axios.request(options);
          response.json(apiResponse.data);
        } catch (error) {
          const status = error.response?.status || 500;
          const message = error.response?.data?.message || "Error Getting a Video";
          return next({ status, message });
        }
      }
    );

It returns the details of a video, including what we need: the YouTube URL! You might have noticed that the YouTube URL was not available from GET Videos, which is why we’re making the GET Video request here.

    {
      "_id": "65caf0fa48db9fa780cb3fc2",
      "created_at": "2024-02-13T04:32:54Z",
      "updated_at": "2024-02-13T04:32:58Z",
      "indexed_at": "2024-02-13T04:40:15Z",
      "metadata": {
        "duration": 130,
        "engine_ids": ["pegasus1", "marengo2.5"],
        "filename": "Adidas CEO Herbert Hainer: How I Work",
        "fps": 30,
        "height": 720,
        "size": 11149582,
        "video_title": "Adidas CEO Herbert Hainer: How I Work",
        "width": 1280
      },
      "hls": {
        "video_url": "...",
        "thumbnail_urls": ["..."],
        "status": "COMPLETE",
        "updated_at": "2024-02-13T04:33:29.993Z"
      },
      "source": {
        "type": "youtube",
        "name": "The Wall Street Journal",
        "url": "https://www.youtube.com/watch?v=sHD0YxASbGQ" // here!
      }
    }

Based on the returned data (video), we’re passing down its url to the Video component where the React Player is rendered using the url.

SummarizeVideo.js (line 101 - 105)

    {video && (
      <Video
        url={video.source?.url} // passing down the url
        width={"381px"}
        height={"214px"}
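To recap this section, the two GET requests can be chained in a single function. This is only a sketch of the data flow, not how the app is organized (the app splits it across React Query hooks). The names firstVideoId, sourceUrl, getMostRecentVideoUrl, and the injected getJson function are hypothetical.

```javascript
// Pull the first video's id out of a GET Videos response.
function firstVideoId(listResponse) {
  return listResponse?.data?.[0]?._id ?? null;
}

// Pull the YouTube URL out of a GET Video response.
function sourceUrl(videoResponse) {
  return videoResponse?.source?.url ?? null;
}

// `getJson` is any function that takes a path and resolves to parsed JSON,
// for example a thin wrapper around axios pointed at the app's server.
async function getMostRecentVideoUrl(getJson, indexId) {
  const videoId = firstVideoId(await getJson(`/indexes/${indexId}/videos`));
  if (!videoId) return null; // empty index: nothing to show yet
  return sourceUrl(await getJson(`/indexes/${indexId}/videos/${videoId}`));
}
```

Injecting getJson keeps the sketch independent of any particular HTTP client and makes the empty-index case easy to exercise.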

2 - Uploading/Indexing a Video by YouTube Url

In this app, a user can upload and index a video simply by submitting a YouTube URL—thanks to the API version 1.2! Once you submit a video indexing request (we call it a ‘task’), then we can receive the progress of the indexing task. I also made a video visible during the indexing process so that a user can confirm and watch the video while the indexing is in progress.

Create a video indexing task using a YouTube url in VideoUrlUploadForm.js (POST Task)

Fetch video information and show the video in VideoUrlUploadForm.js (*I used the ytdl-core library)

Receive and show the progress of the indexing task in Task.js (GET Task)

Let’s take a look at each step one by one.

2.1 - Create a video indexing task using a YouTube url in VideoUrlUploadForm.js

When a user submits the VideoUrlUploadForm with a YouTube URL, taskVideo is set. I’ve added a useEffect so that indexYouTubeVideo runs whenever there is a taskVideo.

indexYouTubeVideo makes a POST request to the server, which in turn makes a POST request to the Twelve Labs API’s ‘/tasks/external-provider’ endpoint. (💡Find details in the API document - POST Task)

    /** Index a Youtube video for analysis, returning a task ID */
    app.post("/index", async (request, response, next) => {
      const options = {
        method: "POST",
        url: `${API_BASE_URL}/tasks/external-provider`,
        headers: { ...HEADERS, accept: "application/json" },
        data: request.body.body,
      };
      try {
        const apiResponse = await axios.request(options);
        response.json(apiResponse.data);
      } catch (error) {
        const status = error.response?.status || 500;
        const message =
          error.response?.data?.message || "Error indexing a YouTube Video";
        return next({ status, message });
      }
    });

It returns an id of the video task that has just been created.

{ "_id": "65a9df3f627beda40b8dfa56" }
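The route above forwards request.body.body as-is, so the client decides what goes into the task request. Here is a sketch of how that body could be assembled. The field names index_id and url are my assumption based on the v1.2 API reference, and buildIndexTaskBody is a hypothetical helper, so verify both against the API document before relying on them.

```javascript
// Hypothetical helper: builds the body for the index request.
// `index_id` and `url` are assumed field names, not taken from the app's code.
function buildIndexTaskBody(indexId, youtubeUrl) {
  const url = typeof youtubeUrl === "string" ? youtubeUrl.trim() : "";
  if (!indexId) throw new Error("An index id is required");
  if (!url) throw new Error("A YouTube URL is required");
  return { index_id: indexId, url };
}
```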

2.2 - Fetch video information and show the video in VideoUrlUploadForm.js

While the video task is being indexed, we’re showing the task video to the user.

VideoUrlUploadForm.js (line 120 - 126)

    {taskVideo && (
      <div className="videoUrlUploadForm__taskVideoWrapper">
        <Video
          url={taskVideo.video_url}
          width={"381px"}
          height={"214px"}

When a user submits the form with a YouTube URL, we retrieve the video’s information through getVideoInfo and fetchVideoInfo (React Query hook), then set taskVideo with that information.

VideoUrlUploadForm.js (line 74 - 88)

    /** Get information of a video and set it as task */
    async function handleSubmit(evt) {
      evt.preventDefault();
      try {
        if (!videoUrl?.trim()) {
          throw new Error("Please enter a valid video URL");
        }
        const videoInfo = await getVideoInfo(videoUrl); // get video info
        setTaskVideo(videoInfo); // set taskVideo with the video info we got
        inputRef.current.value = "";
        resetPrompts();
      } catch (error) {
        setError(error.message);
      }
    }

To get the video information, we’re using two functions from the ytdl-core library: getURLVideoID and getBasicInfo.

    /** Get video information from a YouTube URL using ytdl */
    app.get("/video-info", async (request, response, next) => {
      try {
        let url = request.query.url;
        const videoId = ytdl.getURLVideoID(url);
        const videoInfo = await ytdl.getBasicInfo(videoId);
        response.json(videoInfo.videoDetails);
      } catch (error) {
        const status = error.response?.status || 500;
        const message =
          error.response?.data?.message || "Error getting info of a video";
        return next({ status, message });
      }
    });
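If you are curious what extracting a video id from a URL involves, here is a simplified stand-in. It is not how ytdl-core implements getURLVideoID; it only handles the two most common URL shapes (youtube.com/watch?v=... and youtu.be/...), and the function name extractYouTubeId is my own.

```javascript
// Simplified illustration only; ytdl-core supports more URL formats.
function extractYouTubeId(videoUrl) {
  const parsed = new URL(videoUrl); // throws a TypeError on malformed input
  const host = parsed.hostname.replace(/^(www|m)\./, "");
  let id = null;
  if (host === "youtu.be") {
    id = parsed.pathname.slice(1).split("/")[0];
  } else if (host === "youtube.com" && parsed.pathname === "/watch") {
    id = parsed.searchParams.get("v");
  }
  // YouTube video ids are 11 characters: letters, digits, "_" or "-".
  if (!id || !/^[\w-]{11}$/.test(id)) {
    throw new Error(`No YouTube video id found in: ${videoUrl}`);
  }
  return id;
}
```

In the app itself, sticking with the library is the safer choice since it also fetches the video details.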

2.3 - Receive and show the progress of the indexing task in Task.js

Remember that the POST request to ‘/index’ returns a task id? We will use the task id to get details of a task and keep updating the task status to the user.

So when there is a taskId, the Task component will be rendered.

VideoUrlUploadForm.js (line 130 - 137)

    {taskId && (
      <Task
        taskId={taskId}
        refetchVideos={refetchVideos}
        index={index}
        setTaskVideo={setTaskVideo}

Inside the Task component, we’re receiving the data by using the useGetTask React Query hook which makes a GET request to Twelve Labs API. (💡Find details in the API document - GET Task)

    /** Check the status of a specific indexing task */
    app.get("/tasks/:taskId", async (request, response, next) => {
      const taskId = request.params.taskId;
      try {
        const options = {
          method: "GET",
          url: `${API_BASE_URL}/tasks/${taskId}`,
          headers: { ...HEADERS },
        };
        const apiResponse = await axios.request(options);
        response.json(apiResponse.data);
      } catch (error) {
        const status = error.response?.status || 500;
        const message = error.response?.data?.message || "Error getting a task";
        return next({ status, message });
      }
    });

It returns the task details as below.

    {
      "_id": "65a9fc79627beda40b8dfa7b",
      "index_id": "653c0592480f870fb3bb01be",
      "video_id": "65a9fc7e4981af6e637c8e59",
      "status": "indexing",
      "metadata": {...},
      "created_at": "2024-01-19T04:37:13.724Z",
      "updated_at": "2024-01-19T04:41:10.606Z",
      "estimated_time": "2024-01-19T04:41:36.601Z",
      "type": "index_task_info",
      "process": {
        "upload_percentage": 0,
        "remain_seconds": 0
      },
      "hls": {... }
    }

Until the status is “ready” or “failed”, the useGetTask hook refetches the data every 5000 ms so that a user can follow the progress of the task in near real time. Check how I leveraged the refetchInterval property of useQuery below.

    export function useGetTask(taskId) {
      return useQuery({
        queryKey: [keys.TASK, taskId],
        queryFn: () =>
          apiConfig.SERVER.get(`${apiConfig.TASKS_URL}/${taskId}`).then(
            (res) => res.data
          ),
        refetchInterval: (data) => {
          return data?.status === "ready" || data?.status === "failed"
            ? false
            : 5000;
        },
        refetchIntervalInBackground: true,
      });
    }
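The same stop condition can be written without React Query, which may help if you want to poll from a script. This is a sketch under my own naming (nextPollDelay, pollTask, and the injected getTask function are hypothetical); the only things taken from the hook above are the two terminal statuses and the 5000 ms interval.

```javascript
// Statuses after which polling stops, mirroring the hook above.
const TERMINAL_STATUSES = new Set(["ready", "failed"]);

// Returns false when polling should stop, otherwise the delay in ms.
function nextPollDelay(task, intervalMs = 5000) {
  return TERMINAL_STATUSES.has(task?.status) ? false : intervalMs;
}

// `getTask` is any async function that resolves to the task object.
async function pollTask(getTask, { intervalMs = 5000, onUpdate = () => {} } = {}) {
  for (;;) {
    const task = await getTask();
    onUpdate(task); // e.g. update a progress indicator
    const delay = nextPollDelay(task, intervalMs);
    if (delay === false) return task;
    await new Promise((resolve) => setTimeout(resolve, delay));
  }
}
```

Keeping the decision in a small pure function makes the "failed" case hard to forget: without it, a failed task would be polled forever.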

3 - Receiving User Inputs and Generate/Show Results

This is really the core and fun part - generating summary, chapters, and highlights! We’re receiving the user input then using the Twelve Labs API’s summarize endpoint to generate the written summary, chapters, and highlights of a video.

Receive a user input from the checkbox form in InputForm.js

Based on the user input on each field prompt, make ‘/summarize’ API calls in Result.js (POST Summaries, chapters, or highlights)

Show the results in Result.js

Let’s dive into each step.

3.1 - Receive a user input from the checkbox form in InputForm.js

InputForm is a simple form consisting of three checkbox fields: Summary, Chapters, and Highlights. Whenever a user checks or unchecks a field, the corresponding field prompt is set with the type property (or reset to null). This is because the ‘type’ property is required to make the request to the ‘/summarize’ endpoint in the next step.

    if (summaryRef.current?.checked) {
      setField1Prompt({ type: field1 });
    } else {
      setField1Prompt(null);
    }
    if (chaptersRef.current?.checked) {
      setField2Prompt({ type: field2 });
    } else {
      setField2Prompt(null);
    }
    if (highlightsRef.current?.checked) {
      setField3Prompt({ type: field3 });
    } else {
      setField3Prompt(null);
    }
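The three if/else branches follow one pattern, so the mapping from checkboxes to prompts can also be expressed as a small pure function. This is a sketch, not the app's code: buildFieldPrompts is a hypothetical name, and the type strings "summary", "chapter", and "highlight" are my assumption about what field1, field2, and field3 hold.

```javascript
// Assumed values for field1..field3; the app defines these elsewhere.
const DEFAULT_TYPES = {
  summary: "summary",
  chapters: "chapter",
  highlights: "highlight",
};

// Returns [field1Prompt, field2Prompt, field3Prompt]; unchecked fields are null.
function buildFieldPrompts(checked, types = DEFAULT_TYPES) {
  return Object.keys(types).map((field) =>
    checked[field] ? { type: types[field] } : null
  );
}
```

For example, with only Summary and Highlights checked, the middle entry is null and no chapters request is made.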

3.2 - Based on the user input on each field prompt, make ‘/summarize’ API calls in Result.js (POST Summaries, chapters, or highlights)

When the form has been submitted and a valid video id and field prompt (type) are available, the useGenerate hooks are called from Result.js. The hooks then make the request to the server, where the request to the Twelve Labs API is made. (💡Find details in the API document - POST Summaries, chapters, or highlights)

    /** Summarize a video */
    app.post("/videos/:videoId/summarize", async (request, response, next) => {
      const videoId = request.params.videoId;
      let type = request.body.data;
      try {
        const options = {
          method: "POST",
          url: `${API_BASE_URL}/summarize`,
          headers: { ...HEADERS, accept: "application/json" },
          data: { ...type, video_id: videoId },
        };
        const apiResponse = await axios.request(options);
        response.json(apiResponse.data);
      } catch (error) {
        const status = error.response?.status || 500;
        const message =
          error.response?.data?.message || "Error Summarizing a Video";
        return next({ status, message });
      }
    });

It returns the data object containing “id” (response id) and the name of the type (either “summary”, “chapters”, or “highlights”). Note that “chapters” and “highlights” include the start and end time of each chapter or highlight of a video so that we can show the results utilizing the video player. Below is the example of a response of “chapters”.

    {
      "id": "5a3f3e65-206a-4877-a0c5-871050edf3dc",
      "chapters": [
        {
          "chapter_number": 0,
          "start": 0,
          "end": 30,
          "chapter_title": "The Morning Routine",
          "chapter_summary": "The video starts with the man discussing his morning routine, including waking up between 6:30 and 7 am and having a cup of coffee…."
        },
        {...},
        …
      ]
    }

3.3 - Show the results in Result.js

Based on the responses we get from the above step, the results are shown in the Result component. As mentioned, for the “chapters” and “highlights”, each result is rendered with a video portion and the summary.
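To give a feel for how the start and end times can drive the display, here is a sketch that turns a “chapters” response into rows ready for rendering. It is not the code in Result.js; the function names and the row shape (label, title, summary, seekTo) are hypothetical. Only the chapter field names come from the response shown earlier.

```javascript
// Formats a number of seconds as m:ss, e.g. for a chapter label.
function formatTimestamp(totalSeconds) {
  const seconds = Math.max(0, Math.floor(totalSeconds));
  const minutes = Math.floor(seconds / 60);
  return `${minutes}:${String(seconds % 60).padStart(2, "0")}`;
}

// Maps a "chapters" response to display rows; tolerates a missing list.
function chaptersToRows(response) {
  return (response?.chapters ?? []).map((chapter) => ({
    label: `${formatTimestamp(chapter.start)} - ${formatTimestamp(chapter.end)}`,
    title: chapter.chapter_title,
    summary: chapter.chapter_summary,
    seekTo: chapter.start, // seconds; where the player should start the clip
  }));
}
```

Each row carries both the human-readable label and the raw start time, so the same data can feed the text and the video player.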

Conclusion

With Twelve Labs’ new ‘/summarize’ endpoint, you can easily generate a written summary, chapters, and highlights of a video. With the API now supporting video indexing directly from a YouTube URL, it is much easier to apply this powerful technology to YouTube videos. I hope my use case inspires some of you and encourages you to try it out yourself. Happy coding!

What's Next?

Check out the Quickstart tutorial, and begin building amazing apps with Twelve Labs.

Try out our Playground. Default video credits are 10 hours.

Follow us on X (Twitter) and LinkedIn.

Join our Discord community to connect with fellow users and developers.

Coming from a marketing background, I've always been deeply intrigued by the world of influencers. I realized one of the challenges of being an influencer is to keep getting inspiration to create and deliver great content. This is particularly true for YouTube influencers, who need to watch numerous videos by others to understand structure, key points, and highlights.

When I discovered Twelve Labs' Generate API, I immediately recognized its potential to address this challenge. I set out to build a simple app leveraging this API, aiming to generate summaries, chapters, and highlights of YouTube videos. The app creates comprehensive reports, providing a structured analysis of each video. This not only streamlines the content analysis process but also facilitates better organization of thoughts.

Without further due, let's dive in!

Prerequisites

You should have your Twelve Labs API Key. If you don’t have one, visit the Twelve Labs Playground, sign up, and generate your API key.

The repository containing all the files for this app is available on Github.

(Good to Have) Basic knowledge in JavaScript, Node, React, and React Query. Don't worry if you're not familiar with these though. The key takeaway from this post will be to see how this app utilizes the Twelve Labs API!

How the App is Structured

The app consists of five main components; SummarizeVideo, VideoUrlUploadForm, Video, InputForm, Result.

SummarizeVideo: It serves as the parent container for the other components. It holds key states that are shared with its descendants.

VideoUrlUploadForm: It is a simple form that receives the YouTube video url and index the video using TwelveLabs API. It also shows the video that is in the process of indexing as well as the status of an indexing task until the indexing is complete.

Video: It shows a video by a given url. It is reused within three different components.

InputForm: It is a form consisting of three check boxes; Summary, Chapters, and Highlights. A user can check or uncheck each field.

Result: It shows the result of the fields checked from the inputForm by calling the TwelveLabs API (‘/summarize’ endpoint)

The app also has a server that stores all the code involving the API calls and the apiHooks.js which is a set of custom React Query hooks for managing state, cache, and fetching data.

Now, let’s take a look at how these components work along with the Twelve Labs API.

How the App Works with Twelve Labs API

1 - Showing the Most Recent Video of an Index

In generating the summary, this app only works with one video, which is the most recently uploaded one of an index. Thus, on mount, the app shows the most recent video of a given index by default. Below is the process of how it works.

Get all videos of a given index in the App.js (GET Videos)

Extract the first video’s id from the response and pass it down to SummarizeVideo.js

Get details of a video using the video id, extract the video source url and pass it down to Video.js (GET Video)

So we make two GET requests to the Twelve Labs API in this flow of getting the videos and showing the first video on the page. Let’s take each step in detail.

1.1 - Get all videos of a given index in the App.js (GET Videos)

Inside the app, the videos are returned by calling the react query hook useGetVideos which makes the request to the server. The server then makes a GET request to the Twelve Labs API to get all videos of an index. (💡Find details in the API document - GET Videos)

/** Get videos */ app.get("/indexes/:indexId/videos", async (request, response, next) => { const params = { page_limit: request.query.page_limit, }; try { const options = { method: "GET", url: `${API_BASE_URL}/indexes/${request.params.indexId}/videos`, headers: { ...HEADERS }, data: { params }, }; const apiResponse = await axios.request(options); response.json(apiResponse.data); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error Getting Videos"; return next({ status, message }); } });

The returned data (videos) looks like below.

{ "data": [ { "_id": "65caf0fa48db9fa780cb3fc2", "created_at": "2024-02-13T04:32:54Z", "updated_at": "2024-02-13T04:32:58Z", "indexed_at": "2024-02-13T04:40:15Z", "metadata": { "duration": 130, "engine_ids": [ "pegasus1", "marengo2.5" ], "filename": "Adidas CEO Herbert Hainer: How I Work", "fps": 30, "height": 720, "size": 11149582, "width": 1280 }, {…}, }

1.2 - Extract the first video’s id from the response and pass it down to SummarizeVideo.js

Based on the returned videos, we’re passing down the first video’s id to the SummizeVideo.js component.

<SummarizeVideo index={apiConfig.INDEX_ID} videoId={videos.data[0]?._id || null} //passing down the id refetchVideos={refetchVideos} /

1.3 - Get details of a video using the video id, extract the video source url and pass it down to Video.js (GET Video)

Similar to what we’ve seen from the previous step in getting videos, to get details of a video, we’re using the react query hook useGetVideo which makes the request to the server. The server then makes a GET request to the Twelve Labs API to get details of a specific video. (💡Find details in the API document - GET Video)

/** Get a video of an index */ app.get( "/indexes/:indexId/videos/:videoId", async (request, response, next) => { const indexId = request.params.indexId; const videoId = request.params.videoId; try { const options = { method: "GET", url: `${API_BASE_URL}/indexes/${indexId}/videos/${videoId}`, headers: { ...HEADERS }, }; const apiResponse = await axios.request(options); response.json(apiResponse.data); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error Getting a Video"; return next({ status, message }); } } );

It returns the details of a video including what we need, the Youtube Url! You might have noticed that Youtube Url was not available from GET Videos. As a reminder, this is why we’re making the GET Video request here.

{ "_id": "65caf0fa48db9fa780cb3fc2", "created_at": "2024-02-13T04:32:54Z", "updated_at": "2024-02-13T04:32:58Z", "indexed_at": "2024-02-13T04:40:15Z", "metadata": { "duration": 130, "engine_ids": [ "pegasus1", "marengo2.5" ], "filename": "Adidas CEO Herbert Hainer: How I Work", "fps": 30, "height": 720, "size": 11149582, "video_title": "Adidas CEO Herbert Hainer: How I Work", "width": 1280 }, "hls": { "video_url": "...", "thumbnail_urls": [ "..." ], "status": "COMPLETE", "updated_at": "2024-02-13T04:33:29.993Z" }, "source": { "type": "youtube", "name": "The Wall Street Journal", "url": "https://www.youtube.com/watch?v=sHD0YxASbGQ" // here! } }

Based on the returned data (video), we’re passing down its url to the Video component where the React Player is rendered using the url.

SummarizeVideo.js (line 101 - 105)

{video && ( <Video url={video.source?.url} // passing down the url width={"381px"} height={"214px"}

2 - Uploading/Indexing a Video by YouTube Url

In this app, a user can upload and index a video simply by submitting a YouTube URL—thanks to the API version 1.2! Once you submit a video indexing request (we call it a ‘task’), then we can receive the progress of the indexing task. I also made a video visible during the indexing process so that a user can confirm and watch the video while the indexing is in progress.

Create a video indexing task using a YouTube url in VideoUrlUploadForm.js (POST Task)

Fetch video information and shows the video in VideoUrlUploadForm.js (*I used the ytdl-core library)

Receive and show the progress of the indexing task in Task.js (GET Task)

Let’s take a look at each step one by one.

2.1 - Create a video indexing task using a YouTube url in VideoUrlUploadForm.js

When a user submits the videoUrlUploadForm with a YouTube url, the taskVideo is set. I’ve added an useEffect so that if there is a taskVideo, indexYouTubeVideo to be executed.

indexYouTubeVideo makes a post request to the server which makes a post request to Twelve Labs API’s ‘/tasks/external-provider’ endpoint. (💡Find details in the API document - POST Task)

/** Index a Youtube video for analysis, returning a task ID */ app.post("/index", async (request, response, next) => { const options = { method: "POST", url: `${API_BASE_URL}/tasks/external-provider`, headers: { ...HEADERS, accept: "application/json" }, data: request.body.body, }; try { const apiResponse = await axios.request(options); response.json(apiResponse.data); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error indexing a YouTube Video"; return next({ status, message }); } });

It returns an id of the video task that has just been created.

{ "_id": "65a9df3f627beda40b8dfa56" }

2.2 - Fetch video information and shows the video in VideoUrlUploadForm.js

While the video task is being indexed, we’re showing the task video to the user.

VideoUrlUploadForm.js (line 120 - 126)

{taskVideo && ( <div className="videoUrlUploadForm__taskVideoWrapper"> <Video url={taskVideo.video_url} width={"381px"} height={"214px"}

When a user submits the form with a Youtube url, we retrieve the information of the video through getVideoInfo and fetchVideoInfo (React Query hook), then set TaskVideo with that information.

VideoUrlUploadForm.js (line 74 - 88)

/** Get information of a video and set it as task */ async function handleSubmit(evt) { evt.preventDefault(); try { if (!videoUrl?.trim()) { throw new Error("Please enter a valid video URL"); } const videoInfo = await getVideoInfo(videoUrl); //get video info setTaskVideo(videoInfo); //set TaskVideo with the video info we got inputRef.current.value = ""; resetPrompts(); } catch (error) { setError(error.message); } }

We’re using the ytdl-core library -getURLVideoID and getBasicInfo - in getting the video information.

/** Get video information from a YouTube URL using ytdl */ app.get("/video-info", async (request, response, next) => { try { let url = request.query.url; const videoId = ytdl.getURLVideoID(url); const videoInfo = await ytdl.getBasicInfo(videoId); response.json(videoInfo.videoDetails); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error getting info of a video"; return next({ status, message }); } });

2.3 - Receive and show the progress of the indexing task in Task.js

Remember that the POST request to ‘/index’ returns a task id? We will use the task id to get details of a task and keep updating the task status to the user.

So when there is a taskId, the Task component will be rendered.

VideoUrlUploadForm.js (line 130 - 137)

{taskId && ( <Task taskId={taskId} refetchVideos={refetchVideos} index={index} setTaskVideo={setTaskVideo}

Inside the Task component, we’re receiving the data by using the useGetTask React Query hook which makes a GET request to Twelve Labs API. (💡Find details in the API document - GET Task)

/** Check the status of a specific indexing task */ app.get("/tasks/:taskId", async (request, response, next) => { const taskId = request.params.taskId; try { const options = { method: "GET", url: `${API_BASE_URL}/tasks/${taskId}`, headers: { ...HEADERS }, }; const apiResponse = await axios.request(options); response.json(apiResponse.data); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error getting a task"; return next({ status, message }); } });

It returns the task details as below.

{ "_id": "65a9fc79627beda40b8dfa7b", "index_id": "653c0592480f870fb3bb01be", "video_id": "65a9fc7e4981af6e637c8e59", "status": "indexing", "metadata": {...}, "created_at": "2024-01-19T04:37:13.724Z", "updated_at": "2024-01-19T04:41:10.606Z", "estimated_time": "2024-01-19T04:41:36.601Z", "type": "index_task_info", "process": { "upload_percentage": 0, "remain_seconds": 0 }, "hls": {... } }

Unless the status is “ready”, the useGetTask hook will refetch the data every 5000 ms so that a user can see the progress of the task in real-time. Check how I leveraged the refetchInterval property of useQuery below.

export function useGetTask(taskId) { return useQuery({ queryKey: [keys.TASK, taskId], queryFn: () => apiConfig.SERVER.get(`${apiConfig.TASKS_URL}/${taskId}`).then( (res) => res.data ), refetchInterval: (data) => { return data?.status === "ready" || data?.status === "failed" ? false : 5000; }, refetchIntervalInBackground: true, }); }

3 - Receiving User Inputs and Generate/Show Results

This is really the core and fun part - generating summary, chapters, and highlights! We’re receiving the user input then using the Twelve Labs API’s summarize endpoint to generate the written summary, chapters, and highlights of a video.

Receive a user input from the checkbox form in InputForm.js

Based on the user input on each field prompt, make ‘/summary’ API calls in Result.js (POST Summaries, chapters, or highlights)

Show the results in Result.js

Let’s dive into each step.

3.1 - Receive a user input from the checkbox form in InputForm.js

InputForm is a simple form consisting of the three checkbox fields; Summary, Chapters, and Highlights. Whenever a user checks or unchecks each field, each field prompt is set with the type property. This is because the ‘type’ property is required to make the request to the ‘/summary’ in the next step.

if (summaryRef.current?.checked) { setField1Prompt({ type: field1 }); } else { setField1Prompt(null); } if (chaptersRef.current?.checked) { setField2Prompt({ type: field2 }); } else { setField2Prompt(null); } if (highlightsRef.current?.checked) { setField3Prompt({ type: field3 }); } else { setField3Prompt(null); }

3.2 - Based on the user input on each field prompt, make ‘/summary’ API calls in Result.js (POST Summaries, chapters, or highlights)

When the form has been submitted and valid video id and field prompt (type) are available, useGenerate hooks will be called from the Result.js. The hooks will then make the request to the server where the API request to Twelve Labs API is made. (💡Find details in the API document - POST Summaries, chapters, or highlights)

/** Summarize a video */ app.post("/videos/:videoId/summarize", async (request, response, next) => { const videoId = request.params.videoId; let type = request.body.data; try { const options = { method: "POST", url: `${API_BASE_URL}/summarize`, headers: { ...HEADERS, accept: "application/json" }, data: { ...type, video_id: videoId }, }; const apiResponse = await axios.request(options); response.json(apiResponse.data); } catch (error) { const status = error.response?.status || 500; const message = error.response?.data?.message || "Error Summarizing a Video"; return next({ status, message }); } });

It returns the data object containing “id” (response id) and the name of the type (either “summary”, “chapters”, or “highlights”). Note that “chapters” and “highlights” include the start and end time of each chapter or highlight of a video so that we can show the results utilizing the video player. Below is the example of a response of “chapters”.

    {
      "id": "5a3f3e65-206a-4877-a0c5-871050edf3dc",
      "chapters": [
        {
          "chapter_number": 0,
          "start": 0,
          "end": 30,
          "chapter_title": "The Morning Routine",
          "chapter_summary": "The video starts with the man discussing his morning routine, including waking up between 6:30 and 7 am and having a cup of coffee…."
        },
        {...},
        …
      ]
    }
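As a rough illustration of how such a response can be prepared for display, the sketch below turns each chapter's start and end (assumed here to be seconds) into a readable time range. `formatTime` and `describeChapters` are hypothetical names, and the sample object is a shortened copy of the response above.

```javascript
// Converts a number of seconds into an m:ss string, e.g. 95 -> "1:35".
function formatTime(totalSeconds) {
  const minutes = Math.floor(totalSeconds / 60);
  const seconds = Math.floor(totalSeconds % 60);
  return `${minutes}:${String(seconds).padStart(2, "0")}`;
}

// Maps a "chapters" response to display-ready entries.
function describeChapters(response) {
  return (response.chapters || []).map((chapter) => ({
    label: `${formatTime(chapter.start)} - ${formatTime(chapter.end)}`,
    title: chapter.chapter_title,
    summary: chapter.chapter_summary,
  }));
}

// Shortened sample based on the example response; the summary is elided.
const sampleResponse = {
  id: "5a3f3e65-206a-4877-a0c5-871050edf3dc",
  chapters: [
    {
      chapter_number: 0,
      start: 0,
      end: 30,
      chapter_title: "The Morning Routine",
      chapter_summary: "...",
    },
  ],
};

const entries = describeChapters(sampleResponse);
console.log(entries[0].label, entries[0].title);
```

The same shape would work for “highlights”, provided the field names are adjusted to match that response.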

3.3 - Show the results in Result.js

The Result component renders the responses from the previous step. As mentioned, each “chapters” and “highlights” result is rendered with the matching portion of the video alongside its summary text.
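One way to show only the relevant portion of a YouTube video is to use the `start` and `end` parameters of the YouTube embedded player. The sketch below is not the code from Result.js; `buildSegmentEmbedUrl` is a hypothetical helper that assumes a standard watch URL with a `v` query parameter.

```javascript
// Hypothetical helper: builds an embed URL that plays only [start, end] seconds.
function buildSegmentEmbedUrl(watchUrl, start, end) {
  const videoId = new URL(watchUrl).searchParams.get("v");
  if (!videoId) throw new Error("Not a YouTube watch URL");
  const embed = new URL(`https://www.youtube.com/embed/${videoId}`);
  // The embedded player expects whole seconds for both parameters.
  embed.searchParams.set("start", String(Math.floor(start)));
  embed.searchParams.set("end", String(Math.ceil(end)));
  return embed.toString();
}

const segmentUrl = buildSegmentEmbedUrl(
  "https://www.youtube.com/watch?v=sHD0YxASbGQ",
  0,
  30
);
console.log(segmentUrl);
```

Rounding the start down and the end up keeps the whole chapter visible when the API returns fractional seconds.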

Conclusion

With Twelve Labs’ new ‘/summarize’ endpoint, you can easily generate a written summary, chapters, and highlights of a video. Now that the API supports indexing a video directly from a YouTube URL, it is much easier to apply this technology to YouTube videos. I hope my use case inspires some of you and encourages you to try it out yourself. Happy coding!

What's Next?

Check out the Quickstart tutorial, and begin building amazing apps with Twelve Labs.

Try out our Playground. Default video credits are 10 hours.

Follow us on X (Twitter) and LinkedIn.

Join our Discord community to connect with fellow users and developers.

© 2021 - 2026 TwelveLabs, Inc. All Rights Reserved
