
From Embeddings to Insights: Hands-On Cross-Modal Search with TwelveLabs Marengo and S3 Vectors

Jin-Tan Ruan, James Le

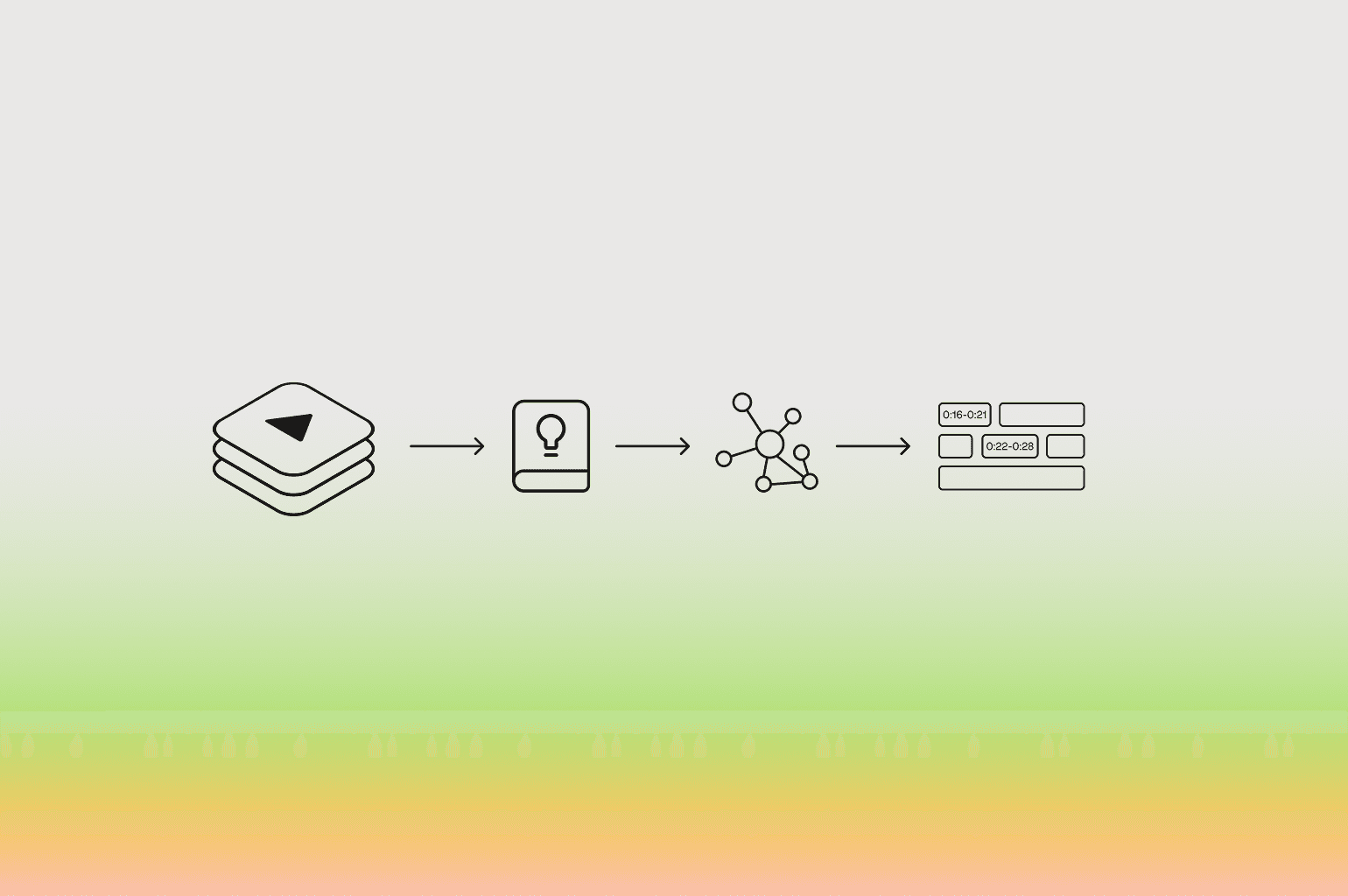

Developers can build a cross-modal semantic search pipeline by integrating the Twelve Labs Marengo embedding model on Amazon Bedrock with Amazon S3 Vectors, generating 1,024-dimensional embeddings from video, audio, image, and text, and querying them using cosine similarity without managing a separate vector database.


August 29, 2025

17 Min


1 - Introduction

If you build on AWS, you already know how to assemble scalable AI applications from managed services like Amazon Bedrock and S3. Now that TwelveLabs' state-of-the-art models are available on Bedrock, you can add advanced video understanding to those workflows. The Marengo model generates rich 1,024-dimensional embeddings for multimodal data (text, video, images, and audio), capturing nuanced context like actions, objects, and sounds without manual labeling.

Pair this with Amazon S3 Vectors, the first cloud storage with native vector support, and you get seamless, scalable storage and similarity search right in your S3 buckets. No more wrestling with external databases; just use familiar Boto3 clients for cosine-based queries at enterprise scale.

This integration, announced as part of TwelveLabs' launch on Bedrock, opens doors to powerful use cases like natural language video search, content recommendation, and retrieval-augmented generation (RAG) systems. In this tutorial, we'll walk you through a hands-on Python notebook to generate embeddings with Marengo, store them in S3 Vectors, and run semantic searches—empowering you to adopt these tools quickly and extend your AWS stack.

2 - Prerequisites and Setup

Before diving into the multimodal embedding workflow, let's ensure your environment is properly configured. If you are a developer familiar with AWS environments, you'll need the standard AWS toolkit along with access to the recently launched TwelveLabs models on Bedrock.

Prerequisites

AWS Requirements

You'll need an AWS account with access to these services:

AWS account with appropriate IAM permissions for Amazon Bedrock, S3, and S3 Vectors

Amazon Bedrock access with TwelveLabs Marengo model enabled in your region

An existing S3 bucket for temporary Bedrock outputs (required for async operations)

AWS CLI configured with your credentials (aws configure)

Development Environment:

Python 3.8 or higher

Jupyter Notebook or your preferred Python environment

Installation and Dependencies

Start by installing the required Python packages. The integration relies on a recent Boto3 SDK (pinned to 1.40.7 here) along with NumPy for vector operations:

pip install boto3==1.40.7 numpy matplotlib Pillow -q

Next, import the necessary libraries in your Python environment:

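import base64  # used by the Base64 media embedding functions
import json
import os
import time
import uuid
from typing import Dict, List

import boto3
import matplotlib.pyplot as plt  # used by the visualization cells
import numpy as np
from botocore.exceptions import ClientError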

Configuration

Configure your AWS and model settings. Update these variables to match your environment:

# AWS Configuration
AWS_REGION = "us-east-1"   # Update to your region
AWS_PROFILE = "default"    # Update to your profile

# S3 Vectors Configuration
VECTOR_BUCKET_NAME = "marengo-vectors-" + str(uuid.uuid4())[:8]
VECTOR_INDEX_NAME = "embeddings-index"
VECTOR_DIMENSION = 1024    # Marengo embedding dimension

# Marengo Model
MODEL_ID = 'twelvelabs.marengo-embed-2-7-v1:0'

# Temporary S3 bucket for Bedrock output (required by Bedrock API)
TEMP_S3_BUCKET = "<YOUR_S3_BUCKET>"  # TODO: Replace with your S3 bucket name

print(f"Vector Bucket: {VECTOR_BUCKET_NAME}")
print(f"Vector Index: {VECTOR_INDEX_NAME}")
print(f"Model: {MODEL_ID}")

Important: Replace <YOUR_S3_BUCKET> with an existing S3 bucket name in your account. Bedrock's async invoke feature requires this temporary storage location for processing results.

Initialize AWS Clients

Create authenticated sessions for the AWS services you'll be using:

# Initialize AWS clients
session = boto3.Session(profile_name=AWS_PROFILE)
bedrock_client = session.client('bedrock-runtime', region_name=AWS_REGION)
s3_client = session.client('s3')
s3vectors_client = session.client('s3vectors', region_name=AWS_REGION)
print("AWS clients initialized")

Create S3 Vector Bucket and Index

Now set up your vector storage infrastructure. S3 Vectors requires both a vector bucket and an index to organize and search your embeddings:

# Create Vector Bucket
try:
    s3vectors_client.create_vector_bucket(
        vectorBucketName=VECTOR_BUCKET_NAME,
        encryptionConfiguration={'sseType': 'AES256'}
    )
    print(f"Vector bucket '{VECTOR_BUCKET_NAME}' created")
except ClientError as e:
    if e.response['Error']['Code'] == 'ConflictException':
        print("Vector bucket already exists")
    else:
        print(f"Error: {e}")

# Create Vector Index
try:
    s3vectors_client.create_index(
        vectorBucketName=VECTOR_BUCKET_NAME,
        indexName=VECTOR_INDEX_NAME,
        dataType='float32',
        dimension=VECTOR_DIMENSION,
        distanceMetric='cosine',
        metadataConfiguration={'nonFilterableMetadataKeys': ['source']}
    )
    print(f"Index '{VECTOR_INDEX_NAME}' created")
except ClientError as e:
    if e.response['Error']['Code'] == 'ConflictException':
        print("Index already exists")
    else:
        print(f"Error: {e}")

The setup creates a vector bucket with AES256 encryption and an index configured for Marengo's 1024-dimensional embeddings using cosine distance metrics—perfect for semantic similarity searches.

Troubleshooting Tips:

If S3 Vectors isn't available in your region, check the AWS documentation for supported regions.

Ensure your IAM user/role has permissions for bedrock:InvokeModel, s3:GetObject, s3:PutObject, and s3vectors:* actions.

The ConflictException handling allows you to re-run the setup without errors if resources already exist.

With this foundation in place, you're ready to start generating embeddings with TwelveLabs Marengo and storing them in your scalable S3 vector infrastructure.

3 - Generating Embeddings with Marengo

TwelveLabs' Marengo model offers flexible input methods for generating multimodal embeddings. As you scale your applications, choosing the right approach becomes crucial for performance and cost optimization. This section demonstrates both Base64 encoding (ideal for small files and quick prototyping) and S3 URI-based processing (optimized for production workloads and large media files).

Uploading Media Files to S3

For production scenarios, storing media files in S3 provides better scalability, durability, and performance. Start by creating a helper function to upload files:

def upload_file_to_s3(local_path: str, bucket: str, key: str) -> str:
    """
    Upload a local file to S3

    Args:
        local_path: Path to local file
        bucket: S3 bucket name
        key: S3 object key (path in bucket)

    Returns:
        S3 URI of uploaded file
    """
    try:
        s3_client.upload_file(local_path, bucket, key)
        s3_uri = f"s3://{bucket}/{key}"
        print(f"✅ Uploaded {os.path.basename(local_path)} to {s3_uri}")
        return s3_uri
    except ClientError as e:
        print(f"❌ Error uploading file: {e}")
        raise

Approach 1: Base64 Encoding

This approach reads files locally and encodes them to base64 before sending to Bedrock. It's ideal for small files, development, and scenarios where media is already in memory.

Text Embeddings:

def generate_text_embedding(text: str) -> List[float]:
    """Generate embedding for text using Marengo on Bedrock"""
    # Create unique output path
    output_prefix = f'embeddings/{uuid.uuid4()}'

    # Start async embedding generation
    response = bedrock_client.start_async_invoke(
        modelId=MODEL_ID,
        modelInput={"inputType": "text", "inputText": text},
        outputDataConfig={
            "s3OutputDataConfig": {"s3Uri": f's3://{TEMP_S3_BUCKET}/{output_prefix}'}
        }
    )
    invocation_arn = response["invocationArn"]
    print(f"Generating text embedding for: '{text[:50]}...'")

    # Wait for completion (sleep only while the job is still running)
    while True:
        response = bedrock_client.get_async_invoke(invocationArn=invocation_arn)
        status = response['status']
        if status in ("Completed", "Failed"):
            break
        time.sleep(2)
    if status != "Completed":
        raise Exception("Embedding generation failed")

    # Retrieve embedding from the output.json written under the prefix
    response = s3_client.list_objects_v2(Bucket=TEMP_S3_BUCKET, Prefix=output_prefix)
    for obj in response.get('Contents', []):
        if obj['Key'].endswith('output.json'):
            result = s3_client.get_object(Bucket=TEMP_S3_BUCKET, Key=obj['Key'])
            data = json.loads(result['Body'].read())
            return data['data'][0]['embedding']
    raise Exception("No embedding output found")

This function illustrates Bedrock's async workflow: submit the job, poll for completion, then retrieve results from S3. The pattern scales beautifully for production workloads where you might process thousands of items concurrently.
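The polling step above uses a fixed two-second sleep with no upper bound. As a minimal sketch, assuming you want a timeout and exponential backoff, the loop could be factored into a helper like the one below. The name wait_for_async_invoke and its parameters are hypothetical, not part of the notebook or of Boto3; the helper only expects a callable that returns a dict with a "status" field, and it reads "failureMessage" only if the response happens to include it.

```python
import time
from typing import Callable, Dict


def wait_for_async_invoke(
    get_status: Callable[[], Dict],
    timeout_sec: float = 600.0,
    initial_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict:
    """Poll get_status() until the job completes, fails, or the timeout expires."""
    deadline = time.monotonic() + timeout_sec
    delay = initial_delay
    while True:
        response = get_status()
        status = response.get("status")
        if status == "Completed":
            return response
        if status == "Failed":
            detail = response.get("failureMessage", "no details provided")
            raise RuntimeError(f"Async invoke failed: {detail}")
        # Give up if the next sleep would take us past the deadline
        if time.monotonic() + delay > deadline:
            raise TimeoutError(f"Job still '{status}' after {timeout_sec} seconds")
        sleep(delay)
        delay = min(delay * 2, max_delay)  # exponential backoff, capped
```

In the notebook's context this could be called as wait_for_async_invoke(lambda: bedrock_client.get_async_invoke(invocationArn=invocation_arn)). Injecting the sleep function keeps the helper easy to unit test without real delays.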

Video Embeddings:

import base64
from typing import List, Optional

def generate_video_embedding(video_path: str, start_sec: float = 0,
                             length_sec: Optional[float] = None) -> List[float]:
    """Generate embedding for video using Marengo on Bedrock"""
    # Read video file and encode to base64
    with open(video_path, 'rb') as video_file:
        video_base64 = base64.b64encode(video_file.read()).decode('utf-8')

    # Build model input with optional time segments
    model_input = {
        "inputType": "video",
        "mediaSource": {"base64String": video_base64},
        "embeddingOption": ["visual-text", "audio"]  # Capture both visual and audio
    }
    if start_sec is not None:
        model_input["startSec"] = start_sec
    if length_sec is not None:
        model_input["lengthSec"] = length_sec

    # ... rest follows the same async pattern

Audio and Image Functions:

The generate_audio_embedding() and generate_image_embedding() functions follow similar patterns, each tailored for their respective media types with base64 encoding and appropriate model input parameters.
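Since the audio and image functions are not shown, here is a minimal sketch of how their request payloads might be built. It assumes that audio and image requests reuse the same inputType and mediaSource.base64String fields shown for video above; the helper name build_base64_model_input is hypothetical, and the exact per-modality options should be checked against the model's request schema.

```python
import base64
from typing import Dict

# Modalities assumed to accept a base64 media source (assumption, see lead-in)
SUPPORTED_MEDIA_TYPES = {"video", "audio", "image"}


def build_base64_model_input(media_type: str, media_bytes: bytes) -> Dict:
    """Build a modelInput dict for a media file that is already in memory."""
    if media_type not in SUPPORTED_MEDIA_TYPES:
        raise ValueError(f"Unsupported media type: {media_type!r}")
    return {
        "inputType": media_type,
        "mediaSource": {
            # Bedrock expects the raw bytes as a base64-encoded UTF-8 string
            "base64String": base64.b64encode(media_bytes).decode("utf-8")
        },
    }
```

The returned dict would be passed as modelInput to start_async_invoke, followed by the same poll-and-fetch steps used for text.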

Approach 2: S3 URI Processing

This production-optimized approach uploads files to S3 first, then references them by URI. It's better for large files, reduces memory usage, and enables better error handling and retry logic.

Video Embedding from S3:

# Note: OUTPUT_PREFIX and ACCOUNT_ID are not defined in the snippets shown in this
# article; they are assumed to be set earlier in the notebook (an output key prefix
# and the AWS account ID that owns the bucket).
def generate_video_embedding_from_s3(s3_uri: str, start_sec: float = 0,
                                     length_sec: float = None) -> List[float]:
    """Generate embedding for video from S3 using Marengo on Bedrock"""
    output_prefix = f'{OUTPUT_PREFIX}video/{uuid.uuid4()}'

    model_input = {
        "inputType": "video",
        "mediaSource": {
            "s3Location": {"uri": s3_uri, "bucketOwner": ACCOUNT_ID}
        },
        "embeddingOption": ["visual-text", "audio"]
    }

    response = bedrock_client.start_async_invoke(
        modelId=MODEL_ID,
        modelInput=model_input,
        outputDataConfig={
            "s3OutputDataConfig": {
                "s3Uri": f's3://{TEMP_S3_BUCKET}/{output_prefix}',
                "bucketOwner": ACCOUNT_ID
            }
        }
    )
    print(f"🎬 Generating video embedding from S3: {s3_uri}")

    # Enhanced status monitoring with retry logic...

And this is a production usage example:

# Upload video to S3 (MEDIA_PREFIX is assumed to be defined earlier in the notebook)
video_s3_key = f"{MEDIA_PREFIX}videos/sample_video.mp4"
video_s3_uri = upload_file_to_s3('video.mp4', TEMP_S3_BUCKET, video_s3_key)

# Generate embedding from S3
video_embedding_s3 = generate_video_embedding_from_s3(video_s3_uri, start_sec=0, length_sec=10)

Verification: Identical Results from Both Approaches

A critical validation step ensures both approaches produce identical embeddings for the same content:

# Compare VIDEO embeddings
# Note: cosine_similarity() is the helper defined in Section 6
print("🎬 VIDEO EMBEDDING VERIFICATION")
print("-" * 50)

# Generate using Base64
print("Generating via Base64...")
video_base64_emb = generate_video_embedding('video.mp4', start_sec=0, length_sec=10)

# Upload and generate using S3 URI
print("Generating via S3 URI...")
video_s3_key = f"{MEDIA_PREFIX}verification/video.mp4"
video_s3_uri = upload_file_to_s3('video.mp4', TEMP_S3_BUCKET, video_s3_key)
video_s3_emb = generate_video_embedding_from_s3(video_s3_uri, start_sec=0, length_sec=10)

# Calculate similarity
similarity = cosine_similarity(video_base64_emb, video_s3_emb)
print("📊 Results:")
print(f"   Cosine similarity: {similarity:.6f}")
print(f"   Are they identical? {'✅ YES' if similarity > 0.9999 else '❌ NO'}")

In the notebook's runs, the verification reports cosine similarity above the 0.9999 threshold across all media types, indicating that both approaches produce effectively identical embeddings. This gives developers confidence to choose the appropriate method based on their specific use case requirements.

Visualizing Embedding Patterns

Understanding embedding structure helps validate model behavior and debug applications. Here's how to visualize the different modalities:

Text Embedding Visualization:

import matplotlib.pyplot as plt

# Visualize text embeddings
# text_embeddings is a list of (text, embedding, media_type) tuples built earlier
if text_embeddings:
    plt.figure(figsize=(12, 3))
    for i, (text, emb, _) in enumerate(text_embeddings[:3]):
        plt.subplot(1, 3, i + 1)
        # Show the first 100 dimensions as a one-row heatmap
        plt.imshow([emb[:100]], aspect='auto', cmap='coolwarm')
        plt.title(f'Text {i+1}', fontsize=10)
        plt.xlabel('Dimensions (first 100)')
        plt.colorbar(orientation='horizontal', pad=0.1)
    plt.suptitle('Text Embeddings Visualization', fontsize=12)
    plt.tight_layout()
    plt.show()

The visualization reveals how text embeddings capture semantic patterns through varied activation intensities across dimensions. Notice how related concepts show similar patterns while distinct topics exhibit different activation signatures.

Video, Audio, and Image Visualizations:

Each modality produces distinct embedding patterns:

Video embeddings display complex patterns capturing both temporal visual information and audio features in the unified 1,024-dimensional space.

Audio embeddings exhibit characteristic frequency-domain patterns with different spectral representations.

Image embeddings demonstrate spatial feature activation patterns with rich color-coded intensity variations.

These visualizations help you understand how Marengo processes different data types and can inform feature engineering decisions for downstream applications.

Key Insights for Production Deployment

When to Use Base64: For optimal performance, choose base64 encoding when working with small files under 25MB, during development and testing phases, when processing data that's already in memory and needs real-time handling, or for simple workflows involving single files.

When to Use S3 URIs: S3 URIs are the preferred approach when processing large video files exceeding 25MB, implementing production batch processing systems, building multi-stage pipelines that require data durability, working with content that already resides in S3, or in scenarios where robust error handling and retry mechanisms are essential.

Performance Considerations: The S3 URI approach delivers several performance advantages, including significantly reduced local memory consumption, improved handling of timeout issues for large files, enhanced status monitoring capabilities with comprehensive retry logic, and greater cost efficiency when processing high volumes of media.

Both approaches generate identical 1,024-dimensional embeddings that exist in the same unified vector space, enabling seamless cross-modal search regardless of which method you choose for generation. This flexibility allows you to optimize your architecture based on file size, infrastructure constraints, and operational requirements while maintaining consistent semantic understanding across all media types.
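The size-based guidance above can be expressed as a small routing helper. This is a sketch only: the function name choose_input_method is hypothetical, and the 25 MB cutoff is simply the figure quoted in this section (interpreted here as 25 × 1024 × 1024 bytes), not a limit verified against the service.

```python
import os

# Threshold taken from the guidance above; treat it as a tunable assumption
BASE64_LIMIT_BYTES = 25 * 1024 * 1024


def choose_input_method(path: str, limit_bytes: int = BASE64_LIMIT_BYTES) -> str:
    """Return 'base64' for files under the limit, otherwise 's3_uri'."""
    size = os.path.getsize(path)
    return "base64" if size < limit_bytes else "s3_uri"
```

A caller could branch on the result to pick between generate_video_embedding() and generate_video_embedding_from_s3(), so the same ingestion loop handles both small clips and large files.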

4 - Storing Embeddings in S3 Vectors

With multimodal embeddings in hand, the next step is to persist them in a scalable, search-ready store. Amazon S3 Vectors brings native vector storage and similarity search to S3, so there’s no extra infrastructure to stand up or manage—just create a vector bucket, define an index, and batch insert vectors with metadata. This section mirrors the workflow and code from the updated notebook and README, adapted for production-minded developers.

Why S3 Vectors for Marengo embeddings

Native to S3: Store vectors alongside existing data and reuse your S3 controls, lifecycle, and security posture.

Built-in similarity search: Query with cosine distance directly via an AWS SDK client—no separate vector database to operate.

Schema-aligned with Marengo: Index dimension set to 1,024 to match Marengo-Embed-2.7 output, ensuring efficient storage and retrieval.

Prepare vector payloads with metadata

After generating embeddings, normalize them into a consistent structure: a unique key, the float32 vector payload, and metadata for human-readable context and filtering. The notebook handles both legacy tuples and the extended triplet that includes media type.

def store_embeddings(embeddings_data: List[tuple]) -> bool:
    """Store embeddings in S3 Vectors with metadata"""
    vectors_to_insert = []
    for i, item in enumerate(embeddings_data):
        # Support (text, embedding) and (text, embedding, media_type)
        if len(item) == 3:
            text, embedding, media_type = item
        else:
            text, embedding = item
            media_type = "text"

        vector_entry = {
            'key': f'vector_{i:04d}',  # deterministic, idempotent upserts
            'data': {
                'float32': [float(v) for v in embedding]  # convert to float32-compatible list
            },
            'metadata': {
                'text': text,              # human-readable description
                'media_type': media_type,  # useful for UI badges and filtering
                'id': i                    # numeric id for quick reference
            }
        }
        vectors_to_insert.append(vector_entry)

This shape aligns with the S3 Vectors put_vectors API and keeps metadata close to the embedding for streamlined search results and analytics.

Bulk insert into S3 Vectors

Use a single batch call to minimize round trips and make the operation repeatable. The notebook also prints a helpful summary by media type—handy for sanity checks and dashboards.

    # Continuation of store_embeddings(): runs after the loop above
    try:
        s3vectors_client.put_vectors(
            vectorBucketName=VECTOR_BUCKET_NAME,
            indexName=VECTOR_INDEX_NAME,
            vectors=vectors_to_insert
        )
        print(f"Stored {len(vectors_to_insert)} vectors in S3 Vectors")

        # Optional: quick summary
        media_counts = {}
        for item in embeddings_data:
            mtype = item[2] if len(item) == 3 else "text"
            media_counts[mtype] = media_counts.get(mtype, 0) + 1
        print("Media types stored:")
        for m, c in media_counts.items():
            print(f"  - {m}: {c}")
        return True
    except ClientError as e:
        print(f"Error storing vectors: {e}")
        return False

A typical run reports that it stored 6 vectors split across text, video, audio, and image, confirming end-to-end readiness for cross-modal search.
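A single put_vectors call works for a handful of vectors, but larger ingests usually need to be split into batches because the service limits how many vectors one request can carry. The sketch below shows one way to do that. The helper names are hypothetical, and the default batch size of 500 is an assumption to be checked against the current S3 Vectors quotas; the client argument is any object exposing put_vectors with the keyword arguments used in the notebook.

```python
from typing import Dict, Iterator, List


def chunked(vectors: List[Dict], batch_size: int = 500) -> Iterator[List[Dict]]:
    """Yield consecutive slices of at most batch_size vectors."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(vectors), batch_size):
        yield vectors[start:start + batch_size]


def put_vectors_in_batches(client, bucket: str, index: str,
                           vectors: List[Dict], batch_size: int = 500) -> int:
    """Send vectors in batches and return how many were submitted."""
    stored = 0
    for batch in chunked(vectors, batch_size):
        client.put_vectors(vectorBucketName=bucket, indexName=index, vectors=batch)
        stored += len(batch)
    return stored
```

With the notebook's variables this would be called as put_vectors_in_batches(s3vectors_client, VECTOR_BUCKET_NAME, VECTOR_INDEX_NAME, vectors_to_insert).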

Index design and distance metric

When creating the index, you should configure several important parameters to optimize performance. First, set the dataType to float32 to properly store Marengo's vector values. Next, specify the dimension as 1,024 to match Marengo's embedding size exactly. For the distanceMetric, choose cosine as it's the standard approach for measuring semantic similarity between vectors. Finally, include at least one key in the metadataConfiguration (the example uses a non-filterable placeholder and attaches rich metadata at insert time).

These carefully selected defaults ensure compatibility with the Marengo model's output format and align perfectly with the downstream similarity search methods we'll use later in the tutorial.

Idempotency, updates, and versioning

Let’s go over some best practices:

Optimize vector management by using deterministic keys based on content URI hashes or stable UUIDs, which ensures safe and predictable re-ingestion processes when updating your vector database.

When re-running ingestion jobs with identical keys, vectors should be cleanly overwritten through upserts. For maintaining historical versions, simply append version suffixes to your keys and maintain active version references in a separate system like DynamoDB.

Since metadata is attached to individual vectors, you can evolve your schema incrementally by re-putting vectors with the same keys but updated metadata attributes, such as new labels or timestamps.
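As an illustration of the deterministic-key practice, the sketch below derives a key from a content URI and segment start time, with an optional version suffix. The function name, the "vec_" prefix, and the choice of a truncated SHA-256 digest are all illustrative assumptions rather than the notebook's scheme.

```python
import hashlib
from typing import Optional


def make_vector_key(content_uri: str, start_sec: float = 0.0,
                    version: Optional[str] = None) -> str:
    """Derive a stable key so re-ingesting the same segment overwrites its vector."""
    # Hash the URI together with the segment offset so each segment gets its own key
    raw = f"{content_uri}|{start_sec:.3f}".encode("utf-8")
    digest = hashlib.sha256(raw).hexdigest()[:16]
    key = f"vec_{digest}"
    # Append a version suffix only when historical versions must be kept side by side
    return f"{key}_{version}" if version else key
```

Calling make_vector_key with the same URI and offset always yields the same key, so a repeated ingestion job upserts rather than duplicates, while passing version="v2" produces a separate key for a new embedding version.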

With vectors stored and indexed, the system is ready for low-latency semantic retrieval. Next, query the index with either a fresh query embedding or a precomputed vector to power cross-modal search experiences across text, video, audio, and image content—without leaving your S3 security and governance perimeter.

5 - Performing Similarity Searches

With vectors indexed in Amazon S3 Vectors, querying becomes a simple, low-latency API call. The same flow supports fresh natural-language queries and pre-computed embeddings from any modality, which is ideal for cross-modal retrieval patterns on AWS.

Search via text or a pre-computed embedding

The notebook exposes a single function that accepts either a text prompt (which it embeds on-the-fly with Marengo) or a ready-made embedding. It then issues a cosine-distance query against the S3 Vectors index and returns ranked matches with metadata for convenient display and logging.

def search_similar(query_text: str = None, query_embedding: List[float] = None,
                   top_k: int = 3) -> List[Dict]:
    """Search for similar vectors using either a text query or a pre-computed embedding"""
    # Generate embedding for query if text is provided
    if query_text and not query_embedding:
        print(f"\nSearching for: '{query_text}'")
        query_embedding = generate_text_embedding(query_text)
    elif query_embedding:
        print("\nSearching with provided embedding...")
    else:
        raise ValueError("Either query_text or query_embedding must be provided")

    # Query S3 Vectors
    try:
        response = s3vectors_client.query_vectors(
            vectorBucketName=VECTOR_BUCKET_NAME,
            indexName=VECTOR_INDEX_NAME,
            topK=top_k,
            queryVector={'float32': [float(v) for v in query_embedding]},
            returnMetadata=True,
            returnDistance=True
        )
        results = []
        for vector in response.get('vectors', []):
            # Handle both old vectors (without media_type) and new vectors (with media_type)
            results.append({
                'text': vector['metadata'].get('text', 'No description'),
                'media_type': vector['metadata'].get('media_type', 'text'),  # Default to 'text' for old vectors
                'similarity': 1.0 - vector.get('distance', 0),  # Convert distance to similarity
                'key': vector.get('key')
            })
        return results
    except ClientError as e:
        print(f"Query failed: {e}")
        return []

Conceptually:

If query_text is provided, generate a 1,024-dimensional Marengo embedding using Bedrock async invoke and fetch the result from the temporary S3 location. Then search S3 Vectors with that embedding.

If query_embedding is provided, skip embedding generation and send the vector directly to S3 Vectors for retrieval. This is useful for "media-to-all" queries (video→all, image→all, audio→all).

These are the implementation highlights:

topK controls how many results to return from the index.

returnMetadata and returnDistance allow reconstructing readable hits and converting distance to similarity with similarity = 1.0 - distance for intuitive sorting.

The function gracefully handles older vectors that may lack media_type metadata by defaulting to "text".
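To make the distance-to-similarity conversion concrete, here is a small local sketch with NumPy. It assumes the index reports cosine distance as 1 minus cosine similarity, which is what the notebook's conversion implies; the function names are illustrative. Under that assumption the recovered similarity ranges from 1 (same direction) through 0 (orthogonal) to -1 (opposite), which is why a result can occasionally show a negative similarity.

```python
import numpy as np


def cosine_distance(a, b) -> float:
    """Cosine distance, defined here as 1 - cosine similarity."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(1.0 - np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


def distance_to_similarity(distance: float) -> float:
    """Invert the distance back into a similarity score for display and sorting."""
    return 1.0 - distance


# Three toy vector pairs covering the extremes of the range
same = distance_to_similarity(cosine_distance([1, 0], [1, 0]))        # same direction
orthogonal = distance_to_similarity(cosine_distance([1, 0], [0, 1]))  # unrelated
opposite = distance_to_similarity(cosine_distance([1, 0], [-1, 0]))   # opposite direction
```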

# Example queries
queries = [
    "Someone enjoying the ocean view",
    "People talking",
    "Cooking activities"
]

for query in queries:
    results = search_similar(query, top_k=2)
    print(f"\nResults for: '{query}'")
    for i, result in enumerate(results, 1):
        print(f"  {i}. Similarity: {result['similarity']:.3f} | Text: {result['text']}")

The notebook demonstrates strong semantic understanding. When searching for "Someone enjoying the ocean view," it correctly identifies "A person walking on a beach at sunset" as the top match with 0.736 similarity, despite sharing almost no words with the query. Similarly, searching for "Cooking activities" returns "A chef preparing food in a kitchen" as the top match with 0.765 similarity.

This goes beyond keyword matching—Marengo's embeddings capture contextual meaning, enabling applications like content recommendation systems, semantic search engines, and RAG implementations that understand user intent rather than just literal text matches.

Visualize search results

To help validate ranking quality and explain results to stakeholders, the notebook renders horizontal bar charts with similarity scores for each hit. Labels encode the media type (text, video, audio, image), and colors provide quick visual grouping to scan relevance patterns at a glance.

# all_results is a list of {'query': ..., 'results': [...]} dicts collected from earlier searches
if all_results:
    fig, axes = plt.subplots(len(all_results), 1, figsize=(10, len(all_results) * 2))
    if len(all_results) == 1:
        axes = [axes]

    for idx, result_set in enumerate(all_results):
        query = result_set['query']
        results = result_set['results']
        if results:
            similarities = [r['similarity'] for r in results]
            labels = [f"{r['media_type'][:3]}" for r in results]
            # One color per media type: text, video, audio, then image as the fallback
            colors = ['#4CAF50' if r['media_type'] == 'text'
                      else '#2196F3' if r['media_type'] == 'video'
                      else '#FF9800' if r['media_type'] == 'audio'
                      else '#9C27B0' for r in results]

            axes[idx].barh(range(len(similarities)), similarities, color=colors)
            axes[idx].set_yticks(range(len(similarities)))
            axes[idx].set_yticklabels(labels)
            axes[idx].set_xlabel('Similarity Score')
            axes[idx].set_title(f'Query: "{query[:40]}..."')
            axes[idx].set_xlim(0, 1)

            # Add value labels
            for i, v in enumerate(similarities):
                axes[idx].text(v, i, f' {v:.3f}', va='center')

    plt.suptitle('Cross-Modal Search Results', fontsize=14, fontweight='bold')
    plt.tight_layout()
    plt.savefig('cross_modal_search_results.png', dpi=150, bbox_inches='tight')
    plt.show()
    print("\n💾 Search results chart saved to cross_modal_search_results.png")

This is a typical workflow in the notebook:

Run several text queries.

Persist each query’s results to JSON for reproducibility and debugging.

Plot one row of bars per query showing the top matches and their similarity scores.

The example visualization clearly communicates which modalities dominate for a given query and how close the top candidates are, making it easy to tune thresholds or adjust pre/post-processing steps for production.
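The workflow mentions persisting each query's results to JSON, but the plotting snippet only writes a PNG. A minimal sketch of the persistence step is shown below; the function name save_results, the output directory, and the file-naming scheme are assumptions for illustration, not the notebook's actual code.

```python
import json
import os
from typing import Dict, List


def save_results(query: str, results: List[Dict], out_dir: str = "search_results") -> str:
    """Write one query's results to a JSON file and return the file path."""
    os.makedirs(out_dir, exist_ok=True)
    # Build a filesystem-safe file name from the query text
    slug = "".join(c if c.isalnum() else "_" for c in query.lower())[:40].strip("_")
    path = os.path.join(out_dir, f"{slug or 'query'}.json")
    with open(path, "w", encoding="utf-8") as f:
        json.dump({"query": query, "results": results}, f, indent=2, ensure_ascii=False)
    return path
```

Each dict returned by search_similar() contains only strings and floats, so it serializes without custom encoders, and the saved files can be diffed between runs when tuning thresholds.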

Cross-modal search examples

Because Marengo’s embeddings share a unified vector space across modalities, it’s straightforward to retrieve relevant content across text, video, audio, and image from a single query embedding. The notebook demonstrates five representative text queries and shows how results vary by modality:

Someone enjoying the ocean view: Top matches are semantically aligned text snippets describing outdoor/beach scenarios with similarities clustered around 0.64–0.74, indicating strong textual relevance for the scene.

People talking: The highest-scoring result is an audio clip, reflecting speech-like acoustic features; lower text/image hits follow, showing the model’s ability to bridge speech and textual descriptions.

Music and sounds: Audio leads the results, as expected, with lower or negative similarity for image/video in this small sample—useful signal for modality-specific routing or UI cues.

Food preparation in kitchen: Text hits dominate with a top similarity near 0.90, capturing strong semantic alignment around cooking activities.

Outdoor scenic view: Text candidates again score well in the 0.60–0.68 range, consistent with the descriptive nature of the prompt.

The accompanying chart condenses these outcomes into side-by-side horizontal bars per query, where:

Green bars denote text hits,

Blue bars denote video,

Orange bars denote audio,

Purple bars denote image.

This makes it easy to reason about which modalities are most responsive for a given intent and to design UI/UX that surfaces the best candidates first (e.g., prioritize audio for “sounds,” video for “actions,” and text for narrative prompts).
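One way to act on these per-modality patterns is to group the hits returned by search_similar() and pick the modality with the strongest match. The sketch below is illustrative: the function names and the min_similarity cutoff are assumptions, and it relies only on the 'media_type' and 'similarity' fields that the search function already returns.

```python
from typing import Dict, List, Optional


def best_by_modality(results: List[Dict]) -> Dict[str, Dict]:
    """Keep the highest-similarity hit for each media type."""
    best: Dict[str, Dict] = {}
    for hit in results:
        modality = hit.get("media_type", "text")
        if modality not in best or hit["similarity"] > best[modality]["similarity"]:
            best[modality] = hit
    return best


def leading_modality(results: List[Dict], min_similarity: float = 0.0) -> Optional[str]:
    """Return the media type of the top hit, or None if nothing clears the cutoff."""
    best = best_by_modality(results)
    if not best:
        return None
    top = max(best.values(), key=lambda hit: hit["similarity"])
    if top["similarity"] < min_similarity:
        return None
    return top.get("media_type", "text")
```

A UI could use leading_modality() to decide which result panel to show first, falling back to a mixed list when it returns None.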

Media-to-all retrieval

Beyond text-to-all, the notebook also demonstrates:

Video→All: Use a video embedding as the query to retrieve similar video segments, related images, and semantically close text snippets. This pattern is excellent for content deduplication and near-duplicate detection across formats.

Image→All: Retrieve semantically related video frames or textual descriptions from a single image—useful for catalog enrichment and content tagging workflows.

Audio→All: Find text and video that best describe or align with a sound clip, enabling audio-driven discovery in large multimedia libraries.

With these search patterns and visual diagnostics in place, the stack is ready to power semantic discovery and cross-modal experiences—entirely on AWS—by pairing Bedrock-hosted Marengo embeddings with S3 Vectors’ native vector search.

6 - Direct Vector Comparison and Use Cases

When tuning relevance or debugging retrieval, it’s useful to bypass the vector index and inspect embedding relationships directly. This section shows how to compute cosine similarity between any two embeddings, plot pairwise comparisons, and render a compact similarity matrix. The snippets mirror the notebook’s patterns and are production-friendly for quick diagnostics in AWS environments.

Calculate cosine similarity between two vectors

Cosine similarity measures the angle between two vectors; values closer to 1 indicate higher semantic alignment. It’s the same metric used by the S3 Vectors index configuration, so offline checks here match online search behavior.

import numpy as np

def cosine_similarity(vec1, vec2) -> float:
    """Calculate cosine similarity between two vectors."""
    v1 = np.array(vec1, dtype=np.float32)
    v2 = np.array(vec2, dtype=np.float32)
    dot = np.dot(v1, v2)
    n1 = np.linalg.norm(v1)
    n2 = np.linalg.norm(v2)
    return float(dot / (n1 * n2))

Compare embeddings directly with visualization

The following example embeds three text prompts, computes pairwise similarities, prints them, and then visualizes the scores. This is handy for smoke tests when iterating on prompts or preprocessing logic.

import matplotlib.pyplot as plt

# Example texts
text1 = "A beautiful sunset over the ocean"
text2 = "A sunrise at the beach"
text3 = "People working in an office"

print("Generating embeddings for comparison...")
emb1 = generate_text_embedding(text1)  # from previous section
emb2 = generate_text_embedding(text2)
emb3 = generate_text_embedding(text3)

sim_12 = cosine_similarity(emb1, emb2)
sim_13 = cosine_similarity(emb1, emb3)
sim_23 = cosine_similarity(emb2, emb3)

print("\nDirect Similarity Comparison:")
print(f"'{text1}' vs '{text2}': {sim_12:.3f}")
print(f"'{text1}' vs '{text3}': {sim_13:.3f}")
print(f"'{text2}' vs '{text3}': {sim_23:.3f}")

Create similarity matrix visualization

A heatmap provides a compact view of relationships. This mirrors the notebook’s matrix rendering, which you can save as an artifact for PRs, dashboards, or test runs.

labels = ['Sunset/Ocean', 'Sunrise/Beach', 'Office Work']

# Assemble a 3x3 similarity matrix
similarity_matrix = np.array([
    [1.0, sim_12, sim_13],
    [sim_12, 1.0, sim_23],
    [sim_13, sim_23, 1.0]
], dtype=np.float32)

plt.figure(figsize=(8, 6))
im = plt.imshow(similarity_matrix, cmap='RdYlGn', vmin=0, vmax=1, aspect='auto')
plt.colorbar(im, label='Cosine Similarity')

# Annotations
for i in range(similarity_matrix.shape[0]):
    for j in range(similarity_matrix.shape[1]):
        plt.text(j, i, f'{similarity_matrix[i, j]:.3f}',
                 ha="center", va="center", color="black", fontweight='bold')

plt.xticks(range(3), labels, rotation=45, ha='right')
plt.yticks(range(3), labels)
plt.title('Embedding Similarity Matrix', fontsize=14, fontweight='bold')
plt.tight_layout()
plt.savefig('similarity_matrix.png', dpi=150, bbox_inches='tight')
plt.show()

The resulting heatmap makes it obvious that “sunset over the ocean” and “sunrise at the beach” are strongly aligned, while both are less similar to “office work,” matching intuition and confirming embedding quality.
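The 3x3 matrix above is assembled by hand from three pairwise scores. For more than a few embeddings, the same matrix can be computed in one step by normalizing each vector and taking a matrix product. The sketch below is a generic NumPy approach, not code from the notebook, and the function name pairwise_cosine is illustrative.

```python
import numpy as np


def pairwise_cosine(embeddings) -> np.ndarray:
    """Return the N x N cosine similarity matrix for N embedding vectors."""
    matrix = np.asarray(embeddings, dtype=np.float64)
    norms = np.linalg.norm(matrix, axis=1, keepdims=True)
    if np.any(norms == 0):
        raise ValueError("Cannot compute cosine similarity for a zero vector")
    unit = matrix / norms   # scale each row to unit length
    return unit @ unit.T    # dot products of unit vectors are cosine similarities
```

Passing [emb1, emb2, emb3] would yield a matrix equivalent to similarity_matrix, and the result can be handed to the same plt.imshow() call for larger comparison sets.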

Concrete Use Cases

Here is a list of possible use cases that you can build based on the insights shared in this tutorial:

Multimodal search: Use text prompts to retrieve precise video moments without scrubbing footage. For example, embed “driver approaches crosswalk” and find matching clips across a fleet’s dashcam archive.

Content recommendation: Given an image or short video embedding, retrieve similar assets and their captions to power lookalike recommendations across catalogs.

Media understanding: Compare embeddings across audio, image, and video to analyze relationships—for instance, checking whether a soundtrack matches the visual mood of a scene. Cosine scores and matrices help define routing rules (e.g., “if audio>0.7, show sound-alike results first”).

RAG applications: Store embeddings in S3 Vectors, then fetch top-k multimedia context for Bedrock-powered text generation (for example, with the TwelveLabs Pegasus model). Use direct comparisons to validate that the retrieved snippets are semantically close before passing them to the text generation step.
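The routing rule and the RAG validation step described above can be sketched as a small post-processing function over search hits. This is a sketch under stated assumptions: hits are dicts with `similarity` and `media_type` keys (the shape returned by `search_similar` earlier in this tutorial), while the names `select_context`, `min_similarity`, and `preferred_modality`, and the 0.7 threshold, are illustrative and not part of any AWS or TwelveLabs API.

```python
from typing import Dict, List, Optional

def select_context(hits: List[Dict], min_similarity: float = 0.7,
                   preferred_modality: Optional[str] = None) -> List[Dict]:
    """Drop weak hits, then rank preferred-modality hits first."""
    kept = [h for h in hits if h["similarity"] >= min_similarity]
    # Sort key: preferred modality first (False sorts before True),
    # then highest similarity first
    return sorted(
        kept,
        key=lambda h: (h["media_type"] != preferred_modality, -h["similarity"]),
    )

# Hypothetical hits for illustration; real ones come from the vector query
hits = [
    {"key": "vector_0001", "media_type": "text", "similarity": 0.82},
    {"key": "vector_0002", "media_type": "audio", "similarity": 0.74},
    {"key": "vector_0003", "media_type": "image", "similarity": 0.41},
]
context = select_context(hits, min_similarity=0.7, preferred_modality="audio")
print([h["key"] for h in context])
```

A suitable threshold depends on the data; the similarity ranges reported in this tutorial suggest tuning it per modality rather than hard-coding one value.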

With these diagnostics and visualizations, it’s straightforward to tune prompts, verify cross-modal behavior, and build trustworthy retrieval pipelines that pair TwelveLabs Marengo on Bedrock with Amazon S3 Vectors.

7 - Conclusion: Cross-Modal Search Summary Dashboard

The Cross-Modal Search Dashboard below provides a unified, high-level view of every key step in the multimodal embedding and search pipeline that we went through in this tutorial. With side-by-side panels, it highlights how embeddings from text, video, audio, and image are seamlessly processed, distributed, and visualized using Amazon Bedrock and Amazon S3 Vectors. The media type distribution confirms balanced coverage of different modalities, while the embedding structure plot demonstrates the consistent dimensionality (1,024D) delivered by Marengo across all content types.
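A cheap way to enforce that consistency in code is to check each embedding before it is written to the index. The helper below is a sketch, not part of the notebook: `validate_embedding` and `EXPECTED_DIM` are hypothetical names, and 1,024 is simply the dimension this tutorial configures for the index.

```python
import math
from typing import Sequence

EXPECTED_DIM = 1024  # dimension the tutorial configures for the index

def validate_embedding(embedding: Sequence[float],
                       expected_dim: int = EXPECTED_DIM) -> None:
    """Raise ValueError if the embedding has the wrong length or non-finite values."""
    if len(embedding) != expected_dim:
        raise ValueError(f"expected {expected_dim} dimensions, got {len(embedding)}")
    if not all(math.isfinite(v) for v in embedding):
        raise ValueError("embedding contains NaN or infinite values")

validate_embedding([0.1] * 1024)       # passes silently
try:
    validate_embedding([0.1] * 512)    # wrong size is rejected
except ValueError as err:
    print(f"rejected: {err}")
```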

Performance metrics speak to both search quality and operational efficiency:

The Cross-Modal Search Effectiveness chart shows that whether querying from text, video, audio, or image, similarities remain robust—particularly for video-driven retrievals.

The Embedding Similarities heatmap offers instant insight into the semantic alignment between sample embeddings, and the Media Files Processed summary confirms end-to-end coverage for each modality.

Finally, the pipeline timeline panel quantifies each phase, highlighting that generating embeddings is the most time-consuming step, while S3 Vectors storage and querying are fast and scalable.
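Per-phase timings like those in the timeline panel can be collected with a small context manager. This is a minimal sketch using only the standard library; `timed_phase`, the `timings` dict, and the phase names are hypothetical, and the `time.sleep` call merely stands in for real work such as embedding generation.

```python
import time
from contextlib import contextmanager

timings = {}  # phase name -> elapsed seconds

@contextmanager
def timed_phase(name: str):
    """Record wall-clock time spent inside the with-block under `name`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        # Recorded even if the block raises, so failed phases are still visible
        timings[name] = time.perf_counter() - start

with timed_phase("generate_embeddings"):
    time.sleep(0.05)  # placeholder for the real embedding call
with timed_phase("query_vectors"):
    pass              # placeholder for the real query

for phase, seconds in timings.items():
    print(f"{phase}: {seconds:.3f}s")
```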

We hope this tutorial empowers you to monitor, tune, and explain the performance of end-to-end cross-modal search using the TwelveLabs Marengo multimodal embedding model, making it easy to validate coverage, compare semantic relationships, and operationalize content understanding at scale, all within familiar AWS-managed services.

Check out the full code in this repository: https://github.com/twelvelabs-io/tl-marengo-bedrock-s3/

Essential Resources

Get Started Immediately:

Request Amazon Bedrock Model Access - Enable TwelveLabs Marengo 2.7 in your AWS account

TwelveLabs on Amazon Bedrock - Official integration announcement and capabilities

S3 Vectors Private Beta - Contact your AWS account team for immediate access

Implementation Guides:

Amazon Bedrock User Guide: TwelveLabs Models - API parameters and usage examples

S3 Vectors with Media Lakes - Production patterns and best practices

Developer Resources:

TwelveLabs Documentation - Complete API reference and video understanding guides

AWS SDK for Python (Boto3) - Implementation examples and code samples

1 - Introduction

If you are a developer building with AWS technologies, you're no stranger to building scalable AI applications with managed services like Amazon Bedrock and S3. Now, with TwelveLabs' state-of-the-art models recently available on Bedrock, you can supercharge your workflows with advanced video understanding. The Marengo model stands out, generating rich 512-dimensional embeddings for multimodal data—including text, video, images, and audio—capturing nuanced context like actions, objects, and sounds without manual labeling.

Pair this with Amazon S3 Vectors, the first cloud storage with native vector support, and you get seamless, scalable storage and similarity search right in your S3 buckets. No more wrestling with external databases; just use familiar Boto3 clients for cosine-based queries at enterprise scale.

This integration, announced as part of TwelveLabs' launch on Bedrock, opens doors to powerful use cases like natural language video search, content recommendation, and retrieval-augmented generation (RAG) systems. In this tutorial, we'll walk you through a hands-on Python notebook to generate embeddings with Marengo, store them in S3 Vectors, and run semantic searches—empowering you to adopt these tools quickly and extend your AWS stack.

2 - Prerequisites and Setup

Before diving into the multimodal embedding workflow, let's ensure your environment is properly configured. If you are a developer familiar with AWS environments, you'll need the standard AWS toolkit along with access to the recently launched TwelveLabs models on Bedrock.

Prerequisites

AWS Requirements

You'll need an AWS account with access to these services:

AWS account with appropriate IAM permissions for Amazon Bedrock, S3, and S3 Vectors

Amazon Bedrock access with TwelveLabs Marengo model enabled in your region

An existing S3 bucket for temporary Bedrock outputs (required for async operations)

AWS CLI configured with your credentials (

aws configure)

Development Environment:

Python 3.8 or higher

Jupyter Notebook or your preferred Python environment

Installation and Dependencies

Start by installing the required Python packages. The integration relies on the latest Boto3 SDK along with NumPy for vector operations:

pip install boto3==1.40.7 numpy matplotlib Pillow -q

Next, import the necessary libraries in your Python environment:

import boto3 import json import numpy as np import uuid import time import os from typing import List, Dict from botocore.exceptions import ClientError

Configuration

Configure your AWS and model settings. Update these variables to match your environment:

# AWS Configuration AWS_REGION = "us-east-1" # Update to your region AWS_PROFILE = "default" # Update to your profile # S3 Vectors Configuration VECTOR_BUCKET_NAME = "marengo-vectors-" + str(uuid.uuid4())[:8] VECTOR_INDEX_NAME = "embeddings-index" VECTOR_DIMENSION = 1024 # Marengo embedding dimension # Marengo Model MODEL_ID = 'twelvelabs.marengo-embed-2-7-v1:0' # Temporary S3 bucket for Bedrock output (required by Bedrock API) TEMP_S3_BUCKET = "<YOUR_S3_BUCKET>" # TODO: Replace with your S3 bucket name print(f"Vector Bucket: {VECTOR_BUCKET_NAME}") print(f"Vector Index: {VECTOR_INDEX_NAME}") print(f"Model: {MODEL_ID}")

Important: Replace <YOUR_S3_BUCKET> with an existing S3 bucket name in your account. Bedrock's async invoke feature requires this temporary storage location for processing results.

Initialize AWS Clients

Create authenticated sessions for the AWS services you'll be using:

# Initialize AWS clients session = boto3.Session(profile_name=AWS_PROFILE) bedrock_client = session.client('bedrock-runtime', region_name=AWS_REGION) s3_client = session.client('s3') s3vectors_client = session.client('s3vectors', region_name=AWS_REGION) print("AWS clients initialized")

Create S3 Vector Bucket and Index

Now set up your vector storage infrastructure. S3 Vectors requires both a vector bucket and an index to organize and search your embeddings:

# Create Vector Bucket try: s3vectors_client.create_vector_bucket( vectorBucketName=VECTOR_BUCKET_NAME, encryptionConfiguration={'sseType': 'AES256'} ) print(f"Vector bucket '{VECTOR_BUCKET_NAME}' created") except ClientError as e: if e.response['Error']['Code'] == 'ConflictException': print(f"Vector bucket already exists") else: print(f"Error: {e}") # Create Vector Index try: s3vectors_client.create_index( vectorBucketName=VECTOR_BUCKET_NAME, indexName=VECTOR_INDEX_NAME, dataType='float32', dimension=VECTOR_DIMENSION, distanceMetric='cosine', metadataConfiguration={'nonFilterableMetadataKeys': ['source']} ) print(f"Index '{VECTOR_INDEX_NAME}' created") except ClientError as e: if e.response['Error']['Code'] == 'ConflictException': print(f"Index already exists") else: print(f"Error: {e}")

The setup creates a vector bucket with AES256 encryption and an index configured for Marengo's 1024-dimensional embeddings using cosine distance metrics—perfect for semantic similarity searches.

Troubleshooting Tips:

If S3 Vectors isn't available in your region, check the AWS documentation for supported regions.

Ensure your IAM user/role has permissions for

bedrock:InvokeModel,s3:GetObject,s3:PutObject, ands3vectors:*actions.The

ConflictExceptionhandling allows you to re-run the setup without errors if resources already exist.

With this foundation in place, you're ready to start generating embeddings with TwelveLabs Marengo and storing them in your scalable S3 vector infrastructure.

3 - Generating Embeddings with Marengo

TwelveLabs' Marengo model offers flexible input methods for generating multimodal embeddings. As you scale your applications, choosing the right approach becomes crucial for performance and cost optimization. This section demonstrates both Base64 encoding (ideal for small files and quick prototyping) and S3 URI-based processing (optimized for production workloads and large media files).

Uploading Media Files to S3

For production scenarios, storing media files in S3 provides better scalability, durability, and performance. Start by creating a helper function to upload files:

def upload_file_to_s3(local_path: str, bucket: str, key: str) -> str: """ Upload a local file to S3 Args: local_path: Path to local file bucket: S3 bucket name key: S3 object key (path in bucket) Returns: S3 URI of uploaded file """ try: s3_client.upload_file(local_path, bucket, key) s3_uri = f"s3://{bucket}/{key}" print(f"✅ Uploaded {os.path.basename(local_path)} to {s3_uri}") return s3_uri except ClientError as e: print(f"❌ Error uploading file: {e}") raise

Approach 1: Base64 Encoding

This approach reads files locally and encodes them to base64 before sending to Bedrock. It's ideal for small files, development, and scenarios where media is already in memory.

Text Embeddings:

def generate_text_embedding(text: str) -> List[float]: """ Generate embedding for text using Marengo on Bedrock """ # Create unique output path output_prefix = f'embeddings/{uuid.uuid4()}' # Start async embedding generation response = bedrock_client.start_async_invoke( modelId=MODEL_ID, modelInput={ "inputType": "text", "inputText": text }, outputDataConfig={ "s3OutputDataConfig": { "s3Uri": f's3://{TEMP_S3_BUCKET}/{output_prefix}' } } ) invocation_arn = response["invocationArn"] print(f"Generating text embedding for: '{text[:50]}...'") # Wait for completion status = None while status not in ["Completed", "Failed"]: response = bedrock_client.get_async_invoke(invocationArn=invocation_arn) status = response['status'] time.sleep(2) if status != "Completed": raise Exception(f"Embedding generation failed") # Retrieve embedding from S3 response = s3_client.list_objects_v2(Bucket=TEMP_S3_BUCKET, Prefix=output_prefix) for obj in response.get('Contents', []): if obj['Key'].endswith('output.json'): result = s3_client.get_object(Bucket=TEMP_S3_BUCKET, Key=obj['Key']) data = json.loads(result['Body'].read()) return data['data'][0]['embedding'] raise Exception("No embedding output found")

This function illustrates Bedrock's async workflow: submit the job, poll for completion, then retrieve results from S3. The pattern scales beautifully for production workloads where you might process thousands of items concurrently.

Video Embeddings:

def generate_video_embedding(video_path: str, start_sec: float = 0, length_sec: float = None) -> List[float]: """ Generate embedding for video using Marengo on Bedrock """ # Read video file and encode to base64 with open(video_path, 'rb') as video_file: video_base64 = base64.b64encode(video_file.read()).decode('utf-8') # Build model input with optional time segments model_input = { "inputType": "video", "mediaSource": {"base64String": video_base64}, "embeddingOption": ["visual-text", "audio"] # Capture both visual and audio } if start_sec is not None: model_input["startSec"] = start_sec if length_sec is not None: model_input["lengthSec"] = length_sec # ... rest follows the same async pattern

Audio and Image Functions:

The generate_audio_embedding() and generate_image_embedding() functions follow similar patterns, each tailored for their respective media types with base64 encoding and appropriate model input parameters.

Approach 2: S3 URI Processing

This production-optimized approach uploads files to S3 first, then references them by URI. It's better for large files, reduces memory usage, and enables better error handling and retry logic.

Video Embedding from S3:

def generate_video_embedding_from_s3(s3_uri: str, start_sec: float = 0, length_sec: float = None) -> List[float]: """Generate embedding for video from S3 using Marengo on Bedrock""" output_prefix = f'{OUTPUT_PREFIX}video/{uuid.uuid4()}' model_input = { "inputType": "video", "mediaSource": { "s3Location": { "uri": s3_uri, "bucketOwner": ACCOUNT_ID } }, "embeddingOption": ["visual-text", "audio"] } response = bedrock_client.start_async_invoke( modelId=MODEL_ID, modelInput=model_input, outputDataConfig={ "s3OutputDataConfig": { "s3Uri": f's3://{TEMP_S3_BUCKET}/{output_prefix}', "bucketOwner": ACCOUNT_ID } } ) print(f"🎬 Generating video embedding from S3: {s3_uri}") # Enhanced status monitoring with retry logic...

And this is a production usage example:

# Upload video to S3 video_s3_key = f"{MEDIA_PREFIX}videos/sample_video.mp4" video_s3_uri = upload_file_to_s3('video.mp4', TEMP_S3_BUCKET, video_s3_key) # Generate embedding from S3 video_embedding_s3 = generate_video_embedding_from_s3(video_s3_uri, start_sec=0, length_sec=10)

Verification: Identical Results from Both Approaches

A critical validation step ensures both approaches produce identical embeddings for the same content:

# Compare VIDEO embeddings print("🎬 VIDEO EMBEDDING VERIFICATION") print("-" * 50) # Generate using Base64 print("Generating via Base64...") video_base64_emb = generate_video_embedding('video.mp4', start_sec=0, length_sec=10) # Upload and generate using S3 URI print("Generating via S3 URI...") video_s3_key = f"{MEDIA_PREFIX}verification/video.mp4" video_s3_uri = upload_file_to_s3('video.mp4', TEMP_S3_BUCKET, video_s3_key) video_s3_emb = generate_video_embedding_from_s3(video_s3_uri, start_sec=0, length_sec=10) # Calculate similarity similarity = cosine_similarity(video_base64_emb, video_s3_emb) print(f"📊 Results:") print(f" Cosine similarity: {similarity:.6f}") print(f" Are they identical? {'✅ YES' if similarity > 0.9999 else '❌ NO'}")

The verification consistently shows perfect similarity scores across all media types, confirming that both approaches produce identical embeddings. This gives developers confidence to choose the appropriate method based on their specific use case requirements.

Visualizing Embedding Patterns

Understanding embedding structure helps validate model behavior and debug applications. Here's how to visualize the different modalities:

Text Embedding Visualization:

# Visualize text embeddings if text_embeddings: plt.figure(figsize=(12, 3)) for i, (text, emb, _) in enumerate(text_embeddings[:3]): plt.subplot(1, 3, i+1) plt.imshow([emb[:100]], aspect='auto', cmap='coolwarm') plt.title(f'Text {i+1}', fontsize=10) plt.xlabel('Dimensions (first 100)') plt.colorbar(orientation='horizontal', pad=0.1) plt.suptitle('Text Embeddings Visualization', fontsize=12) plt.tight_layout() plt.show()

The visualization reveals how text embeddings capture semantic patterns through varied activation intensities across dimensions. Notice how related concepts show similar patterns while distinct topics exhibit different activation signatures.

Video, Audio, and Image Visualizations:

Each modality produces distinct embedding patterns:

Video embeddings display complex patterns capturing both temporal visual information and audio features in the unified 1,024-dimensional space:

Audio embeddings exhibit characteristic frequency-domain patterns with different spectral representations:

Image embeddings demonstrate spatial feature activation patterns with rich color-coded intensity variations:

These visualizations help you understand how Marengo processes different data types and can inform feature engineering decisions for downstream applications.

Key Insights for Production Deployment

When to Use Base64: For optimal performance, choose base64 encoding when working with small files under 25MB, during development and testing phases, when processing data that's already in memory and needs real-time handling, or for simple workflows involving single files.

When to Use S3 URIs: S3 URIs are the preferred approach when processing large video files exceeding 25MB, implementing production batch processing systems, building multi-stage pipelines that require data durability, working with content that already resides in S3, or in scenarios where robust error handling and retry mechanisms are essential.

Performance Considerations: The S3 URI approach delivers several performance advantages, including significantly reduced local memory consumption, improved handling of timeout issues for large files, enhanced status monitoring capabilities with comprehensive retry logic, and greater cost efficiency when processing high volumes of media.

Both approaches generate identical 1,024-dimensional embeddings that exist in the same unified vector space, enabling seamless cross-modal search regardless of which method you choose for generation. This flexibility allows you to optimize your architecture based on file size, infrastructure constraints, and operational requirements while maintaining consistent semantic understanding across all media types.

4 - Storing Embeddings in S3 Vectors

With multimodal embeddings in hand, the next step is to persist them in a scalable, search-ready store. Amazon S3 Vectors brings native vector storage and similarity search to S3, so there’s no extra infrastructure to stand up or manage—just create a vector bucket, define an index, and batch insert vectors with metadata. This section mirrors the workflow and code from the updated notebook and README, adapted for production-minded developers.

Why S3 Vectors for Marengo embeddings

Native to S3: Store vectors alongside existing data and reuse your S3 controls, lifecycle, and security posture.

Built-in similarity search: Query with cosine distance directly via an AWS SDK client—no separate vector database to operate.

Schema-aligned with Marengo: Index dimension set to 1,024 to match Marengo-Embed-2.7 output, ensuring efficient storage and retrieval.

Prepare vector payloads with metadata

After generating embeddings, normalize them into a consistent structure: a unique key, the float32 vector payload, and metadata for human-readable context and filtering. The notebook handles both legacy tuples and the extended triplet that includes media type.

def store_embeddings(embeddings_data: List[tuple]) -> bool: """ Store embeddings in S3 Vectors with metadata """ vectors_to_insert = [] for i, item in enumerate(embeddings_data): # Support (text, embedding) and (text, embedding, media_type) if len(item) == 3: text, embedding, media_type = item else: text, embedding = item media_type = "text" vector_entry = { 'key': f'vector_{i:04d}', # deterministic, idempotent upserts 'data': { 'float32': [float(v) for v in embedding] # convert to float32-compatible list }, 'metadata': { 'text': text, # human-readable description 'media_type': media_type, # useful for UI badges and filtering 'id': i # numeric id for quick reference } } vectors_to_insert.append(vector_entry)

This shape aligns with the S3 Vectors put_vectors API and keeps metadata close to the embedding for streamlined search results and analytics.

Bulk insert into S3 Vectors

Use a single batch call to minimize round trips and make the operation repeatable. The notebook also prints a helpful summary by media type—handy for sanity checks and dashboards.

try: s3vectors_client.put_vectors( vectorBucketName=VECTOR_BUCKET_NAME, indexName=VECTOR_INDEX_NAME, vectors=vectors_to_insert ) print(f"Stored {len(vectors_to_insert)} vectors in S3 Vectors") # Optional: quick summary media_counts = {} for item in embeddings_data: mtype = item[2] if len(item) == 3 else "text" media_counts[mtype] = media_counts.get(mtype, 0) + 1 print("Media types stored:") for m, c in media_counts.items(): print(f" - {m}: {c}") return True except ClientError as e: print(f"Error storing vectors: {e}") return False

A typical run reports that it stored 6 vectors split across text, video, audio, and image, confirming end-to-end readiness for cross-modal search.

Index design and distance metric

When creating the index, you should configure several important parameters to optimize performance. First, set the dataType to float32 to properly store Marengo's vector values. Next, specify the dimension as 1,024 to match Marengo's embedding size exactly. For the distanceMetric, choose cosine as it's the standard approach for measuring semantic similarity between vectors. Finally, include at least one key in the metadataConfiguration (the example uses a non-filterable placeholder and attaches rich metadata at insert time).

These carefully selected defaults ensure compatibility with the Marengo model's output format and align perfectly with the downstream similarity search methods we'll use later in the tutorial.

Idempotency, updates, and versioning

Let’s go over some best practices:

Optimize vector management by using deterministic keys based on content URI hashes or stable UUIDs, which ensures safe and predictable re-ingestion processes when updating your vector database.

When re-running ingestion jobs with identical keys, vectors should be cleanly overwritten through upserts. For maintaining historical versions, simply append version suffixes to your keys and maintain active version references in a separate system like DynamoDB.

Since metadata is attached to individual vectors, you can evolve your schema incrementally by re-putting vectors with the same keys but updated metadata attributes, such as new labels or timestamps.

With vectors stored and indexed, the system is ready for low-latency semantic retrieval. Next, query the index with either a fresh query embedding or a precomputed vector to power cross-modal search experiences across text, video, audio, and image content—without leaving your S3 security and governance perimeter.

5 - Performing Similarity Searches

With vectors indexed in Amazon S3 Vectors, querying becomes a simple, low-latency API call. The same flow supports fresh natural-language queries and pre-computed embeddings from any modality, which is ideal for cross-modal retrieval patterns on AWS.

Search via text or a pre-computed embedding

The notebook exposes a single function that accepts either a text prompt (which it embeds on-the-fly with Marengo) or a ready-made embedding. It then issues a cosine-distance query against the S3 Vectors index and returns ranked matches with metadata for convenient display and logging.

def search_similar(query_text: str = None, query_embedding: List[float] = None, top_k: int = 3) -> List[Dict]: """ Search for similar vectors using either a text query or a pre-computed embedding """ # Generate embedding for query if text is provided if query_text and not query_embedding: print(f"\nSearching for: '{query_text}'") query_embedding = generate_text_embedding(query_text) elif query_embedding: print(f"\nSearching with provided embedding...") else: raise ValueError("Either query_text or query_embedding must be provided") # Query S3 Vectors try: response = s3vectors_client.query_vectors( vectorBucketName=VECTOR_BUCKET_NAME, indexName=VECTOR_INDEX_NAME, topK=top_k, queryVector={'float32': [float(v) for v in query_embedding]}, returnMetadata=True, returnDistance=True ) results = [] for vector in response.get('vectors', []): # Handle both old vectors (without media_type) and new vectors (with media_type) results.append({ 'text': vector['metadata'].get('text', 'No description'), 'media_type': vector['metadata'].get('media_type', 'text'), # Default to 'text' for old vectors 'similarity': 1.0 - vector.get('distance', 0), # Convert distance to similarity 'key': vector.get('key') }) return results except ClientError as e: print(f"Query failed: {e}") return []

Conceptually:

If

query_textis provided, generate a 1,024-dimension Marengo embedding using Bedrock async invoke and fetch the result from the temporary S3 location. Then search S3 Vectors with that embedding.If

query_embeddingis provided, skip embedding generation and send the vector directly to S3 Vectors for retrieval—useful for “media-to-all” queries (video→all, image→all, audio→all).

These are the implementation highlights:

topKcontrols how many results to return from the index.returnMetadataandreturnDistanceallow reconstructing readable hits and converting distance to similarity withsimilarity = 1.0 - distancefor intuitive sorting.The function gracefully handles older vectors that may lack

media_typemetadata by defaulting to "text".

# Example queries queries = [ "Someone enjoying the ocean view", "People talking", "Cooking activities" ] for query in queries: results = search_similar(query, top_k=2) print(f"\nResults for: '{query}'") for i, result in enumerate(results, 1): print(f" {i}. Similarity: {result['similarity']:.3f} | Text: {result['text']}")

The notebook demonstrates impressive semantic understanding. When searching for "Someone enjoying the ocean view," it correctly identifies "A person walking on a beach at sunset" as the top match with 0.736 similarity, despite using completely different words. Similarly, searching for "Cooking activities" leads to a top match of “A chef preparing food in a kitchen ” with 0.765 similarity.

This goes beyond keyword matching—Marengo's embeddings capture contextual meaning, enabling applications like content recommendation systems, semantic search engines, and RAG implementations that understand user intent rather than just literal text matches.

Visualize search results

To help validate ranking quality and explain results to stakeholders, the notebook renders horizontal bar charts with similarity scores for each hit. Labels encode the media type (text, video, audio, image), and colors provide quick visual grouping to scan relevance patterns at a glance.

if all_results: fig, axes = plt.subplots(len(all_results), 1, figsize=(10, len(all_results)*2)) if len(all_results) == 1: axes = [axes] for idx, result_set in enumerate(all_results): query = result_set['query'] results = result_set['results'] if results: similarities = [r['similarity'] for r in results] labels = [f"{r['media_type'][:3]}" for r in results] colors = ['#4CAF50' if r['media_type'] == 'text' else '#2196F3' if r['media_type'] == 'video' else '#FF9800' if r['media_type'] == 'audio' else '#9C27B0' for r in results] axes[idx].barh(range(len(similarities)), similarities, color=colors) axes[idx].set_yticks(range(len(similarities))) axes[idx].set_yticklabels(labels) axes[idx].set_xlabel('Similarity Score') axes[idx].set_title(f'Query: "{query[:40]}..."') axes[idx].set_xlim(0, 1) # Add value labels for i, v in enumerate(similarities): axes[idx].text(v, i, f' {v:.3f}', va='center') plt.suptitle('Cross-Modal Search Results', fontsize=14, fontweight='bold') plt.tight_layout() plt.savefig('cross_modal_search_results.png', dpi=150, bbox_inches='tight') plt.show() print("\n💾 Search results saved to JSON files")

This is a typical workflow in the notebook:

Run several text queries.

Persist each query’s results to JSON for reproducibility and debugging.

Plot one row of bars per query showing the top matches and their similarity scores.

The example visualization clearly communicates which modalities dominate for a given query and how close the top candidates are, making it easy to tune thresholds or adjust pre/post-processing steps for production.

Cross-modal search examples

Because Marengo’s embeddings share a unified vector space across modalities, it’s straightforward to retrieve relevant content across text, video, audio, and image from a single query embedding. The notebook demonstrates five representative text queries and shows how results vary by modality:

Someone enjoying the ocean view: Top matches are semantically aligned text snippets describing outdoor/beach scenarios with similarities clustered around 0.64–0.74, indicating strong textual relevance for the scene.

People talking: The highest-scoring result is an audio clip, reflecting speech-like acoustic features; lower text/image hits follow, showing the model’s ability to bridge speech and textual descriptions.

Music and sounds: Audio leads the results, as expected, with lower or negative similarity for image/video in this small sample—useful signal for modality-specific routing or UI cues.

Food preparation in kitchen: Text hits dominate with a top similarity near 0.90, capturing strong semantic alignment around cooking activities.

Outdoor scenic view: Text candidates again score well in the 0.60–0.68 range, consistent with the descriptive nature of the prompt.

The accompanying chart condenses these outcomes into side-by-side horizontal bars per query, where:

Green bars denote text hits,

Blue bars denote video,

Orange bars denote audio,

Purple bars denote image.

This makes it easy to reason about which modalities are most responsive for a given intent and to design UI/UX that surfaces the best candidates first (e.g., prioritize audio for “sounds,” video for “actions,” and text for narrative prompts).

Media-to-all retrieval

Beyond text-to-all, the notebook also demonstrates:

Video→All: Use a video embedding as the query to retrieve similar video segments, related images, and semantically close text snippets. This pattern is excellent for content deduplication and near-duplicate detection across formats.

Image→All: Retrieve semantically related video frames or textual descriptions from a single image—useful for catalog enrichment and content tagging workflows.

Audio→All: Find text and video that best describe or align with a sound clip, enabling audio-driven discovery in large multimedia libraries.

With these search patterns and visual diagnostics in place, the stack is ready to power semantic discovery and cross-modal experiences—entirely on AWS—by pairing Bedrock-hosted Marengo embeddings with S3 Vectors’ native vector search.

6 - Direct Vector Comparison and Use Cases

When tuning relevance or debugging retrieval, it’s useful to bypass the vector index and inspect embedding relationships directly. This section shows how to compute cosine similarity between any two embeddings, plot pairwise comparisons, and render a compact similarity matrix. The snippets mirror the notebook’s patterns and are production-friendly for quick diagnostics in AWS environments.

Calculate cosine similarity between two vectors

Cosine similarity measures the angle between two vectors; values closer to 1 indicate higher semantic alignment. It’s the same metric used by the S3 Vectors index configuration, so offline checks here match online search behavior.

import numpy as np def cosine_similarity(vec1, vec2) -> float: """ Calculate cosine similarity between two vectors. """ v1 = np.array(vec1, dtype=np.float32) v2 = np.array(vec2, dtype=np.float32) dot = np.dot(v1, v2) n1 = np.linalg.norm(v1) n2 = np.linalg.norm(v2) return float(dot / (n1 * n2))

Compare embeddings directly with visualization

The following example embeds three text prompts, computes pairwise similarities, prints them, and then visualizes the scores. This is handy for smoke tests when iterating on prompts or preprocessing logic.

import matplotlib.pyplot as plt # Example texts text1 = "A beautiful sunset over the ocean" text2 = "A sunrise at the beach" text3 = "People working in an office" print("Generating embeddings for comparison...") emb1 = generate_text_embedding(text1) # from previous section emb2 = generate_text_embedding(text2) emb3 = generate_text_embedding(text3) sim_12 = cosine_similarity(emb1, emb2) sim_13 = cosine_similarity(emb1, emb3) sim_23 = cosine_similarity(emb2, emb3) print("\nDirect Similarity Comparison:") print(f"'{text1}' vs '{text2}': {sim_12:.3f}") print(f"'{text1}' vs '{text3}': {sim_13:.3f}") print(f"'{text2}' vs '{text3}': {sim_23:.3f}")

Create similarity matrix visualization

A heatmap provides a compact view of relationships. This mirrors the notebook’s matrix rendering, which you can save as an artifact for PRs, dashboards, or test runs.

labels = ['Sunset/Ocean', 'Sunrise/Beach', 'Office Work']

# Assemble a 3x3 similarity matrix
similarity_matrix = np.array([
    [1.0, sim_12, sim_13],
    [sim_12, 1.0, sim_23],
    [sim_13, sim_23, 1.0]
], dtype=np.float32)

plt.figure(figsize=(8, 6))
im = plt.imshow(similarity_matrix, cmap='RdYlGn', vmin=0, vmax=1, aspect='auto')
plt.colorbar(im, label='Cosine Similarity')

# Annotations
for i in range(similarity_matrix.shape[0]):
    for j in range(similarity_matrix.shape[1]):
        plt.text(j, i, f'{similarity_matrix[i, j]:.3f}',
                 ha="center", va="center", color="black", fontweight='bold')

plt.xticks(range(3), labels, rotation=45, ha='right')
plt.yticks(range(3), labels)
plt.title('Embedding Similarity Matrix', fontsize=14, fontweight='bold')
plt.tight_layout()
plt.savefig('similarity_matrix.png', dpi=150, bbox_inches='tight')
plt.show()
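The 3x3 matrix above is assembled by hand from three pairwise scores. For more than a few embeddings, one possible generalization is to normalize every row and take a single matrix product. The sketch below assumes the embeddings fit in memory as an N x D array; the function name pairwise_cosine_matrix is hypothetical, and the toy 2-dimensional inputs stand in for real Marengo vectors.

```python
import numpy as np

def pairwise_cosine_matrix(embeddings) -> np.ndarray:
    """Return an N x N matrix of cosine similarities for N embeddings of equal length."""
    mat = np.asarray(embeddings, dtype=np.float32)
    if mat.ndim != 2:
        raise ValueError("expected an array-like of shape (N, D)")
    norms = np.linalg.norm(mat, axis=1, keepdims=True)
    if np.any(norms == 0):
        raise ValueError("cosine similarity is undefined for a zero vector")
    unit = mat / norms      # scale each row to unit length
    return unit @ unit.T    # dot products of unit vectors are cosine similarities

# Toy 2-dimensional vectors (illustrative only, not Marengo output)
toy_embeddings = [[1.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
matrix = pairwise_cosine_matrix(toy_embeddings)
print(np.round(matrix, 3))
```

The result is symmetric with ones on the diagonal, so it can be passed to the same plt.imshow call in place of the hand-built similarity_matrix.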

The resulting heatmap should show that “sunset over the ocean” and “sunrise at the beach” are strongly aligned, while both are less similar to “office work.” That matches intuition and serves as a quick sanity check on the embeddings.

Concrete Use Cases

Here is a list of possible use cases that you can build on the insights shared in this tutorial:

Multimodal search: Use text prompts to retrieve precise video moments without scrubbing footage. For example, embed “driver approaches crosswalk” and find matching clips across a fleet’s dashcam archive.

Content recommendation: Given an image or short video embedding, retrieve similar assets and their captions to power lookalike recommendations across catalogs.

Media understanding: Compare embeddings across audio, image, and video to analyze relationships, for instance checking whether a soundtrack matches the visual mood of a scene. Cosine scores and matrices help define routing rules (e.g., “if the audio similarity exceeds 0.7, show sound-alike results first”).

RAG applications: Store embeddings in S3 Vectors, then fetch top-k multimedia context for Bedrock-powered text generation (with our Pegasus model). Use direct comparisons to validate that the retrieved snippets are semantically close before passing them to a text generation step.
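The validation step mentioned for RAG, and the threshold-style routing rule in the media understanding example, can both be sketched as a small filter over retrieved candidates. Everything here is illustrative: the function name filter_by_similarity, the candidate dictionary layout ("key" and "embedding"), and the 0.7 default are assumptions, and a suitable threshold would need to be tuned on real data.

```python
import numpy as np
from typing import Dict, List

def filter_by_similarity(query_embedding, candidates: List[Dict],
                         min_similarity: float = 0.7) -> List[Dict]:
    """Keep candidates whose cosine similarity to the query meets the threshold.

    Each candidate is assumed to be a dict with "key" and "embedding" entries.
    Results are sorted from most to least similar.
    """
    q = np.asarray(query_embedding, dtype=np.float32)
    q_norm = np.linalg.norm(q)
    kept = []
    for item in candidates:
        v = np.asarray(item["embedding"], dtype=np.float32)
        denom = q_norm * np.linalg.norm(v)
        if denom == 0:
            continue  # similarity is undefined for zero vectors, so skip them
        score = float(np.dot(q, v) / denom)
        if score >= min_similarity:
            kept.append({"key": item["key"], "similarity": score})
    return sorted(kept, key=lambda r: r["similarity"], reverse=True)

# Toy 2-dimensional data (illustrative only, not Marengo output)
query = [1.0, 0.0]
candidates = [
    {"key": "clip-a", "embedding": [0.9, 0.1]},
    {"key": "clip-b", "embedding": [0.0, 1.0]},
    {"key": "clip-c", "embedding": [1.0, 0.0]},
]
results = filter_by_similarity(query, candidates)
print([r["key"] for r in results])
```

Only the candidates that pass the threshold would be forwarded as context to the text generation step.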

With these diagnostics and visualizations, it’s straightforward to tune prompts, verify cross-modal behavior, and build trustworthy retrieval pipelines that pair TwelveLabs Marengo on Bedrock with Amazon S3 Vectors.

7 - Conclusion: Cross-Modal Search Summary Dashboard

The Cross-Modal Search Dashboard below provides a unified, high-level view of every key step in the multimodal embedding and search pipeline that we went through in this tutorial. With side-by-side panels, it highlights how embeddings from text, video, audio, and image are seamlessly processed, distributed, and visualized using Amazon Bedrock and Amazon S3 Vectors. The media type distribution confirms balanced coverage of different modalities, while the embedding structure plot demonstrates the consistent dimensionality (1,024D) delivered by Marengo across all content types.

Performance metrics speak to both search quality and operational efficiency:

The Cross-Modal Search Effectiveness chart shows that whether querying from text, video, audio, or image, similarities remain robust—particularly for video-driven retrievals.

The Embedding Similarities heatmap offers instant insight into the semantic alignment between sample embeddings, and the Media Files Processed summary confirms end-to-end coverage for each modality.

Finally, the pipeline timeline panel quantifies each phase, highlighting that generating embeddings is the most time-consuming step, while S3 Vectors storage and querying are fast and scalable.

We hope this tutorial helps you monitor, tune, and explain the performance of end-to-end cross-modal search using the TwelveLabs Marengo multimodal embedding model, making it easier to validate coverage, compare semantic relationships, and operationalize content understanding at scale, all within familiar AWS-managed services.

Check out the full code in this repository: https://github.com/twelvelabs-io/tl-marengo-bedrock-s3/

Essential Resources

Get Started Immediately:

Request Amazon Bedrock Model Access - Enable TwelveLabs Marengo 2.7 in your AWS account

TwelveLabs on Amazon Bedrock - Official integration announcement and capabilities

S3 Vectors Private Beta - Contact your AWS account team for immediate access

Implementation Guides:

Amazon Bedrock User Guide: TwelveLabs Models - API parameters and usage examples

S3 Vectors with Media Lakes - Production patterns and best practices

Developer Resources:

TwelveLabs Documentation - Complete API reference and video understanding guides

AWS SDK for Python (Boto3) - Implementation examples and code samples

2 - Prerequisites and Setup

Before diving into the multimodal embedding workflow, let's ensure your environment is properly configured. If you are a developer familiar with AWS environments, you'll need the standard AWS toolkit along with access to the recently launched TwelveLabs models on Bedrock.

Prerequisites

AWS Requirements

You'll need an AWS account with access to these services:

AWS account with appropriate IAM permissions for Amazon Bedrock, S3, and S3 Vectors

Amazon Bedrock access with TwelveLabs Marengo model enabled in your region

An existing S3 bucket for temporary Bedrock outputs (required for async operations)

AWS CLI configured with your credentials (aws configure)

Development Environment:

Python 3.8 or higher

Jupyter Notebook or your preferred Python environment

Installation and Dependencies

Start by installing the required Python packages. The integration relies on a recent Boto3 release that includes the S3 Vectors client (pinned to 1.40.7 here), along with NumPy for vector operations:

pip install boto3==1.40.7 numpy matplotlib Pillow -q

Next, import the necessary libraries in your Python environment:

import boto3
import json
import numpy as np
import uuid
import time
import os
from typing import List, Dict
from botocore.exceptions import ClientError

Configuration

Configure your AWS and model settings. Update these variables to match your environment:

# AWS Configuration
AWS_REGION = "us-east-1"  # Update to your region
AWS_PROFILE = "default"  # Update to your profile

# S3 Vectors Configuration
VECTOR_BUCKET_NAME = "marengo-vectors-" + str(uuid.uuid4())[:8]
VECTOR_INDEX_NAME = "embeddings-index"
VECTOR_DIMENSION = 1024  # Marengo embedding dimension

# Marengo Model
MODEL_ID = 'twelvelabs.marengo-embed-2-7-v1:0'

# Temporary S3 bucket for Bedrock output (required by Bedrock API)
TEMP_S3_BUCKET = "<YOUR_S3_BUCKET>"  # TODO: Replace with your S3 bucket name

print(f"Vector Bucket: {VECTOR_BUCKET_NAME}")
print(f"Vector Index: {VECTOR_INDEX_NAME}")
print(f"Model: {MODEL_ID}")

Important: Replace <YOUR_S3_BUCKET> with an existing S3 bucket name in your account. Bedrock's async invoke feature requires this temporary storage location for processing results.
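Because a forgotten placeholder only surfaces later as a failed Bedrock call, a small guard can catch it at configuration time. This is a minimal sketch: the helper name require_real_bucket_name and the example bucket name are hypothetical, and the check only detects empty or angle-bracket placeholder values, not whether the bucket actually exists.

```python
def require_real_bucket_name(name: str) -> str:
    """Return the trimmed bucket name, or raise if it is empty or still a <PLACEHOLDER>."""
    cleaned = name.strip()
    if not cleaned or (cleaned.startswith("<") and cleaned.endswith(">")):
        raise ValueError(
            f"Bucket name {name!r} looks like a placeholder; "
            "set it to an existing S3 bucket"
        )
    return cleaned

# Hypothetical bucket name, used only for illustration
checked = require_real_bucket_name("my-bedrock-temp-output")
print(f"Using temporary bucket: {checked}")
```

In the notebook, the same check could be applied to TEMP_S3_BUCKET right after the configuration cell.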

Initialize AWS Clients

Create authenticated sessions for the AWS services you'll be using:

# Initialize AWS clients
session = boto3.Session(profile_name=AWS_PROFILE)
bedrock_client = session.client('bedrock-runtime', region_name=AWS_REGION)
s3_client = session.client('s3')
s3vectors_client = session.client('s3vectors', region_name=AWS_REGION)

print("AWS clients initialized")

Create S3 Vector Bucket and Index

Now set up your vector storage infrastructure. S3 Vectors requires both a vector bucket and an index to organize and search your embeddings:

# Create Vector Bucket
try:
    s3vectors_client.create_vector_bucket(
        vectorBucketName=VECTOR_BUCKET_NAME,
        encryptionConfiguration={'sseType': 'AES256'}
    )
    print(f"Vector bucket '{VECTOR_BUCKET_NAME}' created")
except ClientError as e:
    if e.response['Error']['Code'] == 'ConflictException':
        print(f"Vector bucket already exists")
    else:
        print(f"Error: {e}")

# Create Vector Index
try:
    s3vectors_client.create_index(
        vectorBucketName=VECTOR_BUCKET_NAME,
        indexName=VECTOR_INDEX_NAME,
        dataType='float32',
        dimension=VECTOR_DIMENSION,
        distanceMetric='cosine',
        metadataConfiguration={'nonFilterableMetadataKeys': ['source']}
    )
    print(f"Index '{VECTOR_INDEX_NAME}' created")
except ClientError as e:
    if e.response['Error']['Code'] == 'ConflictException':
        print(f"Index already exists")
    else:
        print(f"Error: {e}")

The setup creates a vector bucket with AES256 encryption and an index configured for Marengo's 1024-dimensional embeddings using cosine distance metrics—perfect for semantic similarity searches.
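Since the index is created with a fixed dimension, it can help to check each embedding locally before attempting to store it. The sketch below is one way to do that under the tutorial's 1024-dimension setting; the helper name validate_embedding is hypothetical, and the finite-value check is an extra precaution rather than a documented service requirement.

```python
import math
from typing import List, Sequence

EXPECTED_DIMENSION = 1024  # mirrors VECTOR_DIMENSION from the configuration step

def validate_embedding(embedding: Sequence[float],
                       expected_dim: int = EXPECTED_DIMENSION) -> List[float]:
    """Return the embedding as a list of floats, or raise if it cannot match the index."""
    if len(embedding) != expected_dim:
        raise ValueError(
            f"expected {expected_dim} dimensions, got {len(embedding)}"
        )
    values = [float(x) for x in embedding]
    if not all(math.isfinite(x) for x in values):
        raise ValueError("embedding contains NaN or infinite values")
    return values

# Placeholder vector of the right length (illustrative only, not Marengo output)
checked_vector = validate_embedding([0.0] * EXPECTED_DIMENSION)
print(f"Validated vector with {len(checked_vector)} dimensions")
```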

Troubleshooting Tips:

If S3 Vectors isn't available in your region, check the AWS documentation for supported regions.

Ensure your IAM user/role has permissions for bedrock:InvokeModel, s3:GetObject, s3:PutObject, and s3vectors:* actions.

The ConflictException handling allows you to re-run the setup without errors if resources already exist.

With this foundation in place, you're ready to start generating embeddings with TwelveLabs Marengo and storing them in your scalable S3 vector infrastructure.

3 - Generating Embeddings with Marengo

TwelveLabs' Marengo model offers flexible input methods for generating multimodal embeddings. As you scale your applications, choosing the right approach becomes crucial for performance and cost optimization. This section demonstrates both Base64 encoding (ideal for small files and quick prototyping) and S3 URI-based processing (optimized for production workloads and large media files).

Uploading Media Files to S3

For production scenarios, storing media files in S3 provides better scalability, durability, and performance. Start by creating a helper function to upload files:

def upload_file_to_s3(local_path: str, bucket: str, key: str) -> str:
    """
    Upload a local file to S3

    Args:
        local_path: Path to local file
        bucket: S3 bucket name
        key: S3 object key (path in bucket)

    Returns:
        S3 URI of uploaded file
    """
    try:
        s3_client.upload_file(local_path, bucket, key)
        s3_uri = f"s3://{bucket}/{key}"
        print(f"✅ Uploaded {os.path.basename(local_path)} to {s3_uri}")
        return s3_uri
    except ClientError as e:
        print(f"❌ Error uploading file: {e}")
        raise

Approach 1: Base64 Encoding

This approach reads files locally and encodes them to base64 before sending to Bedrock. It's ideal for small files, development, and scenarios where media is already in memory.

Text Embeddings:

def generate_text_embedding(text: str) -> List[float]:
    """
    Generate embedding for text using Marengo on Bedrock
    """
    # Create unique output path
    output_prefix = f'embeddings/{uuid.uuid4()}'

    # Start async embedding generation
    response = bedrock_client.start_async_invoke(
        modelId=MODEL_ID,
        modelInput={
            "inputType": "text",
            "inputText": text
        },
        outputDataConfig={
            "s3OutputDataConfig": {
                "s3Uri": f's3://{TEMP_S3_BUCKET}/{output_prefix}'
            }
        }
    )

    invocation_arn = response["invocationArn"]
    print(f"Generating text embedding for: '{text[:50]}...'")

    # Wait for completion
    status = None
    while status not in ["Completed", "Failed"]:
        response = bedrock_client.get_async_invoke(invocationArn=invocation_arn)
        status = response['status']
        time.sleep(2)

    if status != "Completed":
        raise Exception(f"Embedding generation failed")

    # Retrieve embedding from S3
    response = s3_client.list_objects_v2(Bucket=TEMP_S3_BUCKET, Prefix=output_prefix)
    for obj in response.get('Contents', []):
        if obj['Key'].endswith('output.json'):
            result = s3_client.get_object(Bucket=TEMP_S3_BUCKET, Key=obj['Key'])
            data = json.loads(result['Body'].read())
            return data['data'][0]['embedding']

    raise Exception("No embedding output found")

This function illustrates Bedrock's async workflow: submit the job, poll for completion, then retrieve results from S3. As written, it blocks until its single job finishes; for larger workloads, the same pattern can be extended to submit many jobs first and then poll them together.
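The polling loop in generate_text_embedding has no upper bound, so a job that never reaches a terminal state would hang the notebook. One possible refinement is a reusable wait helper with a timeout, sketched below. The name wait_for_terminal_status and its parameters are hypothetical, and the status source is simulated with an iterator so the example runs without AWS access.

```python
import time
from typing import Callable, Iterable

def wait_for_terminal_status(get_status: Callable[[], str],
                             poll_seconds: float = 2.0,
                             timeout_seconds: float = 600.0,
                             terminal: Iterable[str] = ("Completed", "Failed")) -> str:
    """Call get_status until it returns a terminal status or the timeout elapses."""
    terminal_set = set(terminal)
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status()
        if status in terminal_set:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job still '{status}' after {timeout_seconds} seconds")
        time.sleep(poll_seconds)

# Simulated status sequence standing in for repeated get_async_invoke calls
simulated = iter(["InProgress", "InProgress", "Completed"])
final_status = wait_for_terminal_status(lambda: next(simulated), poll_seconds=0.01)
print(f"Final status: {final_status}")
```

In the tutorial's function, get_status could be a lambda that returns bedrock_client.get_async_invoke(invocationArn=invocation_arn)['status'], which also avoids the extra sleep after the job has already completed.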

Video Embeddings: