Announcing TwelveLabs Claude Code Plugin: Work With Videos Without Leaving Your Terminal

James Le, Will Brennan, Alex Owen

The TwelveLabs Claude Code Plugin brings video intelligence into your Claude Code workflow, so you can index, search, analyze, and embed videos without leaving the same CLI conversation you’re already using to write code.

March 19, 2026

7 min read

Video is everywhere in software work now: product demos recorded on Zoom, internal design reviews, customer calls, QA reproduction clips, onboarding walkthroughs, and hours of tutorials. But when video becomes part of your engineering “surface area,” it collides with a painful reality: most developer tools still treat video like a blob. You can’t grep it. You can’t diff it. You can’t ask it what happened.

Last year we shipped the TwelveLabs MCP Server to change that, exposing video indexing, semantic search, analysis, and embeddings as standard tools that any Model Context Protocol (MCP) client can call. In the MCP launch post, we described it as a bridge between our video understanding platform and AI assistants, built on the open MCP standard, so agents can “index videos, find relevant scenes, generate summaries, and more” without custom glue code.

Today we’re taking the next step for developers who live in the terminal: the TwelveLabs Claude Code Plugin.

What it is

The TwelveLabs Claude Code Plugin brings video intelligence into your Claude Code workflow, so you can index, search, analyze, and embed videos without leaving the same CLI conversation you’re already using to write code.

It installs through the Claude Code plugin marketplace and uses your TWELVELABS_API_KEY to authenticate. After install, it wires up the MCP server plus the UX layer developers actually want: slash commands, natural-language “skills,” and hooks that track async work and state automatically.

If you already like the idea of MCP as a universal adapter, think of this plugin as the “batteries included” Claude Code experience: the same MCP backbone, optimized for day-to-day terminal workflows.

What you can actually do

The plugin is intentionally small. It gives you a few verbs you can combine like building blocks: index, search, analyze, embed, and (optionally) entity search. The result is a workflow that feels like “grep, then explain,” but for video.

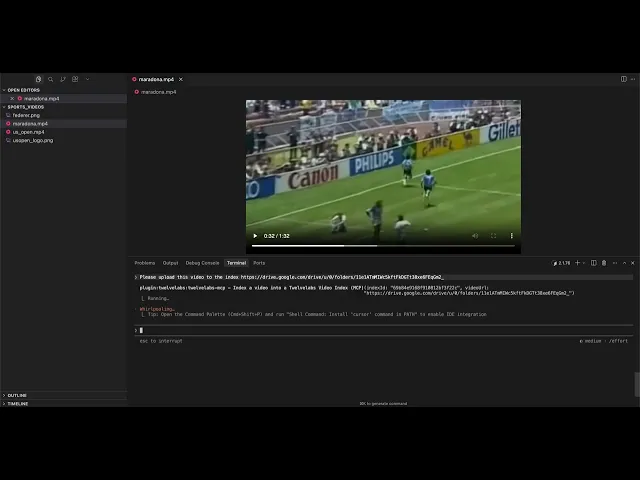

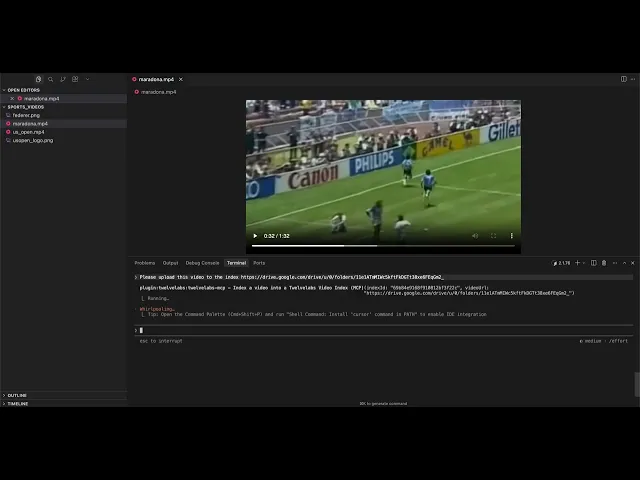

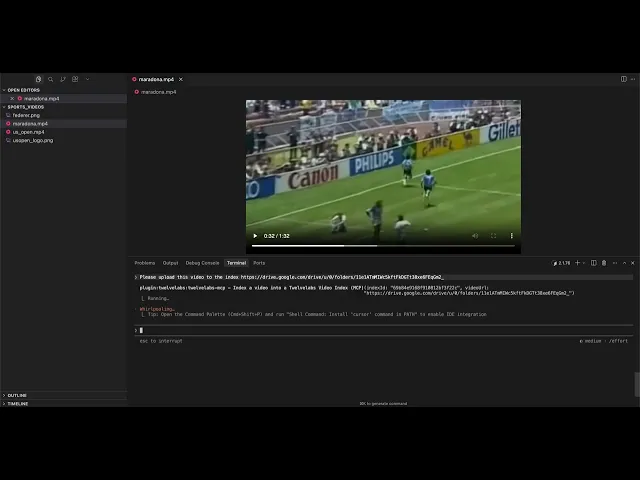

Index. Indexing accepts local paths, remote URLs, or Google Drive links. It’s also asynchronous: you can kick it off, go back to coding, and check status when you care.

Once indexing finishes, the video becomes searchable and analyzable from the same session.
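Because indexing is asynchronous, “check status when you care” comes down to a polling loop. Here’s a minimal sketch of that pattern. It assumes a hypothetical get_status callable and hypothetical terminal states ("ready", "failed"). Neither comes from the TwelveLabs API, which may name its task states differently.

```python
import time
from collections.abc import Callable

# Hypothetical terminal states; the real API may use different names.
TERMINAL_STATES = {"ready", "failed"}


def wait_for_index(
    get_status: Callable[[str], str],
    task_id: str,
    poll_seconds: float = 5.0,
    timeout_seconds: float = 600.0,
) -> str:
    """Poll get_status(task_id) until it reports a terminal state or the timeout expires."""
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status(task_id)
        if status in TERMINAL_STATES:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"task {task_id} still '{status}' after {timeout_seconds}s")
        time.sleep(poll_seconds)


# Usage with a fake status source that moves pending -> indexing -> ready.
statuses = iter(["pending", "indexing", "ready"])
print(wait_for_index(lambda _id: next(statuses), "task-123", poll_seconds=0.01))  # ready
```

In the plugin, the hooks and local state handle this bookkeeping for you. The sketch only shows the shape of the loop.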

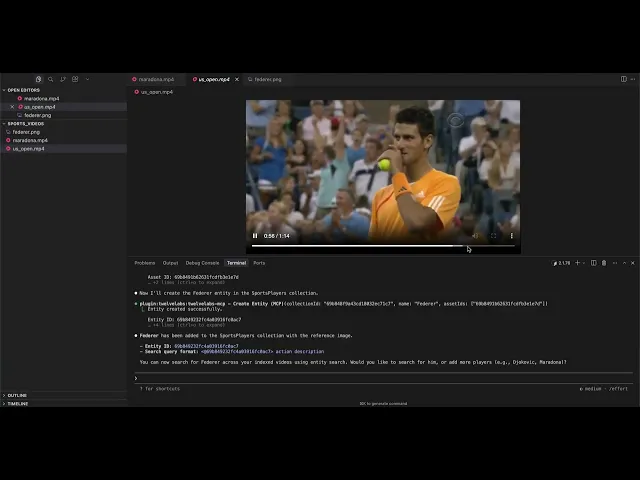

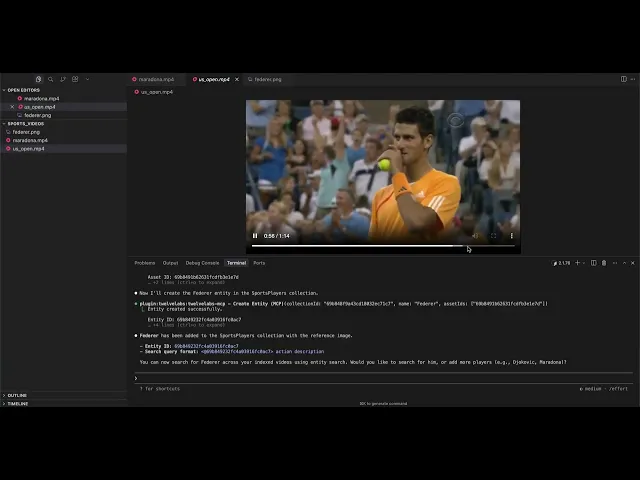

Search. Describe what you’re looking for (visual elements, actions, sounds, on-screen text) and Claude Code returns relevant moments. The plugin also supports image search, composed (image + text) search, and entity search.

Entity search is how you make “find every time this person appears” practical. You create an entity collection, upload reference images, create an entity, then search by entity ID (or @entity_id).
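The order of those steps matters. An entity is built from reference images, and it has to exist before you can search for it. The sketch below models that dependency in memory. The class and method names are hypothetical and were chosen for illustration, not taken from the plugin or the TwelveLabs API. The @-prefixed search key simply mirrors the @entity_id form mentioned above.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    entity_id: str
    name: str
    reference_images: list[str]


@dataclass
class EntityCollection:
    """Hypothetical in-memory model: collection -> reference images -> entities."""

    name: str
    uploaded_images: list[str] = field(default_factory=list)
    entities: dict[str, Entity] = field(default_factory=dict)

    def upload_reference_image(self, path: str) -> None:
        self.uploaded_images.append(path)

    def create_entity(self, entity_id: str, name: str, images: list[str]) -> Entity:
        # An entity may only reference images already uploaded to this collection.
        missing = [img for img in images if img not in self.uploaded_images]
        if not images or missing:
            raise ValueError(f"upload reference images first (missing: {missing or 'none given'})")
        entity = Entity(entity_id, name, list(images))
        self.entities[entity_id] = entity
        return entity

    def search_key(self, entity_id: str) -> str:
        # Searching only makes sense once the entity exists.
        if entity_id not in self.entities:
            raise KeyError(f"unknown entity: {entity_id}")
        return f"@{entity_id}"


# Usage: the three setup steps, then the key you would search with.
team = EntityCollection("design-review-speakers")
team.upload_reference_image("alice_front.jpg")
team.create_entity("alice", "Alice", ["alice_front.jpg"])
print(team.search_key("alice"))  # @alice
```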

Under the hood, this kind of search is what TwelveLabs built Marengo for: Marengo focuses on “any-to-any” retrieval, powering cross-modal search across text, image, audio, and video.
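Cross-modal search of this kind typically places queries and video segments in a shared embedding space, then ranks segments by similarity. The sketch below shows that generic idea using cosine similarity over toy three-dimensional vectors. It is not Marengo’s implementation, and the clip boundaries and vectors are made up.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_moments(
    query_vec: list[float],
    clips: list[tuple[float, float, list[float]]],
    k: int = 3,
) -> list[tuple[float, float, float]]:
    """Rank (start_sec, end_sec, embedding) clips against a query embedding.

    Returns up to k (score, start_sec, end_sec) tuples, best match first.
    """
    scored = [(cosine(query_vec, emb), start, end) for start, end, emb in clips]
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


# Usage with toy vectors: the query points along the second axis.
clips = [
    (0.0, 6.0, [1.0, 0.0, 0.0]),
    (6.0, 12.0, [0.6, 0.8, 0.0]),
    (12.0, 18.0, [0.0, 0.0, 1.0]),
]
best = top_moments([0.0, 1.0, 0.0], clips, k=1)
print(best)  # the 6.0-12.0 s clip ranks first
```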

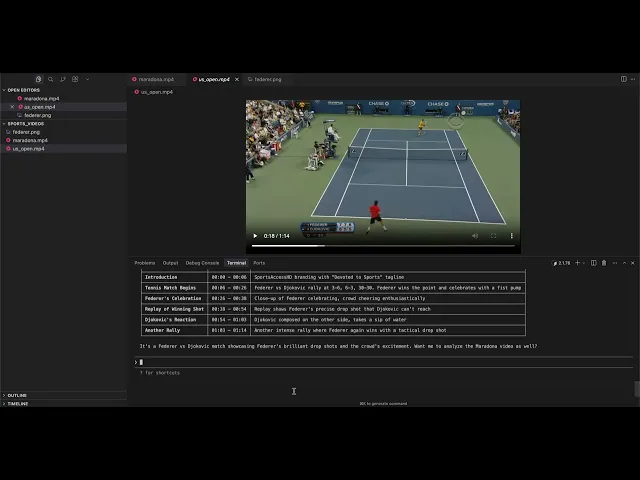

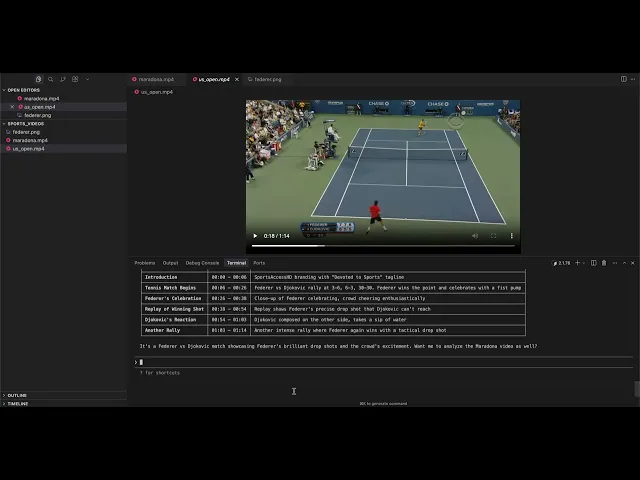

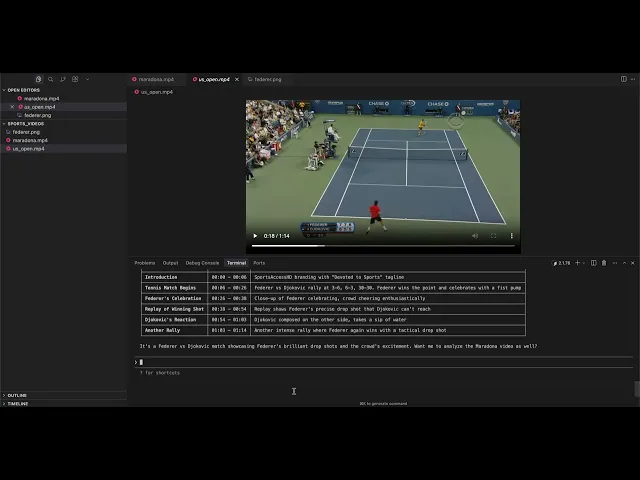

Analyze. Search is about finding moments; analysis is about extracting meaning. The plugin supports summaries, topics, Q&A, action items, or anything else you can describe in a prompt.

That “video-to-text” generation is the core strength of Pegasus: it integrates visual, audio, and speech information for text generation and reasoning over video.

Embed. If you’re building your own retrieval layer, clustering pipeline, or recommendation system, you can generate video embeddings directly.
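As one example of what you might build on top of raw embeddings, here’s a sketch that groups near-duplicate videos, such as several takes of the same demo. It assumes you already have one embedding vector per video from whatever source produced them. The video IDs, the vectors, and the 0.9 similarity threshold are all illustrative.

```python
import math


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length; zero vectors are returned unchanged."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else v


def group_similar(embeddings: dict[str, list[float]], threshold: float = 0.9) -> list[list[str]]:
    """Greedy single-pass grouping by cosine similarity.

    Each video joins the first group whose representative (its first member)
    has cosine similarity >= threshold; otherwise it starts a new group.
    """
    groups: list[tuple[list[float], list[str]]] = []
    for video_id, vec in embeddings.items():
        unit = normalize(vec)
        for rep, members in groups:
            if sum(a * b for a, b in zip(rep, unit)) >= threshold:
                members.append(video_id)
                break
        else:
            groups.append((unit, [video_id]))
    return [members for _, members in groups]


videos = {"demo_take1": [1.0, 0.0], "demo_take2": [0.99, 0.05], "onboarding": [0.0, 1.0]}
print(group_similar(videos))  # [['demo_take1', 'demo_take2'], ['onboarding']]
```

The single pass is order-dependent because each group is represented by its first member. For a real clustering pipeline, you would likely reach for an established method such as k-means or HDBSCAN.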

How it works

From first principles, the design goal is: don’t make developers learn another tool; make video just another thing their agent can operate on.

Practically, that means a clean chain from Claude Code → MCP tools → TwelveLabs:

The plugin follows Claude Code’s plugin structure: a manifest for marketplace metadata, commands (slash commands under the twelvelabs: namespace), skills (natural-language triggers), and hooks (pre/post scripts around MCP calls).

The hook + state layer is what makes it feel “CLI-native” rather than like an “API wrapper.” Hooks wrap MCP calls with a predictable JSON contract (they read tool_name and tool_input, and return continue and message), so they can validate inputs, cache results, and keep async tasks consistent.
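To make that contract concrete, here’s a minimal sketch of a validation hook. It follows the shape described above: read tool_name and tool_input, then answer with continue and message. The Claude Code hooks documentation defines the exact hook input and output fields and their semantics, which may differ from this simplified shape. The "query" field and the search-name check are hypothetical.

```python
import json
import sys

# Prefix for tools exposed by the twelvelabs-mcp server.
TOOL_PREFIX = "mcp__twelvelabs-mcp__"


def handle(event: dict) -> dict:
    """Decide whether a tool call should proceed, using a simplified continue/message shape."""
    tool_name = event.get("tool_name", "")
    tool_input = event.get("tool_input") or {}

    if not tool_name.startswith(TOOL_PREFIX):
        return {"continue": True}  # not a TwelveLabs tool; pass through untouched

    # Hypothetical check: reject search calls whose "query" field is empty.
    if "search" in tool_name and not str(tool_input.get("query", "")).strip():
        return {"continue": False, "message": "Search needs a non-empty query."}

    return {"continue": True}


def main() -> None:
    """Hook entry point: JSON event on stdin, JSON decision on stdout."""
    print(json.dumps(handle(json.load(sys.stdin))))
```

A hook script would call main(). Keeping the decision logic in a pure handle() function lets you unit test it without piping JSON through stdin.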

The local .twelvelabs/config.json file acts as a small runtime cache that tracks pending indexing tasks, completed videos, and recent results. A helper manages it using file locking, so the state doesn’t get corrupted when several steps run in quick succession.
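The read-modify-write pattern behind such a helper can be sketched with an exclusive advisory lock around the JSON file. This is an illustration, not the plugin’s helper. It uses POSIX fcntl.flock, so it won’t run on Windows, and the "pending_tasks" key is hypothetical.

```python
import fcntl
import json
import os
from contextlib import contextmanager
from pathlib import Path
from typing import Iterator


@contextmanager
def locked_state(path: Path) -> Iterator[dict]:
    """Yield the JSON state at path as a dict while holding an exclusive lock.

    Changes are written back only if the with-block exits without an exception.
    """
    path.parent.mkdir(parents=True, exist_ok=True)
    path.touch(exist_ok=True)
    with open(path, "r+", encoding="utf-8") as f:
        fcntl.flock(f, fcntl.LOCK_EX)  # block until no other process holds the lock
        try:
            raw = f.read()
            state = json.loads(raw) if raw.strip() else {}
            yield state
            # Rewrite the whole file from the start with the updated state.
            f.seek(0)
            f.truncate()
            json.dump(state, f, indent=2)
            f.flush()
            os.fsync(f.fileno())
        finally:
            fcntl.flock(f, fcntl.LOCK_UN)


def record_pending_task(path: Path, task_id: str) -> None:
    """Example update: append a task ID under a hypothetical "pending_tasks" key."""
    with locked_state(path) as state:
        state.setdefault("pending_tasks", []).append(task_id)
```

Holding the lock across the whole read, update, and write is what prevents two quick steps from each reading the old state and overwriting each other’s changes.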

At the bottom of the stack is the MCP server itself. The plugin configures twelvelabs-mcp as an MCP server run via npx, and video operations flow through MCP tool calls with the mcp__twelvelabs-mcp__* prefix.
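Tool names with that prefix follow the mcp__<server>__<tool> naming that Claude Code uses for MCP tools, so anything that sees a tool name can tell which server it belongs to. Here’s a small sketch that splits such a name apart. The "search" and "index" tool names in the example and tests are hypothetical, not documented TwelveLabs tools.

```python
from typing import Optional, Tuple

MCP_PREFIX = "mcp__"


def parse_mcp_tool_name(name: str) -> Optional[Tuple[str, str]]:
    """Split 'mcp__<server>__<tool>' into (server, tool); return None for other names."""
    if not name.startswith(MCP_PREFIX):
        return None
    # Split on the first "__" after the prefix; assumes the server name contains no "__".
    server, sep, tool = name[len(MCP_PREFIX):].partition("__")
    if not sep or not server or not tool:
        return None
    return server, tool


def is_twelvelabs_tool(name: str) -> bool:
    parsed = parse_mcp_tool_name(name)
    return parsed is not None and parsed[0] == "twelvelabs-mcp"


print(parse_mcp_tool_name("mcp__twelvelabs-mcp__search"))  # ('twelvelabs-mcp', 'search')
print(is_twelvelabs_tool("Bash"))  # False
```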

Conceptually, it’s the same MCP idea we introduced with the MCP Server launch: expose video understanding as discoverable “tools” so MCP-compatible clients can use them without bespoke integration code.

Why Claude Code

This isn’t a standalone app because developers don’t need another app.

Claude Code is already where people summarize diffs, refactor modules, trace incidents, and generate docs. The moment video enters that same workflow (demo recordings, customer interviews, user session replays), you want the same loop: ask a question, get a precise answer, ask a follow-up, ship the fix.

The plugin keeps that loop in one place. You can use explicit slash commands when you want deterministic behavior, or switch to natural language when you’re exploring.

And because the plugin configures the MCP server, commands, skills, and hooks automatically, setup stays close to “one environment variable and you’re done.”

Getting started

Install is three lines. (You may need to restart Claude Code after installing for the plugin and MCP server to take effect.)

export TWELVELABS_API_KEY="your-api-key"

If you’re new to TwelveLabs, you can start on the Free plan. The pricing page lists up to 10 hours of indexing with a 600-minute usage limit and 90 days of index access, and states explicitly that you don’t need a credit card to begin.

What’s next

This first release focuses on the essentials: indexing, multimodal search (text, image, and entity), analysis, and embeddings, all inside the workflow developers already use every day.

From here, the most useful roadmap is one guided by real workflows. If you try the plugin and hit friction (a missing command, an awkward output shape, a “this should be one step” moment), open an issue or PR in the repo. It’s MIT-licensed and built to be extended.

Useful links

TwelveLabs Claude Code Plugin Repo: https://github.com/twelvelabs-io/twelve-labs-claude-code-plugin

TwelveLabs MCP Server Announcement: https://www.twelvelabs.io/blog/twelve-labs-mcp-server

TwelveLabs API Docs: https://docs.twelvelabs.io/docs/get-started/quickstart

TwelveLabs Playground: https://playground.twelvelabs.io/

TwelveLabs Video Foundation Models: https://www.twelvelabs.io/product/models-overview

TwelveLabs Pricing: https://www.twelvelabs.io/pricing

Video is everywhere in software work now: product demos recorded on Zoom, internal design reviews, customer calls, QA reproduction clips, onboarding walkthroughs, and hours of tutorials. But when video becomes part of your engineering “surface area,” it collides with a painful reality: most developer tools still treat video like a blob. You can’t grep it. You can’t diff it. You can’t ask it what happened.

Last year we shipped the TwelveLabs MCP Server to change that: by exposing video indexing, semantic search, analysis, and embeddings as standard tools any Model Context Protocol (MCP) client can call. In the MCP launch post, we described it as a bridge between our video understanding platform and AI assistants, built on the open MCP standard, so agents can “index videos, find relevant scenes, generate summaries, and more” without custom glue code.

Today we’re taking the next step for developers who live in the terminal: the TwelveLabs Claude Code Plugin.

What it is

The TwelveLabs Claude Code Plugin brings video intelligence into your Claude Code workflow, so you can index, search, analyze, and embed videos without leaving the same CLI conversation you’re already using to write code.

It installs through the Claude Code plugin marketplace and uses your TWELVELABS_API_KEY to authenticate. After install, it wires up the MCP server plus the UX layer developers actually want: slash commands, natural-language “skills,” and hooks that track async work and state automatically.

If you already liked the idea of MCP as a universal adapter, think of this plugin as the “batteries included” Claude Code experience: same MCP backbone, optimized for day-to-day terminal workflows.

What you can actually do

The plugin is intentionally small. It gives you a few verbs you can combine like building blocks: index, search, analyze, embed, and (optionally) entity search. The result is a workflow that feels like “grep, then explain”, but for video.

Index. Indexing accepts local paths, remote URLs, or Google Drive links. It’s also asynchronous: you can kick it off, go back to coding, and check status when you care.

Once indexing finishes, the video becomes searchable and analyzable from the same session.

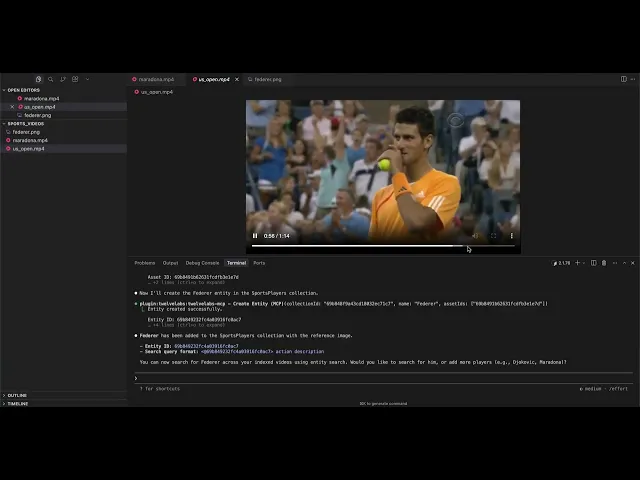

Search. Describe what you’re looking for (visual elements, actions, sounds, on-screen text) and Claude Code returns relevant moments. The plugin also supports image search, composed (image + text) search, and entity search.

Entity search is how you make “find every time this person appears” practical. You create an entity collection, upload reference images, create an entity, then search by entity ID (or @entity_id).

Under the hood, search experiences like these are what TwelveLabs built Marengo for: Marengo focuses on “any-to-any” retrieval tasks that power cross-modal search across text, image, audio, and video.

Analyze. Search is about finding moments. Analysis is about extracting meaning. The plugin supports summaries, topics, Q&A, action items; whatever you can describe in a prompt.

That “video-to-text” generation is the core strength of Pegasus: it integrates visual, audio, and speech information for text generation and reasoning over video.

Embed. If you’re building your own retrieval layer, clustering pipeline, or recommendation system, you can generate video embeddings directly.

How it works

From first principles, the design goal is: don’t make developers learn another tool; make video just another thing their agent can operate on.

Practically, that means a clean chain from Claude Code → MCP tools → TwelveLabs:

The plugin follows Claude Code’s plugin structure: a manifest for marketplace metadata, commands (slash commands under the twelvelabs: namespace), skills (natural-language triggers), and hooks (pre/post scripts around MCP calls).

The hook + state layer is what makes it feel “CLI-native” instead of “API wrapper.” Hooks wrap MCP calls with a predictable JSON contract (they read tool_name/tool_input and return continue/message), so they can validate inputs, cache results, and keep async tasks consistent.

The local .twelvelabs/config.json acts as a small runtime cache to track pending indexing tasks, completed videos, and recent results - managed through a helper that uses file locking (so you avoid state corruption when multiple steps happen quickly).

At the bottom of the stack is the MCP server itself. The plugin configures twelvelabs-mcp as an MCP server run via npx, and video operations flow through MCP tool calls with the mcp__twelvelabs-mcp__* prefix.

Conceptually, it’s the same MCP idea we introduced with the MCP Server launch: expose video understanding as discoverable “tools” so MCP-compatible clients can use them without bespoke integration code.

Why Claude Code

This isn’t a standalone app because developers don’t need another app.

Claude Code is already where people summarize diffs, refactor modules, trace incidents, and generate docs. The moment video enters that same workflow (demo recordings, customer interviews, user session replays), you want the same loop: ask a question, get a precise answer, ask a follow-up, ship the fix.

The plugin keeps that loop in one place. You can use explicit slash commands when you want deterministic behavior, or switch to natural language when you’re exploring.

And because the plugin configures the MCP server, commands, skills, and hooks automatically, setup stays close to “one environment variable and you’re done.”

Getting started

Install is three lines. (You may need to restart Claude Code after installing for the plugin and MCP server to take effect.)

export TWELVELABS_API_KEY

If you’re new to TwelveLabs, you can start on the Free plan (the pricing page lists up to 10 hours of indexing with a 600-minute usage limit and 90 days of index access), and it explicitly states you don’t need a credit card to begin.

What’s next

This first release focuses on the essentials: indexing, multimodal search (text/image/entity), analysis, and embeddings - all inside the workflow developers already use every day.

From here, the most useful roadmap is the one guided by real workflows. If you try the plugin and hit friction (missing command, awkward output shape, a “this should be one step” moment), open an issue or PR in the repo. It’s MIT licensed and built to be extended.

Useful links

TwelveLabs Claude Code Plugin Repo: https://github.com/twelvelabs-io/twelve-labs-claude-code-plugin

TwelveLabs MCP Server Announcement: https://www.twelvelabs.io/blog/twelve-labs-mcp-server

TwelveLabs API Docs: https://docs.twelvelabs.io/docs/get-started/quickstart

TwelveLabs Playground: https://playground.twelvelabs.io/

TwelveLabs Video Foundation Models: https://www.twelvelabs.io/product/models-overview

TwelveLabs Pricing: https://www.twelvelabs.io/pricing

Video is everywhere in software work now: product demos recorded on Zoom, internal design reviews, customer calls, QA reproduction clips, onboarding walkthroughs, and hours of tutorials. But when video becomes part of your engineering “surface area,” it collides with a painful reality: most developer tools still treat video like a blob. You can’t grep it. You can’t diff it. You can’t ask it what happened.

Last year we shipped the TwelveLabs MCP Server to change that: by exposing video indexing, semantic search, analysis, and embeddings as standard tools any Model Context Protocol (MCP) client can call. In the MCP launch post, we described it as a bridge between our video understanding platform and AI assistants, built on the open MCP standard, so agents can “index videos, find relevant scenes, generate summaries, and more” without custom glue code.

Today we’re taking the next step for developers who live in the terminal: the TwelveLabs Claude Code Plugin.

What it is

The TwelveLabs Claude Code Plugin brings video intelligence into your Claude Code workflow, so you can index, search, analyze, and embed videos without leaving the same CLI conversation you’re already using to write code.

It installs through the Claude Code plugin marketplace and uses your TWELVELABS_API_KEY to authenticate. After install, it wires up the MCP server plus the UX layer developers actually want: slash commands, natural-language “skills,” and hooks that track async work and state automatically.

If you already liked the idea of MCP as a universal adapter, think of this plugin as the “batteries included” Claude Code experience: same MCP backbone, optimized for day-to-day terminal workflows.

What you can actually do

The plugin is intentionally small. It gives you a few verbs you can combine like building blocks: index, search, analyze, embed, and (optionally) entity search. The result is a workflow that feels like “grep, then explain”, but for video.

Index. Indexing accepts local paths, remote URLs, or Google Drive links. It’s also asynchronous: you can kick it off, go back to coding, and check status when you care.

Once indexing finishes, the video becomes searchable and analyzable from the same session.

Search. Describe what you’re looking for (visual elements, actions, sounds, on-screen text) and Claude Code returns relevant moments. The plugin also supports image search, composed (image + text) search, and entity search.

Entity search is how you make “find every time this person appears” practical. You create an entity collection, upload reference images, create an entity, then search by entity ID (or @entity_id).

Under the hood, search experiences like these are what TwelveLabs built Marengo for: Marengo focuses on “any-to-any” retrieval tasks that power cross-modal search across text, image, audio, and video.

Analyze. Search is about finding moments. Analysis is about extracting meaning. The plugin supports summaries, topics, Q&A, action items; whatever you can describe in a prompt.

That “video-to-text” generation is the core strength of Pegasus: it integrates visual, audio, and speech information for text generation and reasoning over video.

Embed. If you’re building your own retrieval layer, clustering pipeline, or recommendation system, you can generate video embeddings directly.

How it works

From first principles, the design goal is: don’t make developers learn another tool; make video just another thing their agent can operate on.

Practically, that means a clean chain from Claude Code → MCP tools → TwelveLabs:

The plugin follows Claude Code’s plugin structure: a manifest for marketplace metadata, commands (slash commands under the twelvelabs: namespace), skills (natural-language triggers), and hooks (pre/post scripts around MCP calls).

The hook + state layer is what makes it feel “CLI-native” instead of “API wrapper.” Hooks wrap MCP calls with a predictable JSON contract (they read tool_name/tool_input and return continue/message), so they can validate inputs, cache results, and keep async tasks consistent.

The local .twelvelabs/config.json acts as a small runtime cache to track pending indexing tasks, completed videos, and recent results - managed through a helper that uses file locking (so you avoid state corruption when multiple steps happen quickly).

At the bottom of the stack is the MCP server itself. The plugin configures twelvelabs-mcp as an MCP server run via npx, and video operations flow through MCP tool calls with the mcp__twelvelabs-mcp__* prefix.

Conceptually, it’s the same MCP idea we introduced with the MCP Server launch: expose video understanding as discoverable “tools” so MCP-compatible clients can use them without bespoke integration code.

Why Claude Code

This isn’t a standalone app because developers don’t need another app.

Claude Code is already where people summarize diffs, refactor modules, trace incidents, and generate docs. The moment video enters that same workflow (demo recordings, customer interviews, user session replays), you want the same loop: ask a question, get a precise answer, ask a follow-up, ship the fix.

The plugin keeps that loop in one place. You can use explicit slash commands when you want deterministic behavior, or switch to natural language when you’re exploring.

And because the plugin configures the MCP server, commands, skills, and hooks automatically, setup stays close to “one environment variable and you’re done.”

Getting started

Install is three lines. (You may need to restart Claude Code after installing for the plugin and MCP server to take effect.)

export TWELVELABS_API_KEY

If you’re new to TwelveLabs, you can start on the Free plan (the pricing page lists up to 10 hours of indexing with a 600-minute usage limit and 90 days of index access), and it explicitly states you don’t need a credit card to begin.

What’s next

This first release focuses on the essentials: indexing, multimodal search (text/image/entity), analysis, and embeddings - all inside the workflow developers already use every day.

From here, the most useful roadmap is the one guided by real workflows. If you try the plugin and hit friction (missing command, awkward output shape, a “this should be one step” moment), open an issue or PR in the repo. It’s MIT licensed and built to be extended.

Useful links

TwelveLabs Claude Code Plugin Repo: https://github.com/twelvelabs-io/twelve-labs-claude-code-plugin

TwelveLabs MCP Server Announcement: https://www.twelvelabs.io/blog/twelve-labs-mcp-server

TwelveLabs API Docs: https://docs.twelvelabs.io/docs/get-started/quickstart

TwelveLabs Playground: https://playground.twelvelabs.io/

TwelveLabs Video Foundation Models: https://www.twelvelabs.io/product/models-overview

TwelveLabs Pricing: https://www.twelvelabs.io/pricing