Bringing the Models to the Mission: Deploying TwelveLabs in Air-Gapped Environments

Julian Herwitz

TwelveLabs is now deployable fully disconnected. Video intelligence runs directly in mission environments where network access or reliable connectivity isn't an option.

May 14, 2026

6 Minutes

Disconnected, not Degraded

Video data is among the richest and most unwieldy signals in modern operations. As footage proliferates, operators risk losing critical insights in noise and volume. And the networks that house this data are siloed from the tools that can make it actionable.

We couldn’t let our customers sacrifice mission capability with an insecure or pared-down solution. That’s why we’ve introduced a fully air-gapped deployment of the TwelveLabs stack.

The same Marengo and Pegasus models that run in our commercial SaaS can now summarize, analyze, and search video in a disconnected environment. Customers can bring the same API contracts, inference workflows, and product behavior directly to where they’re needed most.

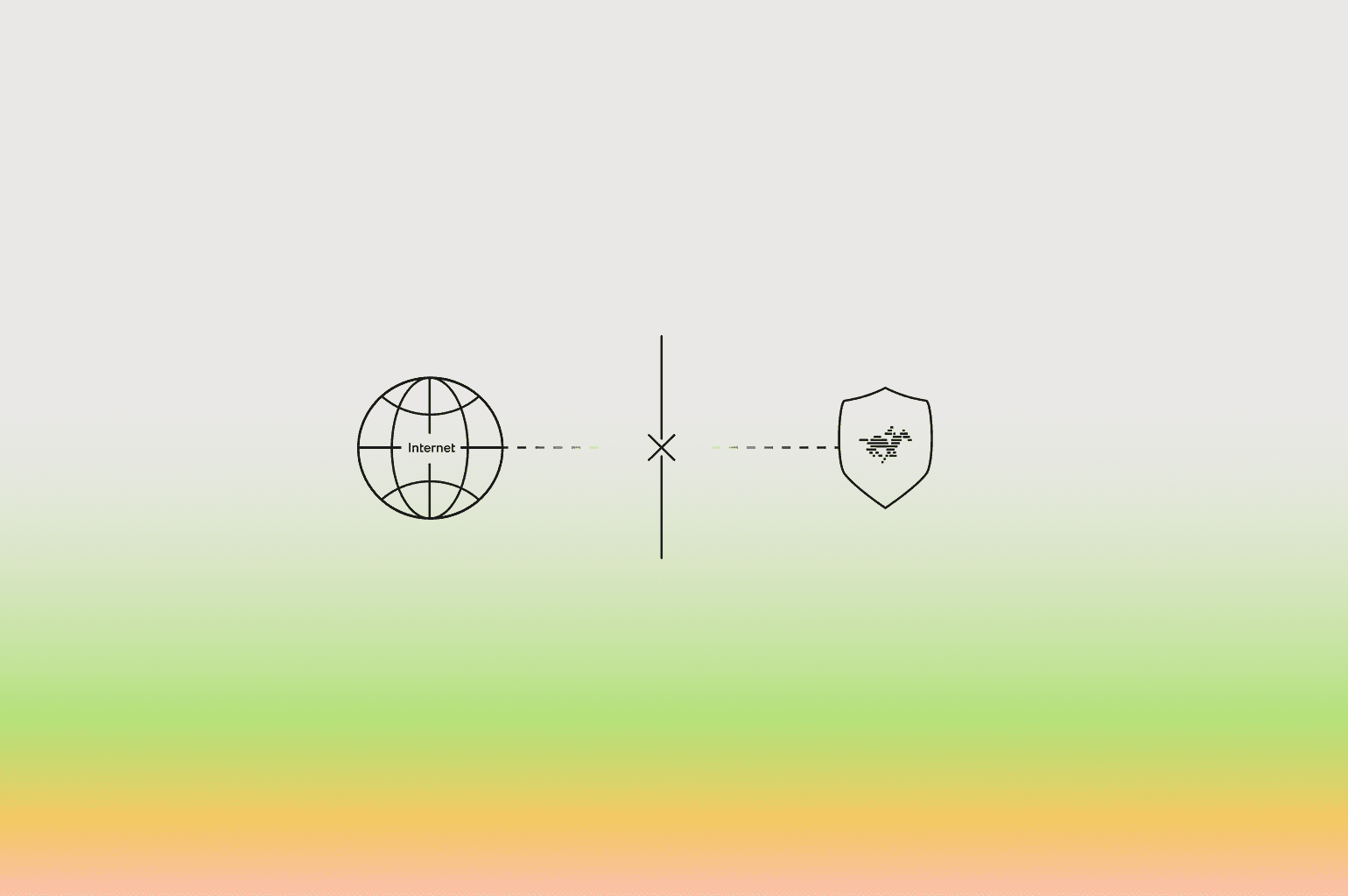

Figure 1: An air-gapped setup removes public internet dependency while keeping TwelveLabs capabilities available inside the secure environment.

Offline by Design

The air-gapped deployment isn’t just a port of the commercial product. It’s been built from the ground up on offline-first design principles to give customers a reliable, portable platform.

No network required, anywhere. The deployment never reaches out to external dependencies, services, or configuration sources, so it can operate in restricted environments that are otherwise inaccessible.

Runs on existing infrastructure. Deploys with built-in automation to the clusters and GPUs already provisioned in the environment. Up and running faster without procurement or provisioning.

Hardened for the most secure enclaves. Ships with FIPS-compliant signed images, customer IdP integration, and local observability tools. Drops into existing security postures, minimizing review burden and runtime surface area.

Under the Hood

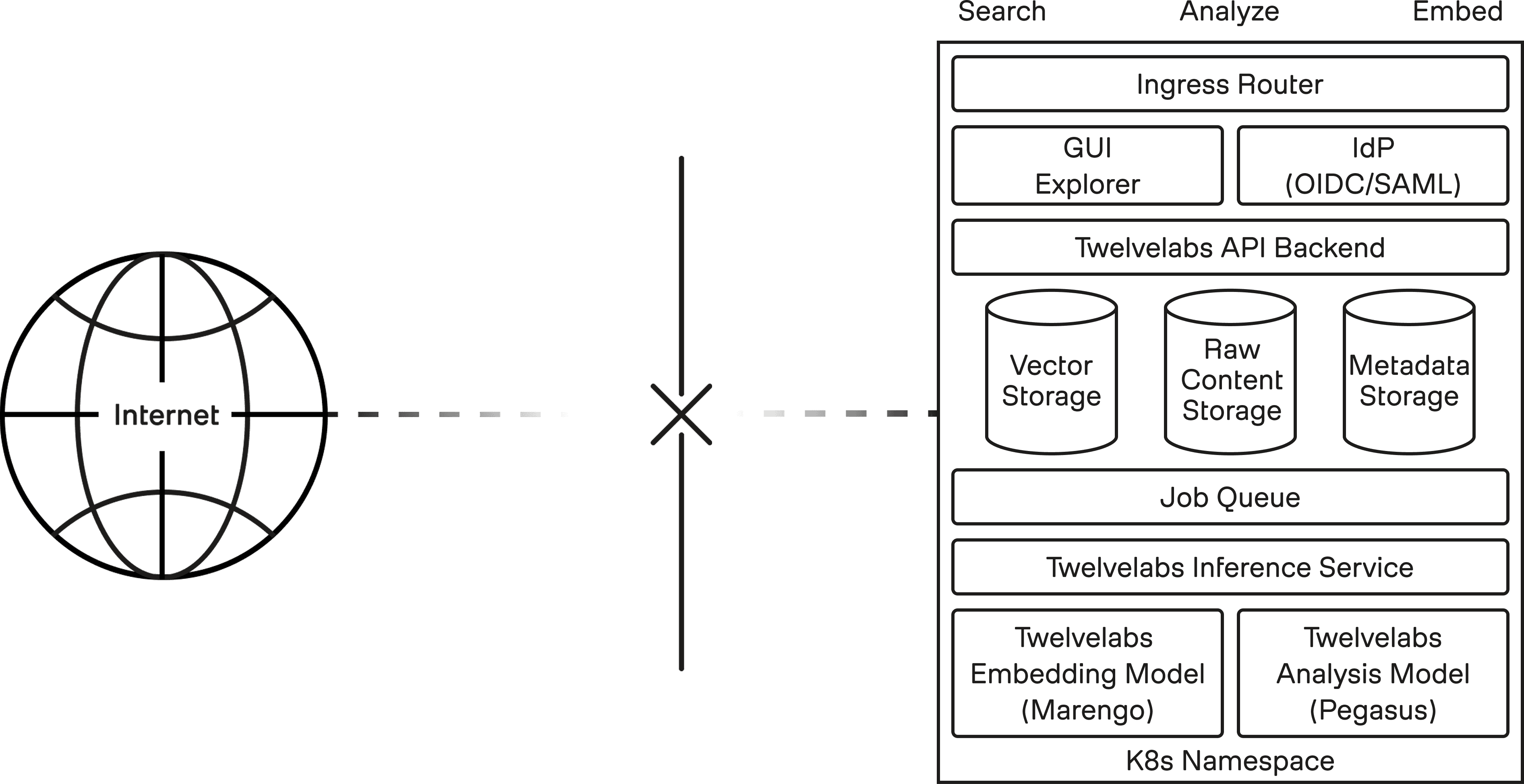

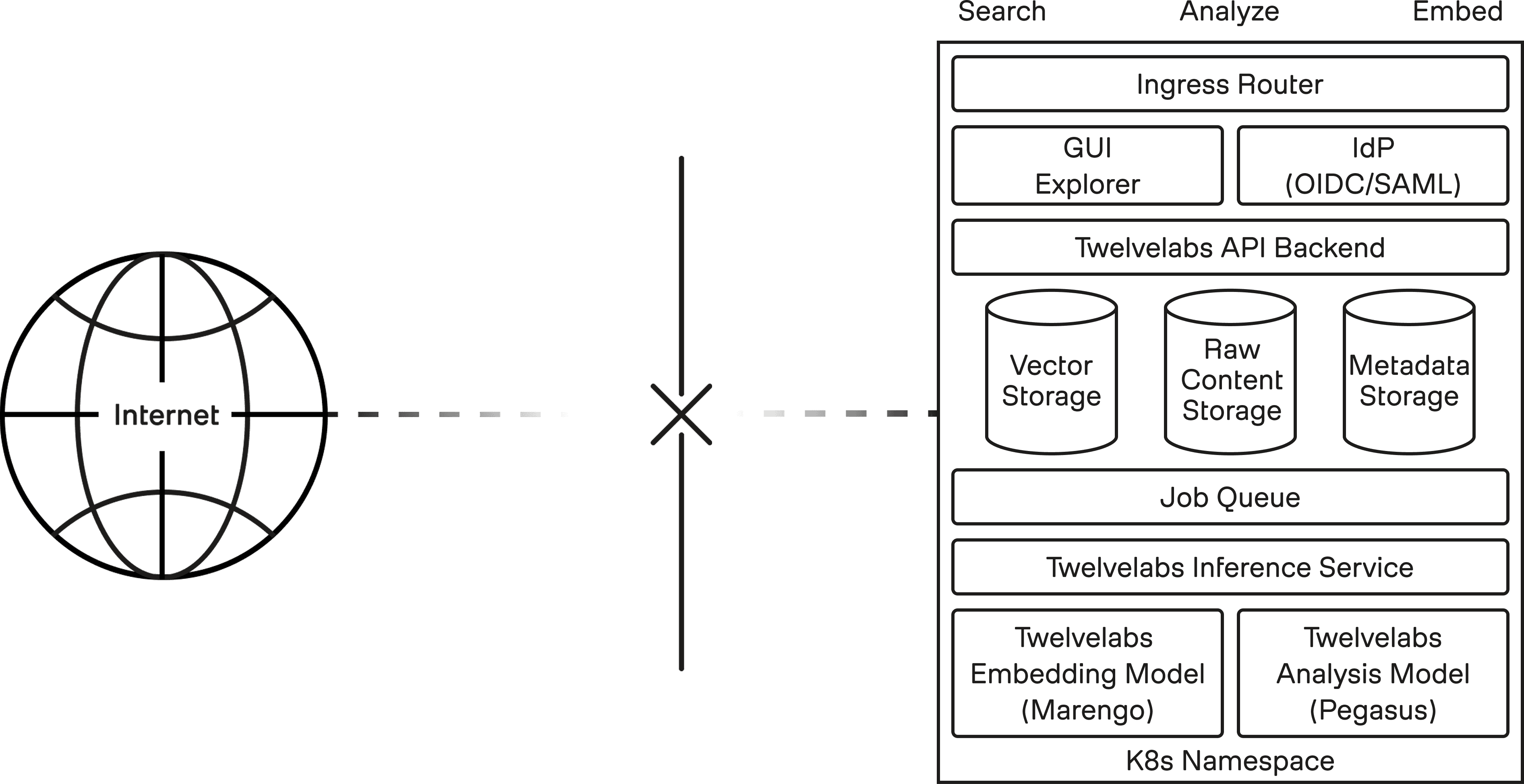

Figure 2: A self-contained TwelveLabs deployment with product, storage, queueing, and model inference services running inside an isolated Kubernetes namespace.

These are the TwelveLabs components:

GUI Explorer (Playground) - Web UI for exploring video collections, testing model capabilities, and ad-hoc analysis.

API Backend - RESTful API that handles user auth, content storage, job lifecycle, and video processing for playback (HLS, thumbnails).

Inference Service - Model-serving layer that preprocesses raw multimedia content for inference and orchestrates model execution.

Embedding Model (Marengo) - Generates multimodal vector embeddings for search and retrieval (by text, image, and entity).

Analysis Model (Pegasus) - Reasons over multimedia content to generate answers, summaries, and time-based metadata.

Supporting infrastructure (self-hosted, swappable, commodity defaults):

Ingress Router - Handles inbound request routing and TLS.

IdP - Authenticates callers against the customer's identity provider via OIDC or SAML.

Vector Storage - Embeddings for multimodal search and retrieval.

Raw Content Storage - S3-compatible storage for video processing and playback.

Metadata Storage - Job lifecycle and processed outputs (transcription, analysis).

Job Queue - Decouples API from async inference execution.
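To illustrate how a job queue decouples the API from asynchronous inference, here is a minimal sketch using only the Python standard library. Everything in it is an assumption made for illustration: the in-memory `queue.Queue` stands in for whatever queue the deployment actually uses, the `job_status` dict stands in for Metadata Storage, and `submit_job`, `fake_inference`, and the `s3://bucket/...` URIs are hypothetical names, not part of the TwelveLabs API.

```python
import queue
import threading
import uuid

# Hypothetical in-memory stand-ins for the Job Queue and Metadata Storage.
jobs = queue.Queue()
job_status = {}


def submit_job(video_uri: str) -> str:
    """API side: record the job, enqueue it, and return without waiting."""
    job_id = str(uuid.uuid4())
    job_status[job_id] = "queued"
    jobs.put((job_id, video_uri))
    return job_id


def fake_inference(video_uri: str) -> str:
    """Placeholder for model execution; not a real TwelveLabs call."""
    return f"processed:{video_uri}"


def worker() -> None:
    """Inference side: drain the queue independently of the API."""
    while True:
        item = jobs.get()
        if item is None:  # sentinel value: stop the worker
            jobs.task_done()
            break
        job_id, video_uri = item
        job_status[job_id] = fake_inference(video_uri)
        jobs.task_done()


def run_demo() -> dict:
    """Submit three jobs, let the worker finish them, and return their results."""
    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    ids = [submit_job(f"s3://bucket/clip-{i}.mp4") for i in range(3)]
    jobs.put(None)  # ask the worker to stop after the queued jobs
    jobs.join()     # block until every queued item has been handled
    thread.join()
    return {job_id: job_status[job_id] for job_id in ids}
```

The point of the pattern is that `submit_job` returns a job ID immediately, so slow model execution never blocks the API; callers poll the stored status instead.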

How We Know It Works

The most important challenge we faced was matching the quality and performance of TwelveLabs’ commercial product when running on customer hardware in the customer’s environment. To do so, we implemented three levels of testing that gate each release.

Commercial parity. A deployment is only as good as its test suite. Every release runs the same evaluation and regression suites as the commercial product, against the same eval sets and pass thresholds. A release that regresses against the SaaS baseline doesn't ship.

Proportional customer POC. Customer workloads are categorized into t-shirt tiers (S/M/L), which define expected ingestion throughput, search and analysis latency, concurrency limits, and resource utilization under load. Prior to production deployment, these are validated in the customer’s environment with a test run on proportional hardware and data volumes.

Validation against representative content. Use cases for TwelveLabs models vary greatly by customer - different subject matter, objectives, signal quality, and topicality. We work closely with each customer to create a representative validation set that ensures the deployment is useful on day one.
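The first two gates above can be sketched as simple checks. This is an illustrative sketch only: the metric names, the `Tier` fields, and every number in `TIERS` are placeholders invented for the example, not TwelveLabs' actual eval metrics or tier definitions, and the parity check assumes higher scores are better.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    """Hypothetical performance envelope for one t-shirt tier."""
    name: str
    min_ingest_hours_per_day: float  # video hours the deployment must ingest per day
    max_search_latency_s: float      # ceiling on search latency, in seconds
    min_concurrent_requests: int     # concurrency the deployment must sustain


# Placeholder numbers for illustration only; not real tier definitions.
TIERS = {
    "S": Tier("S", 10.0, 2.0, 4),
    "M": Tier("M", 100.0, 2.0, 16),
    "L": Tier("L", 1000.0, 2.0, 64),
}


def parity_failures(release: dict, baseline: dict) -> list:
    """Return the metrics on which the release scores below the baseline.

    A metric missing from the release counts as a failure.
    """
    return sorted(
        metric
        for metric, base in baseline.items()
        if release.get(metric, float("-inf")) < base
    )


def poc_failures(tier: Tier, measured: dict) -> list:
    """Return which tier expectations the measured POC run missed."""
    failures = []
    if measured["ingest_hours_per_day"] < tier.min_ingest_hours_per_day:
        failures.append("ingestion throughput")
    if measured["search_latency_s"] > tier.max_search_latency_s:
        failures.append("search latency")
    if measured["concurrent_requests"] < tier.min_concurrent_requests:
        failures.append("concurrency")
    return failures
```

In this sketch a release would ship only when `parity_failures` returns an empty list, and a customer deployment would proceed to production only when `poc_failures` is empty for the chosen tier.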

Running Wild

Figure 3: Examples of disconnected environments where video intelligence may need to run close to the data.

The architecture runs across a wide range of disconnected environments, such as high-trust classified systems, low-connectivity networks, and on-prem enclaves. The mission workloads run alongside the data, where they're most effective:

Multi-source, multi-channel FMV. Discovery and analysis across hours, days, or years of full-motion video (FMV) from simultaneous feeds - surfacing moments of interest and associations over time without eyes on every frame.

Wide-area geospatial imagery. Object identification and spatiotemporal reasoning on high-resolution overhead images - finding vehicles, structures, or activity patterns in seconds.

Forward-deployed edge devices. Local closed-circuit search in remote or isolated environments - anywhere with a single GPU node.

Getting Started

To get started, the air-gapped deployment needs only a Kubernetes cluster and at least one CUDA-capable GPU with 24 GB or more of memory.
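As a rough pre-flight check for the GPU requirement, the sketch below parses the output of `nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits`, which prints one total-memory value in MiB per GPU. The function names and the `min_mib` default are assumptions for illustration: cards sold as 24 GB can report slightly less than 24576 MiB, so the threshold here is deliberately loose and should be adjusted to your own hardware. This is not an official TwelveLabs installer check.

```python
import subprocess


def gpus_meeting_minimum(nvidia_smi_csv: str, min_mib: int = 24_000) -> list:
    """Return indices of GPUs whose reported total memory is at least min_mib.

    Expects one integer (MiB) per line, as printed by nvidia-smi with
    --query-gpu=memory.total --format=csv,noheader,nounits.
    """
    lines = [line.strip() for line in nvidia_smi_csv.splitlines() if line.strip()]
    return [index for index, line in enumerate(lines) if int(line) >= min_mib]


def query_local_gpus() -> str:
    """Run nvidia-smi on this node and return its raw output.

    Raises an error if nvidia-smi is missing or exits with a failure code.
    """
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

On a GPU node, `gpus_meeting_minimum(query_local_gpus())` returns the indices of the GPUs that clear the threshold; an empty list means the node does not meet the stated minimum.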

Data gravity is a limiting force. AI deployed alongside the data turns it into a multiplier.

Evaluating AI for your disconnected environment? We’d love to dive deep on architecture - reach out at gov@twelvelabs.io.
