Product

Pegasus 1 Beta: Setting New Standards in Video-Language Modeling

Twelve Labs is launching Pegasus-1 in open beta, upgrading from the alpha version with 17 billion parameters, a 15x increase in video processing resolution, and improved training techniques that deliver state-of-the-art results in video question answering, summarization, and conversation benchmarks against models including Gemini Pro 1.5.

Twelve Labs is launching Pegasus-1 in open beta, upgrading from the alpha version with 17 billion parameters, a 15x increase in video processing resolution, and improved training techniques that deliver state-of-the-art results in video question answering, summarization, and conversation benchmarks against models including Gemini Pro 1.5.

In this article

No headings found on page

Join our newsletter

Join our newsletter

Receive the latest advancements, tutorials, and industry insights in video understanding

Receive the latest advancements, tutorials, and industry insights in video understanding

Search, analyze, and explore your videos with AI.

Mar 12, 2024

10 Min

Copy link to article

Check out the Pegasus-1 technical report on arXiV and HuggingFace!

1 - Introduction

At Twelve Labs, our goal is to advance video understanding through the creation of innovative multimodal AI models. In a previous post, "Introducing Video-to-Text and Pegasus-1 (80B)," we introduced the alpha version of Pegasus-1. This foundational model can generate descriptive text from video input. Today, we're thrilled to announce the launch of Pegasus-1's open beta.

Pegasus-1 is designed to understand and articulate complex video content, transforming how we interact with and analyze multimedia. With roughly 17 billion parameters, this model is a significant progression in multimodal AI. It can process and generate language from video input with exceptional accuracy and detail.

In this update, we'll explore the enhancements made to Pegasus-1 since its alpha release. These include improvements in data quality, video processing, and training methods. We will also share benchmarking results against leading commercial and open-source models, demonstrating Pegasus-1's superior performance in video summarization, question answering, and conversations. Beyond quantitative metrics, Pegasus-1 also shows qualitative improvements through its enhanced world knowledge and ability to capture detailed visual information.

2 - Model Overview

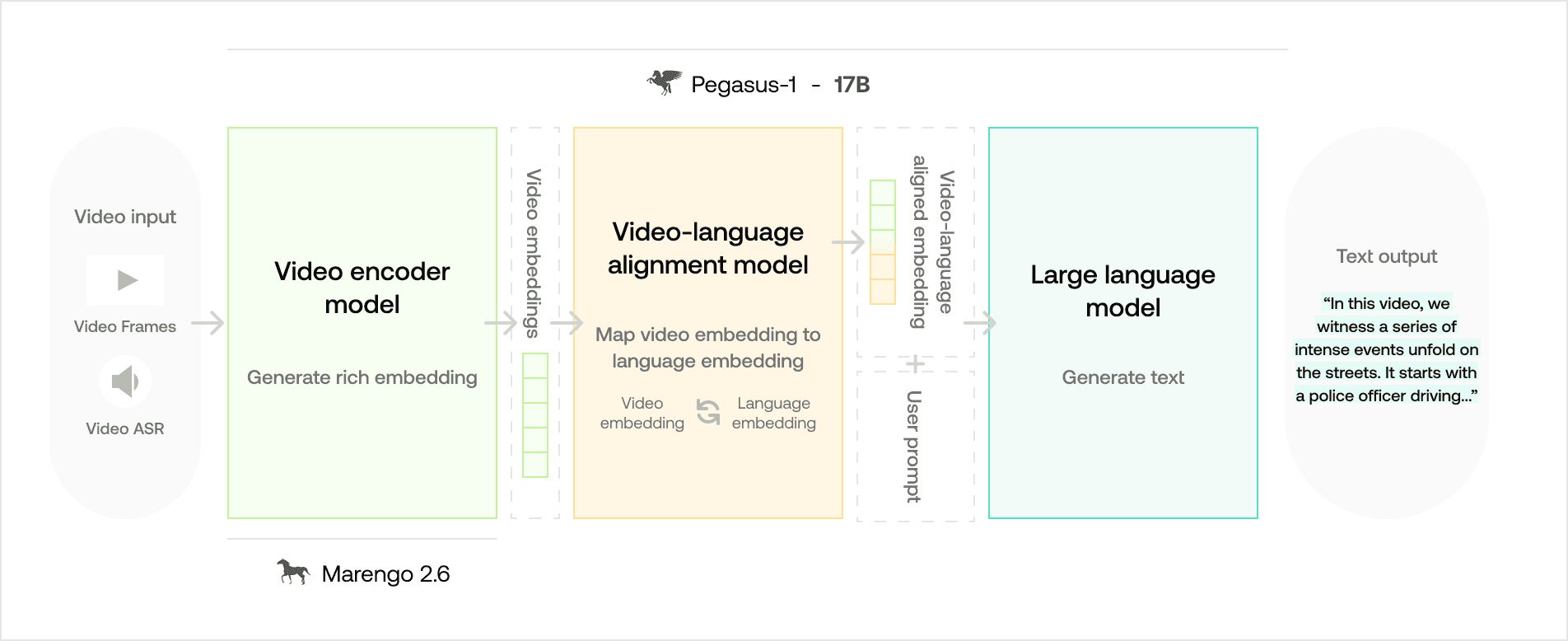

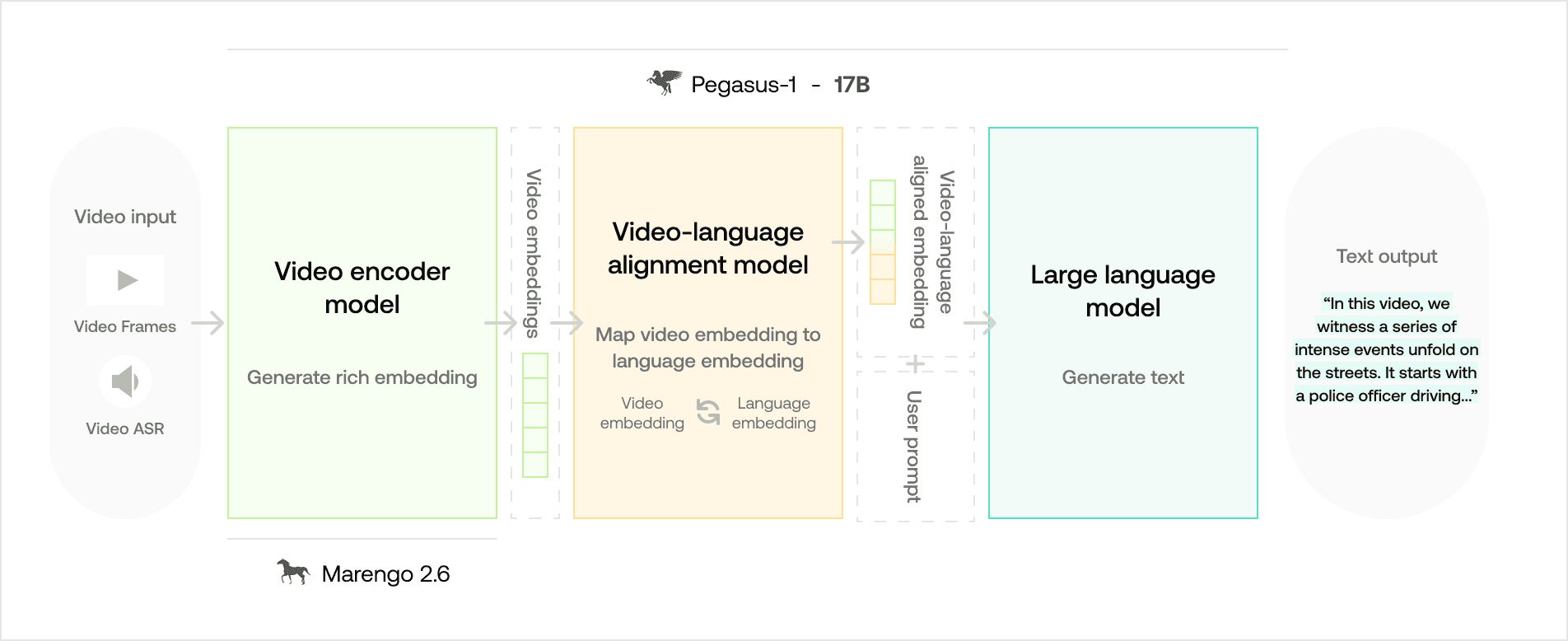

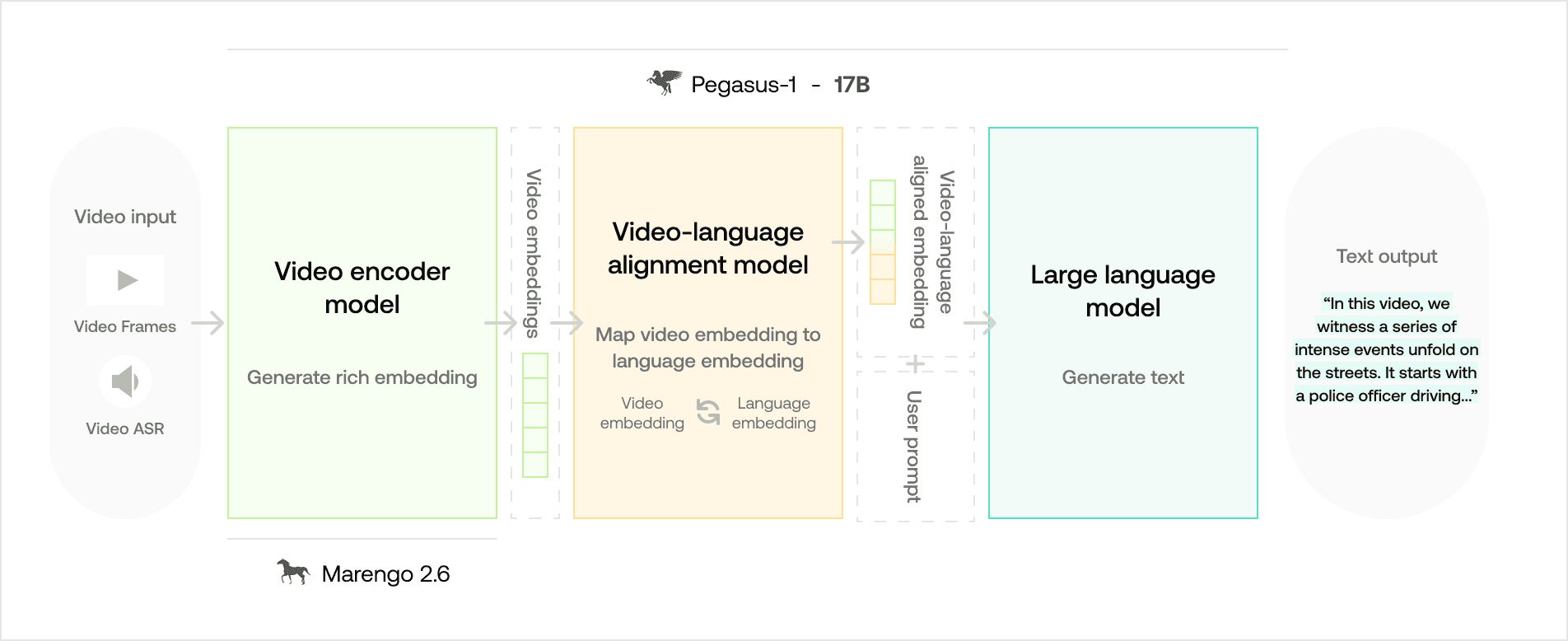

As a quick recap, Pegasus-1 is a multimodal foundation model designed to bridge the gap between video content and language, enabling machines to interpret and generate text based on video input. The architecture of Pegasus-1 is composed of three main components:

The video encoder model processes the video input to generate rich embeddings from both video frames and audio speech recognition (ASR) data. These embeddings are dense representations that capture the visual and auditory essence of the video content.

The video-language alignment model maps the video embeddings to corresponding language embeddings, creating a shared space where video and text representations are aligned. This alignment is crucial for the model to understand the correspondence between what is seen in the video and the language that describes it.

The large language model decoder takes the aligned embeddings and user prompts to generate coherent and contextually relevant text output. This output can range from descriptive summaries to answers to specific questions about the video content.

Compared to the alpha version, the open-beta version of Pegasus-1 boasts approximately 17B parameters, making it a compact yet powerful tool for interpreting and generating text based on video data.

3 - Major Improvements

As we progress from the alpha to the open-beta version, we continue to refine and enhance the model to deliver even more accurate and detailed video-language understanding. These enhancements are driven by three key factors: high-quality data, optimized video processing, and refined training techniques.

3.1 - Data Improvement

In accordance with previous findings, we also find that the quality and granularity of the captions had a more significant impact on the model's performance than the sheer quantity of data. For instance, Pegasus-1, trained on 100,000 high-quality video-text pairs, easily outperforms the same architecture trained on a much larger dataset (10M+) with lower-quality captions.

With this experimental evidence in mind, we have designed an efficient data annotation pipeline to create high-quality video captions for the aforementioned 10M+ videos. Training on such a large amount of high quality video-text pairs, Pegasus attains foundational video understanding capabilities that are not observed in other models.

3.2 - Video Processing Improvement

We made substantial changes to the video processing pipeline to optimize both spatial and temporal resolution. We increased the number of patches per frame by 10x (spatial) and the number of frames by 1.5x (temporal), resulting in a 15x increase in the total number of patches per video. This enhancement allows Pegasus-1 to capture and convey more information per frame.

Pegasus-1 is now also able to better grasp the overall story and context of the video in a more coherent manner, as evidenced by both qualitative and quantitative analyses, particularly in question-answering datasets.

3.3 - Training Improvement

As a multimodal foundation model, Pegasus-1 is trained on massive multimodal datasets over multiple stages. However, multi-stage training often suffers from the phenomenon known as catastrophic forgetting. This occurs when a model, upon learning new information, rapidly forgets the old information it was previously trained on. This issue becomes even more pressing in multimodal models that undergo sequential training across modalities.

To address this, we employ a strategic training regimen that involves multiple stages - each meticulously designed to balance the acquisition of new knowledge with the preservation of previously learned information. The key to this approach lies in the selective updates (unfreezing) of model parameters and the careful adjustment of learning rates throughout the training process.

The open-beta version of Pegasus-1, compared to the alpha version, boasts enhanced capabilities, such as a heightened ability to capture fine-grained temporal moments and a reduced propensity for hallucination, resulting in increased robustness across diverse video domains. It also demonstrates expanded world knowledge and an improved capacity to list various moments in temporal order, rather than concentrating on a singular scene.

4 - Quantitative Benchmark Results

In our comprehensive benchmarking efforts, Pegasus-1 has been evaluated against a spectrum of both commercial and open-source models. This section will detail the performance of Pegasus-1 in comparison to these models across various video-language modeling tasks.

4.1 - Baseline Models

The baseline models against which Pegasus-1 was benchmarked include:

Gemini Pro (1.5): A commercial multimodal model from Google DeepMind known for its robust video-language capabilities, released in November 2023 and most recently updated in February 2024. We are comparing against its most recent version, namely Gemini Pro 1.5.

Whisper + ChatGPT-3.5 (OpenAI): This combination is one of the few widely adopted approaches to video summarization. By leveraging the state-of-the-art speech-to-text and large language models, summaries are derived primarily from the video’s auditory content. A significant drawback is the oversight of valuable visual information within the video.

Vendor A’s Summary API: Widely adopted commercial product for audio and video summary generation. Vendor A’s Summary API seems to be based only on transcription data and language models (akin to ASR+ChatGPT3.5) to output a video summary.

Video-ChatGPT: Developed by Maaz et al. (June 2023), it is a video language model with a chat interface. The model processes video frames to capture visual events within a video. Note that it does not utilize the conversational information within the video.

VideoChat2: Developed by Li et al. (November 2023), it is a state-of-the-art open-source multimodal LLM, which employs progressive multi-modal training with diverse instruction-tuning data.

We specifically excluded image-based vision-language models such as LLaVA and GPT-4V from our comparison because they lack native video processing capabilities, which is a critical requirement for the tasks we are evaluating. Specifically, here are their limitations.

Many of these models can only see a single image, which perform poorly on most video benchmark datasets.

Some of these models (e.g. GPT-4V) can see multiple images at once, but they can process only a few frames (10 or less) of a video at a time, which are not sufficient for most videos that are longer than 1 minute.

Image-based models especially exhibit limitations when dealing with the dynamic and fluid nature of video content, which is not easily observable when the models treat the input as a series of images rather than a coherent video.

Moreover, the time it takes for these models to process videos is impractically long for real-world applications. This is because they lack the mechanisms to efficiently handle the temporal dimension of videos, which is essential for understanding the unfolding narrative and actions within the video content.

4.2 - Results on Video Question Answering

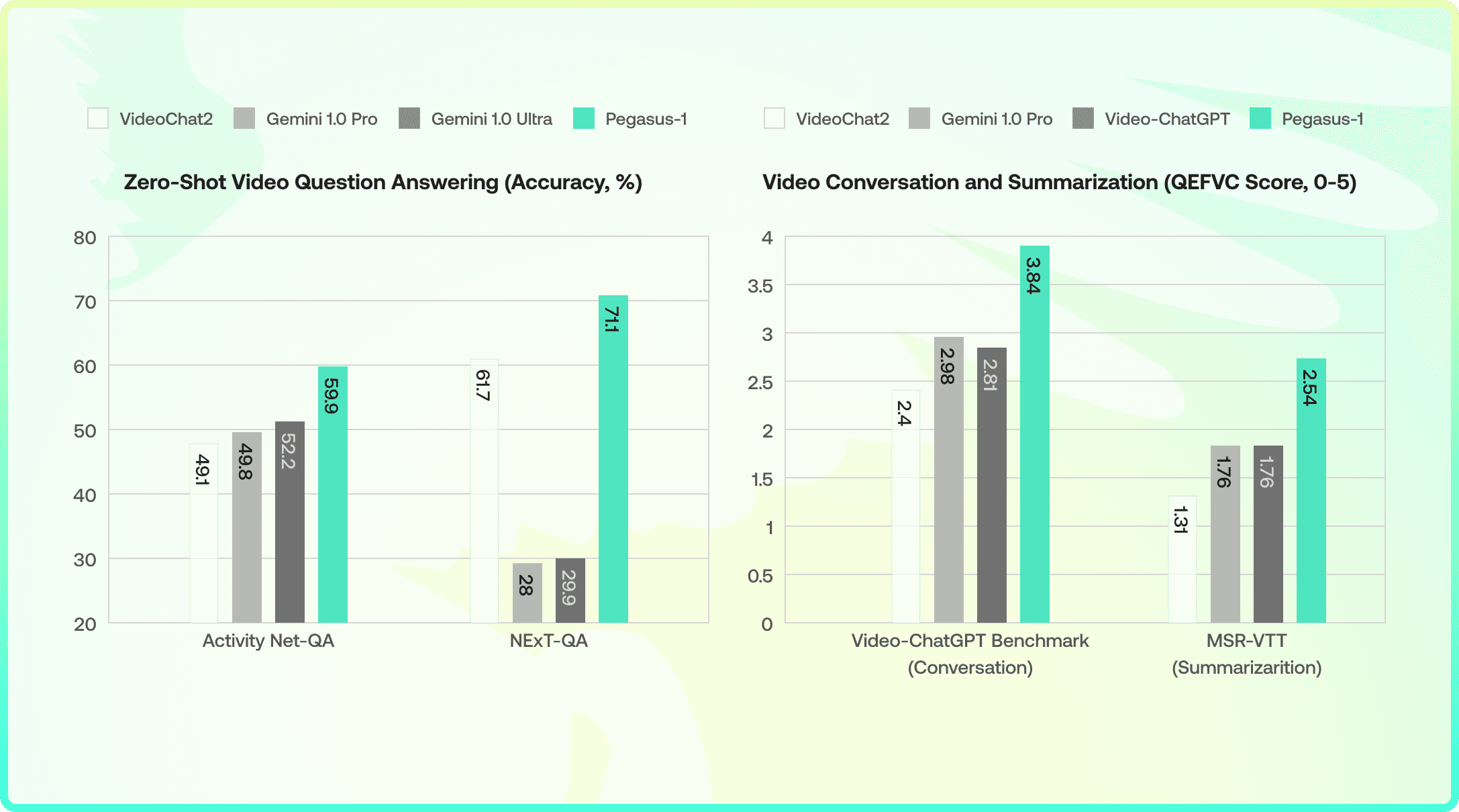

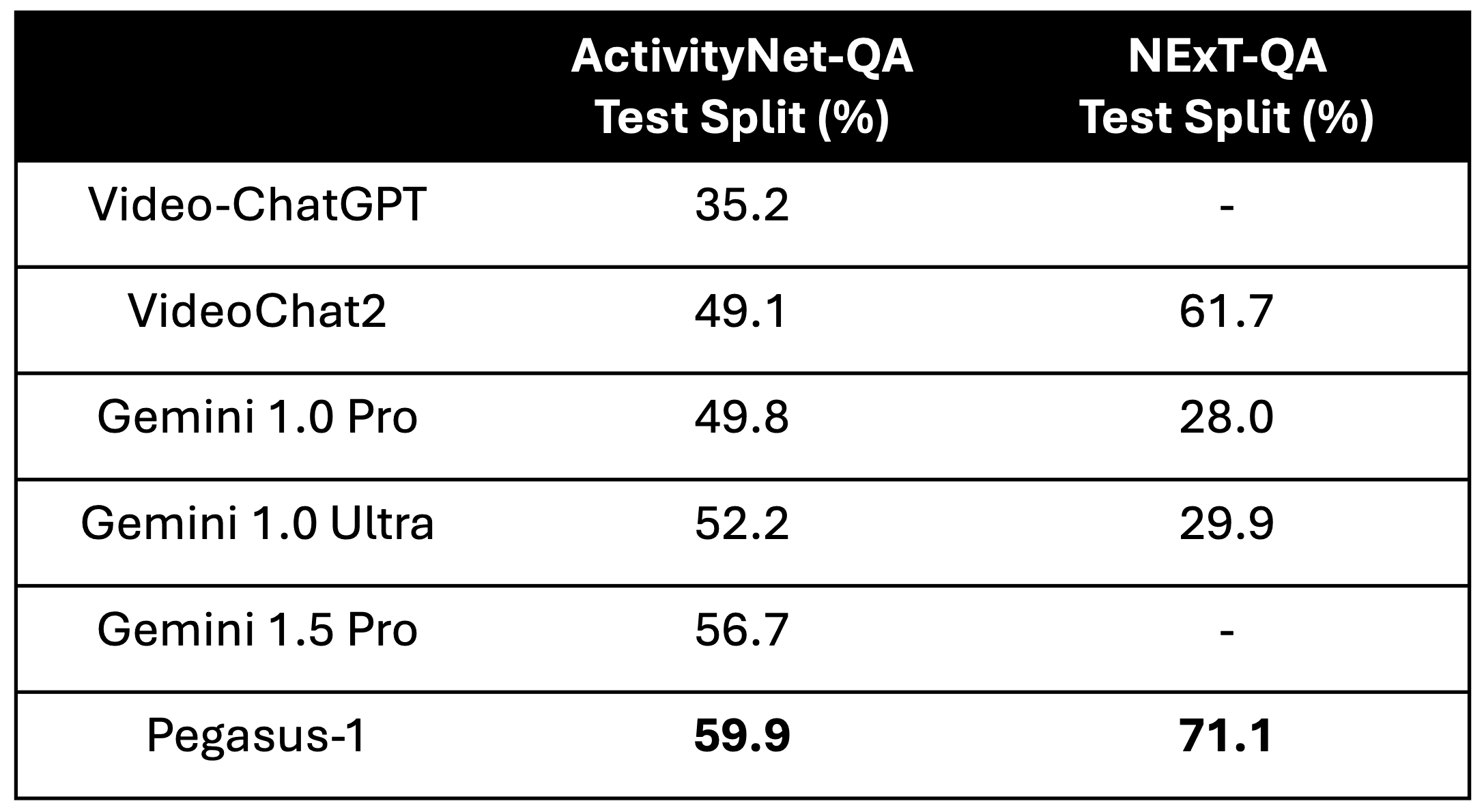

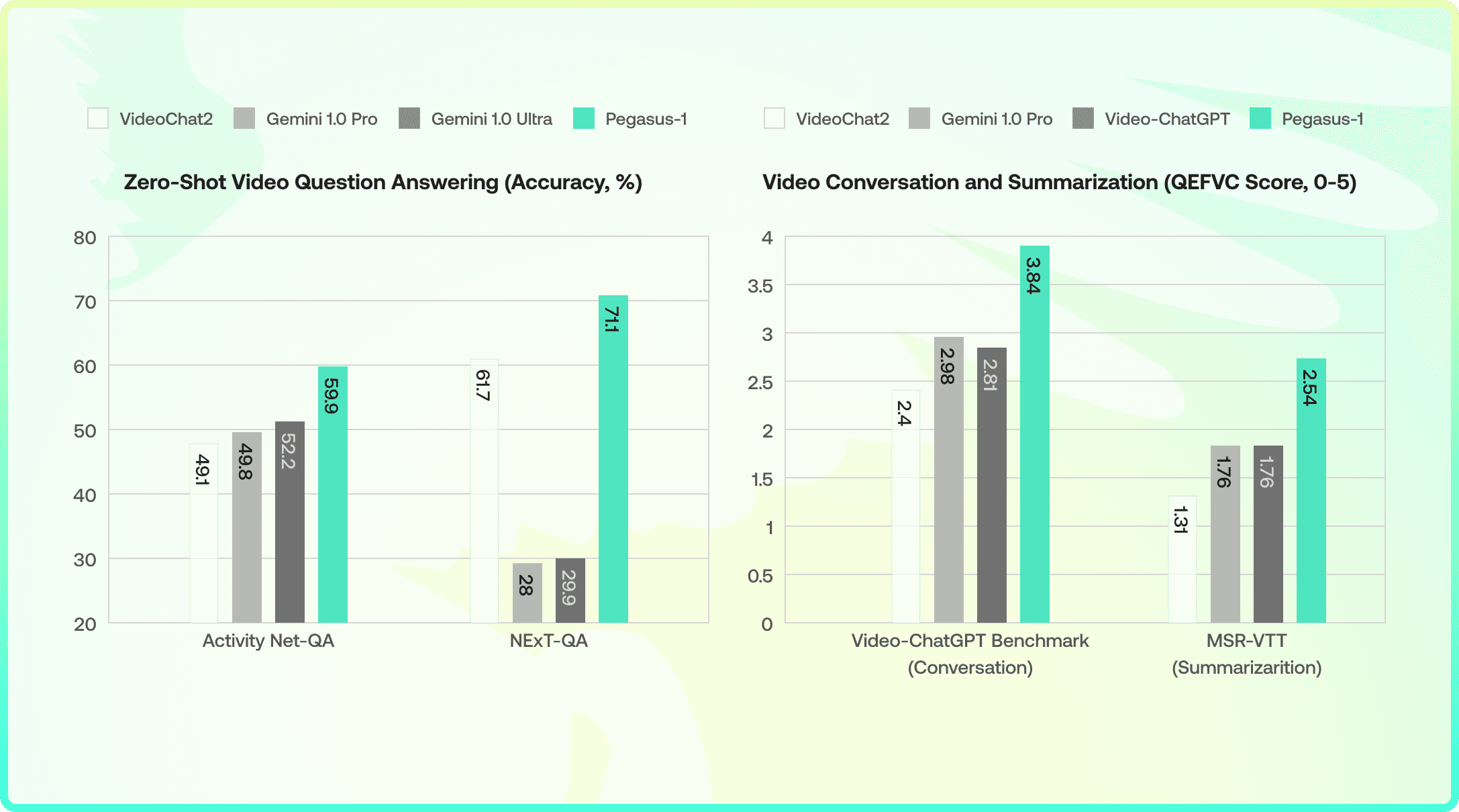

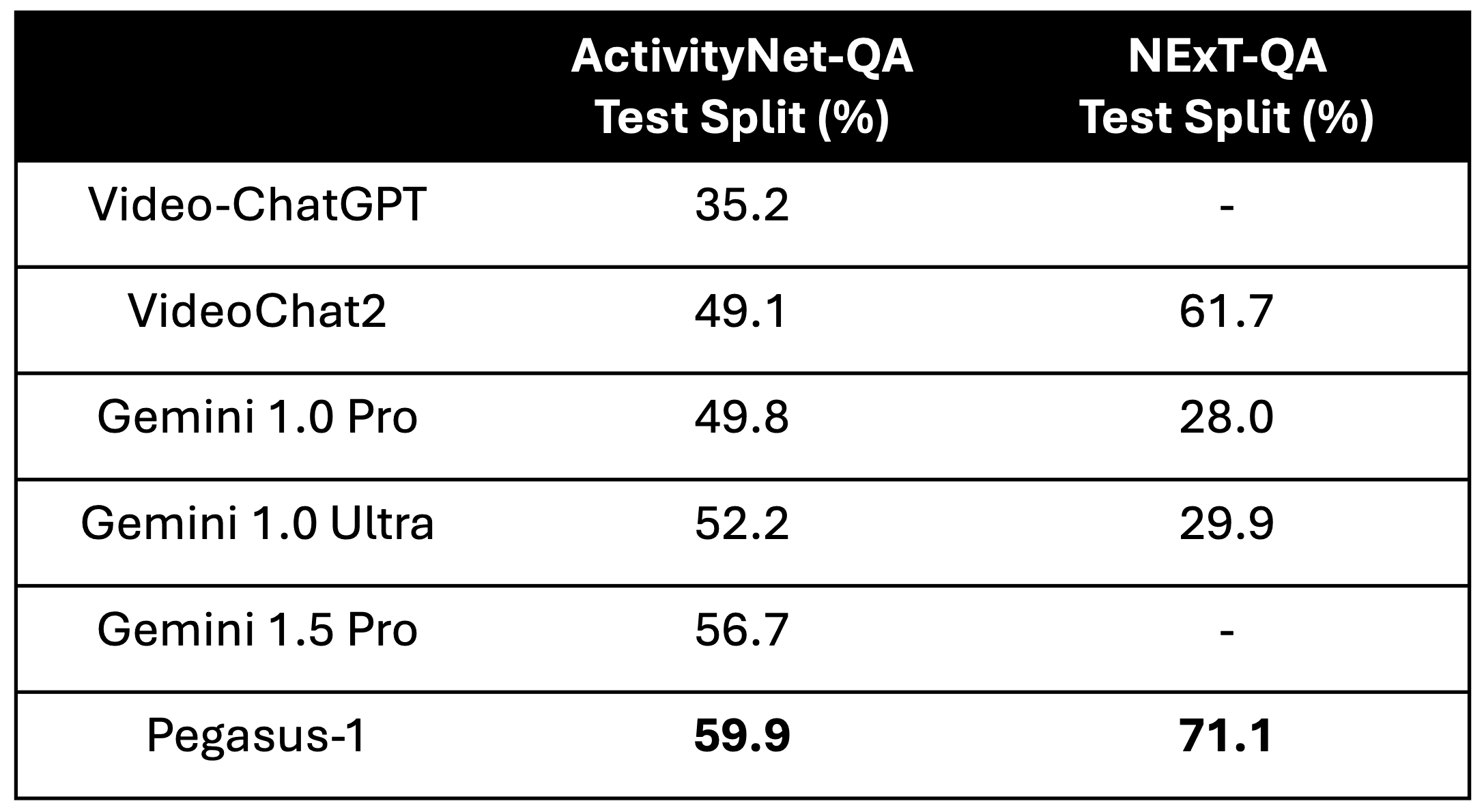

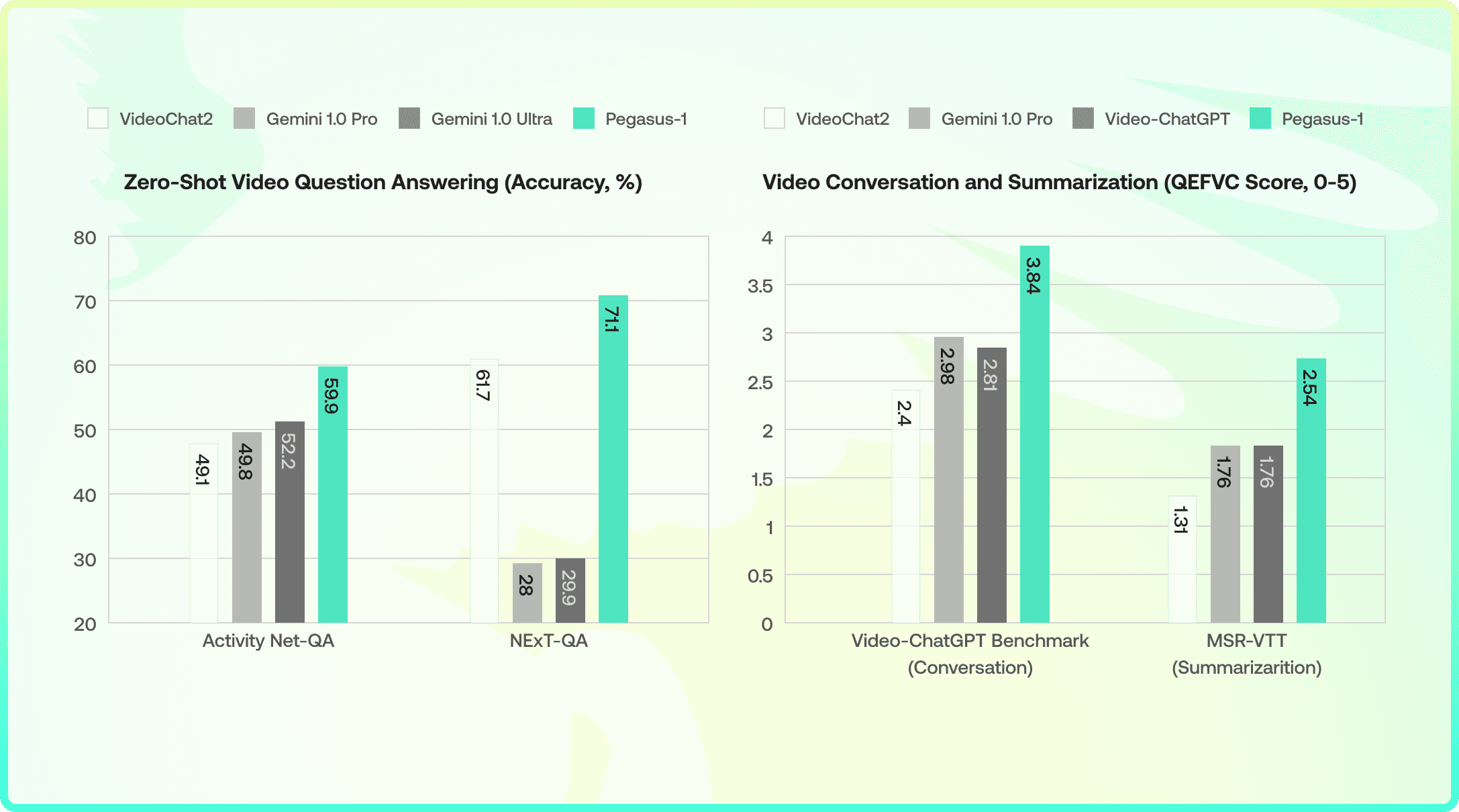

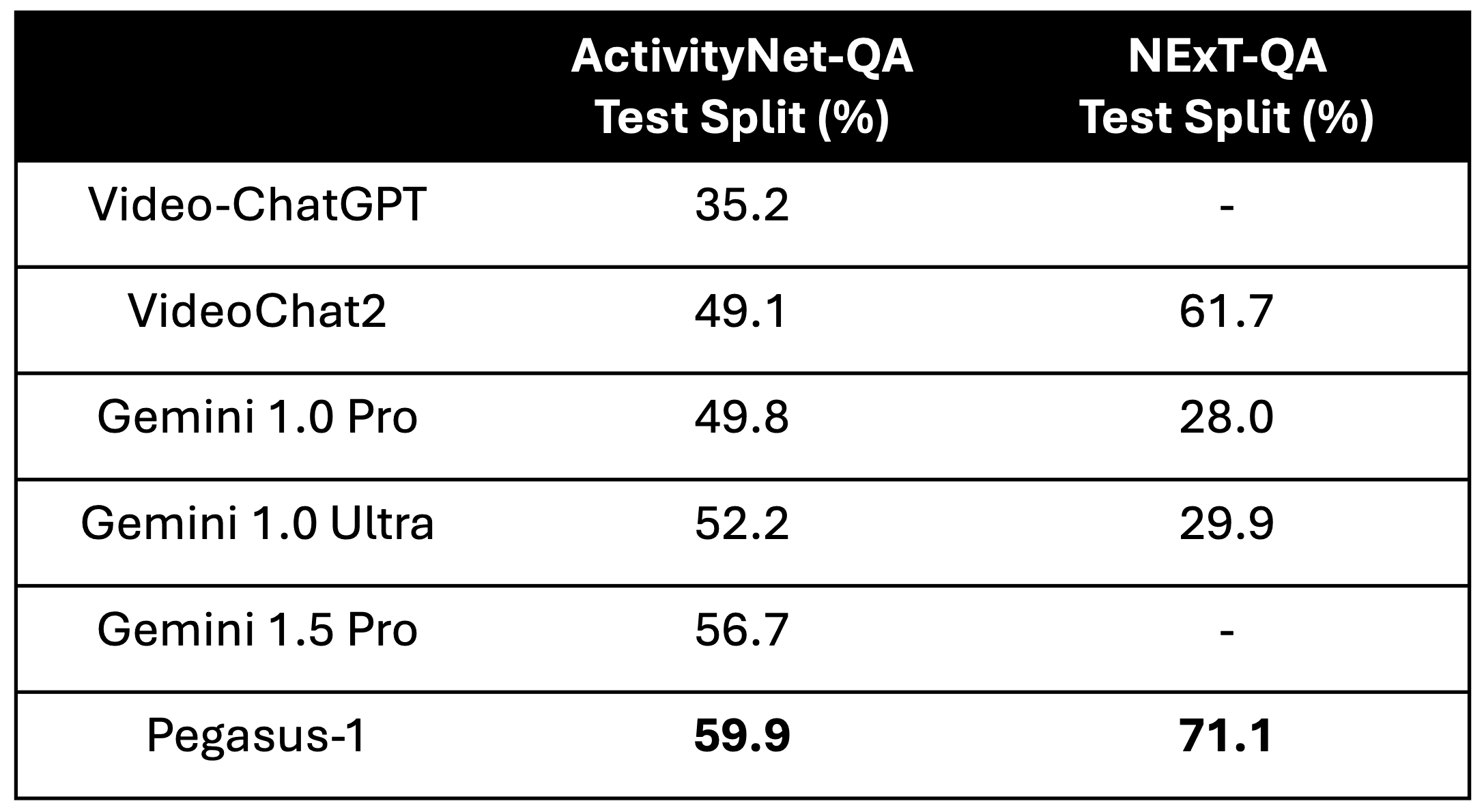

In Video Question Answering tasks, Pegasus-1's zero-shot performance on both ActivityNet-QA and NExT-QA datasets is particularly noteworthy. Pegasus-1 demonstrates a remarkable ability to generalize and understand various videos to accurately answer video-related questions without task-specific training.

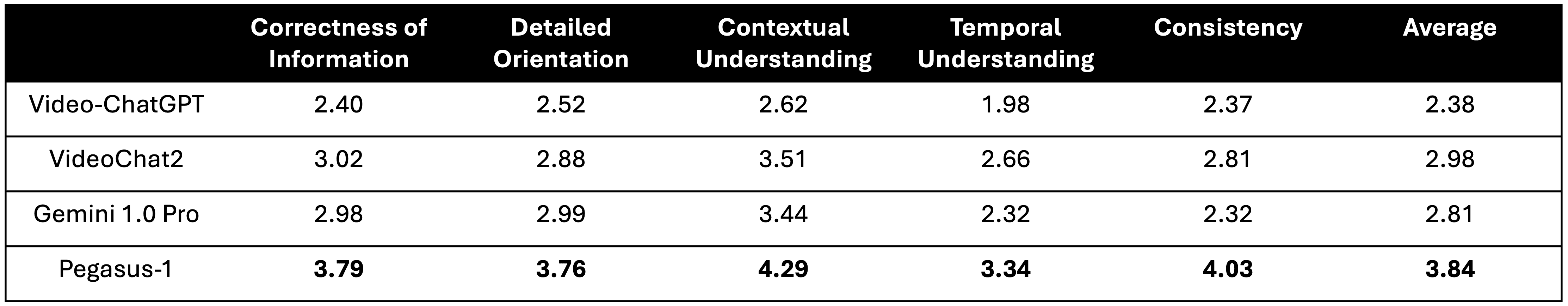

4.3 - Results on Video Conversations

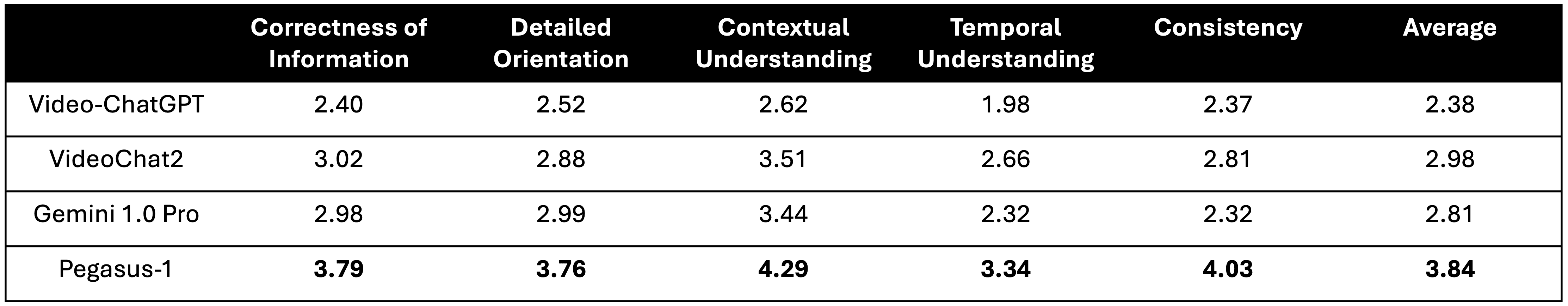

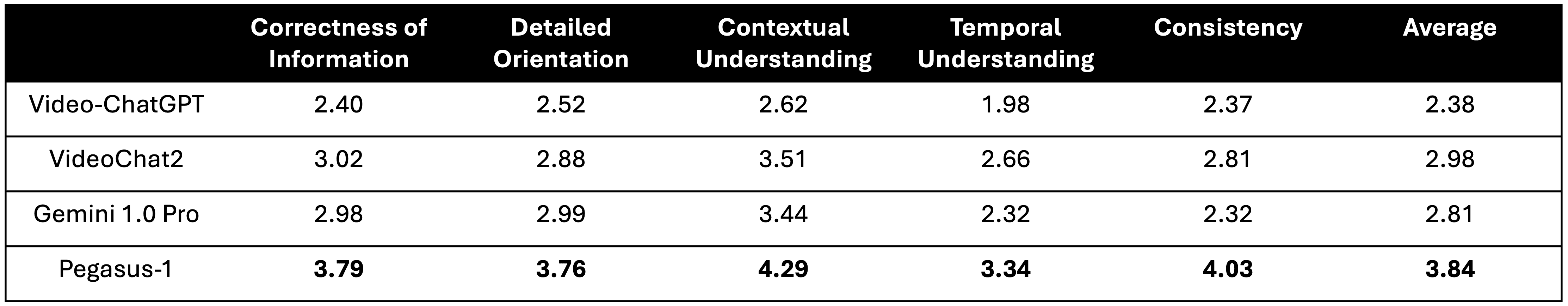

The Video-ChatGPT Benchmark (also known as QEFVC) results highlight Pegasus-1's adeptness in handling video conversations. Pegasus-1 leads the pack with scores that reflect its proficiency in Correctness, Detail, Context, Temporal understanding, and Consistency. Notably, Pegasus-1 scored 3.79 in Correctness and 4.29 in Detail, showcasing its nuanced grasp of video conversations and the context in which they occur.

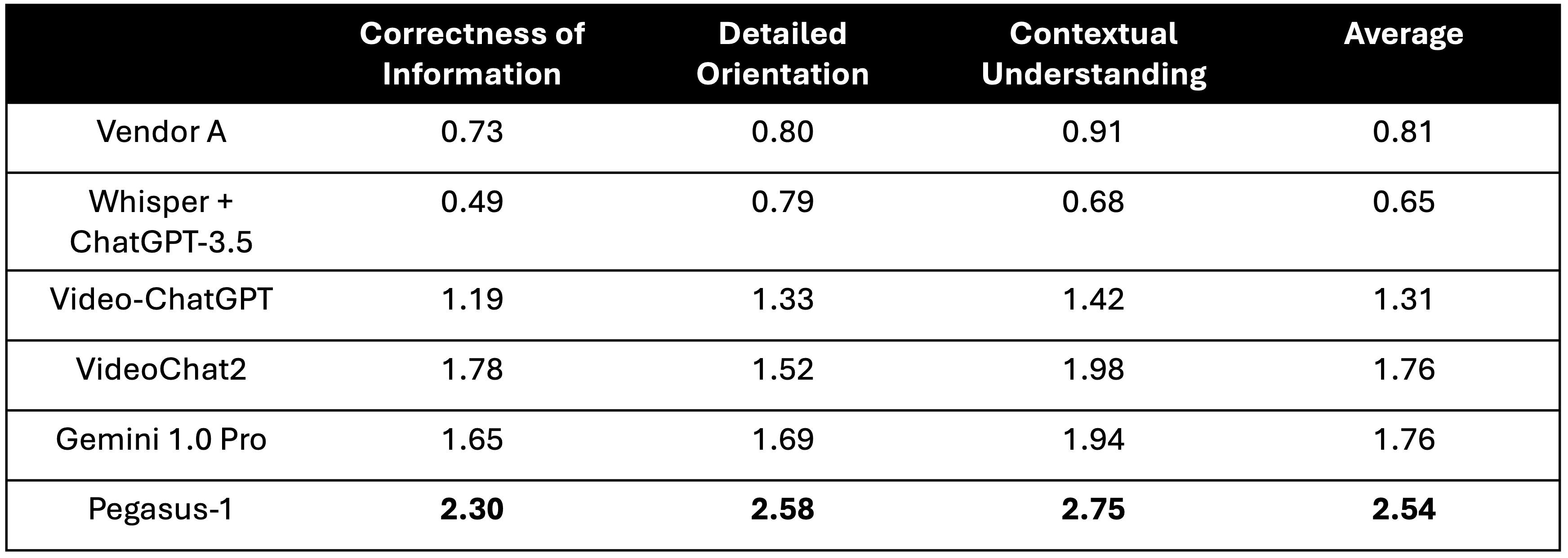

4.4 - Results on Video Summarization

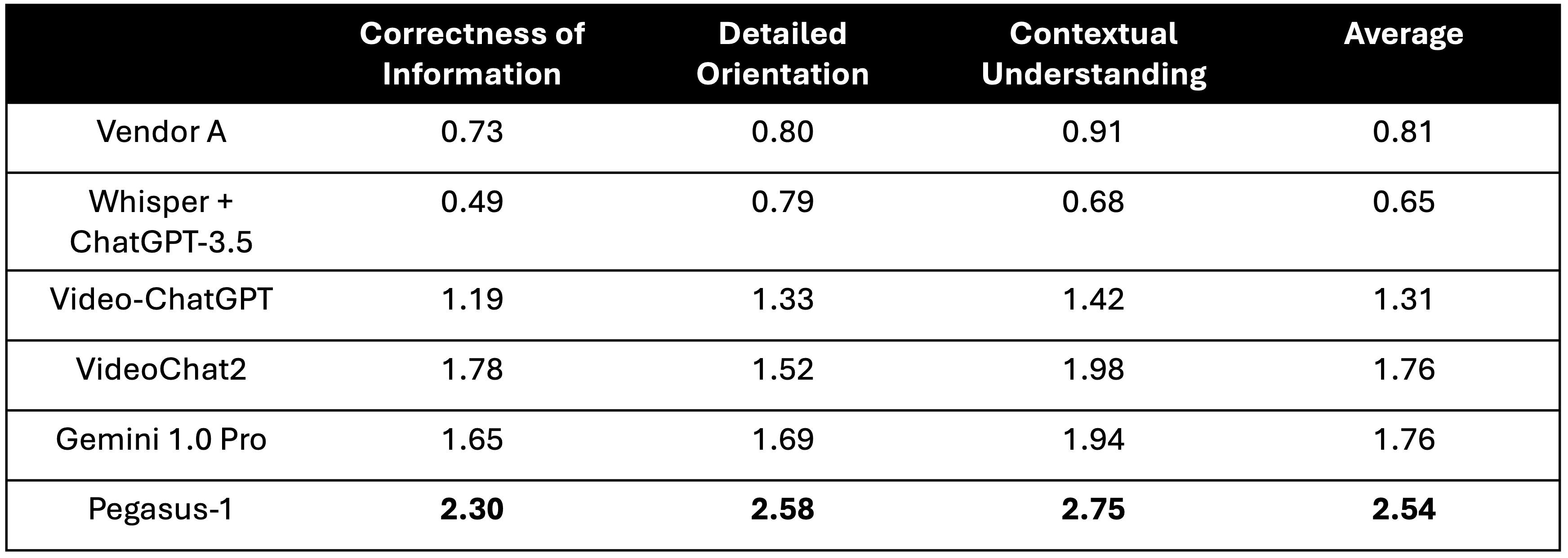

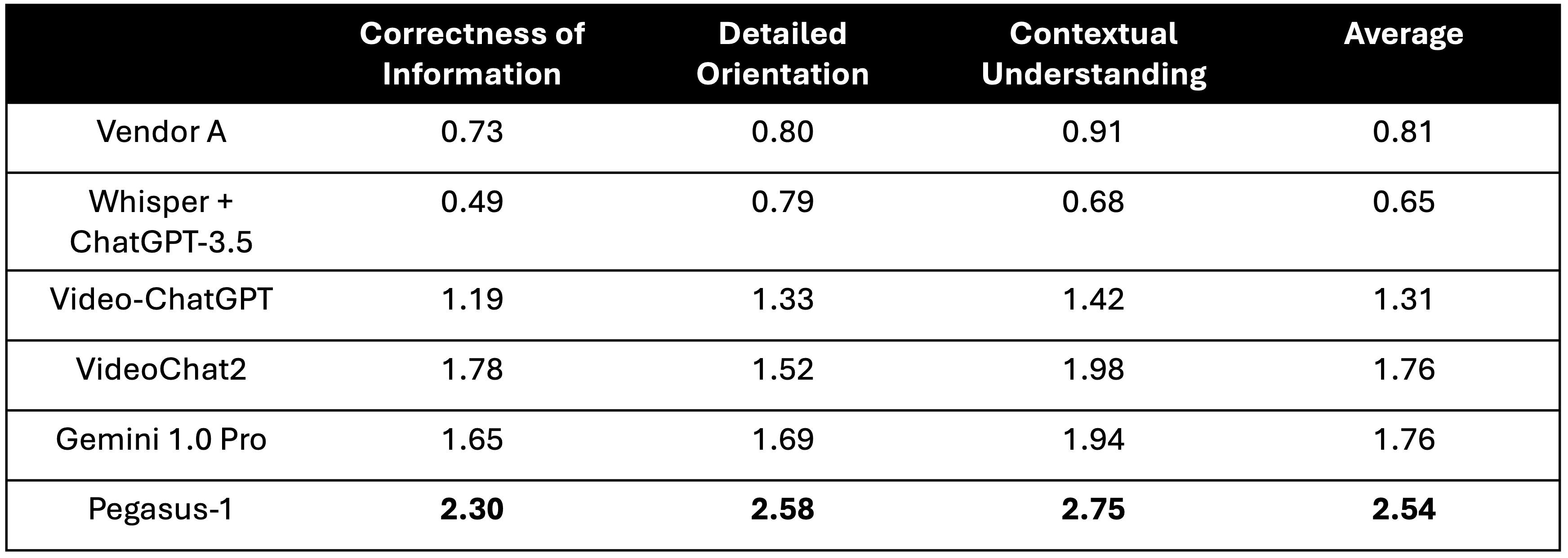

Lastly, Pegasus-1 has demonstrated superior performance on summarizing videos. We compare Pegasus-1 against its competitors on MSR-VTT Dataset, using VideoChatGPT Benchmark’s scoring scheme (”Temporal Understanding” and “Consistency” are omitted due to the nature of summarization). As shown above, Pegasus-1 outperforms the baseline models all metrics by a significant margin.

Through these benchmarks, Pegasus-1 has established itself as a formidable contender in the video-language modeling arena, setting new standards for zero-shot performance and generalization in video understanding tasks.

5 - Qualitative Examples

These are sample examples that are randomly selected from diverse domains to illustrate the capabilities of Pegasus-1.

E-Learning Video

Generated Title

Setting Up a Tent: A Step-by-Step Guide to Camping with Parks Canada

Generated Topic

Camping: Setting Up and Securing a Tent with Parks Canada

Generated Hashtags

#camping, #tent, #set up, #fire pit, #security, #learn to camp, #Parks Canada

Generated Chapters

Step 1: Set up 00:00:00 - 00:00:08

Choosing a campsite and setting up the tent.

Step 2: Build a fire pit 00:00:09 - 00:00:36

Creating a fire pit for cooking and warmth.

Step 3: Set up your tent 00:00:37 - 00:01:14

Unfolding and positioning the tent.

Step 4: Security 00:01:14 - 00:01:48

Securing the tent to the ground.

Step 5: Attach the tent fly 00:01:49 - 00:01:54

Adding the rainfly to the tent.

Step 6: Add extra comfort 00:01:55 - 00:02:16

Setting up bedding and other gear inside the tent.

Step 7: Take down your tent 00:02:17 - 00:02:30

Dismantling and packing away the tent.

Step 8: Pack up 00:02:31 - 00:02:58

Folding and storing the tent in its carrying case.

Step 9: Learn about program 00:03:00 - 00:03:06

Promoting Parks Canada's educational programs.

Ad Video

Generating Answers based on Custom Prompts

Prompt: Give me an analysis how the advertisement uses facial expressions to convey their message

Output: The advertisement strategically utilizes facial expressions to effectively convey the message of elegance, joy, and luxury associated with the brand. The models in the advertisement are portrayed with confident, warm smiles, which suggests that the Bvlgari jewelry brings happiness and enhances the wearer's beauty. These expressions convey a sense of positivity and create an emotional connection with the audience.

Furthermore, the calm and composed expressions of the models reflect the timeless and sophisticated nature of the brand. By showcasing the jewelry on the models' faces in close-up shots, the advertisement emphasizes the connection between the product and the positive emotions displayed. This technique aims to evoke desire and aspiration in the viewers, associating the brand with a sense of luxury and elegance.

From the base description of the video, it can be inferred that the advertisement features a woman showcasing various pieces of Bvlgari jewelry in different settings. The video starts with the woman standing next to a tree, wearing a necklace and earrings, with the Bvlgari logo visible on the trunk. She is then seen walking down a tree-lined driveway, wearing a different dress but the same necklace. The setting transitions to an opulent interior, where the woman is shown examining and wearing a ring, a bracelet, and a necklace with a large pendant.

The video also includes scenes of a peacock and a peacock feather, which may symbolize luxury and elegance. Towards the end of the video, the woman is joined by another model, both wearing Bvlgari jewelry. The advertisement concludes with the Bvlgari logo, accompanied by the words 'Wonderful Extravaganza' and 'Bvlgari Rome', indicating the brand and the location associated with the advertisement.

In summary, the advertisement effectively utilizes facial expressions to convey a message of elegance, joy, and luxury. The models' confident and warm smiles, along with the close-up shots of their faces while wearing the jewelry, create an emotional connection with the audience. The overall visual elements of the advertisement, such as the luxurious settings and the presence of peacock symbolism, further enhance the perception of the brand's elegance and sophistication.

Movie Trailer Video

Generating Answers based on Custom Prompts

Prompt: Would you recommend this video to young children

Output: Based on my comprehensive analysis of the video, I would not recommend this video to young children. The video contains intense action scenes, violence, and mature themes that are not suitable for young viewers. The visual information suggests that the video is a trailer for a film or television series with a thrilling and action-packed narrative.

The video opens with a fiery orange title card, transitioning to a dark and atmospheric shot of a church interior illuminated by candles. A man in a suit is seen walking down the aisle, followed by another man in a similar suit. This scene is followed by a passionate embrace between a woman and a man, hinting at a romantic element in the story.

Throughout the trailer, there are various settings and scenes that suggest action and suspense. These include a confrontation between two men, a moment of contemplation while looking at a pocket watch, a tense exchange between characters, and a high-speed chase through city streets at night. There are also shots of men walking away from an explosion and a glimpse of a desert landscape. These elements indicate intense action sequences that may not be suitable for young children.

Additionally, there is a shot of a man looking at a gun, indicating the potential for violence or conflict in the storyline. The trailer concludes with shots of the Eiffel Tower at sunset and a confrontation between two men before revealing the title "IN MARC A DANCER" and the release date "MARCH 24".

In summary, the video contains intense action scenes, violence, and mature themes that are not suitable for young children. It is a thrilling and action-packed trailer for a film or television series.

6 - Limitations

Safety & Biases: Pegasus-1 is designed with safety mechanisms; however, as with any AI model, there is a risk of generating content that could be considered harmful or inappropriate without proper oversight and regulation. Our understanding of ethical and safety measures for video foundation models is ongoing. As we continue testing and gather feedback, a detailed evaluation and ethics report will be made available.

Video Duration: Our API supports videos ranging from 4 seconds to 20 minutes in length. This constraint is due to computational and memory considerations, which are common challenges in handling large-scale video data. As a result, users may need to segment longer videos into smaller parts to fully leverage the model's capabilities. We will work on natively supporting longer duration in future releases.

Hallucinations: Pegasus-1 can occasionally produce inaccurate outputs. While we have improved it from our alpha version to reduce hallucination, users should be mindful of this limitation, especially for tasks where high precision is required and factual correctness is critical.

7 - Closing Remarks

The journey of Pegasus-1 from its alpha to the beta version has been marked by significant enhancements. The meticulous improvements in training data quality, video processing capabilities, and advanced training techniques have culminated in a model that not only understands video content more deeply but also interacts in a conversational context with a level of sophistication that was previously unattainable.

The benchmark results speak for themselves, placing Pegasus-1 at the forefront of the industry, outperforming established models like Google's Gemini Pro and setting new standards in video QA and video conversational frameworks. These quantitative measures, alongside the qualitative improvements in world knowledge and detail recognition, underscore the transformative potential of Pegasus-1.

While we recognize the limitations of Pegasus-1, including safety concerns, video length constraints, and occasional hallucinations, these are areas of active research and development. Our commitment to improving Pegasus-1 remains steadfast as we aim to push the boundaries of video understanding technology.

Twelve Labs Team

This is a joint team effort across multiple functional groups including model and data (”core” indicates Core Contributor), engineering, product and business development. (First-name alphabetical order)

Model: Aiden Lee, Cooper Han, Flynn Jang (core), Jae Lee, Jay Yi (core), Jeff Kim, Jeremy Kim, Kyle Park, Lucas Lee, Mars Ha, Minjoon Seo, Ray Jung (core), William Go (core)

Data: Daniel Kim (core), Jay Suh (core)

Deployment: Abraham Jo, Ed Park, Hassan Kianinejad, SJ Kim, Tony Moon, Wade Jeong

Product: Andrei Popescu, Esther Kim, EK Yoon, Genie Heo, Henry Choi, Jenna Kang, Kevin Han, Noah Seo, Sunny Nguyen, Ryan Won, Yeonhoo Park

Business & Operations: Anthony Giuliani, Dave Chung, Hans Yoon, James Le, Jenny Ahn, June Lee, Maninder Saini, Meredith Sanders Soyoung Lee, Sue Kim, Travis Couture

Resources:

Link to sign up and play with our API

Link to the API documentation

Link to our Discord community to connect with fellow users and developers

If you use this model in your work, please use the following BibTeX citation and cite the author as Twelve Labs:

@misc{pegasus-1-beta, author = {Twelve Labs Team}, title = {Pegasus-1 Open Beta: Setting New Standards in Video-Language Modeling}, url = {https://www.twelvelabs.io/blog/pegasus-1-beta}, year = {2024}}}

Check out the Pegasus-1 technical report on arXiV and HuggingFace!

1 - Introduction

At Twelve Labs, our goal is to advance video understanding through the creation of innovative multimodal AI models. In a previous post, "Introducing Video-to-Text and Pegasus-1 (80B)," we introduced the alpha version of Pegasus-1. This foundational model can generate descriptive text from video input. Today, we're thrilled to announce the launch of Pegasus-1's open beta.

Pegasus-1 is designed to understand and articulate complex video content, transforming how we interact with and analyze multimedia. With roughly 17 billion parameters, this model is a significant progression in multimodal AI. It can process and generate language from video input with exceptional accuracy and detail.

In this update, we'll explore the enhancements made to Pegasus-1 since its alpha release. These include improvements in data quality, video processing, and training methods. We will also share benchmarking results against leading commercial and open-source models, demonstrating Pegasus-1's superior performance in video summarization, question answering, and conversations. Beyond quantitative metrics, Pegasus-1 also shows qualitative improvements through its enhanced world knowledge and ability to capture detailed visual information.

2 - Model Overview

As a quick recap, Pegasus-1 is a multimodal foundation model designed to bridge the gap between video content and language, enabling machines to interpret and generate text based on video input. The architecture of Pegasus-1 is composed of three main components:

The video encoder model processes the video input to generate rich embeddings from both video frames and audio speech recognition (ASR) data. These embeddings are dense representations that capture the visual and auditory essence of the video content.

The video-language alignment model maps the video embeddings to corresponding language embeddings, creating a shared space where video and text representations are aligned. This alignment is crucial for the model to understand the correspondence between what is seen in the video and the language that describes it.

The large language model decoder takes the aligned embeddings and user prompts to generate coherent and contextually relevant text output. This output can range from descriptive summaries to answers to specific questions about the video content.

Compared to the alpha version, the open-beta version of Pegasus-1 boasts approximately 17B parameters, making it a compact yet powerful tool for interpreting and generating text based on video data.

3 - Major Improvements

As we progress from the alpha to the open-beta version, we continue to refine and enhance the model to deliver even more accurate and detailed video-language understanding. These enhancements are driven by three key factors: high-quality data, optimized video processing, and refined training techniques.

3.1 - Data Improvement

In accordance with previous findings, we also find that the quality and granularity of the captions had a more significant impact on the model's performance than the sheer quantity of data. For instance, Pegasus-1, trained on 100,000 high-quality video-text pairs, easily outperforms the same architecture trained on a much larger dataset (10M+) with lower-quality captions.

With this experimental evidence in mind, we have designed an efficient data annotation pipeline to create high-quality video captions for the aforementioned 10M+ videos. Training on such a large amount of high quality video-text pairs, Pegasus attains foundational video understanding capabilities that are not observed in other models.

3.2 - Video Processing Improvement

We made substantial changes to the video processing pipeline to optimize both spatial and temporal resolution. We increased the number of patches per frame by 10x (spatial) and the number of frames by 1.5x (temporal), resulting in a 15x increase in the total number of patches per video. This enhancement allows Pegasus-1 to capture and convey more information per frame.

Pegasus-1 is now also able to better grasp the overall story and context of the video in a more coherent manner, as evidenced by both qualitative and quantitative analyses, particularly in question-answering datasets.

3.3 - Training Improvement

As a multimodal foundation model, Pegasus-1 is trained on massive multimodal datasets over multiple stages. However, multi-stage training often suffers from the phenomenon known as catastrophic forgetting. This occurs when a model, upon learning new information, rapidly forgets the old information it was previously trained on. This issue becomes even more pressing in multimodal models that undergo sequential training across modalities.

To address this, we employ a strategic training regimen that involves multiple stages - each meticulously designed to balance the acquisition of new knowledge with the preservation of previously learned information. The key to this approach lies in the selective updates (unfreezing) of model parameters and the careful adjustment of learning rates throughout the training process.

The open-beta version of Pegasus-1, compared to the alpha version, boasts enhanced capabilities, such as a heightened ability to capture fine-grained temporal moments and a reduced propensity for hallucination, resulting in increased robustness across diverse video domains. It also demonstrates expanded world knowledge and an improved capacity to list various moments in temporal order, rather than concentrating on a singular scene.

4 - Quantitative Benchmark Results

In our comprehensive benchmarking efforts, Pegasus-1 has been evaluated against a spectrum of both commercial and open-source models. This section will detail the performance of Pegasus-1 in comparison to these models across various video-language modeling tasks.

4.1 - Baseline Models

The baseline models against which Pegasus-1 was benchmarked include:

Gemini Pro (1.5): A commercial multimodal model from Google DeepMind known for its robust video-language capabilities, released in November 2023 and most recently updated in February 2024. We are comparing against its most recent version, namely Gemini Pro 1.5.

Whisper + ChatGPT-3.5 (OpenAI): This combination is one of the few widely adopted approaches to video summarization. By leveraging the state-of-the-art speech-to-text and large language models, summaries are derived primarily from the video’s auditory content. A significant drawback is the oversight of valuable visual information within the video.

Vendor A’s Summary API: Widely adopted commercial product for audio and video summary generation. Vendor A’s Summary API seems to be based only on transcription data and language models (akin to ASR+ChatGPT3.5) to output a video summary.

Video-ChatGPT: Developed by Maaz et al. (June 2023), it is a video language model with a chat interface. The model processes video frames to capture visual events within a video. Note that it does not utilize the conversational information within the video.

VideoChat2: Developed by Li et al. (November 2023), it is a state-of-the-art open-source multimodal LLM, which employs progressive multi-modal training with diverse instruction-tuning data.

We specifically excluded image-based vision-language models such as LLaVA and GPT-4V from our comparison because they lack native video processing capabilities, which is a critical requirement for the tasks we are evaluating. Specifically, here are their limitations.

Many of these models can only see a single image, which perform poorly on most video benchmark datasets.

Some of these models (e.g. GPT-4V) can see multiple images at once, but they can process only a few frames (10 or less) of a video at a time, which are not sufficient for most videos that are longer than 1 minute.

Image-based models especially exhibit limitations when dealing with the dynamic and fluid nature of video content, which is not easily observable when the models treat the input as a series of images rather than a coherent video.

Moreover, the time it takes for these models to process videos is impractically long for real-world applications. This is because they lack the mechanisms to efficiently handle the temporal dimension of videos, which is essential for understanding the unfolding narrative and actions within the video content.

4.2 - Results on Video Question Answering

In Video Question Answering tasks, Pegasus-1's zero-shot performance on both ActivityNet-QA and NExT-QA datasets is particularly noteworthy. Pegasus-1 demonstrates a remarkable ability to generalize and understand various videos to accurately answer video-related questions without task-specific training.

4.3 - Results on Video Conversations

The Video-ChatGPT Benchmark (also known as QEFVC) results highlight Pegasus-1's adeptness in handling video conversations. Pegasus-1 leads the pack with scores that reflect its proficiency in Correctness, Detail, Context, Temporal understanding, and Consistency. Notably, Pegasus-1 scored 3.79 in Correctness and 4.29 in Detail, showcasing its nuanced grasp of video conversations and the context in which they occur.

4.4 - Results on Video Summarization

Lastly, Pegasus-1 has demonstrated superior performance on summarizing videos. We compare Pegasus-1 against its competitors on MSR-VTT Dataset, using VideoChatGPT Benchmark’s scoring scheme (”Temporal Understanding” and “Consistency” are omitted due to the nature of summarization). As shown above, Pegasus-1 outperforms the baseline models all metrics by a significant margin.

Through these benchmarks, Pegasus-1 has established itself as a formidable contender in the video-language modeling arena, setting new standards for zero-shot performance and generalization in video understanding tasks.

5 - Qualitative Examples

These are sample examples that are randomly selected from diverse domains to illustrate the capabilities of Pegasus-1.

E-Learning Video

Generated Title

Setting Up a Tent: A Step-by-Step Guide to Camping with Parks Canada

Generated Topic

Camping: Setting Up and Securing a Tent with Parks Canada

Generated Hashtags

#camping, #tent, #set up, #fire pit, #security, #learn to camp, #Parks Canada

Generated Chapters

Step 1: Set up 00:00:00 - 00:00:08

Choosing a campsite and setting up the tent.

Step 2: Build a fire pit 00:00:09 - 00:00:36

Creating a fire pit for cooking and warmth.

Step 3: Set up your tent 00:00:37 - 00:01:14

Unfolding and positioning the tent.

Step 4: Security 00:01:14 - 00:01:48

Securing the tent to the ground.

Step 5: Attach the tent fly 00:01:49 - 00:01:54

Adding the rainfly to the tent.

Step 6: Add extra comfort 00:01:55 - 00:02:16

Setting up bedding and other gear inside the tent.

Step 7: Take down your tent 00:02:17 - 00:02:30

Dismantling and packing away the tent.

Step 8: Pack up 00:02:31 - 00:02:58

Folding and storing the tent in its carrying case.

Step 9: Learn about program 00:03:00 - 00:03:06

Promoting Parks Canada's educational programs.

Ad Video

Generating Answers based on Custom Prompts

Prompt: Give me an analysis how the advertisement uses facial expressions to convey their message

Output: The advertisement strategically utilizes facial expressions to effectively convey the message of elegance, joy, and luxury associated with the brand. The models in the advertisement are portrayed with confident, warm smiles, which suggests that the Bvlgari jewelry brings happiness and enhances the wearer's beauty. These expressions convey a sense of positivity and create an emotional connection with the audience.

Furthermore, the calm and composed expressions of the models reflect the timeless and sophisticated nature of the brand. By showcasing the jewelry on the models' faces in close-up shots, the advertisement emphasizes the connection between the product and the positive emotions displayed. This technique aims to evoke desire and aspiration in the viewers, associating the brand with a sense of luxury and elegance.

From the base description of the video, it can be inferred that the advertisement features a woman showcasing various pieces of Bvlgari jewelry in different settings. The video starts with the woman standing next to a tree, wearing a necklace and earrings, with the Bvlgari logo visible on the trunk. She is then seen walking down a tree-lined driveway, wearing a different dress but the same necklace. The setting transitions to an opulent interior, where the woman is shown examining and wearing a ring, a bracelet, and a necklace with a large pendant.

The video also includes scenes of a peacock and a peacock feather, which may symbolize luxury and elegance. Towards the end of the video, the woman is joined by another model, both wearing Bvlgari jewelry. The advertisement concludes with the Bvlgari logo, accompanied by the words 'Wonderful Extravaganza' and 'Bvlgari Rome', indicating the brand and the location associated with the advertisement.

In summary, the advertisement effectively utilizes facial expressions to convey a message of elegance, joy, and luxury. The models' confident and warm smiles, along with the close-up shots of their faces while wearing the jewelry, create an emotional connection with the audience. The overall visual elements of the advertisement, such as the luxurious settings and the presence of peacock symbolism, further enhance the perception of the brand's elegance and sophistication.

Movie Trailer Video

Generating Answers based on Custom Prompts

Prompt: Would you recommend this video to young children

Output: Based on my comprehensive analysis of the video, I would not recommend this video to young children. The video contains intense action scenes, violence, and mature themes that are not suitable for young viewers. The visual information suggests that the video is a trailer for a film or television series with a thrilling and action-packed narrative.

The video opens with a fiery orange title card, transitioning to a dark and atmospheric shot of a church interior illuminated by candles. A man in a suit is seen walking down the aisle, followed by another man in a similar suit. This scene is followed by a passionate embrace between a woman and a man, hinting at a romantic element in the story.

Throughout the trailer, there are various settings and scenes that suggest action and suspense. These include a confrontation between two men, a moment of contemplation while looking at a pocket watch, a tense exchange between characters, and a high-speed chase through city streets at night. There are also shots of men walking away from an explosion and a glimpse of a desert landscape. These elements indicate intense action sequences that may not be suitable for young children.

Additionally, there is a shot of a man looking at a gun, indicating the potential for violence or conflict in the storyline. The trailer concludes with shots of the Eiffel Tower at sunset and a confrontation between two men before revealing the title "IN MARC A DANCER" and the release date "MARCH 24".

In summary, the video contains intense action scenes, violence, and mature themes that are not suitable for young children. It is a thrilling and action-packed trailer for a film or television series.

6 - Limitations

Safety & Biases: Pegasus-1 is designed with safety mechanisms; however, as with any AI model, there is a risk of generating content that could be considered harmful or inappropriate without proper oversight and regulation. Our understanding of ethical and safety measures for video foundation models is ongoing. As we continue testing and gather feedback, a detailed evaluation and ethics report will be made available.

Video Duration: Our API supports videos ranging from 4 seconds to 20 minutes in length. This constraint is due to computational and memory considerations, which are common challenges in handling large-scale video data. As a result, users may need to segment longer videos into smaller parts to fully leverage the model's capabilities. We will work on natively supporting longer duration in future releases.

Hallucinations: Pegasus-1 can occasionally produce inaccurate outputs. While we have improved it from our alpha version to reduce hallucination, users should be mindful of this limitation, especially for tasks where high precision is required and factual correctness is critical.

7 - Closing Remarks

The journey of Pegasus-1 from its alpha to the beta version has been marked by significant enhancements. The meticulous improvements in training data quality, video processing capabilities, and advanced training techniques have culminated in a model that not only understands video content more deeply but also interacts in a conversational context with a level of sophistication that was previously unattainable.

The benchmark results speak for themselves, placing Pegasus-1 at the forefront of the industry, outperforming established models like Google's Gemini Pro and setting new standards in video QA and video conversational frameworks. These quantitative measures, alongside the qualitative improvements in world knowledge and detail recognition, underscore the transformative potential of Pegasus-1.

While we recognize the limitations of Pegasus-1, including safety concerns, video length constraints, and occasional hallucinations, these are areas of active research and development. Our commitment to improving Pegasus-1 remains steadfast as we aim to push the boundaries of video understanding technology.

Twelve Labs Team

This is a joint team effort across multiple functional groups including model and data (”core” indicates Core Contributor), engineering, product and business development. (First-name alphabetical order)

Model: Aiden Lee, Cooper Han, Flynn Jang (core), Jae Lee, Jay Yi (core), Jeff Kim, Jeremy Kim, Kyle Park, Lucas Lee, Mars Ha, Minjoon Seo, Ray Jung (core), William Go (core)

Data: Daniel Kim (core), Jay Suh (core)

Deployment: Abraham Jo, Ed Park, Hassan Kianinejad, SJ Kim, Tony Moon, Wade Jeong

Product: Andrei Popescu, Esther Kim, EK Yoon, Genie Heo, Henry Choi, Jenna Kang, Kevin Han, Noah Seo, Sunny Nguyen, Ryan Won, Yeonhoo Park

Business & Operations: Anthony Giuliani, Dave Chung, Hans Yoon, James Le, Jenny Ahn, June Lee, Maninder Saini, Meredith Sanders Soyoung Lee, Sue Kim, Travis Couture

Resources:

Link to sign up and play with our API

Link to the API documentation

Link to our Discord community to connect with fellow users and developers

If you use this model in your work, please use the following BibTeX citation and cite the author as Twelve Labs:

@misc{pegasus-1-beta, author = {Twelve Labs Team}, title = {Pegasus-1 Open Beta: Setting New Standards in Video-Language Modeling}, url = {https://www.twelvelabs.io/blog/pegasus-1-beta}, year = {2024}}}

Check out the Pegasus-1 technical report on arXiV and HuggingFace!

1 - Introduction

At Twelve Labs, our goal is to advance video understanding through the creation of innovative multimodal AI models. In a previous post, "Introducing Video-to-Text and Pegasus-1 (80B)," we introduced the alpha version of Pegasus-1. This foundational model can generate descriptive text from video input. Today, we're thrilled to announce the launch of Pegasus-1's open beta.

Pegasus-1 is designed to understand and articulate complex video content, transforming how we interact with and analyze multimedia. With roughly 17 billion parameters, this model is a significant progression in multimodal AI. It can process and generate language from video input with exceptional accuracy and detail.

In this update, we'll explore the enhancements made to Pegasus-1 since its alpha release. These include improvements in data quality, video processing, and training methods. We will also share benchmarking results against leading commercial and open-source models, demonstrating Pegasus-1's superior performance in video summarization, question answering, and conversations. Beyond quantitative metrics, Pegasus-1 also shows qualitative improvements through its enhanced world knowledge and ability to capture detailed visual information.

2 - Model Overview

As a quick recap, Pegasus-1 is a multimodal foundation model designed to bridge the gap between video content and language, enabling machines to interpret and generate text based on video input. The architecture of Pegasus-1 is composed of three main components:

The video encoder model processes the video input to generate rich embeddings from both video frames and audio speech recognition (ASR) data. These embeddings are dense representations that capture the visual and auditory essence of the video content.

The video-language alignment model maps the video embeddings to corresponding language embeddings, creating a shared space where video and text representations are aligned. This alignment is crucial for the model to understand the correspondence between what is seen in the video and the language that describes it.

The large language model decoder takes the aligned embeddings and user prompts to generate coherent and contextually relevant text output. This output can range from descriptive summaries to answers to specific questions about the video content.

Compared to the alpha version, the open-beta version of Pegasus-1 boasts approximately 17B parameters, making it a compact yet powerful tool for interpreting and generating text based on video data.

3 - Major Improvements

As we progress from the alpha to the open-beta version, we continue to refine and enhance the model to deliver even more accurate and detailed video-language understanding. These enhancements are driven by three key factors: high-quality data, optimized video processing, and refined training techniques.

3.1 - Data Improvement

In accordance with previous findings, we also find that the quality and granularity of the captions had a more significant impact on the model's performance than the sheer quantity of data. For instance, Pegasus-1, trained on 100,000 high-quality video-text pairs, easily outperforms the same architecture trained on a much larger dataset (10M+) with lower-quality captions.

With this experimental evidence in mind, we have designed an efficient data annotation pipeline to create high-quality video captions for the aforementioned 10M+ videos. Training on such a large amount of high quality video-text pairs, Pegasus attains foundational video understanding capabilities that are not observed in other models.

3.2 - Video Processing Improvement

We made substantial changes to the video processing pipeline to optimize both spatial and temporal resolution. We increased the number of patches per frame by 10x (spatial) and the number of frames by 1.5x (temporal), resulting in a 15x increase in the total number of patches per video. This enhancement allows Pegasus-1 to capture and convey more information per frame.

Pegasus-1 is now also able to better grasp the overall story and context of the video in a more coherent manner, as evidenced by both qualitative and quantitative analyses, particularly in question-answering datasets.

3.3 - Training Improvement

As a multimodal foundation model, Pegasus-1 is trained on massive multimodal datasets over multiple stages. However, multi-stage training often suffers from the phenomenon known as catastrophic forgetting. This occurs when a model, upon learning new information, rapidly forgets the old information it was previously trained on. This issue becomes even more pressing in multimodal models that undergo sequential training across modalities.

To address this, we employ a strategic training regimen that involves multiple stages - each meticulously designed to balance the acquisition of new knowledge with the preservation of previously learned information. The key to this approach lies in the selective updates (unfreezing) of model parameters and the careful adjustment of learning rates throughout the training process.

The open-beta version of Pegasus-1, compared to the alpha version, boasts enhanced capabilities, such as a heightened ability to capture fine-grained temporal moments and a reduced propensity for hallucination, resulting in increased robustness across diverse video domains. It also demonstrates expanded world knowledge and an improved capacity to list various moments in temporal order, rather than concentrating on a singular scene.

4 - Quantitative Benchmark Results

In our comprehensive benchmarking efforts, Pegasus-1 has been evaluated against a spectrum of both commercial and open-source models. This section will detail the performance of Pegasus-1 in comparison to these models across various video-language modeling tasks.

4.1 - Baseline Models

The baseline models against which Pegasus-1 was benchmarked include:

Gemini Pro (1.5): A commercial multimodal model from Google DeepMind known for its robust video-language capabilities, released in November 2023 and most recently updated in February 2024. We are comparing against its most recent version, namely Gemini Pro 1.5.

Whisper + ChatGPT-3.5 (OpenAI): This combination is one of the few widely adopted approaches to video summarization. By leveraging the state-of-the-art speech-to-text and large language models, summaries are derived primarily from the video’s auditory content. A significant drawback is the oversight of valuable visual information within the video.

Vendor A’s Summary API: Widely adopted commercial product for audio and video summary generation. Vendor A’s Summary API seems to be based only on transcription data and language models (akin to ASR+ChatGPT3.5) to output a video summary.

Video-ChatGPT: Developed by Maaz et al. (June 2023), it is a video language model with a chat interface. The model processes video frames to capture visual events within a video. Note that it does not utilize the conversational information within the video.

VideoChat2: Developed by Li et al. (November 2023), it is a state-of-the-art open-source multimodal LLM, which employs progressive multi-modal training with diverse instruction-tuning data.

We specifically excluded image-based vision-language models such as LLaVA and GPT-4V from our comparison because they lack native video processing capabilities, which is a critical requirement for the tasks we are evaluating. Specifically, here are their limitations.

Many of these models can only see a single image, which perform poorly on most video benchmark datasets.

Some of these models (e.g. GPT-4V) can see multiple images at once, but they can process only a few frames (10 or less) of a video at a time, which are not sufficient for most videos that are longer than 1 minute.

Image-based models especially exhibit limitations when dealing with the dynamic and fluid nature of video content, which is not easily observable when the models treat the input as a series of images rather than a coherent video.

Moreover, the time it takes for these models to process videos is impractically long for real-world applications. This is because they lack the mechanisms to efficiently handle the temporal dimension of videos, which is essential for understanding the unfolding narrative and actions within the video content.

4.2 - Results on Video Question Answering

In Video Question Answering tasks, Pegasus-1's zero-shot performance on both ActivityNet-QA and NExT-QA datasets is particularly noteworthy. Pegasus-1 demonstrates a remarkable ability to generalize and understand various videos to accurately answer video-related questions without task-specific training.

4.3 - Results on Video Conversations

The Video-ChatGPT Benchmark (also known as QEFVC) results highlight Pegasus-1's adeptness in handling video conversations. Pegasus-1 leads the pack with scores that reflect its proficiency in Correctness, Detail, Context, Temporal understanding, and Consistency. Notably, Pegasus-1 scored 3.79 in Correctness and 4.29 in Detail, showcasing its nuanced grasp of video conversations and the context in which they occur.

4.4 - Results on Video Summarization

Lastly, Pegasus-1 has demonstrated superior performance on summarizing videos. We compare Pegasus-1 against its competitors on MSR-VTT Dataset, using VideoChatGPT Benchmark’s scoring scheme (”Temporal Understanding” and “Consistency” are omitted due to the nature of summarization). As shown above, Pegasus-1 outperforms the baseline models all metrics by a significant margin.

Through these benchmarks, Pegasus-1 has established itself as a formidable contender in the video-language modeling arena, setting new standards for zero-shot performance and generalization in video understanding tasks.

5 - Qualitative Examples

These are sample examples that are randomly selected from diverse domains to illustrate the capabilities of Pegasus-1.

E-Learning Video

Generated Title

Setting Up a Tent: A Step-by-Step Guide to Camping with Parks Canada

Generated Topic

Camping: Setting Up and Securing a Tent with Parks Canada

Generated Hashtags

#camping, #tent, #set up, #fire pit, #security, #learn to camp, #Parks Canada

Generated Chapters

Step 1: Set up 00:00:00 - 00:00:08

Choosing a campsite and setting up the tent.

Step 2: Build a fire pit 00:00:09 - 00:00:36

Creating a fire pit for cooking and warmth.

Step 3: Set up your tent 00:00:37 - 00:01:14

Unfolding and positioning the tent.

Step 4: Security 00:01:14 - 00:01:48

Securing the tent to the ground.

Step 5: Attach the tent fly 00:01:49 - 00:01:54

Adding the rainfly to the tent.

Step 6: Add extra comfort 00:01:55 - 00:02:16

Setting up bedding and other gear inside the tent.

Step 7: Take down your tent 00:02:17 - 00:02:30

Dismantling and packing away the tent.

Step 8: Pack up 00:02:31 - 00:02:58

Folding and storing the tent in its carrying case.

Step 9: Learn about program 00:03:00 - 00:03:06

Promoting Parks Canada's educational programs.

Ad Video

Generating Answers based on Custom Prompts

Prompt: Give me an analysis how the advertisement uses facial expressions to convey their message

Output: The advertisement strategically utilizes facial expressions to effectively convey the message of elegance, joy, and luxury associated with the brand. The models in the advertisement are portrayed with confident, warm smiles, which suggests that the Bvlgari jewelry brings happiness and enhances the wearer's beauty. These expressions convey a sense of positivity and create an emotional connection with the audience.

Furthermore, the calm and composed expressions of the models reflect the timeless and sophisticated nature of the brand. By showcasing the jewelry on the models' faces in close-up shots, the advertisement emphasizes the connection between the product and the positive emotions displayed. This technique aims to evoke desire and aspiration in the viewers, associating the brand with a sense of luxury and elegance.

From the base description of the video, it can be inferred that the advertisement features a woman showcasing various pieces of Bvlgari jewelry in different settings. The video starts with the woman standing next to a tree, wearing a necklace and earrings, with the Bvlgari logo visible on the trunk. She is then seen walking down a tree-lined driveway, wearing a different dress but the same necklace. The setting transitions to an opulent interior, where the woman is shown examining and wearing a ring, a bracelet, and a necklace with a large pendant.

The video also includes scenes of a peacock and a peacock feather, which may symbolize luxury and elegance. Towards the end of the video, the woman is joined by another model, both wearing Bvlgari jewelry. The advertisement concludes with the Bvlgari logo, accompanied by the words 'Wonderful Extravaganza' and 'Bvlgari Rome', indicating the brand and the location associated with the advertisement.

In summary, the advertisement effectively utilizes facial expressions to convey a message of elegance, joy, and luxury. The models' confident and warm smiles, along with the close-up shots of their faces while wearing the jewelry, create an emotional connection with the audience. The overall visual elements of the advertisement, such as the luxurious settings and the presence of peacock symbolism, further enhance the perception of the brand's elegance and sophistication.

Movie Trailer Video

Generating Answers based on Custom Prompts

Prompt: Would you recommend this video to young children

Output: Based on my comprehensive analysis of the video, I would not recommend this video to young children. The video contains intense action scenes, violence, and mature themes that are not suitable for young viewers. The visual information suggests that the video is a trailer for a film or television series with a thrilling and action-packed narrative.

The video opens with a fiery orange title card, transitioning to a dark and atmospheric shot of a church interior illuminated by candles. A man in a suit is seen walking down the aisle, followed by another man in a similar suit. This scene is followed by a passionate embrace between a woman and a man, hinting at a romantic element in the story.

Throughout the trailer, there are various settings and scenes that suggest action and suspense. These include a confrontation between two men, a moment of contemplation while looking at a pocket watch, a tense exchange between characters, and a high-speed chase through city streets at night. There are also shots of men walking away from an explosion and a glimpse of a desert landscape. These elements indicate intense action sequences that may not be suitable for young children.

Additionally, there is a shot of a man looking at a gun, indicating the potential for violence or conflict in the storyline. The trailer concludes with shots of the Eiffel Tower at sunset and a confrontation between two men before revealing the title "IN MARC A DANCER" and the release date "MARCH 24".

In summary, the video contains intense action scenes, violence, and mature themes that are not suitable for young children. It is a thrilling and action-packed trailer for a film or television series.

6 - Limitations

Safety & Biases: Pegasus-1 is designed with safety mechanisms; however, as with any AI model, there is a risk of generating content that could be considered harmful or inappropriate without proper oversight and regulation. Our understanding of ethical and safety measures for video foundation models is ongoing. As we continue testing and gather feedback, a detailed evaluation and ethics report will be made available.

Video Duration: Our API supports videos ranging from 4 seconds to 20 minutes in length. This constraint is due to computational and memory considerations, which are common challenges in handling large-scale video data. As a result, users may need to segment longer videos into smaller parts to fully leverage the model's capabilities. We will work on natively supporting longer duration in future releases.

Hallucinations: Pegasus-1 can occasionally produce inaccurate outputs. While we have improved it from our alpha version to reduce hallucination, users should be mindful of this limitation, especially for tasks where high precision is required and factual correctness is critical.

7 - Closing Remarks

The journey of Pegasus-1 from its alpha to the beta version has been marked by significant enhancements. The meticulous improvements in training data quality, video processing capabilities, and advanced training techniques have culminated in a model that not only understands video content more deeply but also interacts in a conversational context with a level of sophistication that was previously unattainable.

The benchmark results speak for themselves, placing Pegasus-1 at the forefront of the industry, outperforming established models like Google's Gemini Pro and setting new standards in video QA and video conversational frameworks. These quantitative measures, alongside the qualitative improvements in world knowledge and detail recognition, underscore the transformative potential of Pegasus-1.

While we recognize the limitations of Pegasus-1, including safety concerns, video length constraints, and occasional hallucinations, these are areas of active research and development. Our commitment to improving Pegasus-1 remains steadfast as we aim to push the boundaries of video understanding technology.

Twelve Labs Team

This is a joint team effort across multiple functional groups including model and data (”core” indicates Core Contributor), engineering, product and business development. (First-name alphabetical order)

Model: Aiden Lee, Cooper Han, Flynn Jang (core), Jae Lee, Jay Yi (core), Jeff Kim, Jeremy Kim, Kyle Park, Lucas Lee, Mars Ha, Minjoon Seo, Ray Jung (core), William Go (core)

Data: Daniel Kim (core), Jay Suh (core)

Deployment: Abraham Jo, Ed Park, Hassan Kianinejad, SJ Kim, Tony Moon, Wade Jeong

Product: Andrei Popescu, Esther Kim, EK Yoon, Genie Heo, Henry Choi, Jenna Kang, Kevin Han, Noah Seo, Sunny Nguyen, Ryan Won, Yeonhoo Park

Business & Operations: Anthony Giuliani, Dave Chung, Hans Yoon, James Le, Jenny Ahn, June Lee, Maninder Saini, Meredith Sanders Soyoung Lee, Sue Kim, Travis Couture

Resources:

Link to sign up and play with our API

Link to the API documentation

Link to our Discord community to connect with fellow users and developers

If you use this model in your work, please use the following BibTeX citation and cite the author as Twelve Labs:

@misc{pegasus-1-beta, author = {Twelve Labs Team}, title = {Pegasus-1 Open Beta: Setting New Standards in Video-Language Modeling}, url = {https://www.twelvelabs.io/blog/pegasus-1-beta}, year = {2024}}}

Related articles

Platform

Enterprise

© 2021

-

2026

TwelveLabs, Inc. All Rights Reserved

Platform

Enterprise

© 2021

-

2026

TwelveLabs, Inc. All Rights Reserved

Platform

Enterprise

© 2021

-

2026

TwelveLabs, Inc. All Rights Reserved