
Video vs Text for Public Sector: A Video-Native Case For Spatio-Temporal Reasoning with Marengo and Pegasus

Mike Mascari

General-purpose LLMs struggle with mission-critical video analysis because they lose temporal context through frame sampling and can't reliably reason over time; yet most DoD and Intelligence Community workloads depend on understanding event sequences, causality, and patterns across hours of footage. Specialized video models like TwelveLabs' Marengo (perception/retrieval) and Pegasus (reasoning/reporting) are purpose-built to preserve spatiotemporal information and produce grounded, time-aligned intelligence products that analysts can trust and audit. When selecting video AI for public sector missions, the key question isn't "Can it describe a scene?" but "Can it reason over timelines and produce evidence-backed outputs?"


Mar 24, 2026

12 Minutes


Video is not text. It’s a timeline.

If you work in DoD or the Intelligence Community, you already know the uncomfortable truth: we are collecting more video than humans can watch.

ISR platforms, wide-area sensors, fixed cameras, body-worn cameras, drones: everything is becoming a video stream. And the hard part isn’t collecting the footage; it’s turning raw motion imagery into timely, trustworthy intelligence products.

Public reports have described this as an “information deluge” in military intelligence, where analysts risk becoming overwhelmed by the scale of modern collection.

So it’s natural that teams ask: “Can we just put this video into an LLM?”

Sometimes you can get a decent answer for a short clip. But for mission workloads involving hours of footage, multiple feeds, and real timeline questions (“what happened before/after?”, “who interacted with whom?”, “what changed?”), general-purpose LLMs fail in predictable ways.

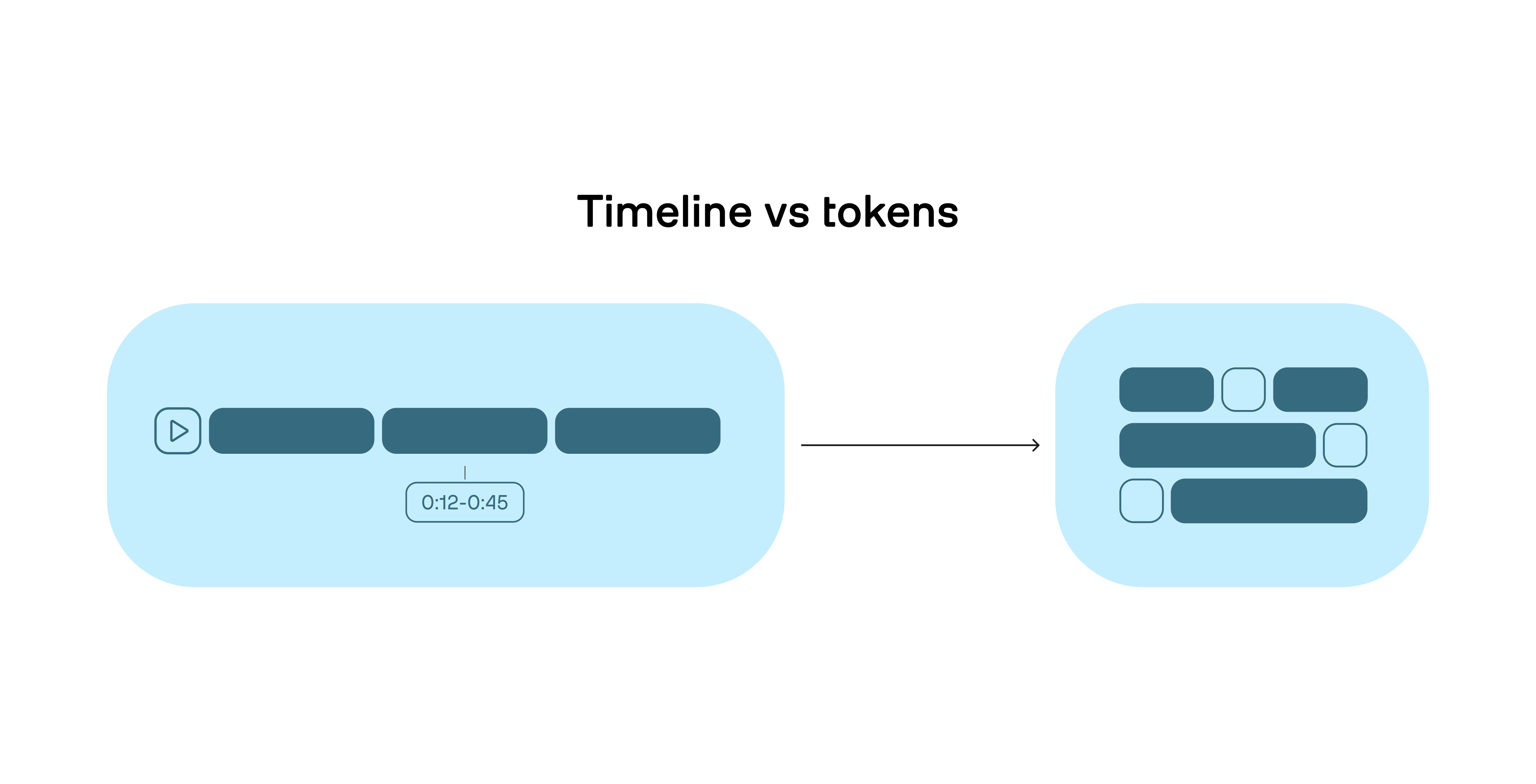

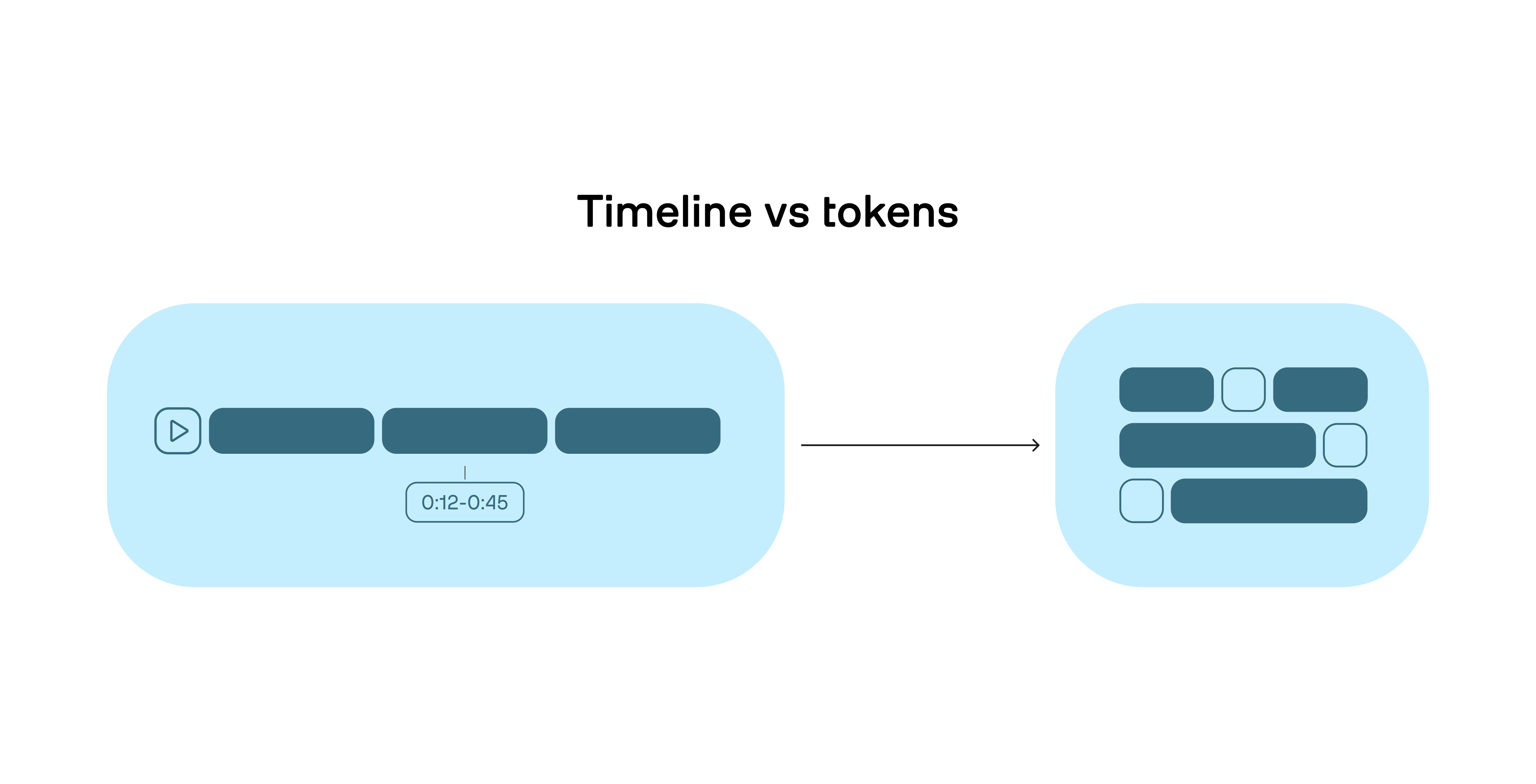

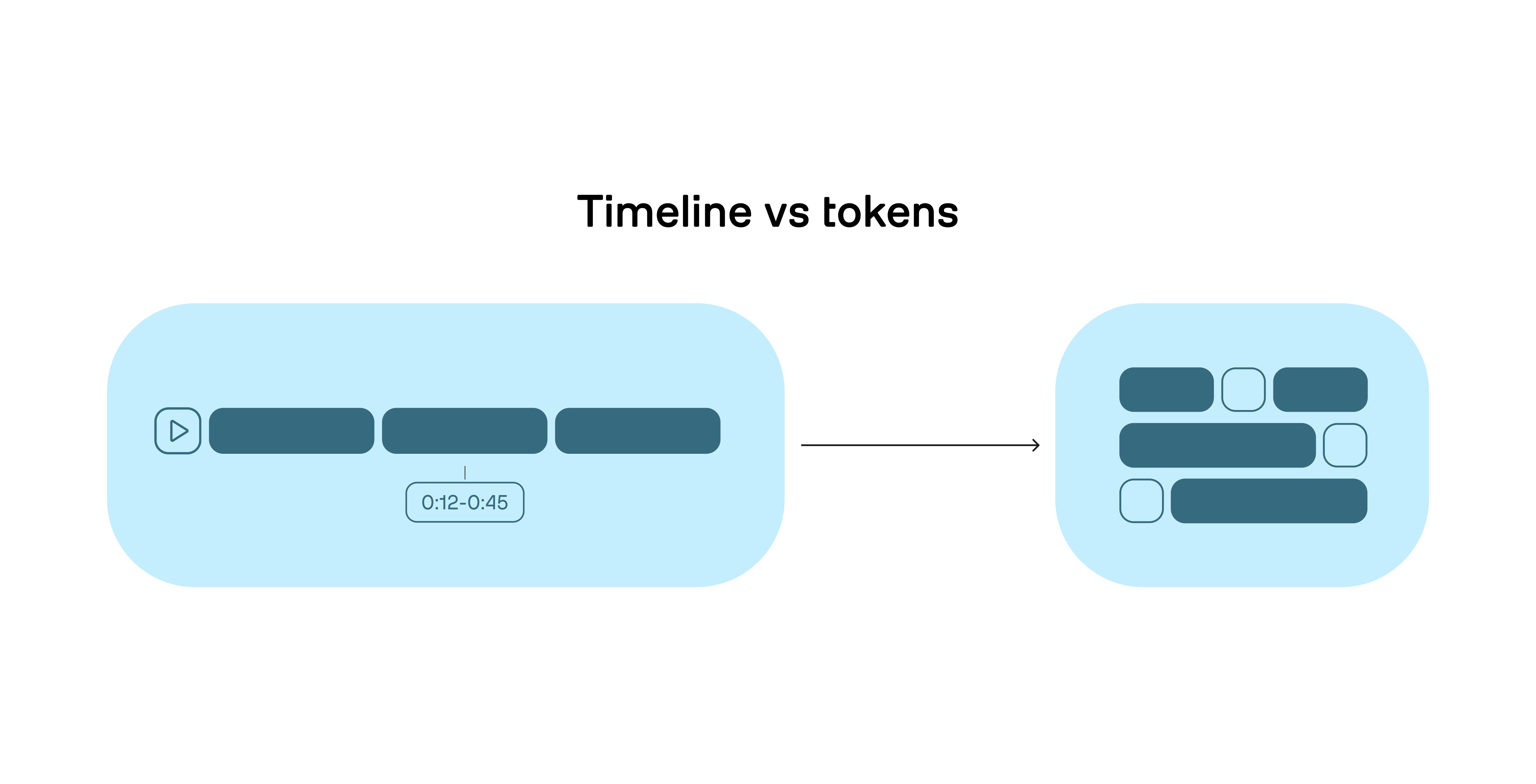

And the reason is simple: Text is a sequence of tokens. Video is a spatiotemporal signal.

Figure 1: Video is continuous time; LLMs operate on discrete tokens; forcing video into tokens causes information loss.

The hidden requirement in mission video: temporal reasoning

Most people hear “video understanding” and think “recognize objects.” Mission video rarely fails because you couldn’t detect a person or a vehicle. It fails because you couldn’t reason over time, such as:

What happened first, and what happened next?

Did the observed behavior escalate?

What is the causal chain?

Is this a normal pattern of life or an anomaly?

These are timeline questions. You can’t answer them reliably if the model’s internal representation collapses video into a handful of static snapshots or a loosely grounded caption.

Why general-purpose LLMs break on video

This is where it’s important to be precise: general-purpose LLMs are “bad at video” not because they’re unintelligent, but because the common way we feed video into them loses the very information missions require.

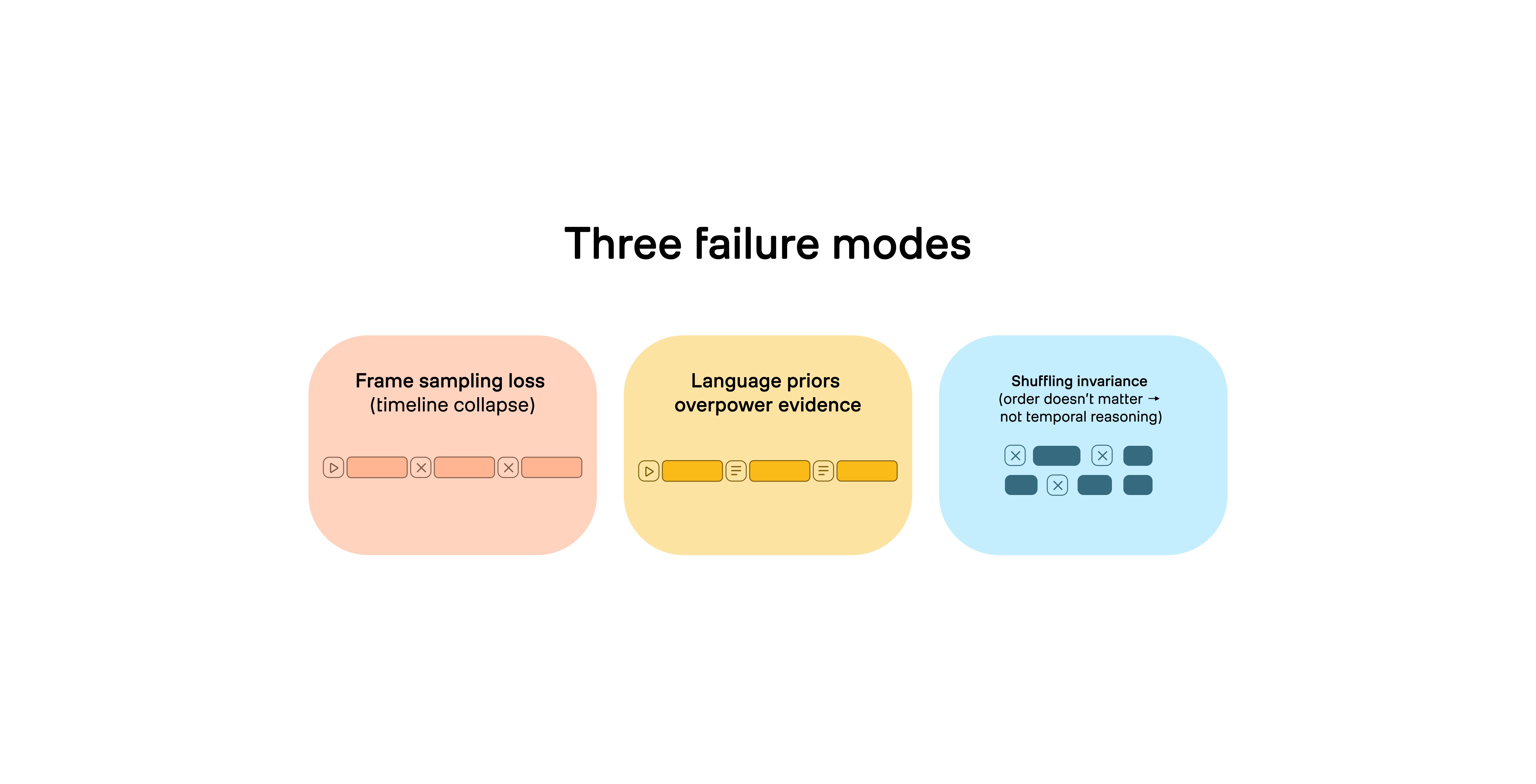

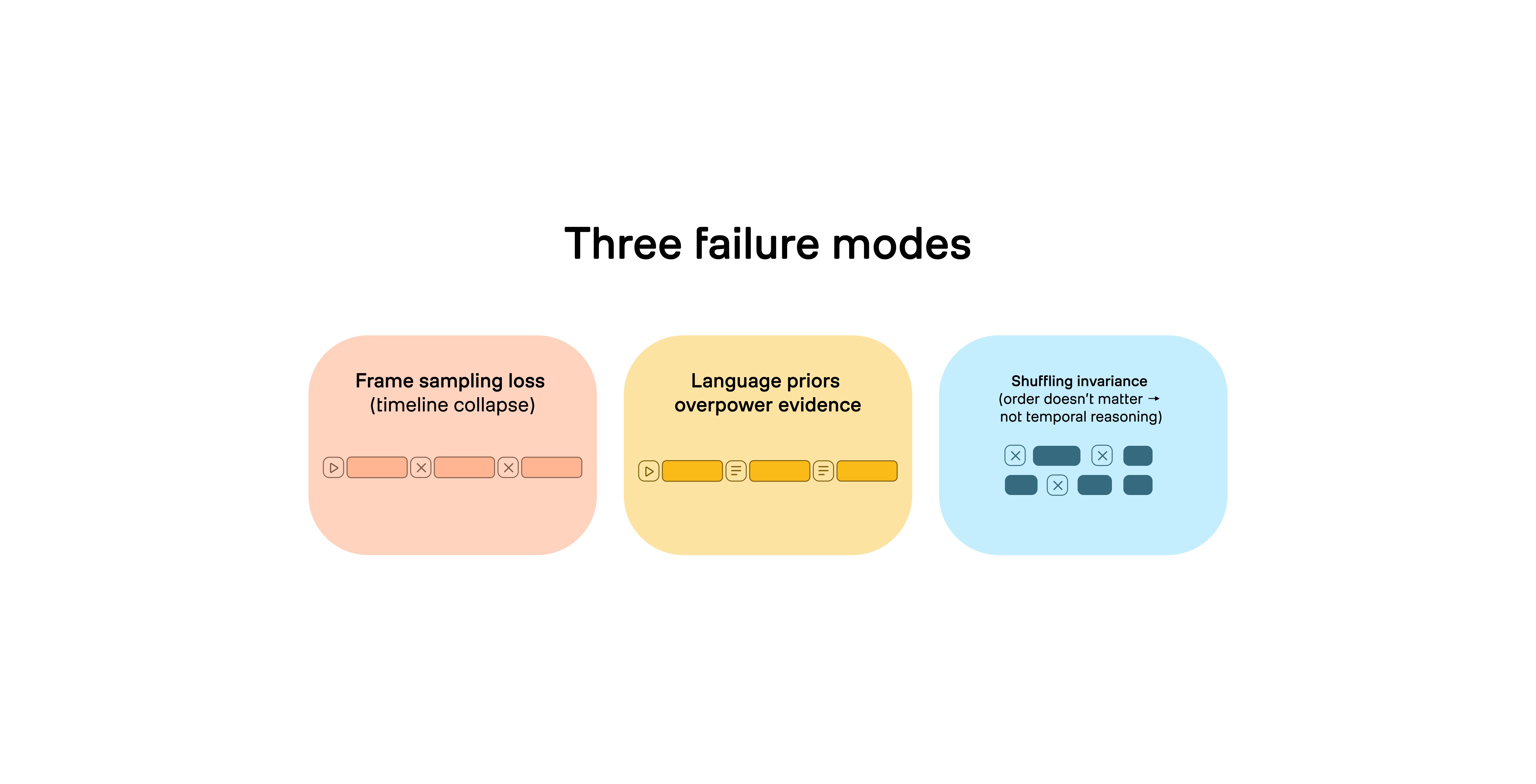

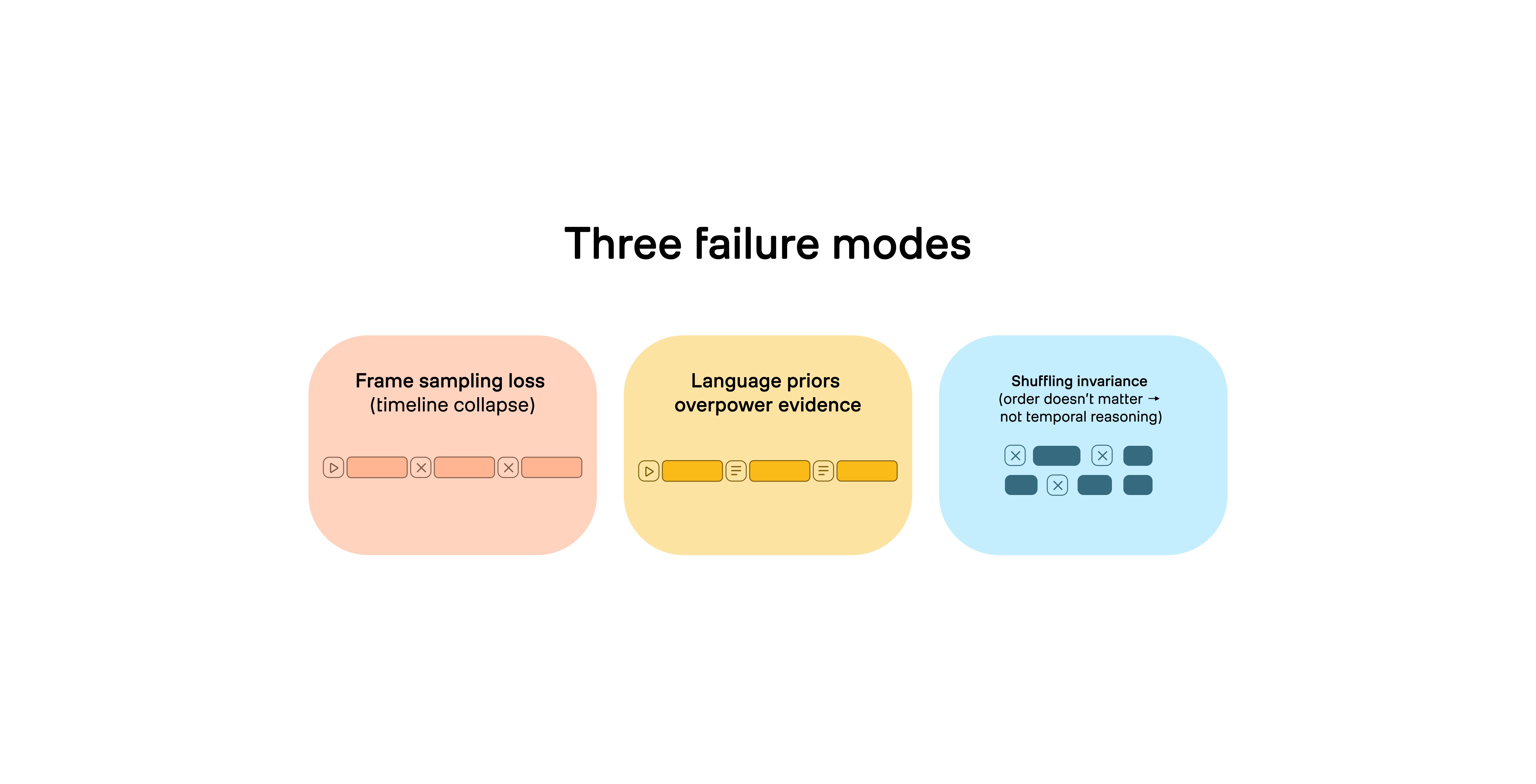

There are three core failure modes.

Figure 2: Why “general LLM + sampled frames” fails for video missions.

1 - Frame sampling destroys the timeline

Video is dense. A standard stream might capture ~30 frames per second. But many “video-to-LLM” systems only pass along a tiny fraction of those frames (sometimes as low as ~1 frame per second) because processing every frame is expensive.

The SlowFocus paper makes this explicit: under compute constraints, video LLMs typically need to sparsely sample the original video (e.g., “retaining one frame every second”) and also compress per-frame tokens through adapters, which forces a tradeoff between frame-level detail and video-level temporal coverage.
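To make the tradeoff concrete, here is a back-of-the-envelope sketch (our illustration, not code from the SlowFocus paper). It assumes plain uniform sampling starting at t = 0; real systems may use smarter strategies, but the arithmetic shows how little survives at 1 fps and how a short action can fall entirely between two samples.

```python
def sampled_timestamps(duration_s: float, sample_fps: float) -> list[float]:
    """Timestamps (in seconds) kept by uniform sampling starting at t = 0."""
    step = 1.0 / sample_fps
    count = int(duration_s * sample_fps)
    return [i * step for i in range(count)]


def retained_fraction(native_fps: float, sample_fps: float) -> float:
    """Share of the native frames that survive sampling."""
    return sample_fps / native_fps


def event_is_seen(start_s: float, end_s: float, timestamps: list[float]) -> bool:
    """An event is 'seen' only if at least one sampled frame falls inside it."""
    return any(start_s <= t < end_s for t in timestamps)


# One hour of 30 fps footage, sampled at 1 fps.
native_fps, sample_fps, duration_s = 30.0, 1.0, 3600.0
kept = sampled_timestamps(duration_s, sample_fps)
print(f"native frames: {int(native_fps * duration_s)}, kept: {len(kept)}")  # native frames: 108000, kept: 3600
print(f"retained: {retained_fraction(native_fps, sample_fps):.1%}")          # retained: 3.3%

# A 0.4 s action between two samples is invisible to the model;
# the same action shifted by half a second happens to be caught.
print(event_is_seen(12.3, 12.7, kept))  # False
print(event_is_seen(12.8, 13.2, kept))  # True
```

Whether an action is captured depends on where it happens to fall relative to the sampling grid, not on how important it is.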

That tradeoff is not benign. If you throw away most frames, you throw away:

Transitions (what changed)

Fine-grained actions (what someone did, not just what they were)

Causal cues (the “because” between events)

Synchronization (which sounds occurred at the same moment as an action)

For mission workloads, those are the exact cues you care about.

2 - Language priors overpower evidence

LLMs are trained to be fluent. That’s a strength until it becomes a liability.

Apple’s 2025 workshop paper on video LLM benchmarking calls out “strong language priors,” where models can answer questions correctly without watching the video at all.

In a mission setting, “plausible” is not good enough. You need the model to point to what happened in this footage, not what usually happens in the world. This is also why the risk profile feels different in the public sector: a confident guess can become an unintentional false positive, a missed event, or a narrative error that cascades into decisions.
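One way to check for this on your own data is a “blind” ablation: ask the same questions with and without the video and compare accuracy. The sketch below assumes a hypothetical `answer_fn(question, frames)` wrapper around whatever model you are evaluating and simple exact-match scoring; a gap near zero suggests the answers are coming from language priors rather than the footage.

```python
from typing import Callable, Sequence

# Hypothetical wrapper around the model under test: (question, frames) -> answer.
AnswerFn = Callable[[str, Sequence[str]], str]
# Each item: (question, frames, expected_answer). Frames are placeholders here.
Item = tuple[str, Sequence[str], str]


def accuracy(answer_fn: AnswerFn, items: list[Item], use_video: bool) -> float:
    """Exact-match accuracy, optionally withholding the video entirely."""
    correct = 0
    for question, frames, expected in items:
        answer = answer_fn(question, frames if use_video else [])
        correct += int(answer == expected)
    return correct / len(items)


def prior_gap(answer_fn: AnswerFn, items: list[Item]) -> dict[str, float]:
    """How much does actually seeing the video help?"""
    with_video = accuracy(answer_fn, items, use_video=True)
    blind = accuracy(answer_fn, items, use_video=False)
    return {"with_video": with_video, "blind": blind, "gap": with_video - blind}


def prior_only_model(question: str, frames: Sequence[str]) -> str:
    return "vehicle"  # ignores the footage and answers with the most common case


items: list[Item] = [
    ("What entered the lot?", ["f0", "f1"], "vehicle"),
    ("What entered the lot?", ["f2", "f3"], "vehicle"),
    ("What entered the lot?", ["f4", "f5"], "person"),
]
print(prior_gap(prior_only_model, items))  # gap is 0.0: the video added nothing
```

The prior-only model scores two out of three with or without the footage, which is exactly the failure mode an aggregate accuracy number hides.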

3 - Shuffling invariance proves the model isn’t reasoning over time

Here’s a simple test that matters more than many public benchmarks: If you shuffle the frames out of order… does the model still answer the same way?

Apple’s paper also highlights “shuffling invariance,” where some video LLMs maintain similar performance even when frames are temporally shuffled. If a system can’t tell the difference between correct order and shuffled order, it can’t reliably reason about event sequence, escalation, or causality, which are core elements of FMV (Full-Motion Video) exploitation and pattern-of-life analysis.
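A rough version of this test is easy to script. The sketch assumes a hypothetical `answer_fn(question, frames)` and compares answers by exact match; it is our illustration, not the protocol from Apple’s paper. Run it on questions whose answer genuinely depends on order (“what happened first?”): a score near 0.0 there means shuffling rarely changes the answer, which is the warning sign described above.

```python
import random
from typing import Callable, Sequence

AnswerFn = Callable[[str, Sequence[str]], str]  # hypothetical model wrapper


def shuffle_sensitivity(answer_fn: AnswerFn, items: list[tuple[str, list[str]]],
                        trials: int = 5, seed: int = 0) -> float:
    """Fraction of shuffled trials whose answer differs from the in-order answer.

    Near 0.0 on order-dependent questions means the model ignores frame order.
    """
    rng = random.Random(seed)
    changed = total = 0
    for question, frames in items:
        baseline = answer_fn(question, frames)
        for _ in range(trials):
            shuffled = frames[:]
            rng.shuffle(shuffled)
            if shuffled == frames:
                continue  # an unchanged order tells us nothing
            total += 1
            changed += int(answer_fn(question, shuffled) != baseline)
    return changed / total if total else 0.0


items = [("What happened first?", ["door_opens", "person_exits", "car_departs"])]


def order_aware(question, frames):
    return frames[0]  # reads the first event in the sequence


def order_blind(question, frames):
    return sorted(frames)[0]  # same answer whatever the order


print(shuffle_sensitivity(order_blind, items))  # 0.0
print(shuffle_sensitivity(order_aware, items))
```

A model can be legitimately order-invariant on questions that don’t depend on order, so the score is only meaningful on a question set built to require sequence.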

The production mismatch: wrong context, wrong memory, wrong reasoning

Even when a demo looks good, most general-purpose stacks run into “production gravity” on long-form, mission-scale video:

Wrong context: treating video like tokens tends to lose spatiotemporal continuity.

Wrong memory: “video memory” is not the same as text RAG; missions require retrieval over huge archives with time-aligned evidence.

Wrong reasoning: text-first reasoning struggles with motion and progression unless you supply scoped, time-aligned evidence.

This is why mission video systems don’t win by “prompting harder.” They win by engineering the context a reasoning system can trust.

What specialized video models do differently

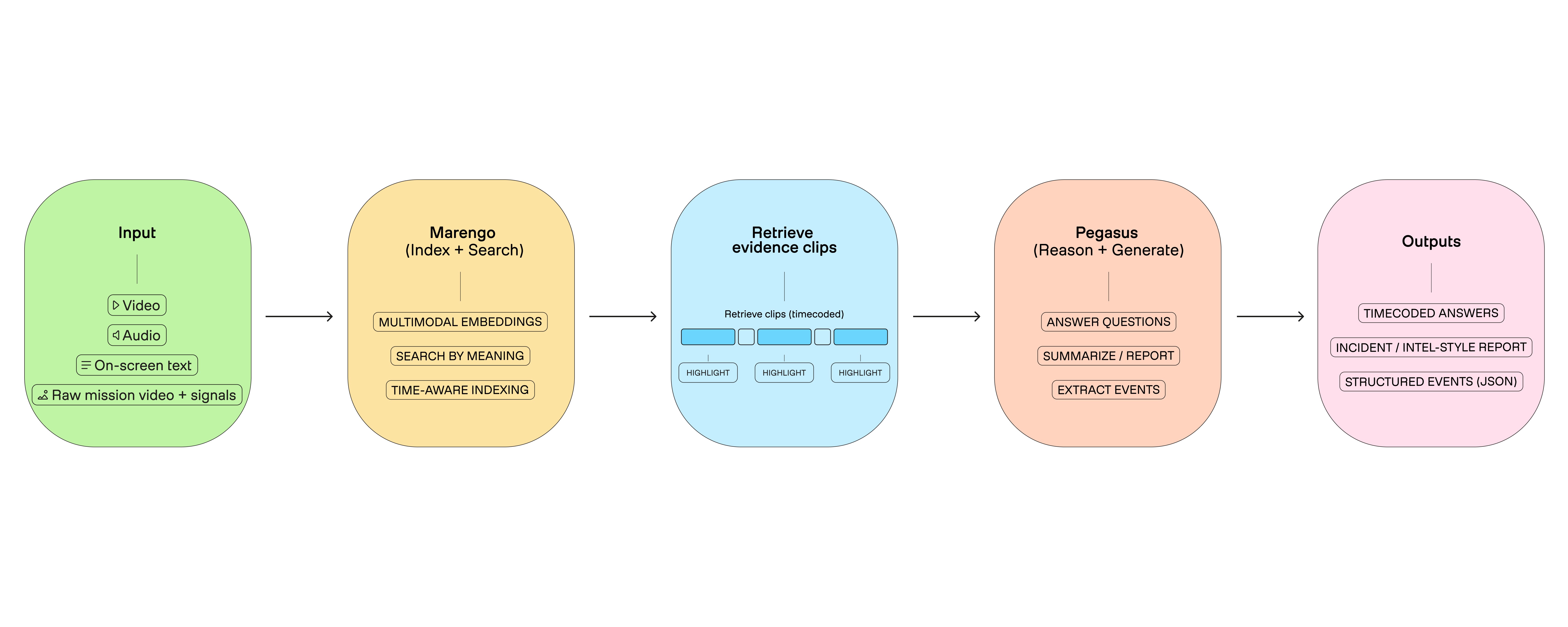

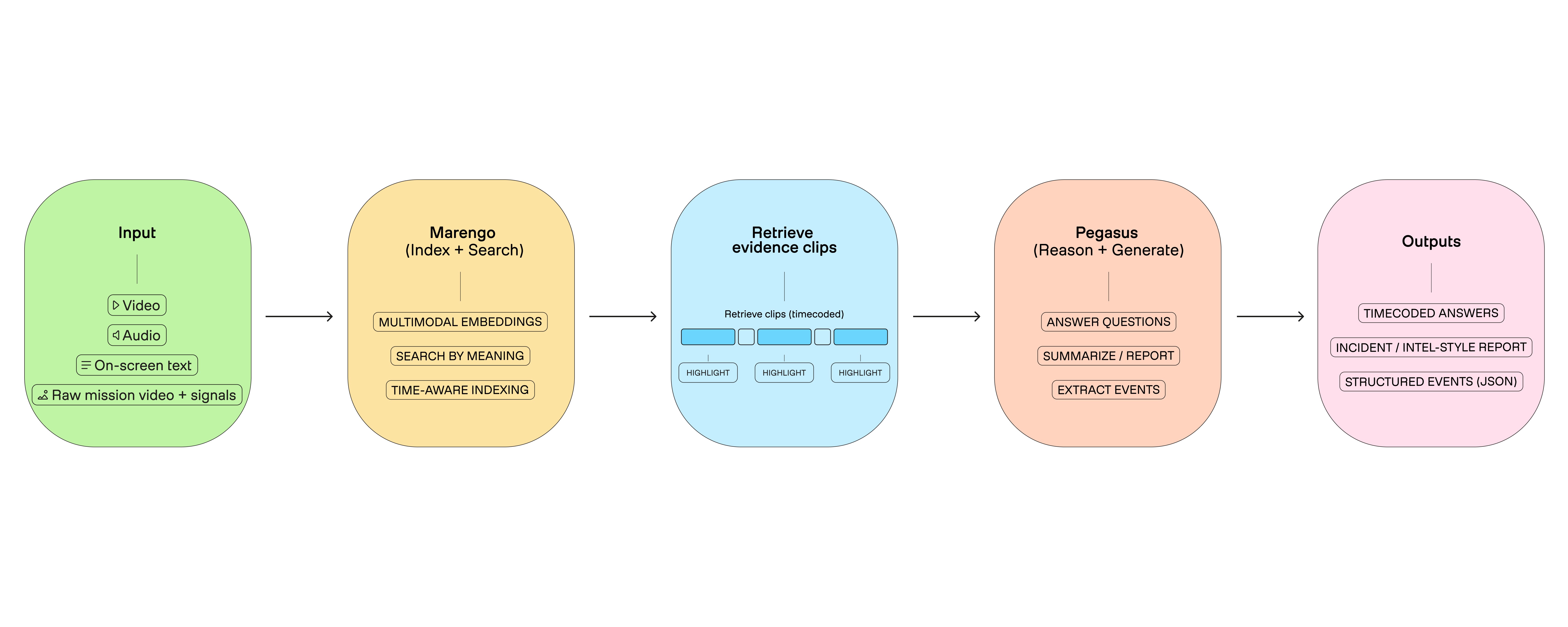

A mission-grade video system doesn’t try to force everything through one monolithic prompt. It splits the problem into two roles:

A perception layer that converts raw video into a searchable spatiotemporal representation.

A reasoning/reporting layer that converts selected evidence into grounded, structured outputs.

That division shows up clearly in TwelveLabs models:

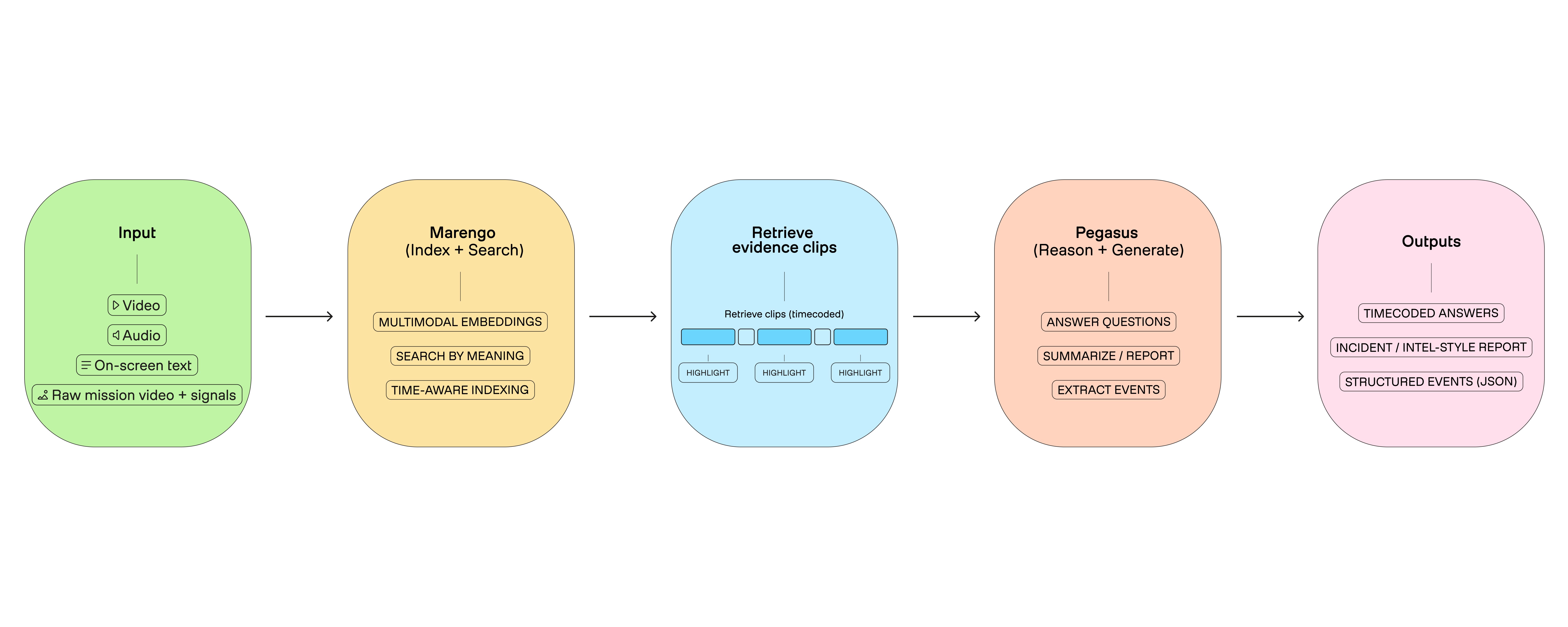

Marengo: unifies video, image, text, and audio into shared representations for “any-to-any” retrieval. This is the “perception engine”.

Pegasus: uses those representations to turn video into precise text (reports, chapters, summaries). This is the “reasoning and reporting engine”.

Figure 3: Marengo = video indexing + retrieval, Pegasus = reasoning + outputs.
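The two-role split can be expressed as two narrow interfaces. This is a hypothetical sketch of the architecture, not the TwelveLabs SDK: `PerceptionLayer`, `ReportingLayer`, and the toy stand-ins are names we made up. What matters is the contract: the reporting layer only ever sees time-bounded evidence returned by the perception layer, and the pipeline declines to answer when retrieval finds nothing.

```python
from typing import Protocol

# (video_id, start_s, end_s): a time-bounded piece of evidence.
Segment = tuple[str, float, float]


class PerceptionLayer(Protocol):
    def search(self, query: str, k: int) -> list[Segment]: ...


class ReportingLayer(Protocol):
    def report(self, question: str, evidence: list[Segment]) -> str: ...


def answer(question: str, perception: PerceptionLayer,
           reporting: ReportingLayer, k: int = 5) -> str:
    """Retrieve scoped evidence first; the reporting layer never sees raw archives."""
    evidence = perception.search(question, k)
    if not evidence:
        return "No supporting footage found."  # refuse rather than guess
    return reporting.report(question, evidence)


# Toy stand-ins so the sketch runs end to end (hypothetical, not real models).
class KeywordIndex:
    def __init__(self, segments_by_keyword: dict[str, list[Segment]]):
        self.segments_by_keyword = segments_by_keyword

    def search(self, query: str, k: int) -> list[Segment]:
        hits = [seg for word, segs in self.segments_by_keyword.items()
                if word in query.lower() for seg in segs]
        return hits[:k]


class TemplateReporter:
    def report(self, question: str, evidence: list[Segment]) -> str:
        cites = ", ".join(f"{vid} {start:.1f}-{end:.1f}s" for vid, start, end in evidence)
        return f"{question} -> evidence: {cites}"


index = KeywordIndex({"gate": [("cam2", 3720.0, 3731.0)]})
print(answer("Who approached the gate?", index, TemplateReporter()))
print(answer("Any boats?", index, TemplateReporter()))
```

In a real deployment the keyword index would be replaced by embedding search and the template by a video-language model, but the interface boundary stays the same.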

1 - Marengo: build long-term spatiotemporal memory over video

The mission requirement isn’t “understand this clip.” It’s “operate reliably over large video corpora.” That’s why retrieval matters. In the “context engineering” view, retrieval is the core hallucination-reduction lever: better selection → less guessing.

Marengo is built to be that retrieval substrate:

It’s a multimodal encoder that turns video, image, text, and audio into unified representations, enabling cross-modal search.

It supports mission-relevant query patterns, such as entity search and composed multimedia retrieval (image + text), and it addresses long-form processing requirements.

From an operational standpoint, this is your “long-term memory” layer: index once, then answer many mission questions quickly by retrieving the right time-bounded segments, rather than repeatedly pushing raw video through a general LLM.
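The “index once, query many” pattern can be illustrated with a toy nearest-neighbor search over time-bounded segments. This is not Marengo’s API: the three-dimensional vectors are stand-ins for embeddings a multimodal encoder would produce, the segment boundaries are made up, and a production system would use an approximate nearest-neighbor index rather than brute force. The point is that every hit comes back as (video, start, end), so downstream reasoning works on scoped evidence instead of raw footage.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedSegment:
    video_id: str
    start_s: float
    end_s: float
    embedding: tuple[float, ...]  # stand-in for a multimodal embedding


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(index: list[IndexedSegment], query: tuple[float, ...], k: int = 3):
    """Brute-force top-k; every hit is returned with its time bounds."""
    scored = sorted(((cosine(seg.embedding, query), seg) for seg in index),
                    key=lambda pair: pair[0], reverse=True)
    return [(seg.video_id, seg.start_s, seg.end_s, round(score, 3))
            for score, seg in scored[:k]]


# Index once (toy 3-d vectors; a real encoder produces much larger ones) ...
index = [
    IndexedSegment("cam1", 0.0, 6.0, (1.0, 0.0, 0.0)),
    IndexedSegment("cam1", 6.0, 12.0, (0.1, 0.9, 0.0)),
    IndexedSegment("cam2", 120.0, 126.0, (0.0, 1.0, 0.1)),
]
# ... then query many times with embedded questions.
print(search(index, (0.0, 1.0, 0.0), k=2))
# [('cam2', 120.0, 126.0, 0.995), ('cam1', 6.0, 12.0, 0.994)]
```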

2 - Pegasus: turn retrieved evidence into grounded intelligence products

Once you have evidence clips, the mission question becomes: can the system produce outputs that analysts can trust, review, and integrate?

Pegasus is the video-to-text component that produces time-aligned structured outputs (chapters, summaries, reports, metadata), with explanations grounded in multimodal evidence. This lets you extract standardized fields (start, end, label, evidence, confidence) from the evidence clips and export them in formats suitable for downstream tools.

In mission workflows, an answer without timecodes is a non-answer. This is also why the “output format” discussion is not a cosmetic detail. It determines whether a model can plug into real PED (processing, exploitation, dissemination) workflows.

This requirement maps to auditability. Many common public-sector workflows (investigations, incident reconstruction, intelligence reporting, compliance) require:

What happened

Where it happened in the footage

Why it was flagged

What evidence supports it

That’s how you turn AI into an analyst multiplier instead of an opaque narrator.
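To make “an answer without timecodes is a non-answer” enforceable, here is a minimal sketch of a finding record that refuses to export without a valid time range and cited evidence. The fields follow the ones named above (start, end, label, evidence, confidence), plus a `rationale` field for “why it was flagged”; the schema, the `HH:MM:SS.mmm` timecode format, and the validation rules are our assumptions, not Pegasus’s actual output format.

```python
import json
from dataclasses import dataclass, asdict


def to_timecode(seconds: float) -> str:
    """Format seconds as HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    h, rem = divmod(total_ms, 3_600_000)
    m, rem = divmod(rem, 60_000)
    s, ms = divmod(rem, 1000)
    return f"{h:02d}:{m:02d}:{s:02d}.{ms:03d}"


@dataclass
class Finding:
    video_id: str
    start_s: float        # where it happened
    end_s: float
    label: str            # what happened
    rationale: str        # why it was flagged
    evidence: list[str]   # what supports it (clip or frame references)
    confidence: float

    def validate(self) -> None:
        if not (0 <= self.start_s < self.end_s):
            raise ValueError("finding must have a valid time range")
        if not self.evidence:
            raise ValueError("finding must cite evidence")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")

    def to_json(self) -> str:
        """Validate, then export with human-readable timecodes alongside raw seconds."""
        self.validate()
        record = asdict(self)
        record["start"] = to_timecode(self.start_s)
        record["end"] = to_timecode(self.end_s)
        return json.dumps(record)


finding = Finding("cam2", 3725.5, 3731.0, "vehicle stops at gate",
                  "stop outside normal pattern of life", ["cam2@01:02:05.500"], 0.72)
print(finding.to_json())
```

Rejecting incomplete records at export time keeps unauditable output from ever reaching a downstream tool.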

What to demand in an evaluation

In practical public-sector terms, specialized video models support workloads like:

Persistent surveillance across multiple feeds, where systems surface activities of interest without requiring continuous human monitoring.

Pattern-of-life analysis: tracking entities across time, detecting anomalies, and constructing timelines.

Post-event forensics: searching archived video to reconstruct event sequences and establish timelines.
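For post-event forensics, retrieval hits still have to be stitched into a timeline before anyone can ask “what happened before/after?”. The sketch below is a deliberately simple stand-in: it assumes each hit already carries an entity label (for example, from entity search), merges hits for the same entity separated by at most a hypothetical `max_gap_s`, and orders the resulting events. Real entity tracking across scene transitions is much harder than matching labels.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    entity: str     # label from entity search, e.g. "white_truck" (hypothetical)
    start_s: float
    end_s: float


def build_timeline(hits: list[Hit], max_gap_s: float = 2.0) -> list[Hit]:
    """Merge same-entity hits separated by <= max_gap_s, then order events by start."""
    events: list[Hit] = []
    for hit in sorted(hits, key=lambda h: (h.entity, h.start_s)):
        last = events[-1] if events else None
        if last and last.entity == hit.entity and hit.start_s - last.end_s <= max_gap_s:
            last.end_s = max(last.end_s, hit.end_s)  # extend the ongoing event
        else:
            events.append(Hit(hit.entity, hit.start_s, hit.end_s))  # copy; inputs untouched
    return sorted(events, key=lambda e: e.start_s)


def happened_before(timeline: list[Hit], a: str, b: str) -> bool:
    """True if entity a first appears before entity b does."""
    first_seen: dict[str, float] = {}
    for event in timeline:
        first_seen.setdefault(event.entity, event.start_s)
    return first_seen[a] < first_seen[b]


hits = [
    Hit("white_truck", 100.0, 104.0),
    Hit("white_truck", 105.0, 110.0),
    Hit("person_A", 112.0, 118.0),
    Hit("white_truck", 300.0, 305.0),
]
timeline = build_timeline(hits)
for event in timeline:
    print(event.entity, event.start_s, event.end_s)
print(happened_before(timeline, "white_truck", "person_A"))  # True
```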

If you’re selecting video AI for DoD/IC missions, ask for evaluations that explicitly test temporal reasoning, not just “does it describe a scene?”

Test shuffling invariance and long-form performance, not only aggregate benchmark scores.

Validate representative content (extended FMV, degraded quality, multi-sensor feeds) and specify timeline-anchored output requirements.

Check candidates against these production constraints: long-form processing, entity tracking across scene transitions, temporal queries, near-real-time latency, edge deployment, and interoperability standards.
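Specifying timeline-anchored output requirements also means having a way to score them. A common choice (our suggestion, not something prescribed above) is temporal intersection-over-union between a predicted segment and an analyst-labeled reference, plus recall at an IoU threshold. The 0.5 threshold below is an arbitrary example; pick one that matches how precisely analysts need events localized.

```python
def temporal_iou(pred: tuple[float, float], ref: tuple[float, float]) -> float:
    """Intersection-over-union of two [start, end) segments, in seconds."""
    (p_start, p_end), (r_start, r_end) = pred, ref
    inter = max(0.0, min(p_end, r_end) - max(p_start, r_start))
    union = (p_end - p_start) + (r_end - r_start) - inter
    return inter / union if union > 0 else 0.0


def recall_at_iou(preds, refs, threshold: float = 0.5) -> float:
    """Share of reference events matched by at least one prediction at >= threshold IoU."""
    if not refs:
        return 1.0  # convention: nothing to find, nothing missed
    matched = sum(1 for ref in refs
                  if any(temporal_iou(pred, ref) >= threshold for pred in preds))
    return matched / len(refs)


refs = [(10.0, 20.0), (100.0, 110.0)]   # analyst-labeled events
preds = [(11.0, 21.0), (300.0, 305.0)]  # model output
print(round(temporal_iou(preds[0], refs[0]), 3))  # 0.818
print(recall_at_iou(preds, refs))                 # 0.5
```

Scoring localization separately from label accuracy makes it visible when a model names the right event but anchors it to the wrong part of the footage.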

Conclusion

General-purpose LLMs are transforming how we work with text. But mission video is not text.

Video is a timeline, and the highest-value mission questions depend on temporal reasoning: event order, causality, progression, and long-range dependencies.

When the mission requires evidence, timecodes, and auditability, you need models and infrastructure designed for video from day one: a retrieval substrate that preserves spatiotemporal context, plus a reasoning layer that generates grounded outputs aligned to that timeline.

That is why the choice isn’t “AI vs no AI.” It’s video AI that actually understands time vs systems that only process frames.


Platform

Enterprise

© 2021-2026 TwelveLabs, Inc. All Rights Reserved
