
Why Video Understanding Is the Most Important Infrastructure Decision in Media Right Now

Allie Pavan Bernacchi

A case for why the window on video intelligence in media and entertainment is closing faster than most realize, and why TwelveLabs is the right bet right now.



Apr 2, 2026

14-minute read


Media and entertainment companies are sitting on vast libraries of valuable content they cannot effectively find, use, or monetize because the intelligence layer that makes video truly searchable has never been built in.

This is not just an archive problem. From the moment new content is captured on set to the instant a sports highlight needs to hit a fan’s feed, the absence of video understanding creates friction at every stage of the content pipeline. It costs time, suppresses revenue, and leaves creative decisions under-informed.

The shift to cloud and the pressure to digitize legacy libraries are happening at the same time. The companies that treat video intelligence as a “phase three” initiative, instead of a foundational layer, will emerge from this transition years behind. The window to make that decision is open right now. The companies that move first will build a structural advantage in search, licensing, contextual advertising, and audience connection that late movers will struggle to close.

Content is everywhere, and that’s exactly the problem

There are now countless places where content lives: streaming platforms, social channels, OTT apps, VOD libraries, licensing portals, internal archives. The surface area of media distribution has never been larger. And yet, for most media and entertainment companies, the ability to connect audiences with the right piece of content at the right moment has not kept pace.

What we have today is mostly stale metadata, manual tagging, and broad genre categories. It works well enough, until it doesn’t. And increasingly, it doesn’t.

Audience expectations have shifted fundamentally. People do not just want to find a movie or a show. They want to find that scene, the one with a specific energy, a visual tone, an emotional beat that matches how they’re feeling right now. A search experience that cannot meet that expectation is not really search. It is a filter that loses people.

Content is scattered across more surfaces than ever, and our ability to intelligently surface it has not caught up. That gap has a cost, and it’s growing.

The dream scenario is closer than most realize

Here’s what fully realized video intelligence looks like for a media company.

Video understanding is the ability to automatically turn what’s inside video (visuals, speech, sounds, actions, and timing) into searchable, reusable information.

In the dream scenario, your entire content library (the archive, the back catalog, the unreleased, the licensed) becomes instantly searchable. Not by title or tag. By tone, vibe, mood, visual style, spoken word, and cultural moment. A clip of a city at dusk that feels like loneliness. A scene from a 1994 documentary with exactly the framing and pacing your editorial team is looking for. B-roll that perfectly matches the creative brief a brand partner just sent over.

When content is that discoverable, monetization opens up in every direction. Internal teams find and repurpose existing footage instead of commissioning new shoots. Licensing desks surface relevant clips for external buyers (other producers, brands, and partners) with a level of precision they have never had before. Editorial and marketing teams assemble themed collections and campaign assets at scale.

And consumers actually find what they are looking for. Not because an algorithm guessed right, but because what they searched for is what they got.

This is not a future scenario. The technology exists today. The bottleneck is adoption, and the companies moving now are the ones positioned to capitalize.

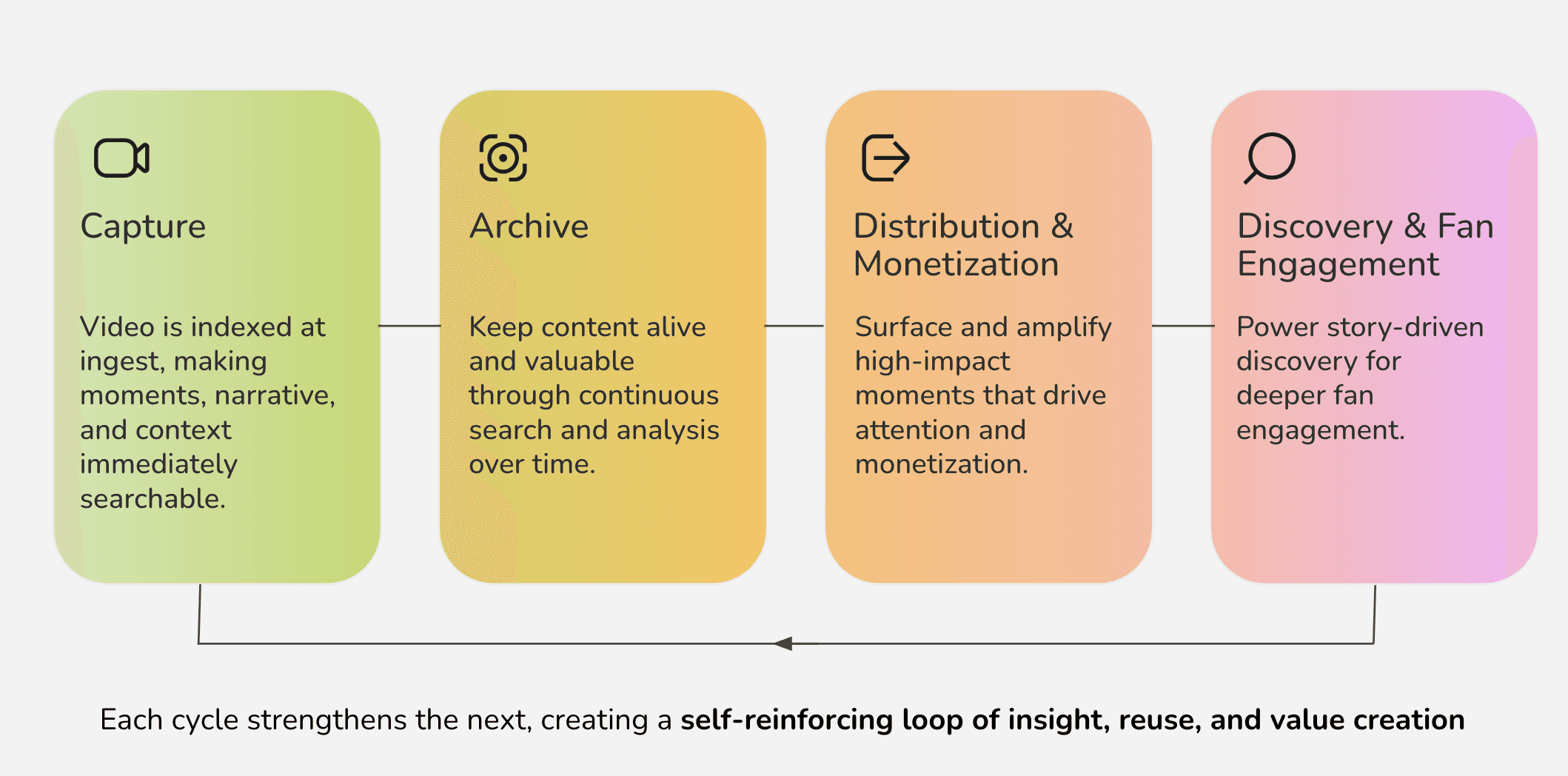

The flywheel starts at the very beginning of the content pipeline

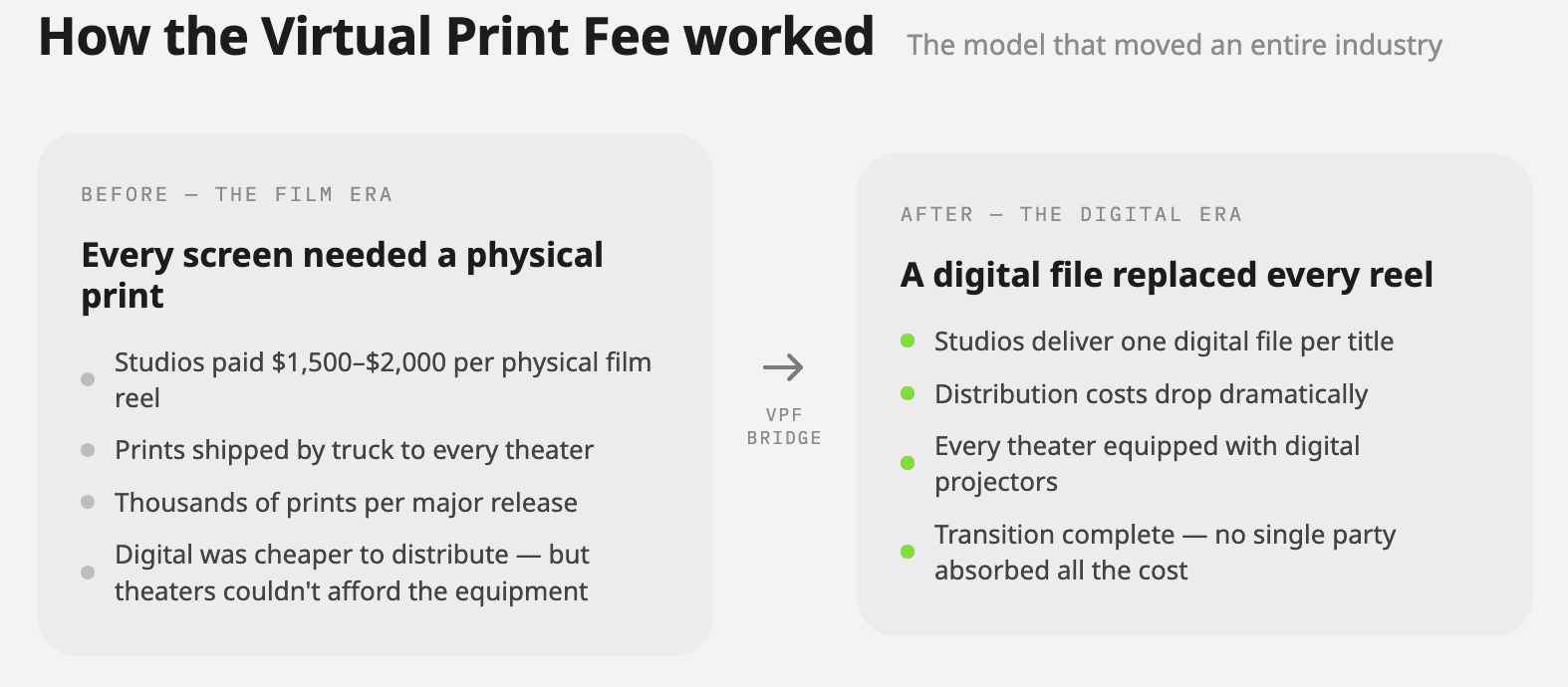

Figure 1: The Content Flywheel

The archive story is compelling, but it is only half the story.

The real competitive shift happens when video understanding is not something you apply retroactively. It happens when it is baked into the content pipeline from day one. Every piece of new content gets analyzed, indexed, and made searchable the moment it exists. That changes everything from production through distribution.

Think about what this looks like in practice.

On set, editors and directors can search through takes semantically: not just by clip number or timestamp, but by what is actually happening in the frame. They can find the version of a shot where the lighting hits a certain way, where the performance has a specific emotional register, or where the background action lines up. Judgment calls that currently take hours of scrubbing through footage start to compress into minutes.

For non-linear shoots, a system that can surface a rough cut from the strongest material, rather than requiring an editor to hold the entire shoot in their head, is a fundamental shift in how creative work gets done.

Quality control gets smarter too. Instead of manual review passes to catch continuity errors, inconsistent audio, or technical issues, models can flag anomalies across an entire body of footage before anything leaves the editing room.

Then look at what this unlocks on the distribution side.

In sports, the gap between something happening on the field and that moment appearing in a fan’s feed has always been about how fast a human can find the clip, clear it, and push it. Video understanding collapses that gap. A marketing team does not need to wait for someone to manually pull the highlight. They can query for it.

And not just the obvious “stats moment.” The celebration that echoes a rivalry. The emotional beat fans will actually share. The play that connects to five similar plays from the past decade and, together with them, becomes a package, a sponsorship placement, or a licensing opportunity.

New content makes old content more valuable. Old content gives new content context and meaning.

This is not speed for speed’s sake. It is speed that enables something harder to achieve: using the best content, at the right moment, in the right way, for the right audience. Giving editors tools to make better creative decisions faster. Giving storytellers access to the full depth of what they have built. Giving audiences content that actually connects, not content that happened to surface first.

The promise of AI in media has too often been framed as automation: fewer people, faster output. What video understanding enables is enhancement: sharper creative instincts, better storytelling, deeper audience connection. The human judgment stays. It just has a lot more to work with.

Media companies are about to face three crises at once

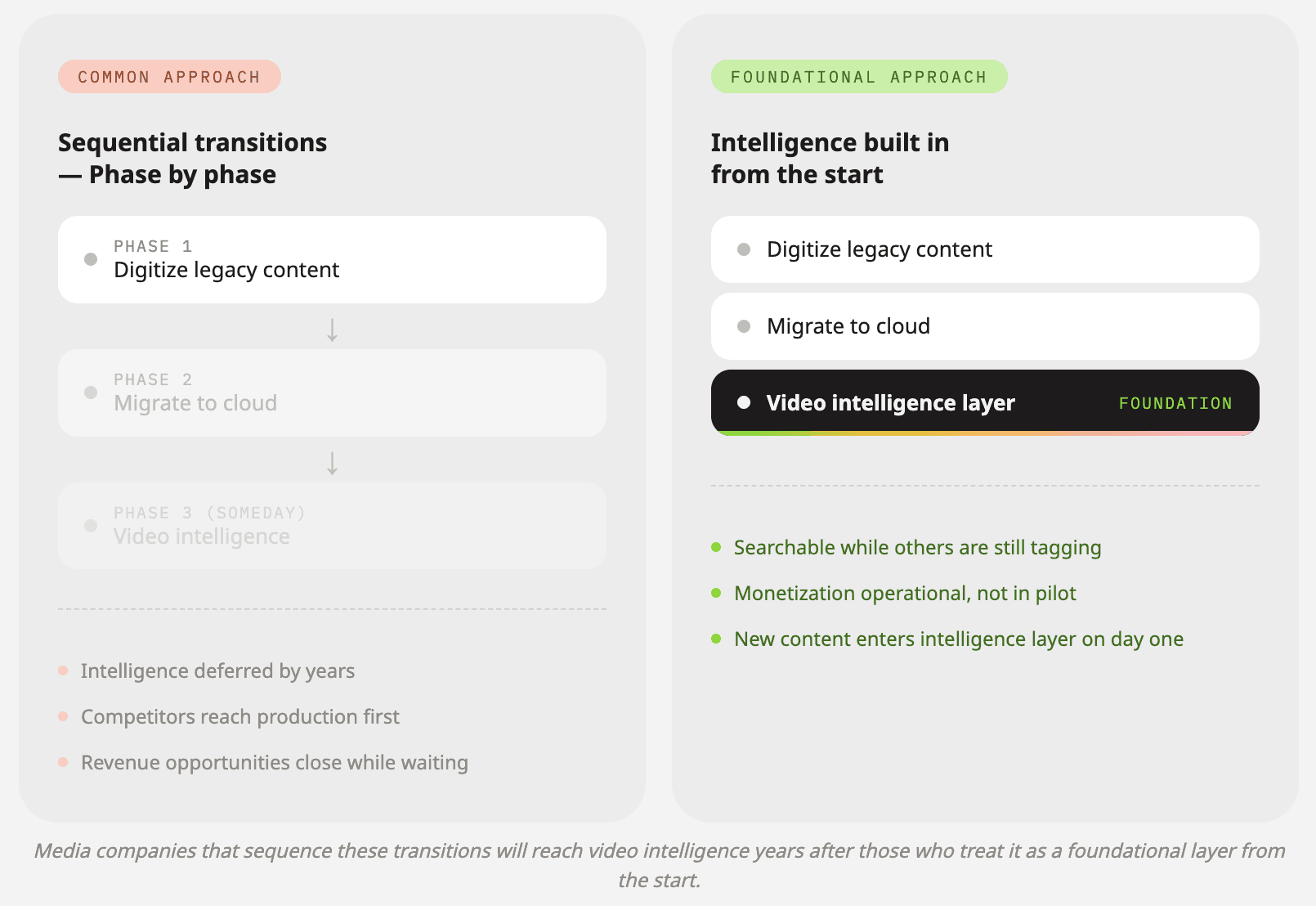

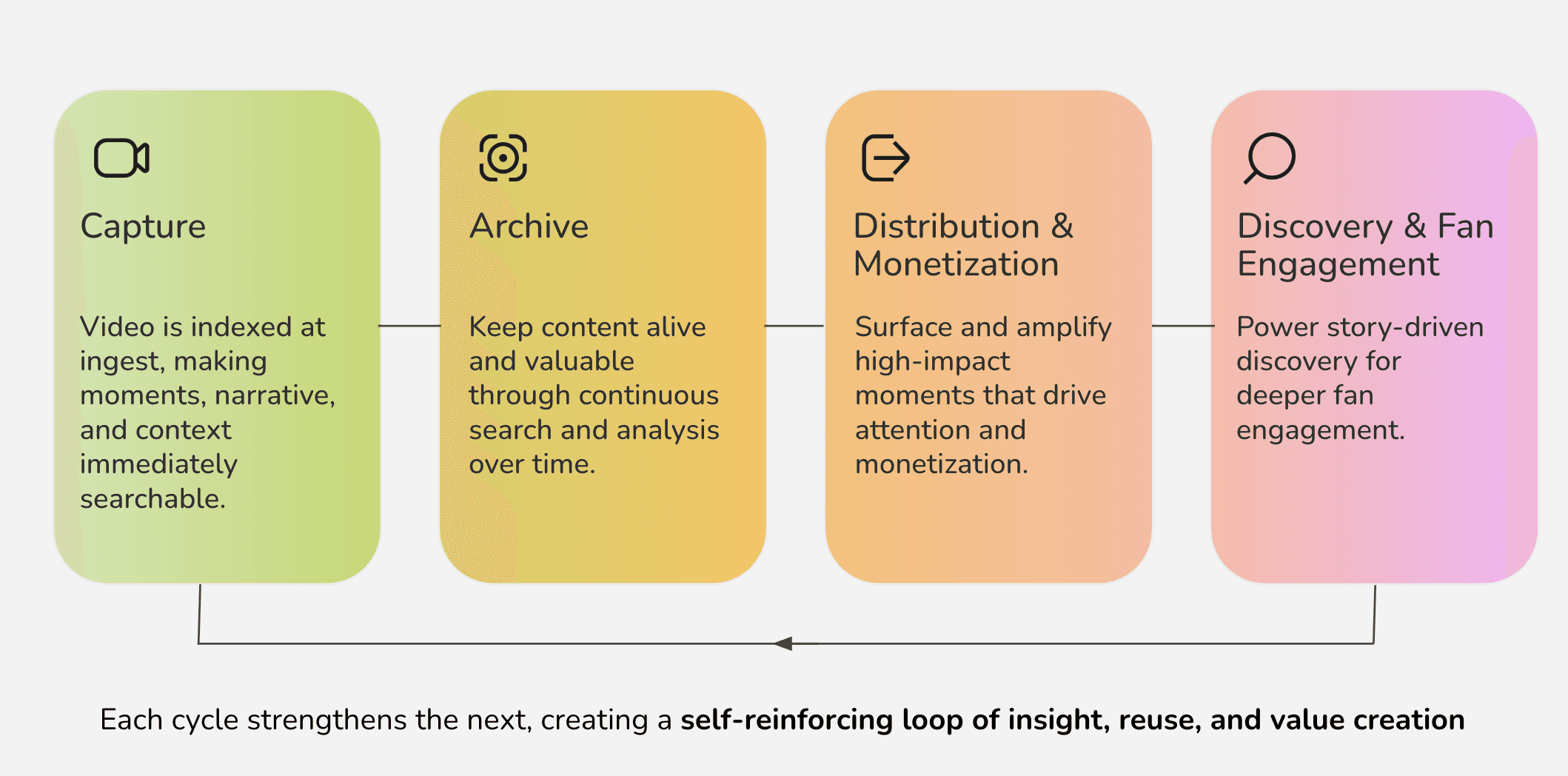

Figure 2: The 3 Crises for Media Companies

The industry is under pressure from three simultaneous transitions: digitizing legacy content, migrating to cloud infrastructure, and making libraries knowable and searchable. Each is a significant undertaking on its own. All three at once, with the same teams and the same budgets, is a recipe for paralysis or, worse, the wrong prioritization calls.

A lot of organizations try to sequence these efforts. Finish the digitization. Finish the cloud migration. Then deal with discoverability and intelligence.

That logic sounds reasonable. It is also how you fall irreparably behind.

The companies treating video intelligence as a foundational layer from the start will emerge from this transition with a structural advantage. Their content will be searchable while others are still tagging. Their monetization options will be operational while others are still in pilot. Their audience relationships will be deeper while others are still figuring out where their content even lives.

Sitting on millions of hours of valuable content, paying storage costs every month, while that content generates no revenue and reaches no one, is like hiding cash under your mattress. It’s yours, technically. But it’s not working for you.

And if you've already made the investment, this is even more urgent.

A growing number of media companies have done the hard work. They digitized. They migrated to the cloud. Their content is no longer locked in a vault or sitting on tape in a warehouse. It’s accessible. It’s backed up. Teams across the organization technically have access to it.

And yet, nobody can find anything. Nobody knows what most of it actually contains. Nobody can run a search that returns something useful. The archive is in the cloud, but it’s still dark inside.

That is not a solved problem. That is the original problem with a much more expensive monthly bill attached to it. You traded physical storage costs for cloud storage costs, added significant infrastructure overhead, and the content is still sitting there generating zero revenue and reaching zero people.

The only difference is that now it is faster to not find things.

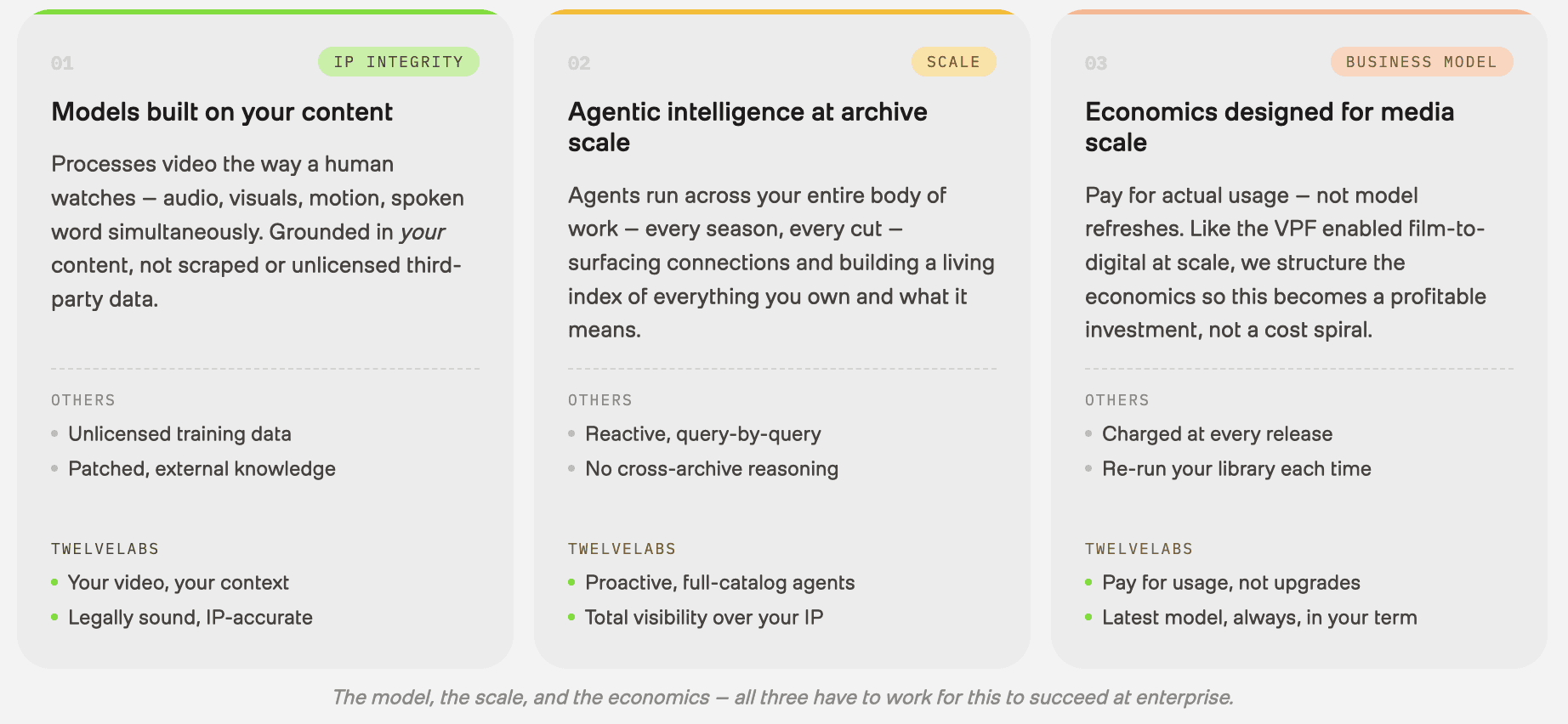

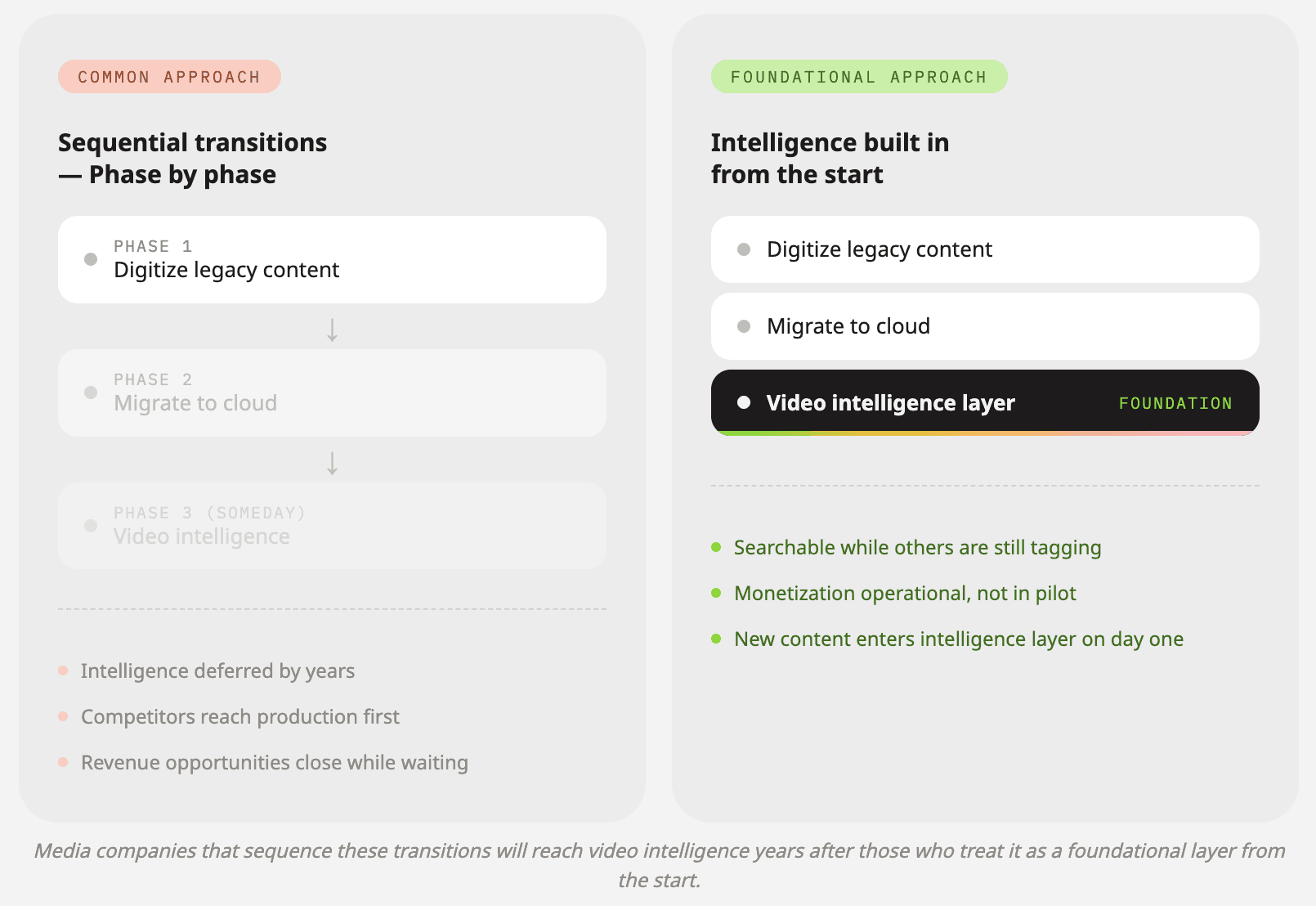

What makes TwelveLabs different isn't just the technology, but an entire novel approach

Figure 3: The TwelveLabs Differentiators

A lot of the AI video conversation right now is focused on benchmarks: who scores highest, whose embeddings are most accurate. That matters. But it is not what determines whether video intelligence actually works at enterprise scale in media and entertainment.

What determines that is simple:

Where does the model’s knowledge come from?

How does it run across large archives?

What do the economics look like when you scale it?

On all three of those questions, what we have built is different.

Built to ground intelligence in your content

Media companies care about IP integrity and factual accuracy. They want the intelligence layer grounded in their library, their rights, and their context. Our approach starts from the video itself, processing it the way a human would watch it, taking in audio, visuals, motion, and spoken word simultaneously, plus whatever additional context you want to add.

This is not a philosophical distinction. It directly affects adoption.

While the industry debates training data provenance and licensing, media leaders are asking not just “is it accurate?” but also “is it defensible?”

Built specifically for video, with an eye toward what comes next

Video is not just another modality. It is time. It is sequence. It is context that unfolds.

As the market moves from one-off queries to workflows that operate across entire libraries, the winners will be the companies that can reason over archives at scale, not just answer questions about a single clip.

That is where video intelligence is going, and it is what we are building toward.

A partnership model designed for scale

Most AI vendors charge you every time a new model is released, forcing you to rerun your entire library at your expense. That is not a partnership. That is a toll booth at every upgrade cycle.

We operate differently. The goal is to build value together over time, not extract it at every new version.

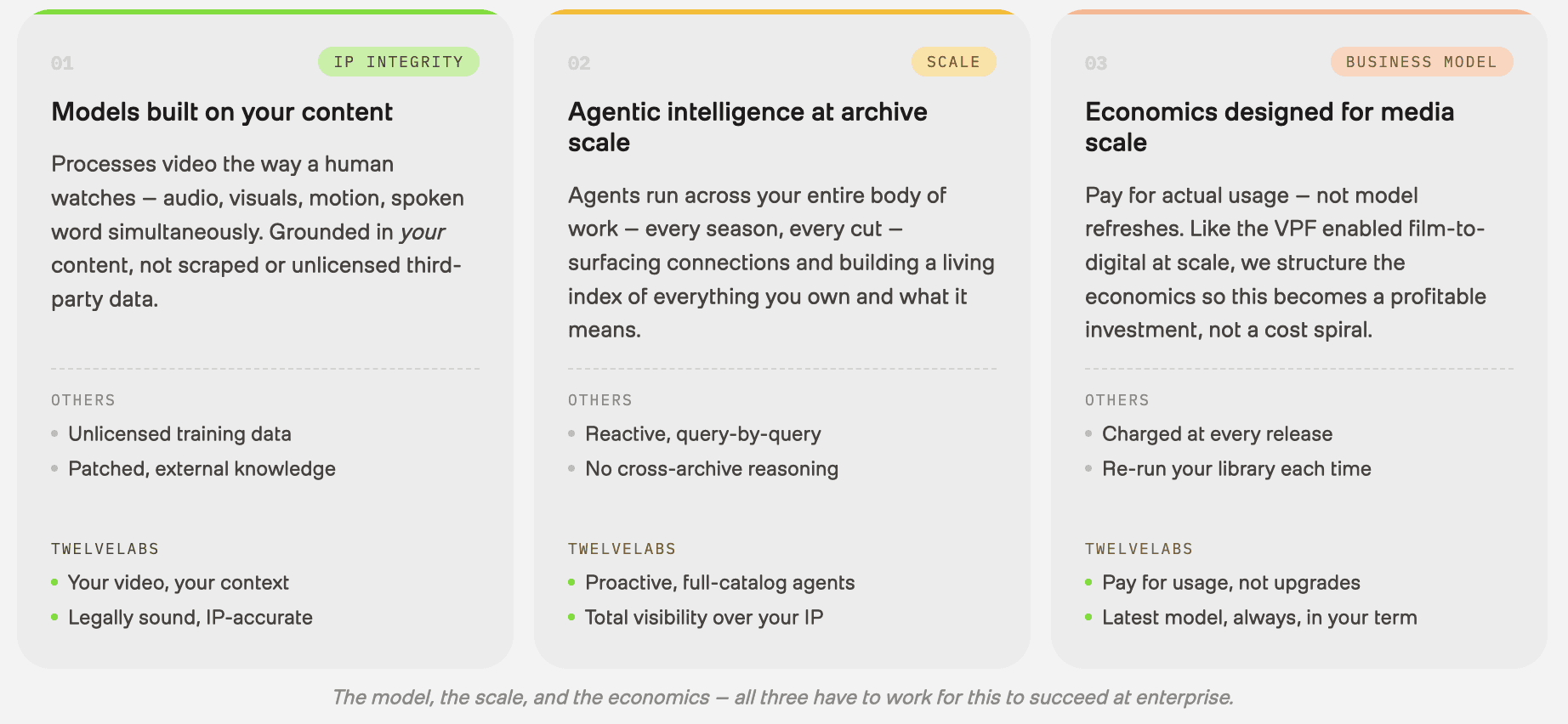

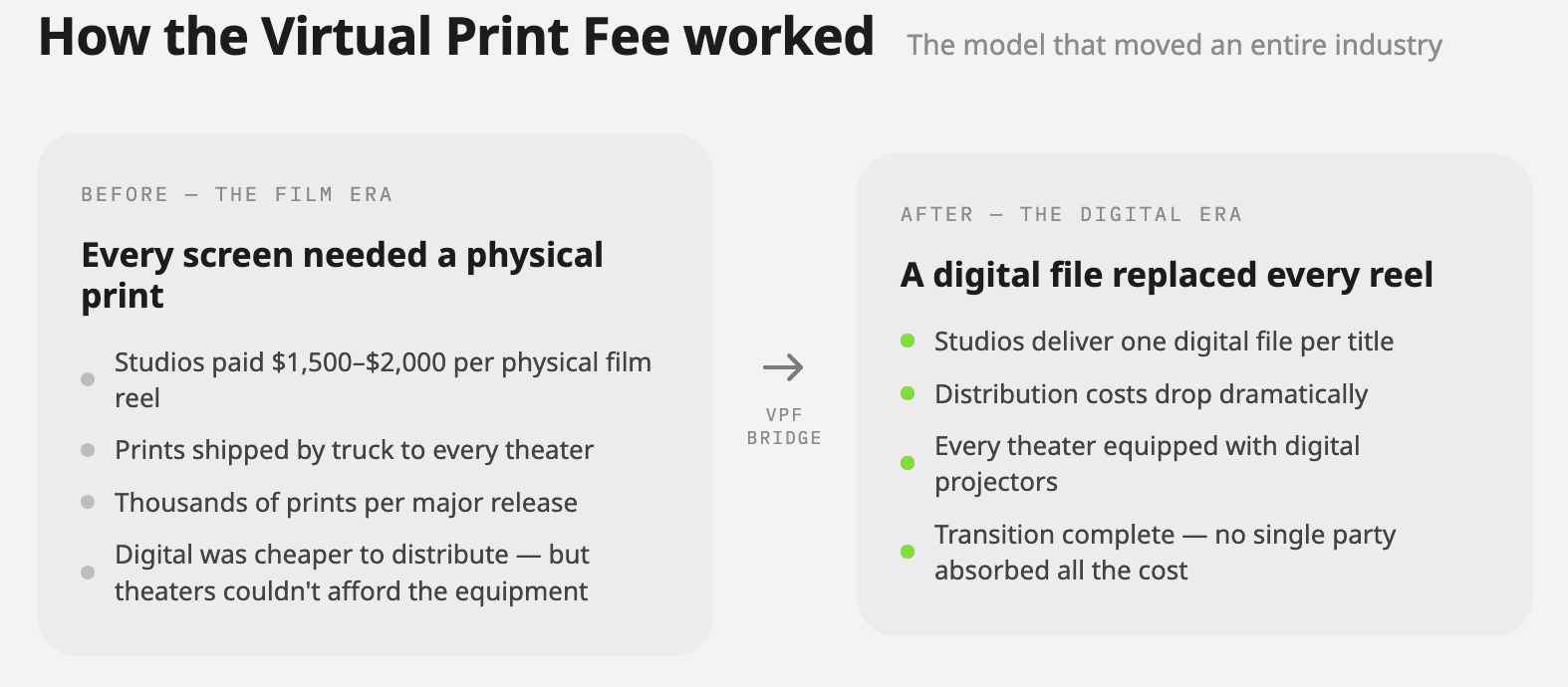

The Virtual Print Fee analogy

The analogy I keep coming back to is the Virtual Print Fee, a financial mechanism that helped the theatrical distribution industry move from film to digital without requiring theater owners to absorb impossible upfront costs.

The whole ecosystem recognized it needed to support the transition. It worked because the economics were structured to make adoption viable at scale.

That is the philosophy we apply to video intelligence. If the economics do not allow you to scale profitably, the best technology in the world does not matter.

Figure 4: The Virtual Print Fee Analogy

The cost of waiting isn’t just savings you’re not capturing; it’s revenue that doesn’t exist yet

There is a tendency to frame this urgency around cost reduction. Sure, video intelligence can eliminate manual tagging labor, reduce production costs, and compress timelines. That is real.

But that framing undersells what is actually at stake.

The more significant cost of waiting is that revenue models are forming now, and early movers will have time to build and optimize them long before late movers show up.

Contextual advertising is the clearest example. The shift from broad demographic targeting to scene-level, mood-level, moment-level ad placement is already happening. Placing a brand’s spot next to content that feels adventurous, sun-drenched, and high-energy (not just content categorized as “travel/lifestyle”) is a fundamentally more valuable advertising deal.

Publishers who can offer that specificity will command meaningfully higher CPMs, but only if their content is semantically understood at that depth.

The same logic applies to licensing and syndication. These are markets where the value accrues to whoever has the most accessible, contextually rich catalog.

Waiting is not neutral. It is conceding ground in markets that are forming right now.

This doesn't have to be a heavy lift

One assumption I want to push back on is that moving on video intelligence requires a massive infrastructure overhaul. It does not, or at least it does not have to.

TwelveLabs models are available in Amazon Bedrock, which means teams building on AWS can adopt video intelligence with enterprise-grade controls and without managing underlying infrastructure.

The infrastructure is already there. The models are already there. The path from “we want to explore this” to “this is running in production at scale” is shorter than the industry has historically assumed.

The window is open now

The foundational decisions that will determine who leads in video intelligence are being made right now. Not in two years. Now.

The companies moving in the next few months will look very different from the ones that wait until this becomes table stakes.

If you are thinking about what this could look like for your content pipeline and your workflows, let’s talk. We are building this with partners today, and we are building it to last.


© 2021-2026 TwelveLabs, Inc. All Rights Reserved
