
Not everything worth solving fits a benchmark

Dan Kim

Academic benchmarks test on edited footage. Production reality is hours of raw, uncut video that needs to be segmented, searched, and understood at scale. That gap is where TwelveLabs builds.



May 12, 2026

5 mins


I fairly often grab coffee with researchers nearing graduation, and the conversation tends to circle back to the same observation: "There's almost nothing public about what TwelveLabs actually does. No papers, no media coverage."

It's a fair point. Academic publishing and media exposure aren't where we put most of our energy. That tends to be true of B2B companies, and B2B is where our center of gravity sits today.

There's a structural reason behind it, too. A lot of the problems we actually work on don't map cleanly onto what academic benchmarks were built to measure.

The world beyond edited footage

When most people hear "video," they picture a YouTube clip: cleanly edited, every cut purposeful. Academic video benchmarks are built the same way, on movie clips, music videos, and news broadcasts, with already-edited content as the source data. From a 30-second Reel or Short up to a 2-hour feature film, every frame in the final cut carries intent. Understanding those scenes and answering questions about them is hard, but it's a well-defined problem.

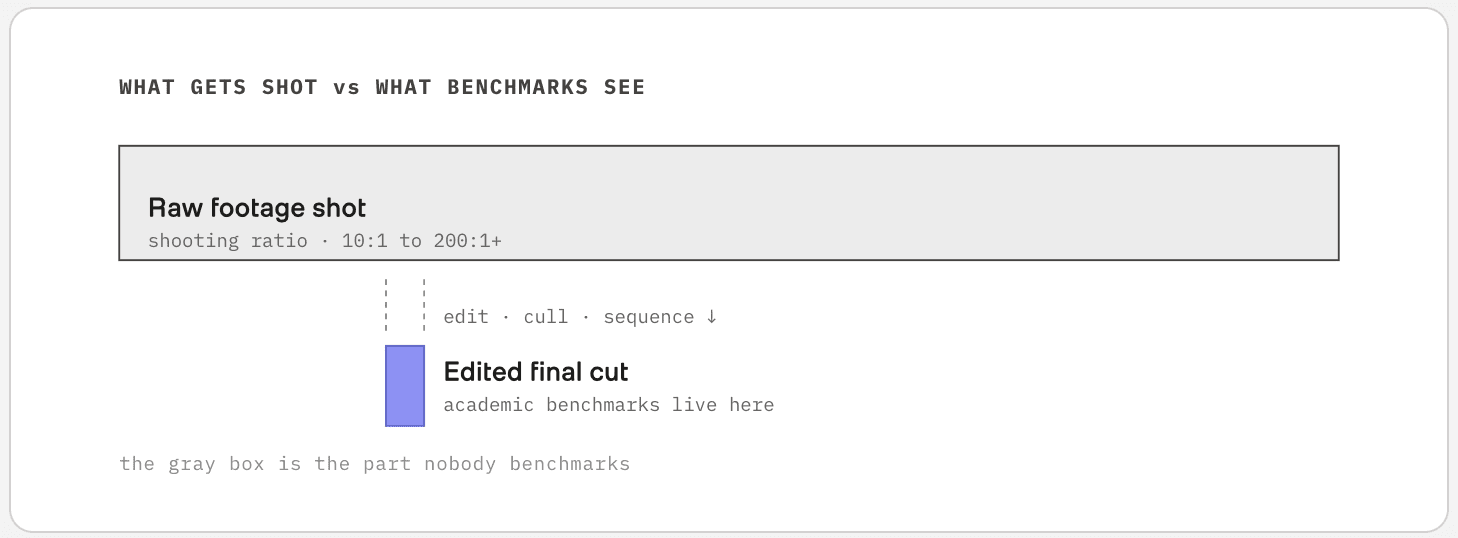

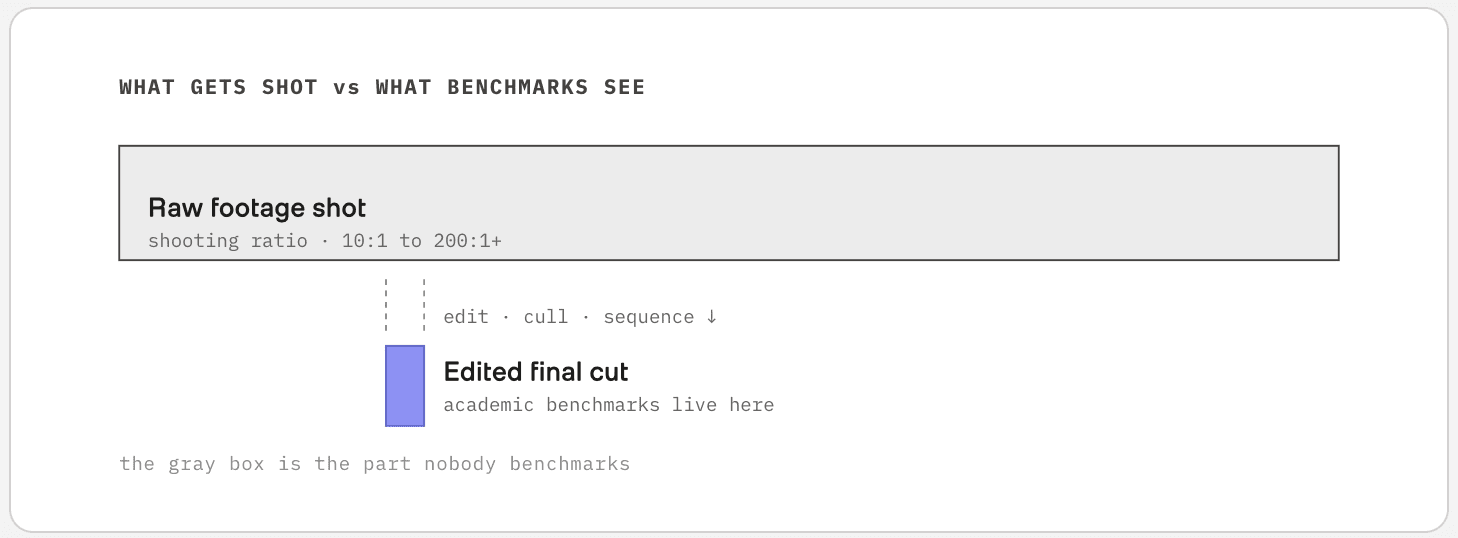

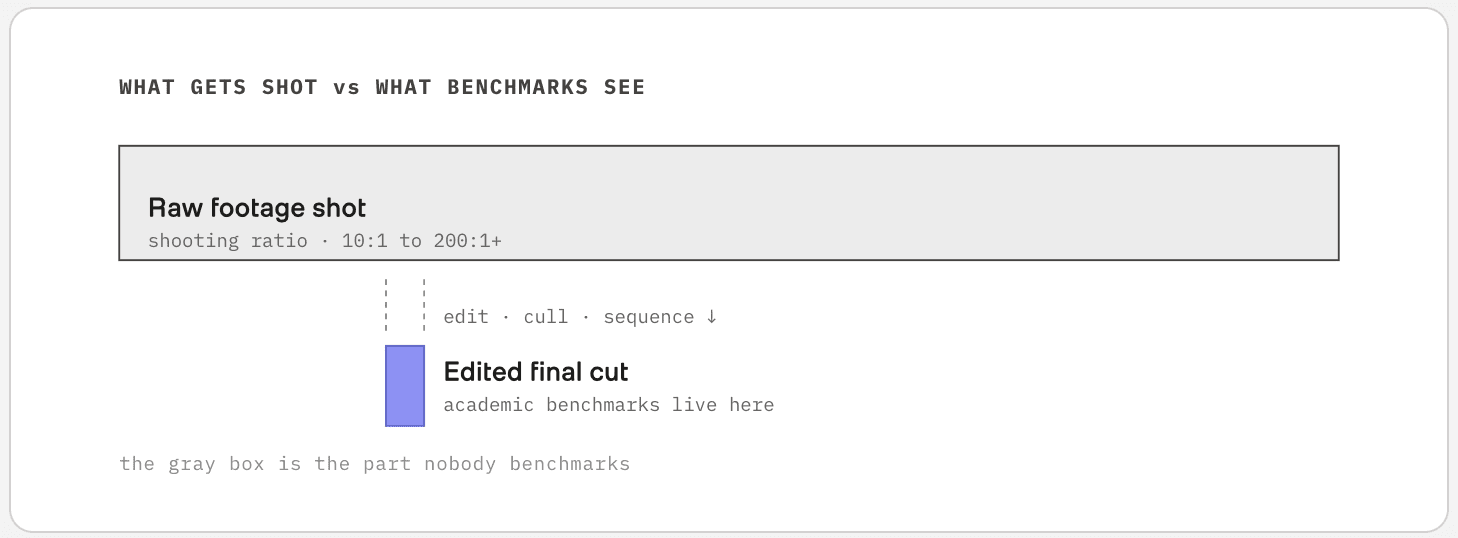

To make any of that, whether a 30-second clip or a 2-hour film, anywhere from ten to several hundred times more raw footage gets shot first. The industry calls this the shooting ratio, and it varies wildly: 10:1 to 30:1 for typical digital production, 20:1 to 80:1 for documentaries, and sometimes more than 200:1 for big action films. Editors comb through days of multi-camera footage, pull what's usable, then cut and sequence it; what remains is the final product.
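
Because the shooting ratio is a plain multiplier on final runtime, the ranges above translate directly into hours of raw footage. Here is a minimal Python sketch applying them to a 2-hour runtime for comparison; the function and dictionary names are illustrative only.

```python
# Shooting-ratio ranges quoted in the text (raw footage : final cut).
SHOOTING_RATIOS = {
    "typical digital production": (10, 30),
    "documentary": (20, 80),
    "big action film": (200, None),  # "more than 200:1", no upper bound given
}


def raw_footage_hours(final_runtime_hours: float, ratio: float) -> float:
    """Hours of raw footage shot to produce a final cut of the given length."""
    if final_runtime_hours < 0 or ratio < 1:
        raise ValueError("runtime must be non-negative and ratio at least 1")
    return final_runtime_hours * ratio


if __name__ == "__main__":
    runtime = 2.0  # hours, e.g. a feature-length cut
    for kind, (low, high) in SHOOTING_RATIOS.items():
        low_h = raw_footage_hours(runtime, low)
        if high is None:
            print(f"{kind}: more than {low_h:.0f} hours of raw footage")
        else:
            high_h = raw_footage_hours(runtime, high)
            print(f"{kind}: {low_h:.0f} to {high_h:.0f} hours of raw footage")
```

At 200:1, a 2-hour cut already implies 400 hours of source material that someone had to look through.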

The web's video distribution, and the academic benchmarks built on it, see only the small lavender box. Production reality lives in the gray box that comes before the edit.

That raw footage doesn't sit inside the distribution of video on the web. Academic benchmarks draw on already-edited output, so the problem of processing the source material itself simply isn't in any benchmark.

What reality looks like

People who work with video for a living, like broadcasters, sports leagues, and security companies, generate thousands to tens of thousands of hours of footage every day across many cameras. What they need most is to know where in all those hours they should actually look.

"Why not use a large foundation model?" Realistically, you can't. Cost is part of it, but the deeper problem is structural. Searching video requires embeddings, and current general-purpose multimodal embedding models can only handle very short clips. To embed tens of thousands of hours, you'd have to chop everything into short pieces and call the API on each chunk, and deciding how to chop them is itself a research problem. Even setting that aside, no media company can absorb that cost every day.
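
To make the chunking step concrete, here is a minimal Python sketch of the naive approach: fixed-length windows, one embedding call per window. The 10-second window and the 10,000 hours/day volume are hypothetical figures for illustration, not the limit of any particular API, and treating a day's footage as one continuous stream slightly undercounts (each separate video's last partial window would be its own call).

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Chunk:
    start_s: float
    end_s: float


def fixed_window_chunks(duration_s: float, window_s: float,
                        stride_s: Optional[float] = None) -> List[Chunk]:
    """Split [0, duration_s) into windows of window_s seconds.

    A stride smaller than the window gives overlapping chunks; the last
    chunk is clipped to the end of the video.
    """
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    stride = window_s if stride_s is None else stride_s
    if stride <= 0:
        raise ValueError("stride_s must be positive")
    chunks = []
    i = 0
    while i * stride < duration_s:  # index-based to avoid float drift
        start = i * stride
        chunks.append(Chunk(start, min(start + window_s, duration_s)))
        i += 1
    return chunks


def embedding_calls_per_day(hours_per_day: float, window_s: float,
                            stride_s: Optional[float] = None) -> int:
    """One API call per chunk, treating a day's footage as one stream."""
    stride = window_s if stride_s is None else stride_s
    return math.ceil(hours_per_day * 3600 / stride)


if __name__ == "__main__":
    print(fixed_window_chunks(25, 10))
    print(f"{embedding_calls_per_day(10_000, 10):,} calls/day")
```

Fixed windows are the simplest answer to the "how to chop" question, and they show why it's hard: a 10-second grid has no idea where a play starts or ends, so a single event can be split across two chunks.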

So what actually runs in production today is the previous generation of expert models: cheap, primitive tagging like "this clip has a person" or "this person is walking," stored at scale. It isn't precise, but it's the only economically viable choice.
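
For contrast, tag-based search of this kind boils down to an inverted index from a small, fixed vocabulary to clip IDs. The sketch below is a hypothetical minimal version (the class name, tags, and clip IDs are made up), not any vendor's system.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable


class TagIndex:
    """Inverted index from a coarse tag to the clips it was detected in."""

    def __init__(self) -> None:
        self._postings: dict[str, set[str]] = defaultdict(set)

    def add(self, clip_id: str, tags: Iterable[str]) -> None:
        # Store each tag emitted by an expert model for this clip.
        for tag in tags:
            self._postings[tag.lower()].add(clip_id)

    def search(self, *tags: str) -> set[str]:
        """Clips carrying every requested tag (boolean AND)."""
        if not tags:
            return set()
        postings = [self._postings.get(t.lower(), set()) for t in tags]
        return set.intersection(*postings)


if __name__ == "__main__":
    index = TagIndex()
    index.add("cam1_0001", ["person", "walking"])
    index.add("cam2_0042", ["person", "car"])
    print(index.search("person", "walking"))
```

Building and querying this stays cheap even at huge volumes, but it can only answer questions phrased in the tag vocabulary; anything more specific than "person" or "walking" has nowhere to land.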

Segmentation as the core problem

We focus on the gap between those two worlds: models far more capable than expert models, without the cost explosion of general-purpose ones. At the heart of that sits segmentation.

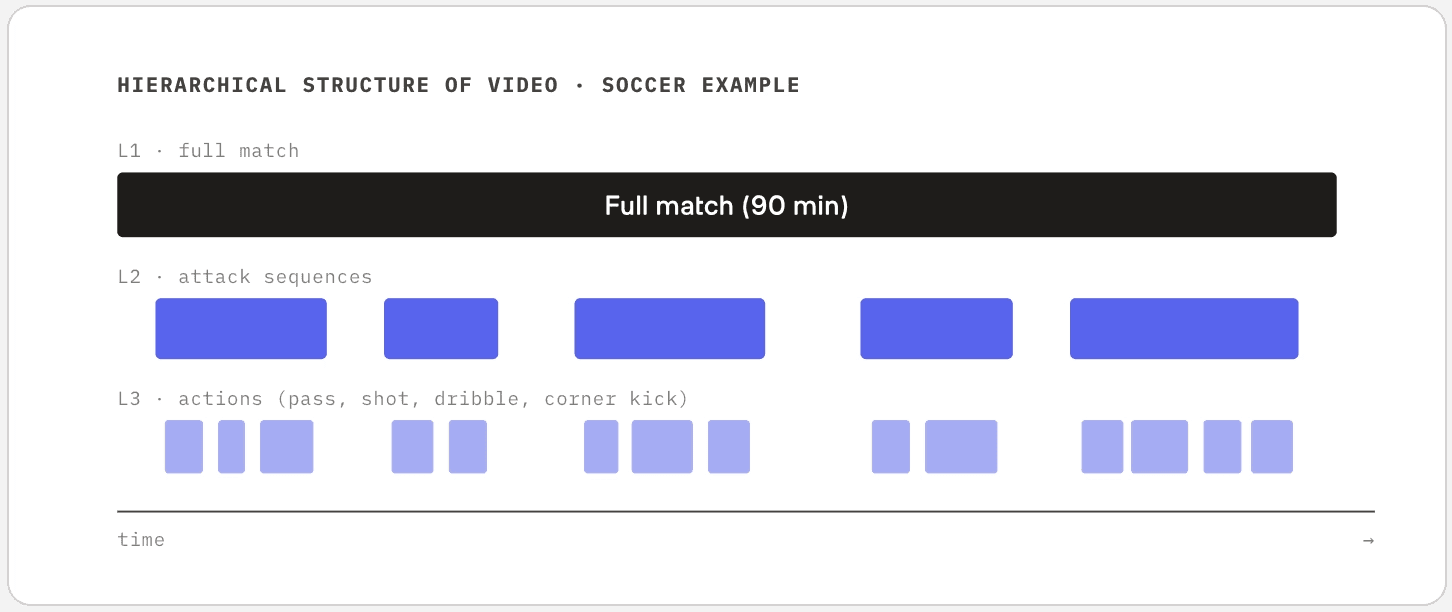

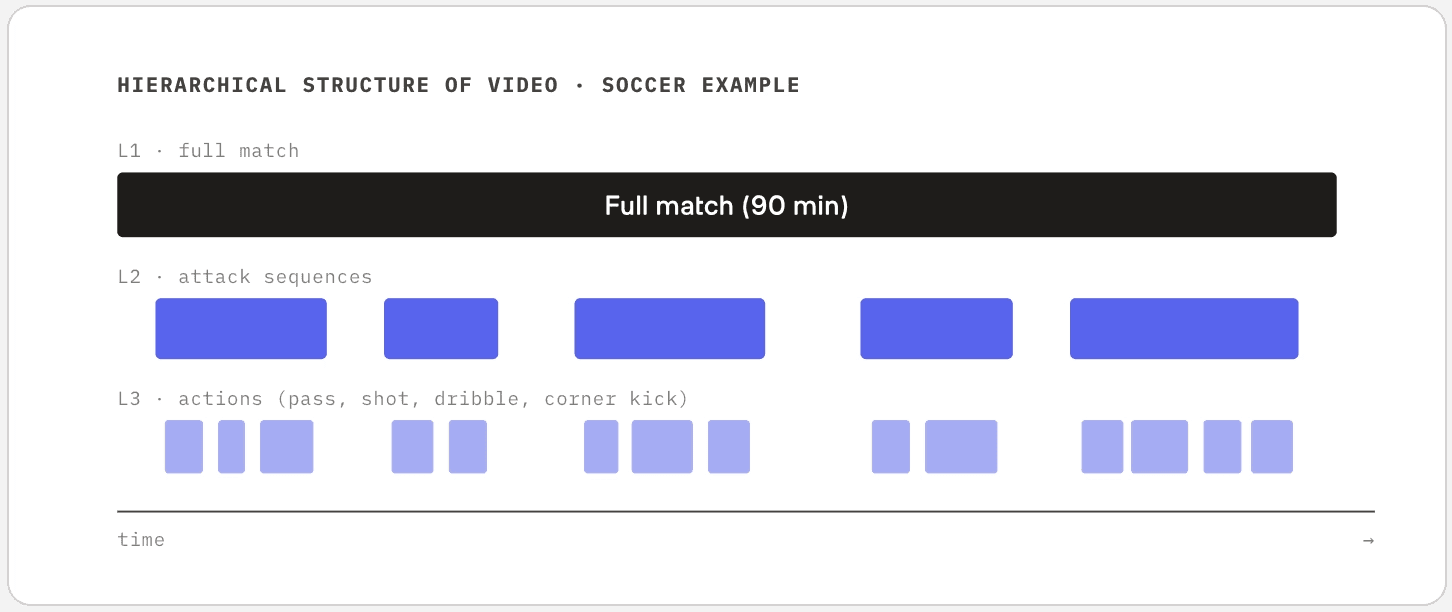

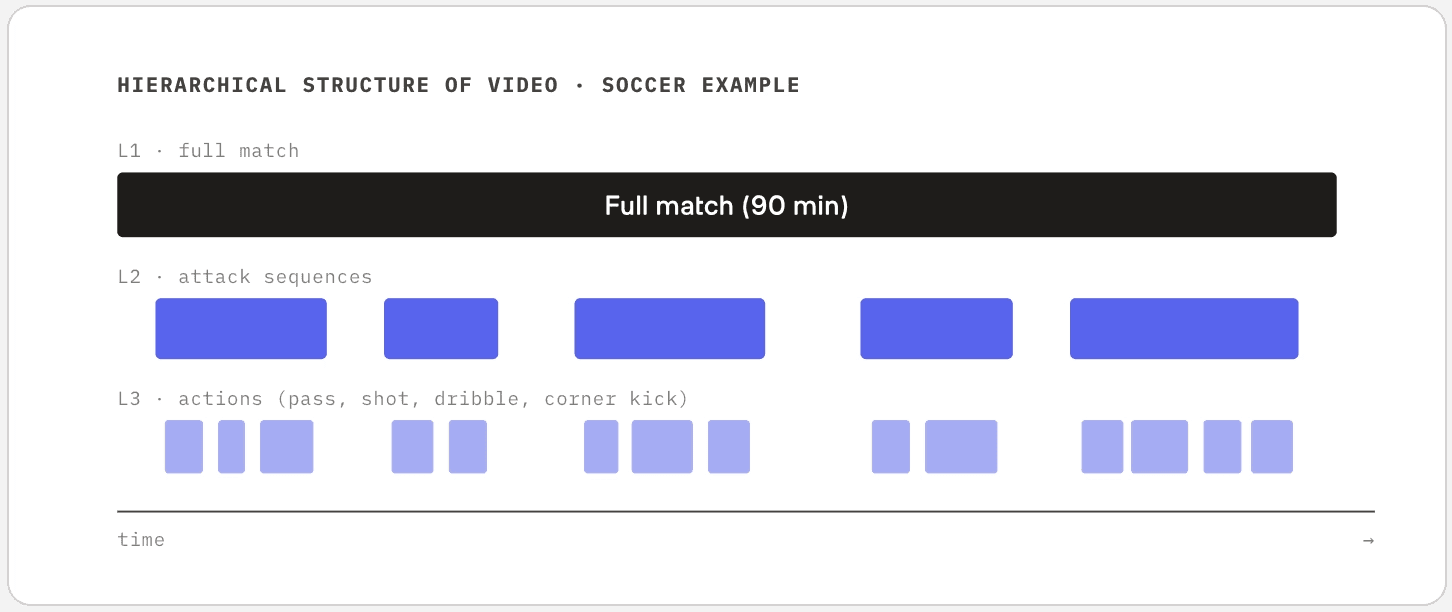

Given a long video, where do you cut to get a meaningful unit? It's similar in spirit to chunking documents for text-domain RAG, but video is harder because the content has a natural hierarchy along the time axis. A soccer match has the whole game, attack sequences inside it, and individual passes or shots inside those. You have to model this hierarchy before you can distinguish "find the attack sequence" from "find the through-pass."
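
One way to picture that hierarchy is as a tree of nested time intervals. The Python sketch below is an illustrative assumption (the `Segment` class, labels, and timestamps are hypothetical), not how TwelveLabs represents segments: the root is the match, its children are attack sequences, and their children are individual actions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import List


@dataclass
class Segment:
    label: str
    start_s: float
    end_s: float
    children: List[Segment] = field(default_factory=list)

    def add(self, child: Segment) -> Segment:
        # Enforce nesting: a child interval must lie inside its parent.
        if not (self.start_s <= child.start_s and child.end_s <= self.end_s):
            raise ValueError("child must lie inside its parent")
        self.children.append(child)
        return child


def segments_at_depth(root: Segment, depth: int) -> List[Segment]:
    """Every segment `depth` levels below the root (the root is depth 0)."""
    level = [root]
    for _ in range(depth):
        level = [child for seg in level for child in seg.children]
    return level


def enclosing(root: Segment, t: float) -> List[Segment]:
    """Segments containing time t, ordered from coarsest to finest."""
    path: List[Segment] = []
    node = root if root.start_s <= t < root.end_s else None
    while node is not None:
        path.append(node)
        node = next((c for c in node.children if c.start_s <= t < c.end_s), None)
    return path


def example_match() -> Segment:
    match = Segment("match", 0, 5400)
    attack1 = match.add(Segment("attack 1", 600, 660))
    attack1.add(Segment("through-pass", 610, 612))
    attack1.add(Segment("shot", 655, 658))
    attack2 = match.add(Segment("attack 2", 3000, 3030))
    attack2.add(Segment("corner kick", 3000, 3004))
    return match


if __name__ == "__main__":
    m = example_match()
    print([s.label for s in segments_at_depth(m, 1)])
    print([s.label for s in enclosing(m, 611)])
```

Walking down the tree to a fixed depth returns segments at one granularity, while `enclosing` returns every layer that contains a given moment, from coarse to fine.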

Academia has worked on this under the banner of boundary detection, and diffusion-based approaches have recently opened up a new direction. The more interesting move, though, is leaving the single-layer framing behind. Even within one video, boundaries exist at multiple time scales at once: a whole-game boundary, a possession boundary, an individual-action boundary. Modeling those layers jointly is closer to how production teams actually need to query their footage.
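
To illustrate boundaries existing at several time scales, here is a deliberately simple stand-in: a sliding-window novelty score over per-frame feature vectors, where the window size acts as the time scale. This is a classical heuristic, not the diffusion-based methods mentioned above and not TwelveLabs's approach; the feature vectors are assumed to come from some frame encoder, and the toy two-dimensional features in the demo are made up.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def _mean(vectors: Sequence[Vector]) -> List[float]:
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def _cosine_distance(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 0.0 if norm == 0 else 1.0 - dot / norm


def boundary_scores(features: Sequence[Vector], window: int) -> List[float]:
    """Novelty at frame t: distance between the mean of the `window` frames
    before t and the mean of the `window` frames starting at t."""
    scores = [0.0] * len(features)
    for t in range(window, len(features) - window + 1):
        before = _mean(features[t - window:t])
        after = _mean(features[t:t + window])
        scores[t] = _cosine_distance(before, after)
    return scores


def detect_boundaries(features: Sequence[Vector], window: int,
                      threshold: float) -> List[int]:
    s = boundary_scores(features, window)
    # Keep local maxima at or above the threshold (ties go to the later frame).
    return [t for t in range(1, len(s) - 1)
            if s[t] >= threshold and s[t] >= s[t - 1] and s[t] > s[t + 1]]


def multiscale_boundaries(features: Sequence[Vector], windows: Sequence[int],
                          threshold: float) -> Dict[int, List[int]]:
    """Run the detector independently at each window size (time scale)."""
    return {w: detect_boundaries(features, w, threshold) for w in windows}


if __name__ == "__main__":
    A, B = [1.0, 0.0], [0.0, 1.0]
    # Frames 0-9: scene A; 10-11: a brief action; 12-21: scene A; 22-41: scene B.
    frames = [A] * 10 + [B] * 2 + [A] * 10 + [B] * 20
    print(multiscale_boundaries(frames, windows=[1, 6], threshold=0.5))
    # {1: [10, 12, 22], 6: [22]}
```

On the toy sequence, the 1-frame window reports the brief action and the scene change, while the 6-frame window reports only the scene change. Each scale here is computed independently, so nothing ties the fine boundaries to the coarse ones; that coupling is what modeling the layers jointly would add.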

Within the same video, the segment unit you need to return depends on which layer the query targets. Taking the soccer hierarchy as L1 (the whole game), L2 (attack sequences), and L3 (individual actions), "highlights of the first half" lives at L2 and "all corner kicks" lives at L3. Academic boundary detection tends to handle one layer at a time; real usage requires modeling several at once.

The efficiency problem in embeddings

Embedding models have a similar story. VLM-based embeddings (VLM2Vec and others) have drawn a lot of attention. The trouble is that this approach runs the entire LLM stack on top of the visual encoder, even when all you need is an embedding. No matter how well you optimize for the hardware, inference is structurally slow.
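
A back-of-the-envelope FLOPs count shows why the slowdown is structural rather than a hardware problem. This sketch uses the common rule of thumb that a dense transformer's forward pass costs about 2 × parameters FLOPs per token (ignoring the attention term), and every parameter and token count in it is hypothetical, not taken from VLM2Vec or any TwelveLabs model.

```python
def forward_flops(params: float, tokens: int) -> float:
    """Rule-of-thumb forward cost of a dense transformer: ~2 FLOPs per
    parameter per token (attention's sequence-length term ignored)."""
    return 2.0 * params * tokens


def encoder_only_flops(enc_params: float, visual_tokens: int) -> float:
    # Embedding taken directly from the visual encoder's output.
    return forward_flops(enc_params, visual_tokens)


def vlm_embedding_flops(enc_params: float, visual_tokens: int,
                        llm_params: float, prompt_tokens: int) -> float:
    # Visual tokens go through the encoder, then through the full LLM
    # together with the text prompt. Assumes no token compression in between.
    return (forward_flops(enc_params, visual_tokens)
            + forward_flops(llm_params, visual_tokens + prompt_tokens))


# Hypothetical sizes, for illustration only.
ENC_PARAMS = 0.4e9
LLM_PARAMS = 7e9
VISUAL_TOKENS = 8 * 256  # e.g. 8 sampled frames x 256 patch tokens
PROMPT_TOKENS = 32

if __name__ == "__main__":
    enc = encoder_only_flops(ENC_PARAMS, VISUAL_TOKENS)
    vlm = vlm_embedding_flops(ENC_PARAMS, VISUAL_TOKENS, LLM_PARAMS, PROMPT_TOKENS)
    print(f"encoder-only: {enc:.2e} FLOPs per clip")
    print(f"VLM-based:    {vlm:.2e} FLOPs per clip ({vlm / enc:.1f}x)")
```

With these made-up numbers the VLM path costs roughly 19 times the encoder-only path per clip, and nearly all of it is the LLM term, which hardware tuning can speed up but not remove.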

For a customer who has to process tens of thousands of hours of video daily, accuracy numbers alone don't tell the whole story. Our own models are at SOTA on academic benchmarks, yet two models hitting the same score can have completely different inference cost structures. In production, that gap determines whether a model is actually deployable at scale. Academic evaluation tends not to capture that dimension, but in practice it's decisive.
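
The same point in deployment terms: at a fixed daily volume, throughput (hours of video processed per GPU-hour) sets how many GPUs must run around the clock. The minimal Python sketch below assumes 20,000 hours of footage per day and two hypothetical models with equal accuracy but throughputs of 50 and 2 video-hours per GPU-hour; none of these figures come from real models.

```python
import math

HOURS_PER_DAY = 24


def daily_gpu_hours(video_hours_per_day: float, throughput: float) -> float:
    """GPU-hours needed per day; throughput = video-hours per GPU-hour."""
    if throughput <= 0:
        raise ValueError("throughput must be positive")
    return video_hours_per_day / throughput


def gpus_needed(video_hours_per_day: float, throughput: float) -> int:
    """GPUs running 24/7 to keep up with the daily volume."""
    return math.ceil(daily_gpu_hours(video_hours_per_day, throughput) / HOURS_PER_DAY)


if __name__ == "__main__":
    volume = 20_000  # hypothetical hours of footage per day
    for name, throughput in {"model A": 50, "model B": 2}.items():
        print(f"{name}: {daily_gpu_hours(volume, throughput):,.0f} GPU-hours/day, "
              f"{gpus_needed(volume, throughput)} GPUs")
```

Under those assumptions the first model needs 17 GPUs and the second 417, a 25x difference in daily GPU-hours (400 vs. 10,000) that a shared accuracy number hides entirely.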

Focus and trade-offs

"You have so much video data, couldn't you do world models too?" We get that question a lot. On raw volume alone, big platforms have far more data than we do, and they can fund long-horizon research without revenue pressure. For a startup, the more pressing question isn't whether to take something on but in what order. World models are something we'll get to eventually; the call we made was to go deeper first on what we already do best.

A startup's lever is focus; doing everything is big-tech territory. Our goal is to occupy an irreplaceable seat in the video processing pipeline, and right now we're deepening our moat where we're already strong.

This gap is the opportunity

The gap is simple to state. Benchmarks evaluate you on edited videos, but real users have to do the editing themselves, stripping raw footage down to what matters. The seat we're trying to occupy sits in that space.

It's the gap between what academia measures with benchmarks and what industry actually pays for, and in video it is unusually wide. In language, academia and industry are looking in roughly the same direction. In video, plenty of things never show up in benchmarks: the things you only learn by using.

That's why so much of our weight goes into shipping products. The gap itself is the moat.


Platform

Enterprise

© 2021-2026 TwelveLabs, Inc. All Rights Reserved
