Rebuilding Our HLS Pipeline In House: How TwelveLabs Eliminated a Costly Playback Bottleneck

Noah Seo, Yeonhoo Park

TwelveLabs rebuilt its HLS transcoding pipeline in-house after the outsourced vendor became too slow and expensive, achieving a 6.6x speed improvement and 87% cost reduction by optimizing data movement, local NVMe disk I/O, and parallel segment uploads rather than FFmpeg settings alone.

Apr 21, 2026

12 Minutes

When people think about “video intelligence,” they often picture model training or retrieval quality. In practice, the user experience is just as dependent on the unglamorous infrastructure that turns raw video into something you can actually watch, scrub, and inspect while results are loading.

This post is a story about one of those unglamorous systems: HLS generation, which is the process of converting a newly uploaded video into a segmented streaming format that plays instantly in a browser. HLS (HTTP Live Streaming) delivers media using a playlist (commonly .m3u8) that points to many small media segments, so players can start quickly, adapt to network conditions, and seek efficiently.

Last year, we hit a painful mismatch: our indexing pipeline became extremely fast, but our playable streaming pipeline (initially outsourced to a managed third-party transcoding service) was comparatively slow and increasingly expensive. The result was a confusing product moment: indexing would finish, but users couldn’t actually watch the video in our Playground because HLS generation was still running.

So we rebuilt it.

1 - The bottleneck we couldn’t ignore

Our trigger was simple: we shipped Marengo 3.0 support for longer videos (up to 4 hours, previously ~2 hours) last year, and suddenly an old limitation became impossible to ignore. For long uploads, playback preparation could lag well behind indexing, which created a frustrating product gap: the system could already understand the video, but the browser still could not play it smoothly. This mismatch showed up both externally and internally. Users could see results before playback was ready, and teams naturally expected the experience to feel “done” once indexing finished.

2 - Build vs buy: why we moved away from the vendor pipeline

We did not immediately decide to rebuild the pipeline ourselves. Before taking that step, we tested whether the vendor's accelerated mode could close enough of the gap.

In our evaluation, acceleration improved throughput by roughly 3.9× compared with the baseline setup. But the result still fell short of the product experience we were aiming for.

It also came at a higher price point. The accelerated path required a more expensive tier, which in our analysis was about 60% costlier than the baseline configuration.

So while acceleration improved performance, it did not change the underlying tradeoff. It made the existing path better, but not good enough (and not cheaply enough) to be the long-term answer.

So our decision was driven by a trio of constraints:

Performance: the user experience was gated by HLS, not indexing.

Cost: the outsourced pipeline had become a material monthly line item and was trending up.

Future requirements: we want to support on-prem deployments, and relying on a specialized cloud vendor for key playback infrastructure makes that direction harder.

That last point is subtle but important. An on-prem, air-gapped deployment forces you to optimize for “what can we run ourselves?” and reduce dependencies that require managed cloud services. Our teams were already moving core async infrastructure toward primitives that can run in Kubernetes and in restricted environments.

An in-house HLS pipeline fits that strategic direction.

3 - What we built: a practical, scalable HLS pipeline

Before touching architecture, we needed to validate something basic: could we get raw FFmpeg transcoding fast enough?

HLS output typically involves segmenting media and writing a playlist file that references those segments. FFmpeg even provides a dedicated HLS muxer for this purpose.
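As a rough illustration of what a single-rendition HLS job looks like, the sketch below builds an FFmpeg argument list that uses the HLS muxer. The codec choices, the 6-second segment length, the file names, and the helper name `build_hls_command` are assumptions made for the example, not the settings described in this post.

```python
from pathlib import Path


def build_hls_command(source: str, out_dir: str, segment_seconds: int = 6) -> list[str]:
    """Build an ffmpeg argv that turns `source` into a VOD HLS rendition."""
    out = Path(out_dir)
    return [
        "ffmpeg",
        "-i", source,                        # local path or URL of the input video
        "-c:v", "libx264",                   # H.264 video (assumed codec choice)
        "-c:a", "aac",                       # AAC audio (assumed codec choice)
        "-f", "hls",                         # select FFmpeg's HLS muxer
        "-hls_time", str(segment_seconds),   # target segment duration in seconds
        "-hls_playlist_type", "vod",         # write a complete on-demand playlist
        "-hls_segment_filename", str(out / "segment_%05d.ts"),
        str(out / "playlist.m3u8"),          # playlist that references the segments
    ]
```

The returned list can be passed to `subprocess.run(cmd, check=True)` on a machine where `ffmpeg` is installed.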

But performance wasn’t the only requirement. We needed a system that could:

run continuously at scale,

integrate into our existing upload → ingest → index workflows, and

fail safely (retry, alert, triage) when real-world video inputs break assumptions.

Our first design proposal formalized a simple approach: build an in-house “HLS convert service” using FFmpeg, with a queue-driven worker fleet.

3.1 - The high-level flow

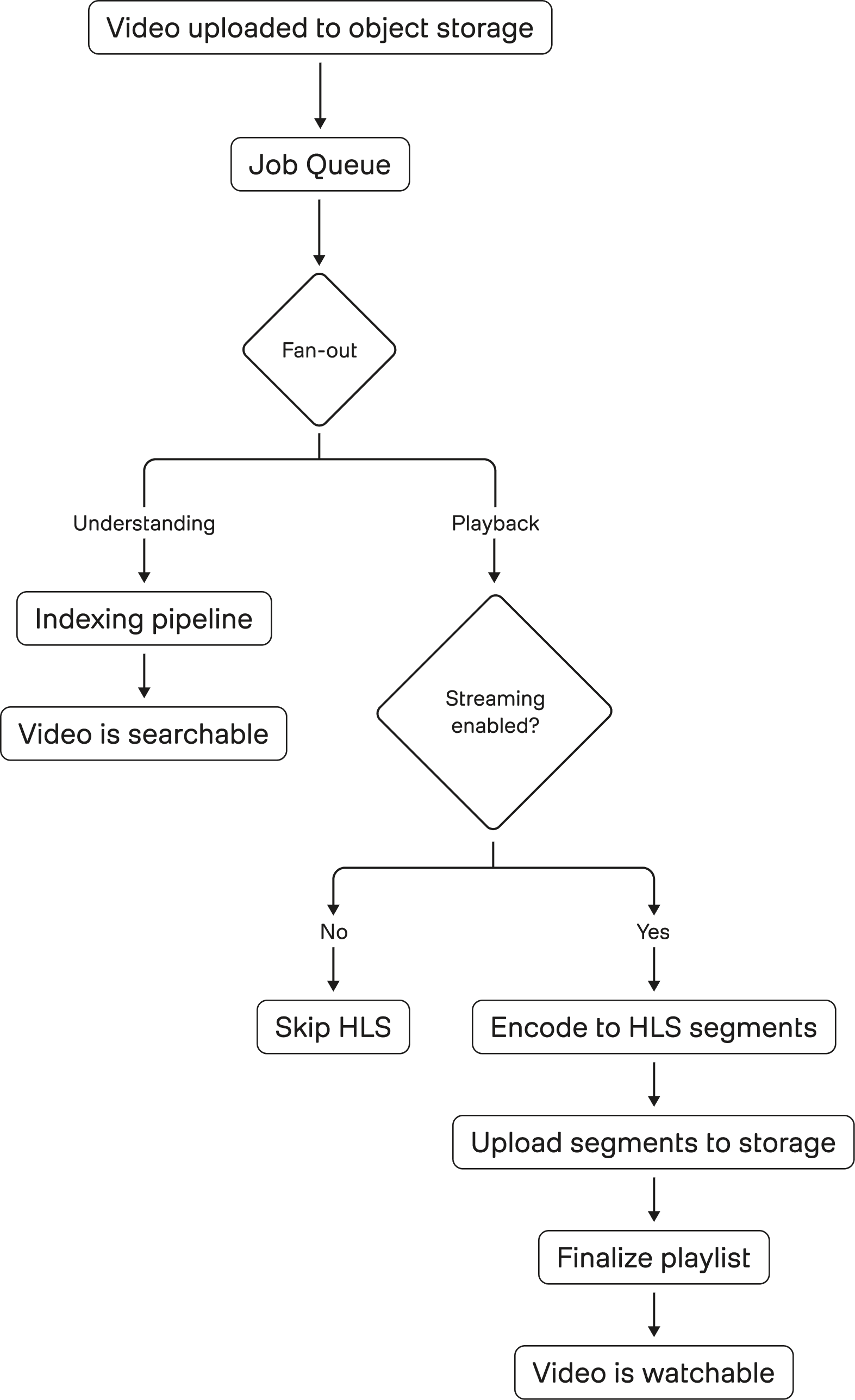

The rough architecture looks like this:

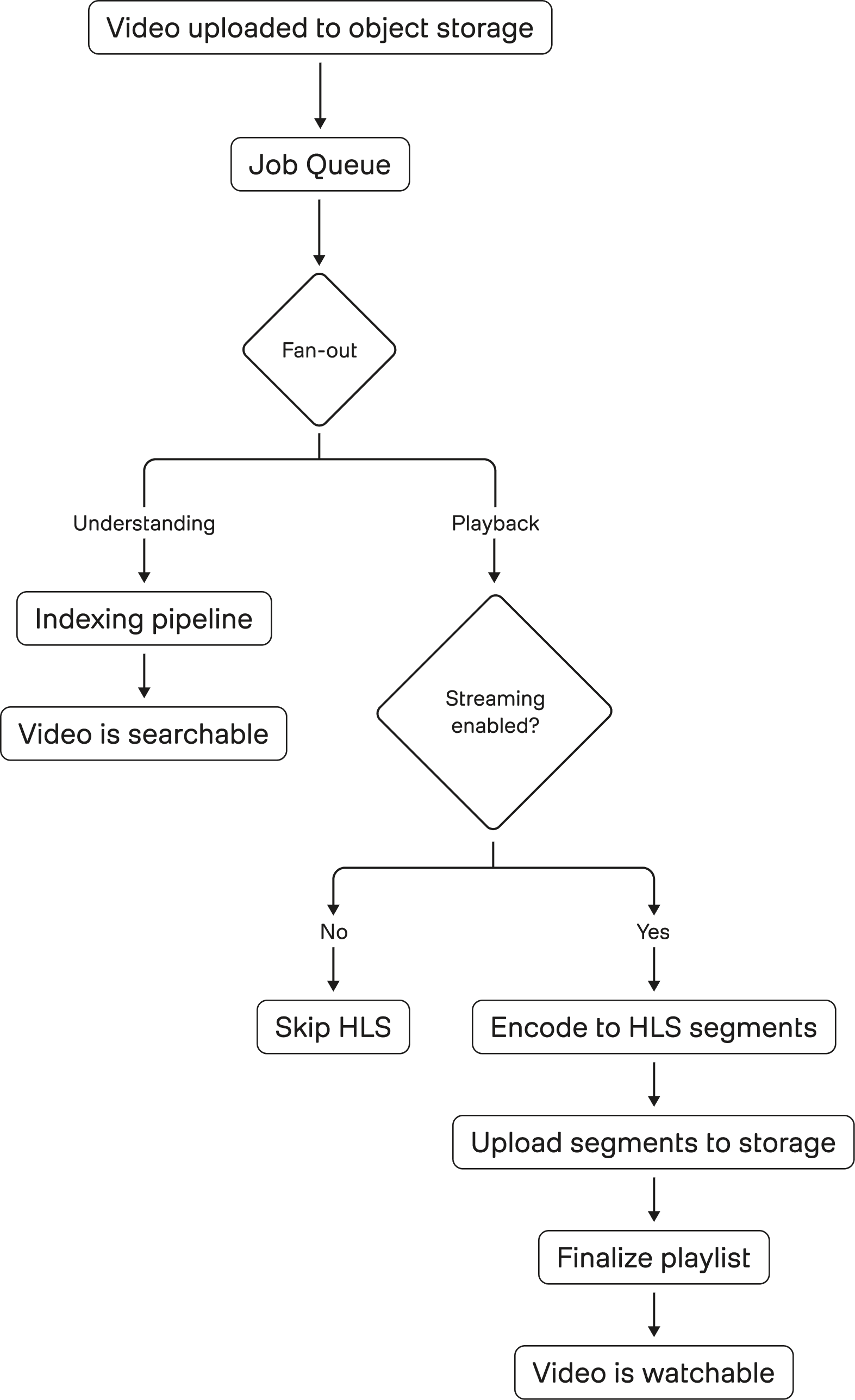

Figure 1: Video ingestion fans out into two independent paths. The understanding pipeline produces searchable representations, while the playback pipeline prepares HLS segments for streaming. These paths are decoupled in intent but share the same input, making any inefficiency in playback directly visible as a product bottleneck.

A backend orchestrator service that produces “convert tasks” into a Redis-based queue and consumes completion messages to update job state.

Redis used as both job queue and message broker, with separate streams for conversion tasks and completion signals (SUCCEEDED/FAILED).

Workers designed as “HLS converter pods” that run FFmpeg, write HLS segments + playlist, and upload results to storage.
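To make the two-stream layout concrete, here is a minimal sketch of the messages that might flow through it. It assumes `client` is a redis-py `redis.Redis` instance (only its `xadd` method is used); the stream names, field names, and function names are hypothetical and chosen for illustration.

```python
TASK_STREAM = "hls:convert:tasks"      # hypothetical stream name
RESULT_STREAM = "hls:convert:results"  # hypothetical stream name


def enqueue_convert_task(client, video_id: str, source_url: str) -> str:
    """Orchestrator side: append one convert task to the task stream."""
    fields = {"video_id": video_id, "source_url": source_url}
    return client.xadd(TASK_STREAM, fields)


def report_completion(client, video_id: str, succeeded: bool, error: str = "") -> str:
    """Worker side: append a SUCCEEDED/FAILED signal to the result stream."""
    fields = {
        "video_id": video_id,
        "status": "SUCCEEDED" if succeeded else "FAILED",
        "error": error,
    }
    return client.xadd(RESULT_STREAM, fields)
```

Keeping tasks and completion signals in separate streams lets the orchestrator and the workers consume independently of each other.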

3.2 - Autoscaling

Encoding workloads are bursty. A demo day or model rollout can create sudden spikes in “videos that need to become playable.” We didn’t want to over-provision a constantly running HLS fleet.

So we tied worker count to queue dynamics using KEDA, a Kubernetes event-driven autoscaler that can scale workloads based on event sources (including Redis).
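The scaling decision itself is simple arithmetic. The sketch below approximates how a queue-length target translates into a replica count: divide the backlog by a per-worker target, round up, and clamp to configured bounds. The function name and the default limits are illustrative assumptions, and the real autoscaler applies additional rules (cooldowns, stabilization windows) that are not modeled here.

```python
import math


def desired_workers(
    pending_tasks: int,
    tasks_per_worker: int,
    min_workers: int = 0,
    max_workers: int = 20,
) -> int:
    """Approximate replica count for a given backlog (illustrative only)."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    wanted = math.ceil(pending_tasks / tasks_per_worker)
    # Clamp to the configured floor and ceiling.
    return max(min_workers, min(max_workers, wanted))
```

With a floor of zero, the fleet can scale away entirely when the queue is empty, which is the point of not running a constantly provisioned HLS fleet.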

To ensure fair usage across customers and mitigate the noisy-neighbor problem, messages are produced into the queue at a target rate that is distributed across customers, so that one customer's burst does not monopolize the workers.
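One possible way to spread queue production across customers is round-robin interleaving. The sketch below is an assumption about how such fairness could be implemented, not a description of the actual producer; the function name and the input shape are hypothetical.

```python
from collections import deque
from typing import Iterator


def interleave_by_customer(pending: dict[str, list[str]]) -> Iterator[tuple[str, str]]:
    """Yield (customer, task) pairs, taking one task per customer per round."""
    queues = {customer: deque(tasks) for customer, tasks in pending.items() if tasks}
    order = deque(queues)  # customers that still have pending tasks
    while order:
        customer = order.popleft()
        yield customer, queues[customer].popleft()
        if queues[customer]:
            order.append(customer)  # rejoin the rotation while work remains
```

With this ordering, a customer who uploads a large batch still gets served, but a customer with a single video is not stuck behind the whole batch.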

3.3 - Reliability

Outsourcing HLS doesn’t eliminate failures; it just externalizes them. Bringing it in house meant accepting that we’d own last-mile reliability.

From the beginning, we planned for fault tolerance:

retry on failure with backoff,

a dead-letter queue for “poison jobs,”

and clear failure reporting and observability so support and engineers can debug quickly.
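The first two items can be sketched in a few lines. This is a minimal illustration, assuming exponential backoff, a fixed attempt limit, and an in-memory list standing in for the dead-letter queue; the names `backoff_delay` and `run_with_retries` and the default values are hypothetical.

```python
import time
from typing import Any, Callable


def backoff_delay(attempt: int, base_seconds: float = 2.0, cap_seconds: float = 60.0) -> float:
    """Exponential backoff: base * 2**attempt, capped at cap_seconds."""
    return min(cap_seconds, base_seconds * (2 ** attempt))


def run_with_retries(
    task: Any,
    handler: Callable[[Any], None],
    dead_letters: list,
    max_attempts: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Run handler(task); retry on failure, then dead-letter the task."""
    last_error = ""
    for attempt in range(max_attempts):
        try:
            handler(task)
            return True
        except Exception as exc:  # a real worker would catch narrower errors
            last_error = repr(exc)
            if attempt < max_attempts - 1:
                sleep(backoff_delay(attempt))
    # Every attempt failed: park the "poison job" for later triage.
    dead_letters.append({"task": task, "error": last_error})
    return False
```

Recording the last error alongside the task is what makes the dead-letter queue useful for triage rather than just a place where jobs disappear.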

And yes, we still hit incidents. In one example, an internal user reported that “HLS is never ending,” and the root-cause investigation pointed to the in-house HLS consumer not processing the request as expected (“hls task is never activated”).

Owning this system forced us to build the operational muscle we’d previously gotten “for free”:

better logging at the start of handlers,

better alert coverage for consumer health,

and better guarantees that queued work doesn’t silently stall.

As a result, we adopted concrete reliability practices such as consumer cleanup via cronjob and heartbeat mechanisms to reduce timeouts.
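As an illustration of how heartbeats and a periodic cleanup job can work together, the sketch below finds consumers whose last heartbeat is older than a timeout. The data shape, the 30-second default, and the function name are assumptions; a cleanup job would then remove or restart the consumers it returns.

```python
def find_stale_consumers(
    last_heartbeat: dict[str, float],
    now: float,
    timeout_seconds: float = 30.0,
) -> list[str]:
    """Return consumers whose most recent heartbeat is older than the timeout."""
    return sorted(
        name
        for name, seen_at in last_heartbeat.items()
        if now - seen_at > timeout_seconds
    )
```

Passing `now` in explicitly (for example from `time.time()`) keeps the check deterministic and easy to test.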

4 - The real speed work wasn’t “FFmpeg flags”

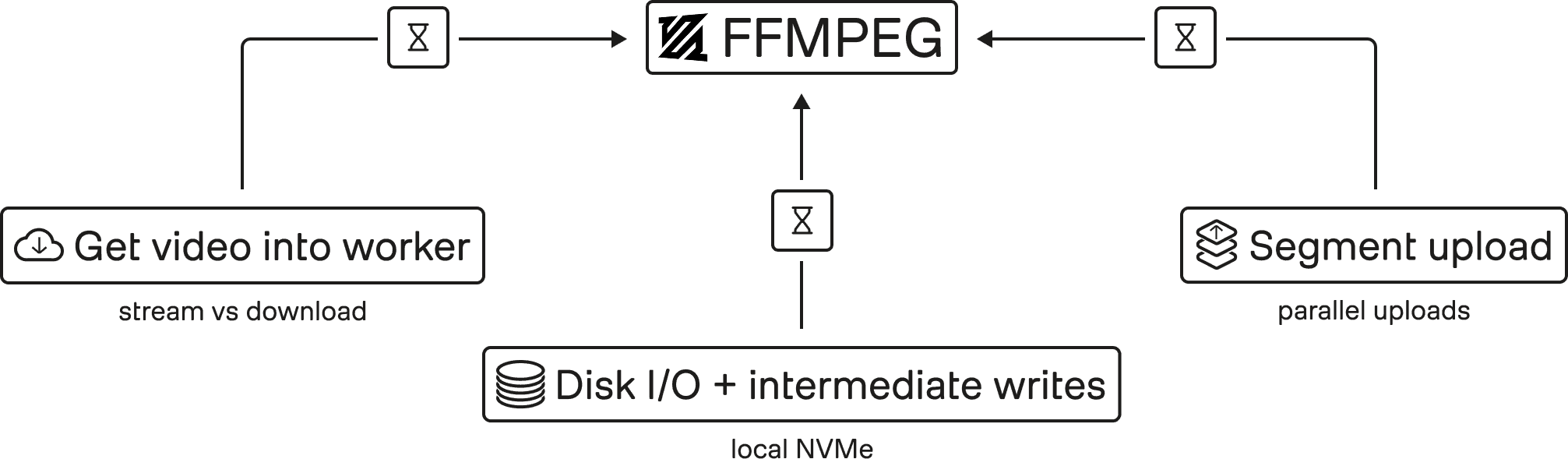

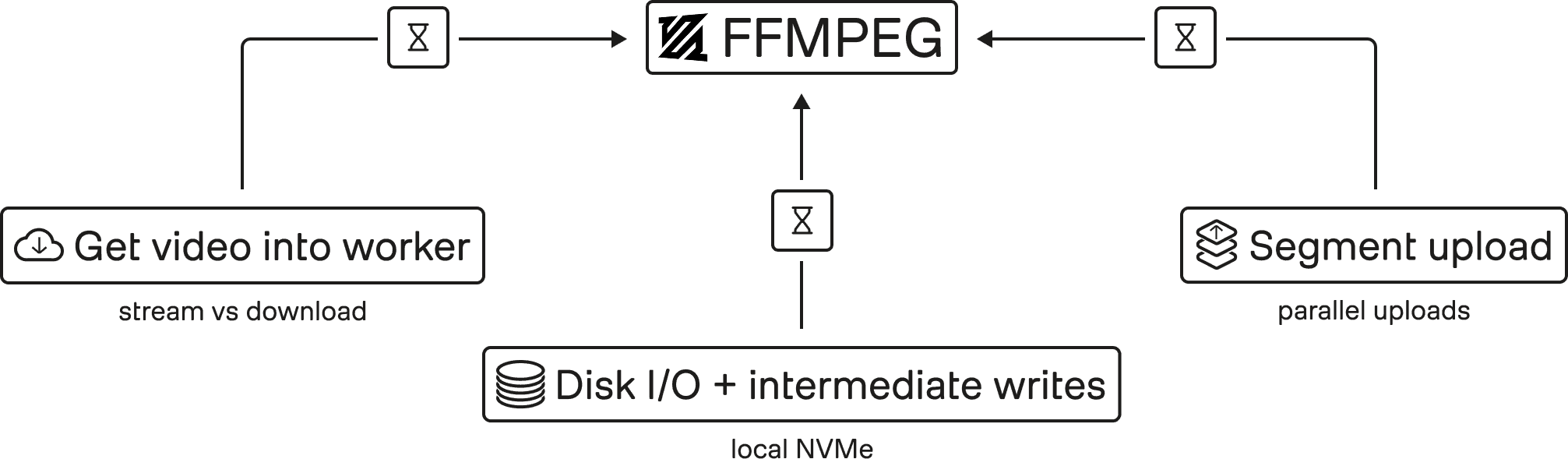

Figure 2: FFmpeg is only one stage in the pipeline. End-to-end latency is dominated by how video moves through the system: getting data into workers (stream vs. download), disk I/O and intermediate writes, and uploading segments back to storage. Optimizing these boundaries (not encoder flags) drives real throughput gains.

A common misconception about video pipelines is that performance is mostly about picking the “right FFmpeg command.” In practice, the big gains often come from everything around FFmpeg.

For us, initial targets weren’t reached immediately. The work included chasing bottlenecks in moving video into workers, disk I/O, and intermediate writes, as well as benchmarking CPU versus GPU choices.

Here are the kinds of design choices that mattered.

4.1 - Stream the source or download up front

Pulling video into a worker sounds trivial until you do it at scale on large uploads.

In practice, we use a hybrid retrieval strategy: depending on file size and runtime heuristics, workers either stream from a presigned URL or download the asset locally. This lets us avoid unnecessary data movement on large uploads while keeping smaller jobs simple and efficient.
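A decision like this can be expressed as a small pure function. The sketch below assumes that large files are streamed and small files are downloaded, with free local disk as one example of a runtime heuristic; the 2 GiB threshold, the names, and the exact rules are hypothetical.

```python
from enum import Enum


class Retrieval(Enum):
    STREAM = "stream"      # read directly from the presigned URL
    DOWNLOAD = "download"  # copy the asset to local disk first


def choose_retrieval(
    size_bytes: int,
    free_disk_bytes: int,
    stream_threshold_bytes: int = 2 * 1024**3,  # assumed 2 GiB cutoff
) -> Retrieval:
    """Pick a retrieval mode from file size and available local disk."""
    if size_bytes >= stream_threshold_bytes:
        return Retrieval.STREAM  # avoid copying very large uploads
    if size_bytes > free_disk_bytes:
        return Retrieval.STREAM  # not enough room for a local copy
    return Retrieval.DOWNLOAD
```

Keeping the policy in one function makes it easy to tune the threshold from benchmark data without touching the worker code around it.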

4.2 - Upload segments in parallel

HLS output is many files: a playlist plus hundreds or thousands of segments, depending on duration and segment size.

So upload behavior matters. Segment upload parallelism can become a first-order factor in end-to-end “time to playable,” especially when workers finish encoding but then serialize uploads.

We treated the pipeline holistically: encode + package + ship.
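To show what parallel segment upload can look like, here is a minimal sketch using a thread pool. The `upload` callable is a stand-in for whatever storage client is in use, and the file patterns, the worker count, and the choice to upload playlists last (so a playlist never references a segment that is not yet in storage) are assumptions made for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable


def upload_hls_output(
    out_dir: str,
    upload: Callable[[Path], None],
    max_workers: int = 16,
) -> int:
    """Upload all segments concurrently, then the playlists; return file count."""
    root = Path(out_dir)
    segments = sorted(root.glob("*.ts"))
    playlists = sorted(root.glob("*.m3u8"))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # list() drains the iterator so any upload exception is re-raised here.
        list(pool.map(upload, segments))
    for playlist in playlists:
        upload(playlist)  # only after every segment has been uploaded
    return len(segments) + len(playlists)
```

Uploads are I/O-bound, so threads are usually enough here; the right `max_workers` depends on the storage backend and network limits.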

4.3 - Local NVMe and disk I/O realities

When you write intermediate outputs (and final HLS segments) to disk, storage throughput becomes part of the critical path. In workloads that process many videos with large HLS outputs, naive EBS-backed disk I/O became a surprisingly significant bottleneck: CPU and memory were available, but the pipeline still stalled on storage.

That is why we deliberately chose local NVMe, building on prior internal platform work to make high-throughput node-local storage usable in EKS. In practice, that meant the system could sustain the write and packaging rates the media pipeline actually needed.

5 - Results: the pipeline got faster, cheaper, and more deployable

Figure 3: By redesigning the system around data movement instead of isolated encoding steps, the pipeline achieves three concrete improvements: faster time-to-playable, lower infrastructure cost, and greater deployment flexibility. The result is a playback system that scales with video volume instead of becoming a bottleneck.

We measured success in “time to playable,” cost, and whether we were on a path to vendor feature parity.

5.1 - Performance

Compared with the previous pipeline, average processing speed improved by about 6.6×, reducing the time required to make long videos playable from over an hour to just a few minutes.

We still track downstream bottlenecks (because the product experience is never one number), but this was enough to eliminate the most painful “indexing finished, playback isn’t ready” confusion.

5.2 - Cost

Using our previous managed setup as a baseline, HLS preparation cost dropped from roughly $0.045 to $0.006 per video hour in compute, an ~87% reduction. Even after accounting for storage, the in-house pipeline remained dramatically cheaper.
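The percentage follows directly from the two per-hour figures above. A short check of the arithmetic (the helper name is just for illustration):

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100


reduction = percent_reduction(0.045, 0.006)
print(f"{reduction:.1f}% cheaper per video hour")  # prints "86.7% cheaper per video hour"
```

Put another way, the old compute cost per video hour was 7.5 times the new one.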

5.3 - Future requirements

Building HLS in house isn’t only about speed and cost. It’s also about compatibility with future deployment models.

We’re actively investing in infrastructure choices that work in air-gapped environments and reduce operational overhead for on-prem deployments.

An in-house HLS pipeline fits that same pattern: fewer external dependencies, more control over the operational envelope, and fewer “core product features” that are blocked on third-party cloud availability or pricing structures.

6 - Broader lessons for any engineering team

The story here is “HLS migration,” but the takeaways are broader.

First, optimize for the user’s critical path, not the team’s favorite subsystem. We were proud of fast indexing, but users experienced the product through a different bottleneck: playback readiness.

Second, benchmark end-to-end and don’t overfocus on the obvious hotspots. We pursued bottlenecks beyond FFmpeg itself (data movement, disk I/O, upload patterns, and queue orchestration) because “the command is fast” doesn’t mean “the system is fast.”

Third, bringing infrastructure in house isn’t purely a technical decision; it’s an economic and product decision. The driver wasn’t “we want to own transcoding”: it was (a) user-visible latency, (b) a cost curve that didn’t make sense, and (c) future deployment requirements.

Fourth, if you bring it in house, treat reliability as the product. We hit issues like consumers not activating tasks and had to improve logging, monitoring, and operational practices (heartbeats, cleanup cronjobs, alerting).

Finally, ship incrementally. Our goals were explicit: target a higher real-time ratio and reach feature parity with the vendor where it mattered for user experience. We didn’t need perfection on day one; we needed a safer, cheaper, faster path that we could iterate on.

© 2021 - 2026 TwelveLabs, Inc. All Rights Reserved
