How TwelveLabs Saves Hours of Manual Video Annotation with a Few API Calls

Nathan Che

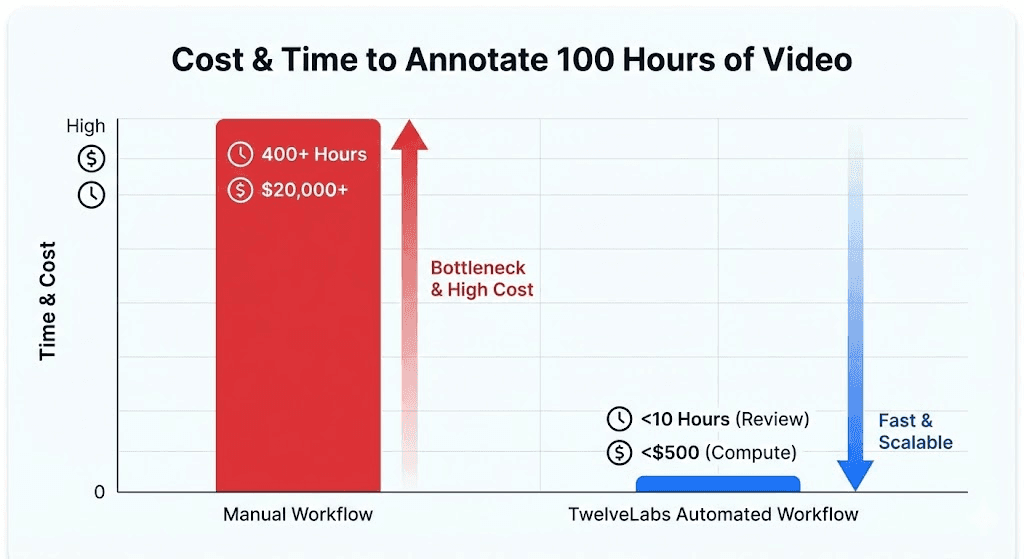

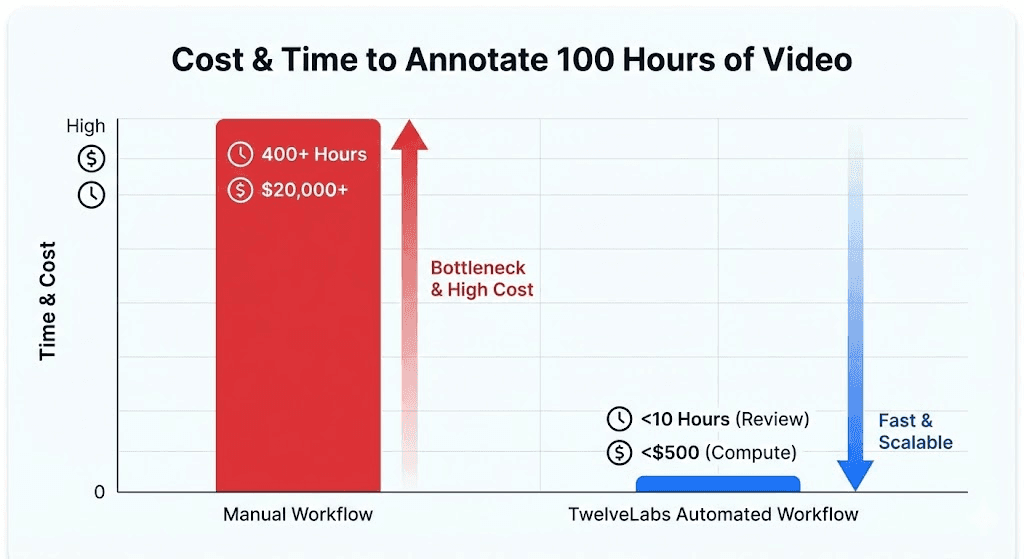

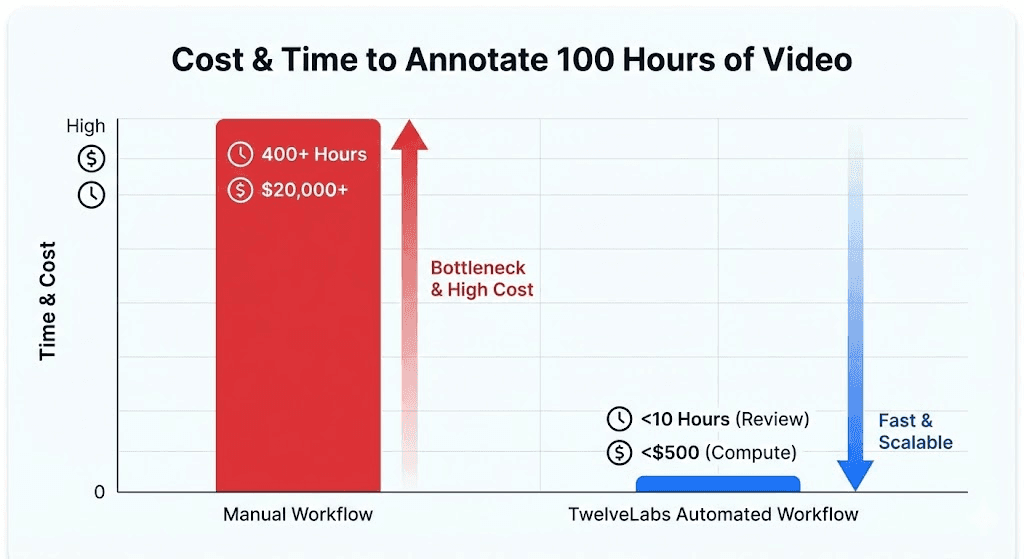

Annotating one hour of video can take a human reviewer up to 4 hours, at up to $100 per hour in specialized domains. This tutorial builds a Next.js reference application that replaces that workflow using TwelveLabs Marengo embeddings and Pegasus structured analysis. The output: timestamped, schema-enforced training labels exported as JSON, CSV, or COCO, ready for your ML pipeline.

Mar 9, 2026

6 Minutes

Introduction

Labeling video data is one of the most expensive bottlenecks in machine learning. A single hour of footage can require up to 4 hours of human annotation time, and at up to $100 per hour for domain specialists (radiologists, safety auditors, sports analysts), the math stops working long before you reach your back catalog.

The footage exists. Dashcams, retail cameras, warehouse feeds, surgical recordings, sports broadcasts: enterprises sit on thousands of hours of video containing precisely the training signals their models need. But the path from raw footage to structured labels still runs through a labeling platform where human reviewers scrub frame by frame.

This tutorial builds a different pipeline. Instead of sending video to annotators, we send it to TwelveLabs' multimodal foundation models. Marengo encodes each video into dense, temporal embeddings that capture visual, audio, and contextual information in 512 dimensions. Pegasus then reasons over those embeddings and returns structured, timestamped annotations, complete with scene classifications, object detections, and confidence scores, all enforced by a strict JSON schema.

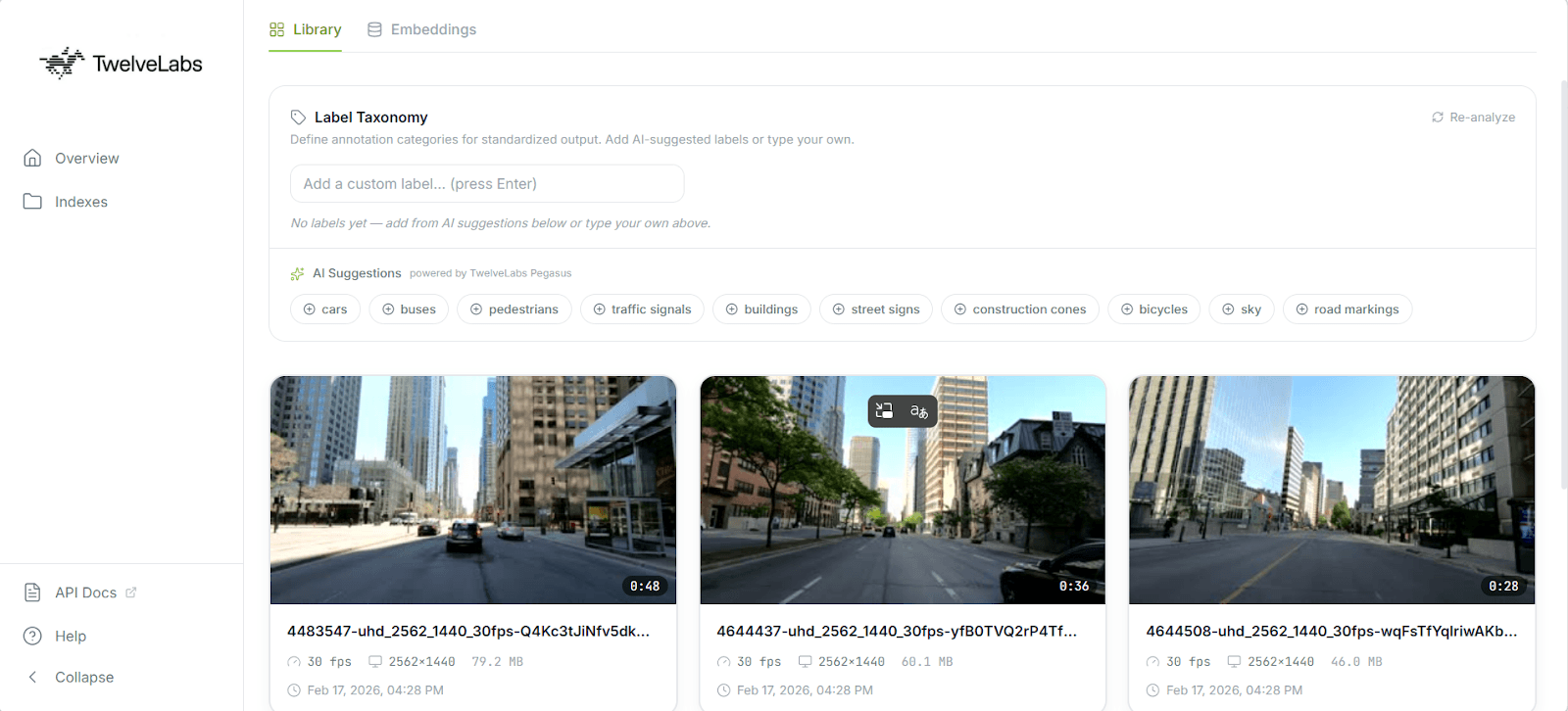

By the end of this walkthrough, you will have a working Next.js application that indexes video, auto-generates training labels against a custom taxonomy, visualizes your dataset in 2D embedding space, and exports to COCO, JSON, or CSV for direct ingestion into PyTorch or any standard ML pipeline.

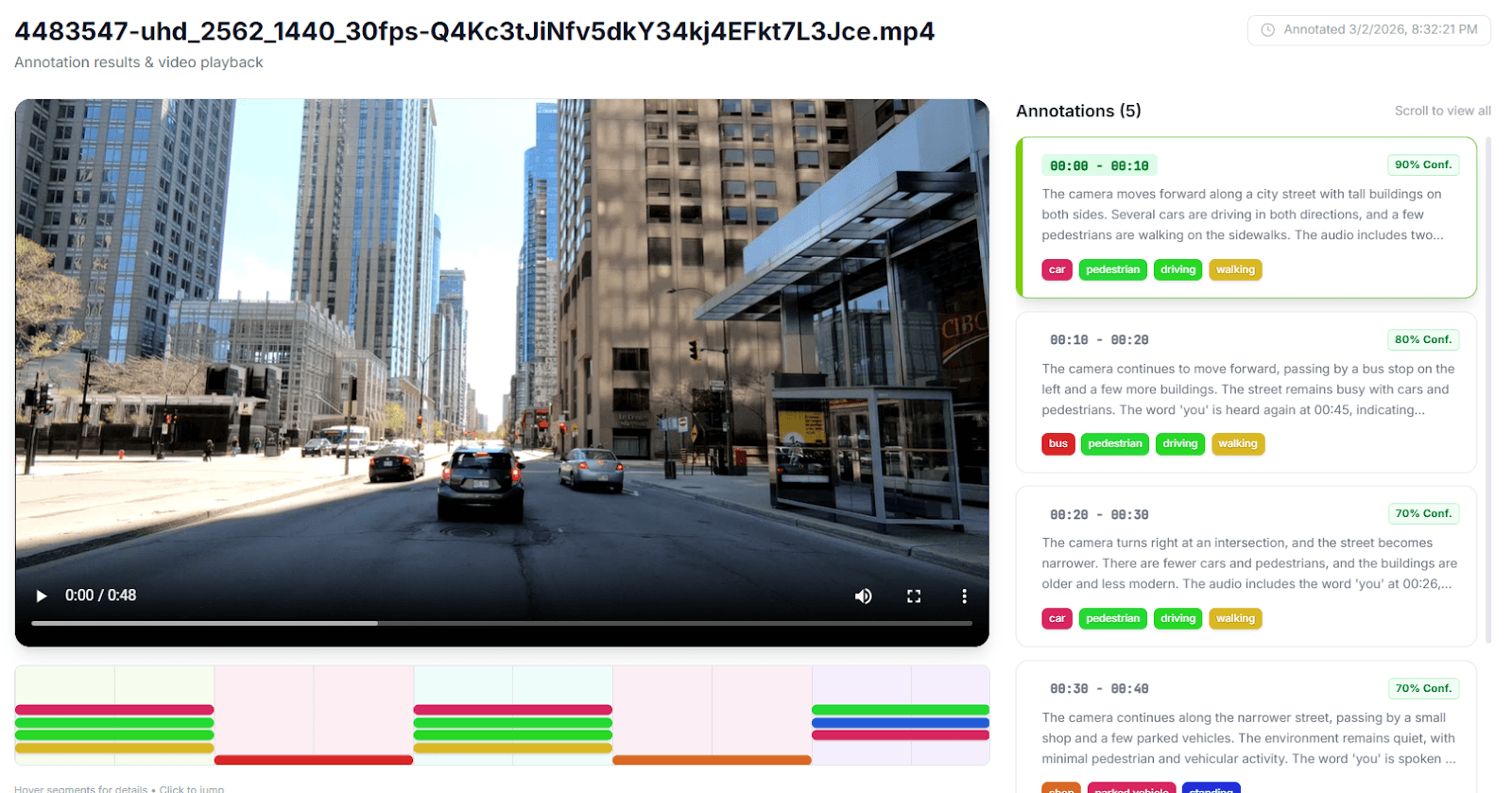

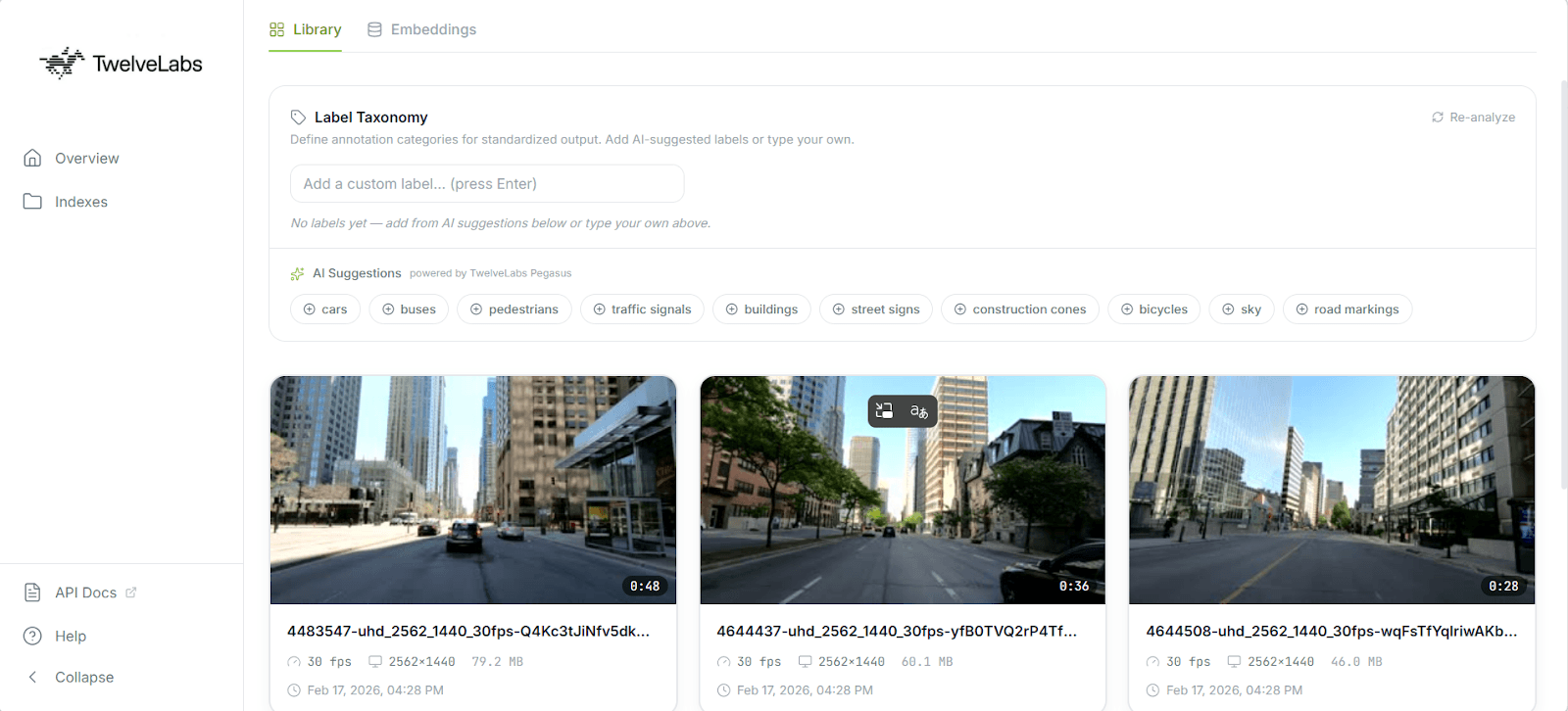

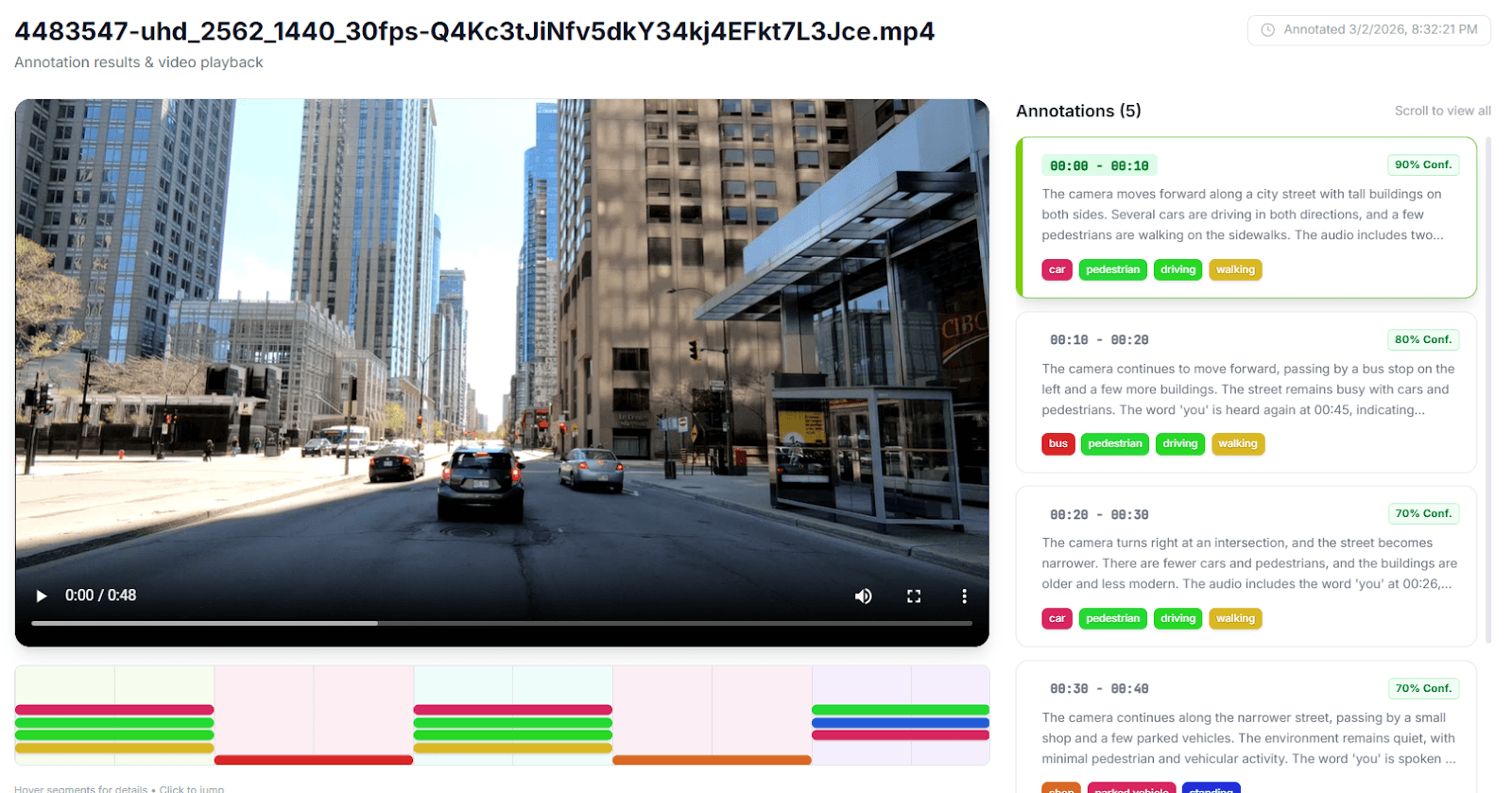

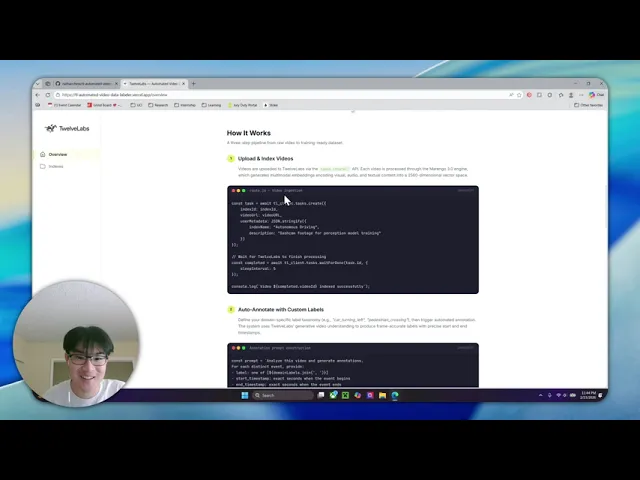

Here is the finished application in action:

Architecture Overview

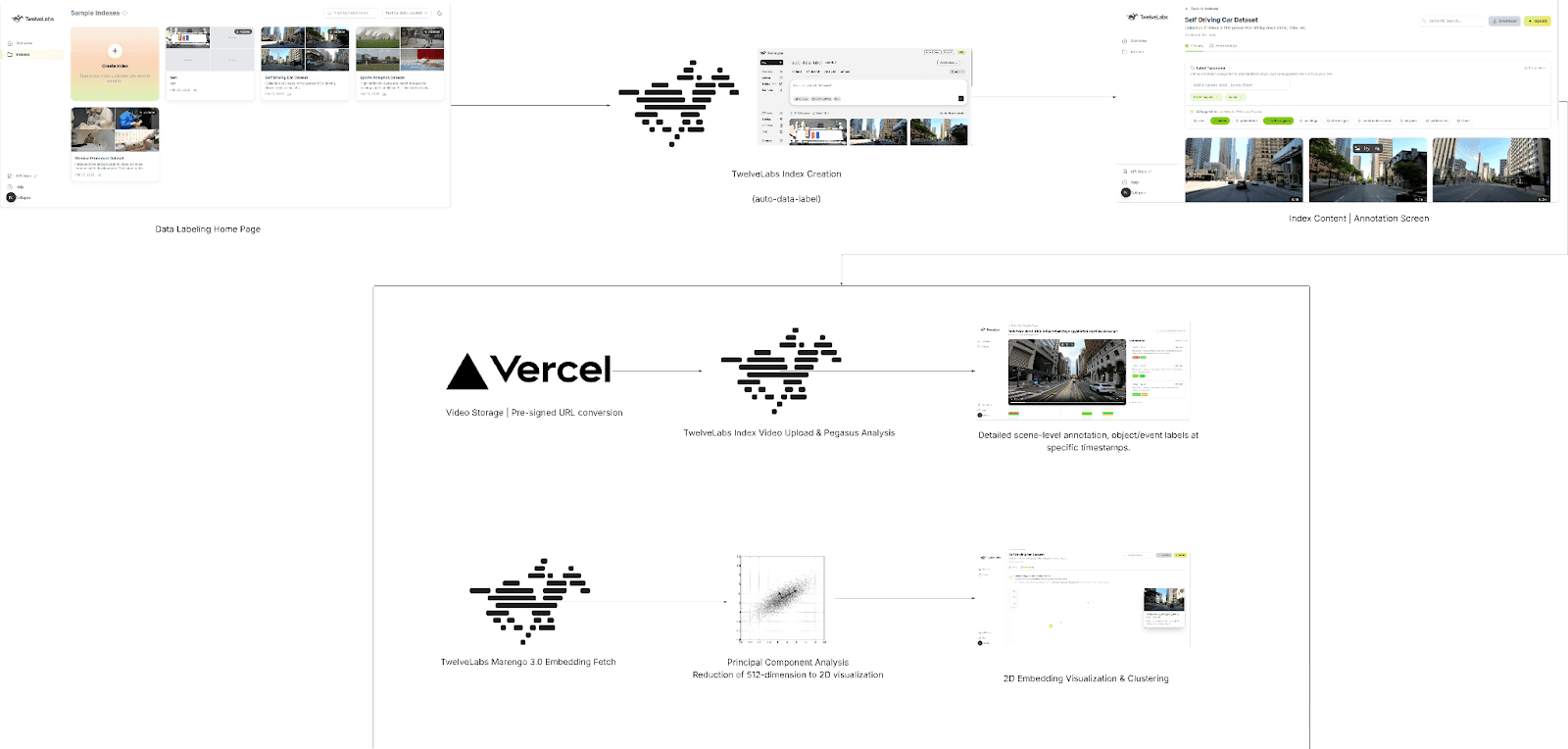

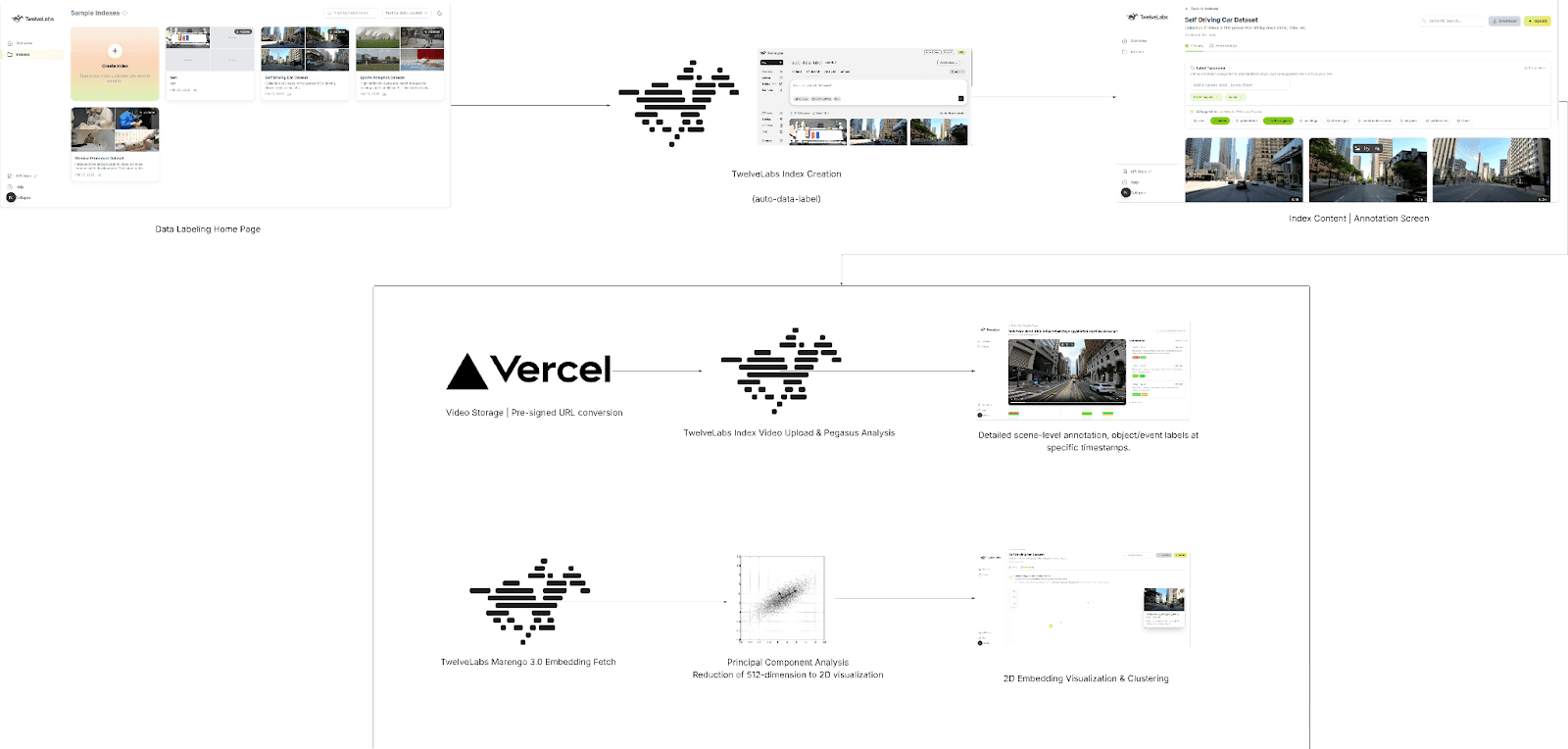

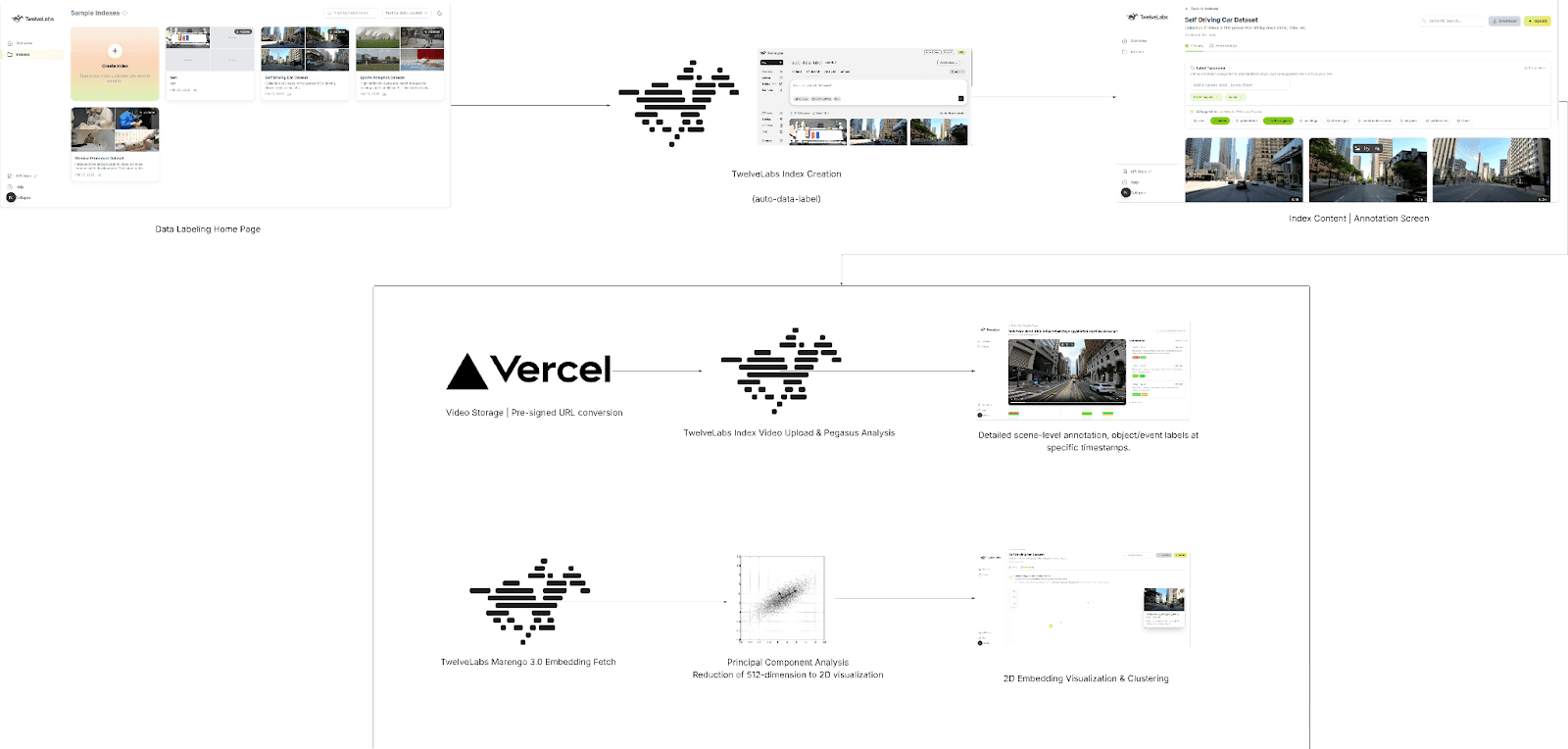

Before writing any code, here is the full pipeline. Each layer was chosen to solve a specific constraint of production video annotation.

Next.js 16 + React 19 handles both the frontend dashboard and the backend API routes. Serverless functions let us orchestrate the entire annotation pipeline without running a persistent server, which matters when you want ISV partners or internal teams to deploy this with a single vercel deploy.

Vercel Blob provides temporary video storage. Serverless environments impose strict payload limits (4.5 MB on Vercel), so the client uploads video files directly to Blob storage and passes only the resulting public URL to our API routes. This decouples upload size from function constraints.

TwelveLabs Marengo extracts 512-dimensional vector embeddings from each video. These are not frame-level features: Marengo processes video as a continuous multimodal signal across visual, audio, and temporal dimensions, producing a sequence of segment-level embeddings that capture semantic meaning rather than pixel data. This is what makes downstream clustering and visualization possible without any manual feature engineering.

TwelveLabs Pegasus (Analyze API) acts as the reasoning engine. Given the embeddings and a user-defined label taxonomy, Pegasus generates structured, machine-readable annotations with timestamps, scene classifications, and detected objects, all returned as JSON that maps directly to ML training formats.

For a deeper look at the data flow, the full architecture diagram is available on LucidChart: [TwelveLabs] - Automated Video Data Labeling Solution

Step 1: Prerequisites & Setup

You will need three things to run this project locally:

Node.js 18+ (20+ recommended)

TwelveLabs API Key from the TwelveLabs Dashboard

Vercel Blob Token for handling temporary video uploads

Clone the repository and install dependencies:

    git clone https://github.com/nathanchess/tl-automated-video-data-labeler.git
    cd tl-automated-video-data-labeler
    npm install

Set up your .env.local file:

    TL_API_KEY=your_twelvelabs_api_key_here
    TL_INDEX_NAME=your_default_index_name_here
    BLOB_READ_WRITE_TOKEN=your_vercel_blob_read_write_token_here

Start the development server with npm run dev. The dashboard will be available at http://localhost:3000/indexes.

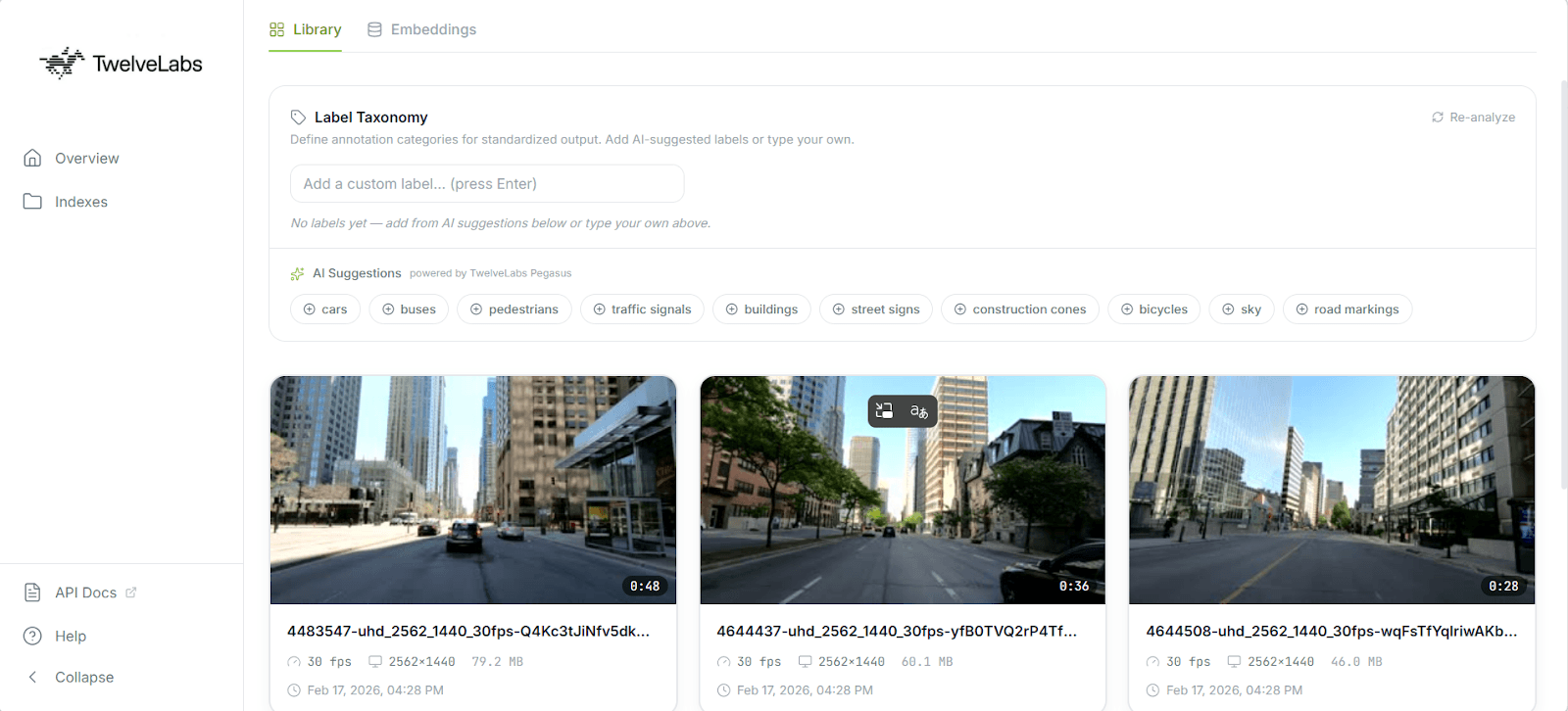

Step 2: Video Ingestion and Indexing

The ingestion flow begins when a user drops video files into the dashboard UI. The client uploads each file to Vercel Blob, then sends the resulting public URLs to a Next.js API route that triggers TwelveLabs indexing.
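On the client side, the request that hands those URLs to the API route might be assembled roughly as follows. This is a minimal sketch, not the repository's code: the helper name buildIngestRequest is hypothetical, the /api/videos path is inferred from the route file location, and the Blob upload itself (for example via the upload helper in @vercel/blob/client) is assumed to have already produced the URLs. The body keys videoURLs and metadata match what the server route reads.

```javascript
// Vercel's documented request body limit for serverless functions (4.5 MB).
const MAX_FUNCTION_BODY_BYTES = 4.5 * 1024 * 1024;

// Hypothetical helper: builds the fetch() arguments for the indexing route.
// Only URLs travel through the function, never the video bytes themselves.
function buildIngestRequest(videoURLs, metadata = {}) {
  // Keep only well-formed https URLs; new URL() throws on malformed input.
  const urls = videoURLs.filter((candidate) => {
    try {
      return new URL(candidate).protocol === 'https:';
    } catch {
      return false;
    }
  });
  if (urls.length === 0) {
    throw new Error('No valid https video URLs to index');
  }

  const body = JSON.stringify({ videoURLs: urls, metadata });
  return {
    url: '/api/videos', // assumed from src/app/api/videos/route.js
    init: {
      method: 'POST',
      headers: { 'Content-Type': 'application/json' },
      body,
    },
    // Size of the JSON payload, for comparison against the function limit.
    bodyBytes: new TextEncoder().encode(body).length,
  };
}

// Usage (in the browser, after the Blob uploads have resolved):
//   const { url, init } = buildIngestRequest(blobUrls, { camera: 'dock-3' });
//   const response = await fetch(url, init);
```

Because the payload contains only short strings, its size stays far below the function limit no matter how large the uploaded videos are.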

Here is the server-side logic in src/app/api/videos/route.js:

    import { TwelveLabs } from 'twelvelabs-js';
    import { NextResponse } from 'next/server';

    const tl_client = new TwelveLabs({ apiKey: process.env.TL_API_KEY });

    export async function POST(request) {
      const { videoURLs, metadata } = await request.json();

      // 1. Locate the target index by name
      const indexPager = await tl_client.indexes.list();
      let indexId = null;
      for await (const index of indexPager) {
        if (index.indexName === process.env.TL_INDEX_NAME) {
          indexId = index.id;
          break; // stop paging once the index is found
        }
      }
      if (!indexId) return NextResponse.json({ error: 'Index not found' }, { status: 404 });

      // 2. Create one indexing task per video and wait for completion
      for (const videoURL of videoURLs) {
        const task = await tl_client.tasks.create({
          indexId,
          videoUrl: videoURL,
          userMetadata: JSON.stringify(metadata),
        });

        // Poll every 5 seconds until the task reaches a terminal state
        const completedTask = await tl_client.tasks.waitForDone(task.id, {
          sleepInterval: 5,
        });

        if (completedTask.status !== 'ready') {
          throw new Error(`Task ${completedTask.id} failed`);
        }
      }

      return new Response('ok');
    }

Production note: Video processing is fundamentally asynchronous. The waitForDone call here blocks the HTTP request until indexing completes, which works for a reference application processing a handful of clips. For production pipelines handling hours of footage, offload indexing to a background queue (Inngest, Upstash, or similar) and use TwelveLabs webhooks to notify your application when processing is complete. The SDK fully supports this pattern.
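One way to picture the non-blocking variant is a function that creates the indexing tasks and returns their IDs immediately, leaving completion to a queue worker or webhook. The sketch below is an illustration under assumptions: enqueueIndexing is a hypothetical name, the client is passed in so the function can be exercised with a stub, and only the tasks.create call shown in the route above is used. Webhook registration and payload handling are not shown.

```javascript
// Hypothetical helper: starts indexing for each URL without waiting for it
// to finish. `client` is expected to expose tasks.create({ indexId, videoUrl })
// as used in the route handler above.
async function enqueueIndexing(client, indexId, videoURLs) {
  // allSettled lets one bad URL fail without discarding the other tasks.
  const results = await Promise.allSettled(
    videoURLs.map((videoUrl) => client.tasks.create({ indexId, videoUrl }))
  );

  const queued = [];
  const failed = [];
  results.forEach((result, i) => {
    if (result.status === 'fulfilled') {
      queued.push({ videoUrl: videoURLs[i], taskId: result.value.id });
    } else {
      const reason = result.reason;
      failed.push({
        videoUrl: videoURLs[i],
        error: String(reason && reason.message ? reason.message : reason),
      });
    }
  });

  // The caller can respond with HTTP 202 and these task IDs, then let a
  // background worker or webhook handler record completion later.
  return { queued, failed };
}
```

The route then returns in the time it takes to create the tasks, and the dashboard can show per-video status from the stored task IDs.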

Step 3: Extracting and Pooling Embeddings

Once videos are indexed, we retrieve them along with their Marengo embeddings. These vectors are what power semantic grouping and the 2D visualization we build later.

    const videoPager = await tl_client.indexes.videos.list(indexId);
    const videos = [];

    for await (const video of videoPager) {
      let embeddings = [];
      try {
        // Fetch the video record together with its visual embeddings
        const videoData = await tl_client.indexes.videos.retrieve(indexId, video.id, {
          embeddingOption: ['visual'],
        });
        const segments = videoData.embedding?.videoEmbedding?.segments || [];

        if (segments.length > 0) {
          const dim = segments[0].float?.length;
          if (dim) {
            const sum = new Array(dim).fill(0);
            let count = 0;

            // Average the embeddings across all temporal segments
            for (const seg of segments) {
              // Skip segments with missing or mismatched vectors
              if (seg.float && seg.float.length === dim) {
                for (let i = 0; i < dim; i++) sum[i] += seg.float[i];
                count++;
              }
            }
            if (count > 0) embeddings = sum.map((val) => val / count);
          }
        }
      } catch (embErr) {
        // Keep the video in the list with an empty embedding rather than failing
        console.warn(`Failed to get embeddings for ${video.id}`, embErr);
      }
      videos.push({ ...video, embeddings });
    }

Why mean pooling? Marengo outputs a sequence of embeddings mapped to temporal segments: one embedding for seconds 0–5, another for 5–10, and so on. That temporal granularity is valuable for search (finding the exact moment a forklift enters a restricted zone), but to visualize an entire video as a single point in a 2D scatterplot, we need a single vector per video.

Mean pooling (averaging the float values across all segments) produces a composite vector that represents the overall semantic content of the video. If the footage is primarily a forklift operating in a warehouse, the averaged vector will land in the "forklift operation" region of embedding space, regardless of which specific 5-second segment you examine.

Step 4: Zero-Shot Auto-Labeling with Pegasus

The core problem with using general-purpose LLMs for annotation is output structure. Language models return free-form text, which means developers end up writing fragile regular expressions to extract timestamps, labels, and confidence scores from prose paragraphs. One unexpected formatting change and the parser breaks.

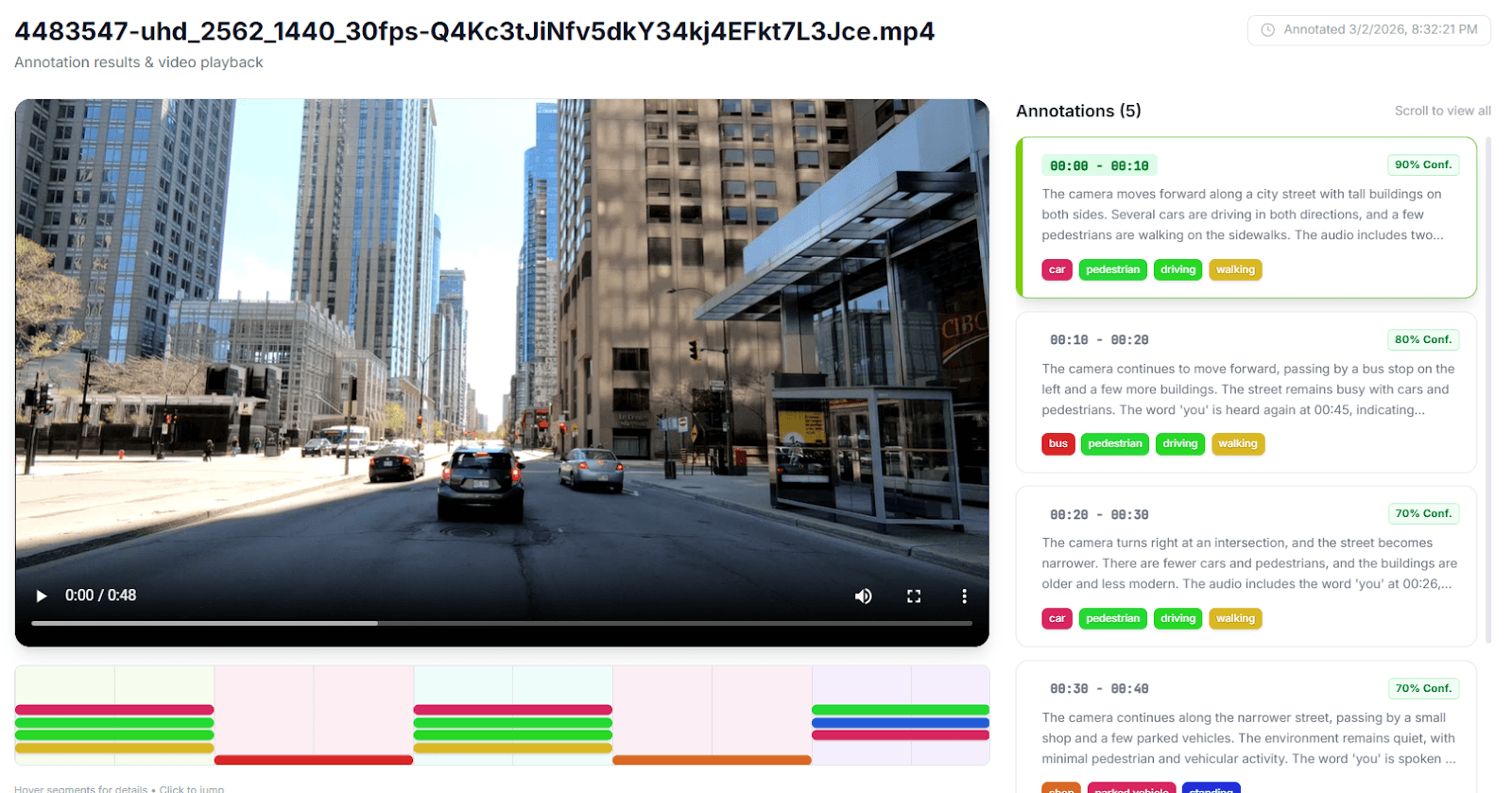

The TwelveLabs Analyze API eliminates this by enforcing a strict JSON schema on Pegasus's output. You define exactly the structure your ML pipeline expects, inject the user's custom label taxonomy (e.g., shoplifting, forklift_violation, surgical_clamp) directly into the prompt, and receive machine-parseable annotations with zero post-processing.

    const response_format = {
      type: 'json_schema',
      json_schema: {
        type: 'object',
        properties: {
          annotations: {
            type: 'array',
            items: {
              type: 'object',
              properties: {
                start_timestamp: { type: 'string' },
                end_timestamp: { type: 'string' },
                description: { type: 'string' },
                scene_classification: { type: 'string' },
                detected_objects: {
                  type: 'array',
                  items: {
                    type: 'object',
                    properties: {
                      label: { type: 'string' },
                      confidence_score: { type: 'number' },
                      start_timestamp: { type: 'string' },
                      end_timestamp: { type: 'string' },
                    },
                  },
                },
              },
              required: ['start_timestamp', 'end_timestamp', 'description', 'scene_classification', 'detected_objects'],
            },
          },
        },
      },
    };

This schema requires every annotation to include start and end timestamps, a natural-language description, a scene classification label, and an array of detected objects. Each detected object can carry its own label, confidence score, and temporal bounds; as written, the schema does not mark those nested fields as required, so add a required list to the inner object if your pipeline depends on them. Because Pegasus is a zero-shot generative model, no fine-tuning is required. You define the taxonomy, pass the schema, and receive structured training data in seconds.
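The taxonomy injection described above can be sketched as a small prompt builder, paired with a defensive parse of the returned JSON. Both helpers are hypothetical and the prompt wording is illustrative, not the application's actual prompt. The schema constrains shape but declares scene_classification as a plain string, so this sketch also checks that the label falls inside the taxonomy; the call to the Analyze API itself is deliberately left out.

```javascript
// Hypothetical helper: turns a label taxonomy into the instruction text that
// is sent alongside the response schema.
function buildAnnotationPrompt(taxonomy) {
  if (!Array.isArray(taxonomy) || taxonomy.length === 0) {
    throw new Error('taxonomy must be a non-empty array of label strings');
  }
  return [
    'Annotate this video for ML training.',
    `Use only these labels for scene_classification and detected_objects.label: ${taxonomy.join(', ')}.`,
    'Give start and end timestamps for every annotation.',
  ].join(' ');
}

// Fields the schema above lists as required on each annotation.
const REQUIRED_FIELDS = [
  'start_timestamp',
  'end_timestamp',
  'description',
  'scene_classification',
  'detected_objects',
];

// Hypothetical helper: parses the JSON text returned by the model and splits
// annotations into those that satisfy the taxonomy and those that do not.
function parseAnnotations(rawJson, taxonomy) {
  const allowed = new Set(taxonomy);
  const parsed = JSON.parse(rawJson);
  const annotations = Array.isArray(parsed.annotations) ? parsed.annotations : [];

  const valid = [];
  const rejected = [];
  for (const annotation of annotations) {
    const hasAllFields = REQUIRED_FIELDS.every((field) => annotation[field] !== undefined);
    const inTaxonomy = allowed.has(annotation.scene_classification);
    (hasAllFields && inTaxonomy ? valid : rejected).push(annotation);
  }
  return { valid, rejected };
}
```

Rejected annotations are returned rather than dropped so that a reviewer can see which labels drifted outside the taxonomy.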

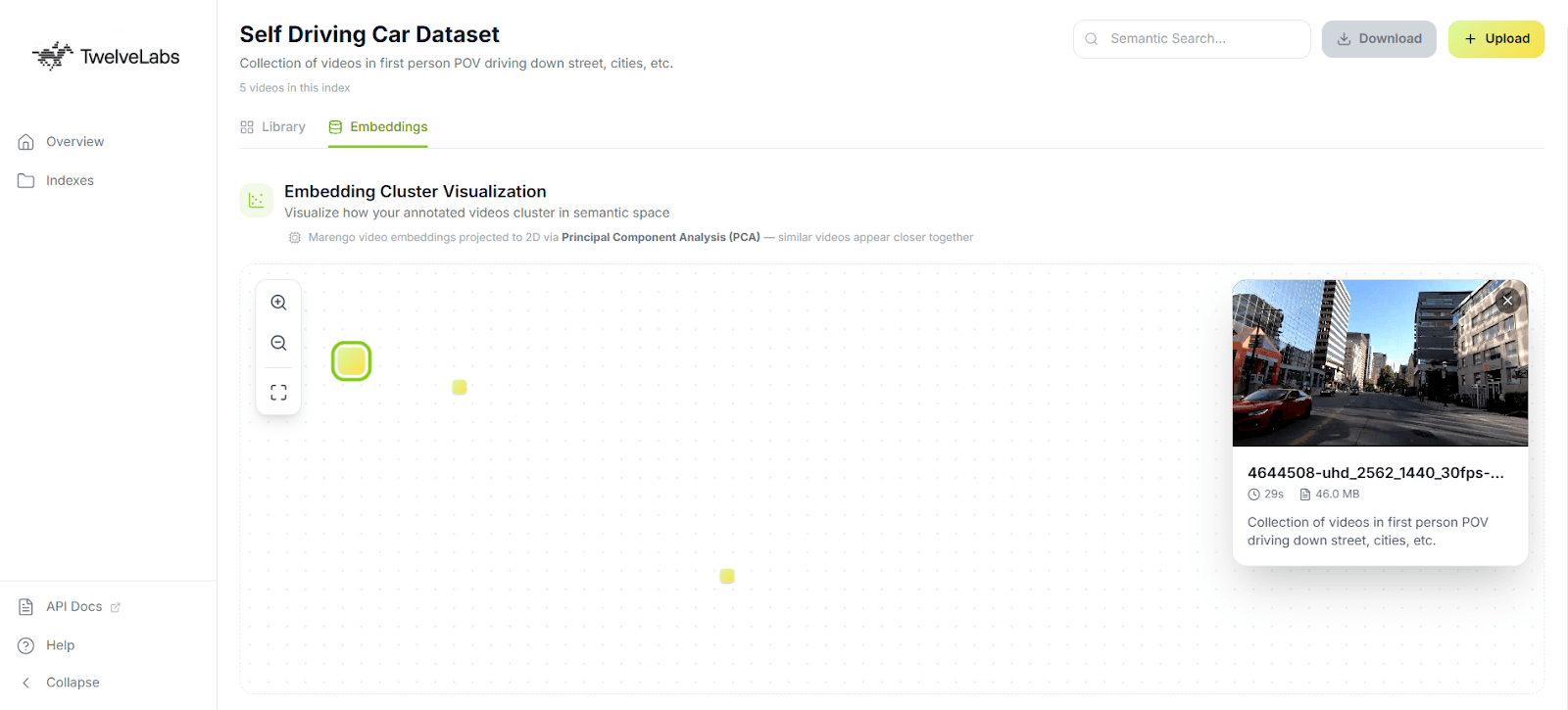

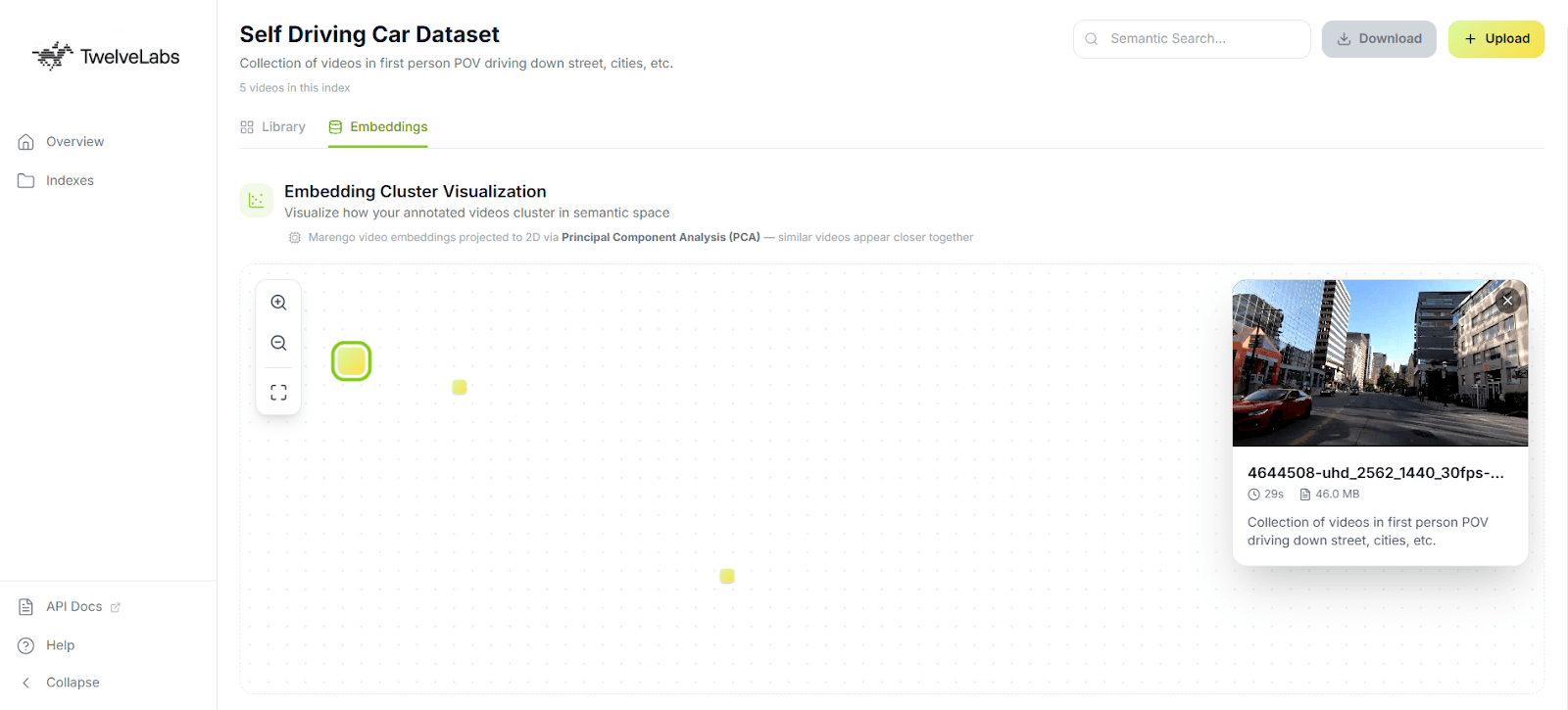

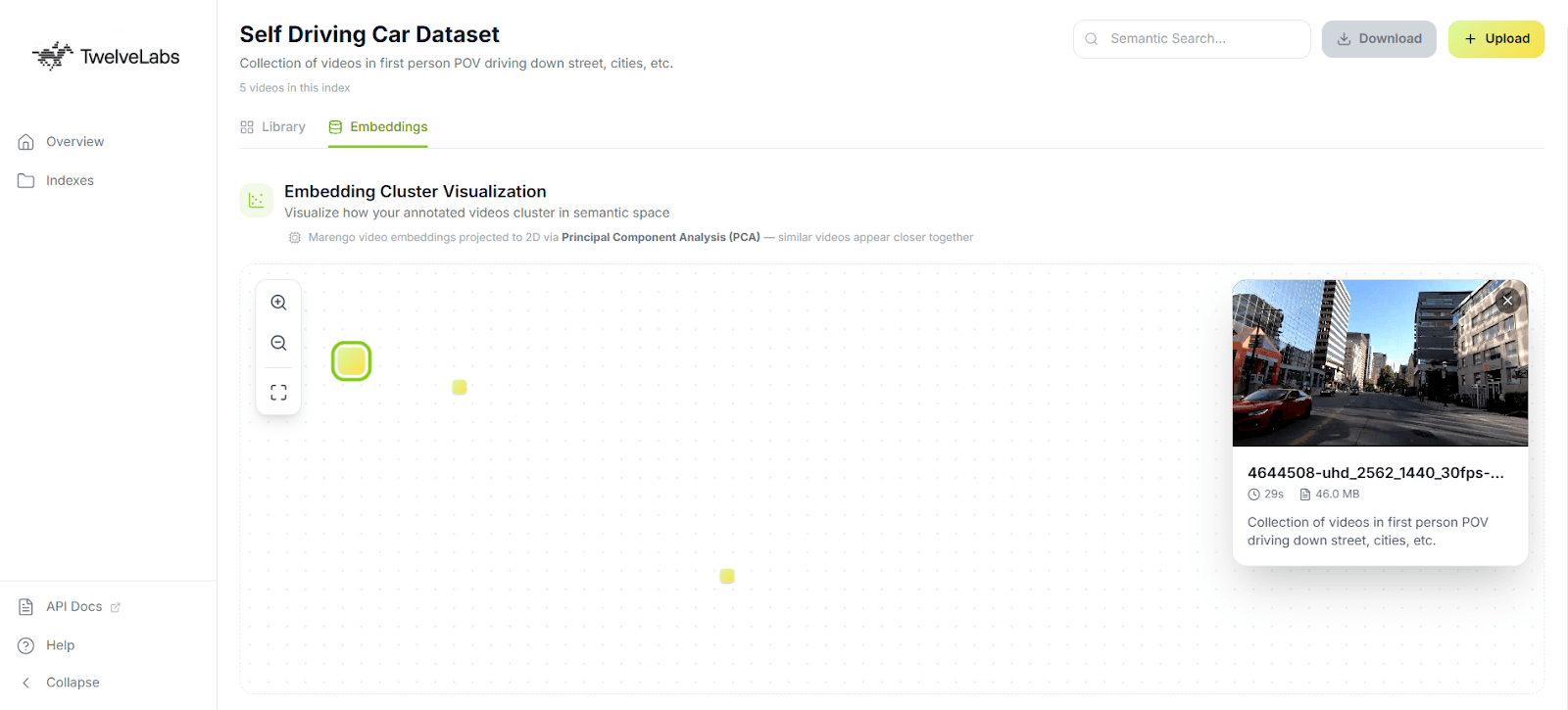

Step 5: Interactive Embedding Visualization with PCA

Numbers and labels are necessary for ML pipelines, but for data curation work, you need to see your dataset. The application includes an in-browser 2D scatter plot that projects Marengo's 512-dimensional embeddings into two dimensions using Principal Component Analysis (PCA).

Optimizing PCA for Browser-Based Visualization

Computing PCA on high-dimensional data can be expensive. The standard approach (constructing a 512 × 512 covariance matrix and computing its eigendecomposition) can stall a browser tab when it runs on the main thread. When the user is curating a dataset of 50 videos, they need immediate visual feedback, not a loading spinner.

The solution is a Gram matrix approach. Instead of computing the full D × D covariance matrix (where D = 512), we compute an N × N matrix of inner products between the mean-centered video vectors (where N = the number of videos in the dataset). The nonzero eigenvalues of the two matrices coincide, so the 2D projection can be read directly from the smaller one. For a 50-video dataset, finding the eigenvectors of a 50 × 50 matrix is orders of magnitude faster than operating on a 512 × 512 matrix, reducing the eigendecomposition cost from O(D³) to O(N³) and keeping the visualization fluid in the main browser thread. The saving holds as long as N is smaller than D.
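A compact sketch of that idea is shown below. It is not the application's implementation: the function names are hypothetical, and power iteration with deflation is an assumed choice of eigen-solver that keeps the example dependency-free. For centered data X, each eigenvector u of the Gram matrix X·Xᵀ with eigenvalue λ gives the projected coordinates √λ · u directly, so the D-dimensional principal axes never need to be formed.

```javascript
// Finds the dominant eigenpair of a symmetric positive semi-definite matrix.
function powerIteration(matrix, iterations) {
  const n = matrix.length;
  // Deterministic, non-constant start vector (a constant vector would lie in
  // the null space of a centered Gram matrix).
  let v = Array.from({ length: n }, (_, i) => i + 1);
  let norm = Math.hypot(...v);
  v = v.map((x) => x / norm);
  let value = 0;
  for (let it = 0; it < iterations; it++) {
    const w = matrix.map((row) => row.reduce((s, x, j) => s + x * v[j], 0));
    norm = Math.hypot(...w);
    // Nothing left to extract: report a zero component.
    if (norm < 1e-12) return { value: 0, vector: new Array(n).fill(0) };
    v = w.map((x) => x / norm);
    value = norm; // |G v| approaches the largest eigenvalue for unit v
  }
  return { value, vector: v };
}

// Projects N vectors of length D onto their top-2 principal components using
// the N x N Gram matrix instead of the D x D covariance matrix.
function gramPca2d(vectors, iterations = 200) {
  const n = vectors.length;
  if (n === 0) return [];
  const d = vectors[0].length;

  // 1. Center the data by subtracting the per-dimension mean.
  const mean = new Array(d).fill(0);
  for (const v of vectors) for (let j = 0; j < d; j++) mean[j] += v[j] / n;
  const centered = vectors.map((v) => v.map((x, j) => x - mean[j]));

  // 2. Gram matrix of pairwise inner products between centered vectors.
  const gram = centered.map((a) =>
    centered.map((b) => a.reduce((s, x, j) => s + x * b[j], 0))
  );

  // 3. Extract two eigenpairs; coordinates are sqrt(eigenvalue) * eigenvector.
  const coords = Array.from({ length: n }, () => [0, 0]);
  for (let c = 0; c < 2; c++) {
    const { value, vector } = powerIteration(gram, iterations);
    const scale = Math.sqrt(Math.max(value, 0));
    for (let i = 0; i < n; i++) coords[i][c] = scale * vector[i];
    // Deflate: remove the component just found before the next pass.
    for (let i = 0; i < n; i++) {
      for (let k = 0; k < n; k++) gram[i][k] -= value * vector[i] * vector[k];
    }
  }
  return coords;
}
```

Each returned pair is the (x, y) position of one video in the scatter plot. The sign of each axis is arbitrary, as with any PCA.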

What the visualization reveals for annotation quality: Tight, well-separated clusters indicate that your labeled categories correspond to genuinely distinct semantic content. Outliers (points that sit far from any cluster) flag either rare events worth separate annotation attention (a safety incident in routine warehouse footage) or noisy data that may be misclassified. This gives data PMs and annotators immediate, actionable feedback on dataset health before they commit to expensive model training.
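The outlier check can also be automated as a first-pass filter. The sketch below is a simplification of what the text describes: it measures distance from a single global centroid rather than from the nearest cluster, and the function name flagOutliers and the default threshold of two standard deviations are assumptions chosen for illustration.

```javascript
// Hypothetical helper: returns the indices of 2D points whose distance from
// the dataset centroid exceeds mean + k * standard deviation.
function flagOutliers(points, k = 2) {
  const n = points.length;
  if (n === 0) return [];

  // Centroid of all projected points.
  const cx = points.reduce((s, p) => s + p[0], 0) / n;
  const cy = points.reduce((s, p) => s + p[1], 0) / n;

  // Euclidean distance of each point from the centroid.
  const distances = points.map((p) => Math.hypot(p[0] - cx, p[1] - cy));
  const mean = distances.reduce((s, d) => s + d, 0) / n;
  const std = Math.sqrt(
    distances.reduce((s, d) => s + (d - mean) ** 2, 0) / n
  );

  const threshold = mean + k * std;
  return distances
    .map((d, i) => (d > threshold ? i : -1))
    .filter((i) => i >= 0);
}
```

Flagged indices are candidates for review, not verdicts: a flagged point may be a rare event worth labeling separately or a misclassified clip.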

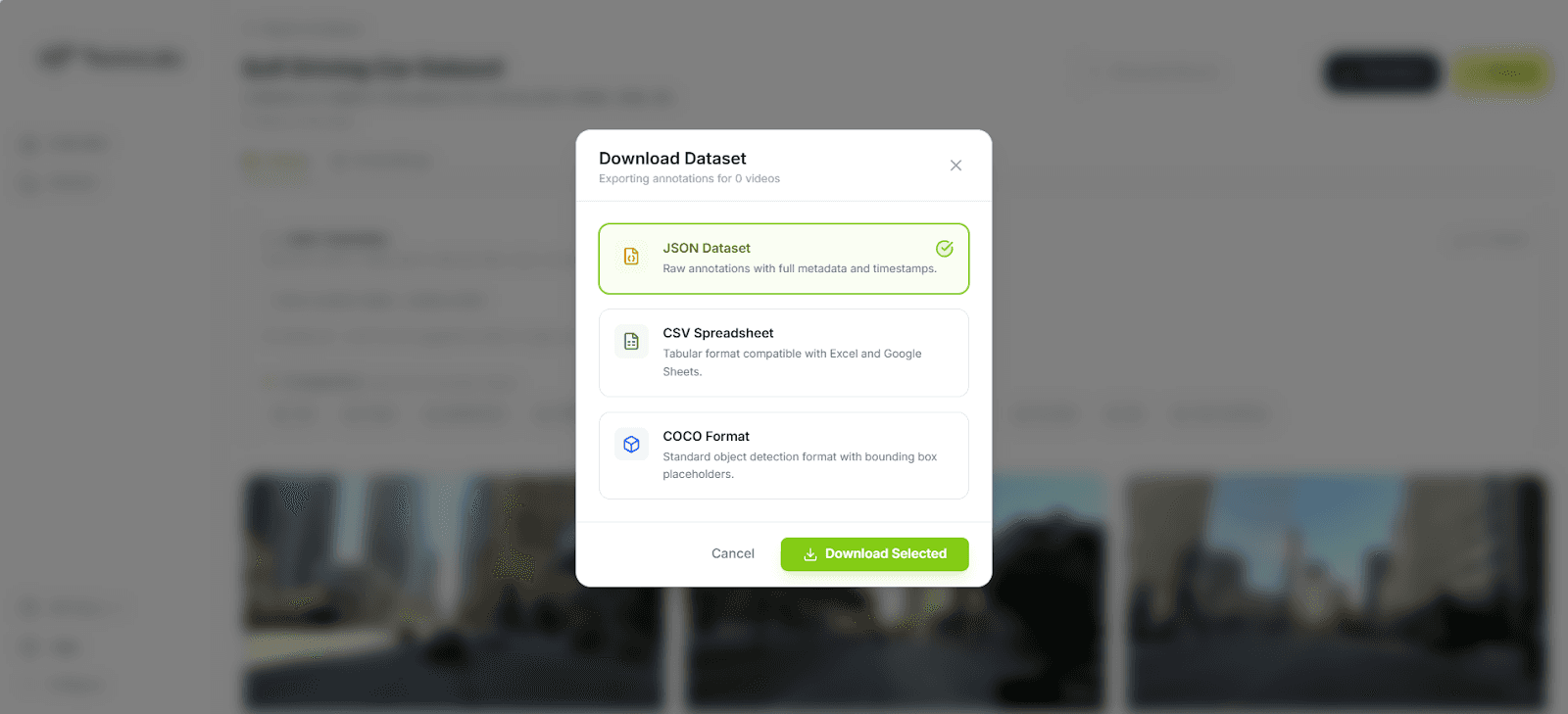

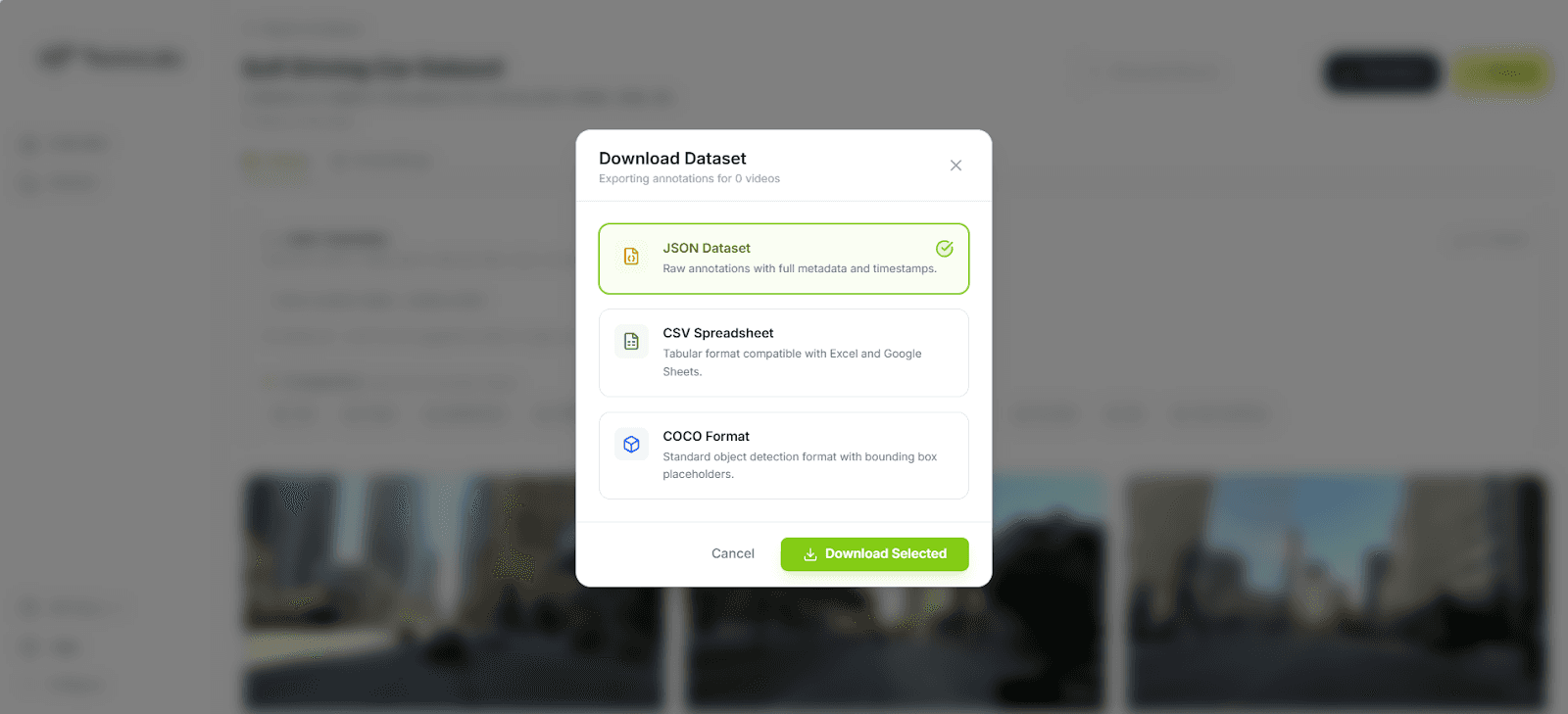

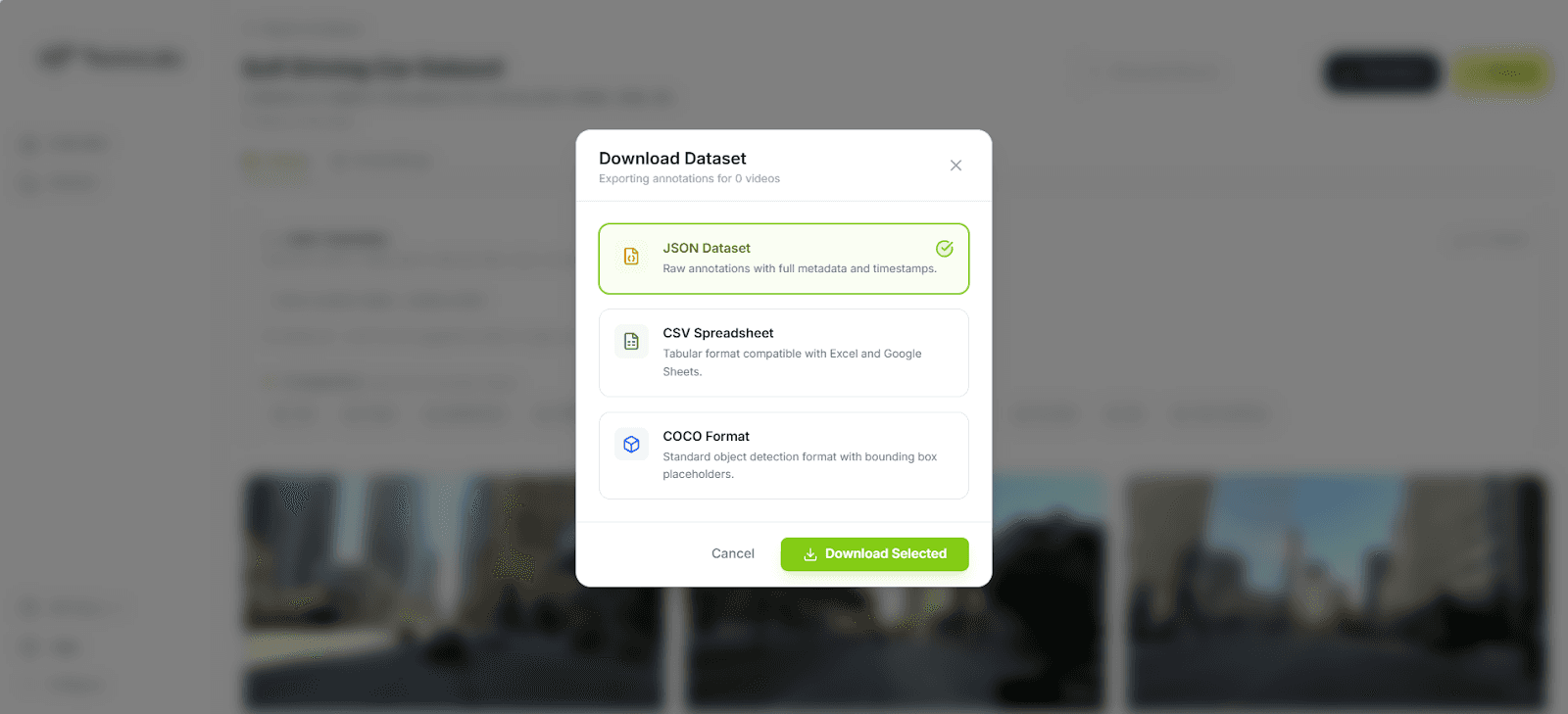

Step 6: Exporting for ML Pipelines

A curated dataset is only as valuable as its compatibility with downstream training tools. The application natively supports three export formats:

JSON provides the raw structured output for programmatic parsing in custom data loaders. Every field from the Pegasus annotation schema is preserved, including nested detected objects with confidence scores.

CSV flattens the annotations into spreadsheet-friendly rows with timestamps, labels, and descriptions, which is useful for quick manual audits or for feeding into pandas-based preprocessing scripts.

COCO (Common Objects in Context) structures annotations into the videos, annotations, and categories arrays expected by COCO-style tooling. COCO-style JSON is straightforward to load from a PyTorch Dataset, and the format is supported for import by workforce platforms like Label Studio and CVAT for a final human-in-the-loop review.

The COCO export is particularly important because it eliminates the reformatting step that typically sits between annotation and training. You go from raw video to indexed embeddings to structured labels to a training-ready dataset, all within a single pipeline.
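To make the export shapes concrete, here is a hedged sketch of how annotations in the Pegasus schema could be mapped to a COCO-style object and to CSV rows. The function names, the fileName property, and the exact keys on each output record are assumptions for illustration; the repository's exporters may differ.

```javascript
// Hypothetical exporter: maps per-video annotations to COCO-style arrays.
// Each input item is assumed to look like { fileName, annotations: [...] },
// with annotations following the schema from Step 4.
function toCocoStyle(videos) {
  const categoryIds = new Map();
  const coco = { videos: [], annotations: [], categories: [] };
  let nextAnnotationId = 1;

  videos.forEach((video, i) => {
    const videoId = i + 1;
    coco.videos.push({ id: videoId, file_name: video.fileName });

    for (const annotation of video.annotations) {
      for (const object of annotation.detected_objects ?? []) {
        // Assign category ids in order of first appearance.
        if (!categoryIds.has(object.label)) {
          const id = categoryIds.size + 1;
          categoryIds.set(object.label, id);
          coco.categories.push({ id, name: object.label });
        }
        coco.annotations.push({
          id: nextAnnotationId++,
          video_id: videoId,
          category_id: categoryIds.get(object.label),
          // Fall back to the enclosing annotation's bounds if absent.
          start_timestamp: object.start_timestamp ?? annotation.start_timestamp,
          end_timestamp: object.end_timestamp ?? annotation.end_timestamp,
          score: object.confidence_score,
          scene_classification: annotation.scene_classification,
        });
      }
    }
  });
  return coco;
}

// Hypothetical exporter: one CSV row per annotation, with quoted fields.
function toCsv(videos) {
  const quote = (value) => `"${String(value ?? '').replace(/"/g, '""')}"`;
  const rows = [
    ['file_name', 'start_timestamp', 'end_timestamp', 'scene_classification', 'description'],
  ];
  for (const video of videos) {
    for (const a of video.annotations) {
      rows.push([video.fileName, a.start_timestamp, a.end_timestamp, a.scene_classification, a.description]);
    }
  }
  return rows.map((row) => row.map(quote).join(',')).join('\n');
}
```

Keeping category ids stable across exports matters once a model has been trained on them, so a production exporter would likely persist the label-to-id mapping rather than rebuild it on each run.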

Business Impact: What This Changes

For ML and Computer Vision Teams

The economics of this pipeline shift the annotation bottleneck from human labor to compute:

Traditional manual workflow: 2–4 hours of human effort per 1 hour of video, at $50–100/hour for domain expertise. A 500-hour back catalog requires 1,000–2,000 hours of annotation labor, roughly 6–12 months of full-time work for a single annotator.

TwelveLabs automated workflow: Approximately 1 minute of compute per video for indexing and structured annotation, plus targeted human review of the auto-generated labels. The same 500-hour back catalog processes in days, not months.

Humans stay in the loop for quality assurance, but the expensive first pass (finding the 4 seconds of relevant action in a 2-hour video) is handled by the models. This makes it economically feasible to label historical footage that was previously too costly to touch.
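The manual-workflow figures above follow from simple arithmetic, which the sketch below reproduces. The function name is hypothetical, and the 160 working hours per month used to convert labor hours into person-months is an assumption, not a figure from this article.

```javascript
// Hypothetical estimator for the manual annotation workflow.
// Ranges are [low, high] pairs taken from the figures quoted above.
function estimateManualAnnotation(footageHours, options = {}) {
  const {
    effortPerFootageHour = [2, 4], // hours of labor per hour of video
    hourlyRateUsd = [50, 100],     // cost of domain expertise
    workHoursPerMonth = 160,       // assumption: one full-time annotator
  } = options;

  const laborHours = effortPerFootageHour.map((effort) => effort * footageHours);
  const costUsd = [
    laborHours[0] * hourlyRateUsd[0],
    laborHours[1] * hourlyRateUsd[1],
  ];
  const personMonths = laborHours.map((hours) => hours / workHoursPerMonth);
  return { laborHours, costUsd, personMonths };
}

// For the 500-hour back catalog:
//   laborHours   → [1000, 2000]
//   costUsd      → [50000, 200000]
//   personMonths → [6.25, 12.5]
console.log(estimateManualAnnotation(500));
```

Under these inputs, the labor cost of the manual first pass falls between $50,000 and $200,000 for the 500-hour catalog.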

For Data Labeling Platform ISVs

This application is a product blueprint. Integrating AI-first video annotation into your platform can reduce per-project annotation effort by an estimated 80–90%, based on the time savings demonstrated in this pipeline (minutes of compute replacing hours of manual review). That shifts your business model from selling raw human hours to selling high-margin, scalable intelligence, a differentiation that matters as annotation becomes increasingly commoditized.

Conclusion

This reference application demonstrates a complete pipeline for converting raw video into structured, training-ready datasets using TwelveLabs' video understanding APIs. Marengo encodes footage into dense temporal embeddings. Pegasus converts those embeddings into timestamped, schema-enforced training labels. The browser-based dashboard handles ingestion, visualization, and export, all without a persistent backend.

What you have after running this tutorial: a working application that you can point at your own footage and configure with your own label taxonomy to generate ML-ready annotations in the time it takes to index the video.

The full source code is available on GitHub. Clone it, configure your TwelveLabs index, and start building.

To explore what else you can build with TwelveLabs' video understanding APIs, visit the documentation or reach out to our team.

Introduction

Labeling video data is one of the most expensive bottlenecks in machine learning. A single hour of footage requires 4 hours of human annotation time, and at $100 per hour for domain specialists (radiologists, safety auditors, sports analysts) the math stops working long before you reach your back catalog.

The footage exists. Dashcams, retail cameras, warehouse feeds, surgical recordings, sports broadcasts: enterprises sit on thousands of hours of video containing precisely the training signals their models need. But the path from raw footage to structured labels still runs through a labeling platform where human reviewers scrub frame by frame.

This tutorial builds a different pipeline. Instead of sending video to annotators, we send it to TwelveLabs' multimodal foundation models. Marengo encodes each video into dense, temporal embeddings that capture visual, audio, and contextual information in 512 dimensions. Pegasus then reasons over those embeddings and returns structured, timestamped annotations (complete with scene classifications, object detections, and confidence scores) all enforced by a strict JSON schema.

By the end of this walkthrough, you will have a working Next.js application that indexes video, auto-generates training labels against a custom taxonomy, visualizes your dataset in 2D embedding space, and exports to COCO, JSON, or CSV for direct ingestion into PyTorch or any standard ML pipeline.

Here is the finished application in action:

Architecture Overview

Before writing any code, here is the full pipeline. Each layer was chosen to solve a specific constraint of production video annotation.

Next.js 16 + React 19 handles both the frontend dashboard and the backend API routes. Serverless functions let us orchestrate the entire annotation pipeline without running a persistent server, which matters when you want ISV partners or internal teams to deploy this with a single vercel deploy.

Vercel Blob provides temporary video storage. Serverless environments impose strict payload limits (4.5 MB on Vercel), so the client uploads video files directly to Blob storage and passes only the resulting public URL to our API routes. This decouples upload size from function constraints.

TwelveLabs Marengo extracts 512-dimensional vector embeddings from each video. These are not frame-level features: Marengo processes video as a continuous multimodal signal across visual, audio, and temporal dimensions, producing a sequence of segment-level embeddings that capture semantic meaning rather than pixel data. This is what makes downstream clustering and visualization possible without any manual feature engineering.

TwelveLabs Pegasus (Analyze API) acts as the reasoning engine. Given the embeddings and a user-defined label taxonomy, Pegasus generates structured, machine-readable annotations with timestamps, scene classifications, and detected objects; all returned as JSON that maps directly to ML training formats.

For a deeper look at the data flow, the full architecture diagram is available on LucidChart: [TwelveLabs] - Automated Video Data Labeling Solution

Step 1: Prerequisite & Setup

You will need three things to run this project locally:

Node.js 18+ (20+ recommended)

TwelveLabs API Key from the TwelveLabs Dashboard

Vercel Blob Token for handling temporary video uploads

Clone the repository and install dependencies:

>> git clone https://github.com/nathanchess/tl-automated-video-data-labeler.git >> cd tl-automated-video-data-labeler >> npm

Set up your .env.local file:

TL_API_KEY=your_twelvelabs_api_key_here TL_INDEX_NAME=your_default_index_name_here BLOB_READ_WRITE_TOKEN

Start the development server with npm run dev. The dashboard will be available at http://localhost:3000/indexes.

Step 2: Video Ingestion and Indexing

The ingestion flow begins when a user drops video files into the dashboard UI. The client uploads each file to Vercel Blob, then sends the resulting public URLs to a Next.js API route that triggers TwelveLabs indexing.

Here is the server-side logic in src/app/api/videos/route.js:

import { TwelveLabs } from 'twelvelabs-js'; import { NextResponse } from 'next/server'; const tl_client = new TwelveLabs({ apiKey: process.env.TL_API_KEY }); export async function POST(request) { const { videoURLs, metadata } = await request.json(); // 1. Locate the target index const indexPager = await tl_client.indexes.list(); let indexId = null; for await (const index of indexPager) { if (index.indexName === process.env.TL_INDEX_NAME) indexId = index.id; } if (!indexId) return NextResponse.json({ error: 'Index not found' }, { status: 404 }); // 2. Create tasks and wait for completion for (const videoURL of videoURLs) { const task = await tl_client.tasks.create({ indexId, videoUrl: videoURL, userMetadata: JSON.stringify(metadata), }); const completedTask = await tl_client.tasks.waitForDone(task.id, { sleepInterval: 5, }); if (completedTask.status !== 'ready') { throw new Error(`Task ${completedTask.id} failed`); } } return new Response('ok'); }

Production note: Video processing is fundamentally asynchronous. The waitForDone call here blocks the HTTP request until indexing completes, which works for a reference application processing a handful of clips. For production pipelines handling hours of footage, offload indexing to a background queue (Inngest, Upstash, or similar) and use TwelveLabs webhooks to notify your application when processing is complete. The SDK fully supports this pattern.

Step 3: Extracting and Pooling Embeddings

Once videos are indexed, we retrieve them along with their Marengo embeddings. These vectors are what power semantic grouping and the 2D visualization we build later.

const videoPager = await tl_client.indexes.videos.list(indexId); const videos = []; for await (const video of videoPager) { let embeddings = []; try { const videoData = await tl_client.indexes.videos.retrieve(indexId, video.id, { embeddingOption: ['visual'], }); const segments = videoData.embedding?.videoEmbedding?.segments || []; if (segments.length > 0) { const dim = segments[0].float?.length; if (dim) { const sum = new Array(dim).fill(0); let count = 0; // Average the embeddings across all temporal segments for (const seg of segments) { if (seg.float && seg.float.length === dim) { for (let i = 0; i < dim; i++) sum[i] += seg.float[i]; count++; } } if (count > 0) embeddings = sum.map((val) => val / count); } } } catch (embErr) { console.warn(`Failed to get embeddings for ${video.id}`); } videos.push({ ...video, embeddings }); }

Why mean pooling? Marengo outputs a sequence of embeddings mapped to temporal segments; an embedding for seconds 0–5, another for 5–10, and so on. That temporal granularity is valuable for search (finding the exact moment a forklift enters a restricted zone), but to visualize an entire video as a single point in a 2D scatterplot, we need a single vector per video.

Mean pooling (averaging the float values across all segments) produces a composite vector that represents the overall semantic content of the video. If the footage is primarily a forklift operating in a warehouse, the averaged vector will land in the "forklift operation" region of embedding space, regardless of which specific 5-second segment you examine.

Step 4: Zero-Shot Auto-Labeling with Pegasus

The core problem with using general-purpose LLMs for annotation is output structure. Language models return free-form text, which means developers end up writing fragile regular expressions to extract timestamps, labels, and confidence scores from prose paragraphs. One unexpected formatting change and the parser breaks.

The TwelveLabs Analyze API eliminates this by enforcing a strict JSON schema on Pegasus's output. You define exactly the structure your ML pipeline expects, inject the user's custom label taxonomy (e.g., shoplifting, forklift_violation, surgical_clamp) directly into the prompt, and receive machine-parseable annotations with zero post-processing.

const response_format = { type: 'json_schema', json_schema: { type: 'object', properties: { annotations: { type: 'array', items: { type: 'object', properties: { start_timestamp: { type: 'string' }, end_timestamp: { type: 'string' }, description: { type: 'string' }, scene_classification: { type: 'string' }, detected_objects: { type: 'array', items: { type: 'object', properties: { label: { type: 'string' }, confidence_score: { type: 'number' }, start_timestamp: { type: 'string' }, end_timestamp: { type: 'string' }, }, }, }, }, required: ['start_timestamp', 'end_timestamp', 'description', 'scene_classification', 'detected_objects'], }, }, }, }, };

This schema guarantees that every annotation includes start and end timestamps, a natural-language description, a scene classification label, and an array of detected objects (each with its own confidence score and temporal bounds). Because Pegasus is a zero-shot generative model, no fine-tuning is required. You define the taxonomy, pass the schema, and receive structured training data in seconds.

Step 5: Interactive Embedding Visualization with PCA

Numbers and labels are necessary for ML pipelines, but for data curation work, you need to see your dataset. The application includes an in-browser 2D scatter plot that projects Marengo's 512-dimensional embeddings into two dimensions using Principal Component Analysis (PCA).

Optimizing PCA for Browser-Based Visualization

Computing PCA on high-dimensional data is expensive. The standard approach (constructing a 512 × 512 covariance matrix and finding its eigenvalues via singular value decomposition) will freeze a browser tab. When the user is curating a dataset of 50 videos, they need immediate visual feedback, not a loading spinner.

The solution is a Gram matrix approach. Instead of computing the full D × D covariance matrix (where D = 512), we compute an N × N matrix of inner products between videos (where N = the number of videos in the dataset). For a 50-video dataset, finding the eigenvectors of a 50 × 50 matrix is orders of magnitude faster than operating on 512 dimensions; reducing complexity from O(D³) to O(N³) and keeping the visualization fluid in the main browser thread.

What the visualization reveals for annotation quality: Tight, well-separated clusters indicate that your labeled categories correspond to genuinely distinct semantic content. Outliers (points that sit far from any cluster) flag either rare events worth separate annotation attention (a safety incident in routine warehouse footage) or noisy data that may be misclassified. This gives data PMs and annotators immediate, actionable feedback on dataset health before they commit to expensive model training.

Step 6: Exporting for ML Pipelines

A curated dataset is only as valuable as its compatibility with downstream training tools. The application natively supports three export formats:

JSON provides the raw structured output for programmatic parsing in custom data loaders. Every field from the Pegasus annotation schema is preserved, including nested detected objects with confidence scores.

CSV flattens the annotations into spreadsheet-friendly rows with timestamps, labels, and descriptions; useful for quick manual audits or feeding into pandas-based preprocessing scripts.

COCO (Common Objects in Context) structures annotations into the

videos,annotations, andcategoriesarrays expected by major training frameworks. This is the format PyTorch DataLoaders consume natively, and it imports directly into workforce platforms like Label Studio and CVAT for a final human-in-the-loop review.

The COCO export is particularly important because it eliminates the reformatting step that typically sits between annotation and training. You go from raw video to indexed embeddings to structured labels to training-ready dataset (all within a single pipeline).

Business Impact: What This Changes

For ML and Computer Vision Teams

The economics of this pipeline shift the annotation bottleneck from human labor to compute:

Traditional manual workflow: 2–4 hours of human effort per 1 hour of video, at $50–100/hour for domain expertise. A 500-hour back catalog requires 1,000–2,000 hours of annotation labor; roughly 6–12 months of full-time work.

TwelveLabs automated workflow: Approximately 1 minute of compute per video for indexing and structured annotation, plus targeted human review of the auto-generated labels. The same 500-hour back catalog processes in days, not months.

Humans stay in the loop for quality assurance, but the expensive first pass (finding the 4 seconds of relevant action in a 2-hour video) is handled by the models. This makes it economically feasible to label historical footage that was previously too costly to touch.

For Data Labeling Platform ISVs

This application is a product blueprint. Integrating AI-first video annotation into your platform reduces per-project annotation effort by 80–90% based on the time savings demonstrated in this pipeline (minutes of compute replacing hours of manual review). That shifts your business model from selling raw human hours to selling high-margin, scalable intelligence; a differentiation that matters as annotation becomes increasingly commoditized.

Conclusion

This reference application demonstrates a complete pipeline for converting raw video into structured, training-ready datasets using TwelveLabs' video understanding APIs. Marengo encodes footage into dense temporal embeddings. Pegasus converts those embeddings into timestamped, schema-enforced training labels. The browser-based dashboard handles ingestion, visualization, and export (all without a persistent backend).

What you have after running this tutorial: a working application that you can point at your own footage, define your own label taxonomy, and generate ML-ready annotations in the time it takes to index the video.

The full source code is available on GitHub. Clone it, configure your TwelveLabs index, and start building.

To explore what else you can build with TwelveLabs' video understanding APIs, visit the documentation or reach out to our team.

Resources

Introduction

Labeling video data is one of the most expensive bottlenecks in machine learning. A single hour of footage requires 4 hours of human annotation time, and at $100 per hour for domain specialists (radiologists, safety auditors, sports analysts) the math stops working long before you reach your back catalog.

The footage exists. Dashcams, retail cameras, warehouse feeds, surgical recordings, sports broadcasts: enterprises sit on thousands of hours of video containing precisely the training signals their models need. But the path from raw footage to structured labels still runs through a labeling platform where human reviewers scrub frame by frame.

This tutorial builds a different pipeline. Instead of sending video to annotators, we send it to TwelveLabs' multimodal foundation models. Marengo encodes each video into dense, temporal embeddings that capture visual, audio, and contextual information in 512 dimensions. Pegasus then reasons over those embeddings and returns structured, timestamped annotations (complete with scene classifications, object detections, and confidence scores) all enforced by a strict JSON schema.

By the end of this walkthrough, you will have a working Next.js application that indexes video, auto-generates training labels against a custom taxonomy, visualizes your dataset in 2D embedding space, and exports to COCO, JSON, or CSV for direct ingestion into PyTorch or any standard ML pipeline.

Here is the finished application in action:

Architecture Overview

Before writing any code, here is the full pipeline. Each layer was chosen to solve a specific constraint of production video annotation.

Next.js 16 + React 19 handles both the frontend dashboard and the backend API routes. Serverless functions let us orchestrate the entire annotation pipeline without running a persistent server, which matters when you want ISV partners or internal teams to deploy this with a single vercel deploy.

Vercel Blob provides temporary video storage. Serverless environments impose strict payload limits (4.5 MB on Vercel), so the client uploads video files directly to Blob storage and passes only the resulting public URL to our API routes. This decouples upload size from function constraints.

TwelveLabs Marengo extracts 512-dimensional vector embeddings from each video. These are not frame-level features: Marengo processes video as a continuous multimodal signal across visual, audio, and temporal dimensions, producing a sequence of segment-level embeddings that capture semantic meaning rather than pixel data. This is what makes downstream clustering and visualization possible without any manual feature engineering.

TwelveLabs Pegasus (Analyze API) acts as the reasoning engine. Given the embeddings and a user-defined label taxonomy, Pegasus generates structured, machine-readable annotations with timestamps, scene classifications, and detected objects, all returned as JSON that maps directly to ML training formats.

For a deeper look at the data flow, the full architecture diagram is available on LucidChart: [TwelveLabs] - Automated Video Data Labeling Solution

Step 1: Prerequisites & Setup

You will need three things to run this project locally:

Node.js 18+ (20+ recommended)

TwelveLabs API Key from the TwelveLabs Dashboard

Vercel Blob Token for handling temporary video uploads

Clone the repository and install dependencies:

git clone https://github.com/nathanchess/tl-automated-video-data-labeler.git
cd tl-automated-video-data-labeler
npm install

Set up your .env.local file:

TL_API_KEY=your_twelvelabs_api_key_here
TL_INDEX_NAME=your_default_index_name_here
BLOB_READ_WRITE_TOKEN=your_vercel_blob_token_here

Start the development server with npm run dev. The dashboard will be available at http://localhost:3000/indexes.

Step 2: Video Ingestion and Indexing

The ingestion flow begins when a user drops video files into the dashboard UI. The client uploads each file to Vercel Blob, then sends the resulting public URLs to a Next.js API route that triggers TwelveLabs indexing.
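As a rough illustration of the client side of this handoff, the sketch below builds the small JSON body that carries only URLs to the API route. The function name `buildIndexRequest` and the byte-limit constant are assumptions made for this example; the `videoURLs` and `metadata` field names mirror what the route reads from the request body.

```javascript
// Approximation of the 4.5 MB serverless request-body limit mentioned earlier.
const MAX_BODY_BYTES = 4.5 * 1024 * 1024;

/**
 * Build the JSON body sent to the indexing route (hypothetical helper).
 * `blobUrls` are the public URLs returned by the Blob upload step.
 */
function buildIndexRequest(blobUrls, metadata = {}) {
  if (!Array.isArray(blobUrls) || blobUrls.length === 0) {
    throw new Error('At least one video URL is required');
  }
  for (const url of blobUrls) {
    const parsed = new URL(url); // throws on malformed URLs
    if (parsed.protocol !== 'https:') {
      throw new Error(`Expected an https URL, got: ${url}`);
    }
  }
  const body = JSON.stringify({ videoURLs: blobUrls, metadata });
  // Measure the encoded size, since that is what the function limit applies to.
  const bytes = new TextEncoder().encode(body).length;
  if (bytes > MAX_BODY_BYTES) {
    throw new Error(`Request body is ${bytes} bytes, over the function limit`);
  }
  return { body, bytes };
}
```

Because the body holds URLs rather than file contents, it stays a few hundred bytes regardless of how large the uploaded videos are.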

Here is the server-side logic in src/app/api/videos/route.js:

import { TwelveLabs } from 'twelvelabs-js';
import { NextResponse } from 'next/server';

const tl_client = new TwelveLabs({ apiKey: process.env.TL_API_KEY });

export async function POST(request) {
  const { videoURLs, metadata } = await request.json();

  // 1. Locate the target index
  const indexPager = await tl_client.indexes.list();
  let indexId = null;
  for await (const index of indexPager) {
    if (index.indexName === process.env.TL_INDEX_NAME) indexId = index.id;
  }
  if (!indexId) return NextResponse.json({ error: 'Index not found' }, { status: 404 });

  // 2. Create tasks and wait for completion
  for (const videoURL of videoURLs) {
    const task = await tl_client.tasks.create({
      indexId,
      videoUrl: videoURL,
      userMetadata: JSON.stringify(metadata),
    });
    const completedTask = await tl_client.tasks.waitForDone(task.id, {
      sleepInterval: 5,
    });
    if (completedTask.status !== 'ready') {
      throw new Error(`Task ${completedTask.id} failed`);
    }
  }

  return new Response('ok');
}

Production note: Video processing is fundamentally asynchronous. The waitForDone call here blocks the HTTP request until indexing completes, which works for a reference application processing a handful of clips. For production pipelines handling hours of footage, offload indexing to a background queue (Inngest, Upstash, or similar) and use TwelveLabs webhooks to notify your application when processing is complete. The SDK fully supports this pattern.
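To make the non-blocking pattern more concrete, here is a minimal sketch of the bookkeeping side: an in-memory job store that records a task when it is created and updates it when a status notification arrives. Everything here is hypothetical, including the `createJobStore` name, the `{ taskId, status }` update shape, and the assumption that `ready` and `failed` are the terminal statuses; a real deployment would use a durable store and the actual webhook payload format.

```javascript
// Statuses after which no further updates are expected (assumed names).
const TERMINAL = new Set(['ready', 'failed']);

function createJobStore() {
  const jobs = new Map(); // taskId -> { videoURL, status, updatedAt }
  return {
    // Called right after the indexing task is created; the route can return immediately.
    enqueue(taskId, videoURL) {
      jobs.set(taskId, { videoURL, status: 'pending', updatedAt: Date.now() });
    },
    // Called from a webhook route (or a poller) with the latest status.
    applyUpdate({ taskId, status }) {
      const job = jobs.get(taskId);
      if (!job) return false; // unknown task: ignore
      if (TERMINAL.has(job.status)) return false; // already finished: ignore duplicates
      jobs.set(taskId, { ...job, status, updatedAt: Date.now() });
      return true;
    },
    get: (taskId) => jobs.get(taskId),
    // IDs of tasks that have not reached a terminal status yet.
    pending: () =>
      [...jobs.entries()]
        .filter(([, job]) => !TERMINAL.has(job.status))
        .map(([id]) => id),
  };
}
```

Ignoring updates for already-finished tasks keeps the store consistent if a notification is delivered more than once.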

Step 3: Extracting and Pooling Embeddings

Once videos are indexed, we retrieve them along with their Marengo embeddings. These vectors are what power semantic grouping and the 2D visualization we build later.

const videoPager = await tl_client.indexes.videos.list(indexId);
const videos = [];

for await (const video of videoPager) {
  let embeddings = [];
  try {
    const videoData = await tl_client.indexes.videos.retrieve(indexId, video.id, {
      embeddingOption: ['visual'],
    });
    const segments = videoData.embedding?.videoEmbedding?.segments || [];
    if (segments.length > 0) {
      const dim = segments[0].float?.length;
      if (dim) {
        const sum = new Array(dim).fill(0);
        let count = 0;
        // Average the embeddings across all temporal segments
        for (const seg of segments) {
          if (seg.float && seg.float.length === dim) {
            for (let i = 0; i < dim; i++) sum[i] += seg.float[i];
            count++;
          }
        }
        if (count > 0) embeddings = sum.map((val) => val / count);
      }
    }
  } catch (embErr) {
    console.warn(`Failed to get embeddings for ${video.id}`);
  }
  videos.push({ ...video, embeddings });
}

Why mean pooling? Marengo outputs a sequence of embeddings mapped to temporal segments: an embedding for seconds 0–5, another for 5–10, and so on. That temporal granularity is valuable for search (finding the exact moment a forklift enters a restricted zone), but to visualize an entire video as a single point in a 2D scatterplot, we need a single vector per video.

Mean pooling (averaging the float values across all segments) produces a composite vector that represents the overall semantic content of the video. If the footage is primarily a forklift operating in a warehouse, the averaged vector will land in the "forklift operation" region of embedding space, regardless of which specific 5-second segment you examine.
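A toy example may help show the effect. The sketch below uses made-up 3-dimensional vectors in place of real 512-dimensional Marengo segments, and the `meanPool` and `cosine` helpers are illustrative names rather than functions from the repository.

```javascript
// Average a list of equal-length segment vectors into one vector.
function meanPool(segments) {
  if (segments.length === 0) return [];
  const dim = segments[0].length;
  const sum = new Array(dim).fill(0);
  for (const seg of segments) {
    for (let i = 0; i < dim; i++) sum[i] += seg[i];
  }
  return sum.map((value) => value / segments.length);
}

// Cosine similarity: 1 means same direction, 0 means orthogonal.
function cosine(a, b) {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Toy segments: the first video points mostly along axis 0, the second along axis 1.
const forkliftVideo = meanPool([[1, 0, 0], [0.8, 0.2, 0], [0.9, 0, 0.1]]);
const officeVideo = meanPool([[0, 1, 0], [0.1, 0.9, 0]]);
const forkliftReference = [1, 0, 0]; // stand-in for the "forklift operation" region

console.log(cosine(forkliftVideo, forkliftReference)); // close to 1
console.log(cosine(officeVideo, forkliftReference)); // close to 0
```

Individual segments drift slightly, but the pooled vector for the first video still points almost entirely along the reference direction, which is the behavior the scatterplot relies on.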

Step 4: Zero-Shot Auto-Labeling with Pegasus

The core problem with using general-purpose LLMs for annotation is output structure. Language models return free-form text, which means developers end up writing fragile regular expressions to extract timestamps, labels, and confidence scores from prose paragraphs. One unexpected formatting change and the parser breaks.

The TwelveLabs Analyze API eliminates this by enforcing a strict JSON schema on Pegasus's output. You define exactly the structure your ML pipeline expects, inject the user's custom label taxonomy (e.g., shoplifting, forklift_violation, surgical_clamp) directly into the prompt, and receive machine-parseable annotations with zero post-processing.

const response_format = {
  type: 'json_schema',
  json_schema: {
    type: 'object',
    properties: {
      annotations: {
        type: 'array',
        items: {
          type: 'object',
          properties: {
            start_timestamp: { type: 'string' },
            end_timestamp: { type: 'string' },
            description: { type: 'string' },
            scene_classification: { type: 'string' },
            detected_objects: {
              type: 'array',
              items: {
                type: 'object',
                properties: {
                  label: { type: 'string' },
                  confidence_score: { type: 'number' },
                  start_timestamp: { type: 'string' },
                  end_timestamp: { type: 'string' },
                },
              },
            },
          },
          required: ['start_timestamp', 'end_timestamp', 'description', 'scene_classification', 'detected_objects'],
        },
      },
    },
  },
};

This schema guarantees that every annotation includes start and end timestamps, a natural-language description, a scene classification label, and an array of detected objects (each with its own confidence score and temporal bounds). Because Pegasus is a zero-shot generative model, no fine-tuning is required. You define the taxonomy, pass the schema, and receive structured training data in seconds.
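The schema constrains structure, but `scene_classification` is typed as a plain string, so the taxonomy itself travels through the prompt. The sketch below shows one way that injection and a lightweight sanity check could look. The helper names, the prompt wording, and the assumption that confidence scores fall between 0 and 1 are all illustrative and not taken from the repository; the Analyze API call itself is omitted.

```javascript
// Build a prompt that embeds the user's taxonomy (wording is an assumption).
function buildPrompt(labels) {
  return [
    'Annotate this video for ML training.',
    `Classify each scene using only these labels: ${labels.join(', ')}.`,
    'Include start and end timestamps for every annotation.',
  ].join(' ');
}

// Fields the schema above marks as required on each annotation.
const REQUIRED_FIELDS = [
  'start_timestamp',
  'end_timestamp',
  'description',
  'scene_classification',
  'detected_objects',
];

// Parse a response and report anything that falls outside the taxonomy.
function validateAnnotations(rawJson, labels) {
  const allowed = new Set(labels);
  const parsed = typeof rawJson === 'string' ? JSON.parse(rawJson) : rawJson;
  const problems = [];
  if (!Array.isArray(parsed.annotations)) problems.push('missing annotations array');
  const annotations = Array.isArray(parsed.annotations) ? parsed.annotations : [];

  annotations.forEach((ann, i) => {
    for (const key of REQUIRED_FIELDS) {
      if (!(key in ann)) problems.push(`annotations[${i}] is missing ${key}`);
    }
    if (ann.scene_classification && !allowed.has(ann.scene_classification)) {
      problems.push(`annotations[${i}] uses off-taxonomy label "${ann.scene_classification}"`);
    }
    for (const obj of ann.detected_objects || []) {
      const score = obj.confidence_score;
      // Assumed convention: confidence is a number in [0, 1].
      if (typeof score !== 'number' || score < 0 || score > 1) {
        problems.push(`annotations[${i}] has an invalid confidence for "${obj.label}"`);
      }
    }
  });
  return { annotations, problems };
}
```

An empty `problems` array means the response can go straight to export; anything else is a candidate for the human review pass.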

Step 5: Interactive Embedding Visualization with PCA

Numbers and labels are necessary for ML pipelines, but for data curation work, you need to see your dataset. The application includes an in-browser 2D scatter plot that projects Marengo's 512-dimensional embeddings into two dimensions using Principal Component Analysis (PCA).

Optimizing PCA for Browser-Based Visualization

Computing PCA on high-dimensional data is expensive. The standard approach (constructing a 512 × 512 covariance matrix and computing its eigendecomposition) is heavy enough to stall a browser tab's main thread. When the user is curating a dataset of 50 videos, they need immediate visual feedback, not a loading spinner.

The solution is a Gram matrix approach. Instead of computing the full D × D covariance matrix (where D = 512), we compute an N × N matrix of inner products between videos (where N is the number of videos in the dataset). Whenever N is smaller than D, this is the cheaper route: for a 50-video dataset, finding the eigenvectors of a 50 × 50 matrix is orders of magnitude faster than operating on a 512 × 512 one, reducing the eigendecomposition cost from O(D³) to O(N³) and keeping the visualization fluid in the main browser thread.
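The Gram matrix idea can be sketched in plain JavaScript as follows. This is an illustrative implementation, not the repository's code: it centers the vectors, builds the N × N matrix of inner products, and extracts the top two eigenpairs with power iteration and deflation (a choice made here for brevity; the application may use a different eigensolver). The function names, the fixed iteration count, and the 1e-12 tolerance are assumptions.

```javascript
function dot(a, b) {
  let total = 0;
  for (let i = 0; i < a.length; i++) total += a[i] * b[i];
  return total;
}

// Power iteration: returns the dominant eigenvalue and unit eigenvector of a
// symmetric positive semi-definite matrix.
function topEigenpair(matrix, iterations) {
  const n = matrix.length;
  // Deterministic start vector that is not parallel to the all-ones vector,
  // which lies in the null space of a centered Gram matrix.
  let vector = Array.from({ length: n }, (_, i) => Math.sin(i + 1));
  const startNorm = Math.sqrt(dot(vector, vector));
  vector = vector.map((x) => x / startNorm);
  let value = 0;
  for (let step = 0; step < iterations; step++) {
    const next = matrix.map((row) => dot(row, vector));
    const norm = Math.sqrt(dot(next, next));
    if (norm < 1e-12) return { value: 0, vector };
    vector = next.map((x) => x / norm);
    value = norm;
  }
  return { value, vector };
}

// Project N vectors of dimension D onto their top two principal components.
function pca2d(vectors, iterations = 200) {
  const n = vectors.length;
  if (n === 0) return [];
  const d = vectors[0].length;

  // 1. Center the data by subtracting the mean vector.
  const mean = new Array(d).fill(0);
  for (const v of vectors) for (let j = 0; j < d; j++) mean[j] += v[j] / n;
  const centered = vectors.map((v) => v.map((x, j) => x - mean[j]));

  // 2. Build the N x N Gram matrix of inner products.
  const gram = centered.map((a) => centered.map((b) => dot(a, b)));

  // 3. Each eigenvector, scaled by sqrt(eigenvalue), gives one coordinate per video.
  const coords = vectors.map(() => [0, 0]);
  for (let k = 0; k < 2; k++) {
    const { value, vector } = topEigenpair(gram, iterations);
    if (value <= 1e-12) break; // no variance left to explain
    const scale = Math.sqrt(value);
    for (let i = 0; i < n; i++) coords[i][k] = vector[i] * scale;
    // Deflate so the next pass finds the following component.
    for (let i = 0; i < n; i++) {
      for (let j = 0; j < n; j++) gram[i][j] -= value * vector[i] * vector[j];
    }
  }
  return coords;
}
```

Calling `pca2d(videos.map((v) => v.embeddings))` would return one `[x, y]` pair per video. The sign of each axis is arbitrary, which is normal for PCA and does not affect how clusters appear in the plot.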

What the visualization reveals for annotation quality: Tight, well-separated clusters indicate that your labeled categories correspond to genuinely distinct semantic content. Outliers (points that sit far from any cluster) flag either rare events worth separate annotation attention (a safety incident in routine warehouse footage) or noisy data that may be misclassified. This gives data PMs and annotators immediate, actionable feedback on dataset health before they commit to expensive model training.
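One simple way to surface such outliers programmatically is to compare each point's nearest-neighbor distance with the typical spacing in the plot. The heuristic below is an illustration only: the `flagOutliers` name and the default factor of 3 are assumptions, and the application may rely on visual inspection alone.

```javascript
// Flag 2D points whose nearest neighbor is much farther away than is typical.
function flagOutliers(points, factor = 3) {
  // Distance from each point to its closest other point.
  const nearest = points.map((p, i) => {
    let best = Infinity;
    points.forEach((q, j) => {
      if (i !== j) best = Math.min(best, Math.hypot(p[0] - q[0], p[1] - q[1]));
    });
    return best;
  });
  // Use the median nearest-neighbor distance as the "typical" spacing.
  const sorted = [...nearest].sort((a, b) => a - b);
  const median = sorted[Math.floor(sorted.length / 2)];
  return nearest.map((distance) => distance > factor * median);
}

const points = [[0, 0], [0.1, 0], [0, 0.1], [0.1, 0.1], [5, 5]];
console.log(flagOutliers(points)); // only the last point is flagged
```

Flagged points are candidates for a closer look rather than automatic removal, since a rare event and a mislabeled clip look the same at this level.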

Step 6: Exporting for ML Pipelines

A curated dataset is only as valuable as its compatibility with downstream training tools. The application natively supports three export formats:

JSON provides the raw structured output for programmatic parsing in custom data loaders. Every field from the Pegasus annotation schema is preserved, including nested detected objects with confidence scores.

CSV flattens the annotations into spreadsheet-friendly rows with timestamps, labels, and descriptions, which is useful for quick manual audits or for feeding into pandas-based preprocessing scripts.

COCO (Common Objects in Context) structures annotations into the videos, annotations, and categories arrays expected by major training frameworks. COCO-style files are widely supported in the PyTorch ecosystem (torchvision, for example, ships COCO dataset classes), and they import into workforce platforms like Label Studio and CVAT for a final human-in-the-loop review.
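As an illustration of the CSV flattening, the sketch below turns one video's annotations into rows. The column set, the pipe-joined `objects` column, and the function names are assumptions made for this example; the field names on each annotation follow the Pegasus schema from Step 4.

```javascript
const CSV_COLUMNS = [
  'video_id',
  'start_timestamp',
  'end_timestamp',
  'scene_classification',
  'description',
  'objects',
];

// Quote a cell when it contains a comma, quote, or line break.
function escapeCsv(value) {
  const text = String(value ?? '');
  return /[",\n\r]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
}

// One row per annotation; nested detected objects collapse into a single cell.
function annotationsToCsv(videoId, annotations) {
  const rows = annotations.map((ann) => [
    videoId,
    ann.start_timestamp,
    ann.end_timestamp,
    ann.scene_classification,
    ann.description,
    (ann.detected_objects || []).map((obj) => obj.label).join('|'),
  ]);
  return [CSV_COLUMNS, ...rows]
    .map((row) => row.map(escapeCsv).join(','))
    .join('\n');
}
```

Escaping matters here because the free-text `description` field will often contain commas.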

The COCO export is particularly important because it eliminates the reformatting step that typically sits between annotation and training. You go from raw video to indexed embeddings to structured labels to training-ready dataset, all within a single pipeline.
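A minimal sketch of how annotations might be arranged into the three COCO-style arrays follows. Only the array names `videos`, `annotations`, and `categories` come from the description above; the per-entry field names (`file_name`, `video_id`, `category_id`, and so on), the input shape, and the `toCocoLike` function are hypothetical, and the repository's actual export may differ.

```javascript
// Convert [{ fileName, annotations: [...] }] into a COCO-style object.
function toCocoLike(videos) {
  const categoryIds = new Map(); // label -> numeric category id
  const coco = { videos: [], annotations: [], categories: [] };
  let nextAnnotationId = 1;

  videos.forEach((video, index) => {
    const videoId = index + 1;
    coco.videos.push({ id: videoId, file_name: video.fileName });

    for (const ann of video.annotations) {
      const label = ann.scene_classification;
      // Register each distinct label once and reuse its id afterwards.
      if (!categoryIds.has(label)) {
        const id = categoryIds.size + 1;
        categoryIds.set(label, id);
        coco.categories.push({ id, name: label });
      }
      coco.annotations.push({
        id: nextAnnotationId++,
        video_id: videoId,
        category_id: categoryIds.get(label),
        start_timestamp: ann.start_timestamp,
        end_timestamp: ann.end_timestamp,
      });
    }
  });
  return coco;
}
```

Keeping labels in a separate `categories` array with numeric ids is the part of the COCO convention that training code typically depends on, since models consume class indices rather than strings.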

Business Impact: What This Changes

For ML and Computer Vision Teams

The economics of this pipeline shift the annotation bottleneck from human labor to compute:

Traditional manual workflow: 2–4 hours of human effort per 1 hour of video, at $50–100/hour for domain expertise. A 500-hour back catalog requires 1,000–2,000 hours of annotation labor, which is roughly 6–12 months of full-time work for a single annotator.

TwelveLabs automated workflow: Approximately 1 minute of compute per video for indexing and structured annotation, plus targeted human review of the auto-generated labels. The same 500-hour back catalog processes in days, not months.
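The manual-workflow figures can be reproduced with a few lines of arithmetic. The sketch below uses the ranges quoted above; the 2,000 working hours per year used to convert labor hours into months is an assumption added for this example.

```javascript
// Assumed full-time year: roughly 40 hours x 50 weeks.
const WORK_HOURS_PER_YEAR = 2000;

function manualAnnotationEstimate(footageHours, { hoursPerFootageHour, hourlyRate }) {
  const laborHours = footageHours * hoursPerFootageHour;
  return {
    laborHours,
    cost: laborHours * hourlyRate,
    monthsFullTime: (laborHours * 12) / WORK_HOURS_PER_YEAR,
  };
}

const low = manualAnnotationEstimate(500, { hoursPerFootageHour: 2, hourlyRate: 50 });
const high = manualAnnotationEstimate(500, { hoursPerFootageHour: 4, hourlyRate: 100 });

console.log(low); // { laborHours: 1000, cost: 50000, monthsFullTime: 6 }
console.log(high); // { laborHours: 2000, cost: 200000, monthsFullTime: 12 }
```

Under these inputs, the manual first pass on a 500-hour catalog lands between $50,000 and $200,000 in labor before any model training begins.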

Humans stay in the loop for quality assurance, but the expensive first pass (finding the 4 seconds of relevant action in a 2-hour video) is handled by the models. This makes it economically feasible to label historical footage that was previously too costly to touch.

For Data Labeling Platform ISVs

This application is a product blueprint. Integrating AI-first video annotation into your platform reduces per-project annotation effort by 80–90% based on the time savings demonstrated in this pipeline (minutes of compute replacing hours of manual review). That shifts your business model from selling raw human hours to selling high-margin, scalable intelligence, a differentiation that matters as annotation becomes increasingly commoditized.

Conclusion

This reference application demonstrates a complete pipeline for converting raw video into structured, training-ready datasets using TwelveLabs' video understanding APIs. Marengo encodes footage into dense temporal embeddings. Pegasus converts those embeddings into timestamped, schema-enforced training labels. The browser-based dashboard handles ingestion, visualization, and export, all without a persistent backend.

What you have after running this tutorial: a working application that you can point at your own footage, configure with your own label taxonomy, and use to generate ML-ready annotations in the time it takes to index the video.

The full source code is available on GitHub. Clone it, configure your TwelveLabs index, and start building.

To explore what else you can build with TwelveLabs' video understanding APIs, visit the documentation or reach out to our team.


© 2021-2026 TwelveLabs, Inc. All Rights Reserved
